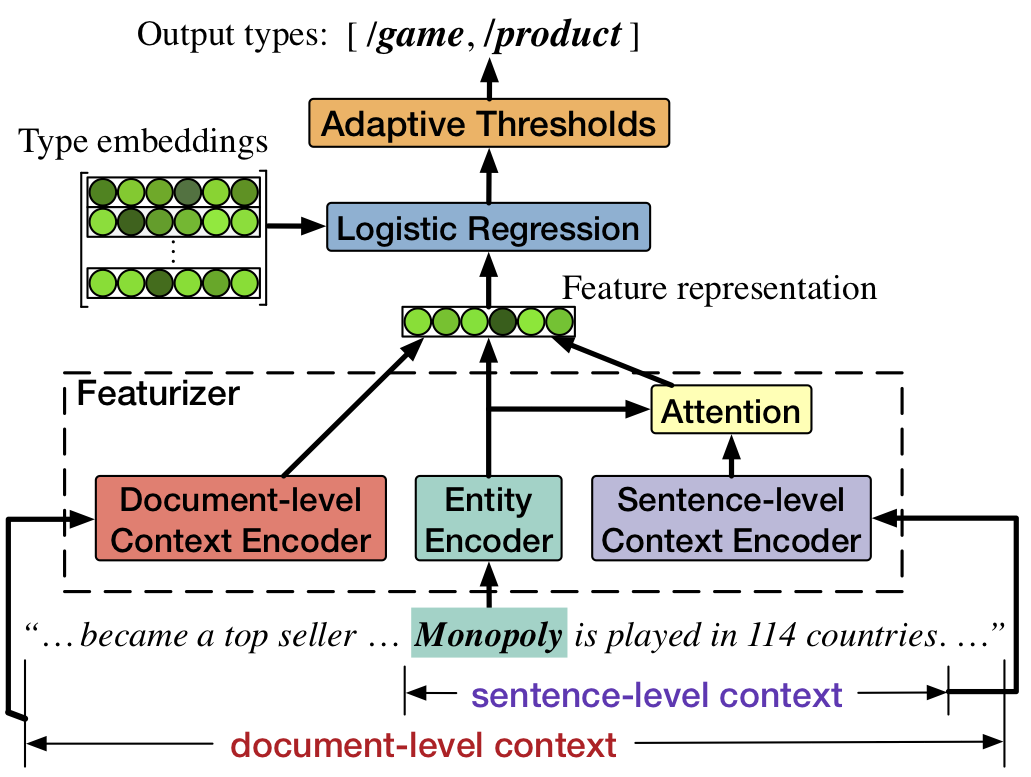

Fine-grained Entity Typing through Increased Discourse Context and Adaptive Classification Thresholds

Source code and data for the StarSem'18 paper "Fine-grained Entity Typing through Increased Discourse Context and Adaptive Classification Thresholds".

Citation

The source code and data in this repository aim to facilitate the study of fine-grained entity typing. If you use the code or data, please cite the paper as follows:

@InProceedings{zhang-EtAl:2018:starSEM,
  author    = {Zhang, Sheng and Duh, Kevin and {Van Durme}, Benjamin},
  title     = {{Fine-grained Entity Typing through Increased Discourse Context and Adaptive Classification Thresholds}},
  booktitle = {Proceedings of the 7th Joint Conference on Lexical and Computational Semantics (*SEM 2018)},
  month     = {June},
  year      = {2018}
}

Benchmark Performance

1. OntoNotes (Gillick et al., 2014)

| Approach | Strict F1 | Macro F1 | Micro F1 |
|---|---|---|---|
| Our Approach | 55.52 | 73.33 | 67.61 |
| w/o Adaptive thresholds | 53.49 | 73.11 | 66.78 |
| w/o Document-level contexts | 53.17 | 72.14 | 66.51 |

2. Wiki (Ling and Weld, 2012)

| Approach | Strict F1 | Macro F1 | Micro F1 |
|---|---|---|---|
| Our Approach | 60.23 | 78.67 | 75.52 |
| w/o Adaptive thresholds | 60.05 | 78.50 | 75.39 |

3. BBN (Weischedel and Brunstein, 2005)

| Approach | Strict F1 | Macro F1 | Micro F1 |
|---|---|---|---|
| Our Approach | 60.87 | 77.75 | 76.94 |
| w/o Adaptive thresholds | 58.47 | 75.84 | 75.03 |
| w/o Document-level contexts | 58.12 | 75.65 | 75.11 |
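For readers unfamiliar with the three columns above, the sketch below shows how strict, loose macro, and loose micro F1 are commonly computed for fine-grained entity typing, following the definitions in Ling and Weld (2012). It is an illustration only: the function names are hypothetical and it is not taken from this repository's evaluation code, which may differ in details such as how empty predictions are handled. Scores here are fractions in [0, 1], whereas the tables report percentages.

```python
from __future__ import division  # keeps "/" as true division under Python 2.7


def f1(precision, recall):
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def strict_f1(gold, pred):
    """A mention is correct only if its predicted type set equals the gold set."""
    exact = sum(1 for g, p in zip(gold, pred) if set(g) == set(p))
    accuracy = exact / len(gold)
    # Assumption: every mention receives a prediction, so precision == recall.
    return f1(accuracy, accuracy)


def loose_macro_f1(gold, pred):
    """Average per-mention precision and recall, then take their harmonic mean."""
    precisions, recalls = [], []
    for g, p in zip(gold, pred):
        g, p = set(g), set(p)
        overlap = len(g & p)
        precisions.append(overlap / len(p) if p else 0.0)
        recalls.append(overlap / len(g) if g else 0.0)
    n = len(gold)
    return f1(sum(precisions) / n, sum(recalls) / n)


def loose_micro_f1(gold, pred):
    """Pool type counts over all mentions before computing precision and recall."""
    overlap = sum(len(set(g) & set(p)) for g, p in zip(gold, pred))
    n_pred = sum(len(set(p)) for p in pred)
    n_gold = sum(len(set(g)) for g in gold)
    precision = overlap / n_pred if n_pred else 0.0
    recall = overlap / n_gold if n_gold else 0.0
    return f1(precision, recall)


# Toy example with made-up labels: the first mention misses one gold type.
gold = [{"/person", "/person/artist"}, {"/location"}]
pred = [{"/person"}, {"/location"}]
```

On this toy input, `strict_f1(gold, pred)` is 0.5, `loose_macro_f1(gold, pred)` is about 0.857, and `loose_micro_f1(gold, pred)` is about 0.8. The partially correct first mention is penalized fully by the strict metric but only in recall by the two loose metrics.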

Prerequisites

- Python 2.7

- PyTorch 0.2.0 (w/ CUDA support)

- Numpy

- tqdm
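A quick way to confirm that the packages listed above are importable before running the scripts is sketched below. The helper `missing_modules` is a hypothetical name introduced for illustration and is not part of this repository; the import names `torch`, `numpy`, and `tqdm` are the usual ones for these packages. The script does not enforce the specific versions listed above.

```python
import importlib
import sys


def missing_modules(names):
    """Return the entries of `names` that cannot be imported."""
    missing = []
    for name in names:
        try:
            importlib.import_module(name)
        except ImportError:
            missing.append(name)
    return missing


if __name__ == "__main__":
    print("Python %d.%d" % sys.version_info[:2])
    missing = missing_modules(["torch", "numpy", "tqdm"])
    if missing:
        print("Missing packages: " + ", ".join(missing))
    else:
        import torch
        # torch.cuda.is_available() reports whether a usable CUDA device was found.
        print("PyTorch %s, CUDA available: %s"
              % (torch.__version__, torch.cuda.is_available()))
```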

Running

Once the prerequisites are installed, the whole pipeline can be run with the provided scripts. Taking the OntoNotes corpus as an example:

Step 1: Download the data

./scripts/ontonotes.sh get_data

Step 2: Preprocess the data

./scripts/ontonotes.sh preprocess

Step 3: Train the model

./scripts/ontonotes.sh train

Step 4: Tune the threshold

./scripts/ontonotes.sh adaptive-thres

Step 5: Do inference

./scripts/ontonotes.sh inference

Acknowledgements

The datasets (Wiki and OntoNotes) are copied from Sonse Shimaoka's repository.