A Virtual Desktop Infrastructure running on Kubernetes.

Except as I've continued to work on this, I've noticed this is really just a free and open-source VDI solution based on docker containers. Kubernetes just makes it easier to implement 😄.

ATTENTION: The helm chart repository has been relocated (since the repo has been relocated). To update your repository you can do the following:

helm repo remove tinyzimmer

helm repo add kvdi https://kvdi.github.io/kvdi/deploy/charts

helm repo update

helm install kvdi kvdi/kvdi # yes, that's a lot of kvdi

This project has reached a point where I am no longer making enormous changes all the time. As such, I am tagging a "stable" release and incrementing from there. That still doesn't mean I highly recommend its usage, but rather that I am relatively confident in its overall stability.

If you are interested in helping out or just simply launching a design discussion, feel free to send PRs and/or issues.

I wrote up a CONTRIBUTING doc outlining some of the stuff I have in mind that would need to be accomplished for this to be considered "stable".

- Containerized user desktops running on Kubernetes with no virtualization required (libvirt options may come in the future). All traffic between the end user and the "desktop" is encrypted.

- Persistent user data

- Audio playback and microphone support

- File transfer to/from "desktop" sessions. Directories get archived into a gzipped tarball prior to download.

- RBAC system for managing user access to templates, roles, users, namespaces, serviceaccounts, etc.

- MFA Support

- Configurable backend for internal secrets. Currently vault or Kubernetes Secrets.

- Use built-in local authentication, LDAP, or OpenID.

- App metrics to either scrape externally or view in the UI. More details in the helm doc.

- "App Profiles" - I have a POC implementation on main but it is still pretty buggy

- DOSBox/Game profiles could be cool...same as "App Profiles"

- UI could use a serious makeover from someone who actually knows what they are doing

For building and running locally you will need:

- go >= 1.14

- docker

If you don't have access to a Kubernetes cluster, or you just want to try kVDI out on a VM real quick, there is a script in this repository for setting up kVDI using k3s.

It requires the instance running the script to have docker and the dialog package installed.

If you have an existing k3s installation, the ingress may not work since this script assumes kVDI will be the only LoadBalancer installed.
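Since the script depends on docker and dialog being present, a quick preflight check can save a failed run. The following is a minimal sketch, not part of the repository; the helper name missing_tools is hypothetical.

```shell
#!/bin/sh
# Print the name of every listed tool that is not found on PATH.
# Arguments: the command names to look for.
missing_tools() {
    for tool in "$@"; do
        command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
    done
}

# The script is documented as needing docker and the dialog package.
missing=$(missing_tools docker dialog)
if [ -n "$missing" ]; then
    echo "Install these before running kvdi-architect.sh:" $missing
else
    echo "docker and dialog found"
fi
```

This only checks that the commands exist; it does not verify that the docker daemon is running or that no other k3s installation is present.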

# Download the script from this repository.

curl -JLO https://raw.githubusercontent.com/kvdi/kvdi/main/deploy/architect/kvdi-architect.sh

# Run the script. You will be prompted via dialogs to make configuration changes.

bash kvdi-architect.sh # Use --help to see all available options.

NOTE: This script is fairly new and still has some bugs.

For more complete installation instructions and the available configuration options, see the helm chart docs.

The API Reference can also be used for details on kVDI app-level configurations.

helm repo add kvdi https://kvdi.github.io/kvdi/deploy/charts # Add the kvdi repo

helm repo update # Sync your repositories

# Install kVDI

helm install kvdi kvdi/kvdi

It will take a minute or two for all the parts to start running after the install command.

Once the app is launched, you can retrieve the admin password from kvdi-admin-secret in your cluster (if you are using ldap auth, log in with a user in one of the adminGroups).
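Retrieving the password could look something like the sketch below. The Secret name kvdi-admin-secret comes from the text above; the key name password and the default namespace are assumptions, so check the Secret's actual contents with kubectl get secret kvdi-admin-secret -o yaml if this returns nothing. The function names are hypothetical.

```shell
#!/bin/sh
# Decode one base64-encoded value, as found under .data in a Secret.
decode_secret_value() {
    printf '%s' "$1" | base64 -d
}

# Fetch and decode the admin password.
# ASSUMPTIONS: the Secret stores the password under a key named "password",
# and lives in the namespace given as $1 (falling back to "default").
kvdi_admin_password() {
    encoded=$(kubectl get secret kvdi-admin-secret \
        --namespace "${1:-default}" \
        -o jsonpath='{.data.password}') || return 1
    decode_secret_value "$encoded"
}

# Usage (needs kubectl access to the cluster):
#   kvdi_admin_password            # look in the "default" namespace
#   kvdi_admin_password my-ns      # look in another namespace
```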

To access the app interface either do a port-forward (make forward-app is another helper for that when developing locally with kind), or go to the "LoadBalancer" IP of the service.

By default there are no desktop templates configured. If you'd like, you can apply the ones in deploy/examples/example-desktop-templates.yaml to get started quickly.

There is a manifest in this repository that will just lay down the manager instance, its dependencies, and all of the CRDs. You can then create a VDICluster object manually to spin up an actual application instance.

To install the manifest:

export KVDI_VERSION=v0.3.1

kubectl apply -f https://raw.githubusercontent.com/kvdi/kvdi/${KVDI_VERSION}/deploy/bundle.yaml --validate=false

The kustomize manifests in this repository are generated by kubebuilder and can be used in the same way as the bundle manifest.

They can be found in the config directory in this repository.
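Once the manager and CRDs are in place, creating a VDICluster by hand might look roughly like the sketch below. The API group app.kvdi.io is taken from the CRD file names in this repository, but the version v1, the object name kvdi, and the empty spec (relying on defaults) are assumptions; kubectl explain vdicluster should show what your installed CRD actually accepts. The function name is hypothetical.

```shell
#!/bin/sh
# Write a minimal VDICluster manifest to the path given as $1.
# ASSUMPTIONS: apiVersion "app.kvdi.io/v1", the name "kvdi", and an empty
# spec are illustrative guesses, not values confirmed by the project docs.
write_vdicluster_manifest() {
    cat > "$1" <<'EOF'
apiVersion: app.kvdi.io/v1
kind: VDICluster
metadata:
  name: kvdi
spec: {}
EOF
}

# Usage: write the file, review it, then apply it.
#   write_vdicluster_manifest ./vdicluster.yaml
#   kubectl apply -f ./vdicluster.yaml
```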

Most of the time you can just run a regular helm upgrade to update your deployment manifests to the latest images.

helm upgrade kvdi kvdi/kvdi --version v0.3.2

However, sometimes there may be changes to the CRDs, though I will always do my best to make sure they are backwards compatible. Due to the way helm manages CRDs, it will ignore changes to those on an existing installation. You can get around this by applying the CRDs for the version you are upgrading to directly from this repo.

For example:

export KVDI_VERSION=v0.3.2

kubectl apply \

-f https://raw.githubusercontent.com/kvdi/kvdi/${KVDI_VERSION}/deploy/charts/kvdi/crds/app.kvdi.io_vdiclusters.yaml \

-f https://raw.githubusercontent.com/kvdi/kvdi/${KVDI_VERSION}/deploy/charts/kvdi/crds/desktops.kvdi.io_sessions.yaml \

-f https://raw.githubusercontent.com/kvdi/kvdi/${KVDI_VERSION}/deploy/charts/kvdi/crds/desktops.kvdi.io_templates.yaml \

-f https://raw.githubusercontent.com/kvdi/kvdi/${KVDI_VERSION}/deploy/charts/kvdi/crds/rbac.kvdi.io_vdiroles.yaml

When there is a change to one or more CRDs, it will be mentioned in the notes for that release.
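For repeated upgrades, the CRD step and the chart upgrade could be wrapped in a small script. This is a sketch only: the function names are hypothetical, the CRD list is the four files shown above (a future release may add or rename some), and applying the CRDs before running helm upgrade is one possible ordering rather than a documented requirement.

```shell
#!/bin/sh
# Print the raw URL of each kVDI CRD for the release tag in $1, one per line.
kvdi_crd_urls() {
    base="https://raw.githubusercontent.com/kvdi/kvdi/$1/deploy/charts/kvdi/crds"
    for crd in \
        app.kvdi.io_vdiclusters \
        desktops.kvdi.io_sessions \
        desktops.kvdi.io_templates \
        rbac.kvdi.io_vdiroles
    do
        printf '%s/%s.yaml\n' "$base" "$crd"
    done
}

# Apply the CRDs for the target version, then upgrade the chart to it.
upgrade_kvdi() {
    for url in $(kvdi_crd_urls "$1"); do
        kubectl apply -f "$url" || return 1
    done
    helm upgrade kvdi kvdi/kvdi --version "$1"
}

# Usage (needs kubectl and helm access to the cluster):
#   upgrade_kvdi v0.3.2
```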

The Makefiles contain helpers for testing the full solution locally using kind. Run make help to see all the available options.

If you choose to pull the images from the registry instead of building and loading them first, you will probably also want to set VERSION=latest (or a previous version) in your environment.

The Makefile is usually pointed at the next version to be released and published images may not exist yet.

# Builds all the docker images (optional, they are also available in the github registry)

$> make build-all

# Spin up a kind cluster for local testing

$> make test-cluster

# Load all the docker images into the kind cluster (optional for same reason as build)

$> make load-all

# Deploy the manager, kvdi, and setup the example templates

$> make deploy example-vdi-templates

# To test using custom helm values

$> HELM_ARGS="-f my_values.yaml" make deploy

After the manager has started the app instance, get the IP of its service with kubectl get svc to access the frontend, or run make forward-app to start a local port-forward.

If not using anonymous auth, look for kvdi-admin-secret to retrieve the admin password.

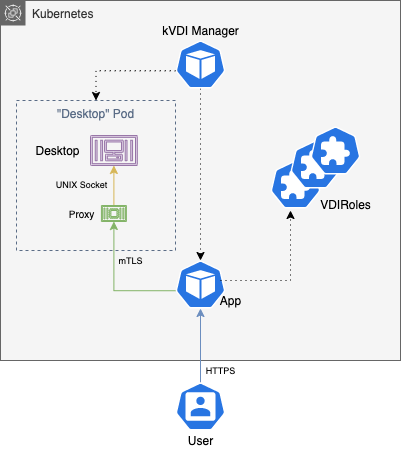

All traffic between processes is encrypted with mTLS. The UI for the "desktop" containers is placed behind a VNC server listening on a UNIX socket and a sidecar to the container will proxy validated websocket connections to it.

User authentication is provided by "providers". There are currently three implementations:

- local-auth: A passwd-like file is kept in the Secrets backend (k8s or vault) mapping users to roles and password hashes. This is primarily meant for development, but you could secure your environment in a way to make it viable for a small number of users.

- ldap-auth: An LDAP/AD server is used for authenticating users. VDIRoles can be tied to security groups in LDAP via annotations. When a user is authenticated, their groups are queried to see if they are bound to any VDIRoles.

- oidc-auth: An OpenID or OAuth provider is used for authenticating users. If using an OAuth provider, it must support the openid scope. When a user is authenticated, a configurable groups claim is requested from the provider that can be mapped to VDIRoles similarly to ldap-auth. If the provider does not support a groups claim, you can configure kVDI to allow all authenticated users.

All three authentication methods also support MFA.