This is the official codebase for the RAL 2022 paper: "See Eye to Eye: A Lidar-Agnostic 3D Detection Framework for Unsupervised Multi-Target Domain Adaptation".

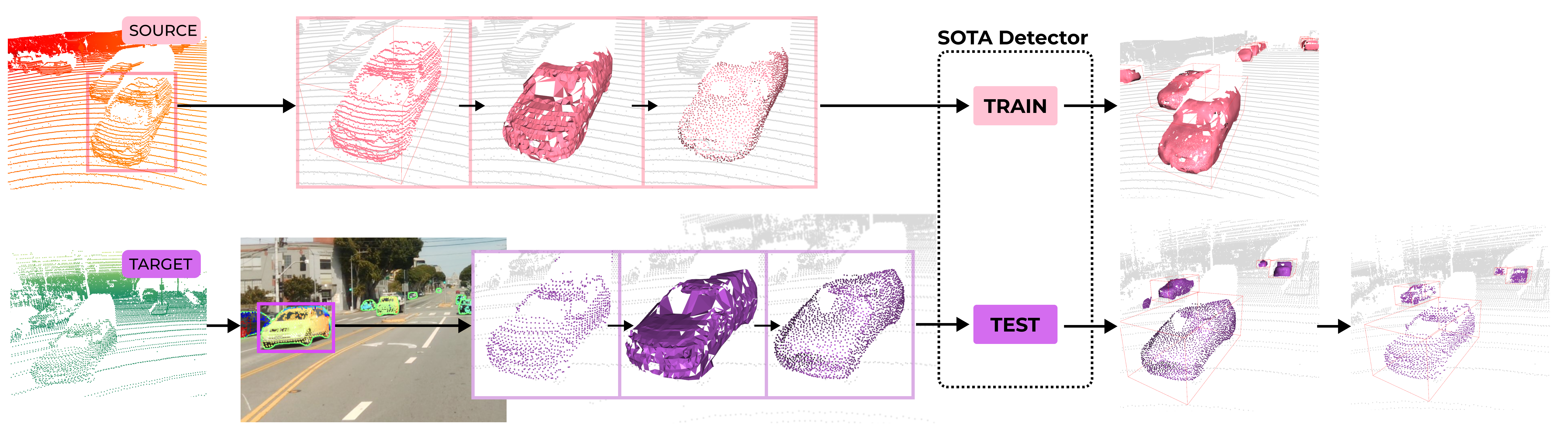

As a multi-target domain adaptation approach, SEE enables any novel SOTA detector to become agnostic to different lidar sensors without any additional training for each new sensor. The same model trained with SEE can support any kind of scan pattern. We provide download links for all the models that were used in the paper.

We aim to support novel SOTA detectors as they emerge in order to provide accessibility for those who do not have the training or manual labelling resources. We believe that this will be a great contribution to supporting novel and non-conventional lidars that differ from the popular lidars used in the research context.

See this video for a quick explanation of our work.

This project builds upon the progress of other outstanding codebases in the computer vision community. We acknowledge the works of the following codebases in our project:

- Our instance segmentation code uses mmdetection.

- Our detector code is based on OpenPCDet v0.3.0 with DA configurations adopted from ST3D.

In the figure below, Source-only denotes the baseline without domain adaptation. In the 2nd and 3rd columns, the SEE results come from the same trained model. The comparison shows that SEE-trained detectors give tighter bounding boxes and fewer false positives.

Please place all downloaded models into the model_zoo folder. See the model zoo readme for more details. All models were trained on a single 2080Ti for approximately 30-40 hours using our nuScenes and Waymo subsets.

Pre-trained instance segmentation models can be obtained from the model zoo of mmdetection. Our paper uses Hybrid Task Cascade (download model here).

| Model | Method | Download |
|---|---|---|
| SECOND-IoU | SEE | model |
| PV-RCNN | SEE | model |

Due to the Waymo Dataset License Agreement we can't provide the Source-only models for the above. You should achieve a similar performance by training with the default configs and training subsets.

| Model | Method | Download |
|---|---|---|
| SECOND-IoU | Source-only | model |
| SECOND-IoU | SEE | model |
| PV-RCNN | Source-only | model |
| PV-RCNN | SEE | model |

This repo is structured in 2 parts: see and detector. For each part, we have provided a separate docker image which can be obtained as follows:

docker pull darrenjkt/see-mtda:see-v1.0

docker pull darrenjkt/see-mtda:detector-v1.0

We have provided a script to run the necessary docker images for each part. Please edit the folder names for mounting local volumes into the docker image. We currently do not provide other installation methods. If you'd like to install natively, please refer to the Dockerfile for see or detector for more information about installation requirements.

Note that nvidia-docker2 is required to run these images. We have tested that this works with NVIDIA Docker: 2.5.0 or higher. Additionally, unless you are training the models, the tasks below require at least 1 GPU with a minimum of 5GB memory. This may vary if using different types of image instance segmentation networks or 3D detectors.
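As a quick sanity check of the GPU memory requirement above, the following is a minimal sketch that parses the output of `nvidia-smi --query-gpu=memory.total --format=csv,noheader,nounits`. The helper names are hypothetical and not part of this repo, and reading "5GB" as 5 x 1024 MiB is an assumption.

```python
import subprocess

# Assumption: the "5GB" minimum from the text is read as 5 * 1024 MiB.
MIN_GPU_MEM_MIB = 5 * 1024


def parse_gpu_memory(csv_text):
    """Parse one total-memory value (MiB) per non-empty line of nvidia-smi output."""
    return [int(line.strip()) for line in csv_text.splitlines() if line.strip()]


def gpus_meeting_requirement(csv_text, min_mib=MIN_GPU_MEM_MIB):
    """Return the indices of GPUs whose total memory is at least min_mib."""
    return [i for i, mib in enumerate(parse_gpu_memory(csv_text)) if mib >= min_mib]


def query_gpu_memory():
    """Run nvidia-smi and return its raw CSV output (needs an NVIDIA driver)."""
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=memory.total", "--format=csv,noheader,nounits"],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout
```

On a machine with an NVIDIA driver, `gpus_meeting_requirement(query_gpu_memory())` lists the GPU indices that could be passed to the `-g` option of the run script.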

Please refer to DATASET_PREPARATION for instructions on downloading and preparing datasets.

In this section, we provide instructions specifically for the Baraja Spectrum-Scan™ (Download link) Dataset as an example of adapting to a novel industry lidar. Please modify the configuration files as necessary to train/test for different datasets.

In this phase, we isolate the objects, create meshes and sample from them. Firstly, run the docker image as follows. For instance segmentation, a single GPU is sufficient. If you simply wish to train/test the Source-only baseline, then you can skip this "SEE" section.

# Run docker image for SEE

bash docker/run.sh -i see -g 0

# Enter docker container. Use `docker ps` to find the name of the newly created container from the above command.

docker exec -it ${CONTAINER_NAME} /bin/bash
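If you prefer not to scan the `docker ps` output by hand, the lookup can be scripted. This is a minimal sketch with hypothetical helper names; it assumes the run script starts the container from the image tag used in the `docker pull` commands (`darrenjkt/see-mtda:see-v1.0`), which you should confirm against docker/run.sh.

```python
import subprocess


def parse_container_names(ps_output):
    """Return the non-empty container names, one per line of `docker ps` output."""
    return [line.strip() for line in ps_output.splitlines() if line.strip()]


def find_containers(image):
    """List running containers created from the given image (needs docker installed)."""
    result = subprocess.run(
        ["docker", "ps", "--filter", f"ancestor={image}", "--format", "{{.Names}}"],
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_container_names(result.stdout)
```

For example, `find_containers("darrenjkt/see-mtda:see-v1.0")` returns the names that can be substituted for `${CONTAINER_NAME}`.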

a) Instance Segmentation: Get instance masks for all images using the HTC model (link). If you are using the Baraja dataset, we've provided the masks in the download link, so feel free to skip this part. We also provide a script for generating KITTI masks.

bash see/scripts/prepare_baraja_masks.sh

b) Transform to Canonical Domain: Once we have the masks, we can isolate the objects and transform them into the canonical domain. We have multiple configurations for nuScenes, Waymo, KITTI and Baraja datasets. We code "DM" (Det-Mesh) as using instance segmentation (target domain) and "GM" (GT-Mesh) as using ground truth boxes (source domain) to transform the objects. Here is an example for the Baraja dataset.

cd see

python surface_completion.py --cfg_file sc/cfgs/BAR-DM-ORH005.yaml
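The "DM"/"GM" code appears in the configuration file names, so it can be read back from a path to confirm which transformation a cfg uses. The sketch below is a hypothetical helper, not part of this repo; it assumes the code appears as its own token separated by `-` or `_`, as in the two file names shown in this README.

```python
from pathlib import Path

# Meanings taken from the description above.
MESH_MODES = {
    "DM": "Det-Mesh: objects isolated with instance segmentation (target domain)",
    "GM": "GT-Mesh: objects isolated with ground truth boxes (source domain)",
}


def mesh_mode(cfg_file):
    """Return the mesh mode code ("DM" or "GM") found in a cfg file name."""
    # Assumption: tokens in the file name are separated by "-" or "_".
    tokens = Path(cfg_file).stem.replace("_", "-").split("-")
    found = [token for token in tokens if token in MESH_MODES]
    if len(found) != 1:
        raise ValueError(f"expected exactly one of {sorted(MESH_MODES)} in {cfg_file!r}")
    return found[0]
```

For instance, `MESH_MODES[mesh_mode("sc/cfgs/BAR-DM-ORH005.yaml")]` gives the Det-Mesh description.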

To train/test the detector, run the following docker image with our provided script. If you'd like to specify which GPUs to use in the docker container, you can do so with e.g. -g 0 or -g 0,1,2. For testing, a single GPU is sufficient. With docker, this should work out-of-the-box; however, if there are issues finding libraries, please refer to INSTALL.md.

# Run docker image for Point Cloud Detector

bash docker/run.sh -i detector -g 0

# Enter docker container. Use `docker ps` to find the newly created container from the above image.

docker exec -it ${CONTAINER_NAME} /bin/bash

a) Training: see the following example. Replace the cfg file with any of the other cfg files in the tools/cfgs folder (cfg file links provided in Model Zoo). Number of epochs, batch sizes and other training related configurations can be found in the cfg file.

cd detector/tools

python train.py --cfg_file cfgs/source-waymo/secondiou/see/secondiou_ros_custom1000_GM-ORH005.yaml

b) Testing: download the models from the links above and modify the cfg and ckpt paths below. For the cfg files, please link to the yaml files in the output folder instead of the ones in the tools/cfgs folder. Here is an example for the Baraja dataset.

cd detector/tools

python test.py --cfg_file /SEE-MTDA/detector/output/source-waymo/secondiou/see/secondiou_ros_custom1000_GM-ORH005/default/secondiou_ros_custom1000_GM-ORH005_eval-baraja100.yaml \
    --ckpt /SEE-MTDA/model_zoo/waymo_secondiou_see_6552.pth \
    --batch_size 1

The location of further testing configuration files for the different tasks can be found here. These should give results similar to those in our paper. Please modify the cfg file path and ckpt model in the command above accordingly.
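The test cfg in the command above lives in the output folder rather than in tools/cfgs. The sketch below derives that path from a training cfg path. It is a hypothetical helper whose layout (`output/<cfg sub-folders>/<cfg name>/default/<cfg name>_<eval tag>.yaml`) is inferred only from the single example in this README, so check it against your own output folder before relying on it.

```python
from pathlib import PurePosixPath


def eval_cfg_path(train_cfg, eval_tag, detector_root="/SEE-MTDA/detector", extra_tag="default"):
    """Build the output-folder cfg path for a training cfg given relative to detector/tools."""
    cfg = PurePosixPath(train_cfg)
    if not cfg.parts or cfg.parts[0] != "cfgs":
        raise ValueError("train_cfg is expected to start with 'cfgs/'")
    # Drop the leading "cfgs" folder and the file name, keep the sub-folders.
    rel_dir = PurePosixPath(*cfg.parts[1:-1])
    name = cfg.stem  # file name without ".yaml"
    out = PurePosixPath(detector_root) / "output" / rel_dir / name / extra_tag
    return str(out / f"{name}_{eval_tag}.yaml")
```

Calling it with the training cfg from the training example and the tag `eval-baraja100` reproduces the `--cfg_file` value used in the test command above.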

If you find our work useful in your research, please consider citing our paper:

@article{tsai2022see,
  title={See Eye to Eye: A Lidar-Agnostic 3D Detection Framework for Unsupervised Multi-Target Domain Adaptation},
  author={Tsai, Darren and Berrio, Julie Stephany and Shan, Mao and Worrall, Stewart and Nebot, Eduardo},
  journal={IEEE Robotics and Automation Letters},
  year={2022},
  publisher={IEEE}
}