Training and evaluation environment: Python 3.8.8, PyTorch 1.11.0, Ubuntu 20.04, CUDA 11.0. Run the following command to install the required packages:

pip3 install -r requirements.txt
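After installing, a quick sanity check can confirm that the interpreter and PyTorch match the versions listed above. The snippet below is a minimal sketch and not part of this repository: the helper name `describe_environment` is hypothetical, and it only reads `torch.__version__`, `torch.version.cuda`, and `torch.cuda.is_available()` when PyTorch is importable.

```python
import importlib.util
import platform


def describe_environment():
    """Collect interpreter and (if installed) PyTorch/CUDA version info."""
    info = {
        "python": platform.python_version(),
        "torch": None,
        "cuda_build": None,
        "cuda_available": False,
    }
    # Only import torch when it is installed, so the check itself never crashes.
    if importlib.util.find_spec("torch") is not None:
        import torch

        info["torch"] = torch.__version__
        info["cuda_build"] = torch.version.cuda  # None for CPU-only builds
        info["cuda_available"] = torch.cuda.is_available()
    return info


if __name__ == "__main__":
    for key, value in describe_environment().items():
        print(f"{key}: {value}")
```

If `cuda_available` is reported as `False` on a GPU machine, the installed PyTorch build and the CUDA driver are probably mismatched.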

You can build a container with the configured environment using our Dockerfiles, which only support CUDA 11.0/11.4/11.6. If you use a different CUDA driver, you need to modify the base image in the Dockerfile. (It is inconvenient that the base image in the Dockerfile must match your CUDA driver; otherwise the GPU does not work inside the container. Suggestions for a better solution are welcome.) You also need to configure the paths to the datasets in config.yml before training or testing.

An example command to run the demo:

python3 demo.py --checkpoint=./weights/simpleclick_models/cocolvis_vit_huge.pth --gpu 0

Some test images can be found here.

Before evaluation, download the datasets and models, then configure their paths in config.yml.
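A misconfigured path in config.yml usually only surfaces once a run is already under way, so it can help to check the entries up front. The following is a rough sketch under stated assumptions, not code from this repository: it assumes the path entries are flat, top-level `KEY: "value"` lines whose keys end in `_PATH`, and the helper name `find_missing_paths` is hypothetical. It uses only the standard library and does not handle nested YAML.

```python
from pathlib import Path


def find_missing_paths(config_text):
    """Return {key: value} for flat `KEY: value` entries whose path is missing.

    Assumption: path entries are top-level lines whose key ends in `_PATH`.
    """
    missing = {}
    for line in config_text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and whitespace
        if ":" not in line:
            continue
        key, value = line.split(":", 1)
        key, value = key.strip(), value.strip().strip("\"'")
        if key.endswith("_PATH") and value and not Path(value).exists():
            missing[key] = value
    return missing


if __name__ == "__main__":
    config_file = Path("config.yml")
    if config_file.exists():
        for key, value in find_missing_paths(config_file.read_text()).items():
            print(f"{key} points to a missing location: {value}")
```

Run it from the repository root so that relative paths resolve the same way they do for the training and evaluation scripts.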

Use the following command to evaluate the huge model:

python scripts/evaluate_model.py NoBRS \
    --gpu=0 \
    --checkpoint=./weights/simpleclick_models/cocolvis_vit_huge.pth \
    --eval-mode=cvpr \
    --datasets=GrabCut,Berkeley,DAVIS,PascalVOC,SBD,COCO_MVal,ssTEM,BraTS,OAIZIB
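When evaluating several checkpoints or dataset subsets, it can be convenient to assemble the same command programmatically. The sketch below is illustrative only: `build_eval_command` is a hypothetical helper, and it uses just the flags shown in the command above (`--gpu`, `--checkpoint`, `--eval-mode`, `--datasets`).

```python
import shlex


def build_eval_command(checkpoint, datasets, gpu=0, eval_mode="cvpr"):
    """Assemble the argument list for scripts/evaluate_model.py in NoBRS mode."""
    return [
        "python", "scripts/evaluate_model.py", "NoBRS",
        f"--gpu={gpu}",
        f"--checkpoint={checkpoint}",
        f"--eval-mode={eval_mode}",
        "--datasets=" + ",".join(datasets),
    ]


if __name__ == "__main__":
    command = build_eval_command(
        "./weights/simpleclick_models/cocolvis_vit_huge.pth",
        ["GrabCut", "Berkeley", "DAVIS"],
    )
    # Print the command for inspection; to execute it, pass the list to
    # subprocess.run(command, check=True) from the repository root.
    print(shlex.join(command))
```

Building the command as a list rather than a single string avoids shell quoting issues if a checkpoint path contains spaces.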

Before training, please download the MAE pretrained weights (click to download: ViT-Base, ViT-Large, ViT-Huge) and configure the path to the downloaded weights in config.yml.

Use the following command to train a huge model on COCO+LVIS (C+L):

python train.py models/iter_mask/plainvit_huge448_cocolvis_itermask.py \
    --batch-size=32 \
    --ngpus=4

Pre-trained SimpleClick models: Google Drive

BraTS dataset (369 cases): Google Drive

OAI-ZIB dataset (150 cases): Google Drive

Other datasets: RITM Github

[10/25/2022] Add docker files.

[10/02/2022] Release the main models. This repository is still under active development.

The code is released under the MIT License. It is a short, permissive software license. Basically, you can do whatever you want as long as you include the original copyright and license notice in any copy of the software/source.

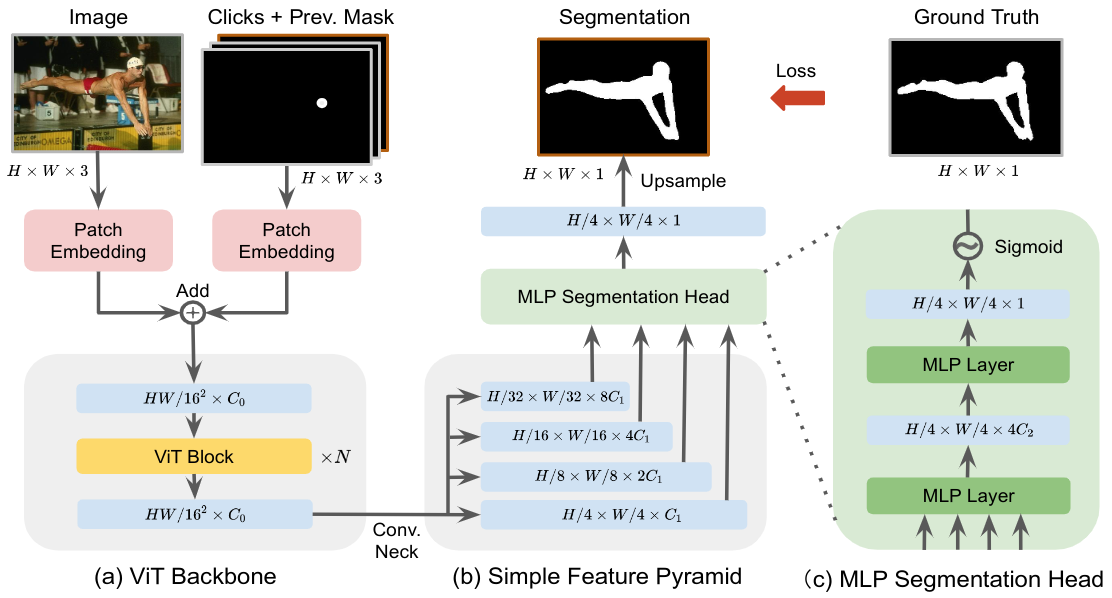

@article{liu2022simpleclick,
  title={SimpleClick: Interactive Image Segmentation with Simple Vision Transformers},
  author={Liu, Qin and Xu, Zhenlin and Bertasius, Gedas and Niethammer, Marc},
  journal={arXiv preprint arXiv:2210.11006},
  year={2022}
}

Our project is developed based on RITM. Thanks for the nice demo GUI :)