- The following 10 steps are crucial for constructing a custom chat-oriented GPT based on the chosen document.

- The runtime of each cell depends on the system you are using.

- Steps 2, 5, and 9 rely on HuggingFace and free models, which can lead to longer runtimes.

- You are encouraged to explore alternatives such as OpenAI or AzureOpenAI in place of the HuggingFace API, as they may be faster or produce better answers.

-

Installation of Essential Python Modules

-

Setting Environment Variables in the System

-

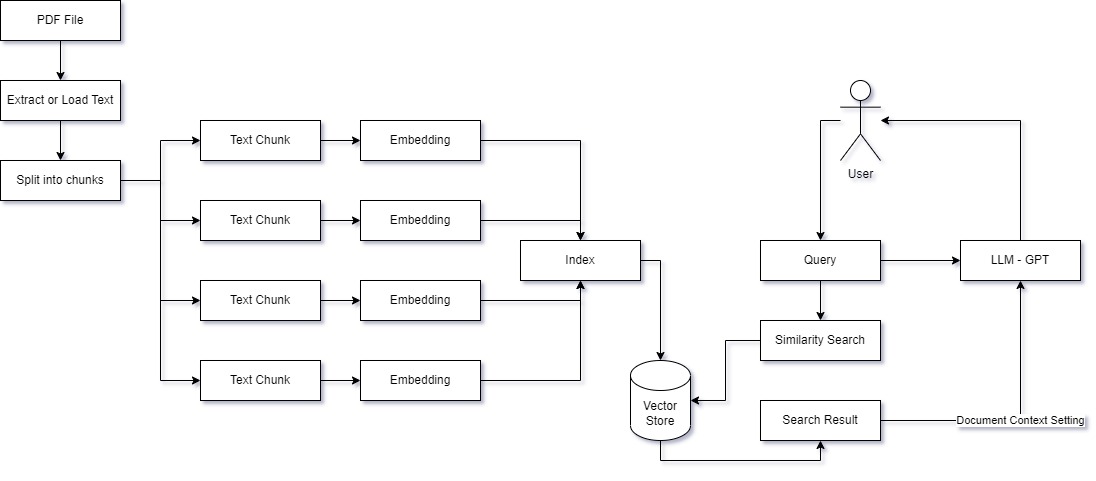

Parsing PDF Documents

-

Dividing Text into Chunks

-

Converting Chunks into Embeddings & Storing them in a Vector Store

- Utilizing the

intfloat/e5-large-v2model from HuggingFace for Embedding & Facebook'sFAISSfor Vector Store

- Utilizing the

-

Saving the Vector Store for Future Reuse with

Pickle -

Directly Loading the Vector Store from the

vectorstore.pklFile, Skipping Steps 3, 4, 5, and 6 😃 -

Performing Similarity Search Using the Vector Store

-

Creating a Large Language Model (LLM) with HuggingFace's

google/flan-t5-xxlOptional: Creating a Custom Chat History to Set Context

-

Combining All the Information into a Unified Chain Named

Conversational Retrieval QA Chain
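Step 4 (dividing text into chunks) can be pictured with a minimal character-based splitter. This is a sketch, not the notebook's code: the function name `split_into_chunks` and its default `chunk_size`/`chunk_overlap` values are hypothetical. Library splitters such as LangChain's `RecursiveCharacterTextSplitter` additionally prefer to break on separators like blank lines and spaces.

```python
def split_into_chunks(text: str, chunk_size: int = 1000, chunk_overlap: int = 100) -> list[str]:
    """Split text into fixed-size character windows that overlap by chunk_overlap characters.

    Hypothetical helper for illustration; sizes are in characters, not tokens.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be at least 0 and smaller than chunk_size")
    step = chunk_size - chunk_overlap  # how far each window advances
    chunks: list[str] = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        # Stop once a window reaches the end, so no chunk is only an overlap tail.
        if start + chunk_size >= len(text):
            break
    return chunks
```

For example, `split_into_chunks("abcdefghij", chunk_size=4, chunk_overlap=2)` returns `['abcd', 'cdef', 'efgh', 'ghij']`. The overlap keeps text that straddles a boundary visible in both neighboring chunks, which helps the later similarity search.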
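Steps 5 and 8 boil down to turning each chunk into a vector and ranking chunks by how similar their vectors are to the query's vector. The sketch below shows that mechanism with the standard library only, under loud assumptions: `toy_embed` is a hypothetical hashed bag-of-words function standing in for `intfloat/e5-large-v2`, and `ToyVectorStore` is a hypothetical list scanned linearly, standing in for FAISS, which builds an index for fast nearest-neighbor search.

```python
import math
import re
import zlib


def toy_embed(text: str, dim: int = 512) -> list[float]:
    """Hypothetical stand-in for an embedding model such as intfloat/e5-large-v2.

    Hashes each lowercase word into one of `dim` buckets and L2-normalizes the counts.
    """
    vec = [0.0] * dim
    for word in re.findall(r"\w+", text.lower()):
        # zlib.crc32 is stable across runs, unlike the salted built-in hash().
        vec[zlib.crc32(word.encode("utf-8")) % dim] += 1.0
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm else vec


class ToyVectorStore:
    """A list of (text, vector) pairs searched linearly; a toy substitute for FAISS."""

    def __init__(self) -> None:
        self.texts: list[str] = []
        self.vectors: list[list[float]] = []

    def add_texts(self, texts: list[str]) -> None:
        """Embed each chunk and store it alongside its vector (Step 5)."""
        for text in texts:
            self.texts.append(text)
            self.vectors.append(toy_embed(text))

    def similarity_search(self, query: str, k: int = 4) -> list[tuple[float, str]]:
        """Return the k chunks most similar to the query as (score, text) pairs (Step 8)."""
        q = toy_embed(query)
        # Non-empty vectors have unit length, so the dot product is the cosine
        # similarity; texts with no words embed to all zeros and score 0.
        scored = [
            (sum(a * b for a, b in zip(q, v)), text)
            for text, v in zip(self.texts, self.vectors)
        ]
        scored.sort(key=lambda pair: pair[0], reverse=True)  # stable for ties
        return scored[:k]
```

A real embedding model captures meaning rather than shared words, so a query can match a chunk with no vocabulary in common; the ranking step works the same way either way.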
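Steps 6 and 7 amount to caching: build the vector store once, pickle it to `vectorstore.pkl`, and on later runs load that file instead of repeating Steps 3 to 6. A minimal sketch with the standard `pickle` module follows; `save_vectorstore`, `load_vectorstore`, `get_vectorstore`, and the `build` callback are hypothetical names, and the store can be any picklable object.

```python
import os
import pickle
from typing import Any, Callable


def save_vectorstore(store: Any, path: str = "vectorstore.pkl") -> None:
    """Serialize the vector store to disk with pickle (Step 6)."""
    with open(path, "wb") as f:
        pickle.dump(store, f)


def load_vectorstore(path: str = "vectorstore.pkl") -> Any:
    """Load a previously pickled vector store (Step 7).

    Only unpickle files you created yourself: unpickling can run arbitrary code.
    """
    with open(path, "rb") as f:
        return pickle.load(f)


def get_vectorstore(build: Callable[[], Any], path: str = "vectorstore.pkl") -> Any:
    """Return the cached store if the file exists; otherwise build, save, and return it."""
    if os.path.exists(path):
        return load_vectorstore(path)  # cache hit: parsing, chunking, and embedding are skipped
    store = build()  # hypothetical callback that runs Steps 3 to 5
    save_vectorstore(store, path)
    return store
```

If the notebook uses LangChain's `FAISS` wrapper, its `save_local` and `load_local` methods are an alternative to pickling the whole object; recent LangChain versions require `allow_dangerous_deserialization=True` in `load_local` for the same safety reason.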
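The optional chat history in Step 9 and the chain in Step 10 fit together roughly as follows: a follow-up question is first rewritten into a standalone question using the history, that question drives the similarity search, and the retrieved chunks are handed to the LLM as context. This mirrors the general shape of LangChain's `ConversationalRetrievalChain`, but the code below is a library-free sketch, not that implementation. `llm` and `retrieve` are hypothetical callables standing in for the `google/flan-t5-xxl` model and the vector-store search, the history is assumed to be a list of (question, answer) tuples, and the prompt wording is invented for illustration.

```python
from typing import Callable

ChatHistory = list[tuple[str, str]]  # assumed shape: (question, answer) per turn


def format_history(history: ChatHistory) -> str:
    """Render earlier turns as plain text for the rephrasing prompt."""
    return "\n".join(f"Human: {q}\nAssistant: {a}" for q, a in history)


def conversational_retrieval_answer(
    question: str,
    history: ChatHistory,
    llm: Callable[[str], str],
    retrieve: Callable[[str], list[str]],
) -> str:
    """Answer a possibly context-dependent question and append the turn to history."""
    # 1. Condense the follow-up question and the chat history into a standalone question.
    if history:
        standalone = llm(
            "Given the conversation below, rephrase the follow-up question "
            "as a standalone question.\n\n"
            f"{format_history(history)}\nFollow-up question: {question}\n"
            "Standalone question:"
        )
    else:
        standalone = question
    # 2. Retrieve the most relevant chunks for the standalone question.
    context = "\n\n".join(retrieve(standalone))
    # 3. Ask the LLM to answer from the retrieved context.
    answer = llm(
        "Use the following context to answer the question.\n\n"
        f"Context:\n{context}\n\nQuestion: {standalone}\nAnswer:"
    )
    # Record the turn in place so the next call can resolve references to it.
    history.append((question, answer))
    return answer
```

Passing the same `history` list on every call is what lets a question such as "What does it store?" resolve "it" from an earlier turn.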