Advanced Deep Learning with Keras

This is the code repository for Advanced Deep Learning with Keras, published by Packt. It contains all the supporting project files necessary to work through the book from start to finish.

This book covers advanced deep learning techniques for building AI systems. Using MLPs, CNNs, and RNNs as building blocks for more advanced techniques, you’ll study deep neural network architectures, Autoencoders, Generative Adversarial Networks (GANs), Variational Autoencoders (VAEs), and Deep Reinforcement Learning (DRL), all of which are critical to many cutting-edge AI results.

Instructions and Navigation

All of the code is organized into folders. Each folder is named after its chapter number followed by the topic, for example chapter2-deep-networks.

The code will look like the following:

    def encoder_layer(inputs,
                      filters=16,
                      kernel_size=3,
                      strides=2,
                      activation='relu',
                      instance_norm=True):
        """Builds a generic encoder layer made of Conv2D-IN-LeakyReLU

        IN is optional, LeakyReLU may be replaced by ReLU
        """
        conv = Conv2D(filters=filters,
                      kernel_size=kernel_size,
                      strides=strides,
                      padding='same')
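
To make the effect of `strides=2` with `padding='same'` in the excerpt above concrete, the sketch below computes the spatial size after stacking several such layers. It assumes the Keras convention that a `'same'`-padded convolution outputs `ceil(input_size / stride)` along each spatial axis. The helper names `same_conv_output_size` and `encoder_output_sizes` are hypothetical and are not part of the repository.

```python
import math


def same_conv_output_size(size, stride=2):
    """Spatial size after a Conv2D with padding='same' and the given stride."""
    return math.ceil(size / stride)


def encoder_output_sizes(size, num_layers, stride=2):
    """Sizes after each of num_layers stacked 'same'-padded strided convs."""
    sizes = []
    for _ in range(num_layers):
        size = same_conv_output_size(size, stride)
        sizes.append(size)
    return sizes


if __name__ == "__main__":
    # A 28x28 MNIST image passed through three stride-2 layers.
    print(encoder_output_sizes(28, 3))  # [14, 7, 4]
```

Under this assumption, each encoder layer roughly halves the height and width, so odd sizes such as 7 round up to 4 rather than down to 3.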

MLP on MNIST

CNN on MNIST

RNN on MNIST

Functional API on MNIST

Y-Network on MNIST

ResNet v1 and v2 on CIFAR10

DenseNet on CIFAR10

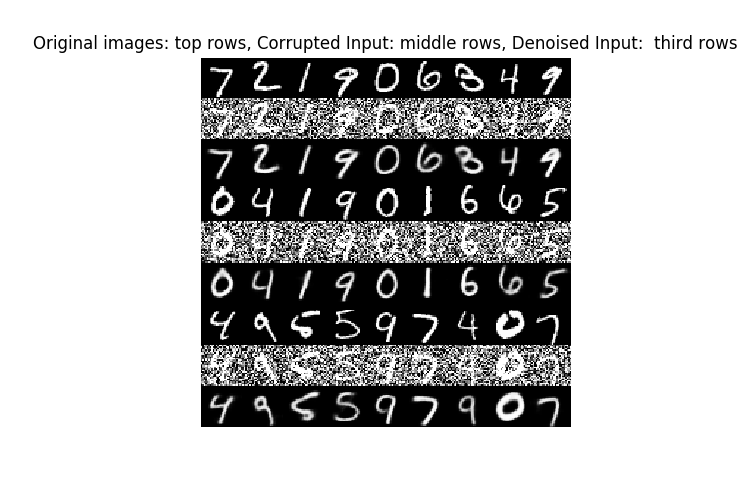

Denoising AutoEncoders

Sample outputs for random digits:

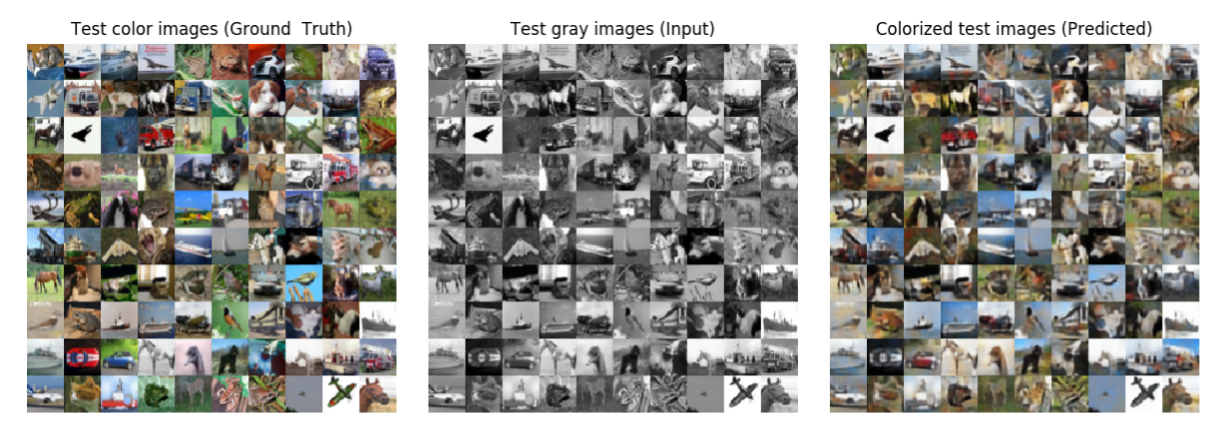

Colorization AutoEncoder

Sample outputs for random CIFAR10 images:

Deep Convolutional GAN (DCGAN)

Radford, Alec, Luke Metz, and Soumith Chintala. "Unsupervised representation learning with deep convolutional generative adversarial networks." arXiv preprint arXiv:1511.06434 (2015).

Sample outputs for random digits:

Conditional GAN (CGAN)

Mirza, Mehdi, and Simon Osindero. "Conditional generative adversarial nets." arXiv preprint arXiv:1411.1784 (2014).

Sample outputs for digits 0 to 9:

Wasserstein GAN (WGAN)

Arjovsky, Martin, Soumith Chintala, and Léon Bottou. "Wasserstein GAN." arXiv preprint arXiv:1701.07875 (2017).

Sample outputs for random digits:

Least Squares GAN (LSGAN)

Mao, Xudong, et al. "Least squares generative adversarial networks." 2017 IEEE International Conference on Computer Vision (ICCV). IEEE, 2017.

Sample outputs for random digits:

Auxiliary Classifier GAN (ACGAN)

Odena, Augustus, Christopher Olah, and Jonathon Shlens. "Conditional image synthesis with auxiliary classifier GANs." Proceedings of the 34th International Conference on Machine Learning, Sydney, Australia, PMLR 70, 2017.

Sample outputs for digits 0 to 9:

Information Maximizing GAN (InfoGAN)

Chen, Xi, et al. "InfoGAN: Interpretable representation learning by information maximizing generative adversarial nets." Advances in Neural Information Processing Systems. 2016.

Sample outputs for digits 0 to 9:

Stacked GAN

Huang, Xun, et al. "Stacked generative adversarial networks." IEEE Conference on Computer Vision and Pattern Recognition (CVPR). Vol. 2. 2017.

Sample outputs for digits 0 to 9:

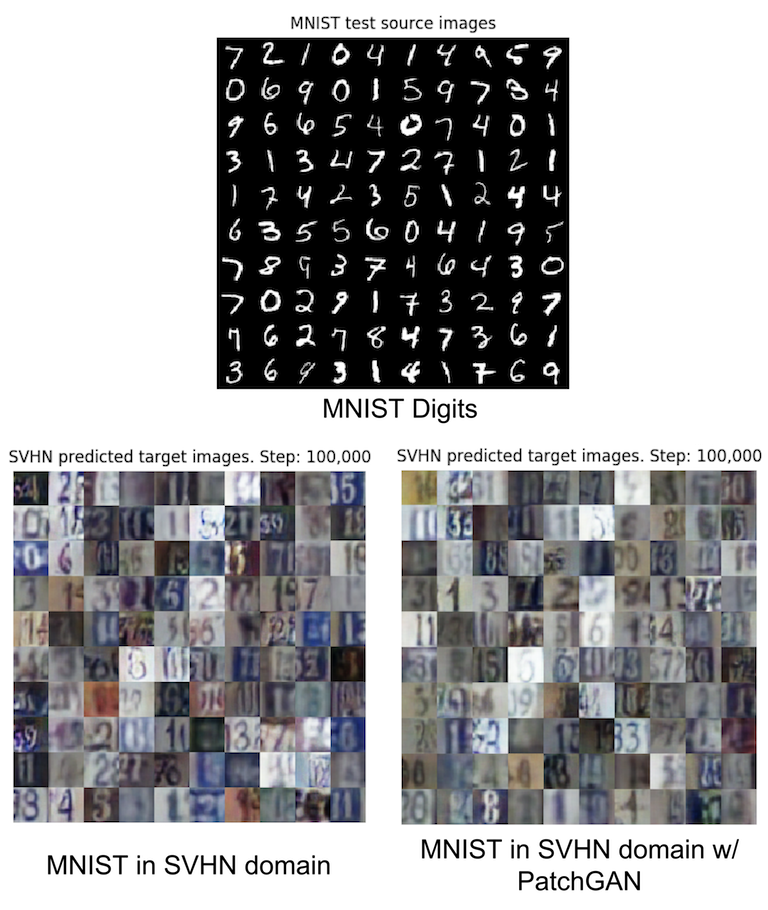

CycleGAN

Zhu, Jun-Yan, et al. "Unpaired Image-to-Image Translation Using Cycle-Consistent Adversarial Networks." 2017 IEEE International Conference on Computer Vision (ICCV). IEEE, 2017.

Sample outputs for random CIFAR10 images:

Sample outputs for MNIST to SVHN:

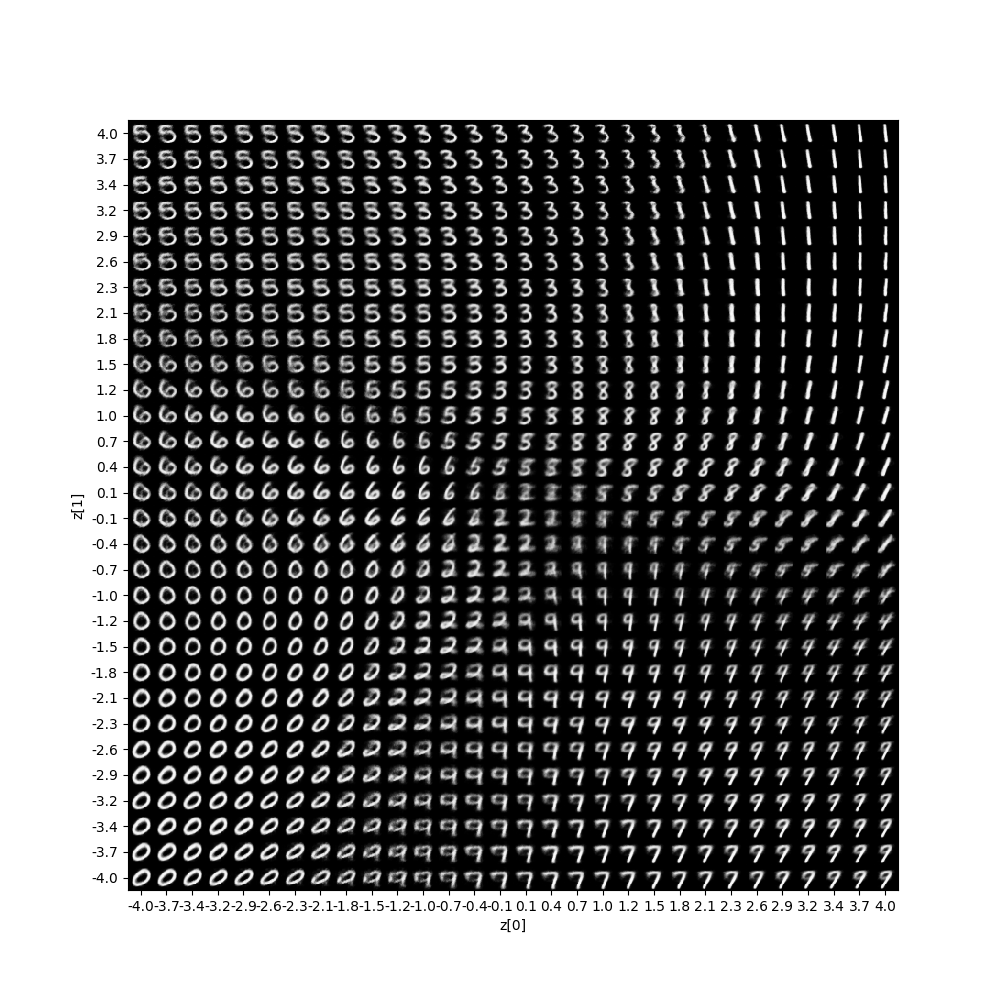

VAE MLP MNIST

VAE CNN MNIST

Conditional VAE and Beta VAE

Kingma, Diederik P., and Max Welling. "Auto-encoding Variational Bayes." arXiv preprint arXiv:1312.6114 (2013).

Sohn, Kihyuk, Honglak Lee, and Xinchen Yan. "Learning structured output representation using deep conditional generative models." Advances in Neural Information Processing Systems. 2015.

Higgins, Irina, et al. "β-VAE: Learning basic visual concepts with a constrained variational framework." ICLR, 2017.

Generated MNIST by navigating the latent space:

Q-Learning

Q-Learning on Frozen Lake Environment

DQN and DDQN on Cartpole Environment

Mnih, Volodymyr, et al. "Human-level control through deep reinforcement learning." Nature 518.7540 (2015): 529.
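
For readers who want a feel for the tabular Q-Learning update before opening the chapter code, here is a minimal sketch. It is not the repository's implementation. The toy 1-D chain environment, the function name `q_learning_chain`, and all hyperparameter values are assumptions chosen for illustration, and the example uses only the Python standard library rather than Frozen Lake.

```python
import random


def q_learning_chain(num_states=5, episodes=200, alpha=0.5, gamma=0.9,
                     epsilon=0.1, seed=0):
    """Tabular Q-Learning on a toy 1-D chain (hypothetical environment).

    States are 0..num_states-1. Action 0 moves left, action 1 moves right.
    Reaching the last state gives reward 1 and ends the episode.
    """
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(num_states)]
    goal = num_states - 1
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap the episode length
            # Epsilon-greedy action selection with random tie-breaking.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            next_state = max(0, state - 1) if action == 0 else state + 1
            done = next_state == goal
            reward = 1.0 if done else 0.0
            # Q-Learning update: bootstrap from the best next action.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
            if done:
                break
    return q


if __name__ == "__main__":
    for state, row in enumerate(q_learning_chain()):
        print(state, [round(v, 3) for v in row])
```

After training, the "right" action should carry the larger value in every non-terminal state, since it is the only way to reach the rewarding end of the chain.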

DQN on Cartpole Environment:

REINFORCE, REINFORCE with Baseline, Actor-Critic, A2C

Sutton and Barto, Reinforcement Learning: An Introduction

Mnih, Volodymyr, et al. "Asynchronous methods for deep reinforcement learning." International conference on machine learning. 2016.
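
The policy gradient methods listed above weight each action by the return that followed it. The sketch below shows that return computation in plain Python. It is an illustrative assumption rather than the repository's code: the function names are hypothetical, and the constant mean baseline is a simplification swapped in for a learned baseline.

```python
def discounted_returns(rewards, gamma=0.99):
    """Return G_t = r_t + gamma * r_{t+1} + ... for every step t."""
    returns = [0.0] * len(rewards)
    running = 0.0
    # Accumulate from the last step backwards so each G_t reuses G_{t+1}.
    for t in reversed(range(len(rewards))):
        running = rewards[t] + gamma * running
        returns[t] = running
    return returns


def subtract_baseline(returns):
    """Center returns on their mean, a simple constant baseline."""
    mean = sum(returns) / len(returns)
    return [g - mean for g in returns]


if __name__ == "__main__":
    returns = discounted_returns([1.0, 1.0, 1.0], gamma=0.5)
    print(returns)  # [1.75, 1.5, 1.0]
    print(subtract_baseline(returns))
```

Subtracting a baseline leaves the expected gradient unchanged while typically reducing its variance, which is the motivation for the "with Baseline" variant.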

Policy Gradient on MountainCar Continuous Environment:

If you find this work useful, please cite:

    @book{atienza2018advanced,
      title={Advanced Deep Learning with Keras},
      author={Atienza, Rowel},
      year={2018},
      publisher={Packt Publishing Ltd}
    }