MegaBlocks is a lightweight library for mixture-of-experts (MoE) training. The core of the system is efficient "dropless-MoE" (dMoE, paper) and standard MoE layers.

MegaBlocks is built on top of Megatron-LM, where we support data, expert and pipeline parallel training of MoEs. We're working on extending more frameworks to support MegaBlocks.

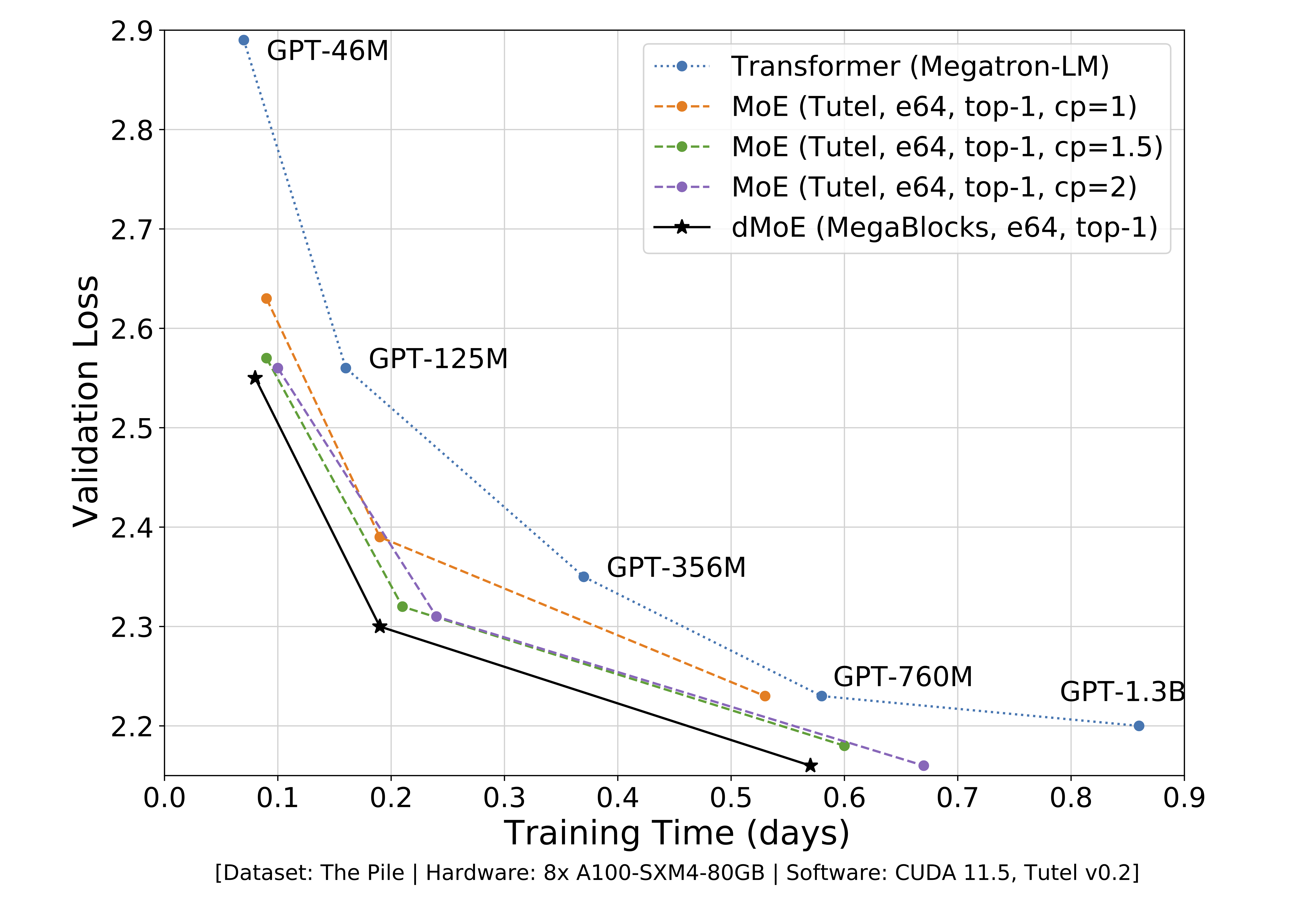

MegaBlocks dMoEs outperform MoEs trained with Tutel by up to 40%, measured against Tutel's best-performing `capacity_factor` configuration. MegaBlocks dMoEs use a reformulation of MoEs in terms of block-sparse operations, which allows us to avoid token dropping without sacrificing hardware efficiency. In addition to being faster, MegaBlocks simplifies MoE training by removing the `capacity_factor` hyperparameter altogether. Compared to dense Transformers trained with Megatron-LM, MegaBlocks dMoEs can accelerate training by as much as 2.4x. Check out our paper for more details!
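To make the token-dropping issue concrete, here is a minimal pure-Python sketch. It is not MegaBlocks code: the function names (`expert_capacity`, `route_with_capacity`, `route_dropless`) and the capacity formula are illustrative assumptions based on a common formulation of capacity-based routing. It contrasts a fixed per-expert capacity with dropless routing on a toy, imbalanced assignment.

```python
import math
from collections import Counter


def expert_capacity(num_tokens, num_experts, capacity_factor, top_k=1):
    """Per-expert token limit in a capacity-based MoE (assumed common formulation)."""
    return math.ceil(capacity_factor * num_tokens * top_k / num_experts)


def route_with_capacity(assignments, num_experts, capacity_factor):
    """Keep at most `capacity` tokens per expert; return (kept, dropped) token indices."""
    capacity = expert_capacity(len(assignments), num_experts, capacity_factor)
    load = Counter()
    kept, dropped = [], []
    for token, expert in enumerate(assignments):
        if load[expert] < capacity:
            load[expert] += 1
            kept.append(token)
        else:
            dropped.append(token)  # this token is skipped by the expert layer
    return kept, dropped


def route_dropless(assignments, num_experts):
    """Dropless routing: every token is kept, so experts receive variable-sized groups."""
    groups = [[] for _ in range(num_experts)]
    for token, expert in enumerate(assignments):
        groups[expert].append(token)
    return groups


# Toy example: 8 tokens, 4 experts, expert 0 is oversubscribed.
assignments = [0, 0, 0, 0, 0, 1, 2, 3]
kept, dropped = route_with_capacity(assignments, num_experts=4, capacity_factor=1.0)
groups = route_dropless(assignments, num_experts=4)

print("kept:", kept)        # tokens processed under the capacity limit
print("dropped:", dropped)  # tokens lost to the capacity limit
print("dropless groups:", groups)
```

With a capacity factor of 1.0 each expert accepts at most two tokens here, so three of the five tokens routed to expert 0 are dropped. Raising the capacity factor avoids the drops but pads the under-used experts. The dropless variant keeps every token at the cost of uneven group sizes, which is the case that block-sparse operations are meant to handle efficiently.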

NOTE: This assumes you have numpy and torch installed.

Training models with Megatron-LM: We recommend using NGC's `nvcr.io/nvidia/pytorch:23.09-py3` PyTorch container. The Dockerfile builds on this image with additional dependencies. To build the image, run `docker build . -t megablocks-dev` and then `bash docker.sh` to launch the container. Once inside the container, install MegaBlocks with `pip install .`. See Usage for instructions on training MoEs with MegaBlocks + Megatron-LM.

Using MegaBlocks in other packages: To install the MegaBlocks package for use in other frameworks, run `pip install megablocks`. For example, Mixtral-8x7B can be run with vLLM + MegaBlocks with this installation method.

Extras: MegaBlocks has optional dependencies that enable additional features.

Installing `megablocks[quant]` enables configurable quantization of saved activations in the dMoE layer to save memory during training. The degree of quantization is controlled via the `quantize_inputs_num_bits`, `quantize_rematerialize_num_bits` and `quantize_scatter_num_bits` arguments.
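As a rough illustration of what a "number of bits" setting trades off, the following sketch applies generic symmetric uniform quantization to a list of activations and measures the round-trip error. This is an assumption-laden toy and not the scheme MegaBlocks uses: the `quantize` and `dequantize` helpers are hypothetical names, and the actual behavior of the `quantize_*_num_bits` arguments should be taken from the library itself.

```python
def quantize(values, num_bits):
    """Map floats to signed integer codes using one shared scale (requires num_bits >= 2)."""
    qmax = 2 ** (num_bits - 1) - 1           # e.g. 127 for 8 bits, 7 for 4 bits
    scale = max(abs(v) for v in values) / qmax or 1.0  # fall back to 1.0 for all-zero input
    return [round(v / scale) for v in values], scale


def dequantize(codes, scale):
    """Recover approximate floats from integer codes."""
    return [c * scale for c in codes]


def max_error(values, num_bits):
    """Largest absolute round-trip error for a given bit width."""
    codes, scale = quantize(values, num_bits)
    restored = dequantize(codes, scale)
    return max(abs(a - b) for a, b in zip(values, restored))


# Hypothetical saved activations.
activations = [0.5, -1.0, 0.25, 0.0, 0.9, -0.33]
for bits in (8, 4):
    print(bits, "bits -> max round-trip error", max_error(activations, bits))
```

Fewer bits mean a coarser grid and a larger worst-case error (at most half the scale per value), in exchange for less memory held between the forward and backward passes.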

Installing `megablocks[gg]` enables dMoE computation with grouped GEMM. This feature is enabled by setting the `grouped_mlp` argument to the dMoE layer. This is currently our recommended path for Hopper-generation GPUs.
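For readers unfamiliar with the term, a grouped GEMM multiplies several differently sized groups of rows by their own weight matrices in one operation. The sketch below shows only those semantics in plain Python, with hypothetical helper names (`matmul`, `grouped_gemm`). A real grouped GEMM kernel performs the same computation on the GPU without a Python loop, and this is not the MegaBlocks implementation.

```python
def matmul(a, b):
    """Plain (rows x k) @ (k x cols) matrix product on nested lists."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def grouped_gemm(tokens, expert_weights, tokens_per_expert):
    """Multiply each contiguous group of token rows by its own expert's weights.

    Assumes `tokens` is already sorted by expert, so group i occupies the
    next `tokens_per_expert[i]` rows. Groups may have different sizes,
    including zero.
    """
    out, start = [], 0
    for weight, count in zip(expert_weights, tokens_per_expert):
        group = tokens[start:start + count]
        if group:
            out.extend(matmul(group, weight))
        start += count
    return out


# Toy example: 4 tokens with hidden size 2, two experts with uneven load.
tokens = [[1, 2], [3, 4], [5, 6], [7, 8]]
expert_weights = [
    [[2, 0], [0, 2]],  # expert 0 doubles its input
    [[0, 1], [1, 0]],  # expert 1 swaps the two features
]
tokens_per_expert = [3, 1]

print(grouped_gemm(tokens, expert_weights, tokens_per_expert))
```

Because group sizes come straight from the router, no padding to a fixed capacity is needed, which is why this formulation pairs naturally with dropless routing.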

MegaBlocks can be installed with all dependencies via the `megablocks[all]` package.

We provide scripts for pre-training Transformer MoE and dMoE language models under the top-level directory. The quickest way to get started is to use one of the experiment launch scripts. These scripts require a dataset in Megatron-LM's format, which can be created by following their instructions.

@article{megablocks,
  title={{MegaBlocks: Efficient Sparse Training with Mixture-of-Experts}},
  author={Trevor Gale and Deepak Narayanan and Cliff Young and Matei Zaharia},
  journal={Proceedings of Machine Learning and Systems},
  volume={5},
  year={2023}
}