This is the official PyTorch implementation of the paper Learning Trajectory-Aware Transformer for Video Super-Resolution.

- Introduction

- Requirements and dependencies

- Model and results

- Dataset

- Test

- Train

- Related projects

- Citation

- Acknowledgment

- Contact

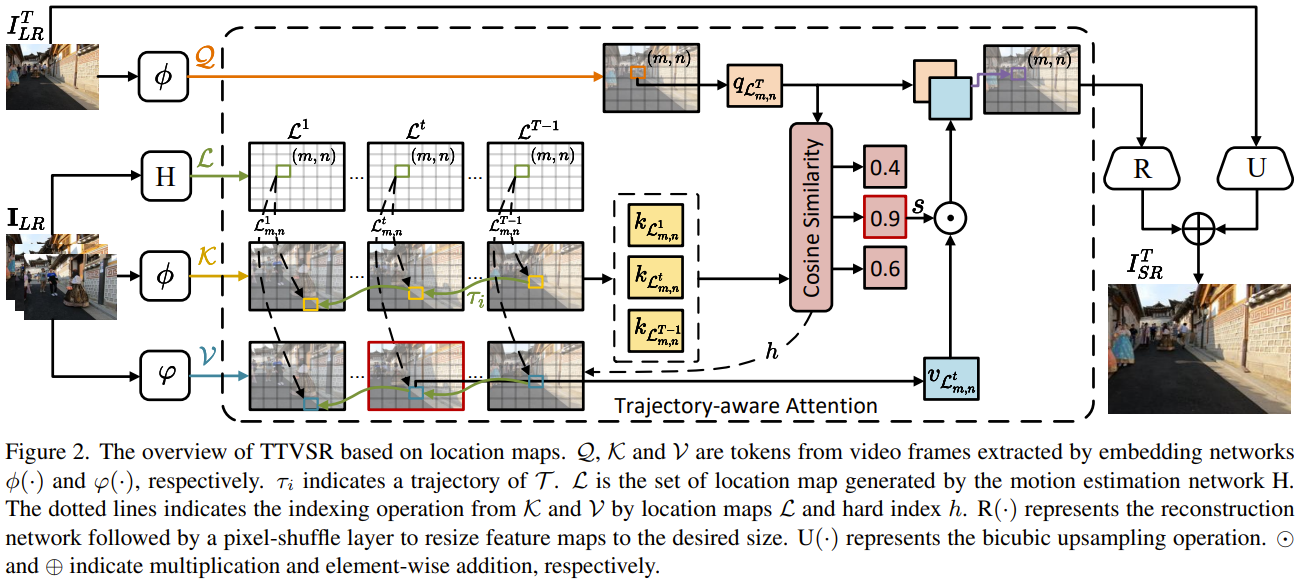

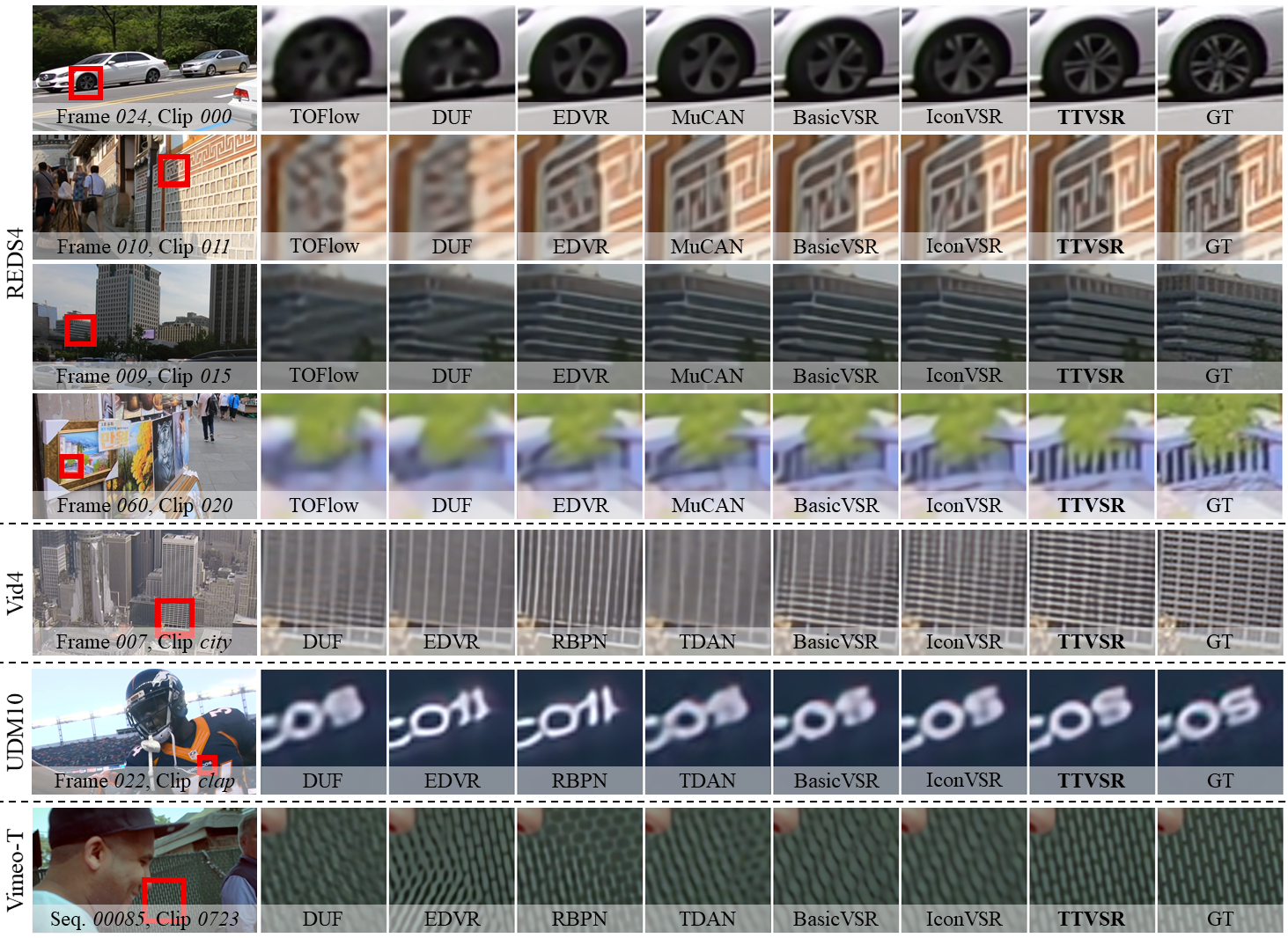

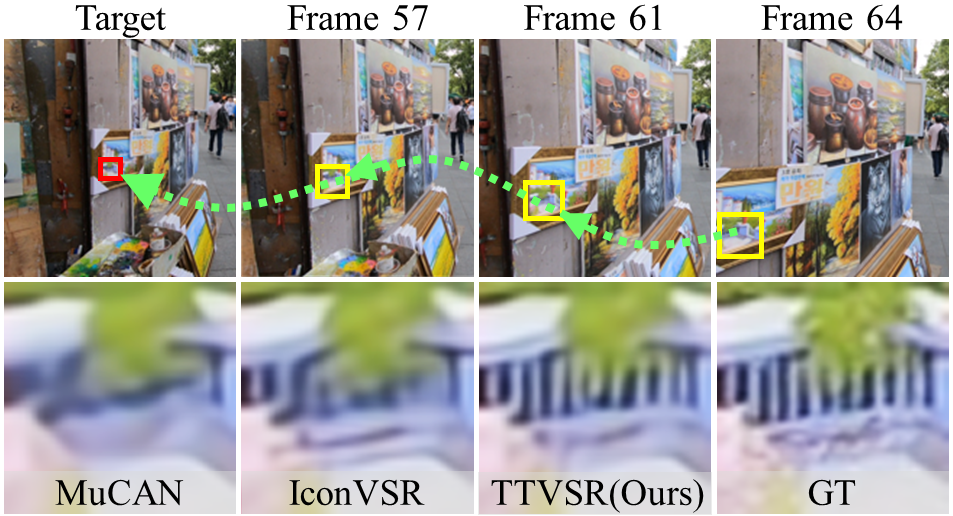

We propose TTVSR, an approach to video super-resolution that leverages long-range frame dependencies. TTVSR introduces the Transformer architecture to video super-resolution and formulates video frames into pre-aligned trajectories of visual tokens, so that attention is calculated along trajectories.

The trajectory-aware Transformer is one of the first works to introduce the Transformer into video super-resolution. TTVSR reduces computational cost and enables long-range modeling in videos, and it outperforms existing state-of-the-art methods on four widely used VSR benchmarks.
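To make the idea of attention along a trajectory concrete, here is a toy sketch in plain Python. It is not the code in this repository and it does not reproduce the attention design of the paper: it only illustrates generic scaled dot-product softmax attention restricted to the tokens on one trajectory. The function name `trajectory_attention` and its arguments are hypothetical.

```python
import math


def trajectory_attention(query, traj_keys, traj_values):
    """Softmax attention of one query token over the tokens on its trajectory.

    query:       feature vector of a token in the current frame (length d)
    traj_keys:   T key vectors, one per earlier frame on the same trajectory
    traj_values: T value vectors aligned with traj_keys
    Returns (output_vector, attention_weights).
    """
    d = len(query)
    # One scaled dot-product score per trajectory token: T scores in total.
    scores = [
        sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
        for key in traj_keys
    ]
    # Numerically stable softmax over the T scores.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    # Weighted sum of the value vectors.
    dim_v = len(traj_values[0])
    output = [
        sum(w * value[i] for w, value in zip(weights, traj_values))
        for i in range(dim_v)
    ]
    return output, weights


if __name__ == "__main__":
    out, w = trajectory_attention(
        [1.0, 0.0], [[1.0, 0.0], [0.0, 1.0]], [[1.0], [0.0]]
    )
    print(out, w)
```

In this simplified picture, a query token is compared with T tokens (one per frame on its trajectory) rather than with every token of every frame, which is where the reduction in attention cost comes from.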

- python 3.7 (Anaconda recommended)

- pytorch == 1.9.0

- torchvision == 0.10.0

- opencv-python == 4.5.3

- mmcv-full == 1.3.9

- scipy == 1.7.3

- scikit-image == 0.19.0

- lmdb == 1.2.1

- yapf == 0.31.0

- tensorboard == 2.6.0
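If you want to confirm that an existing environment matches the versions listed above, a small check along the following lines may help. It is a sketch, not part of the repository: the helper name `check_pins` is hypothetical, the package names are assumed to be the PyPI distribution names (for example `torch` for pytorch), and a local build suffix such as `+cu111` or a fourth version component is treated as a match.

```python
try:
    from importlib.metadata import PackageNotFoundError, version  # Python 3.8+
except ImportError:
    # Python 3.7 needs the importlib_metadata backport installed.
    from importlib_metadata import PackageNotFoundError, version

# Versions copied from the requirements list; keys are assumed PyPI names.
PINS = {
    "torch": "1.9.0",
    "torchvision": "0.10.0",
    "opencv-python": "4.5.3",
    "mmcv-full": "1.3.9",
    "scipy": "1.7.3",
    "scikit-image": "0.19.0",
    "lmdb": "1.2.1",
    "yapf": "0.31.0",
    "tensorboard": "2.6.0",
}


def _matches(wanted, found):
    # Accept exact matches, extra components ("4.5.3.56") and local builds ("1.9.0+cu111").
    return (
        found == wanted
        or found.startswith(wanted + ".")
        or found.startswith(wanted + "+")
    )


def check_pins(pins, lookup=version):
    """Return {package: (wanted, found)} for every missing or mismatched package."""
    mismatches = {}
    for name, wanted in pins.items():
        try:
            found = lookup(name)
        except PackageNotFoundError:
            found = None  # package is not installed
        if found is None or not _matches(wanted, found):
            mismatches[name] = (wanted, found)
    return mismatches


if __name__ == "__main__":
    for name, (wanted, found) in check_pins(PINS).items():
        print(f"{name}: wanted {wanted}, found {found}")
```

An empty result means every listed package is installed at the expected version.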

Pre-trained models can be downloaded from OneDrive, Google Drive, and Baidu Cloud (nbgc).

- TTVSR_REDS.pth: trained on the REDS dataset with BI degradation.

- TTVSR_Vimeo90K.pth: trained on the Vimeo-90K dataset with BD degradation.

The output results on REDS4, Vid4 and UDM10 can be downloaded from OneDrive, Google Drive, and Baidu Cloud (nbgc).

- Training set

  - REDS dataset. We regroup the training and validation datasets into one folder. The original training dataset has 240 clips, numbered 000 to 239. The clips of the original validation dataset are renamed to 240 through 269.

    - Make the REDS structure be:

          ├────REDS
              ├────train
                  ├────train_sharp
                      ├────000
                      ├────...
                      ├────269
                  ├────train_sharp_bicubic
                      ├────X4
                          ├────000
                          ├────...
                          ├────269

  - Vimeo-90K dataset. Download the original training + test set and use the script 'degradation/BD_degradation.m' (run in MATLAB) to generate the low-resolution images. The `sep_trainlist.txt` file in the downloaded zip file lists the training samples.

    - Make the Vimeo-90K structure be:

          ├────vimeo_septuplet
              ├────sequences
                  ├────00001
                  ├────...
                  ├────00096
              ├────sequences_BD
                  ├────00001
                  ├────...
                  ├────00096
              ├────sep_trainlist.txt
              ├────sep_testlist.txt
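The REDS regrouping described above can be scripted. The sketch below assumes that the validation clips are numbered from 000 upward in their own folder, so that adding an offset of 240 yields the names 240 to 269. The function name `regroup_reds_val` and the folder names in the usage note are assumptions, not part of this repository.

```python
import shutil
from pathlib import Path


def regroup_reds_val(val_dir, train_dir, offset=240):
    """Move validation clips into the training folder under shifted names.

    A clip folder named "000" in val_dir becomes "240" in train_dir, and so on.
    Returns the list of (old_name, new_name) pairs that were moved.
    """
    val_dir, train_dir = Path(val_dir), Path(train_dir)
    # Collect the clip folders first so that moving them does not disturb iteration.
    clips = sorted(p for p in val_dir.iterdir() if p.is_dir() and p.name.isdigit())
    moved = []
    for clip in clips:
        new_name = f"{int(clip.name) + offset:03d}"
        target = train_dir / new_name
        if target.exists():
            raise FileExistsError(f"{target} already exists; refusing to overwrite")
        shutil.move(str(clip), str(target))
        moved.append((clip.name, new_name))
    return moved
```

You would call it once for the sharp frames and once for the bicubic X4 frames, for example `regroup_reds_val("REDS/val/val_sharp", "REDS/train/train_sharp")`, where the `val` paths are placeholders for wherever the validation download was unpacked.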

- Testing set

  - REDS4 dataset. The 000, 011, 015, 020 clips from the original training dataset of REDS.

  - Vimeo-90K dataset. The `sep_testlist.txt` file in the downloaded zip file lists the testing samples.

  - Vid4 and UDM10 datasets. Use the script 'degradation/BD_degradation.m' (run in MATLAB) to generate the low-resolution images.

    - Make the Vid4 and UDM10 structure be:

          ├────VID4
              ├────BD
                  ├────calendar
                  ├────...
              ├────HR
                  ├────calendar
                  ├────...
          ├────UDM10
              ├────BD
                  ├────archpeople
                  ├────...
              ├────HR
                  ├────archpeople
                  ├────...
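Because REDS4 is drawn from the same folder as the training clips, it can help to list explicitly which clip names belong to which split. The sketch below enumerates the 270 regrouped clips and separates the four REDS4 clips. Whether the provided configs exclude these four clips from training is an assumption based on common practice and should be checked against the config files. `split_reds_clips` is a hypothetical helper.

```python
# The four REDS4 test clips named in the dataset description.
REDS4_CLIPS = ("000", "011", "015", "020")


def split_reds_clips(num_clips=270, test_clips=REDS4_CLIPS):
    """Return (train_clips, test_clips) as lists of zero-padded clip names."""
    all_clips = [f"{i:03d}" for i in range(num_clips)]
    test = [c for c in all_clips if c in test_clips]
    train = [c for c in all_clips if c not in test_clips]
    return train, test


if __name__ == "__main__":
    train, test = split_reds_clips()
    print(len(train), "training clips;", "test clips:", test)
```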

- Clone this GitHub repo

      git clone https://github.com/researchmm/TTVSR.git
      cd TTVSR

- Download pre-trained weights (OneDrive | Google Drive | Baidu Cloud (nbgc)) under `./checkpoint`

- Prepare the testing dataset and modify "dataset_root" in `configs/TTVSR_reds4.py` and `configs/TTVSR_vimeo90k.py`

- Run test

      # REDS model
      CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 ./tools/dist_test.sh configs/TTVSR_reds4.py checkpoint/TTVSR_REDS.pth 8 [--save-path 'save_path']

      # Vimeo model
      CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 ./tools/dist_test.sh configs/TTVSR_vimeo90k.py checkpoint/TTVSR_Vimeo90K.pth 8 [--save-path 'save_path']

- The results are saved in `save_path`.
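The test commands above differ only in the config, the checkpoint, the GPU list and the optional save path. If you launch them from Python, a small wrapper such as the following keeps the GPU count and `CUDA_VISIBLE_DEVICES` consistent. This is a sketch: `build_test_command` and `launch` are hypothetical helpers, and the only script and flag used are the ones shown in the commands above.

```python
import os
import subprocess


def build_test_command(config, checkpoint, gpu_ids, save_path=None):
    """Return (argv, env) for ./tools/dist_test.sh on the given GPUs."""
    gpu_ids = list(gpu_ids)
    # The last positional argument is the number of GPUs, as in the commands above.
    argv = ["./tools/dist_test.sh", config, checkpoint, str(len(gpu_ids))]
    if save_path is not None:
        argv += ["--save-path", save_path]
    # Copy the current environment and restrict the visible devices.
    env = dict(os.environ, CUDA_VISIBLE_DEVICES=",".join(str(g) for g in gpu_ids))
    return argv, env


def launch(argv, env):
    """Run the command from the repository root and raise if it fails."""
    subprocess.run(argv, env=env, check=True)


if __name__ == "__main__":
    argv, env = build_test_command(
        "configs/TTVSR_reds4.py", "checkpoint/TTVSR_REDS.pth", range(8), "save_path"
    )
    print("CUDA_VISIBLE_DEVICES=" + env["CUDA_VISIBLE_DEVICES"], " ".join(argv))
```

Calling `launch(argv, env)` from the `TTVSR` directory would then start the run.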

- Clone this GitHub repo

      git clone https://github.com/researchmm/TTVSR.git
      cd TTVSR

- Prepare the training dataset and modify "dataset_root" in `configs/TTVSR_reds4.py` and `configs/TTVSR_vimeo90k.py`

- Run training

      # REDS
      CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 ./tools/dist_train.sh configs/TTVSR_reds4.py 8

      # Vimeo
      CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7 ./tools/dist_train.sh configs/TTVSR_vimeo90k.py 8

- The training results are saved in `./ttvsr_reds4` and `./ttvsr_vimeo90k` (this can also be changed by modifying "work_dir" in `configs/TTVSR_reds4.py` and `configs/TTVSR_vimeo90k.py`)
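Editing "dataset_root" and "work_dir" by hand is usually enough. If you need to script it, the sketch below rewrites a single assignment in the text of a config file. It assumes each setting appears as a one-line, top-level assignment such as `dataset_root = '...'`; that layout is an assumption about the config files, and `set_config_value` is a hypothetical helper.

```python
import re


def set_config_value(config_text, name, value):
    """Replace the first top-level `name = ...` line with a new string value."""
    pattern = re.compile(rf"^{re.escape(name)}\s*=.*$", flags=re.MULTILINE)
    replacement = f"{name} = {value!r}"
    # A function replacement avoids backslash escapes in `value` being interpreted.
    new_text, count = pattern.subn(lambda match: replacement, config_text, count=1)
    if count == 0:
        raise KeyError(f"no top-level assignment to {name!r} found")
    return new_text


if __name__ == "__main__":
    text = "dataset_root = '/old/path'\nwork_dir = './ttvsr_reds4'\n"
    print(set_config_value(text, "dataset_root", "/data/REDS"))
```

To apply it, read the config file into a string, call the function, and write the result back, keeping a copy of the original file.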

We also sincerely recommend some other excellent works related to ours. ✨

- FTVSR: Learning Spatiotemporal Frequency-Transformer for Compressed Video Super-Resolution

- TTSR: Learning Texture Transformer Network for Image Super-Resolution

- CKDN: Learning Conditional Knowledge Distillation for Degraded-Reference Image Quality Assessment

If you find the code and pre-trained models useful for your research, please consider citing our paper. 😊

    @InProceedings{liu2022learning,
        author = {Liu, Chengxu and Yang, Huan and Fu, Jianlong and Qian, Xueming},
        title = {Learning Trajectory-Aware Transformer for Video Super-Resolution},
        booktitle = {CVPR},
        year = {2022},
        month = {June}
    }

This code is built on MMEditing. We thank the authors of BasicVSR for sharing their code.

If you encounter any problems, please describe them in an issue or contact:

- Chengxu Liu: liuchx97@gmail.com