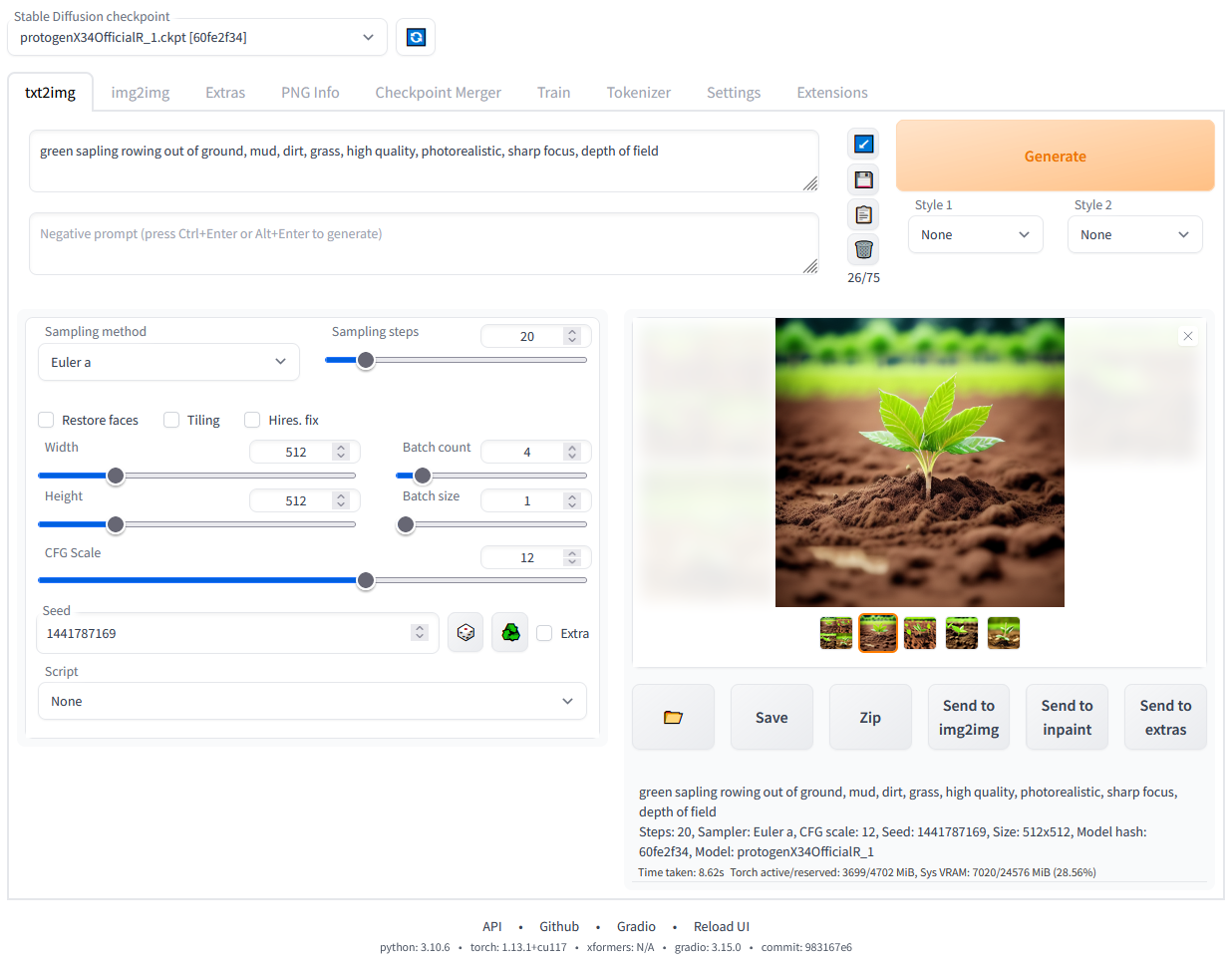

Stable Diffusion web UI

A browser interface based on Gradio library for Stable Diffusion.

Features

Detailed feature showcase with images:

- Original txt2img and img2img modes

- One click install and run script (but you still must install python and git)

- Outpainting

- Inpainting

- Color Sketch

- Prompt Matrix

- Stable Diffusion Upscale

- Attention, specify parts of text that the model should pay more attention to

- a man in a ((tuxedo)) - will pay more attention to tuxedo

- a man in a (tuxedo:1.21) - alternative syntax

- select text and press Ctrl+Up or Ctrl+Down (or Command+Up or Command+Down if you're on macOS) to automatically adjust attention to selected text (code contributed by anonymous user)

- Loopback, run img2img processing multiple times

- X/Y/Z plot, a way to draw a 3-dimensional plot of images with different parameters

- Textual Inversion

- have as many embeddings as you want and use any names you like for them

- use multiple embeddings with different numbers of vectors per token

- works with half precision floating point numbers

- train embeddings on 8GB of VRAM (also reports of 6GB working)

- Extras tab with:

- GFPGAN, neural network that fixes faces

- CodeFormer, face restoration tool as an alternative to GFPGAN

- RealESRGAN, neural network upscaler

- ESRGAN, neural network upscaler with a lot of third party models

- SwinIR and Swin2SR (see here), neural network upscalers

- LDSR, Latent diffusion super resolution upscaling

- Resizing aspect ratio options

- Sampling method selection

- Adjust sampler eta values (noise multiplier)

- More advanced noise setting options

- Interrupt processing at any time

- 4GB video card support (also reports of 2GB working)

- Correct seeds for batches

- Live prompt token length validation

- Generation parameters

- parameters you used to generate images are saved with that image

- in PNG chunks for PNG, in EXIF for JPEG

- can drag the image to PNG info tab to restore generation parameters and automatically copy them into UI

- can be disabled in settings

- drag and drop an image/text-parameters to promptbox

- Read Generation Parameters Button, loads parameters in promptbox to UI

- Settings page

- Running arbitrary python code from UI (must run with --allow-code to enable)

- Mouseover hints for most UI elements

- Possible to change defaults/min/max/step values for UI elements via text config

- Tiling support, a checkbox to create images that can be tiled like textures

- Progress bar and live image generation preview

- Can use a separate neural network to produce previews with almost no VRAM or compute requirement

- Negative prompt, an extra text field that allows you to list what you don't want to see in the generated image

- Styles, a way to save parts of a prompt and easily apply them via dropdown later

- Variations, a way to generate the same image but with tiny differences

- Seed resizing, a way to generate the same image but at a slightly different resolution

- CLIP interrogator, a button that tries to guess prompt from an image

- Prompt Editing, a way to change prompt mid-generation, say to start making a watermelon and switch to anime girl midway

- Batch Processing, process a group of files using img2img

- Img2img Alternative, reverse Euler method of cross attention control

- Highres Fix, a convenience option to produce high resolution pictures in one click without usual distortions

- Reloading checkpoints on the fly

- Checkpoint Merger, a tab that allows you to merge up to 3 checkpoints into one

- Custom scripts with many extensions from community

- Composable-Diffusion, a way to use multiple prompts at once

- separate prompts using uppercase AND

- also supports weights for prompts: a cat :1.2 AND a dog AND a penguin :2.2

- No token limit for prompts (original stable diffusion lets you use up to 75 tokens)

- DeepDanbooru integration, creates danbooru style tags for anime prompts

- xformers, major speed increase for select cards (add --xformers to commandline args)

- via extension: History tab: view, direct and delete images conveniently within the UI

- Generate forever option

- Training tab

- hypernetworks and embeddings options

- Preprocessing images: cropping, mirroring, autotagging using BLIP or deepdanbooru (for anime)

- Clip skip

- Hypernetworks

- Loras (same as Hypernetworks but more pretty)

- A separate UI where you can choose, with preview, which embeddings, hypernetworks or Loras to add to your prompt

- Can select to load a different VAE from settings screen

- Estimated completion time in progress bar

- API

- Support for dedicated inpainting model by RunwayML

- via extension: Aesthetic Gradients, a way to generate images with a specific aesthetic by using clip images embeds (implementation of https://github.com/vicgalle/stable-diffusion-aesthetic-gradients)

- Stable Diffusion 2.0 support - see wiki for instructions

- Alt-Diffusion support - see wiki for instructions

- Now without any bad letters!

- Load checkpoints in safetensors format

- Eased resolution restriction: generated image's dimensions must be a multiple of 8 rather than 64

- Now with a license!

- Reorder elements in the UI from settings screen
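In the attention syntax from the feature list, each pair of round brackets multiplies the weight of the enclosed text by 1.1, which is why ((tuxedo)) and (tuxedo:1.21) mean the same thing (1.1 × 1.1 = 1.21). A minimal sketch of that arithmetic, handling only a single bracketed phrase; `attention_weight` is a hypothetical helper, not the webui's own prompt parser (which also handles square brackets, escapes and mixed nesting).

```python
import re

# Multiplier applied by each pair of round brackets in the attention syntax.
ROUND_BRACKET_MULTIPLIER = 1.1

# "(text:weight)" - the explicit-weight form.
_EXPLICIT = re.compile(r"^\((?P<text>[^():]+):(?P<weight>[0-9]*\.?[0-9]+)\)$")


def attention_weight(fragment: str) -> tuple[str, float]:
    """Return (text, weight) for one fragment such as "((tuxedo))" or "(tuxedo:1.21)".

    Simplified sketch: only a single phrase wrapped in round brackets is
    supported, either nested "((text))" or explicit "(text:weight)".
    """
    fragment = fragment.strip()
    match = _EXPLICIT.match(fragment)
    if match:
        return match.group("text").strip(), float(match.group("weight"))

    depth = 0
    # Peel matching outer bracket pairs, counting how deeply the phrase is nested.
    while fragment.startswith("(") and fragment.endswith(")"):
        fragment = fragment[1:-1]
        depth += 1
    return fragment.strip(), ROUND_BRACKET_MULTIPLIER ** depth


for example in ["tuxedo", "((tuxedo))", "(tuxedo:1.21)"]:
    text, weight = attention_weight(example)
    print(f"{example!r:>16} -> {text} x {weight:.2f}")
```

Both bracketed forms print a weight of 1.21; plain text keeps the neutral weight 1.0.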
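For Composable-Diffusion, the prompt is split on the uppercase keyword AND, and each sub-prompt may carry a trailing :weight. A sketch of only that splitting step, assuming a missing weight means 1.0; `split_composable_prompt` is a hypothetical name and this is not the webui's implementation.

```python
import re

# Sub-prompts are separated by uppercase AND; lowercase "and" stays ordinary text.
_AND_SPLIT = re.compile(r"\bAND\b")
# Optional trailing ":<number>" weight on a sub-prompt, e.g. "a cat :1.2".
_WEIGHT = re.compile(r"^(?P<text>.*?)\s*:\s*(?P<weight>[-+]?\d*\.?\d+)\s*$")


def split_composable_prompt(prompt: str) -> list[tuple[str, float]]:
    """Split "a cat :1.2 AND a dog" into [(sub_prompt, weight), ...]."""
    parts = []
    for chunk in _AND_SPLIT.split(prompt):
        chunk = chunk.strip()
        if not chunk:
            continue
        match = _WEIGHT.match(chunk)
        if match:
            parts.append((match.group("text"), float(match.group("weight"))))
        else:
            parts.append((chunk, 1.0))  # assumed default weight
    return parts


print(split_composable_prompt("a cat :1.2 AND a dog AND a penguin :2.2"))
# [('a cat', 1.2), ('a dog', 1.0), ('a penguin', 2.2)]
```

Matching AND as a whole, case-sensitive word is what lets prompts like "salt and pepper" pass through untouched.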
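Generation parameters in PNGs are stored as a text chunk; in this webui the keyword appears to be "parameters" (treat that as an assumption and check it against your own images). A stdlib-only sketch that lists the uncompressed tEXt chunks of a PNG, which is roughly what the PNG Info tab has to read; it ignores compressed zTXt/iTXt chunks and JPEG EXIF. `read_png_text_chunks` and `make_demo_png` are hypothetical helpers, and the demo text is made up.

```python
import struct
import zlib

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def read_png_text_chunks(data: bytes) -> dict[str, str]:
    """Return {keyword: text} for every uncompressed tEXt chunk in a PNG byte string."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    texts = {}
    pos = len(PNG_SIGNATURE)
    while pos + 8 <= len(data):
        # Each chunk: 4-byte big-endian length, 4-byte type, data, 4-byte CRC.
        length, chunk_type = struct.unpack(">I4s", data[pos:pos + 8])
        body = data[pos + 8:pos + 8 + length]
        if chunk_type == b"tEXt":
            keyword, _, text = body.partition(b"\x00")
            texts[keyword.decode("latin-1")] = text.decode("latin-1")
        if chunk_type == b"IEND":
            break
        pos += 12 + length
    return texts


def _chunk(chunk_type: bytes, body: bytes) -> bytes:
    """Build one PNG chunk including its CRC (used only by the demo below)."""
    crc = zlib.crc32(chunk_type + body) & 0xFFFFFFFF
    return struct.pack(">I", len(body)) + chunk_type + body + struct.pack(">I", crc)


def make_demo_png(parameters: str) -> bytes:
    """Build a 1x1 greyscale PNG carrying a 'parameters' tEXt chunk."""
    ihdr = struct.pack(">IIBBBBB", 1, 1, 8, 0, 0, 0, 0)  # 1x1, 8-bit greyscale
    idat = zlib.compress(b"\x00\x00")  # filter byte 0 + one black pixel
    return (PNG_SIGNATURE
            + _chunk(b"IHDR", ihdr)
            + _chunk(b"tEXt", b"parameters\x00" + parameters.encode("latin-1"))
            + _chunk(b"IDAT", idat)
            + _chunk(b"IEND", b""))


png = make_demo_png("a man in a ((tuxedo))\nSteps: 20, Seed: 1")
print(read_png_text_chunks(png)["parameters"])
```

For a real output image, pass `Path("....png").read_bytes()` (with `from pathlib import Path`) instead of the demo bytes.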

Installation and Running

Make sure the required dependencies are met and follow the instructions available for both NVidia (recommended) and AMD GPUs.

Alternatively, use online services (like Google Colab):

Installation on Windows 10/11 with NVidia-GPUs using release package

- Download sd.webui.zip from v1.0.0-pre and extract its contents.

- Run update.bat.

- Run run.bat.

For more details see Install-and-Run-on-NVidia-GPUs

Automatic Installation on Windows

- Install Python 3.10.6 (newer versions of Python do not support torch), checking "Add Python to PATH".

- Install git.

- Download the stable-diffusion-webui repository, for example by running git clone https://github.com/AUTOMATIC1111/stable-diffusion-webui.git.

- Run webui-user.bat from Windows Explorer as normal, non-administrator, user.

Automatic Installation on Linux

- Install the dependencies:

# Debian-based:

sudo apt install wget git python3 python3-venv

# Red Hat-based:

sudo dnf install wget git python3

# Arch-based:

sudo pacman -S wget git python3

- Navigate to the directory where you would like the webui to be installed and execute the following command:

bash <(wget -qO- https://raw.githubusercontent.com/AUTOMATIC1111/stable-diffusion-webui/master/webui.sh)

- Run webui.sh.

- Check webui-user.sh for options.

Installation on Apple Silicon

Find the instructions here.

Contributing

Here's how to add code to this repo: Contributing

Documentation

The documentation was moved from this README over to the project's wiki.

Credits

Licenses for borrowed code can be found in Settings -> Licenses screen, and also in html/licenses.html file.

- Stable Diffusion - https://github.com/CompVis/stable-diffusion, https://github.com/CompVis/taming-transformers

- k-diffusion - https://github.com/crowsonkb/k-diffusion.git

- GFPGAN - https://github.com/TencentARC/GFPGAN.git

- CodeFormer - https://github.com/sczhou/CodeFormer

- ESRGAN - https://github.com/xinntao/ESRGAN

- SwinIR - https://github.com/JingyunLiang/SwinIR

- Swin2SR - https://github.com/mv-lab/swin2sr

- LDSR - https://github.com/Hafiidz/latent-diffusion

- MiDaS - https://github.com/isl-org/MiDaS

- Ideas for optimizations - https://github.com/basujindal/stable-diffusion

- Cross Attention layer optimization - Doggettx - https://github.com/Doggettx/stable-diffusion, original idea for prompt editing.

- Cross Attention layer optimization - InvokeAI, lstein - https://github.com/invoke-ai/InvokeAI (originally http://github.com/lstein/stable-diffusion)

- Sub-quadratic Cross Attention layer optimization - Alex Birch (Birch-san/diffusers#1), Amin Rezaei (https://github.com/AminRezaei0x443/memory-efficient-attention)

- Textual Inversion - Rinon Gal - https://github.com/rinongal/textual_inversion (we're not using his code, but we are using his ideas).

- Idea for SD upscale - https://github.com/jquesnelle/txt2imghd

- Noise generation for outpainting mk2 - https://github.com/parlance-zz/g-diffuser-bot

- CLIP interrogator idea and borrowing some code - https://github.com/pharmapsychotic/clip-interrogator

- Idea for Composable Diffusion - https://github.com/energy-based-model/Compositional-Visual-Generation-with-Composable-Diffusion-Models-PyTorch

- xformers - https://github.com/facebookresearch/xformers

- DeepDanbooru - interrogator for anime diffusers https://github.com/KichangKim/DeepDanbooru

- Sampling in float32 precision from a float16 UNet - marunine for the idea, Birch-san for the example Diffusers implementation (https://github.com/Birch-san/diffusers-play/tree/92feee6)

- Instruct pix2pix - Tim Brooks (star), Aleksander Holynski (star), Alexei A. Efros (no star) - https://github.com/timothybrooks/instruct-pix2pix

- Security advice - RyotaK

- UniPC sampler - Wenliang Zhao - https://github.com/wl-zhao/UniPC

- TAESD - Ollin Boer Bohan - https://github.com/madebyollin/taesd

- Initial Gradio script - posted on 4chan by an Anonymous user. Thank you Anonymous user.

- (You)

my running notes:

git clone https://github.com/racinmat/stable-diffusion-webui.git

git pull --recurse-submodules

modify webui-user.bat to point to the correct python

set PYTHON there; it can be the base interpreter, since it is only used to run the scripts that create and run the venv

run webui-user.bat; it will start installing and preparing everything. The first run usually takes ~25 minutes. In my case it first tried to install from pip through the company artifactory and timed out, so it should normally be a bit less; most of the time was spent installing gfpgan, clip, open_clip etc.

I ran into this issue:

@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@

@ WARNING: REMOTE HOST IDENTIFICATION HAS CHANGED! @

@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@

IT IS POSSIBLE THAT SOMEONE IS DOING SOMETHING NASTY!

I followed https://levelup.gitconnected.com/how-to-deal-with-the-remote-host-identification-has-changed-message-with-github-1dea015dae8d: in ~/.ssh/known_hosts I removed the github.com row, then in some unrelated temp directory I cloned some repo using the ssh (not https) link, confirmed adding github.com to known hosts, and the issue was resolved
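The standard OpenSSH way to do the known_hosts edit above is `ssh-keygen -R github.com`, which also handles hashed entries. For reference, a Python sketch of the same manual edit, assuming plain (non-hashed) entries and writing a `.bak` backup first; `remove_host` is a hypothetical helper. A likely cause of the warning at that time is that GitHub replaced its RSA SSH host key in March 2023, so it is worth comparing the fingerprint shown on the next connect with the ones GitHub publishes before accepting it.

```python
import shutil
from pathlib import Path


def remove_host(known_hosts: Path, host: str) -> int:
    """Remove lines for `host` from an OpenSSH known_hosts file; return how many were removed.

    Simplified: only plain entries whose first field lists `host` are matched;
    hashed lines ("|1|...") and "@cert-authority"/"@revoked" lines are kept.
    A copy of the original file is saved as <file>.bak first.
    """
    lines = known_hosts.read_text(encoding="utf-8").splitlines(keepends=True)
    kept, removed = [], 0
    for line in lines:
        fields = line.split()
        # First field is a comma-separated host list, e.g. "github.com,140.82.121.4".
        hosts = fields[0].split(",") if fields and not line.startswith("#") else []
        if host in hosts:
            removed += 1
        else:
            kept.append(line)
    shutil.copy2(known_hosts, known_hosts.with_name(known_hosts.name + ".bak"))
    known_hosts.write_text("".join(kept), encoding="utf-8")
    return removed


if __name__ == "__main__":
    print(remove_host(Path.home() / ".ssh" / "known_hosts", "github.com"), "line(s) removed")
```

Afterwards, `ssh -T git@github.com` is enough to be asked about the new host key; cloning a repo is not required.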

after starting the webui-user.bat I got

AssertionError: Torch is not able to use GPU; add --skip-torch-cuda-test to COMMANDLINE_ARGS variable to disable this check

according to https://stackoverflow.com/questions/60987997/why-torch-cuda-is-available-returns-false-even-after-installing-pytorch-with I need to check versions of compute capability, cuda etc.

to activate the venv for local usage in cli, I ran .\venv\Scripts\activate.bat

running pip freeze showed torch==1.13.1+cu117.

The GPU should support it.

Checking driver version:

Device manager showed I have 31.0.15.2849

Nvidia control panel showed version 528.49

https://docs.nvidia.com/cuda/cuda-toolkit-release-notes/index.html shows it should be supported
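To collect the facts from that Stack Overflow answer in one go from inside the activated venv, a small diagnostic sketch. It only uses standard torch attributes (`torch.__version__`, `torch.version.cuda`, `torch.cuda.is_available()`, `torch.cuda.get_device_name()`, `torch.cuda.get_device_capability()`) and reports `torch_installed: False` instead of crashing when torch is missing. `torch_gpu_report` is a hypothetical helper, not part of the webui.

```python
import importlib.util
import platform


def torch_gpu_report() -> dict:
    """Collect the facts needed to debug 'Torch is not able to use GPU'."""
    report = {
        "python": platform.python_version(),
        "torch_installed": importlib.util.find_spec("torch") is not None,
    }
    if not report["torch_installed"]:
        return report

    import torch  # imported lazily so the script still runs without torch

    report["torch"] = torch.__version__
    report["torch_cuda_build"] = torch.version.cuda  # None for CPU-only builds
    report["cuda_available"] = torch.cuda.is_available()
    if report["cuda_available"]:
        report["device"] = torch.cuda.get_device_name(0)
        report["compute_capability"] = torch.cuda.get_device_capability(0)
    return report


if __name__ == "__main__":
    for key, value in torch_gpu_report().items():
        print(f"{key}: {value}")
```

If `torch_cuda_build` shows a CUDA version but `cuda_available` is False, the problem is more likely the driver or the GPU than the torch wheel.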

during the first-time setup I got

INCOMPATIBLE PYTHON VERSION

This program is tested with 3.10.6 Python, but you have 3.9.7.

If you encounter an error with "RuntimeError: Couldn't install torch." message,

or any other error regarding unsuccessful package (library) installation,

please downgrade (or upgrade) to the latest version of 3.10 Python

and delete current Python and "venv" folder in WebUI's directory.

so I need to use the same Python version as the base. Installation started at 11:07 and finished at 11:33
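Because the interpreter set in webui-user.bat becomes the base of the venv, it can save a 25-minute install to check it first. A sketch assuming the 3.10.x requirement from the warning above; `check_python` is a hypothetical helper, not part of the webui. Run it with the exact python.exe that webui-user.bat points to.

```python
import sys

REQUIRED = (3, 10)  # the warning above says the webui is tested with 3.10.6


def check_python(version_info=sys.version_info) -> bool:
    """Return True for a 3.10.x interpreter; otherwise print a hint and return False."""
    if tuple(version_info[:2]) == REQUIRED:
        return True
    found = ".".join(str(part) for part in version_info[:3])
    print(f"Python {found} found; install 3.10.x and delete the venv folder before rerunning.")
    return False


if __name__ == "__main__":
    sys.exit(0 if check_python() else 1)
```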

config notes: I have ui-config-ntb for my laptop; most settings are the same as in ui-config.json, but it has a lower number of steps and a smaller batch size so it is practical for just trying things out, although the images are ugly

web server for showing generated images:

https://stackoverflow.com/questions/5050851/best-lightweight-web-server-only-static-content-for-windows: the easiest, multi-platform and fully working option seems to be just running the Python HTTP server:

cd <sd base>/outputs/txt2img-images

python -m http.server 8081

with stdout redirected to a log file, e.g.:

cd <sd base>/outputs/txt2img-images

python -m http.server 8081 > img_access.log 2>&1

Gradio seems to serve all local files from the working directory, e.g. http://localhost:7860/file=C:/Projects/others/stable-diffusion-webui/outputs/txt2img-images/2023-04-30/00008-2994637937.png shows the image. It can show other file types too, e.g. http://localhost:7860/file=C:/Projects/others/stable-diffusion-webui/ui-config.json, so it could be used to read the configs. I don't want that open to the public internet: the webui should stay on the local server only, and something else should be made public.
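The same static server can also be started with an explicit directory and bind address (`python -m http.server 8081 --bind 127.0.0.1 --directory <path>`, Python 3.7+), which avoids the `cd` step. A standard-library sketch of that plus access logging to a file; the directory, host and port are placeholders, and `make_server`/`LoggingHandler` are hypothetical names. Binding to 127.0.0.1 keeps it local, while 0.0.0.0 exposes it to other devices. Unlike the Gradio `/file=` route, `SimpleHTTPRequestHandler` only serves paths below the given directory (symlinks aside).

```python
import functools
import logging
from http.server import SimpleHTTPRequestHandler, ThreadingHTTPServer
from pathlib import Path

log = logging.getLogger("img_access")


class LoggingHandler(SimpleHTTPRequestHandler):
    """Static file handler that sends access lines to `logging` instead of stderr."""

    def log_message(self, format, *args):  # signature defined by BaseHTTPRequestHandler
        log.info("%s - %s", self.address_string(), format % args)


def make_server(directory: Path, host: str = "127.0.0.1", port: int = 8081) -> ThreadingHTTPServer:
    """Create (but do not start) a server that serves `directory` on host:port."""
    handler = functools.partial(LoggingHandler, directory=str(directory))
    return ThreadingHTTPServer((host, port), handler)


if __name__ == "__main__":
    logging.basicConfig(filename="img_access.log", level=logging.INFO,
                        format="%(asctime)s %(message)s")
    # Placeholder path: point this at <sd base>/outputs/txt2img-images.
    server = make_server(Path("outputs/txt2img-images"), host="0.0.0.0", port=8081)
    print(f"Serving on port {server.server_address[1]}")
    server.serve_forever()
```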

I use https://pypi.org/project/qrcode/ to generate the QR code images. Pillow is already installed as a dependency, so there is no need to install it as qrcode[pil].
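A sketch of producing the QR image with that package (`qrcode.make(data)` returns an image object with a `.save()` method). The URL it encodes is an assumption: here it points at the static image server on port 8081 via this machine's LAN address, found with the usual UDP-socket trick, which sends no packets. That works over wifi only; for the mobile-data check the URL would have to be a public address, which this sketch does not cover. `lan_ip`, `lan_url` and `save_qr` are hypothetical helpers.

```python
import socket


def lan_ip() -> str:
    """Best-effort LAN IP of this machine; falls back to 127.0.0.1 without a network."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        try:
            # connect() on a UDP socket only selects a route; no packet is sent.
            sock.connect(("8.8.8.8", 80))
            return sock.getsockname()[0]
        except OSError:
            return "127.0.0.1"


def lan_url(port: int = 8081, path: str = "") -> str:
    """URL other devices on the same network could use to reach a server on `port`."""
    return f"http://{lan_ip()}:{port}/{path.lstrip('/')}"


def save_qr(url: str, filename: str = "qr.png") -> None:
    """Write a QR code for `url` (needs `pip install qrcode`; Pillow is already installed)."""
    import qrcode  # third-party, imported lazily

    qrcode.make(url).save(filename)


if __name__ == "__main__":
    url = lan_url(8081)
    print("QR code points to", url)
    save_qr(url)
```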

On the target PC: copy these 3 bat files to the desktop, change the path in their cd commands to an absolute one, and change the path to Firefox (or another browser) to the correct one

checklist on site:

- install python & git

- clone

- copy models

- edit bat files

- run everything from bat files

- generate anything

- verify QR code

- check the QR code link from a phone, over both wifi and mobile data

- if it is not working, check the firewall settings
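Before digging into firewall settings, it helps to confirm from another machine whether the ports answer at all. A sketch using only `socket.create_connection`; the ports (7860 for the webui, 8081 for the image server) come from these notes, and the host passed on the command line is a placeholder for the target PC's address. "closed or filtered" covers both a stopped server and a firewall drop, so run it once locally and once from another device to tell them apart.

```python
import socket
import sys

PORTS = {"webui": 7860, "images": 8081}  # ports used in these notes


def port_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, timed out, unreachable, ...
        return False


if __name__ == "__main__":
    target = sys.argv[1] if len(sys.argv) > 1 else "127.0.0.1"
    for name, port in PORTS.items():
        state = "open" if port_open(target, port) else "closed or filtered"
        print(f"{name:>6} {target}:{port} {state}")
```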

if you create a user.css file in the root directory and put CSS there, it is loaded.

the UI can't do txt2img batch processing, but there is a custom script for it: AUTOMATIC1111#7852, https://github.com/Z-nonymous/sd_webui_batchscripts.git

to add the extensions, just add these URLs in the UI:

- https://github.com/racinmat/DiffusionDefender.git

- https://github.com/Z-nonymous/sd_webui_batchscripts.git

to use the batch script, go to the txt2img tab, open the Script dropdown, and select the script there.

branches: old master contains many commits and their reverts, caused by migrating the code logic to the extension; master contains just the relevant commits with the changes.