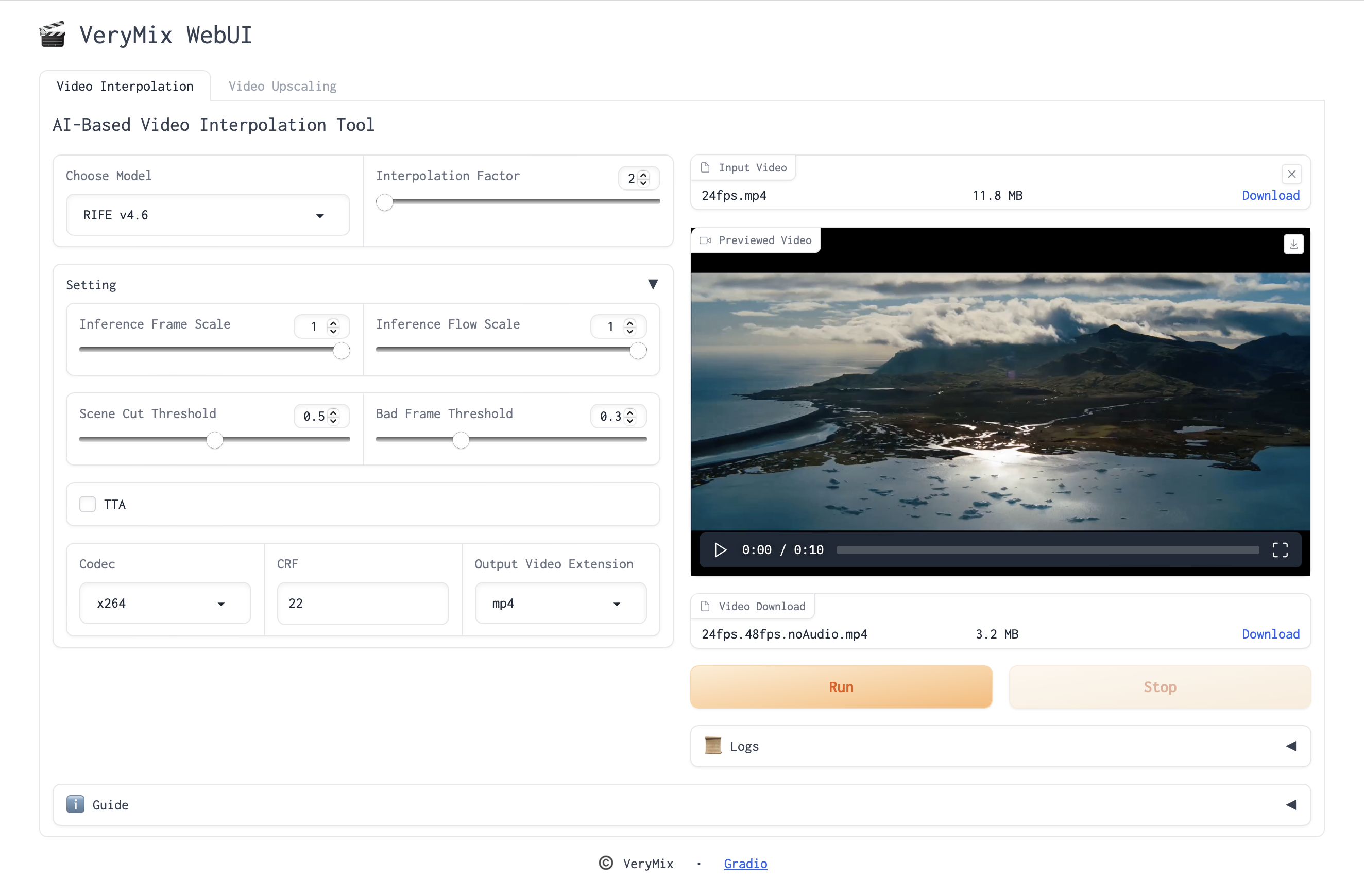

Very Mix WebUI is an AI-based video enhancement tool that provides 2x, 4x, and 6x frame interpolation as well as 2x and 4x upscaling.

- Frame Interpolation
  - RIFE
  - EMAVFI
- Upscaling
  - RLFN
  - RealESRGAN
  - ShuffleCUGAN
- Restore

Environment:

- Ubuntu 18.04
- CUDA 11.3
- cuDNN 8
- Python 3.10.12 (Python 3.10 or later is required)
- PyTorch 1.12.0
- Gradio 3.36.1

For other dependencies, see `requirements.txt`.

Some features require FFmpeg to be available on the system `PATH`.
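Once the environment is set up, a quick pre-flight check can catch missing prerequisites before launching the web UI. The script below is a minimal, hypothetical sketch (it is not shipped with the project). It only checks that Python is 3.10 or later, that an `ffmpeg` executable is on `PATH`, that `torch` and `gradio` are importable, and, if PyTorch is present, whether CUDA is visible. It does not verify the exact PyTorch or Gradio versions listed above.

```python
"""Hypothetical pre-flight check for the Very Mix WebUI environment (sketch only)."""
import importlib.util
import shutil
import sys

MIN_PYTHON = (3, 10)  # the README requires Python 3.10 or later


def check_environment() -> dict:
    """Return a mapping of check name -> bool for the documented prerequisites."""
    results = {
        "python>=3.10": sys.version_info[:2] >= MIN_PYTHON,
        # Some features call the ffmpeg executable found on the system PATH.
        "ffmpeg_on_path": shutil.which("ffmpeg") is not None,
        # find_spec only checks that the package can be located, not its version.
        "torch_installed": importlib.util.find_spec("torch") is not None,
        "gradio_installed": importlib.util.find_spec("gradio") is not None,
    }
    if results["torch_installed"]:
        import torch  # imported lazily so the script also runs without PyTorch

        results["cuda_available"] = bool(torch.cuda.is_available())
    return results


if __name__ == "__main__":
    for name, ok in check_environment().items():
        status = "OK" if ok else "MISSING"
        print(f"{status:<8}{name}")
```

Run it inside the activated conda environment (for example, save it as `check_env.py`, an arbitrary name, and run `python check_env.py`). Any `MISSING` line points at a prerequisite to install or fix.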

It is recommended to use Miniconda:

```shell
wget https://mirrors.tuna.tsinghua.edu.cn/anaconda/miniconda/Miniconda3-py38_4.9.2-Linux-x86_64.sh
chmod u+x Miniconda3-py38_4.9.2-Linux-x86_64.sh
./Miniconda3-py38_4.9.2-Linux-x86_64.sh
conda create -n verymix-webui python=3.10 pytorch==1.12.0 cudatoolkit=11.3 -c pytorch -y
conda activate verymix-webui
python -m pip --no-cache-dir install -r requirements.txt
```

Model checkpoints:

| Model | Checkpoint file(s) |
| --- | --- |
| RIFE v4.6 | flownet.pkl |
| EMAVFI | ours_small_t.pkl, ours_t.pkl (rename them to emavfi_s_t.pkl and emavfi_t.pkl, respectively) |
| RLFN | rlfn_s_x2.pth, rlfn_s_x4.pth |
| RealESRGAN | |
| ShuffleCUGAN | |
| Video Restore | |
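The EMAVFI rename step and the resulting folder layout can be scripted. The helper below is hypothetical and not part of the project; the folder and file names are taken from the checkpoint list above and the example `ckpt/` tree in this README. Only the EMAVFI and RIFE v4.6 folders are checked, because those are the only ones whose file locations the README shows.

```python
"""Hypothetical helper: rename EMAVFI checkpoints and check the ckpt/ layout (sketch)."""
from pathlib import Path

# Original EMAVFI release names -> names this README asks for.
EMAVFI_RENAMES = {"ours_small_t.pkl": "emavfi_s_t.pkl", "ours_t.pkl": "emavfi_t.pkl"}

# Expected files per folder, from the example ckpt/ tree (other models not listed there).
EXPECTED = {
    "EMAVFI": ["emavfi_s_t.pkl", "emavfi_t.pkl"],
    "RIFEv4.6": ["flownet.pkl"],
}


def rename_emavfi(ckpt_root: Path) -> list[str]:
    """Rename downloaded EMAVFI files in ckpt_root/EMAVFI; return the new names."""
    folder = ckpt_root / "EMAVFI"
    renamed = []
    for old, new in EMAVFI_RENAMES.items():
        src, dst = folder / old, folder / new
        # Skip if the source is absent or the target already exists (never overwrite).
        if src.exists() and not dst.exists():
            src.rename(dst)
            renamed.append(new)
    return renamed


def missing_checkpoints(ckpt_root: Path) -> list[str]:
    """List expected checkpoint paths (relative to ckpt_root) that are not files."""
    return [
        f"{folder}/{name}"
        for folder, names in EXPECTED.items()
        for name in names
        if not (ckpt_root / folder / name).is_file()
    ]


if __name__ == "__main__":
    root = Path("ckpt")
    print("renamed:", rename_emavfi(root))
    print("missing:", missing_checkpoints(root) or "none")
```

Running it from the repository root renames the EMAVFI files in place and lists any expected checkpoints that are still absent; existing files are never overwritten.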

Place the model files in the appropriate folders, e.g.:

```text
ckpt/
├── EMAVFI
│   ├── PutCheckpointsHere.txt
│   ├── emavfi_s_t.pkl
│   └── emavfi_t.pkl
├── RIFEv4.6
│   ├── PutCheckpointsHere.txt
│   └── flownet.pkl
```

Start the web service:

```shell
# GRADIO_TEMP_DIR: directory where Gradio stores its temporary files
export GRADIO_TEMP_DIR=./temp/gradio && python webui.py --config=config.yaml
```

Interpolate or upscale a video: