Author: Zeyu Li zyli@cs.ucla.edu or zeyuli@g.ucla.edu

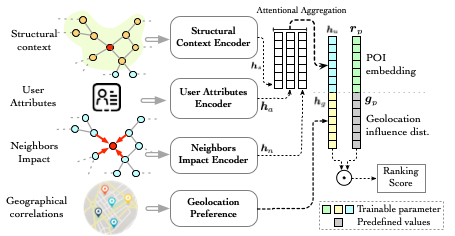

GEAPR stands for "Graph Enhanced Attention network for explainable POI Recommendation". The overall architecture of GEAPR is as follows:

In short, it uses four different modules to analyze four motivating factors of a POI visit: structural context, neighbor impact, user attributes, and geolocation.

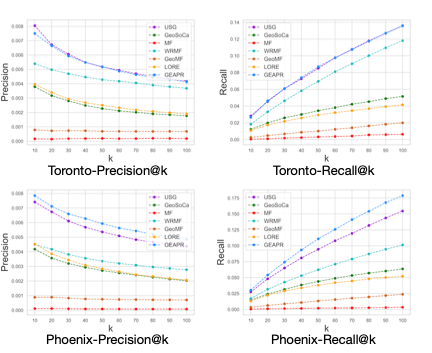

The performance of GEAPR is shown below.

We will show how to process the raw data, run GEAPR, and get results. Please email us if you have any questions! Please cite our paper if you use the code (once it is published).

Successfully running GEAPR requires the following Python packages:

ujson

argparse

pandas==0.25.0

numpy

scikit-learn==0.22.1

tqdm

configparser

Run these commands to install all dependencies in one go.

$ cd path/to/GEAPR

$ pip install -r requirements.txt

MOST IMPORTANTLY, GEAPR runs on TensorFlow 1.14.0. A GPU-enabled environment is recommended because we have only tested it on GPU machines.
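To confirm that an environment matches the pinned versions, a small check like the one below may help. It is only a sketch: the `REQUIRED` table, `installed_version`, and `find_mismatches` are hypothetical helpers that are not part of the GEAPR code, and the check assumes each package exposes a `__version__` attribute.

```python
import importlib

# Pins copied from the dependency list above; None means "any version".
# Import names are used here, so scikit-learn appears as "sklearn".
REQUIRED = {
    "ujson": None,
    "pandas": "0.25.0",
    "numpy": None,
    "sklearn": "0.22.1",
    "tqdm": None,
    "tensorflow": "1.14.0",
}


def installed_version(module_name):
    """Return the module's __version__, or None if it cannot be imported."""
    try:
        module = importlib.import_module(module_name)
    except ImportError:
        return None
    return getattr(module, "__version__", "unknown")


def find_mismatches(required, lookup=installed_version):
    """List (name, wanted, found) for missing or wrongly versioned packages."""
    problems = []
    for name, wanted in required.items():
        found = lookup(name)
        if found is None:
            problems.append((name, wanted, "missing"))
        elif wanted is not None and found != wanted:
            problems.append((name, wanted, found))
    return problems
```

Calling `find_mismatches(REQUIRED)` returns an empty list when every listed package is importable and every pinned version matches.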

Please download the data set from here.

After downloading, untar it into ./data/raw/yelp with the steps below, and you are ready for the next step!

$ cd path/to/GEAPR

$ mkdir -p data/raw/yelp/

$ tar -vxf path/to/yelp_dataset.tar -C data/raw/yelp

Run this script for one-click parsing of the Toronto and Phoenix datasets with our default settings. For custom settings, however, you should run the steps separately.

$ bash preprocess_datasets.sh

Just run the command below; it doesn't require any dataset-specific arguments.

$ python preprocess/prep_yelp.py preprocess

Now we separate the dataset by city. Set the minimum number of businesses and users.

NOTE that in the README and source code, we use business and POI interchangeably.

$ python preprocess/prep_yelp.py city_cluster --business_min_count [bmc] --user_min_count [umc]

For example, if both the minimum business count and the minimum user count are 10, then we have:

$ python preprocess/prep_yelp.py city_cluster --business_min_count 10 --user_min_count 10

Running this step will generate the statistics of the datasets. We summarize them as follows.

lv stands for Las Vegas, tor stands for Toronto, and phx stands for Phoenix.
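To illustrate what minimum-count thresholds of this kind typically do, here is a minimal sketch. It assumes the counts refer to the number of user-business interactions and that filtering is repeated until stable. Both points are assumptions, and `filter_by_min_count` is a hypothetical helper, not the logic in `prep_yelp.py`.

```python
from collections import Counter


def filter_by_min_count(interactions, business_min_count, user_min_count):
    """Keep (user, business) pairs whose user and business are frequent enough.

    Repeats until stable, because dropping a business can push a user below
    the threshold and vice versa.
    """
    pairs = list(interactions)
    while True:
        user_counts = Counter(user for user, _ in pairs)
        business_counts = Counter(business for _, business in pairs)
        kept = [
            (user, business)
            for user, business in pairs
            if user_counts[user] >= user_min_count
            and business_counts[business] >= business_min_count
        ]
        if len(kept) == len(pairs):
            return kept
        pairs = kept
```

Higher thresholds leave a smaller but denser interaction set, which is the usual trade-off when choosing these two values.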

Generate the train:test dataset. The ratio should be given as two integers.

$ python preprocess/prep_yelp.py gen_data --train_test_ratio=[train:test]

For example, to use a train:test ratio of 9:1, we should run:

$ python preprocess/prep_yelp.py gen_data --train_test_ratio=9:1

The statistics for the three datasets.
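As a rough illustration of how a `train:test` ratio string such as `9:1` can be turned into a split, consider the sketch below. `parse_ratio` and `split_by_ratio` are hypothetical names, and the shuffle-then-cut strategy is an assumption; the actual `gen_data` step may split differently (for example, per user).

```python
import random


def parse_ratio(text):
    """Turn a string such as "9:1" into a pair of positive integers."""
    train_part, test_part = text.split(":")
    train, test = int(train_part), int(test_part)
    if train <= 0 or test <= 0:
        raise ValueError("both sides of the ratio must be positive integers")
    return train, test


def split_by_ratio(items, ratio, seed=0):
    """Shuffle items and cut them into train and test lists by the ratio."""
    train, test = parse_ratio(ratio)
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    cut = len(shuffled) * train // (train + test)
    return shuffled[:cut], shuffled[cut:]
```

Because `int` rejects strings like `9.5`, a non-integer ratio fails early with a `ValueError`, which matches the requirement that the ratio be two integers.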

At this point, all the datasets are processed. If you are curious about what's inside the processed dataset, please check below.

In ./data/parse/yelp, you will see four folders:

- train_test: the training set, testing set, and the negative sampling set.

- citycluster: all information clustered by city (lv, tor, or phx).

- preprocess: undivided features from preprocessing.

- interactions: user-POI interactions.

Among them, citycluster and interactions will be used in later steps.
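Before moving on, it can be useful to verify that the four folders listed above were actually created. The following is a small sketch; `missing_folders` is a hypothetical helper, and the default path assumes the script is run from the repository root.

```python
from pathlib import Path

# Folder names as listed above.
EXPECTED_FOLDERS = ("train_test", "citycluster", "preprocess", "interactions")


def missing_folders(parse_dir="data/parse/yelp"):
    """Return the expected sub-folders that are absent under parse_dir."""
    root = Path(parse_dir)
    return [name for name in EXPECTED_FOLDERS if not (root / name).is_dir()]
```

An empty return value means all four folders are present; otherwise the returned names point to the preprocessing step that may need to be rerun.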

Want to run the model? Not quite yet: we first need to extract features for users and POIs, such as attributes and POI locations. Based on the preprocessed features, we further create adjacency matrix features and user/POI attribute features. Both will be fed into our model.

We are using structural context graphs for later computations. Structural context graphs can be generated beforehand. Here's an example to generate neighbor graphs and structural context graphs:

$ python preprocess/build_graphs.py --yelp_city=tor --rwr_order=3 --rwr_constant 0.05 --use_sparse_mat=True

Here are the tunable options:

- rwr_order: choose between 2 and 3; a number greater than 3 will generate a much denser graph. Default is 3.

- rwr_constant: the restart rate. Default is 0.05.

- use_sparse_mat: whether or not to use a scipy.sparse matrix to save data. The False option has not been tested, so please stick to True.
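To give an intuition for how the order and the restart rate interact, here is a minimal pure-Python sketch of one common truncated random-walk-with-restart formulation: the score matrix is the sum over k = 0..order of c(1-c)^k W^k, where W is the row-normalized adjacency matrix and c is the restart rate. This formula and the helper names are assumptions made for illustration; `build_graphs.py` may compute its graphs differently.

```python
def row_normalize(adj):
    """Scale each row to sum to 1 (rows of zeros are left as zeros)."""
    normalized = []
    for row in adj:
        total = sum(row)
        normalized.append([value / total if total else 0.0 for value in row])
    return normalized


def matmul(a, b):
    """Multiply two square matrices stored as lists of lists."""
    columns = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in columns] for row in a]


def truncated_rwr(adj, order=3, restart=0.05):
    """Sum restart * (1 - restart)**k * W**k for k = 0..order."""
    n = len(adj)
    walk = row_normalize(adj)
    power = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]  # W^0
    scores = [[0.0] * n for _ in range(n)]
    for k in range(order + 1):
        weight = restart * (1 - restart) ** k
        for i in range(n):
            for j in range(n):
                scores[i][j] += weight * power[i][j]
        power = matmul(power, walk)
    return scores
```

Each extra order adds the nodes reachable by one more hop, which is consistent with the note above that orders greater than 3 produce a much denser graph.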

We also need to extract features from the user side. This has two steps:

Go to ./configs/. The three columns_xx.ini files there are examples of how to set numerical and categorical features. The format is the following:

[CATEGORICAL]

col1 = yes

; `ini` requires an assignment, but the assigned value doesn't matter

col2 = alsoyes

; ...

[NUMERICAL]

col3 = [#.buckets] ; [#.buckets] should be an integer.

col4 = [#.buckets] ; This tells how many buckets an attribute column should be mapped to.
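The format above can be read with Python's standard `configparser`, as in the sketch below. The bucket counts 10 and 5 are made-up example values, and `read_column_config` is a hypothetical helper rather than the loader used by `attributes_extractor.py`. Note that `inline_comment_prefixes` must be set for trailing `;` comments to be stripped from values.

```python
import configparser

# Example file content; the bucket counts 10 and 5 are illustrative only.
SAMPLE = """
[CATEGORICAL]
col1 = yes
; the assigned value is ignored for categorical columns
col2 = alsoyes

[NUMERICAL]
col3 = 10 ; number of buckets
col4 = 5
"""


def read_column_config(text):
    """Return (categorical column names, {numerical column: bucket count})."""
    parser = configparser.ConfigParser(inline_comment_prefixes=(";",))
    parser.read_string(text)
    categorical = list(parser["CATEGORICAL"])
    numerical = {name: int(value) for name, value in parser["NUMERICAL"].items()}
    return categorical, numerical
```

Keep in mind that `configparser` lowercases option names by default, so column names that differ only in case would collide.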

After setting the ini files, just run the following commands to parse attributes:

$ python preprocess/attributes_extractor.py [city]

city can be lv, tor, phx, or all. all will automatically run all cities.

This will generate processed_city_user_profile.csv, processed_city_business_profile.csv, processed_city_user_profile_distinct.csv, and cols_disc_info.pkl in data/parse/yelp/citycluster/[city].

It will also print out the percentage of empty values under each feature column.

In the later training steps, the model will NOT use processed_city_user_profile.csv and processed_city_business_profile.csv because:

(1) we don't use business information in the model; (2) processed_city_user_profile.csv hasn't been converted to discrete categorical features by bucketing yet.
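For readers unfamiliar with bucketing, the sketch below maps a numerical column to a fixed number of discrete bucket indices. It uses equal-width buckets, which is an assumption; the repository may use a different scheme (such as quantiles), and `bucketize` is a hypothetical name.

```python
def bucketize(values, num_buckets):
    """Map each number to an equal-width bucket index in [0, num_buckets)."""
    low, high = min(values), max(values)
    if high == low:
        # A constant column carries no information; use a single bucket.
        return [0 for _ in values]
    width = (high - low) / num_buckets
    # The maximum value would land in bucket num_buckets, so clamp it.
    return [min(int((value - low) / width), num_buckets - 1) for value in values]
```

The resulting indices can then be treated like any other categorical feature.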

Use the following command to extract the geolocation features and user/POI adjacency features.

$ python src/geolocations.py --city=[city] --num_lat_grid [n_lat] --num_long_grid [n_long] --num_user [n_user] --num_business [n_poi]

For example, to handle the tor dataset, we run:

$ python src/geolocations.py --city=tor --num_lat_grid 30 --num_long_grid 30 --num_user 9582 --num_business 9102

Here's a table showing how many users, POIs, and attributes there are (in the default setting).

| City | #.user | #.POI | #.attr |
| --- | --- | --- | --- |
| phx | 11289 | 9633 | 140 |
| tor | 9582 | 9102 | 140 |
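The `--num_lat_grid` and `--num_long_grid` options suggest that the city area is divided into a grid of cells. A minimal sketch of mapping a coordinate to a cell index follows. The bounding-box argument, the row-by-row numbering, and the name `grid_cell` are all assumptions for illustration and may not match `src/geolocations.py`.

```python
def grid_cell(lat, lon, bounds, num_lat_grid, num_long_grid):
    """Return the flat index of the grid cell containing (lat, lon).

    bounds is (min_lat, max_lat, min_lon, max_lon); cells are numbered
    row by row, starting from the (min_lat, min_lon) corner.
    """
    min_lat, max_lat, min_lon, max_lon = bounds
    row = int((lat - min_lat) / (max_lat - min_lat) * num_lat_grid)
    col = int((lon - min_lon) / (max_lon - min_lon) * num_long_grid)
    # Clamp so that points on or beyond the boundary still get a valid cell.
    row = min(max(row, 0), num_lat_grid - 1)
    col = min(max(col, 0), num_long_grid - 1)
    return row * num_long_grid + col
```

With a 30 by 30 grid, as in the tor example, this yields indices from 0 to 899.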

Finally, we are ready to run!

Please run this command as an example.

$ bash run_yelp.sh

Please look into that script for the different parameter settings.

There are two ways of checking the performance.

- Check the standard output;

- Check path/to/geapr/output/performance/[trail_id].perf. It has all the training and testing logs.
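When several runs have written `.perf` files, a helper like the following can show the end of the most recent one. It makes no assumption about the log format; `tail_latest_perf` is a hypothetical name, and the directory to pass is the performance folder mentioned above.

```python
from pathlib import Path


def tail_latest_perf(perf_dir, num_lines=10):
    """Return the last lines of the most recently modified .perf file."""
    perf_files = sorted(
        Path(perf_dir).glob("*.perf"), key=lambda path: path.stat().st_mtime
    )
    if not perf_files:
        return []
    return perf_files[-1].read_text().splitlines()[-num_lines:]
```

For example, `print("\n".join(tail_latest_perf("output/performance")))` prints the last ten lines of the newest log, assuming it is run from the repository root.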

You will need to manually fetch the output_dict from the computational graph.

You can find an example in Line 84 of ./geapr/train.py.

- To disable the verbose warnings of TensorFlow, please add the following:

import os

os.environ['TF_CPP_MIN_LOG_LEVEL'] = '3' # << this line disables the warnings

import tensorflow as tf

However, the deprecation warnings cannot be removed.