PCNet-M Experiments on COCOA Dataset


1. Overview

This repo contains the code for my experiments on mask completion using the PCNet-M model proposed in the paper Self-Supervised Scene De-occlusion.

2. Setup Instructions

- Clone the repo:

git clone https://github.com/praeclarumjj3/PCNetM-Experiments.git

cd PCNetM-Experiments

- Install pycocotools:

pip install "git+https://github.com/cocodataset/cocoapi.git#subdirectory=PythonAPI"

- Install PyTorch and other dependencies:

pip3 install -r requirements.txt

Dataset Preparation

- Download the MS-COCO 2014 images and unzip:

wget http://images.cocodataset.org/zips/train2014.zip

wget http://images.cocodataset.org/zips/val2014.zip

- Download the annotations and untar:

gdown https://drive.google.com/uc?id=0B8e3LNo7STslZURoTzhhMFpCelE

tar -xf annotations.tar.gz

- Extract the files into the following structure:

PCNetM-Experiments
├── data
│ ├── COCOA
│ │ ├── annotations
│ │ ├── train2014
│ │ ├── val2014
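The download and extraction steps above can also be scripted. Below is a minimal standard-library Python sketch, assuming the three archives (train2014.zip, val2014.zip, annotations.tar.gz) were downloaded into the repository root and that each archive contains a single top-level folder (train2014/, val2014/, annotations/), so that extracting them into data/COCOA reproduces the tree above. The helper names extract_archives and missing_subdirs are hypothetical and not part of the repo.

```python
from __future__ import annotations

import tarfile
import zipfile
from pathlib import Path

# Subfolders expected under data/COCOA, taken from the directory tree above.
EXPECTED_SUBDIRS = ("annotations", "train2014", "val2014")


def extract_archives(download_dir: Path, cocoa_dir: Path) -> None:
    """Extract the downloaded archives into cocoa_dir (hypothetical helper).

    Assumption: each archive has one top-level folder (train2014/, val2014/,
    annotations/), so extracting into cocoa_dir yields the expected layout.
    """
    cocoa_dir.mkdir(parents=True, exist_ok=True)
    for name in ("train2014.zip", "val2014.zip"):
        with zipfile.ZipFile(download_dir / name) as zf:
            zf.extractall(cocoa_dir)
    with tarfile.open(download_dir / "annotations.tar.gz", "r:gz") as tf:
        tf.extractall(cocoa_dir)


def missing_subdirs(cocoa_dir: Path) -> list[str]:
    """Return the expected subfolders that do not exist under cocoa_dir."""
    return [d for d in EXPECTED_SUBDIRS if not (cocoa_dir / d).is_dir()]
```

From the repository root, extract_archives(Path("."), Path("data/COCOA")) followed by missing_subdirs(Path("data/COCOA")) should return an empty list if the layout matches.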

Run Demos

- Download the released models here and put the released/ folder under PCNetM-Experiments/.

- Run demos/demo_cocoa.ipynb. Some test examples for demos/demo_cocoa.ipynb are included in the repo, so you don't need to download the COCOA dataset if you only want to try a few samples.

- If you want to use modal masks predicted by existing instance segmentation models, you need to adjust some parameters in the demo; please refer to the answers in this issue.

3. Experiments

Training

- Run the following command:

sh experiments/COCOA/pcnet_m/train.sh # you may have to set --nproc_per_node=#YOUR_GPUS

Best Loss: 0.0674 after 44000 iterations.

Evaluate

- Execute:

sh tools/test_cocoa.sh

Results

| Metric | Value |
|---|---|
| acc_allpair | 0.96014 |
| acc_occpair | 0.87112 |
| mIoU | 0.81346 |
| pAcc | 0.87744 |
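As a rough guide to what these numbers measure, here is a minimal NumPy sketch under stated assumptions: mIoU is taken to be the mean intersection-over-union between completed (amodal) and ground-truth masks, pAcc is assumed to be pixel accuracy, and acc_allpair / acc_occpair are assumed to be pairwise occlusion-ordering accuracies over all instance pairs and over truly occluding pairs only (as in deocclusion). This is not the repo's evaluation code; tools/test_cocoa.sh may average differently (for example, by accumulating intersection and union over the whole dataset). All function names and the order-matrix encoding are hypothetical.

```python
from __future__ import annotations

import numpy as np


def mask_iou(pred: np.ndarray, gt: np.ndarray) -> float:
    """Intersection-over-union of two binary masks of the same shape."""
    pred, gt = pred.astype(bool), gt.astype(bool)
    union = np.logical_or(pred, gt).sum()
    if union == 0:
        return 1.0  # convention choice: two empty masks count as a perfect match
    return float(np.logical_and(pred, gt).sum() / union)


def mean_iou(preds, gts) -> float:
    """Average of per-instance IoUs (assumed definition of mIoU)."""
    return float(np.mean([mask_iou(p, g) for p, g in zip(preds, gts)]))


def pixel_accuracy(pred: np.ndarray, gt: np.ndarray) -> float:
    """Fraction of pixels where two binary masks agree (assumed meaning of pAcc)."""
    return float((pred.astype(bool) == gt.astype(bool)).mean())


def order_pair_counts(pred_order: np.ndarray, gt_order: np.ndarray,
                      occluded_only: bool = False) -> tuple[int, int]:
    """Return (num_correct, num_pairs) over unordered instance pairs i < j.

    Hypothetical encoding: N x N matrices where [i, j] is 1 if instance i
    occludes j, -1 if j occludes i, and 0 if the two do not occlude each other.
    With occluded_only=True, only pairs that occlude in the ground truth count.
    """
    rows, cols = np.triu_indices(gt_order.shape[0], k=1)  # each pair once
    pred_pairs = pred_order[rows, cols]
    gt_pairs = gt_order[rows, cols]
    if occluded_only:
        keep = gt_pairs != 0
        pred_pairs, gt_pairs = pred_pairs[keep], gt_pairs[keep]
    return int((pred_pairs == gt_pairs).sum()), int(gt_pairs.size)
```

The ordering helper returns counts rather than a ratio so they can be summed over all images before dividing, which weights every instance pair equally; under these assumptions acc_allpair would be total correct over total pairs, and acc_occpair the same with occluded_only=True.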

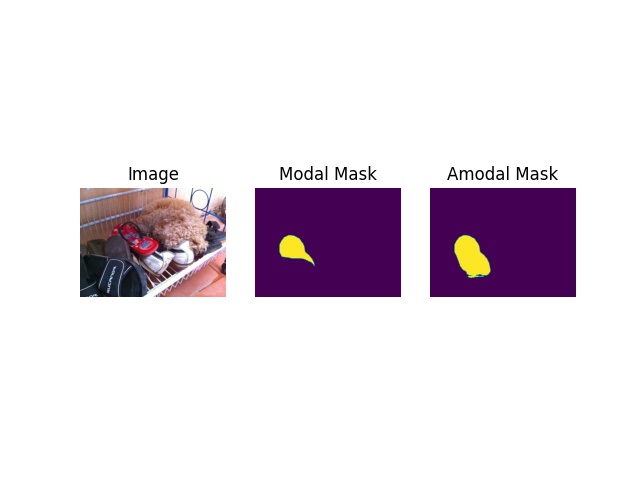

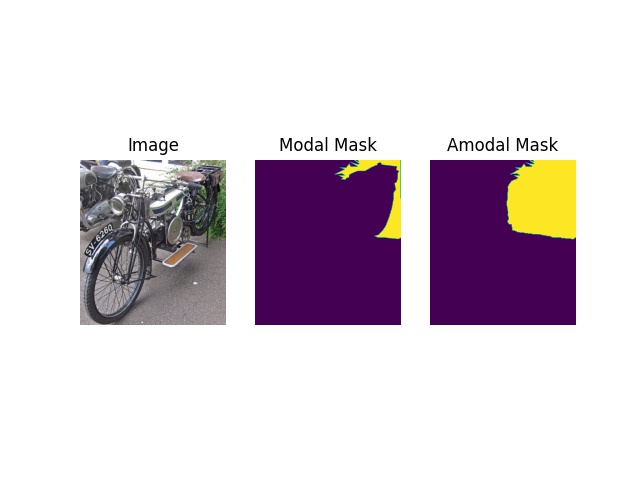

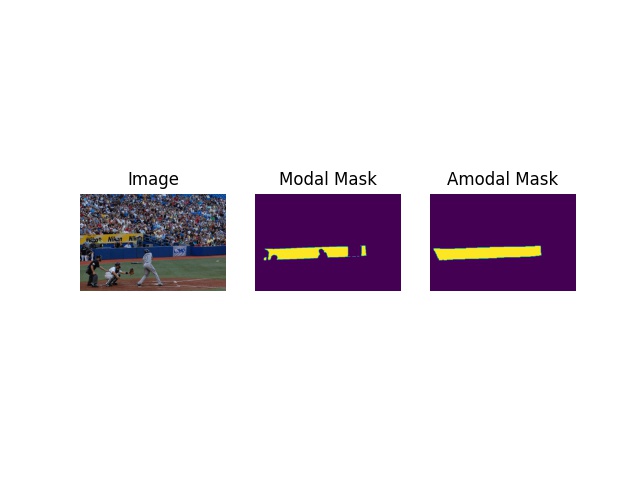

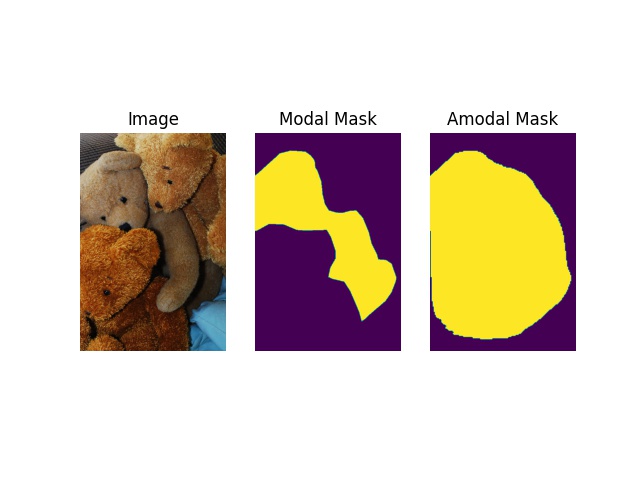

Find more results in visualizations.

Acknowledgement

This repo borrows heavily from deocclusion.