We propose a simple technique to expose the implicit attention of Convolutional Neural Networks on an image. It highlights the most informative image regions relevant to the predicted class. You can obtain an attention-based model by making small modifications to your own CNN. The paper was published at CVPR'16.

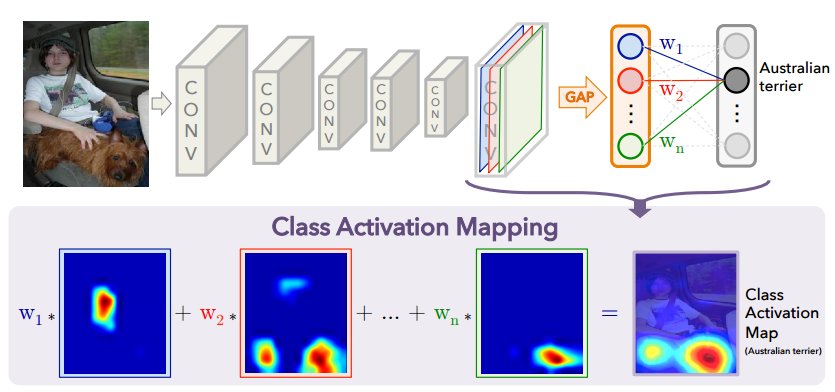

The framework of Class Activation Mapping is shown below:

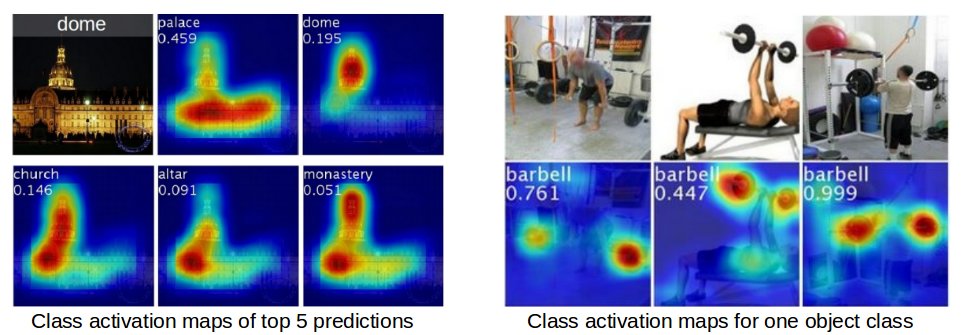
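As described in the paper cited below, a class activation map is the weighted sum of the last convolutional layer's feature maps, where the weights are those connecting the global-average-pooled features to the score of the chosen class. The following is a minimal sketch of that computation, not the code used in this repository: the function names and the toy inputs are hypothetical, and plain Python lists are used only to keep the example free of dependencies.

```python
def class_activation_map(feature_maps, class_weights):
    """Weighted sum of the last conv layer's feature maps for one class.

    feature_maps: K maps, each an H x W nested list of activations.
    class_weights: K weights linking each pooled map to the class score.
    """
    if len(feature_maps) != len(class_weights):
        raise ValueError("need exactly one weight per feature map")
    height, width = len(feature_maps[0]), len(feature_maps[0][0])
    cam = [[0.0] * width for _ in range(height)]
    for fmap, weight in zip(feature_maps, class_weights):
        for y in range(height):
            for x in range(width):
                cam[y][x] += weight * fmap[y][x]
    return cam


def normalize(cam):
    """Rescale a map to [0, 1] so it can be rendered as a heatmap."""
    flat = [value for row in cam for value in row]
    low, high = min(flat), max(flat)
    span = (high - low) or 1.0  # avoid dividing by zero on a constant map
    return [[(value - low) / span for value in row] for row in cam]


# Toy input (hypothetical): two 2x2 feature maps and the weights of one class.
maps = [
    [[1.0, 0.0], [0.0, 0.0]],
    [[0.0, 0.0], [0.0, 2.0]],
]
weights = [0.5, 1.5]
heatmap = normalize(class_activation_map(maps, weights))
```

In practice the resulting map is much smaller than the input image, so it is typically upsampled to the image size before being overlaid as a heatmap.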

Some predicted class activation maps:

Pre-trained models:

- GoogLeNet-CAM model on ImageNet: `models/deploy_googlenetCAM.prototxt`, weights: http://cnnlocalization.csail.mit.edu/demoCAM/models/imagenet_googleletCAM_train_iter_120000.caffemodel
- VGG16-CAM model on ImageNet: `models/deploy_vgg16CAM.prototxt`, weights: http://cnnlocalization.csail.mit.edu/demoCAM/models/vgg16CAM_train_iter_90000.caffemodel
- GoogLeNet-CAM model on Places205: `models/deploy_googlenetCAM_places205.prototxt`, weights: http://cnnlocalization.csail.mit.edu/demoCAM/models/places_googleletCAM_train_iter_120000.caffemodel
- AlexNet-CAM on Places205 (used in the online demo): `models/deploy_alexnetplusCAM_places205.prototxt`, weights: http://cnnlocalization.csail.mit.edu/demoCAM/models/alexnetplusCAM_places205.caffemodel
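The weight files can also be fetched with a short script. The sketch below is only an illustration: the dictionary keys, the function names, and the choice to store the weights in `models/` next to the prototxt files are assumptions, and the URLs, copied from the list above, may no longer be reachable.

```python
import os
import urllib.request

BASE_URL = "http://cnnlocalization.csail.mit.edu/demoCAM/models/"

# Short keys are hypothetical; file names are taken from the model list.
WEIGHT_URLS = {
    "googlenet_imagenet": BASE_URL + "imagenet_googleletCAM_train_iter_120000.caffemodel",
    "vgg16_imagenet": BASE_URL + "vgg16CAM_train_iter_90000.caffemodel",
    "googlenet_places205": BASE_URL + "places_googleletCAM_train_iter_120000.caffemodel",
    "alexnet_places205": BASE_URL + "alexnetplusCAM_places205.caffemodel",
}


def local_path(url, target_dir="models"):
    """Return where a weight file would be stored locally."""
    return os.path.join(target_dir, url.rsplit("/", 1)[-1])


def fetch_weights(name, target_dir="models"):
    """Download the weights for one model unless they are already present."""
    url = WEIGHT_URLS[name]
    path = local_path(url, target_dir)
    if not os.path.exists(path):
        os.makedirs(target_dir, exist_ok=True)
        urllib.request.urlretrieve(url, path)
    return path
```

For example, `fetch_weights("vgg16_imagenet")` would download the VGG16-CAM weights on first use and return the local path on later calls.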

- Install Caffe, compile matcaffe (the MATLAB wrapper for Caffe), and make sure you can run the prediction example code `classification.m`.

- In MATLAB, run `demo.m`.

A demo video showing what the CNN is looking at is available, as is a reimplementation in TensorFlow.

B. Zhou, A. Khosla, A. Lapedriza, A. Oliva, and A. Torralba

Learning Deep Features for Discriminative Localization.

Computer Vision and Pattern Recognition (CVPR), 2016

The pre-trained models and techniques can be used without restrictions.

Contact Bolei Zhou if you have questions.