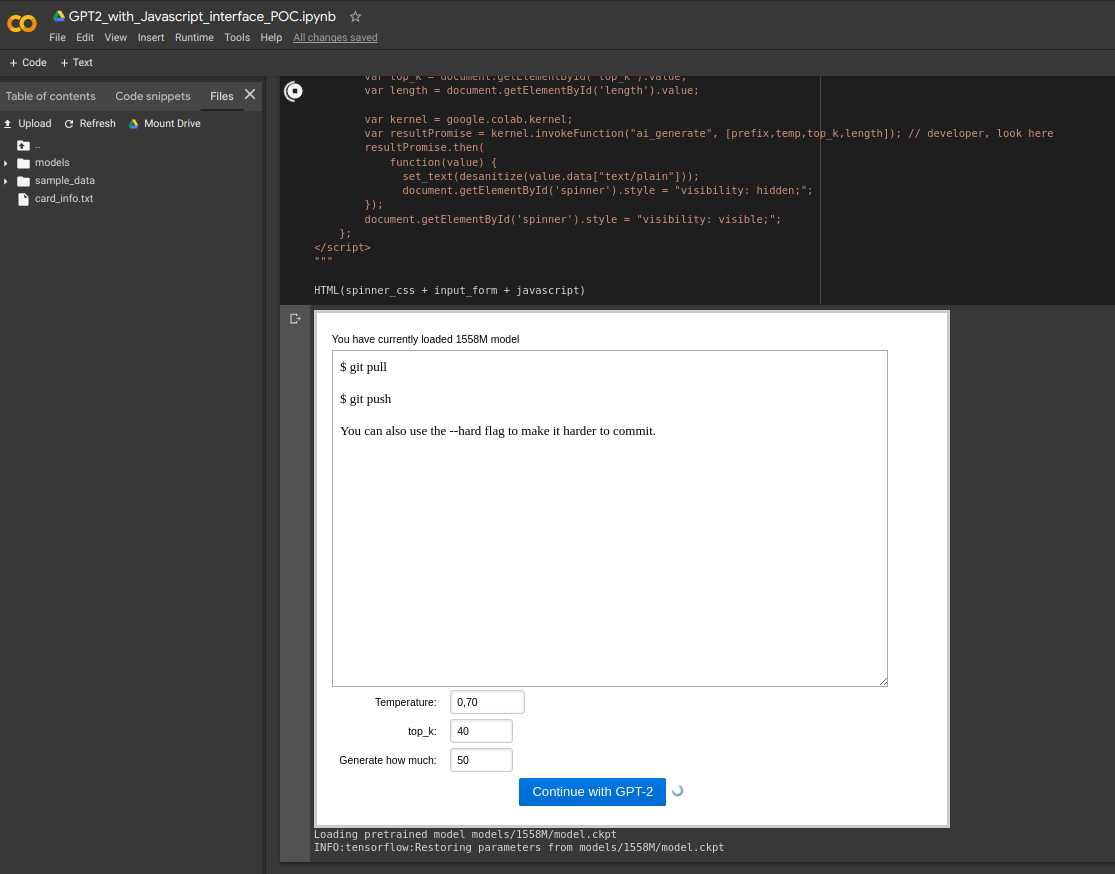

Make GPT-2 complete your text in Colab, using fancy JS instead of the ugly default Colab interface. This is a proof of concept for developers who want to create apps with graphics in Colab.

If you want to use this code as your playground, check out the 'multisample' branch for more useful notebooks.

If your model is uploaded to HuggingFace, check out the 'multisample' branch.

For custom models the idea is the following:

- Train your model outside of this notebook. This notebook is meant for inference with already pretrained models.
- Ensure you have a way to call your model from within Python code and get a string. That means if you infer text by calling an external script (something like `!python main.py --predict output.txt`), you need to examine the script's inference code and write a function or an object that handles inference and returns strings to the calling code, rather than just printing the result or writing it to a file.
- Discard all of the code that goes before `import google.colab.output` and copy in your own code that loads and prepares your model. Add `model_name` and `spinner_speed` variables or your HTML code won't run. Note that `spinner_speed` is a string variable that looks like `"400ms"`.
- Use the `ai_generate` function to connect your model to the JS:
  - The simplest way is to not change any arguments and just use your function/object to generate a string and put it into the `result` variable before returning `JsonRepr(result)`.
    - Note that the function will receive `top_k`, `temp` and `length` as string variables and will convert these into numbers internally.
    - Also note that you aren't supposed to output any part of `prefix` as part of your output string.
  - If you want to use other parameters (e.g. `top_p`) you need to fix the HTML and JS code (`generate()` function) in the second block. Again, note that JS will pass any arguments as strings.
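The steps above can be sketched roughly as follows. This is only an illustration under stated assumptions: `my_model_generate` is a hypothetical placeholder for your own inference code, the `JsonRepr` class here is a minimal stand-in for the one defined in the notebook (the real one may differ), and the argument order of `ai_generate` is assumed, so check it against the notebook before copying.

```python
# Variables the HTML block expects (placeholder values).
model_name = "my-custom-model"  # hypothetical model name
spinner_speed = "400ms"         # must be a string, not a number


class JsonRepr:
    """Minimal stand-in for the notebook's JsonRepr (assumption)."""

    def __init__(self, obj):
        self.obj = obj


def my_model_generate(prefix, top_k, temperature, length):
    """Hypothetical wrapper around your model.

    Replace the dummy body with a real call that returns the
    generated text as a Python string.
    """
    return prefix + " lorem" * length


def ai_generate(prefix, top_k, temp, length):
    # JS passes every argument as a string, so convert them first.
    top_k = int(top_k)
    temp = float(temp)
    length = int(length)

    text = my_model_generate(prefix, top_k, temp, length)

    # The output must not repeat the prefix, so strip it if present.
    result = text[len(prefix):] if text.startswith(prefix) else text
    return JsonRepr(result)
```

Calling `ai_generate("Hello", "40", "0.7", "3")` with the dummy model above returns a `JsonRepr` wrapping only the continuation, without the `"Hello"` prefix.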