AWS MLOps Handson

This repository is designed to provide a comprehensive ML infrastructure for CTR (Click-Through Rate) prediction.

With a focus on AWS services, this repository offers a practical, hands-on learning experience in MLOps.

Slides (Japanese): https://speakerdeck.com/nsakki55/cyberagent-aishi-ye-ben-bu-mlopsyan-xiu-ying-yong-bian

Key Features

Python Development Environment

We guide you through setting up a Python development environment that ensures code quality and maintainability.

This environment is carefully configured to enable efficient development practices and facilitate collaboration.

Train Pipeline

This repository includes the implementation of a training pipeline.

The pipeline covers data preprocessing, model training, and evaluation.
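The three stages can be pictured with a small, standard-library-only sketch. This is not the repository's implementation: the toy rows, the field names (`device`, `slot`), and every function name below are hypothetical, and a hand-written logistic regression trained with stochastic gradient descent stands in for whatever model the actual pipeline trains.

```python
import math
import random


def build_vocabulary(rows):
    """Preprocessing: map every 'field=value' pair to a column index."""
    vocab = {}
    for row in rows:
        for field, value in sorted(row.items()):
            vocab.setdefault(f"{field}={value}", len(vocab))
    return vocab


def encode(row, vocab):
    """Preprocessing: one-hot encode a row as the list of active column indices."""
    keys = (f"{field}={value}" for field, value in row.items())
    return [vocab[key] for key in keys if key in vocab]


def predict(weights, bias, indices):
    """Logistic regression: sigmoid of the bias plus the active weights."""
    z = bias + sum(weights[i] for i in indices)
    return 1.0 / (1.0 + math.exp(-z))


def train(samples, n_features, epochs=20, lr=0.1, seed=0):
    """Training: plain stochastic gradient descent on the log loss."""
    rng = random.Random(seed)
    samples = list(samples)
    weights, bias = [0.0] * n_features, 0.0
    for _ in range(epochs):
        rng.shuffle(samples)
        for indices, label in samples:
            error = predict(weights, bias, indices) - label
            bias -= lr * error
            for i in indices:
                weights[i] -= lr * error
    return weights, bias


def evaluate(weights, bias, samples):
    """Evaluation: mean log loss over held-out samples."""
    eps = 1e-12
    total = 0.0
    for indices, label in samples:
        p = predict(weights, bias, indices)
        total -= label * math.log(p + eps) + (1 - label) * math.log(1 - p + eps)
    return total / len(samples)


# Hypothetical toy click log: ads in the "top" slot are clicked, others are not.
raw = [
    ({"device": "mobile", "slot": "top"}, 1),
    ({"device": "mobile", "slot": "bottom"}, 0),
    ({"device": "desktop", "slot": "top"}, 1),
    ({"device": "desktop", "slot": "bottom"}, 0),
] * 25
random.Random(0).shuffle(raw)
split = int(len(raw) * 0.8)
train_rows, test_rows = raw[:split], raw[split:]

vocab = build_vocabulary(row for row, _ in train_rows)
train_set = [(encode(row, vocab), label) for row, label in train_rows]
test_set = [(encode(row, vocab), label) for row, label in test_rows]

weights, bias = train(train_set, n_features=len(vocab))
test_loss = evaluate(weights, bias, test_set)
print(f"held-out log loss: {test_loss:.4f}")
```

An untrained model that always predicts 0.5 scores a log loss of ln 2 (about 0.693), so a lower held-out value indicates that the model learned something from the features.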

Prediction Server

This repository provides an implementation of a prediction server that serves predictions based on your trained CTR prediction model.
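As a rough illustration of what such a server does, the sketch below exposes a JSON-over-HTTP endpoint using only the Python standard library. Everything in it is an assumption made for illustration: the request schema, the hard-coded `WEIGHTS` and `BIAS` that stand in for a trained model artifact, and the names `predict_ctr`, `handle_payload`, and `serve`. The repository's actual server may use a different framework and interface.

```python
import json
import math
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical parameters standing in for a trained model loaded from storage.
WEIGHTS = {"device=mobile": 0.4, "slot=top": 1.2, "slot=bottom": -1.5}
BIAS = -2.0


def predict_ctr(features):
    """Return a click probability for a dict of categorical features."""
    z = BIAS + sum(WEIGHTS.get(f"{k}={v}", 0.0) for k, v in features.items())
    return 1.0 / (1.0 + math.exp(-z))


def handle_payload(raw):
    """Turn a raw JSON request body into (status_code, response_dict)."""
    try:
        features = json.loads(raw)
    except ValueError:  # covers malformed JSON and undecodable bytes
        return 400, {"error": "body must be valid JSON"}
    if not isinstance(features, dict):
        return 400, {"error": "body must be a JSON object"}
    return 200, {"ctr": predict_ctr(features)}


class PredictHandler(BaseHTTPRequestHandler):
    """Answers every POST with a CTR prediction for the posted features."""

    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        status, payload = handle_payload(self.rfile.read(length))
        body = json.dumps(payload).encode()
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port=8080):
    """Start the server; this call blocks until the process is stopped."""
    HTTPServer(("0.0.0.0", port), PredictHandler).serve_forever()
```

Keeping the request handling in `handle_payload`, separate from the HTTP handler, makes the prediction logic testable without opening a socket.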

AWS Deployment

To showcase industry-standard practices, this repository guides you through deploying the training pipeline and inference server on AWS.

AWS Infra Architecture

The AWS infrastructure created by this repository is shown below.

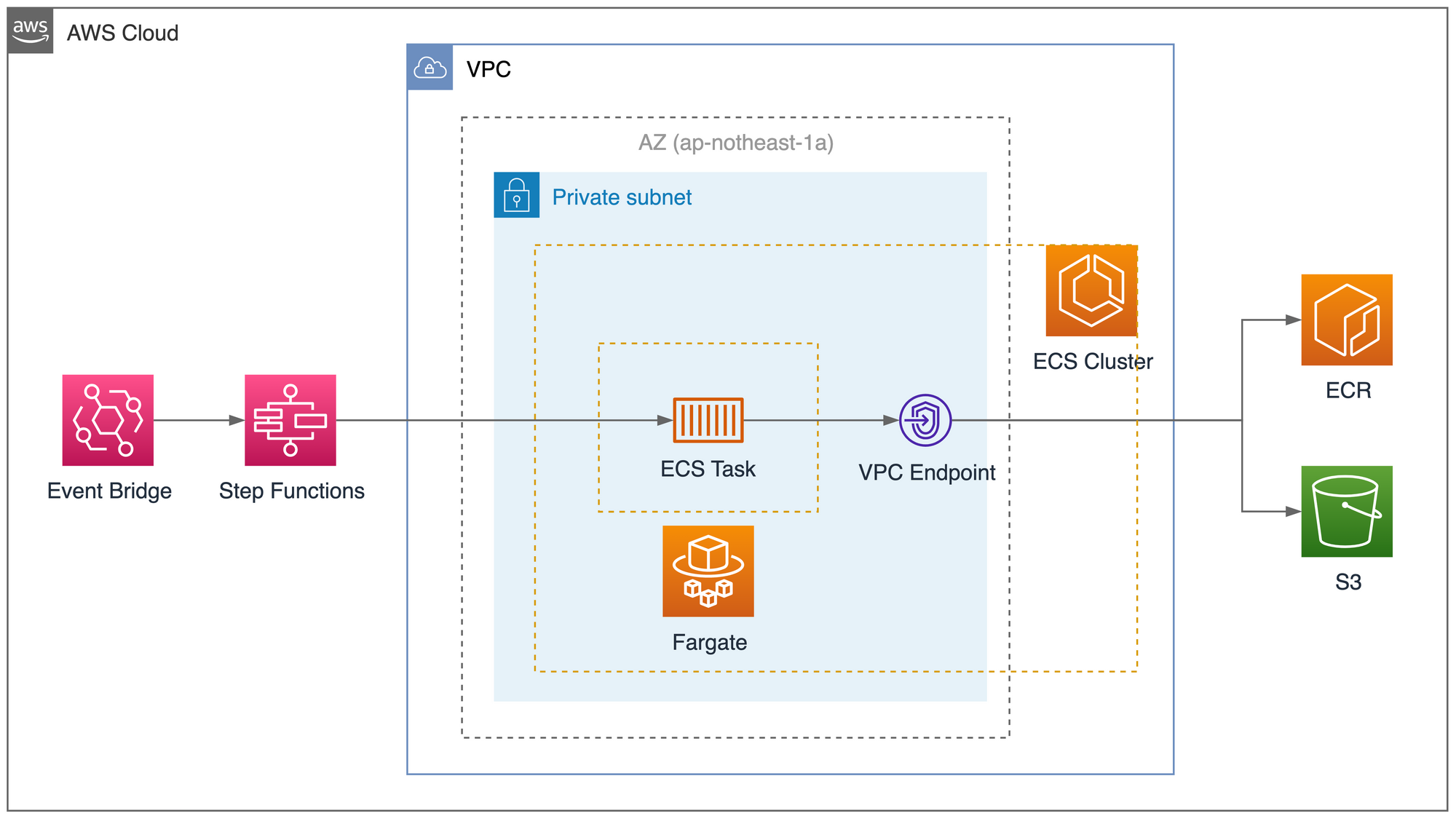

ML Pipeline

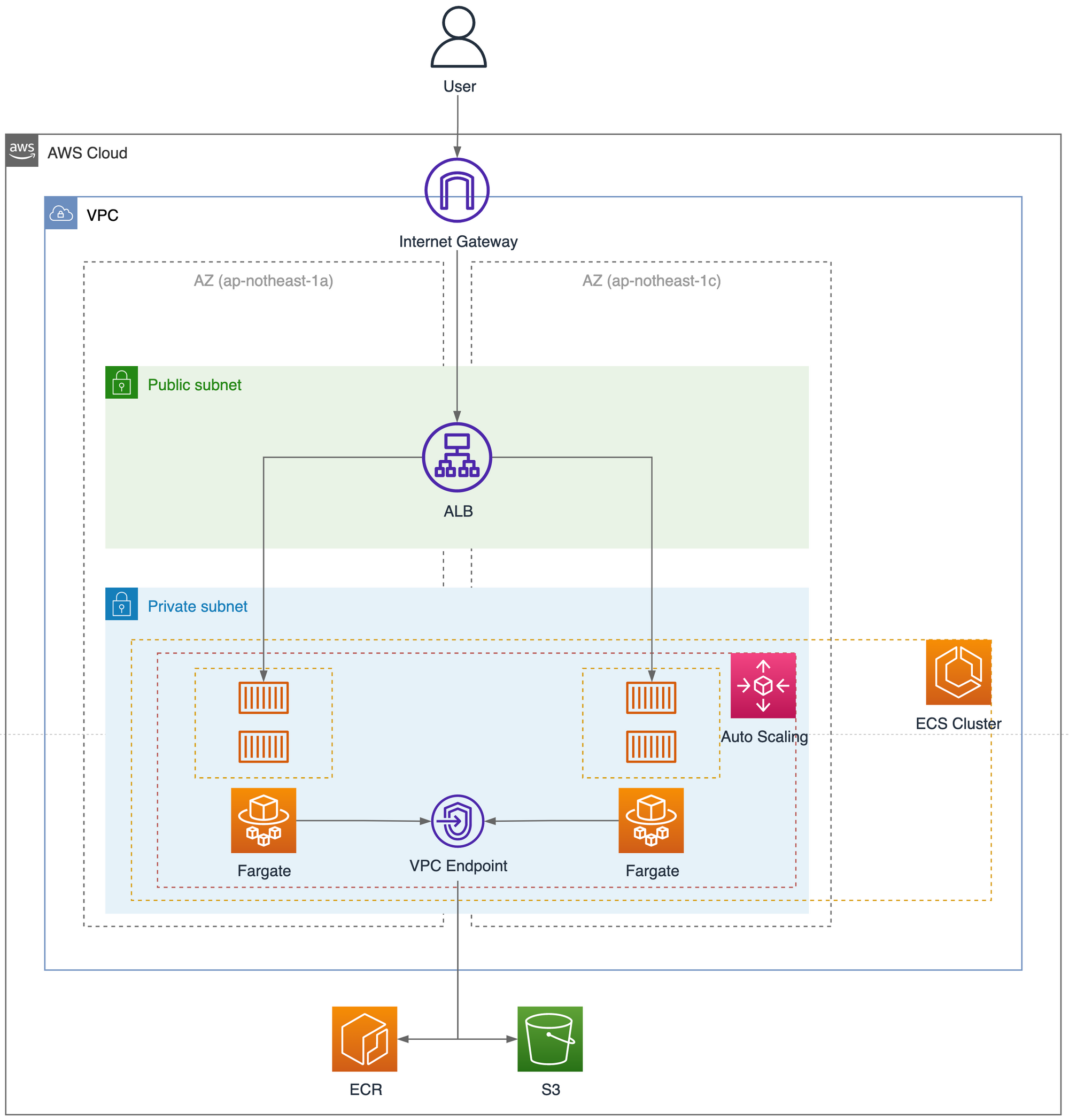

Predict Server

Requirements

| Software | Install (Mac) |
|---|---|
| pyenv | `brew install pyenv` |
| Poetry | `curl -sSL https://install.python-poetry.org \| python3 -` |
| direnv | `brew install direnv` |
| Terraform | `brew install terraform` |
| Docker | install via dmg |
| awscli | `curl "https://awscli.amazonaws.com/AWSCLIV2.pkg" -o "AWSCLIV2.pkg"` |

Setup

Install Python Dependencies

Use pyenv to install Python 3.9.0 and select it for this project.

$ pyenv install 3.9.0

$ pyenv local 3.9.0

Use Poetry to install library dependencies

$ poetry install

Configure environment variable

Use direnv to load the environment variables from a local .env file.

$ cp .env.example .env

$ direnv allow .

Set your environment variable setting

AWS_REGION=

AWS_ACCOUNT_ID=

AWS_PROFILE=

AWS_BUCKET=

AWS_ALB_DNS=

USER_NAME=

VERSION=2023-05-11

MODEL=sgd_classifier_ctr_model

Create AWS Resources
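Before running later steps, it can be useful to confirm that the variables above are actually loaded into the shell. The helper below is a hypothetical sketch, not part of the repository. The list of names mirrors the template above; whether each one is needed at a given step is an assumption.

```python
import os

# Names taken from the environment variable template; treating all of them
# as required is an assumption made for this sketch.
REQUIRED_VARS = [
    "AWS_REGION",
    "AWS_ACCOUNT_ID",
    "AWS_PROFILE",
    "AWS_BUCKET",
    "AWS_ALB_DNS",
    "USER_NAME",
    "VERSION",
    "MODEL",
]


def missing_env_vars(environ=None):
    """Return the required variable names that are unset or empty."""
    env = os.environ if environ is None else environ
    return [name for name in REQUIRED_VARS if not env.get(name)]


missing = missing_env_vars()
if missing:
    print("Unset or empty variables: " + ", ".join(missing))
else:
    print("All expected variables are set.")
```

Passing a plain dict as `environ` allows the check to be exercised without touching the real environment.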

Move to the infra directory.

$ cd infra

Use Terraform to create the AWS resources.

Initialize and apply the Terraform configuration.

$ terraform init

$ terraform apply

Prepare train data

Unzip the training data.

$ unzip train_data.zip

Upload the training data to S3.

$ aws s3 mv train_data s3://$AWS_BUCKET

Code static analysis tool

| Tool | Usage |
|---|---|
| isort | sorts and checks import statements |
| black | formats code style |
| flake8 | checks code quality |
| mypy | performs static type checking |
| pysen | manages the static analysis tools |

Usage

Build ML Pipeline

$ make build-ml

Run ML Pipeline

$ make run-ml

Build Predict API

$ make build-predictor

Run Predict API locally

$ make up

Shutdown Predict API locally

$ make down

Run formatter

$ make format

Run linter

$ make lint

Run pytest

$ make test