- Example Solution File

- 05/03/22

- James Irving

Data Enrichment Mock Exam

API results:

https://drive.google.com/file/d/10iWPhZtId0R9RCiVculSozCwldG-V3eH/view?usp=sharing

- Read in the json file

- Separate the records into 4 tables each a pandas dataframe

- Transform In this case remove dollar signs from funded amount in the financials records and convert to numeric datatype

- Create a database with SQLAlchemy and add the tables to the datbase

- Perform a hypothesis test to determine if there is a signficant difference between the funded amount when it is all males and when there is at least one female in the group.

import json

import pandas as pd

import numpy as np

import seaborn as sns

from scipy import stats

import pymysql

pymysql.install_as_MySQLdb()

from sqlalchemy import create_engine

from sqlalchemy_utils import create_database, database_exists## Loading json file

with open('Mock_Crowdsourcing_API_Results.json') as f:

results = json.load(f)

results.keys()dict_keys(['meta', 'data'])

## explore each key

type(results['meta'])str

## display meta

results['meta']'Practice Lesson: Mock API Call'

## display data

type(results['data'])dict

## preview the dictionary

# results['data']## preview just the keys

results['data'].keys()dict_keys(['crowd', 'demographics', 'financials', 'use'])

## what does the crowd key look like?

# results['data']['crowd']## checking single entry of crowd

results['data']['crowd'][0]{'id': 658776,

'posted_time': '2014-01-17 21:21:10+00:00',

'funded_time': '2014-02-05 17:57:55+00:00',

'lender_count': 33}

## making crowd a dataframe

crowd = pd.DataFrame(results['data']['crowd'])

crowd.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

| id | posted_time | funded_time | lender_count | |

|---|---|---|---|---|

| 0 | 658776 | 2014-01-17 21:21:10+00:00 | 2014-02-05 17:57:55+00:00 | 33 |

| 1 | 1314847 | 2017-06-07 02:02:41+00:00 | 2017-06-21 17:10:38+00:00 | 9 |

| 2 | 863063 | 2015-03-27 20:08:04+00:00 | 2015-04-04 15:01:22+00:00 | 1 |

| 3 | 1184347 | 2016-11-14 07:32:12+00:00 | 2016-11-25 03:07:13+00:00 | 47 |

| 4 | 729745 | 2014-06-24 07:35:46+00:00 | 2014-07-10 16:12:43+00:00 | 12 |

| ... | ... | ... | ... | ... |

| 9995 | 679499 | 2014-03-05 07:05:38+00:00 | 2014-03-13 01:01:41+00:00 | 11 |

| 9996 | 873525 | 2015-04-22 06:32:13+00:00 | None | 6 |

| 9997 | 917686 | 2015-07-15 11:53:33+00:00 | 2015-08-14 11:45:40+00:00 | 44 |

| 9998 | 905789 | 2015-06-22 07:44:18+00:00 | 2015-07-14 00:20:45+00:00 | 11 |

| 9999 | 1216411 | 2017-01-06 06:54:07+00:00 | 2017-01-08 01:17:28+00:00 | 1 |

10000 rows × 4 columns

## making demographics a dataframe

demo = pd.DataFrame(results['data']['demographics'])

demo.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

| id | country | region | borrower_genders | |

|---|---|---|---|---|

| 0 | 658776 | El Salvador | Ciudad El Triunfo | male |

| 1 | 1314847 | Philippines | Bais, Negros Oriental | female |

| 2 | 863063 | Peru | Huarochiri | female, female, female, female, female, female... |

| 3 | 1184347 | Armenia | Vanadzor town | female |

| 4 | 729745 | Uganda | Masindi | female |

| ... | ... | ... | ... | ... |

| 9995 | 679499 | Pakistan | Lahore | female |

| 9996 | 873525 | Kenya | Machakos | male, male, female, female, male |

| 9997 | 917686 | Senegal | None | female, female |

| 9998 | 905789 | Philippines | Binalbagan, Negros Occidental | female |

| 9999 | 1216411 | Philippines | Carmen, Bohol | female |

10000 rows × 4 columns

## making financials a dataframe

financials = pd.DataFrame(results['data']['financials'])

financials.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

| id | funded_amount | currency | term_in_months | |

|---|---|---|---|---|

| 0 | 658776 | $1000.0 | USD | 20.0 |

| 1 | 1314847 | $225.0 | PHP | 13.0 |

| 2 | 863063 | $1150.0 | PEN | 6.0 |

| 3 | 1184347 | $1700.0 | AMD | 26.0 |

| 4 | 729745 | $400.0 | UGX | 8.0 |

| ... | ... | ... | ... | ... |

| 9995 | 679499 | 400.0 | PKR | 12.0 |

| 9996 | 873525 | 375.0 | KES | 14.0 |

| 9997 | 917686 | 1375.0 | XOF | 8.0 |

| 9998 | 905789 | 450.0 | PHP | 13.0 |

| 9999 | 1216411 | 125.0 | PHP | 16.0 |

10000 rows × 4 columns

## making use a dataframe

use = pd.DataFrame(results['data']['use'])

use.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

| id | activity | sector | use | |

|---|---|---|---|---|

| 0 | 658776 | Vehicle | Personal Use | to purchase a motorcycle in order to travel fr... |

| 1 | 1314847 | Pigs | Agriculture | to buy feed and other supplies like vitamins t... |

| 2 | 863063 | Bookstore | Retail | to buy notebooks, pencils, and pens. |

| 3 | 1184347 | Photography | Services | to pay for a new lens for providing photograph... |

| 4 | 729745 | Fuel/Firewood | Retail | to buy firewood to sell. |

| ... | ... | ... | ... | ... |

| 9995 | 679499 | Fruits & Vegetables | Food | to help her husband buy onions for resale. |

| 9996 | 873525 | Farming | Agriculture | to buy fertilizer and pesticides to boost his ... |

| 9997 | 917686 | Fish Selling | Food | buy fish |

| 9998 | 905789 | General Store | Retail | to buy more groceries to sell. |

| 9999 | 1216411 | Personal Housing Expenses | Housing | to buy cement, hollow blocks, GI sheets, sand,... |

10000 rows × 4 columns

## fixing funded amount column

financials['funded_amount'] = financials['funded_amount'].str.replace('$','')

financials['funded_amount'] = pd.to_numeric(financials['funded_amount'])

financials/var/folders/rf/vw4r41jd7vd95x1w0dth7v9h0000gp/T/ipykernel_20630/2638807975.py:2: FutureWarning: The default value of regex will change from True to False in a future version. In addition, single character regular expressions will *not* be treated as literal strings when regex=True.

financials['funded_amount'] = financials['funded_amount'].str.replace('$','')

.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

| id | funded_amount | currency | term_in_months | |

|---|---|---|---|---|

| 0 | 658776 | 1000.0 | USD | 20.0 |

| 1 | 1314847 | 225.0 | PHP | 13.0 |

| 2 | 863063 | 1150.0 | PEN | 6.0 |

| 3 | 1184347 | 1700.0 | AMD | 26.0 |

| 4 | 729745 | 400.0 | UGX | 8.0 |

| ... | ... | ... | ... | ... |

| 9995 | 679499 | 400.0 | PKR | 12.0 |

| 9996 | 873525 | 375.0 | KES | 14.0 |

| 9997 | 917686 | 1375.0 | XOF | 8.0 |

| 9998 | 905789 | 450.0 | PHP | 13.0 |

| 9999 | 1216411 | 125.0 | PHP | 16.0 |

10000 rows × 4 columns

## loading mysql credentials

with open('/Users/codingdojo/.secret/mysql.json') as f:

login = json.load(f)

login.keys()dict_keys(['user', 'password'])

## creating connection to database with sqlalchemy

connection_str = f"mysql+pymysql://{login['user']}:{login['password']}@localhost/mock-belt-exam"

engine = create_engine(connection_str)## Check if database exists, if not, create it

if database_exists(connection_str) == False:

create_database(connection_str)

else:

print('The database already exists.')## saving dataframes to database

financials.to_sql('financials', engine, index=False, if_exists = 'replace')

use.to_sql('use', engine, index=False, if_exists = 'replace')

demo.to_sql('demographics', engine, index=False, if_exists = 'replace')

crowd.to_sql('crowd',engine, index=False, if_exists = 'replace')10000

## checking if tables created

q= '''SHOW TABLES;'''

pd.read_sql(q,engine).dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

| Tables_in_mock-belt-exam | |

|---|---|

| 0 | crowd |

| 1 | demographics |

| 2 | financials |

| 3 | use |

-

$H_0$ (Null Hypothesis): Funded amount is the same for teams that contain at least 1 female and teams that are all male. -

$H_A$ (Alternative Hypothesis): There is a significant difference between the funded amount for teams that contain at least 1 female and teams that are all male. -

Based upon the Choosing the Right Hypothesis Test workflow from the LP:

-

The appropriate test to perform would be:

- Since we are measuring a numeric quantity (funded amount)

- and we are comparing 2 groups/samples.

- We therefore want to perform a 2-sample t-test, A.K.A. an independent t-test.

-

The appropriate test to perform would be:

-

According the the work flow, the 2-sample T-Test has the following assumptions:

- No significant outliers

- Normality

- Equal Variance

- The next step is to get the data for each group in separate variables. All of the approaches below will lead to the same result: a

male_dfandfemale_dfvariable.

q = """SELECT

f.id, f.funded_amount, d.borrower_genders

FROM

financials AS f

JOIN

demographics AS d ON f.id = d.id;"""

df = pd.read_sql(q,engine)

df.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

| id | funded_amount | borrower_genders | |

|---|---|---|---|

| 0 | 658776 | 1000.0 | male |

| 1 | 1314847 | 225.0 | female |

| 2 | 863063 | 1150.0 | female, female, female, female, female, female... |

| 3 | 1184347 | 1700.0 | female |

| 4 | 729745 | 400.0 | female |

| ... | ... | ... | ... |

| 9995 | 1033255 | 1000.0 | male |

| 9996 | 998024 | 150.0 | female |

| 9997 | 771844 | 225.0 | female |

| 9998 | 679499 | 400.0 | female |

| 9999 | 917686 | 1375.0 | female, female |

10000 rows × 3 columns

## Create a column that defines the 2 groups, has female or not

df['has_female'] = df['borrower_genders'].str.contains('female', case=False)

df.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

| id | funded_amount | borrower_genders | has_female | |

|---|---|---|---|---|

| 0 | 658776 | 1000.0 | male | False |

| 1 | 1314847 | 225.0 | female | True |

| 2 | 863063 | 1150.0 | female, female, female, female, female, female... | True |

| 3 | 1184347 | 1700.0 | female | True |

| 4 | 729745 | 400.0 | female | True |

| ... | ... | ... | ... | ... |

| 9995 | 1033255 | 1000.0 | male | False |

| 9996 | 998024 | 150.0 | female | True |

| 9997 | 771844 | 225.0 | female | True |

| 9998 | 679499 | 400.0 | female | True |

| 9999 | 917686 | 1375.0 | female, female | True |

10000 rows × 4 columns

## Separate the column of interest based on the groups

male_df = df.loc[ df['has_female']==False, ['funded_amount','has_female']]

female_df = df.loc[ df['has_female']==True, ['funded_amount','has_female']]

print(f"There are {len(female_df)} campaigns that had females on the team." )

print(f"There are {len(male_df)} campaigns that only had males on the team." )There are 7820 campaigns that had females on the team.

There are 2119 campaigns that only had males on the team.

- Due to a quirk with using "%" with sqlalchemy queries, in order to use a LIKE command with "%" for "%female": 1. Add quotation marks around the "%" expression.

python q = '''SELECT f.funded_amount, d.borrower_genders FROM financials AS f JOIN demographics AS d ON f.id = d.id WHERE d.borrower_genders LIKE "%female%";'''2. Use the sqlalchemy text function when running your query.python from sqlalchemy import text female_df = pd.read_sql(text(q),engine)

## importing text function to use on query with a "%" in it

from sqlalchemy import text## query to get campaigns that included female borrowers

q = '''SELECT

f.funded_amount,

d.borrower_genders LIKE "%female%" as "has_female"

FROM

financials AS f

JOIN

demographics AS d ON f.id = d.id

WHERE

d.borrower_genders LIKE "%female%";'''

female_df = pd.read_sql(text(q),engine)

female_df.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

| funded_amount | has_female | |

|---|---|---|

| 0 | 225.0 | 1 |

| 1 | 1150.0 | 1 |

| 2 | 1700.0 | 1 |

| 3 | 400.0 | 1 |

| 4 | 350.0 | 1 |

| ... | ... | ... |

| 7815 | 400.0 | 1 |

| 7816 | 375.0 | 1 |

| 7817 | 1375.0 | 1 |

| 7818 | 450.0 | 1 |

| 7819 | 125.0 | 1 |

7820 rows × 2 columns

## query to get campaigns that were only male borrowers

q = """SELECT

f.funded_amount,

d.borrower_genders LIKE "%female%" as "has_female"

FROM

financials AS f

JOIN

demographics AS d ON f.id = d.id

WHERE

d.borrower_genders NOT LIKE '%female%';"""

male_df = pd.read_sql(text(q),engine)

male_df.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

| funded_amount | has_female | |

|---|---|---|

| 0 | 1000.0 | 0 |

| 1 | 925.0 | 0 |

| 2 | 875.0 | 0 |

| 3 | 600.0 | 0 |

| 4 | 375.0 | 0 |

| ... | ... | ... |

| 2114 | 1000.0 | 0 |

| 2115 | 800.0 | 0 |

| 2116 | 125.0 | 0 |

| 2117 | 100.0 | 0 |

| 2118 | 3000.0 | 0 |

2119 rows × 2 columns

print(f"There are {len(female_df)} campaigns that had females on the team." )

print(f"There are {len(male_df)} campaigns that only had males on the team." )There are 7820 campaigns that had females on the team.

There are 2119 campaigns that only had males on the team.

df = pd.merge(financials, demo, on='id')

df.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

| id | funded_amount | currency | term_in_months | country | region | borrower_genders | |

|---|---|---|---|---|---|---|---|

| 0 | 658776 | 1000.0 | USD | 20.0 | El Salvador | Ciudad El Triunfo | male |

| 1 | 1314847 | 225.0 | PHP | 13.0 | Philippines | Bais, Negros Oriental | female |

| 2 | 863063 | 1150.0 | PEN | 6.0 | Peru | Huarochiri | female, female, female, female, female, female... |

| 3 | 1184347 | 1700.0 | AMD | 26.0 | Armenia | Vanadzor town | female |

| 4 | 729745 | 400.0 | UGX | 8.0 | Uganda | Masindi | female |

| ... | ... | ... | ... | ... | ... | ... | ... |

| 9995 | 679499 | 400.0 | PKR | 12.0 | Pakistan | Lahore | female |

| 9996 | 873525 | 375.0 | KES | 14.0 | Kenya | Machakos | male, male, female, female, male |

| 9997 | 917686 | 1375.0 | XOF | 8.0 | Senegal | None | female, female |

| 9998 | 905789 | 450.0 | PHP | 13.0 | Philippines | Binalbagan, Negros Occidental | female |

| 9999 | 1216411 | 125.0 | PHP | 16.0 | Philippines | Carmen, Bohol | female |

10000 rows × 7 columns

df['has_female'] = df['borrower_genders'].str.contains('female', case=False)

df.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

| id | funded_amount | currency | term_in_months | country | region | borrower_genders | has_female | |

|---|---|---|---|---|---|---|---|---|

| 0 | 658776 | 1000.0 | USD | 20.0 | El Salvador | Ciudad El Triunfo | male | False |

| 1 | 1314847 | 225.0 | PHP | 13.0 | Philippines | Bais, Negros Oriental | female | True |

| 2 | 863063 | 1150.0 | PEN | 6.0 | Peru | Huarochiri | female, female, female, female, female, female... | True |

| 3 | 1184347 | 1700.0 | AMD | 26.0 | Armenia | Vanadzor town | female | True |

| 4 | 729745 | 400.0 | UGX | 8.0 | Uganda | Masindi | female | True |

| ... | ... | ... | ... | ... | ... | ... | ... | ... |

| 9995 | 679499 | 400.0 | PKR | 12.0 | Pakistan | Lahore | female | True |

| 9996 | 873525 | 375.0 | KES | 14.0 | Kenya | Machakos | male, male, female, female, male | True |

| 9997 | 917686 | 1375.0 | XOF | 8.0 | Senegal | None | female, female | True |

| 9998 | 905789 | 450.0 | PHP | 13.0 | Philippines | Binalbagan, Negros Occidental | female | True |

| 9999 | 1216411 | 125.0 | PHP | 16.0 | Philippines | Carmen, Bohol | female | True |

10000 rows × 8 columns

## Separate the column of interest based on the groups

male_df = df.loc[ df['has_female']==False, ['funded_amount','has_female']]

female_df = df.loc[ df['has_female']==True,['funded_amount','has_female']]

print(f"There are {len(female_df)} campaigns that had females on the team." )

print(f"There are {len(male_df)} campaigns that only had males on the team." )There are 7820 campaigns that had females on the team.

There are 2119 campaigns that only had males on the team.

## concatenate the two dataframes for visualziation.

plot_df = pd.concat([male_df, female_df], axis=0)

plot_df.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

| funded_amount | has_female | |

|---|---|---|

| 0 | 1000.0 | False |

| 8 | 925.0 | False |

| 18 | 875.0 | False |

| 22 | 600.0 | False |

| 32 | 375.0 | False |

| ... | ... | ... |

| 9995 | 400.0 | True |

| 9996 | 375.0 | True |

| 9997 | 1375.0 | True |

| 9998 | 450.0 | True |

| 9999 | 125.0 | True |

9939 rows × 2 columns

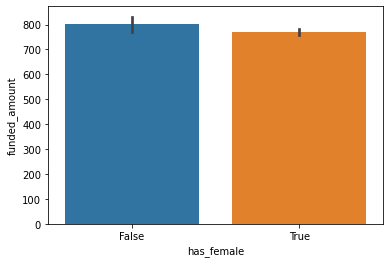

## visualizing means. ci=68 makes easier to compare error bars (will discuss in class)

ax = sns.barplot(data=plot_df, x='has_female', y='funded_amount', ci=68)## now that we have visualized the groups, we can save a final male_group and female_group

# that are a pandas Series. This will make the rest of our workflow simpler than if

# we still had a dataframe

female_group = female_df['funded_amount']

male_group = male_df['funded_amount']

display(female_group.head(), male_group.head())1 225.0

2 1150.0

3 1700.0

4 400.0

5 350.0

Name: funded_amount, dtype: float64

0 1000.0

8 925.0

18 875.0

22 600.0

32 375.0

Name: funded_amount, dtype: float64

- According the the work flow, the 2-sample T-Test has the following assumptions:

- No significant outliers

- Normality

- Equal Variance

- Check each group SEPARATELY!

## Checking for abs vlaue of z-scores that are > 3

is_outlier_females = np.abs(stats.zscore(female_group)) > 3

print(f"There are {is_outlier_females.sum()} outliers in the female group out of {len(female_group)})")There are 202 outliers in the female group out of 7820)

female_df.loc[~is_outlier_females].dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

| funded_amount | has_female | |

|---|---|---|

| 1 | 225.0 | True |

| 2 | 1150.0 | True |

| 3 | 1700.0 | True |

| 4 | 400.0 | True |

| 5 | 350.0 | True |

| ... | ... | ... |

| 9995 | 400.0 | True |

| 9996 | 375.0 | True |

| 9997 | 1375.0 | True |

| 9998 | 450.0 | True |

| 9999 | 125.0 | True |

7618 rows × 2 columns

## removing outliers from female_group

female_group = female_group.loc[~is_outlier_females]

female_group1 225.0

2 1150.0

3 1700.0

4 400.0

5 350.0

...

9995 400.0

9996 375.0

9997 1375.0

9998 450.0

9999 125.0

Name: funded_amount, Length: 7618, dtype: float64

## Checking for abs vlaue of z-scores that are > 3

is_outlier_males = np.abs(stats.zscore(male_group)) > 3

print(f"There are {is_outlier_males.sum()} outliers in the male group of out of {len(male_group)}.")There are 26 outliers in the male group of out of 2119.

## removing outliers from male_group

male_group = male_group.loc[~is_outlier_males]

male_group0 1000.0

8 925.0

18 875.0

22 600.0

32 375.0

...

9984 1000.0

9985 800.0

9991 125.0

9992 100.0

9993 3000.0

Name: funded_amount, Length: 2093, dtype: float64

- According to the workflow on the LP, since both groups have n > 15, we can safely ignore the assumption of normality.

result = stats.levene(male_group, female_group)

print(result)

print(result.pvalue<.05)LeveneResult(statistic=5.919603200045773, pvalue=0.014991261165002913)

True

-

According to the documentation for stats.levene, the null hypothesis for the test is that both groups have equal variance. Since our p-value is less than .05 we reject that null hypothesis and conclude that our groups do NOT have equal variance.

-

Since we did NOT meet the assumption of equal variance, we will run our stats.ttest_ind using equal_var=False. This will run a Welch's T-Test, which is designed to account for unequal variance.

result = stats.ttest_ind(male_group, female_group, equal_var=False)

print(result)

result.pvalue < .05Ttest_indResult(statistic=4.5701408946264275, pvalue=5.046604720900298e-06)

True

-

Our Welch's T-Test return a p-value < .05 (it was actually p <.0001!) we reject the null hypothesis and support the alternative hypothesis that there is a significant difference in funded amounts for teams that included at least 1 female.

-

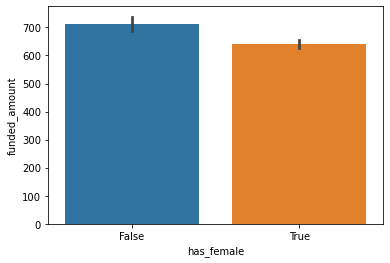

In order to know if they are funded significantly MORE or LESS, we look at the actual means of our final groups.

print(f"The average funded_amount for male groups was {male_group.mean():.2f}")

print(f"The average funded_amount for female groups was {female_group.mean():.2f}")The average funded_amount for male groups was 712.06

The average funded_amount for female groups was 640.80

- Male groups are funded at significantly higher amounts than female groups.

## concatenate the two dataframes for visualziation.

plot_df = pd.concat([male_df.loc[~is_outlier_males],

female_df.loc[~is_outlier_females]], axis=0)

plot_df.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

| funded_amount | has_female | |

|---|---|---|

| 0 | 1000.0 | False |

| 8 | 925.0 | False |

| 18 | 875.0 | False |

| 22 | 600.0 | False |

| 32 | 375.0 | False |

| ... | ... | ... |

| 9995 | 400.0 | True |

| 9996 | 375.0 | True |

| 9997 | 1375.0 | True |

| 9998 | 450.0 | True |

| 9999 | 125.0 | True |

9711 rows × 2 columns

sns.barplot(data=plot_df, x='has_female',y='funded_amount')<AxesSubplot:xlabel='has_female', ylabel='funded_amount'>