LCF-ATEPC

Code for our paper "A Multi-task Learning Model for Chinese-oriented Aspect Polarity Classification and Aspect Term Extraction".

LCF-ATEPC is a multi-task learning model for aspect term extraction (ATE) and aspect polarity classification (APC) on Chinese and multilingual data, based on PyTorch and pytorch-transformers.

Requirements

- Python >= 3.7

- PyTorch >= 1.0

- pytorch-transformers >= 1.2.0

Note: the BERT-SPC input format is no longer used for training or testing the ATE task, to keep ATE performance reliable. Setting use_bert_spc = True can still improve APC performance on the English datasets when only the APC subtask is considered.
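As a rough illustration of what the BERT-SPC input format changes, the sketch below builds the token sequence with and without the extra aspect segment. BERT-SPC is commonly described as `[CLS] sentence [SEP] aspect [SEP]`; the helper name `build_bert_input` and the example sentence are hypothetical and are not taken from this repository. Because the aspect term is appended to the input, it is presumably unsuitable for ATE, where the aspect is what the model has to find.

```python
def build_bert_input(sentence_tokens, aspect_tokens, use_bert_spc=False):
    """Return the token sequence for one example (illustrative helper only)."""
    # Plain BERT input: [CLS] sentence [SEP]
    tokens = ["[CLS]"] + list(sentence_tokens) + ["[SEP]"]
    if use_bert_spc:
        # BERT-SPC input: [CLS] sentence [SEP] aspect [SEP]
        # The aspect is visible to the model, which helps APC but would
        # reveal the target of the ATE task.
        tokens += list(aspect_tokens) + ["[SEP]"]
    return tokens


sentence = "the battery life is great".split()
aspect = "battery life".split()

print(build_bert_input(sentence, aspect))
print(build_bert_input(sentence, aspect, use_bert_spc=True))
```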

Training

Experiment settings are managed through a configuration file. To train a batch of experiments, define them in experiments.json.
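The following is a minimal sketch of how a batch of experiments could be read from such a file. The layout assumed here (one top-level key per experiment, each mapping to a dictionary of settings) and the experiment names and values are assumptions for illustration; check the experiments.json shipped with the repository for the actual schema. Only `--config_path`, `train_batch_size`, `max_seq_length` and `use_bert_spc` are names that appear in this README.

```python
import argparse
import json
import os
import tempfile


def load_experiments(config_path):
    """Read a JSON config and return one settings dict per experiment."""
    with open(config_path, encoding="utf-8") as f:
        config = json.load(f)
    # Assumed layout: {"experiment name": {setting: value, ...}, ...}
    return [dict(settings, name=name) for name, settings in config.items()]


def parse_args(argv=None):
    """Parse the command line; mirrors the --config_path option used above."""
    parser = argparse.ArgumentParser()
    parser.add_argument("--config_path", default="experiments.json")
    return parser.parse_args(argv)


# Demo with a throwaway file; the experiment names and values are made up.
demo_config = {
    "exp_small": {"train_batch_size": 8, "max_seq_length": 60, "use_bert_spc": False},
    "exp_apc_only": {"train_batch_size": 16, "max_seq_length": 80, "use_bert_spc": True},
}
with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "experiments.json")
    with open(path, "w", encoding="utf-8") as f:
        json.dump(demo_config, f)
    args = parse_args(["--config_path", path])
    experiments = load_experiments(args.config_path)

for exp in experiments:
    print(exp["name"], exp["train_batch_size"], exp["max_seq_length"])
```

Keeping each experiment as a plain dictionary makes it easy to run them one after another in a loop.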

To start training, run:

python train.py --config_path experiments.json

Out of Memory

BERT models require a lot of memory. If you run out of memory while training the model, the following options can help:

- Reduce the training batch size (train_batch_size = 4 or 8)

- Reduce the maximum input sequence length (max_seq_length = 40 or 60)

- Use a single BERT layer to model both the local and global contexts
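The options above can be sketched as a small helper that derives a lower-memory variant of an experiment's settings. This is only an illustration: `train_batch_size` and `max_seq_length` are the names used in this README, while `use_unique_bert` is an assumed name for the shared-BERT switch and the helper itself is hypothetical.

```python
def apply_low_memory_settings(settings, batch_size=4, max_seq_length=40, share_bert=True):
    """Return a copy of `settings` adjusted to reduce memory use."""
    low_mem = dict(settings)  # leave the caller's dict untouched
    # Never raise a value that is already below the requested cap.
    low_mem["train_batch_size"] = min(settings.get("train_batch_size", batch_size), batch_size)
    low_mem["max_seq_length"] = min(settings.get("max_seq_length", max_seq_length), max_seq_length)
    # Assumed option name: one BERT layer shared by local and global contexts.
    low_mem["use_unique_bert"] = share_bert
    return low_mem


original = {"train_batch_size": 32, "max_seq_length": 80}
reduced = apply_low_memory_settings(original)
print(reduced)
```

A smaller batch size and a shorter sequence length both shrink the activations held in memory, and sharing one BERT layer avoids keeping two copies of the encoder.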

Model Performance

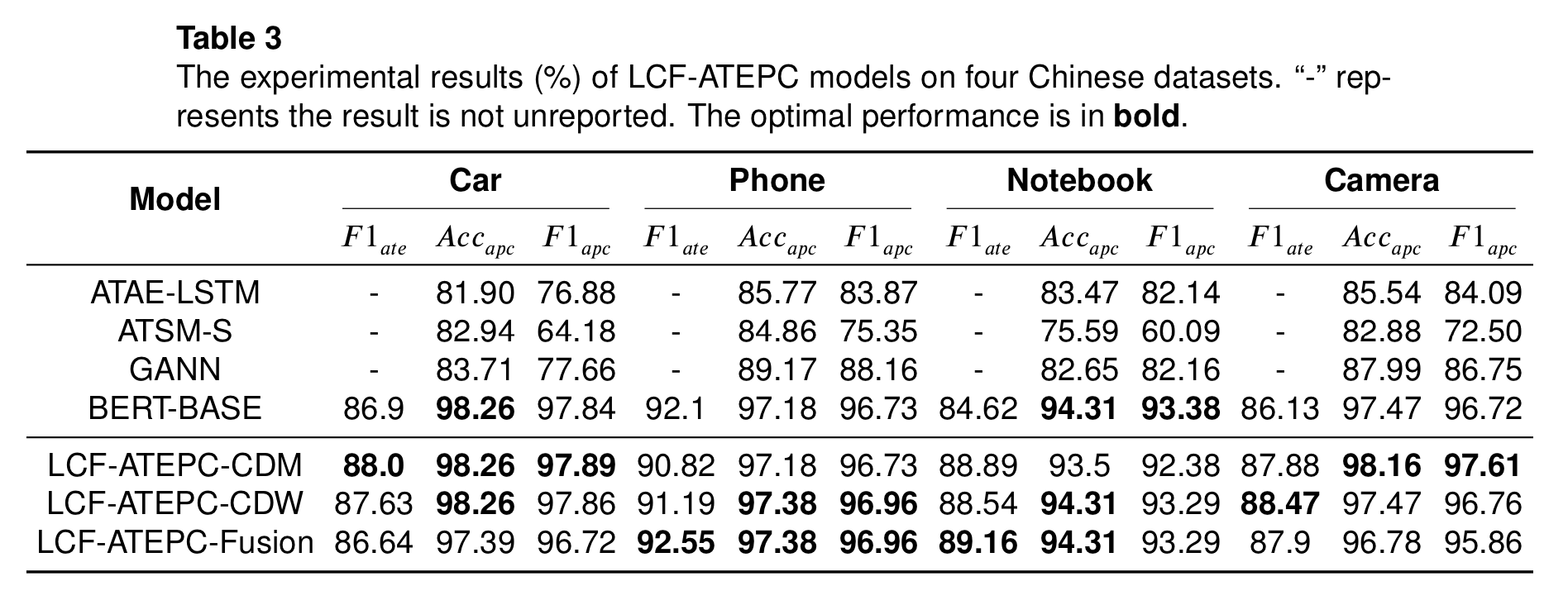

Performance on Chinese Datasets

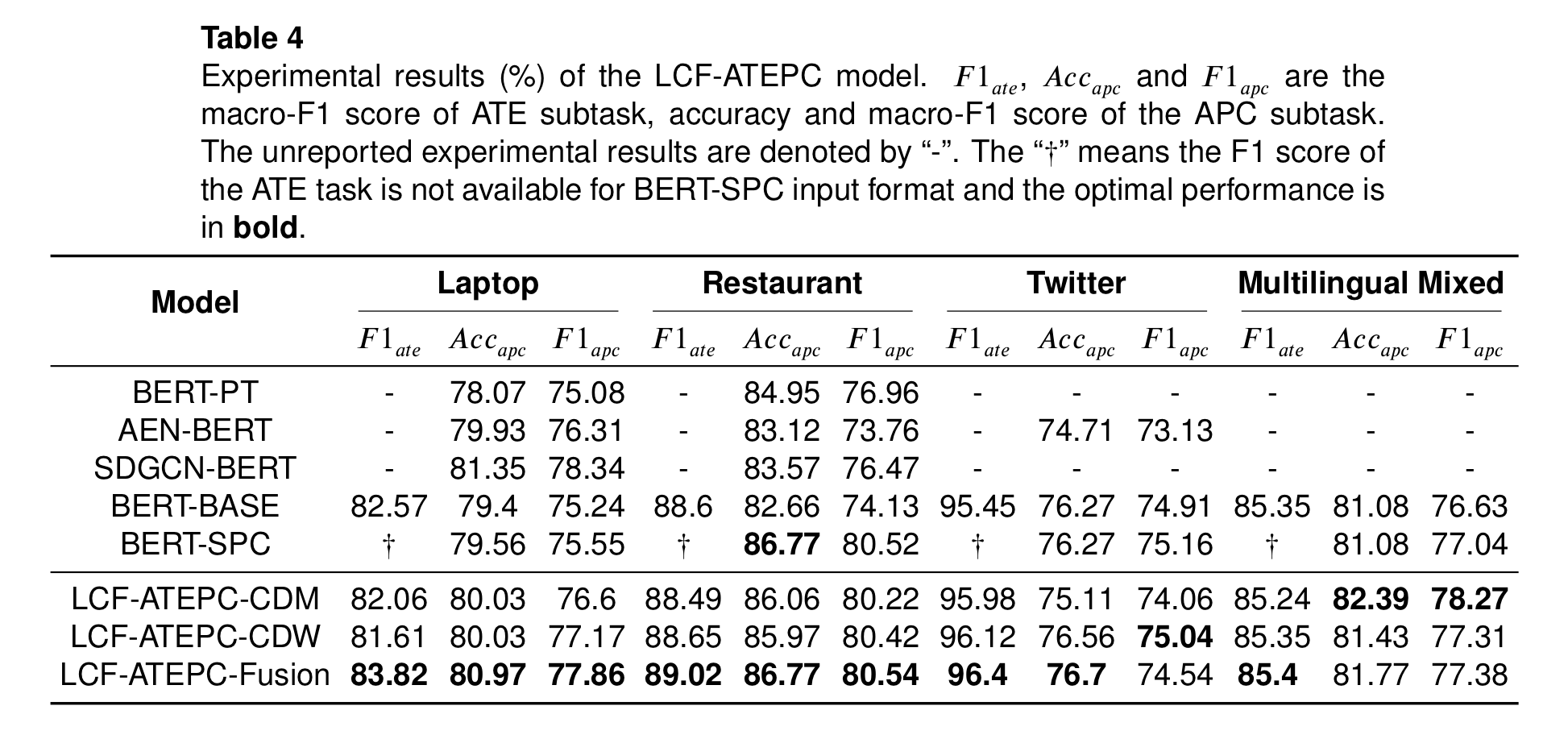

Performance on Multilingual Datasets

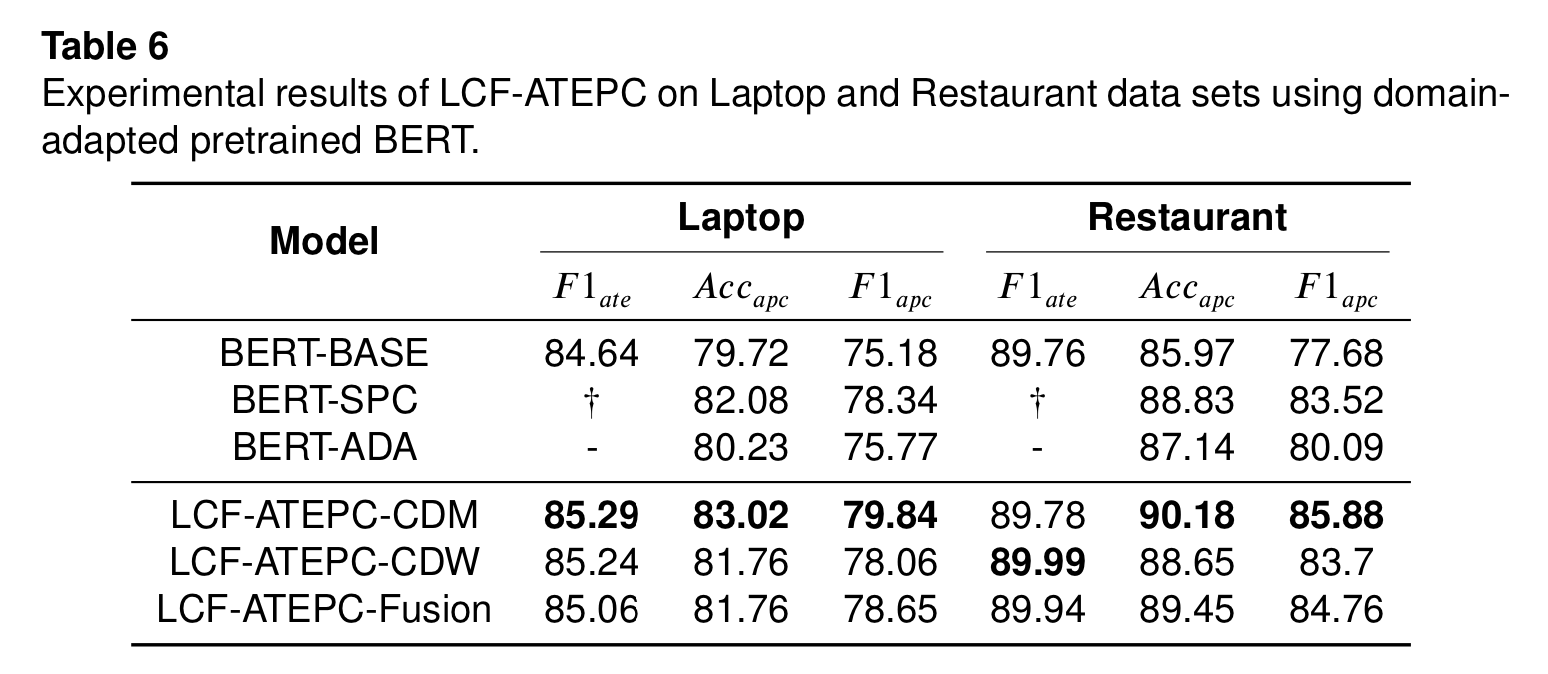

Optimal Performance on Laptop and Restaurant Datasets

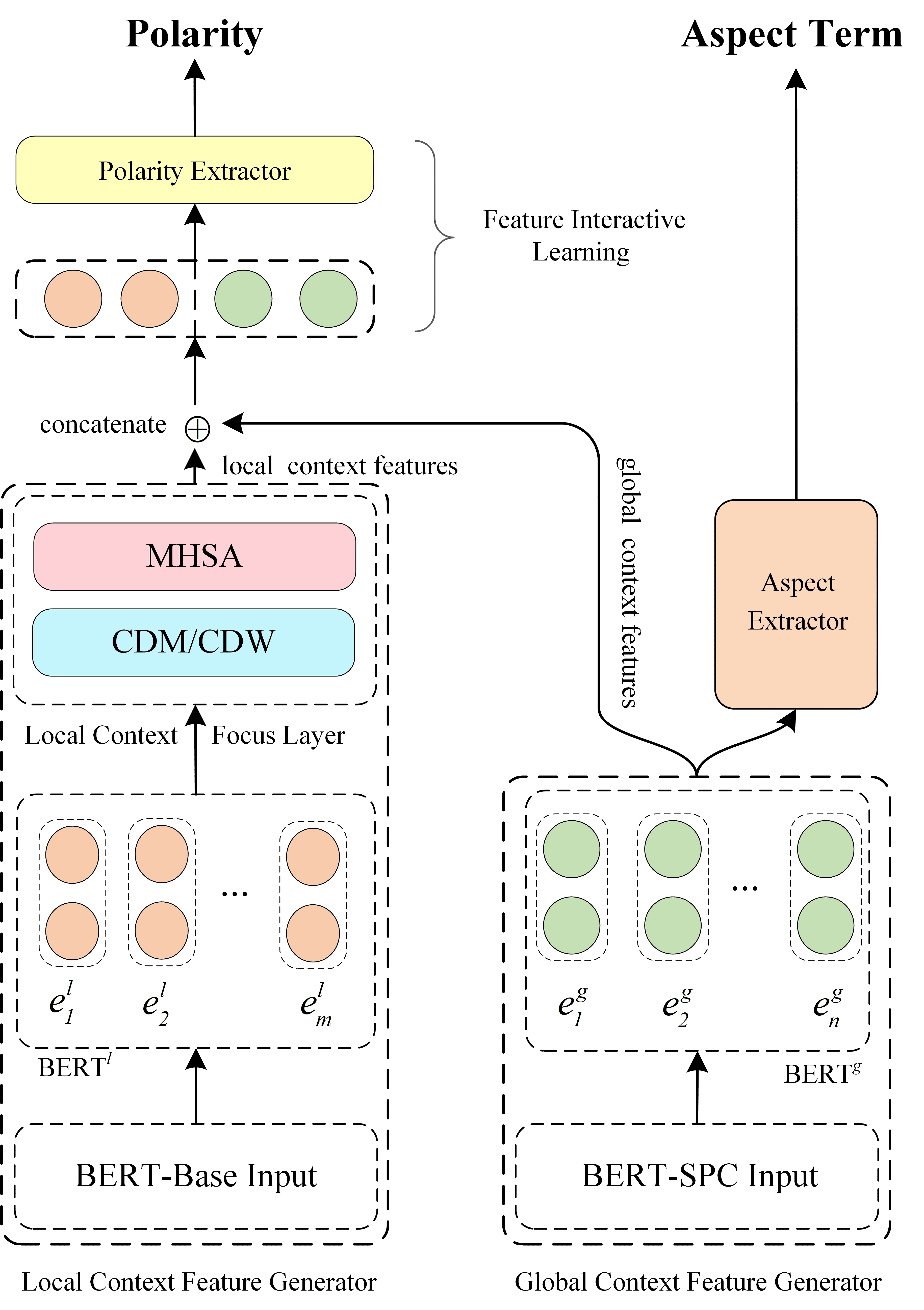

Model Architecture

Notice

We cleaned up and refactored the original code to make it easier to understand and reproduce. Because of our busy schedule, we have not tested every training scenario. If you find a problem in this repo, please raise an issue or submit a pull request, whichever is more convenient for you.

Citation

If this repository is helpful to you, please cite our paper:

@misc{yang2019multitask,

title={A Multi-task Learning Model for Chinese-oriented Aspect Polarity Classification and Aspect Term Extraction},

author={Heng Yang and Biqing Zeng and JianHao Yang and Youwei Song and Ruyang Xu},

year={2019},

eprint={1912.07976},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

License

MIT License