Features • Installation • Tutorials • Community • Citing • License

fasterai is a library created to make neural networks smaller and faster. It relies on common compression techniques such as pruning, knowledge distillation, and the Lottery Ticket Hypothesis.

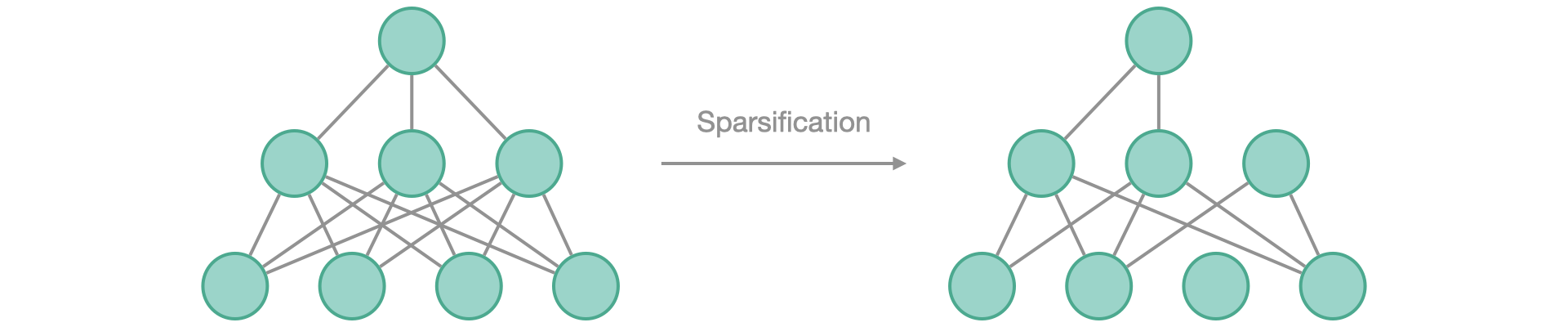

The core feature of fasterai is its sparsifying capabilities, built on four main modules: granularity, context, criteria, and schedule. Each module is highly customizable, so you can adapt it to your needs or even write your own.

Visit the Read The Docs project page or read the following README to learn more about using fasterai.

Make your model sparse (i.e. prune it) according to a:

- Sparsity: the percentage of weights that will be replaced by 0

- Granularity: the granularity at which you operate the pruning (removing weights, vectors, kernels, filters)

- Context: prune either each layer independently (local pruning) or the whole model (global pruning)

- Criteria: the criteria used to select the weights to remove (magnitude, movement, ...)

- Schedule: which schedule you want to use for pruning (one shot, iterative, gradual, ...)

This can be achieved by using SparsifyCallback(sparsity, granularity, context, criteria, schedule).
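To make the sparsity, context, and criteria options concrete, here is a minimal plain-Python sketch of magnitude-based pruning at weight granularity. It is not fasterai's implementation: the helpers `smallest_indices` and `sparsify`, and the toy layer dictionary, are hypothetical names introduced only for illustration.

```python
# Illustrative sketch only: these helpers are hypothetical, not part of fasterai.

def smallest_indices(values, sparsity):
    """Indices of the `sparsity` percent of values with the smallest magnitude."""
    n_pruned = int(len(values) * sparsity / 100)
    order = sorted(range(len(values)), key=lambda i: abs(values[i]))
    return set(order[:n_pruned])

def sparsify(layers, sparsity, context="local"):
    """Zero out weights in a dict of {layer_name: [weights]} by magnitude."""
    if context == "local":
        # Local: each layer reaches the target sparsity on its own.
        pruned = {}
        for name, weights in layers.items():
            drop = smallest_indices(weights, sparsity)
            pruned[name] = [0.0 if i in drop else w for i, w in enumerate(weights)]
        return pruned
    # Global: rank all weights together, so layers may end up
    # with different individual sparsities.
    flat = [(name, i) for name, weights in layers.items() for i in range(len(weights))]
    values = [layers[name][i] for name, i in flat]
    drop = {flat[j] for j in smallest_indices(values, sparsity)}
    return {
        name: [0.0 if (name, i) in drop else w for i, w in enumerate(weights)]
        for name, weights in layers.items()
    }

layers = {"conv1": [0.9, -0.8, 0.7, 0.6], "conv2": [0.05, -0.1, 0.2, 0.3]}
local_result = sparsify(layers, 50, context="local")
global_result = sparsify(layers, 50, context="global")
```

With this toy input, local pruning zeroes half of each layer, while global pruning removes all of `conv2` because its weights are the smallest in the whole model. That difference is the practical trade-off between the two contexts.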

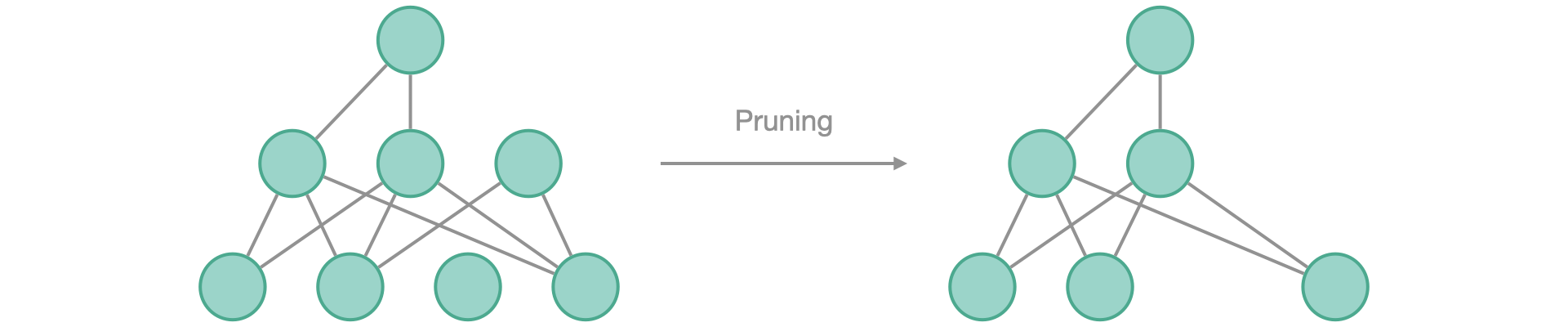
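The schedule option listed above (one shot, iterative, gradual) can be read as a function mapping training progress to the sparsity applied at that point. The sketch below assumes that reading. The function names, the `start` and `n_steps` parameters, and the cubic ramp (taken from Zhu and Gupta's gradual pruning formula with an initial sparsity of 0) are illustrative assumptions, not fasterai's actual schedules.

```python
# Illustrative sketch only: hypothetical schedule functions, not fasterai API.
# `progress` runs from 0.0 (start of training) to 1.0 (end of training).

def one_shot(progress, target, start=0.5):
    """Jump straight to the target sparsity once `progress` reaches `start`."""
    return target if progress >= start else 0.0

def iterative(progress, target, n_steps=3):
    """Increase sparsity in `n_steps` equal jumps spread over training."""
    completed = min(int(progress * n_steps) + 1, n_steps)
    return target * completed / n_steps

def gradual(progress, target):
    """Cubic ramp: sparsity rises quickly early on, then flattens out."""
    return target * (1 - (1 - progress) ** 3)

# Sparsity (in percent) halfway through training for an 80% target.
halfway = {
    "one_shot": one_shot(0.5, 80),
    "iterative": iterative(0.5, 80, n_steps=4),
    "gradual": gradual(0.5, 80),
}
```

A schedule of this shape can be swapped without touching the pruning criterion, which is presumably why the schedule is kept as a separate module.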

Once sparsifying has left your model with nodes whose weights are all zero, those nodes are useless and can be removed from the network entirely.

This can be achieved by using the Pruner() method
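The idea of physically removing zeroed nodes can be sketched on a tiny two-layer network in plain Python. This is an illustration under simplifying assumptions (no biases, ReLU activation), not a description of how Pruner() works internally. `forward` and `remove_dead_neurons` are hypothetical helpers.

```python
# Illustrative sketch only: hypothetical helpers, not fasterai's Pruner.

def forward(x, w1, w2):
    """Two-layer network without biases: y = w2 @ relu(w1 @ x)."""
    hidden = [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in w1]
    return [sum(w * h for w, h in zip(row, hidden)) for row in w2]

def remove_dead_neurons(w1, w2):
    """Drop hidden neurons whose incoming weights are all zero."""
    keep = [i for i, row in enumerate(w1) if any(w != 0.0 for w in row)]
    slim_w1 = [w1[i] for i in keep]
    # The next layer loses the matching input columns.
    slim_w2 = [[row[i] for i in keep] for row in w2]
    return slim_w1, slim_w2

w1 = [[0.5, -1.0], [0.0, 0.0], [2.0, 1.0]]  # second neuron was fully sparsified
w2 = [[1.0, 3.0, -0.5]]
slim_w1, slim_w2 = remove_dead_neurons(w1, w2)

x = [1.0, 2.0]
original_output = forward(x, w1, w2)
slim_output = forward(x, slim_w1, slim_w2)
```

Because a neuron with all-zero incoming weights and no bias always outputs zero, the slimmer network computes the same result with fewer parameters.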

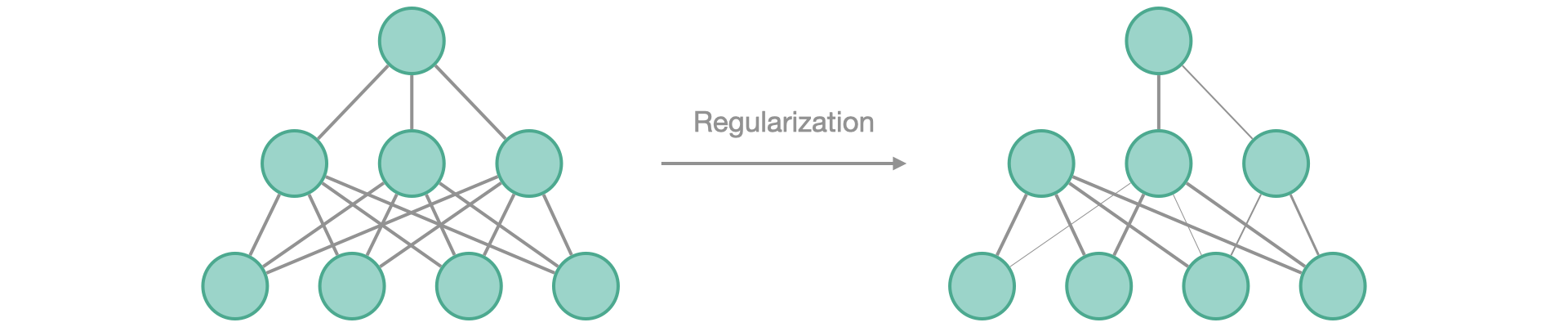

Instead of explicitly making your network sparse, let it train towards sparse connections by pushing the weights to be as small as possible.

Regularization can be applied to groups of weights, following the same granularities as for sparsifying, i.e.:

- Granularity: the granularity at which you operate the regularization (weights, vectors, kernels, filters, ...)

This can be achieved by using the RegularizationCallback(granularity)
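One common way to regularize groups of weights is a group-lasso style penalty: the sum of the L2 norms of each group, added to the training loss. The sketch below assumes that formulation. Whether RegularizationCallback computes exactly this is not stated here, and `group_penalty` and its granularity names are hypothetical.

```python
import math

# Illustrative sketch only: a hypothetical group-lasso style penalty,
# not necessarily what RegularizationCallback computes.

def group_penalty(weight_matrix, granularity="filter"):
    """Sum of the L2 norms of weight groups at the chosen granularity."""
    if granularity == "weight":
        # Groups of size one: the penalty reduces to the L1 norm.
        groups = [[w] for row in weight_matrix for w in row]
    elif granularity == "filter":
        # One group per row, i.e. per output filter or neuron.
        groups = weight_matrix
    else:
        raise ValueError(f"unsupported granularity: {granularity}")
    return sum(math.sqrt(sum(w * w for w in group)) for group in groups)

weights = [[3.0, 4.0], [0.0, 0.0]]
filter_penalty = group_penalty(weights, "filter")
weight_penalty = group_penalty(weights, "weight")
```

Penalizing whole groups encourages entire filters to shrink towards zero together, which makes them easier to remove afterwards than scattered individual zeros.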

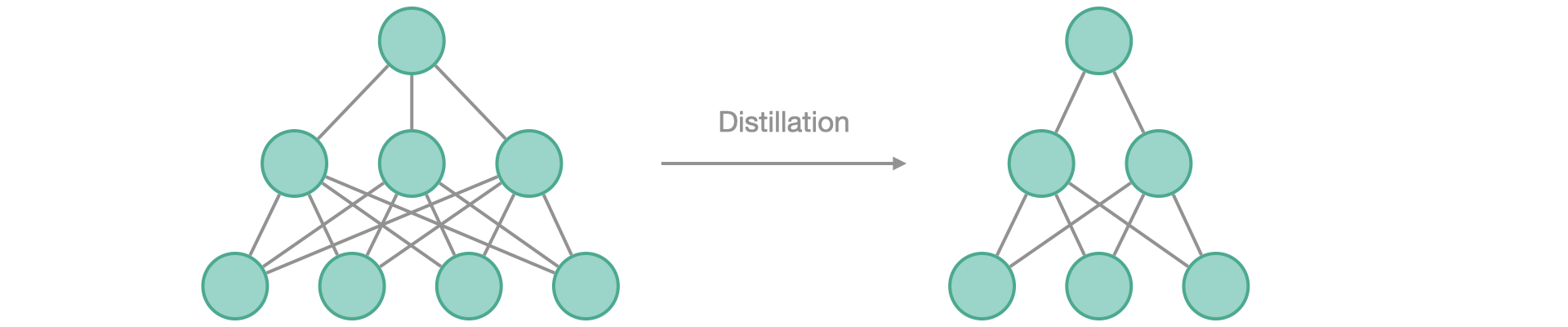

Distill the knowledge acquired by a big model into a smaller one, by using the KnowledgeDistillation callback.
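As a rough illustration of what distilling means, the classic formulation trains the student to match the teacher's temperature-softened output distribution. The plain-Python sketch below assumes that formulation (KL divergence scaled by the squared temperature). It is not the KnowledgeDistillation callback's code, and `softmax` and `distillation_loss` are hypothetical helpers.

```python
import math

# Illustrative sketch only: hypothetical helpers, not fasterai API.

def softmax(logits, temperature=1.0):
    """Softmax over logits divided by `temperature`."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(
        p * math.log(p / q)
        for p, q in zip(p_teacher, p_student)
        if p > 0  # terms with zero teacher probability contribute nothing
    )
    return kl * temperature ** 2

teacher = [1.0, 2.0, 3.0]
matching_loss = distillation_loss([1.0, 2.0, 3.0], teacher)
mismatched_loss = distillation_loss([3.0, 2.0, 1.0], teacher)
```

In practice this term is usually combined with the ordinary loss on the true labels, so the student learns from both the data and the teacher.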

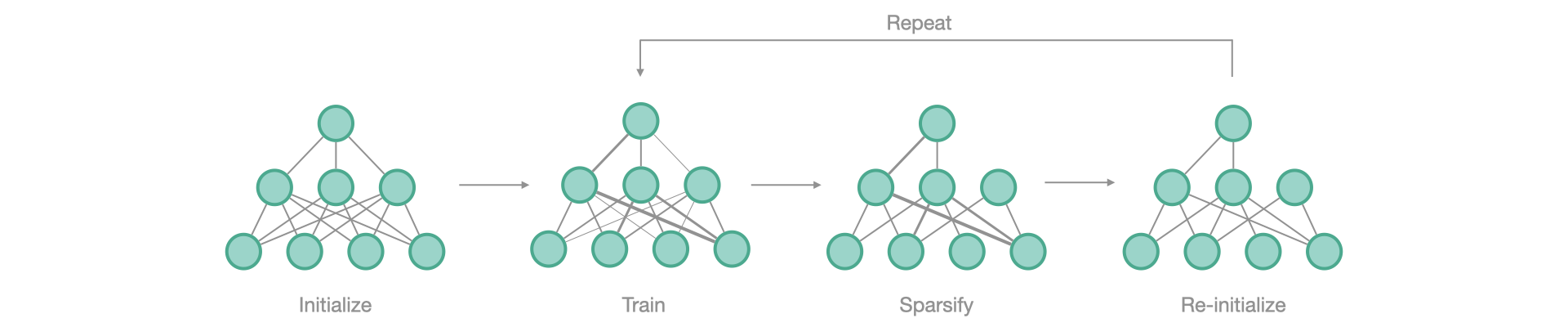

Find the winning ticket in your network, i.e. the initial subnetwork able to reach performance at least similar to that of the whole network.
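The Lottery Ticket procedure can be summarized as: train the network, prune the weights with the smallest trained magnitude, then rewind the surviving weights to their initial values. The sketch below shows one such round on a flat list of weights. `find_winning_ticket` and `toy_train` are hypothetical stand-ins, and the toy training function only mimics the effect of real training.

```python
# Illustrative sketch only: hypothetical helpers, not fasterai API.

def find_winning_ticket(init_weights, train, sparsity):
    """Train, prune the smallest trained weights, rewind survivors to init."""
    trained = train(list(init_weights))
    n_pruned = int(len(trained) * sparsity / 100)
    order = sorted(range(len(trained)), key=lambda i: abs(trained[i]))
    pruned = set(order[:n_pruned])
    mask = [0 if i in pruned else 1 for i in range(len(trained))]
    # The ticket keeps the ORIGINAL initial values of the surviving weights.
    ticket = [w * m for w, m in zip(init_weights, mask)]
    return ticket, mask

def toy_train(weights):
    """Stand-in for real training: scales each weight by a fixed factor."""
    factors = [3.0, 0.1, 2.0, 0.2]
    return [w * f for w, f in zip(weights, factors)]

init = [0.5, 0.4, -0.3, 0.6]
ticket, mask = find_winning_ticket(init, toy_train, sparsity=50)
```

The returned ticket would then be retrained with the mask held fixed, and the round can be repeated to reach higher sparsity.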

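```python
from fastai.vision.all import *   # provides cnn_learner
from fasterai.sparse.all import *

# dls, model, n_epochs and the sparsifying arguments are placeholders you define.
learn = cnn_learner(dls, model)
sp_cb = SparsifyCallback(sparsity, granularity, context, criteria, schedule)
learn.fit_one_cycle(n_epochs, cbs=sp_cb)
```

```
pip install git+https://github.com/nathanhubens/fasterai.git
```

or

```
pip install fasterai
```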

- Get Started with FasterAI

- Create your own pruning schedule

- Find winning tickets using the Lottery Ticket Hypothesis

- Use Knowledge Distillation to help a student model to reach higher performance

- Sparsify Transformers

- More to come...

Join our Discord server to meet other FasterAI users and share your projects!

```
@software{Hubens,
  author    = {Nathan Hubens},
  title     = {fasterai},
  year      = 2022,
  publisher = {Zenodo},
  version   = {v0.1.6},
  doi       = {10.5281/zenodo.6469868},
  url       = {https://doi.org/10.5281/zenodo.6469868}
}
```

Apache-2.0 License.