Sparkify has grown their user base and song database even more and wants to move their data warehouse to a data lake. Their data resides in S3, in a directory of JSON logs on user activity on the app, as well as a directory with JSON metadata on the songs in their app.

As their data engineer, you are tasked with building an ETL pipeline that extracts their data from S3, processes it with Spark, and loads it back into S3 as a set of dimensional tables. This will allow their analytics team to continue finding insights into what songs their users are listening to.

You'll be able to test your database and ETL pipeline by running queries provided by Sparkify's analytics team and comparing your results with their expected results.

You will build a data lake and an ETL pipeline in Spark that loads data from S3, processes the data into analytics tables, and loads them back into S3.
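As a rough illustration of that load, process, and write-back flow, here is a minimal PySpark sketch for one table. It is not the repository's `etl.py`. The table name `songs`, the Parquet output format, the deduplication on `song_id`, and the output location are all assumptions made for this example. The input bucket, directory layout, and column names come from the dataset description in this README.

```python
# Minimal sketch of an S3 -> Spark -> S3 flow for one table.
# Assumptions (not taken from the repository): the "songs" table name,
# Parquet as the output format, and deduplicating on song_id.

# Columns that appear in the sample song file shown in this README.
SONG_COLUMNS = ["song_id", "title", "artist_id", "year", "duration"]


def song_input_glob(input_url):
    """Glob matching every song file under the three-level partition dirs."""
    return f"{input_url.rstrip('/')}/song_data/*/*/*/*.json"


def table_output_path(output_url, table_name):
    """Location of one output table below the output bucket or prefix."""
    return f"{output_url.rstrip('/')}/{table_name}"


def run_song_etl(spark, input_url, output_url):
    """Read raw song JSON, keep the song columns, and write them back out."""
    raw = spark.read.json(song_input_glob(input_url))
    songs = raw.select(*SONG_COLUMNS).dropDuplicates(["song_id"])
    songs.write.mode("overwrite").parquet(table_output_path(output_url, "songs"))


# Usage on a machine with PySpark installed (the output bucket is hypothetical):
#
#   from pyspark.sql import SparkSession
#   spark = SparkSession.builder.appName("data-lake-sketch").getOrCreate()
#   run_song_etl(spark, "s3://udacity-dend", "s3://<your-output-bucket>")
```

The Spark session is passed in rather than created inside the function, so the path helpers can be checked without a cluster.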

- Python

- AWS

- AWS EMR

- Spark

You will work with two datasets that reside in S3 at the following locations:

- Song data: `s3://udacity-dend/song_data`

- Log data: `s3://udacity-dend/log_data`

The song dataset is a subset of real data from the Million Song Dataset. Each file is in JSON format and contains metadata about a song and the artist of that song. The files are partitioned by the first three letters that follow the `TR` prefix of each song's track ID. For example, here are the file paths to two files in this dataset.

song_data/A/B/C/TRABCEI128F424C983.json

song_data/A/A/B/TRAABJL12903CDCF1A.json
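To make the partitioning rule concrete, the sketch below rebuilds both example paths from their track IDs. The function name `song_file_path` is hypothetical, and the rule (use the three characters after the `TR` prefix) is inferred from the two paths shown above.

```python
def song_file_path(track_id: str, root: str = "song_data") -> str:
    """Build the partitioned path of a song file from its track ID."""
    if len(track_id) < 5 or not track_id.startswith("TR"):
        raise ValueError(f"unexpected track ID: {track_id!r}")
    # The three characters right after the "TR" prefix name the directory levels.
    first, second, third = track_id[2:5]
    return f"{root}/{first}/{second}/{third}/{track_id}.json"


print(song_file_path("TRABCEI128F424C983"))  # song_data/A/B/C/TRABCEI128F424C983.json
print(song_file_path("TRAABJL12903CDCF1A"))  # song_data/A/A/B/TRAABJL12903CDCF1A.json
```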

Here is an example of what a single song file looks like.

{"num_songs": 1, "artist_id": "ARJIE2Y1187B994AB7", "artist_latitude": null, "artist_longitude": null, "artist_location": "", "artist_name": "Line Renaud", "song_id": "SOUPIRU12A6D4FA1E1", "title": "Der Kleine Dompfaff", "duration": 152.92036, "year": 0}

The log dataset consists of log files in JSON format generated by an event simulator based on the songs in the dataset above. These simulate app activity logs from an imaginary music streaming app based on configuration settings.

The log files are partitioned by year and month. For example, here are filepaths to two files in this dataset.

log_data/2018/11/2018-11-12-events.json

log_data/2018/11/2018-11-13-events.json
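The same layout can be expressed as a small helper that maps a calendar day to its log file path. The function name `log_file_path` is hypothetical, and zero-padding the month directory is an assumption, since both examples above fall in November.

```python
from datetime import date


def log_file_path(day: date, root: str = "log_data") -> str:
    """Build the year/month-partitioned path of the log file for one day."""
    # Assumption: single-digit months are zero-padded in the directory name.
    return f"{root}/{day.year}/{day.month:02d}/{day.isoformat()}-events.json"


print(log_file_path(date(2018, 11, 12)))  # log_data/2018/11/2018-11-12-events.json
print(log_file_path(date(2018, 11, 13)))  # log_data/2018/11/2018-11-13-events.json
```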

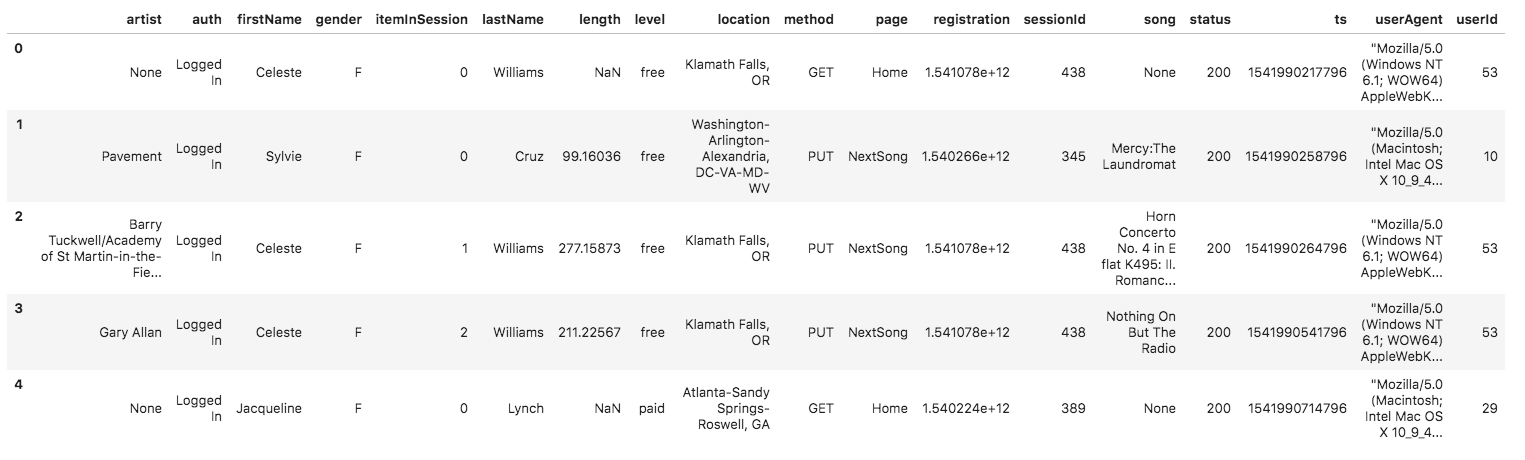

And below is an example of what the data in a log file looks like.

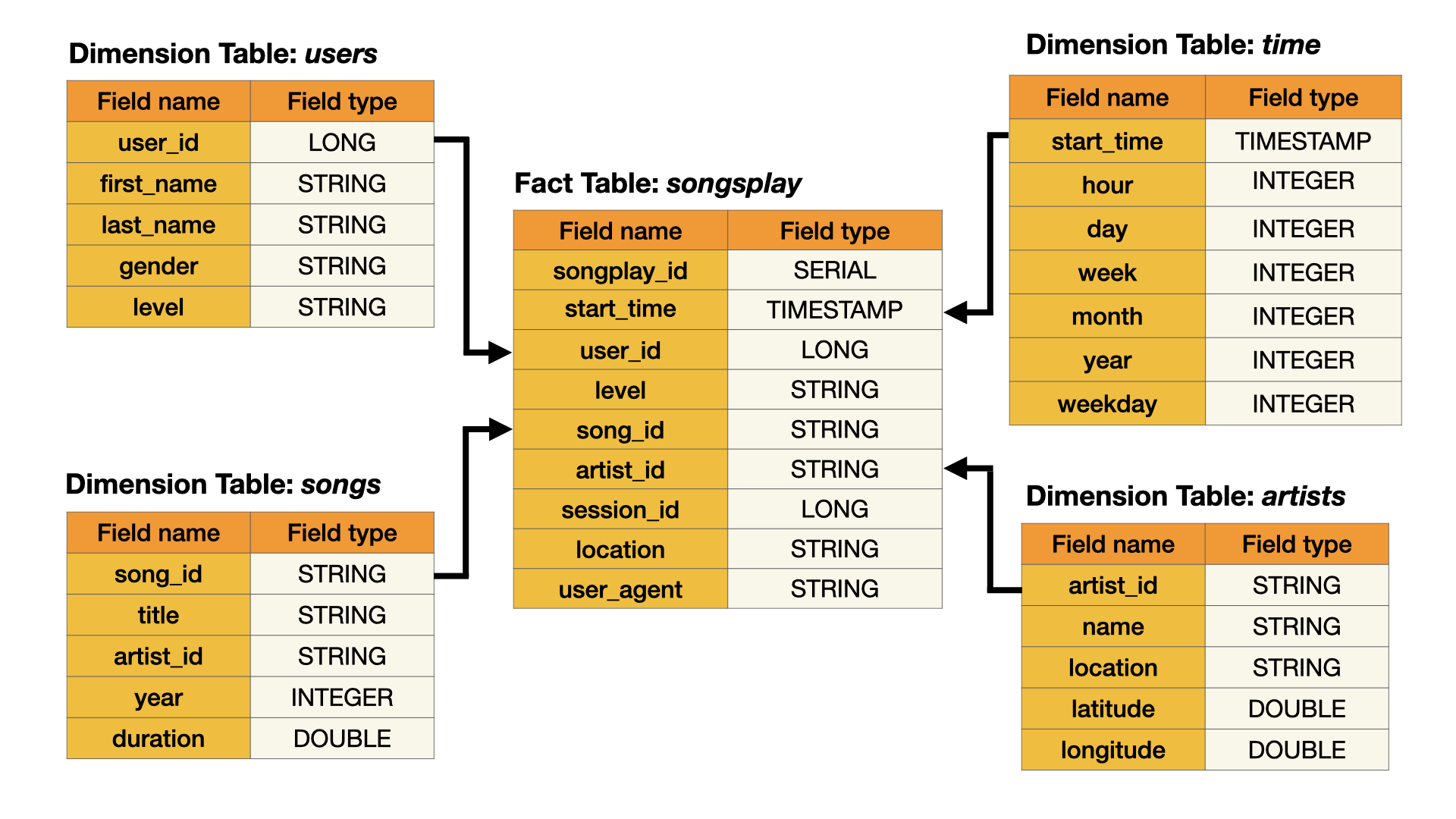

You will use a star schema as the data model for this ETL pipeline. This model includes one fact table and four dimension tables, which enable fast read queries.

The ERD of the data model is given below.

Clone this repository

git clone https://github.com/najuzilu/DL-Spark.git

- AWS

- AWS EMR

- AWS S3

- Use `dl_example.cfg` to create and populate a `dl.cfg` file with the AWS Access Key and Secret Key fields.

- Run `./create_cluster.sh` to create the EMR cluster. Note: Submit `yes` when asked "Are you sure you want to continue connecting (yes/no/[fingerprint])?"

- Copy the last line printed during the execution of the previous step to connect to the EMR cluster master node. The command syntax will be `aws emr ssh --cluster-id <cluster-id> --key-pair-file <aws-pem-key-file-path>.pem`.

- Once you're connected to the EMR cluster, execute the ETL pipeline through Spark: `spark-submit --master yarn ./etl.py`

- Lastly, to avoid unexpected costs, you will terminate the cluster and delete the S3 bucket. Simply run `./terminate_cluster.sh` in your terminal.
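For the configuration step at the top of the list above, the following sketch shows one way a script could read the two credential fields from an INI-style file. The `[AWS]` section name and the key names `AWS_ACCESS_KEY_ID` and `AWS_SECRET_ACCESS_KEY` are assumptions made for illustration; the actual field names are whatever `dl_example.cfg` defines.

```python
import configparser

# Hypothetical file contents; the real field names are defined in dl_example.cfg.
EXAMPLE_CFG = """
[AWS]
AWS_ACCESS_KEY_ID = <your-access-key-id>
AWS_SECRET_ACCESS_KEY = <your-secret-access-key>
"""

CREDENTIAL_KEYS = ("AWS_ACCESS_KEY_ID", "AWS_SECRET_ACCESS_KEY")


def load_aws_credentials(cfg_text: str) -> dict:
    """Parse INI-style text and return the two credential fields."""
    # Interpolation is disabled so a '%' inside a secret is read literally.
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(cfg_text)
    section = parser["AWS"]
    return {key: section[key] for key in CREDENTIAL_KEYS}


def export_credentials(credentials: dict, env: dict) -> None:
    """Copy the credentials into an environment-like mapping."""
    env.update(credentials)


# Demo against a plain dict; a real script would pass os.environ instead.
demo_env = {}
export_credentials(load_aws_credentials(EXAMPLE_CFG), demo_env)
print(sorted(demo_env))  # ['AWS_ACCESS_KEY_ID', 'AWS_SECRET_ACCESS_KEY']
```

Reading the keys from a file that stays out of version control, rather than hard-coding them in the script, keeps the credentials out of the repository history.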

Yuna Luzi - @najuzilu

Distributed under the MIT License. See LICENSE for more information.