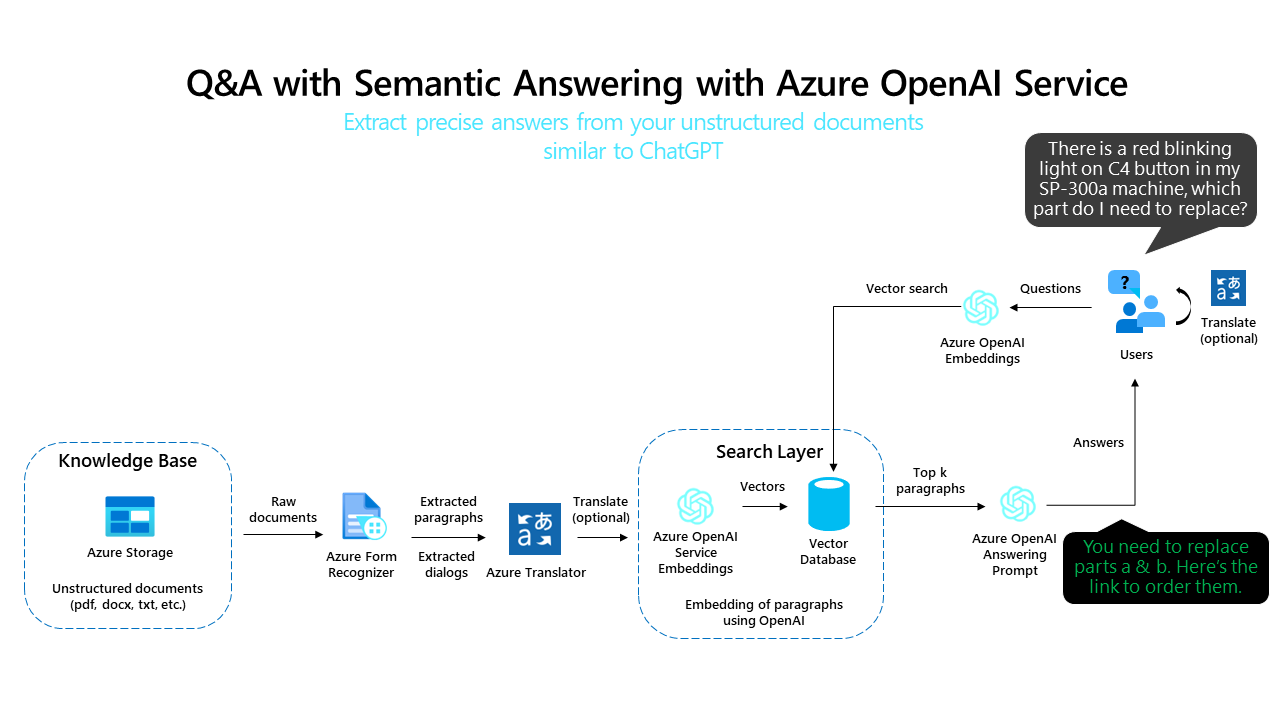

Azure OpenAI Embeddings QnA

A simple web application for OpenAI-enabled document search. This repo uses Azure OpenAI Service to create embedding vectors from documents. To answer a user's question, it retrieves the most relevant document and then uses GPT-3 to extract the matching answer.
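The retrieval step described above can be illustrated with a minimal sketch. It assumes documents have already been turned into embedding vectors; the tiny three-dimensional vectors, the function names, and the use of cosine similarity are illustrative assumptions, not code taken from this repo.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def most_relevant(query_vector, documents):
    """Return the text of the document whose vector is closest to the query.

    `documents` is a list of (text, vector) pairs (hypothetical structure).
    """
    best_text, _ = max(
        documents, key=lambda doc: cosine_similarity(query_vector, doc[1])
    )
    return best_text


# Toy vectors standing in for real embeddings.
docs = [
    ("Azure Cognitive Search overview", [0.9, 0.1, 0.0]),
    ("Redis Stack setup", [0.1, 0.9, 0.2]),
]
query = [0.8, 0.2, 0.1]
best = most_relevant(query, docs)
```

In a real deployment the vectors would come from the embeddings model and the nearest-neighbor search would be delegated to the vector store rather than computed in application code.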

Learning More about Enterprise QnA

Enterprise QnA is built on a pattern the AI community calls "Retrieval-Augmented Generation" (RAG). In addition to this repository having a reference architecture on how to implement this pattern on Azure, here are resources to familiarize yourself with the concepts in RAG, and samples to learn each underlying product's APIs:

| Resource | Links | Purpose | Highlights |

|---|---|---|---|

| Reference Architecture | GitHub (This Repo) | Starter template for enterprise development. - Easily deployable reference architecture following best practices. | - Frontend is Azure OpenAI chat orchestrated with Langchain. - Composes Form Recognizer, Azure Search, Redis in an end-to-end design. - Supports working with Azure Search, Redis. |

| Educational Blog Post | Microsoft Blog, GitHub | Learn about the building blocks in a RAG solution. | - Introduction to the key elements in a RAG architecture. - Understand the role of vector search in RAG scenarios. - See how Azure Search supports this pattern. - Understand the role of prompts and orchestrator like Langchain. |

| Azure OpenAI API Sample | GitHub | Get started with Azure OpenAI features. | - Sample code to make an interactive chat client as a web page. - Helps you get started with latest Azure OpenAI APIs |

| Business Process Automation Samples | GitHub | Showcase multiple BPA scenarios implemented with Form Recognizer and Azure services. | - Consolidates in one repository multiple samples related to BPA and document understanding. - Includes an end to end app, GUI to create and customize a pipeline to integrate multiple Azure Cognitive services. - Samples include document intelligence and search. |

IMPORTANT NOTE (OpenAI generated)

We have made some changes to the data format in the latest update of this repo.

The new format is more efficient and compatible with the latest standards and libraries. However, we understand that some of you may have existing applications that rely on the previous format and may not be able to migrate to the new one immediately.

Therefore, we have provided a way for you to continue using the previous format in a running application. All you need to do is to set your web application tag to fruocco/oai-embeddings:2023-03-27_25. This will ensure that your application will use the data format that was available on March 27, 2023. We strongly recommend that you update your applications to use the new format as soon as possible.

If you want to move to the new format, please go to:

- "Add Document" -> "Add documents in Batch" and click on "Convert all files and add embeddings" to reprocess your documents.

Use the Repo with Chat based deployment (gpt-35-turbo or gpt-4-32k or gpt-4)

By default, the repo uses an Instruction based model (like text-davinci-003) for QnA and Chat experience.

If you want to use a Chat based deployment (gpt-35-turbo or gpt-4-32k or gpt-4), please change the environment variables as described in the Environment variables section.
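As a rough illustration of why the deployment type matters: instruction models generally take a single prompt string, while chat models take a list of role-tagged messages. The sketch below shows that difference only. The function name, the prompt wording, and the returned dictionary shape are assumptions made for illustration, not the repo's actual implementation.

```python
def build_model_input(question, context, deployment_type="Text"):
    """Shape the model input according to OPENAI_DEPLOYMENT_TYPE (hypothetical helper)."""
    instructions = "Answer the question using only the provided context."
    if deployment_type == "Text":
        # Instruction models: one flat prompt string.
        prompt = f"{instructions}\n\nContext: {context}\n\nQuestion: {question}\nAnswer:"
        return {"prompt": prompt}
    if deployment_type == "Chat":
        # Chat models: a list of role-tagged messages.
        return {
            "messages": [
                {"role": "system", "content": instructions},
                {"role": "user", "content": f"Context: {context}\n\nQuestion: {question}"},
            ]
        }
    raise ValueError(f"Unsupported deployment type: {deployment_type!r}")


text_input = build_model_input("What is ACS?", "ACS is a search service.", "Text")
chat_input = build_model_input("What is ACS?", "ACS is a search service.", "Chat")
```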

Running this repo

You have multiple options to run the code:

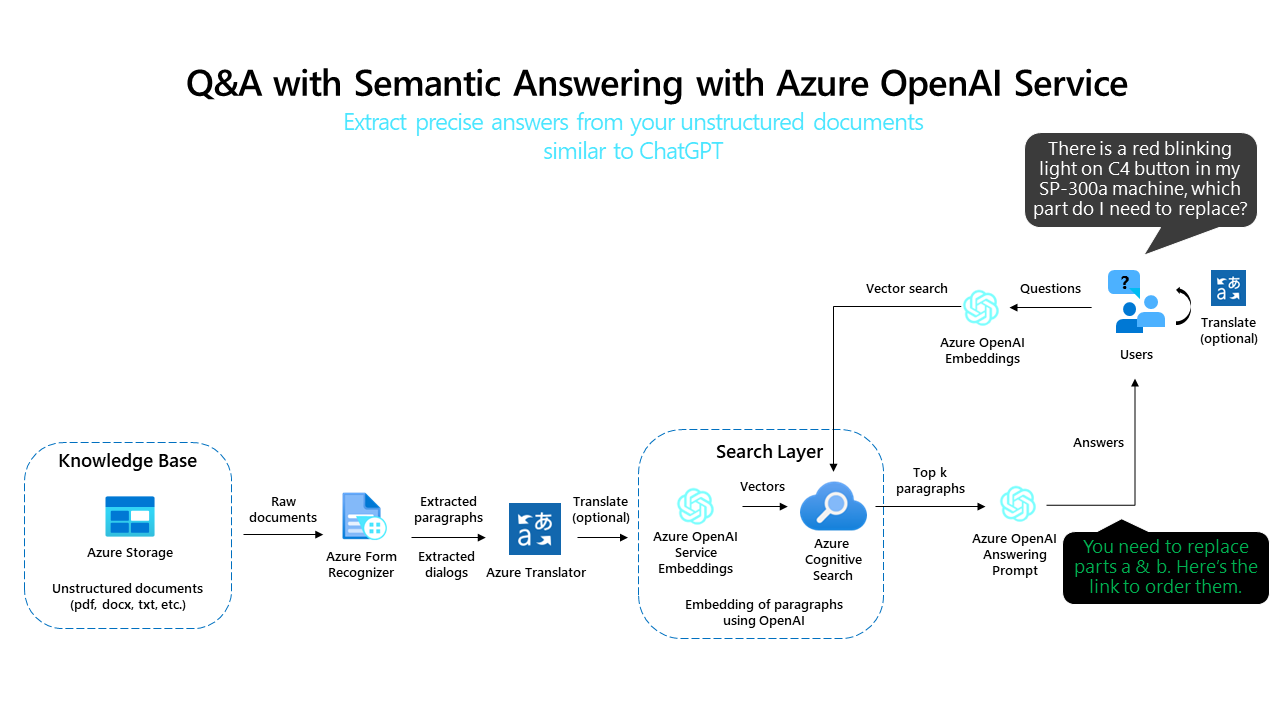

- Deploy on Azure (WebApp + Batch Processing) with Azure Cognitive Search

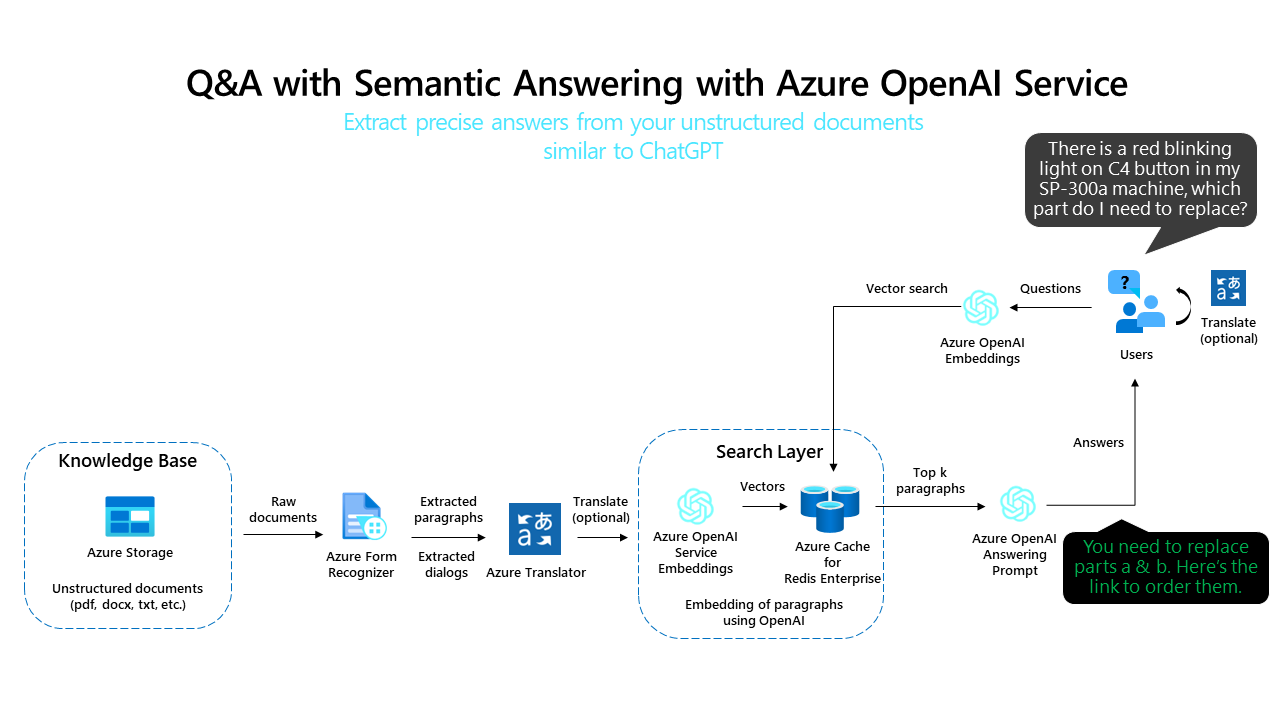

- Deploy on Azure (WebApp + Azure Cache for Redis Enterprise + Batch Processing)

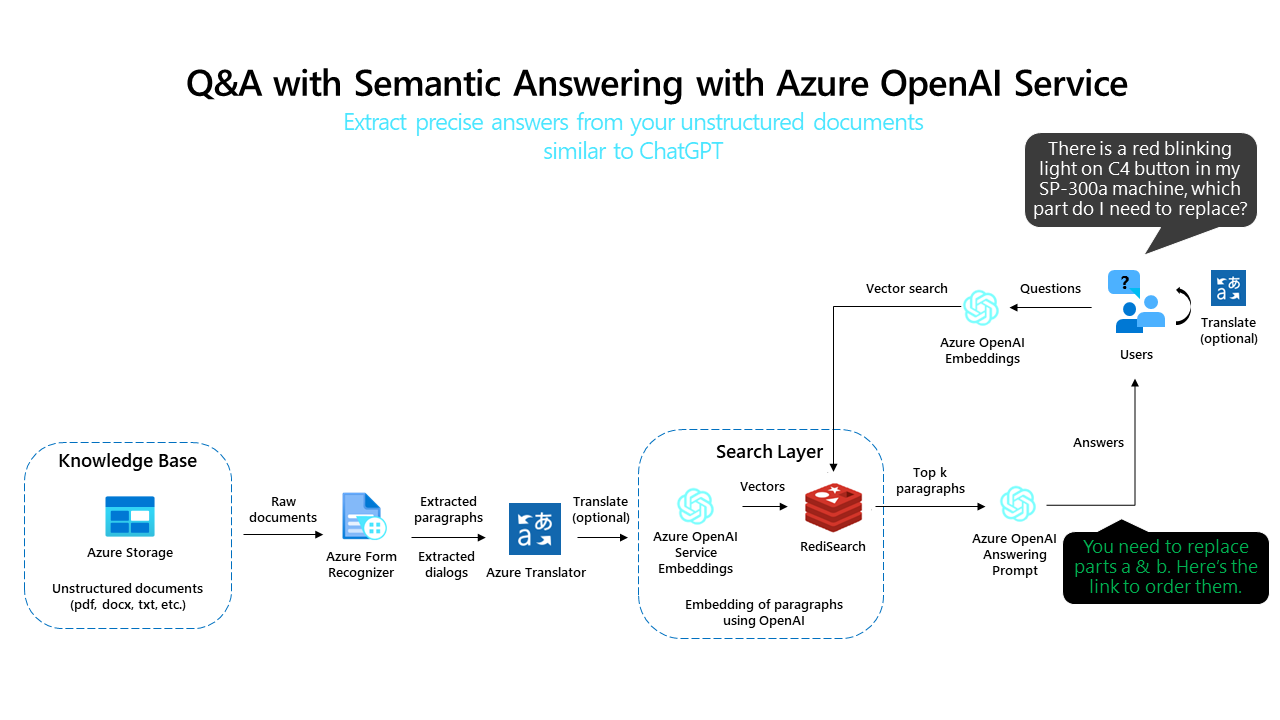

- Deploy on Azure (WebApp + Redis Stack + Batch Processing)

- Run everything locally in Docker (WebApp + Redis Stack + Batch Processing)

- Run everything locally in Python with Conda (WebApp only)

- Run everything locally in Python with venv

- Run WebApp locally in Docker against an existing Redis deployment

Deploy on Azure (WebApp + Batch Processing) with Azure Cognitive Search

Click on the Deploy to Azure button and configure your settings in the Azure Portal as described in the Environment variables section.

Please be aware that you need:

- an existing Azure OpenAI resource with model deployments (instruction models, e.g. text-davinci-003, and embedding models, e.g. text-embedding-ada-002)

Signing up for Vector Search Private Preview in Azure Cognitive Search

Azure Cognitive Search supports searching using pure vectors, pure text, or in hybrid mode where both are combined. For the vector-based cases, you'll need to sign up for Vector Search Private Preview. To sign up, please fill in this form: https://aka.ms/VectorSearchSignUp.

Preview functionality is provided under Supplemental Terms of Use, without a service level agreement, and isn't recommended for production workloads.

Deploy on Azure (WebApp + Azure Cache for Redis Enterprise + Batch Processing)

Click on the Deploy to Azure button to automatically deploy a template on Azure with the resources needed to run this example. This option will provision an instance of Azure Cache for Redis with RediSearch installed to store vectors and perform the similarity search.

Please be aware that you still need:

- an existing Azure OpenAI resource with model deployments (instruction models, e.g. text-davinci-003, and embedding models, e.g. text-embedding-ada-002)

- an existing Form Recognizer Resource

- an existing Translator Resource

- Azure marketplace access. (Azure Cache for Redis Enterprise uses the marketplace for IP billing)

You will add the endpoint and access key information for these resources when deploying the template.

Deploy on Azure (WebApp + Redis Stack + Batch Processing)

Click on the Deploy to Azure button and configure your settings in the Azure Portal as described in the Environment variables section.

Please be aware that you need:

- an existing Azure OpenAI resource with model deployments (instruction models, e.g. text-davinci-003, and embedding models, e.g. text-embedding-ada-002)

- an existing Form Recognizer Resource (OPTIONAL - if you want to extract text out of documents)

- an existing Translator Resource (OPTIONAL - if you want to translate documents)

Run everything locally in Docker (WebApp + Redis Stack + Batch Processing)

First, clone the repo:

git clone https://github.com/ruoccofabrizio/azure-open-ai-embeddings-qna

cd azure-open-ai-embeddings-qna

Next, configure your .env as described in Environment variables:

cp .env.template .env

vi .env # or use whatever editor you feel comfortable with

Finally, run the application:

docker compose up

Open your browser at http://localhost:8080

This will spin up three Docker containers:

- The WebApp itself

- Redis Stack for storing the embeddings

- Batch Processing Azure Function

NOTE: The Batch Processing Azure Function uses an Azure Storage Account for queuing the documents to process. Please create a Queue named "doc-processing" in the account used for the "AzureWebJobsStorage" env setting.
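The queue can be created in the Azure Portal. As an alternative, here is a minimal sketch that creates it with the azure-storage-queue Python package. It assumes that package is installed and that the connection string is the same value used for AzureWebJobsStorage; the helper name `ensure_queue` is hypothetical.

```python
QUEUE_NAME = "doc-processing"


def ensure_queue(connection_string, queue_name=QUEUE_NAME):
    """Create the queue if it does not exist. Returns True if it was created."""
    # Imported lazily so this module loads even without the Azure SDK installed.
    from azure.core.exceptions import ResourceExistsError
    from azure.storage.queue import QueueClient

    client = QueueClient.from_connection_string(connection_string, queue_name)
    try:
        client.create_queue()
        return True
    except ResourceExistsError:
        # The queue is already there, which is fine for this purpose.
        return False


# Example call (requires a real storage connection string):
# import os
# ensure_queue(os.environ["AzureWebJobsStorage"])
```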

Run everything locally in Python with Conda (WebApp only)

This requires Redis running somewhere and expects that you've set up .env as described above. In this case, point REDIS_ADDRESS to your Redis deployment.
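To make the Redis settings concrete, here is a small sketch of how REDIS_PROTOCOL, REDIS_ADDRESS, REDIS_PORT, and REDIS_PASSWORD could be combined into a standard Redis connection URL. The helper name is hypothetical, and whether the application assembles its URL exactly this way is an assumption.

```python
from urllib.parse import quote


def build_redis_url(address, port=6379, password=None, protocol="redis://"):
    """Compose a Redis URL such as redis://:password@host:6379 (hypothetical helper)."""
    # Percent-encode the password so special characters do not break the URL.
    auth = f":{quote(password, safe='')}@" if password else ""
    return f"{protocol}{auth}{address}:{port}"


local_url = build_redis_url("localhost")
secured_url = build_redis_url("localhost", password="redis-stack-password")
```

For a local Redis Stack container without a password, `local_url` is the value a Redis client would connect to.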

You can run a local Redis instance via:

docker run -p 6379:6379 redis/redis-stack-server:latest

You can run a local Batch Processing Azure Function:

docker run -p 7071:80 fruocco/oai-batch:latest

Create conda environment for Python:

conda env create -f code/environment.yml

conda activate openai-qna-env

Configure your .env as described in Environment variables

Run WebApp:

cd code

streamlit run OpenAI_Queries.py

Run everything locally in Python with venv

This requires Redis running somewhere and expects that you've set up .env as described above. In this case, point REDIS_ADDRESS to your Redis deployment.

You can run a local Redis instance via:

docker run -p 6379:6379 redis/redis-stack-server:latest

You can run a local Batch Processing Azure Function:

docker run -p 7071:80 fruocco/oai-batch:latest

Please ensure you have Python 3.9+ installed.

Create venv environment for Python:

python -m venv .venv

.venv\Scripts\activate # Windows; on Linux/macOS use: source .venv/bin/activate

Install the pip requirements:

pip install -r code\requirements.txt

Configure your .env as described in Environment variables

Run the WebApp

cd code

streamlit run OpenAI_Queries.py

Run WebApp locally in Docker against an existing Redis deployment

Option 1 - Run the prebuilt Docker image

Configure your .env as described in Environment variables

Then run:

docker run --env-file .env -p 8080:80 fruocco/oai-embeddings:latest

Option 2 - Build the Docker image yourself

Configure your .env as described in Environment variables

docker build . -f Dockerfile -t your_docker_registry/your_docker_image:your_tag

docker run --env-file .env -p 8080:80 your_docker_registry/your_docker_image:your_tag

Note: You can use

- WebApp.Dockerfile to build the Web Application

- BatchProcess.Dockerfile to build the Azure Function for Batch Processing

Use the QnA API from the backend

You can use a QnA API on your data exposed by the Azure Function for Batch Processing.

POST https://YOUR_BATCH_PROCESS_AZURE_FUNCTION_URL/api/apiQnA

Body:

question: str

history: (str,str) -- OPTIONAL

custom_prompt: str -- OPTIONAL

custom_temperature: float -- OPTIONAL
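The body fields listed above can be assembled with a small helper that sends only the optional fields the caller actually sets. This is a sketch: the function name `build_qna_payload` is hypothetical, and it assumes the API accepts a JSON body in which omitted optional fields fall back to server-side defaults.

```python
def build_qna_payload(question, history=None, custom_prompt=None, custom_temperature=None):
    """Build the JSON body for the QnA API, omitting unset optional fields."""
    payload = {"question": question}
    if history:
        # history is a sequence of (question, answer) pairs from earlier turns.
        payload["history"] = list(history)
    if custom_prompt is not None:
        payload["custom_prompt"] = custom_prompt
    if custom_temperature is not None:
        # Compare against None so a temperature of 0.0 is still sent.
        payload["custom_temperature"] = float(custom_temperature)
    return payload


qna_body = build_qna_payload("What is ACS?")
chat_body = build_qna_payload(
    "can I use python SDK?",
    history=[("what's ACS?", "ACS stands for Azure Cognitive Search.")],
    custom_temperature=0,
)
```

The resulting dictionary can be passed as the `json=` argument of `requests.post`.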

Return:

{'context': 'Introduction to Azure Cognitive Search - Azure Cognitive Search '

'(formerly known as "Azure Search") is a cloud search service that '

'gives developers infrastructure, APIs, and tools for building a '

'rich search experience over private, heterogeneous content in '

'web, mobile, and enterprise applications...'

'...'

'...',

'question': 'What is ACS?',

'response': 'ACS stands for Azure Cognitive Search, which is a cloud search service'

'that provides infrastructure, APIs, and tools for building a rich search experience'

'over private, heterogeneous content in web, mobile, and enterprise applications...'

'...'

'...',

'sources': 'https://learn.microsoft.com/en-us/azure/search/search-what-is-azure-search'}

Call the API with no history for QnA mode

import requests

r = requests.post('https://YOUR_BATCH_PROCESS_AZURE_FUNCTION_URL/api/apiQnA', json={

    'question': 'What is the capital of Italy?'

})

Call the API with history for Chat mode

r = requests.post('https://YOUR_BATCH_PROCESS_AZURE_FUNCTION_URL/api/apiQnA', json={

'question': 'can I use python SDK?',

'history': [

("what's ACS?",

'ACS stands for Azure Cognitive Search, which is a cloud search service that provides infrastructure, APIs, and tools for building a rich search experience over private, heterogeneous content in web, mobile, and enterprise applications. It includes a search engine for full-text search, rich indexing with lexical analysis and AI enrichment for content extraction and transformation, rich query syntax for text search, fuzzy search, autocomplete, geo-search, and more. ACS can be created, loaded, and queried using the portal, REST API, .NET SDK, or another SDK. It also includes data integration at the indexing layer, AI and machine learning integration with Azure Cognitive Services, and security integration with Azure Active Directory and Azure Private Link integration.'

)

]

})

Environment variables

Here is the explanation of the parameters:

| App Setting | Value | Note |

|---|---|---|

| OPENAI_ENGINE | text-davinci-003 | Engine deployed in your Azure OpenAI resource. E.g. Instruction based model: text-davinci-003 or Chat based model: gpt-35-turbo or gpt-4-32k or gpt-4. Please use the deployment name and not the model name. |

| OPENAI_DEPLOYMENT_TYPE | Text | Text for Instruction engines (text-davinci-003), Chat for Chat based deployment (gpt-35-turbo or gpt-4-32k or gpt-4) |

| OPENAI_EMBEDDINGS_ENGINE_DOC | text-embedding-ada-002 | Embedding engine for documents deployed in your Azure OpenAI resource |

| OPENAI_EMBEDDINGS_ENGINE_QUERY | text-embedding-ada-002 | Embedding engine for query deployed in your Azure OpenAI resource |

| OPENAI_API_BASE | https://YOUR_AZURE_OPENAI_RESOURCE.openai.azure.com/ | Your Azure OpenAI resource endpoint. Get it in the Azure Portal |

| OPENAI_API_KEY | YOUR_AZURE_OPENAI_KEY | Your Azure OpenAI API Key. Get it in the Azure Portal |

| OPENAI_TEMPERATURE | 0.7 | Azure OpenAI Temperature |

| OPENAI_MAX_TOKENS | -1 | Azure OpenAI Max Tokens |

| VECTOR_STORE_TYPE | AzureSearch | Vector Store Type. Use AzureSearch for Azure Cognitive Search, leave it blank for Redis or Azure Cache for Redis Enterprise |

| AZURE_SEARCH_SERVICE_NAME | YOUR_AZURE_SEARCH_SERVICE_URL | Your Azure Cognitive Search service name. Get it in the Azure Portal |

| AZURE_SEARCH_ADMIN_KEY | AZURE_SEARCH_ADMIN_KEY | Your Azure Cognitive Search Admin key. Get it in the Azure Portal |

| REDIS_ADDRESS | api | URL for Redis Stack: "api" for docker compose |

| REDIS_PORT | 6379 | Port for Redis |

| REDIS_PASSWORD | redis-stack-password | OPTIONAL - Password for your Redis Stack |

| REDIS_ARGS | --requirepass redis-stack-password | OPTIONAL - Arguments passed to Redis Stack; here they set the password for your Redis Stack |

| REDIS_PROTOCOL | redis:// | |

| CHUNK_SIZE | 500 | OPTIONAL: Chunk size for splitting long documents in multiple subdocs. Default value: 500 |

| CHUNK_OVERLAP | 100 | OPTIONAL: Overlap between chunks for document splitting. Default: 100 |

| CONVERT_ADD_EMBEDDINGS_URL | http://batch/api/BatchStartProcessing | URL for Batch processing Function: "http://batch/api/BatchStartProcessing" for docker compose |

| AzureWebJobsStorage | AZURE_BLOB_STORAGE_CONNECTION_STRING FOR_AZURE_FUNCTION_EXECUTION | Azure Blob Storage Connection string for Azure Function - Batch Processing |
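To illustrate how CHUNK_SIZE and CHUNK_OVERLAP interact, here is a minimal splitting sketch. It counts characters for simplicity; the unit the repo actually uses (characters or tokens) and its real splitter are not shown in this document, so treat the function below as an illustrative assumption.

```python
def split_text(text, chunk_size=500, chunk_overlap=100):
    """Split text into chunks of at most chunk_size, each sharing chunk_overlap with the previous one."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be in [0, chunk_size)")
    step = chunk_size - chunk_overlap  # how far each new chunk advances
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last chunk already reaches the end of the text
    return chunks


example_chunks = split_text("abcdefghij", chunk_size=4, chunk_overlap=1)
```

With the defaults of 500 and 100, each chunk starts 400 units after the previous one, so neighboring chunks share 100 units of context.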

Optional parameters for additional features (e.g. document text extraction with OCR):

| App Setting | Value | Note |

|---|---|---|

| BLOB_ACCOUNT_NAME | YOUR_AZURE_BLOB_STORAGE_ACCOUNT_NAME | OPTIONAL - Get it in the Azure Portal if you want to use the document extraction feature |

| BLOB_ACCOUNT_KEY | YOUR_AZURE_BLOB_STORAGE_ACCOUNT_KEY | OPTIONAL - Get it in the Azure Portal if you want to use the document extraction feature |

| BLOB_CONTAINER_NAME | YOUR_AZURE_BLOB_STORAGE_CONTAINER_NAME | OPTIONAL - Get it in the Azure Portal if you want to use document extraction feature |

| FORM_RECOGNIZER_ENDPOINT | YOUR_AZURE_FORM_RECOGNIZER_ENDPOINT | OPTIONAL - Get it in the Azure Portal if you want to use document extraction feature |

| FORM_RECOGNIZER_KEY | YOUR_AZURE_FORM_RECOGNIZER_KEY | OPTIONAL - Get it in the Azure Portal if you want to use document extraction feature |

| PAGES_PER_EMBEDDINGS | 2 | OPTIONAL - Number of pages for embeddings creation. Keep in mind you should have fewer than 3K tokens for each embedding. Default: a new embedding is created every 2 pages. |

| TRANSLATE_ENDPOINT | YOUR_AZURE_TRANSLATE_ENDPOINT | OPTIONAL - Get it in the Azure Portal if you want to use translation feature |

| TRANSLATE_KEY | YOUR_TRANSLATE_KEY | OPTIONAL - Get it in the Azure Portal if you want to use translation feature |

| TRANSLATE_REGION | YOUR_TRANSLATE_REGION | OPTIONAL - Get it in the Azure Portal if you want to use translation feature |

| VNET_DEPLOYMENT | false | Boolean variable to set "true" if you want to deploy the solution in a VNET. Please check your Azure Form Recognizer and Azure Translator endpoints as well. |
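The PAGES_PER_EMBEDDINGS setting above groups extracted pages before an embedding is created. A minimal sketch of that grouping follows; it assumes pages arrive as a list of strings, and the function name and the newline used to join pages are illustrative choices rather than the repo's implementation.

```python
def group_pages(pages, pages_per_embedding=2):
    """Join consecutive pages into groups of pages_per_embedding; each group gets one embedding."""
    if pages_per_embedding < 1:
        raise ValueError("pages_per_embedding must be at least 1")
    return [
        "\n".join(pages[i:i + pages_per_embedding])
        for i in range(0, len(pages), pages_per_embedding)
    ]


groups = group_pages(["page 1 text", "page 2 text", "page 3 text"])
```

With the default of 2, a three-page document yields two groups: pages 1 and 2 together, and page 3 on its own.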

DISCLAIMER

This presentation, demonstration, and demonstration model are for informational purposes only and (1) are not subject to SOC 1 and SOC 2 compliance audits, and (2) are not designed, intended or made available as a medical device(s) or as a substitute for professional medical advice, diagnosis, treatment or judgment. Microsoft makes no warranties, express or implied, in this presentation, demonstration, and demonstration model. Nothing in this presentation, demonstration, or demonstration model modifies any of the terms and conditions of Microsoft’s written and signed agreements. This is not an offer and applicable terms and the information provided are subject to revision and may be changed at any time by Microsoft.

This presentation, demonstration, and demonstration model do not give you or your organization any license to any patents, trademarks, copyrights, or other intellectual property covering the subject matter in this presentation, demonstration, and demonstration model.

The information contained in this presentation, demonstration and demonstration model represents the current view of Microsoft on the issues discussed as of the date of presentation and/or demonstration, for the duration of your access to the demonstration model. Because Microsoft must respond to changing market conditions, it should not be interpreted to be a commitment on the part of Microsoft, and Microsoft cannot guarantee the accuracy of any information presented after the date of presentation and/or demonstration and for the duration of your access to the demonstration model.

No Microsoft technology, nor any of its component technologies, including the demonstration model, is intended or made available as a substitute for the professional advice, opinion, or judgment of (1) a certified financial services professional, or (2) a certified medical professional. Partners or customers are responsible for ensuring the regulatory compliance of any solution they build using Microsoft technologies.