This repository is the official implementation of GeoSynth [CVPRW, EarthVision, 2024]. GeoSynth is a suite of models for synthesizing satellite images with global style and image-driven layout control.

Models available in 🤗 HuggingFace diffusers:

All model `ckpt` files are available in the Model Zoo.

- Update Gradio demo
- Release Location-Aware GeoSynth Models to 🤗 HuggingFace
- Release PyTorch `ckpt` files for all models
- Release GeoSynth Models to 🤗 HuggingFace

Example inference using 🤗 HuggingFace pipeline:

from diffusers import StableDiffusionControlNetPipeline, ControlNetModel

import torch

from PIL import Image

img = Image.open("osm_tile_18_42048_101323.jpeg")

controlnet = ControlNetModel.from_pretrained("MVRL/GeoSynth-OSM")

pipe = StableDiffusionControlNetPipeline.from_pretrained("stabilityai/stable-diffusion-2-1-base", controlnet=controlnet)

pipe = pipe.to("cuda:0")

# generate image

generator = torch.manual_seed(10345340)

image = pipe(

"Satellite image features a city neighborhood",

generator=generator,

image=img,

).images[0]

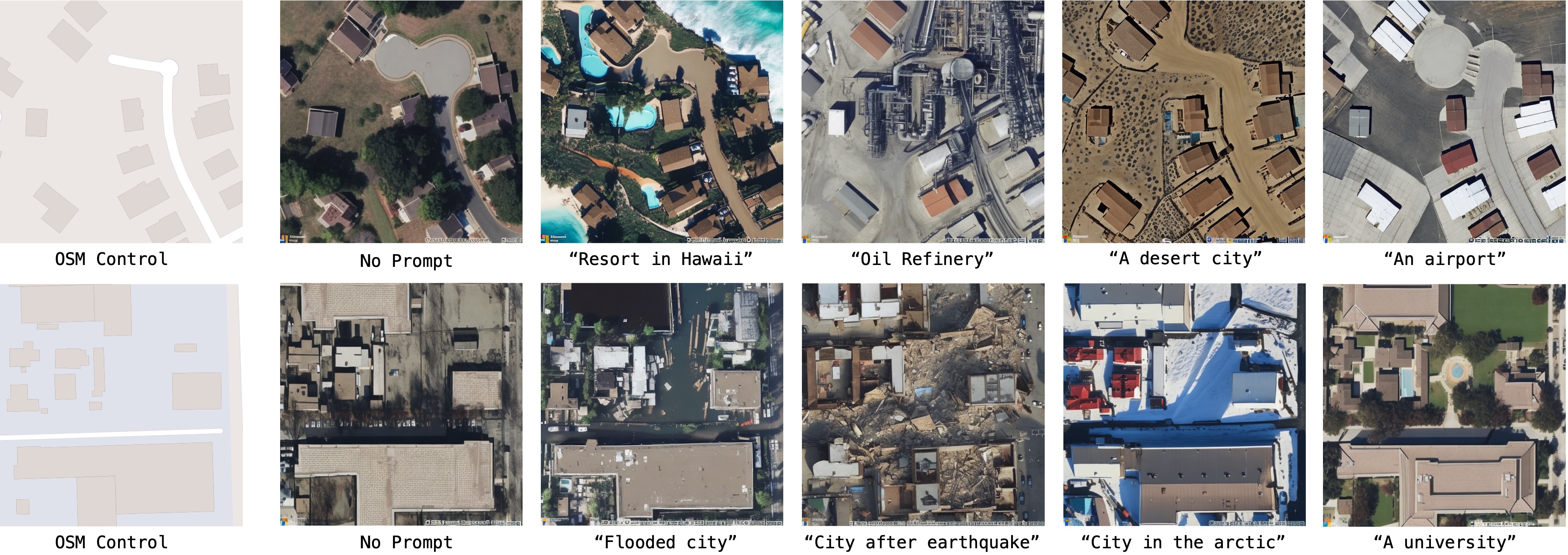

image.save("generated_city.jpg")Our model is able to synthesize based on high-level geography of a region:

Look at train.md for details on setting up the environment and training models on your own data.

Download GeoSynth models from the given links below:

| Control | Location | Download URL |
|---|---|---|
| - | ❌ | Link |
| OSM | ❌ | Link |
| SAM | ❌ | Link |
| Canny | ❌ | Link |
| - | ✅ | Link |
| OSM | ✅ | Link |
| SAM | ✅ | Link |
| Canny | ✅ | Link |
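When working with the location-aware variants, it can help to know roughly where an OSM tile sits on the globe. The sketch below is not part of GeoSynth. It assumes that a tile filename such as `osm_tile_18_42048_101323.jpeg` encodes `<zoom>_<x>_<y>` in the standard slippy-map tiling scheme, which this README does not confirm. The helper names `parse_tile_name` and `tile_to_latlon` are hypothetical, and how the location-aware models actually consume coordinates is not shown here.

```python
import math
import re
from typing import Tuple


def parse_tile_name(filename: str) -> Tuple[int, int, int]:
    """Extract (zoom, x, y) from a tile filename.

    ASSUMPTION: the name ends in "_<zoom>_<x>_<y>.<ext>".
    """
    match = re.search(r"_(\d+)_(\d+)_(\d+)\.\w+$", filename)
    if match is None:
        raise ValueError(f"unrecognized tile filename: {filename}")
    zoom, x, y = (int(group) for group in match.groups())
    return zoom, x, y


def tile_to_latlon(zoom: int, x: int, y: int) -> Tuple[float, float]:
    """Return (lat, lon) in degrees for the north-west corner of a slippy-map tile."""
    n = 2 ** zoom  # number of tiles along each axis at this zoom level
    lon = x / n * 360.0 - 180.0
    # Invert the Web Mercator projection to recover latitude.
    lat = math.degrees(math.atan(math.sinh(math.pi * (1 - 2 * y / n))))
    return lat, lon


zoom, x, y = parse_tile_name("osm_tile_18_42048_101323.jpeg")
lat, lon = tile_to_latlon(zoom, x, y)
print(f"zoom={zoom} lat={lat:.4f} lon={lon:.4f}")
```

If the filename assumption holds, the coordinates are those of the tile's north-west corner, not its center.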

```bibtex
@inproceedings{sastry2024geosynth,
  title={GeoSynth: Contextually-Aware High-Resolution Satellite Image Synthesis},
  author={Sastry, Srikumar and Khanal, Subash and Dhakal, Aayush and Jacobs, Nathan},
  booktitle={IEEE/ISPRS Workshop: Large Scale Computer Vision for Remote Sensing (EARTHVISION)},
  year={2024}
}
```

Check out our lab website for other interesting works on geospatial understanding and mapping: