Copyright(c) 2014-2016 Milo Yip (miloyip@gmail.com)

This benchmark evaluates the conformance and performance of 41 open-source C/C++ libraries with JSON parsing/generation capabilities. Performance means speed, memory, and code size.

Performance matters only if the results are correct, so this benchmark also tests the conformance of each library to the JSON standards (RFC7159, ECMA-404).

Performance of JSON parsing/generation may be critical for server-side applications, mobile/embedded systems, or any application that processes large or numerous JSON documents. Native (C/C++) libraries are important because they should provide the best possible performance, and other languages may create bindings to them.

The results show that several performance measurements vary widely among libraries. For example, parsing time can differ by a factor of more than 100. These differences come from many factors, including design and implementation details such as memory allocation strategies, the design of the variant type for JSON, and number-string conversions.

This benchmark may be useful for optimizing existing libraries and developing new, high-performance libraries.

The original author (Milo Yip) of this benchmark is also the primary author of RapidJSON.

Although this benchmark was developed to be as objective and fair as possible, every benchmark has its drawbacks and is limited to particular testing procedures, datasets, and platforms. In addition, this benchmark does not compare additional features that a library may support, nor the user-friendliness of APIs, security, cross-platform support, etc. The author encourages users to run benchmarks with their own data sets and platforms.

| Benchmark | Description |
|---|---|
| Parse Validation | Use JSON_checker test suite to test whether the library can identify valid and invalid JSONs. (fail01.json is excluded as it is relaxed in RFC7159. fail18.json is excluded as depth of JSON is not specified.) |
| Parse Double | 66 JSONs, each with a decimal value in an array, are parsed. The parsed double values are compared to the correct answer. |
| Parse String | 9 JSONs, each with a string value in an array, are parsed. The parsed strings are compared to the correct answer. |
| Roundtrip | 27 condensed JSONs are parsed and stringified. The results are compared to the original JSONs. |
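
As a rough illustration of the "Parse Double" idea, the sketch below parses a one-element JSON array and compares the result bit-for-bit with the expected value. `ParseSingleDouble` and `CheckParseDouble` are hypothetical names, and `std::strtod` only stands in for a library's number parser (it accepts inputs that JSON does not); the benchmark's own test code may look quite different.

```cpp
#include <cstddef>
#include <cstdlib>
#include <cstring>
#include <string>

// Hypothetical stand-in for a library's parser: reads the single number
// inside a one-element JSON array such as "[0.1]". Returns false on failure.
bool ParseSingleDouble(const std::string& json, double* out) {
    const std::size_t open = json.find('[');
    const std::size_t close = json.rfind(']');
    if (open == std::string::npos || close == std::string::npos || close <= open + 1)
        return false;
    const std::string number = json.substr(open + 1, close - open - 1);
    char* end = nullptr;
    *out = std::strtod(number.c_str(), &end);
    if (end == number.c_str()) return false;  // nothing was converted
    while (*end == ' ') ++end;                // allow trailing spaces before ']'
    return *end == '\0';
}

// True only if the parsed value has exactly the same bit pattern as `expected`,
// so that 0.0 and -0.0 (or values one ULP apart) are treated as different.
bool CheckParseDouble(const std::string& json, double expected) {
    double actual = 0.0;
    if (!ParseSingleDouble(json, &actual)) return false;
    return std::memcmp(&actual, &expected, sizeof(double)) == 0;
}
```

A conformance score could then be the fraction of test JSONs for which such a check returns true; that scoring rule is an assumption here, not something stated above.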

| Benchmark | Description |
|---|---|
| Parse | Parse in-memory JSON into DOM (tree structure). |
| Stringify | Serialize DOM into condensed JSON in memory. |
| Prettify | Serialize DOM into prettified (with indentation and new lines) JSON in memory. |
| Statistics | Traverse DOM and count the number of JSON types, total length of string, and total numbers of elements/members in array/objects. |
| Sax Round-trip | Parse in-memory JSON into events and use events to generate JSON in memory. |
| Sax Statistics | Parse in-memory JSON into events and use events to conduct the statistics. |
| Code size | Executable size in byte. (Currently only support jsonstat program, which calls "Parse" and "Statistics" to print out statistics of a JSON file.) |
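
To make the "Statistics" benchmark more concrete, here is a minimal sketch of a recursive DOM traversal that gathers such counts. The `Value` type, the `Stat` fields, and `CollectStat` are hypothetical and exist only for this illustration; each library has its own DOM type, and whether object keys count toward the string length is an assumption (this sketch counts string values only).

```cpp
#include <cstddef>
#include <string>
#include <vector>

enum class Type { Null, False, True, Number, String, Array, Object };

// Hypothetical minimal DOM node; real libraries use their own variant types.
struct Value {
    Type type = Type::Null;
    double number = 0.0;            // used when type == Number
    std::string str;                // used when type == String
    std::vector<Value> elements;    // used when type == Array
    std::vector<std::string> keys;  // used when type == Object
    std::vector<Value> values;      // used when type == Object (parallel to keys)
};

struct Stat {
    std::size_t objectCount = 0, arrayCount = 0, numberCount = 0, stringCount = 0;
    std::size_t trueCount = 0, falseCount = 0, nullCount = 0;
    std::size_t memberCount = 0;   // total members over all objects
    std::size_t elementCount = 0;  // total elements over all arrays
    std::size_t stringLength = 0;  // total length of string values (keys excluded)
};

// Depth-first traversal that updates the counters for every node.
void CollectStat(const Value& v, Stat& s) {
    switch (v.type) {
    case Type::Null:   ++s.nullCount; break;
    case Type::False:  ++s.falseCount; break;
    case Type::True:   ++s.trueCount; break;
    case Type::Number: ++s.numberCount; break;
    case Type::String:
        ++s.stringCount;
        s.stringLength += v.str.size();
        break;
    case Type::Array:
        ++s.arrayCount;
        s.elementCount += v.elements.size();
        for (const Value& e : v.elements) CollectStat(e, s);
        break;
    case Type::Object:
        ++s.objectCount;
        s.memberCount += v.values.size();
        for (const Value& m : v.values) CollectStat(m, s);
        break;
    }
}
```

Because every library should produce the same counts for the same input, comparing such statistics against a reference result is one way to check that a DOM was built correctly.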

All benchmarks contain the following measurements:

| Measurement | Description |
|---|---|
| Time | Duration in millisecond |
| Memory | Memory consumption in bytes for the result data structure. |
| MemoryPeak | Peak memory consumption in bytes throughout the parsing process. |
| AllocCount | Number of memory allocation (including malloc, realloc(), new et al.) |
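
The three memory measurements can be pictured with a small tracking allocator. This README does not describe how the benchmark actually hooks allocations, so the following is only one possible approach; `MemoryStat`, `TrackedMalloc`, and `TrackedFree` are hypothetical names.

```cpp
#include <cstddef>
#include <cstdlib>

struct MemoryStat {
    std::size_t current = 0;     // bytes currently allocated ("Memory")
    std::size_t peak = 0;        // highest value of `current` seen ("MemoryPeak")
    std::size_t allocCount = 0;  // number of allocation calls ("AllocCount")
};

// Size of the bookkeeping header placed before each block. Using the maximum
// fundamental alignment keeps the user pointer suitably aligned.
constexpr std::size_t kHeader = alignof(std::max_align_t);
static_assert(kHeader >= sizeof(std::size_t), "header must hold a size_t");

// Allocates `size` bytes and stores the size in the header so that
// TrackedFree can subtract it later.
void* TrackedMalloc(MemoryStat& stat, std::size_t size) {
    void* raw = std::malloc(kHeader + size);
    if (raw == nullptr) return nullptr;
    *static_cast<std::size_t*>(raw) = size;
    stat.current += size;
    if (stat.current > stat.peak) stat.peak = stat.current;
    ++stat.allocCount;
    return static_cast<char*>(raw) + kHeader;
}

// Releases a block obtained from TrackedMalloc and updates the counters.
void TrackedFree(MemoryStat& stat, void* p) {
    if (p == nullptr) return;
    char* raw = static_cast<char*>(p) - kHeader;
    stat.current -= *reinterpret_cast<std::size_t*>(raw);
    std::free(raw);
}
```

Under this scheme, `current` read after parsing corresponds to Memory, while `peak` and `allocCount` correspond to MemoryPeak and AllocCount. A library whose allocations bypass such a hook would report misleading numbers.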

Currently 43 libraries are successfully benchmarked. They are listed in alphabetic order:

| Library | Language | Version | Notes |
|---|---|---|---|
| ArduinoJson | C++ | 5.6.6 | |
| Boost.JSON | C++ | 1.80.0 | |
| CAJUN | C++ | 2.0.3 | |
| C++ REST SDK | C++11 | v2.8.0 | Need Boost on non-Windows platform. DOM strings must be UTF16 on Windows and UTF8 on non-Windows platform. |
| ccan/json | C | | |
| cJSON | C | 1.5.0 | |
| Configuru | C++ | 2015-12-18 | gcc/clang only |
| dropbox/json11 | C++11 | | |
| Facil.io | C | 0.5.3 | |
| FastJson | C++ | | Not parsing number per se, so do it as post-process. |
| folly | C++11 | 2016.08.29.00 | Need installation |
| gason | C++11 | | |
| jansson | C | v2.7 | |
| jeayeson | C++14 | | |
| json-c | C | 0.12.1 | |
| jsoncons | C++11 | 0.97.1 | |
| json-voorhees | C++ | v1.1.1 | |
| json spirit | C++ | 4.08 | Need Boost |
| Json Box | C++ | 0.6.2 | |
| JsonCpp | C++ | 1.0.0 | |
| hjiang/JSON++ | C++ | | |
| jsmn | C | | Not parsing number per se, so do it as post-process. |
| jvar | C++ | v1.0.0 | gcc/clang only |
| Jzon | C++ | v2-1 | |
| nbsdx/SimpleJSON | C++11 | | |
| Nlohmann/json | C++11 | v2.0.3 | |
| parson | C | | |
| picojson | C++ | 1.3.0 | |
| pjson | C | | No numbers parsing, no DOM interface |
| POCO | C++ | 1.7.5 | Need installation |
| qajson4c | C | 1.0.0 | gcc/clang only |
| Qt | C++ | 5.6.1-1 | Need installation |
| RapidJSON | C++ | v1.1.0 | There are four configurations: RapidJSON (default), RapidJSON_AutoUTF (transcoding any UTF JSON), RapidJSON_Insitu (insitu parsing) & RapidJSON_FullPrec (full precision number parsing) |
| sajson | C++ | | |
| SimpleJSON | C++ | | |
| sheredom/json.h | C | | Not parsing number per se, so do it as post-process. |
| udp/json | C | 1.1.0 | Actually 2 libraries: udp/json-parser & udp/json-builder. |
| taocpp/json | C++11 | 1.0.0-beta.7 | Uses PEGTL for parsing |
| tunnuz/JSON++ | C++ | | |
| ujson | C++ | 2015-04-12 | |
| ujson4c | C | | |
| V8 | C++ | 5.1.281.47 | Need installation |
| vincenthz/libjson | C | 0.8 | |
| YAJL | C | 2.1.0 | |
| ULib | C++ | v1.4.2 | Need building: (./configure --disable-shared && make) |

Libraries with a Git repository are included as submodules in the thirdparty path. Other libraries are added as files in the thirdparty path.

The exact commit of each submodule can be found here.

To measure the overhead of the benchmark process, a strdup test is added for comparison. It simply allocates and copies the input string in the Parse and Stringify benchmarks.
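
A baseline of this kind might be sketched as follows. `CopyInput` and `TimeCopyMs` are hypothetical names, and taking the shortest of several trials is an assumption about the timing policy rather than a description of the actual harness.

```cpp
#include <chrono>
#include <cstddef>
#include <cstdlib>
#include <cstring>

// Copies `length` bytes of `json` plus a terminating NUL, much like strdup().
// The caller releases the result with std::free().
char* CopyInput(const char* json, std::size_t length) {
    char* copy = static_cast<char*>(std::malloc(length + 1));
    if (copy == nullptr) return nullptr;
    std::memcpy(copy, json, length);
    copy[length] = '\0';
    return copy;
}

// Runs the copy `trials` times and returns the shortest duration in
// milliseconds, or -1.0 if `trials` is not positive.
double TimeCopyMs(const char* json, std::size_t length, int trials) {
    double best = -1.0;
    for (int i = 0; i < trials; ++i) {
        const auto start = std::chrono::steady_clock::now();
        char* copy = CopyInput(json, length);
        const auto end = std::chrono::steady_clock::now();
        std::free(copy);
        const double ms = std::chrono::duration<double, std::milli>(end - start).count();
        if (best < 0.0 || ms < best) best = ms;
    }
    return best;
}
```

Since copying is close to the least work any parser or generator could do on the input, a library's time can be read relative to this baseline.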

In addition, integration of the following libraries was attempted but failed:

| Library | Issue |
|---|---|
| libjson | Unable to parse UTF-8 string |
| lastjson | |
| StiX Json | |
| boost property_tree | number, true, false, null types are converted into string. |

All tested JSON data are in UTF-8.

| JSON file | Size | Description |
|---|---|---|
| canada.json source | 2199KB | Contour of Canada border in GeoJSON format. Contains a lot of real numbers. |
| citm_catalog.json source | 1737KB | A big benchmark file with indentation used in several Java JSON parser benchmarks. |
| twitter.json | 632KB | Search "一" (character of "one" in Japanese and Chinese) in Twitter public time line for gathering some tweets with CJK characters. |

The benchmark program reads data/data.txt which contains file names of JSON to be tested.

1. Execute `git submodule update --init` and `git -C thirdparty/boost submodule update --init` to download all submodules (libraries).
2. Obtain premake5.
3. Copy the premake5 executable to the `build/` path (or system path).
4. Run `premake.bat` or `premake.sh` in `build/`.
5. On Windows, build the solution at `build/vs2015/`.
6. On other platforms, run GNU `make -f benchmark.make config=release_x32 && make -f nativejson.make config=release_x32` (or `release_x64`) at `build/gmake/`.
7. Optional: run `build/machine.sh` for UNIX or CYGWIN to use CPU info to generate the prefix of the result filename.
8. Run the `nativejson_release_...` executable generated at `bin/`.
9. The results in CSV format will be written to `result/`.
10. Run GNU `make` in `result/` to generate results in HTML.

For simplicity, on Linux/OSX users can simply run `make` (or `make CONFIG=release_x32`) at project root to run steps 4-10 above.

Some libraries, such as Boost, POCO, V8, etc., need to be installed by user manually.

Update on: 2016-9-9

A collection of benchmarks results can be viewed HERE. Select "Benchmark" from the menu to check available benchmark configurations. The presentation is powered by Google Charts with interactivity.

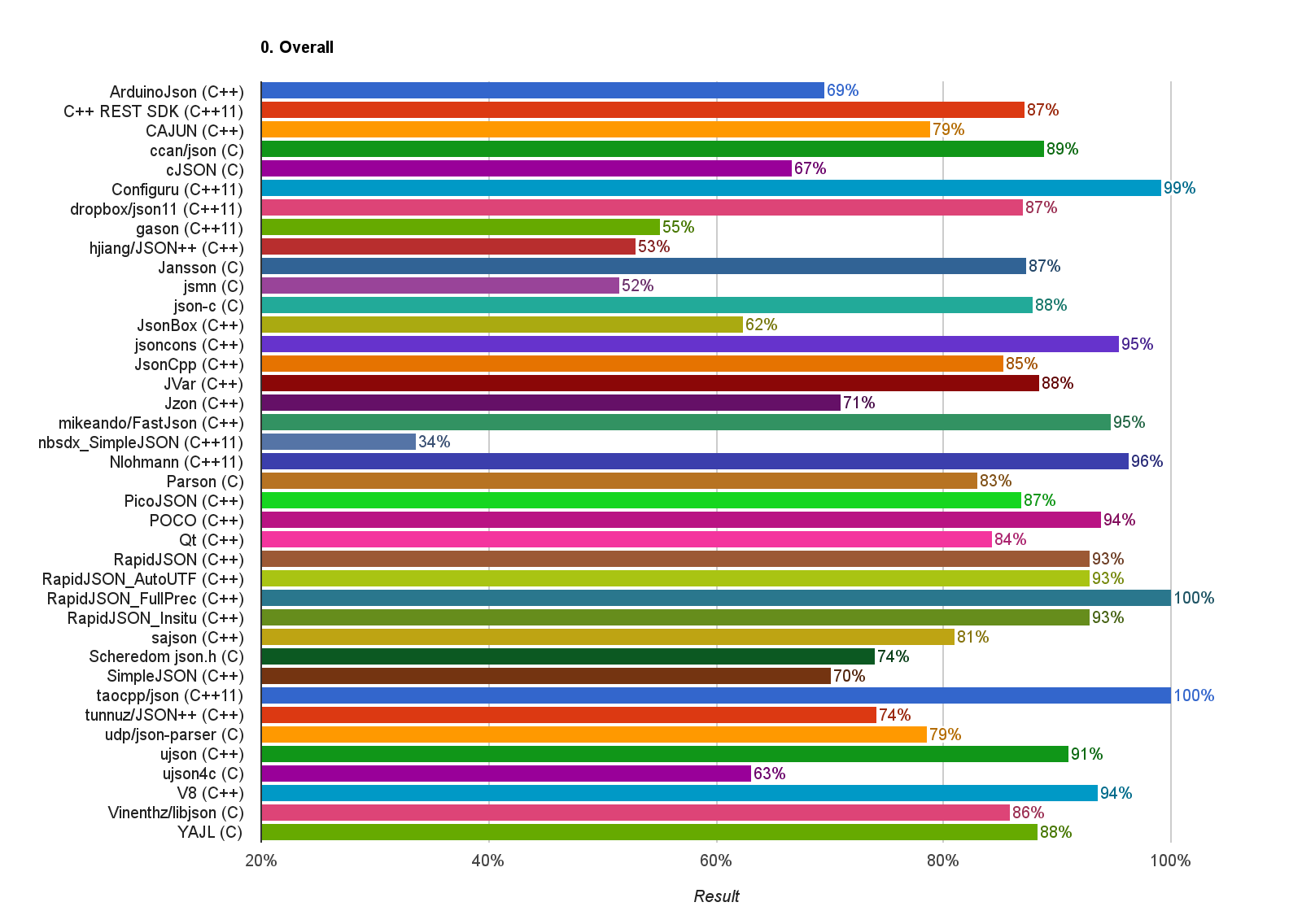

The following are some snapshots from the results on a MacBook Pro (Retina, 15-inch, Mid 2015, Core i7-4980HQ@2.80GHz) with clang 7.0 64-bit.

This is the average score of 4 conformance benchmarks. Higher is better. Details.

This is the total duration of parsing 3 JSONs to DOM representation, sorted in ascending order. Lower is better. Details

This is the total memory after parsing 3 JSONs to DOM representation, sorted in ascending order. Lower is better. Details

(Note: The results for Qt are incorrect because the benchmark failed to hook its memory allocations.)

This is the total duration of stringifying 3 DOMs to JSONs, sorted in ascending order. Lower is better. Details

This is the total duration of prettifying 3 DOMs to JSONs, sorted in ascending order. Lower is better. Details

This is the size of the executable program, which parses a JSON from stdin to a DOM and then computes the statistics of the DOM. Lower is better. Details

1. How to add a library?

   Use `git submodule add https://...xxx.git thirdparty/xxx` to add the library's repository as a submodule. If that is not possible, just copy the files into `thirdparty/xxx`.

   For a C library, add a `xxx_all.c` in `src/cjsonlibs`, which `#include`s all the necessary `.c` files of the library. Then create a `tests/xxxtest.cpp`.

   For a C++ library, you only need to create a `tests/xxxtest.cpp`, which `#include`s all the necessary `.cpp` files of the library.

   You may use an existing library that is similar to your case as a starting point for implementing `tests/xxxtest.cpp`.

   Please submit a pull request if the integration works properly.

2. What if the build process fails, or the benchmark crashes?

   This happens on some platforms, as not every library is stable on all platforms.

   You can simply delete the local `tests/xxxtest.cpp` and re-run the build.

   If you are adding a library, you can remove all `tests/xxxtest.cpp` files except `tests/rapidjsontest.cpp` and your own test. This makes the build process faster. RapidJSON's test is needed only as a reference for comparing statistics results.

3. On which platforms can the benchmark be run?

   The author tests it on OSX/clang, Windows/vs2015, and Ubuntu/clang3.7+gcc5.0 (via Travis CI). The benchmark may work on other platforms as well, but you will need to generate the build files via premake5 and resolve any potential issues.

4. How to reduce the build process time?

   You can keep only the tests of the libraries you need, as in question 2. You can also use `make ARGS=--verify-only`, `make ARGS=--performance-only`, or `make ARGS=--conformance-only` to execute only the required part.

- Basic benchmarks for miscellaneous C++ JSON parsers and generators by Mateusz Loskot (Jun 2013)
- JSON Parser Benchmarking by Chad Austin (Jan 2013)
- vjson (Replaced by gason)
