- Hugging Face demo

- Release training code

- Release test code

- Release pre-trained models

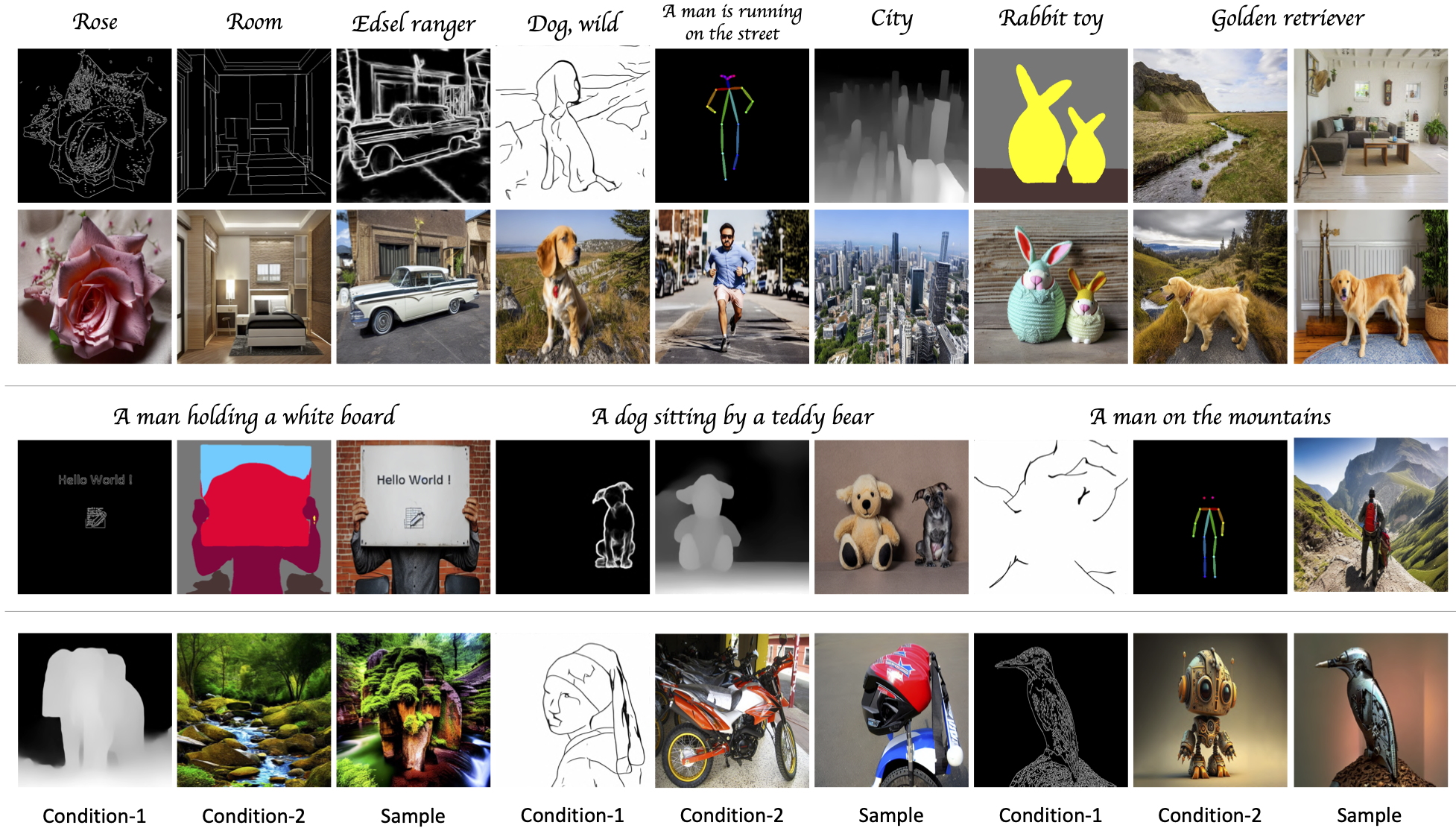

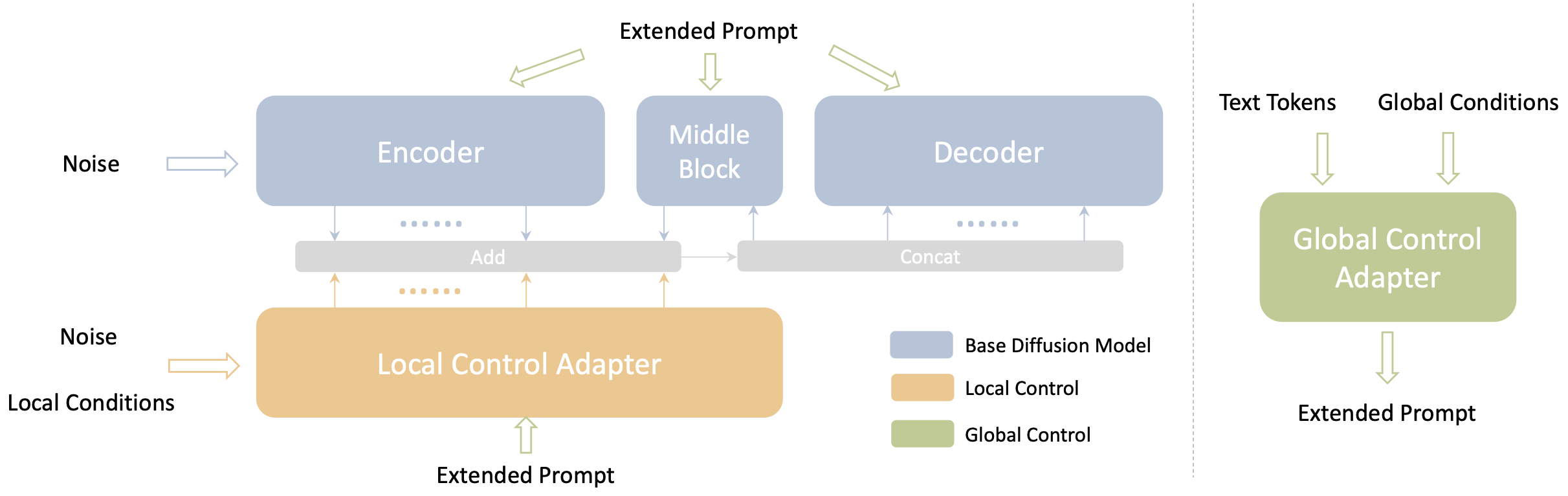

Uni-ControlNet is a controllable diffusion model that supports the simultaneous use of different local controls and global controls in a flexible, composable manner within a single model. It achieves this with only two adapters, a local control adapter and a global control adapter, regardless of how many local or global controls are used. The two adapters can be trained separately, without joint training, and still support the composition of multiple control signals.

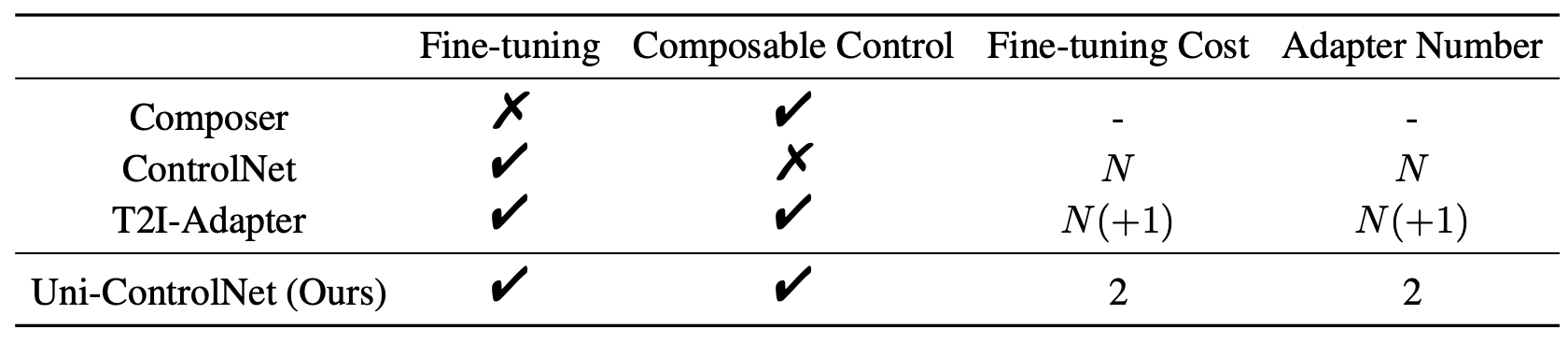

Here is a comparison of different controllable diffusion models, where N is the number of conditions. As the number of control conditions grows, Uni-ControlNet reduces both the fine-tuning cost and the model size, and it also makes different conditions composable.

First, create a new conda environment and activate it:

conda env create -f environment.yaml

conda activate unicontrol

Then download the pretrained model (or here) and put it in the ./ckpt/ folder. The model is built upon Stable Diffusion v1.5.
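Before launching the demo, it can help to confirm that the weights actually landed in `./ckpt/`. The following is a minimal sketch, not part of the repository: the helper name `find_checkpoints` and the list of file extensions are assumptions, since this README does not state the checkpoint's file name.

```python
from pathlib import Path

# Assumed set of weight-file extensions; the README does not name the file.
WEIGHT_SUFFIXES = {".ckpt", ".pth", ".safetensors"}


def find_checkpoints(ckpt_dir="./ckpt"):
    """Return weight files found directly inside ckpt_dir, sorted by path."""
    root = Path(ckpt_dir)
    if not root.is_dir():
        return []
    return sorted(
        p for p in root.iterdir()
        if p.is_file() and p.suffix in WEIGHT_SUFFIXES
    )


if __name__ == "__main__":
    found = find_checkpoints()
    if found:
        print("Found checkpoint(s):", ", ".join(p.name for p in found))
    else:
        print("No checkpoint found in ./ckpt/; download the pretrained model first.")
```

If the script reports no checkpoint, check that the downloaded file sits directly inside `./ckpt/` rather than in a nested folder.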

You can launch the Gradio demo with:

python src/test/test.py

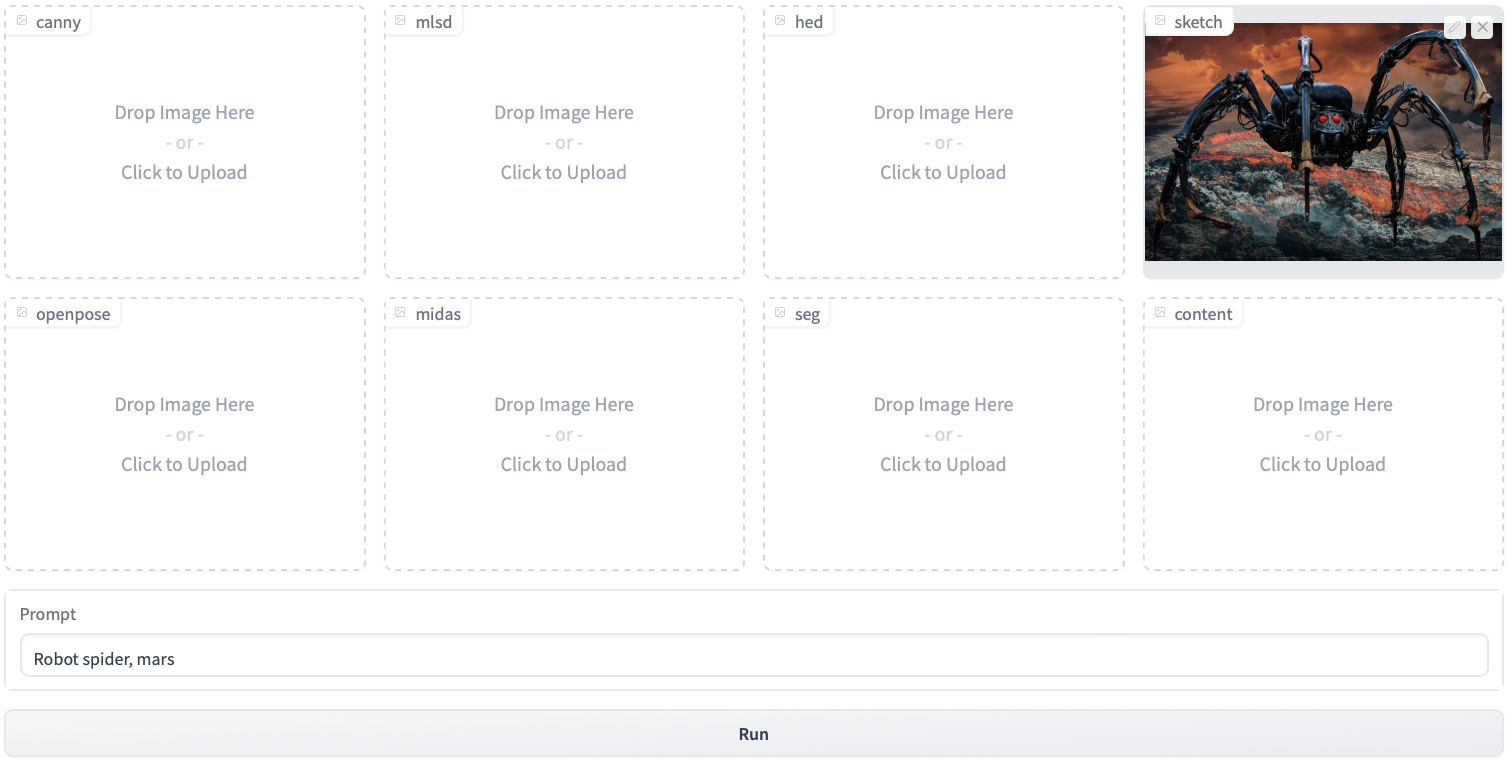

This command loads the downloaded pretrained weights and starts the app. The demo includes seven example local conditions (Canny edge, MLSD edge, HED boundary, sketch, Openpose, Midas depth, and segmentation mask) and one example global condition (content).

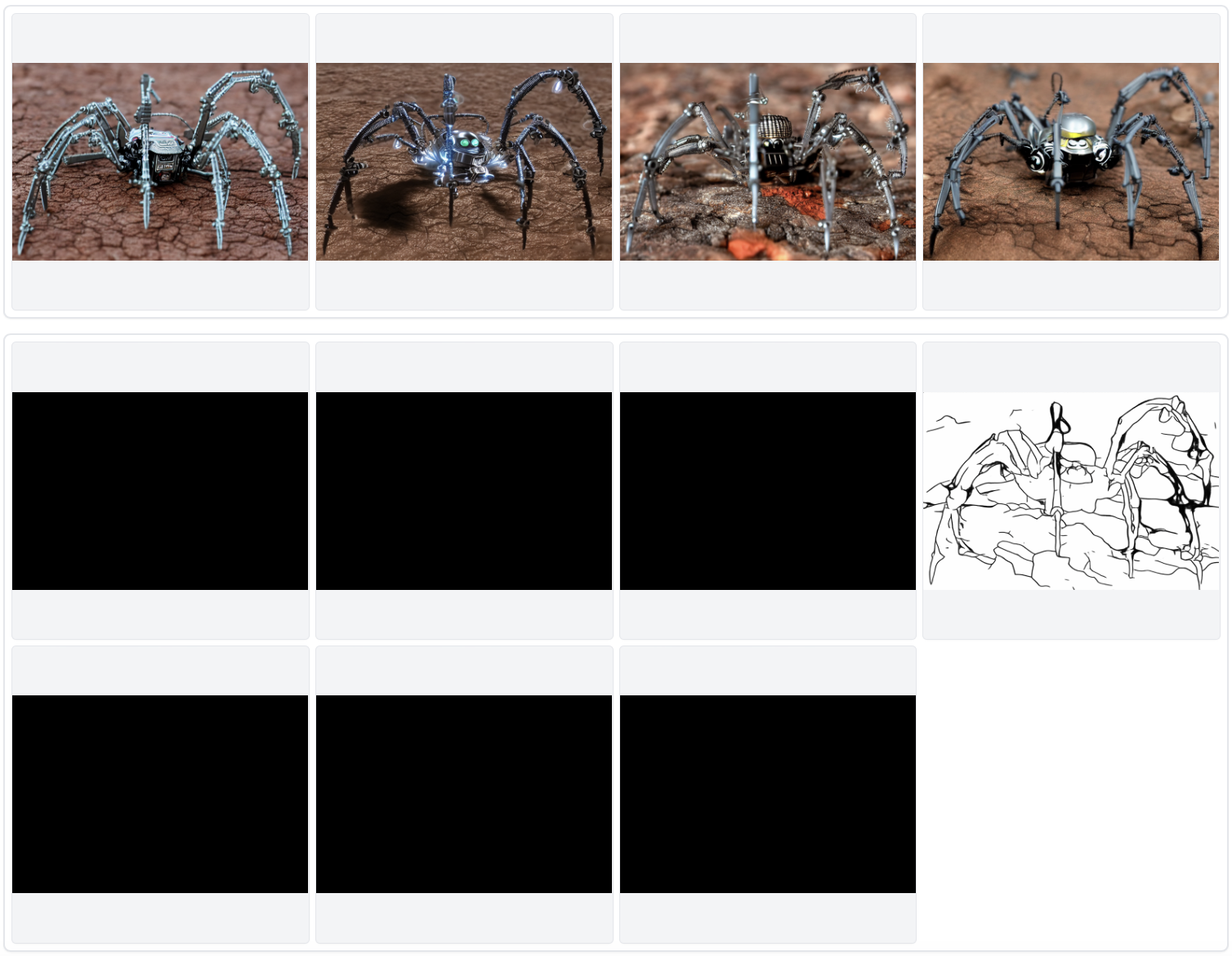

You can first upload a source image, and the code automatically detects its sketch. Uni-ControlNet then generates samples that follow the sketch and the text prompt, which in this example is "Robot spider, mars". The results are shown at the bottom of the demo page, with the generated images in the upper part and the detected conditions in the lower part:

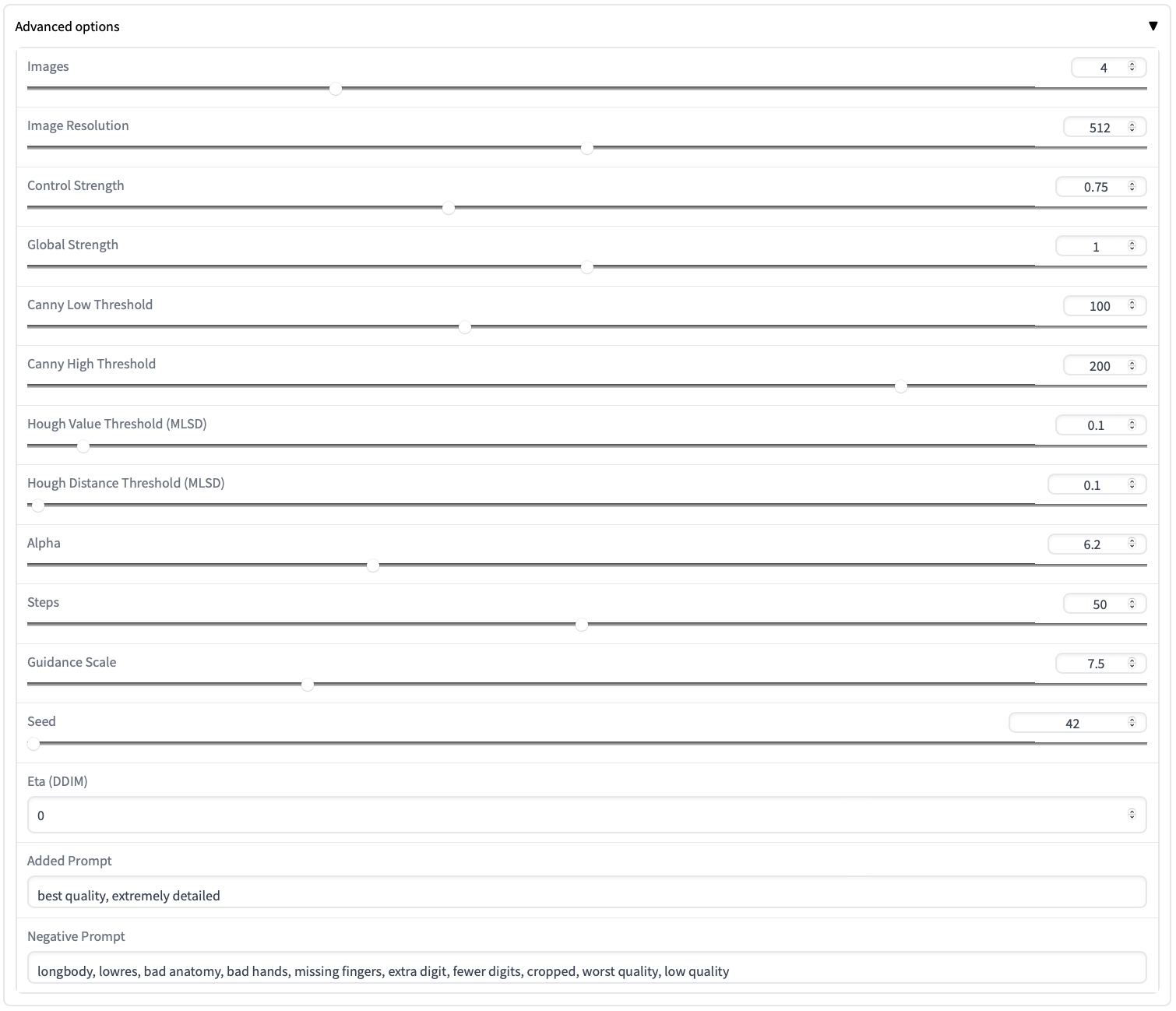

You can adjust further settings in the panel:

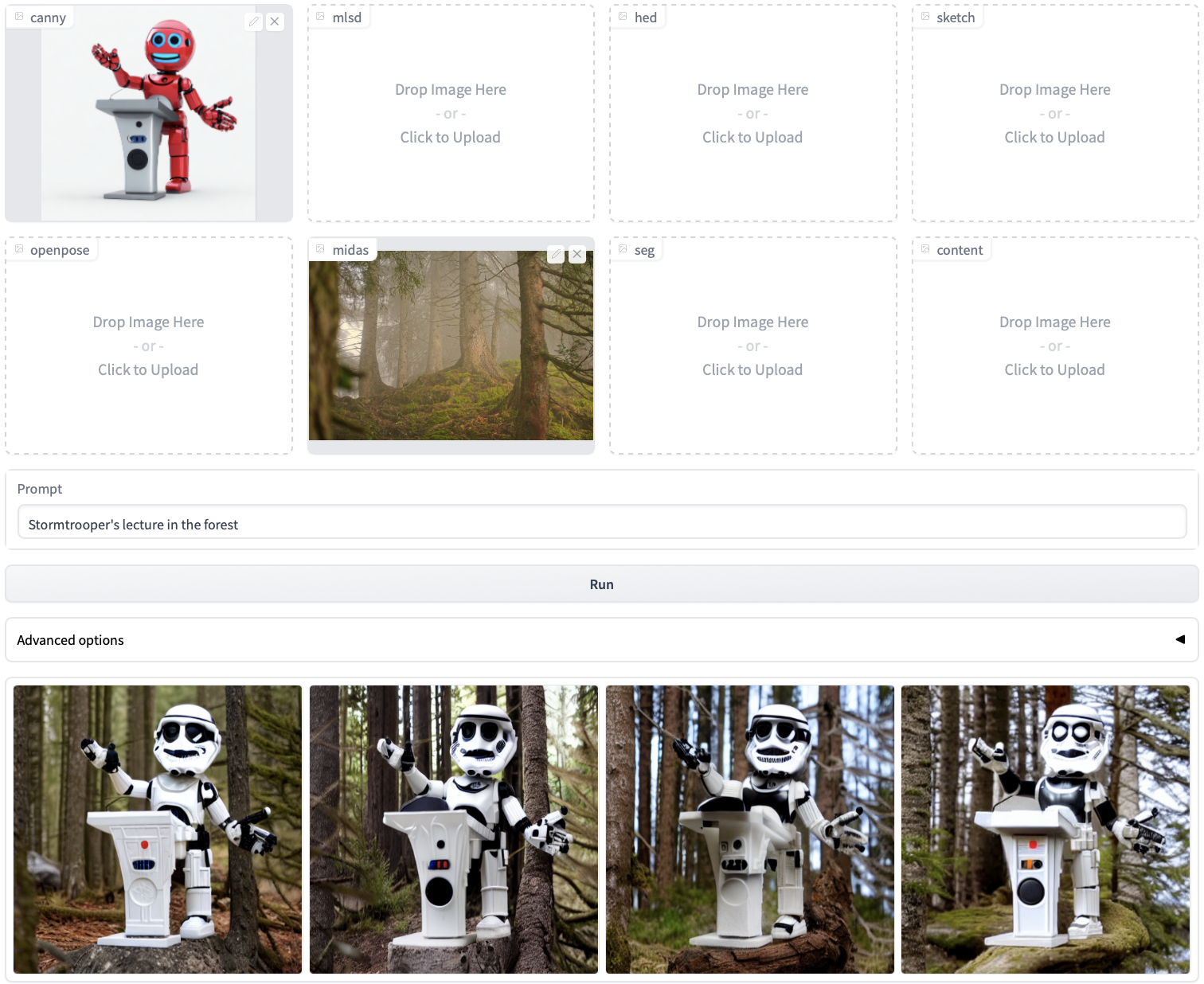

Uni-ControlNet also handles multi-condition settings well. Here is an example that combines two local conditions: the Canny edge of a Stormtrooper and the depth map of a forest. The prompt is set to "Stormtrooper's lecture in the forest" and here are the results:

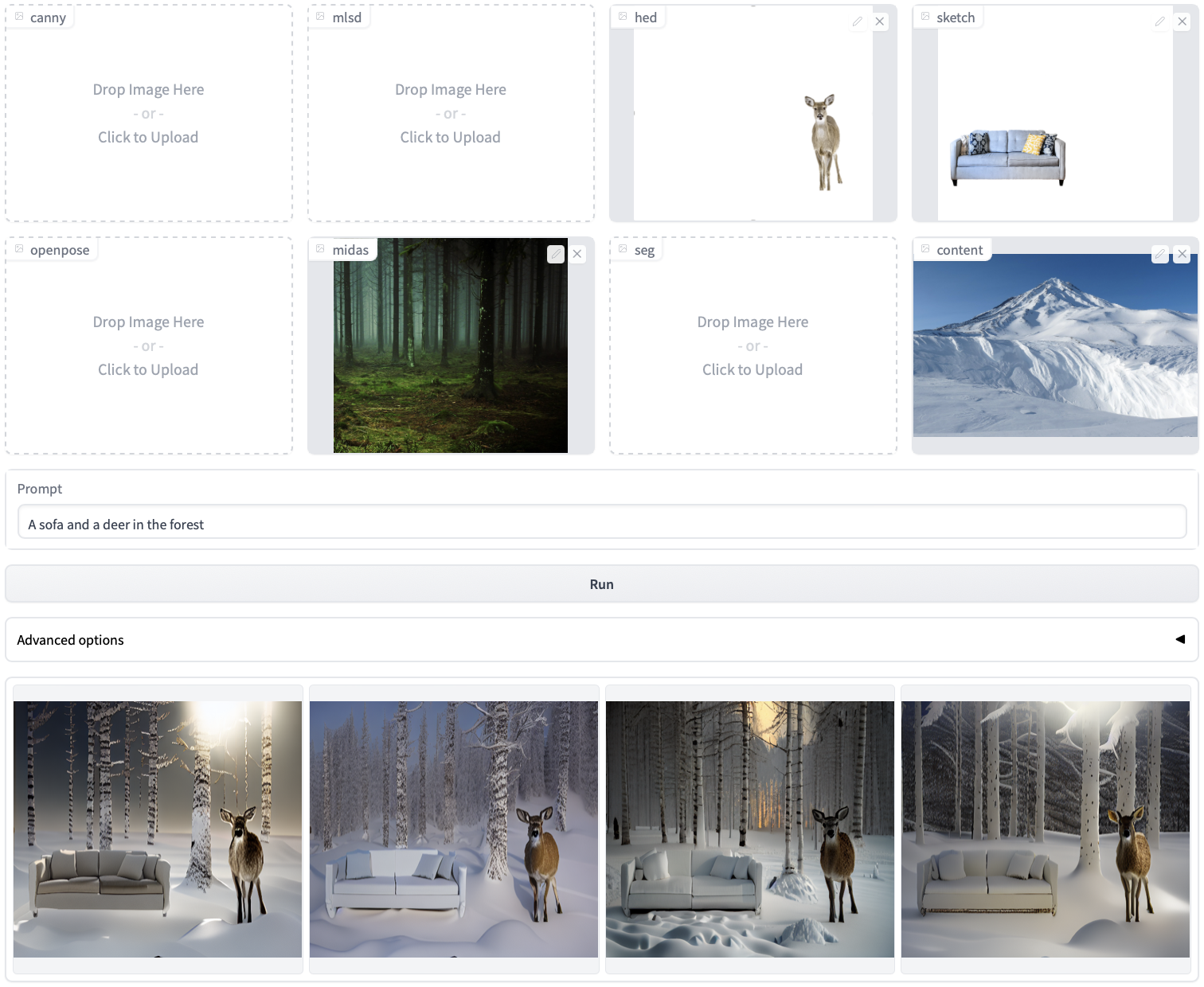
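One way to picture this kind of combination is as a fixed list of local-condition slots, where each selected condition fills its slot and every unused slot stays empty. The sketch below is only an illustration under that assumption: the names `LOCAL_CONDITIONS` and `build_local_slots`, the slot order (taken from the list of example conditions in this README), and the image file names are all hypothetical and do not describe the repository's actual API.

```python
# Slot order follows the list of local conditions in this README; whether the
# actual code uses this order is an assumption.
LOCAL_CONDITIONS = ["canny", "mlsd", "hed", "sketch", "openpose", "midas", "seg"]


def build_local_slots(selected):
    """Map {condition name: image path} onto the fixed slot list.

    Unused slots are None, standing in for an empty condition map.
    """
    unknown = set(selected) - set(LOCAL_CONDITIONS)
    if unknown:
        raise ValueError(f"Unknown local condition(s): {sorted(unknown)}")
    return [selected.get(name) for name in LOCAL_CONDITIONS]


# Hypothetical inputs mirroring the example above: Canny edge from one image,
# Midas depth from another.
slots = build_local_slots({"canny": "stormtrooper.png", "midas": "forest.png"})
print(slots)
```

Keeping the slot list at a fixed length means the same local adapter input layout works whether one condition or several are supplied.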

With Uni-ControlNet, you can go even further and incorporate more conditions. For instance, you can provide the local conditions of a deer, a sofa, and a forest together with the global condition of snow to create a scene that is unlikely to occur naturally. The prompt is set to "A sofa and a deer in the forest" and here are the results:

Coming soon!

This repo is built upon ControlNet. Many thanks to its authors for their great work!

@article{zhao2023uni,

title={Uni-ControlNet: All-in-One Control to Text-to-Image Diffusion Models},

author={Zhao, Shihao and Chen, Dongdong and Chen, Yen-Chun and Bao, Jianmin and Hao, Shaozhe and Yuan, Lu and Wong, Kwan-Yee K},

journal={arXiv preprint arXiv:2305.16322},

year={2023}

}