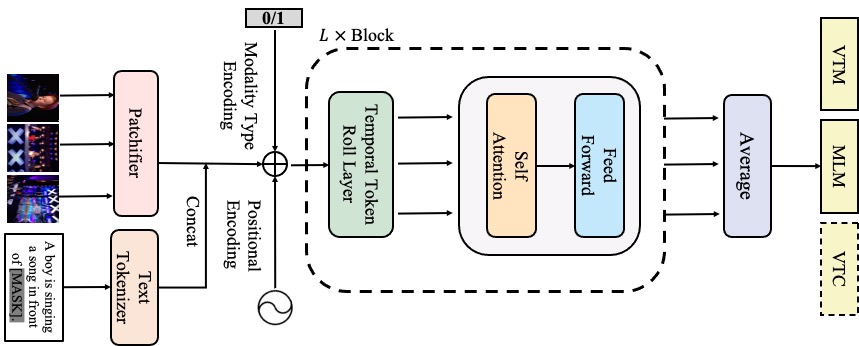

Code for the paper "All in One: Exploring Unified Video-Language Pre-training" (arXiv:2203.07303).

In this work, we use PyTorch Lightning for distributed training with mixed precision. Install PyTorch and PyTorch Lightning first.

conda create -n allinone python=3.7

source activate allinone

conda install pytorch torchvision torchaudio cudatoolkit=10.2 -c pytorch

cd [Path_To_This_Code]

pip install -r requirements.txt

To speed up pre-training, we decode videos on the fly for fast I/O. Install ffmpeg and pytorchvideo (used for data augmentation) as below.

sudo conda install -y ffmpeg

pip install ffmpeg-python

pip install pytorchvideo

Please install any other required packages that are not included in requirements.txt.
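To see which packages are still missing after the steps above, a small check like the following can help. It is only a sketch: the import names (`torch`, `pytorch_lightning`, `ffmpeg` for ffmpeg-python, `pytorchvideo`) are the usual module names for these packages, and the helper `missing_modules` is hypothetical, not part of this repository.

```python
import importlib.util
import shutil


def missing_modules(names):
    """Return the module names from `names` that cannot be found in this environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    # Assumed import names for the packages installed above.
    required = ["torch", "pytorch_lightning", "ffmpeg", "pytorchvideo"]
    missing = missing_modules(required)
    if missing:
        print("Missing Python packages:", ", ".join(missing))
    else:
        print("All listed Python packages were found.")

    # ffmpeg-python only wraps the ffmpeg binary, so the executable must be on PATH too.
    if shutil.which("ffmpeg") is None:
        print("The ffmpeg executable was not found on PATH.")
```

Run it inside the `allinone` environment; anything it reports as missing can be installed with pip or conda as shown above.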

We provide three sets of pretrained weights on Google Drive.

| Model | Parameters | Pretrained Weight | Training Log | Hparams |
|---|---|---|---|---|
| All-in-one-Ti | 12M | Google Drive | Google Drive | Google Drive |
| All-in-one-S | 33M | Google Drive | Google Drive | Google Drive |
| All-in-one-B | 110M | Google Drive | Google Drive | Google Drive |

After downloading the pretrained weights, copy them into the pretrained directory.

mkdir pretrained

cp *.ckpt pretrained/

See DATA.md

See TRAIN.md

See EVAL.md

Thanks to its unified design and sparse sampling, All-in-one requires far fewer FLOPs.
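To illustrate why sparse sampling lowers compute, the sketch below picks a handful of frame indices spread across a video instead of processing every frame. This is a generic segment-based scheme, not necessarily the sampler used in this repository; the function name and parameters are hypothetical.

```python
import random


def sparse_sample_indices(num_frames, num_samples, training=False, seed=None):
    """Pick `num_samples` frame indices spread across a video of `num_frames` frames.

    The video is split into `num_samples` equal segments and one frame is taken
    from each: a random frame when `training` is True, the middle frame otherwise.
    """
    if num_frames <= 0 or num_samples <= 0:
        raise ValueError("num_frames and num_samples must be positive")

    rng = random.Random(seed)
    # Segment boundaries as (possibly fractional) frame positions.
    bounds = [i * num_frames / num_samples for i in range(num_samples + 1)]

    indices = []
    for i in range(num_samples):
        start = int(bounds[i])
        # Ensure every segment holds at least one frame, even for very short videos.
        end = max(start + 1, int(bounds[i + 1]))
        if training:
            idx = rng.randrange(start, end)
        else:
            idx = (start + end - 1) // 2
        indices.append(min(idx, num_frames - 1))
    return indices


if __name__ == "__main__":
    # A 30-frame clip reduced to 3 frames for the model.
    print(sparse_sample_indices(30, 3))
```

With only a few frames per clip, the number of visual tokens the model processes no longer grows with video length, which is where the savings come from.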

If you find our work helpful, please cite our paper.

@article{wang2022allinone,
  title={All in One: Exploring Unified Video-Language Pre-training},
  author={Wang, Alex Jinpeng and Ge, Yixiao and Yan, Rui and Ge, Yuying and Lin, Xudong and Cai, Guanyu and Wu, Jianping and Shan, Ying and Qie, Xiaohu and Shou, Mike Zheng},
  journal={arXiv preprint arXiv:2203.07303},
  year={2022}
}