XCloud is an open-source AI platform that provides common AI services (computer vision, NLP, data mining, etc.) through RESTful APIs. It lets you serve your machine learning models with a few lines of code. The platform is developed and maintained by @LucasXU and is built on Django and PyTorch.

The code for the RESTful APIs lives in the `cv`, `nlp`, `dm`, and `data` modules; the `research` branch holds the training/testing scripts and prototype implementations of several research ideas.

- Computer Vision
  - Face Analysis
    - Face Comparison
    - Facial Beauty Prediction
    - Gender Recognition
    - Race Recognition
    - Age Estimation
    - Facial Expression Recognition
    - Face Retrieval
  - Image Recognition
    - Scene Recognition
    - Food Recognition
    - Flower Recognition
    - Plant Disease Recognition
    - Pet Insects Detection & Recognition
    - Pornography Image Recognition
    - Skin Disease Recognition
  - Image Processing
    - Image Deblurring
    - Image Dehazing
    - Face Analysis
- NLP
  - Text Similarity Comparison
  - Sentiment Classification for douban.com
  - News Classification
- Data Mining
- Data Services
  - Zhihu Live & Comments
  - Major Hospital Information
  - Primary and Secondary School on Baidu Baike
  - Weather History
- Research
  - Age Estimation
  - Medical Image Analysis (Skin Lesion Analysis)
  - Crowd Counting
  - Intelligent Agriculture
  - Content-based Image Retrieval
  - Image Segmentation
  - Image Dehazing
  - Image Quality Assessment
  - Data Augmentation
  - Knowledge Distillation

- create a virtual environment named `pyWeb` (follow this tutorial)
- install Django and PyTorch
- install all dependent libraries: `pip3 install -r requirements.txt`
- activate the Python Web environment: `source ~/pyWeb/bin/activate pyWeb`
- start the Django server:
  - test with the Django built-in server: `python3 manage.py runserver 0.0.0.0:8001`
  - start with Gunicorn: `CUDA_VISIBLE_DEVICES=0,1,2,3 nohup gunicorn BCloud.wsgi -b YOUR_MACHINE_IP:8008 --timeout=500`
- open your browser and visit the welcome page: `YOUR_MACHINE_IP:8001/index`
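Once the server is running, a short script can confirm that the welcome page answers. The following is a minimal sketch using only the standard library; the function names are hypothetical, and it assumes the welcome page returns HTTP 200.

```python
import http.client
import urllib.request


def welcome_url(host: str, port: int = 8001) -> str:
    """Build the welcome-page URL used in the steps above."""
    return f"http://{host}:{port}/index"


def is_up(url: str, timeout: float = 3.0) -> bool:
    """Return True if the URL answers with HTTP 200, False otherwise."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status == 200
    except (OSError, http.client.HTTPException):
        # Covers refused connections, timeouts, DNS failures and HTTP error
        # statuses (urllib's URLError and HTTPError are subclasses of OSError).
        return False


if __name__ == "__main__":
    url = welcome_url("127.0.0.1")  # replace with YOUR_MACHINE_IP
    print(url, "is up" if is_up(url) else "is not reachable")
```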

To build a more efficient inference engine, TensorRT is highly recommended. With TensorRT, we achieve 147.23 FPS (DenseNet169 as backbone) on a 2080 Ti GPU without a drop in model performance, about 5x faster than the same model running in PyTorch (29.45 FPS).

The installation steps are as follows:

- download the installation package from the official NVIDIA website. I use the `.tar.gz` package in this project
- add the directory containing nvcc to your PATH: `export PATH=/usr/local/cuda/bin:$PATH`
- install pyCUDA: `pip3 install 'pycuda>=2017.1.1'`
- unzip the `.tar.gz` file, and modify your environment by adding: `export LD_LIBRARY_PATH=/data/lucasxu/Software/TensorRT-5.1.5.0/lib:$LD_LIBRARY_PATH`
- install the TensorRT Python wheel: `pip3 install ~/Software/TensorRT-5.1.5.0/python/tensorrt-5.1.5.0-cp37-none-linux_x86_64.whl`
- install torch2trt
- then you can use model_converter.py to convert a PyTorch model to a TensorRT model

ONNX Runtime is a performance-focused engine for ONNX models that runs inference efficiently across multiple platforms and hardware (Windows, Linux, and Mac, on both CPUs and GPUs). We also provide an easy-to-use script, model_converter.py, for converting a PyTorch model to an ONNX model that runs on the ONNX-Runtime inference engine.

Before using model_converter.py, make sure you have installed PyTorch, ONNX, and ONNX-Runtime. If not, just try: `pip3 install onnx onnxruntime-gpu`

| Inference Engine | FPS |
|---|---|
| PyTorch | 29.45 |
| TensorRT | 147.23 |
| ONNX (CPU) | 6.93 |
| ONNX (GPU) | 68.42 |

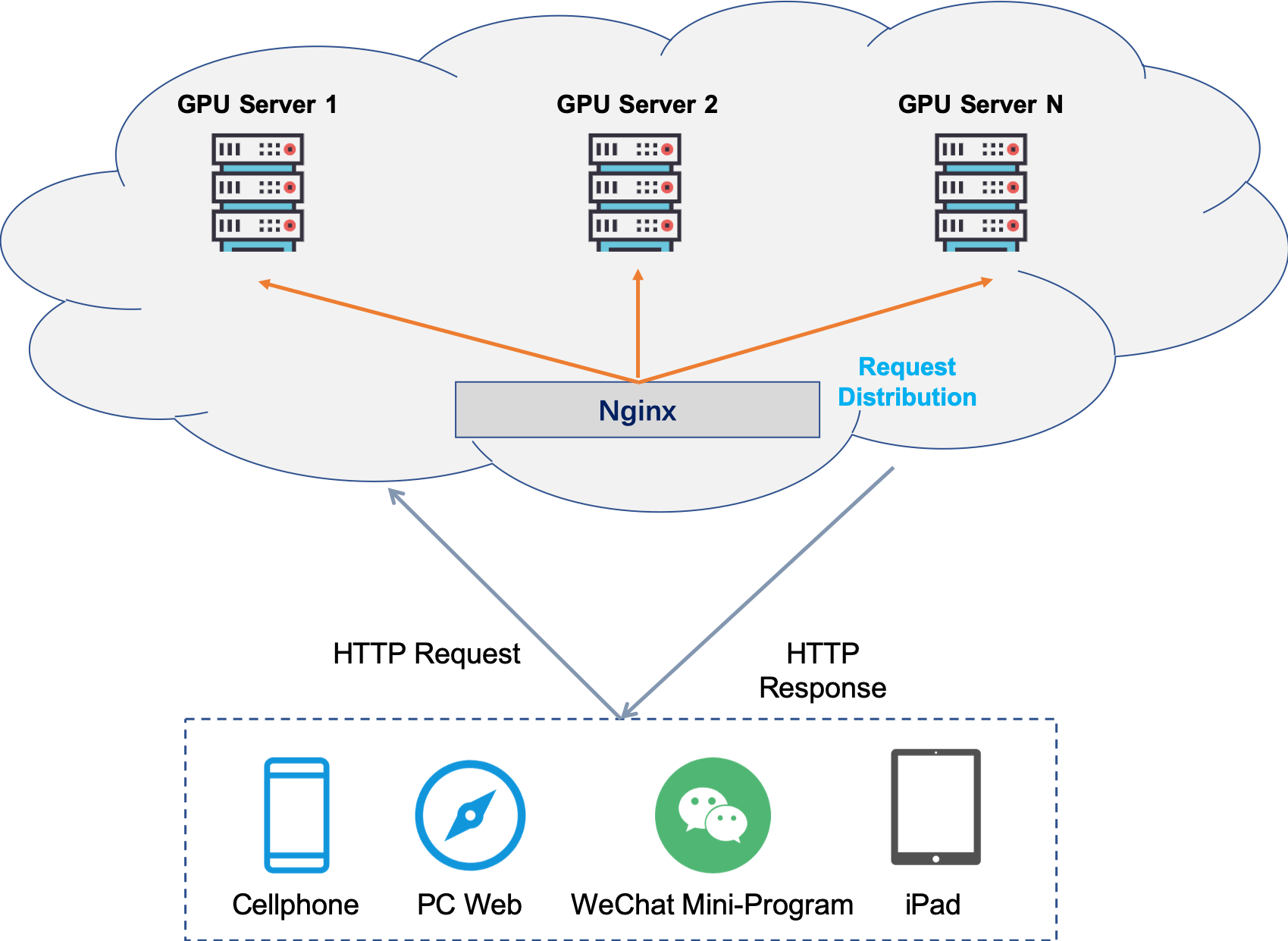
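How the FPS figures in the table above were measured is not stated here. As a rough sketch, throughput for any inference callable could be estimated with a timing loop like the one below; `measure_fps` and `speedup` are hypothetical helper names, and the warm-up and run counts are arbitrary assumptions.

```python
import time
from typing import Callable


def measure_fps(infer: Callable[[], object], warmup: int = 10, runs: int = 100) -> float:
    """Call `infer` repeatedly and return the average frames per second.

    For GPU models, `infer` should block until the result is ready
    (with PyTorch, for example, by calling torch.cuda.synchronize()).
    """
    for _ in range(warmup):
        infer()  # warm-up calls are excluded from the timing
    start = time.perf_counter()
    for _ in range(runs):
        infer()
    elapsed = time.perf_counter() - start
    return runs / elapsed


def speedup(fps: float, baseline_fps: float) -> float:
    """Ratio of one engine's throughput to a baseline engine's throughput."""
    return fps / baseline_fps


if __name__ == "__main__":
    # Stand-in workload; replace with a real single-image forward pass.
    fps = measure_fps(lambda: sum(range(10_000)))
    print(f"stand-in workload: {fps:.1f} FPS")
    # Ratios computed from the figures reported in the table.
    for name, value in [("TensorRT", 147.23), ("ONNX (GPU)", 68.42), ("ONNX (CPU)", 6.93)]:
        print(f"{name}: {speedup(value, 29.45):.2f}x PyTorch")
```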

As the Django documentation warns, DO NOT USE THIS SERVER IN A PRODUCTION SETTING: the built-in development server may expose you to security risks and performance problems. You should therefore replace it with a stronger setup, such as Gunicorn or uWSGI behind Nginx.

- install Gunicorn: `pip3 install gunicorn`
- run your server (with multi-thread support): `gunicorn XCloud.wsgi -b YOUR_MACHINE_IP:8001 --threads THREADS_NUM --timeout=200`
- open your browser and visit the welcome page: `http://YOUR_MACHINE_IP:8001/index`
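Gunicorn can also read its settings from a Python configuration file instead of command-line flags. The sketch below mirrors the flags used above; the file name `gunicorn.conf.py`, the environment variable names `XCLOUD_BIND` and `XCLOUD_THREADS`, and the default of 4 threads are assumptions for illustration, not part of this project.

```python
# gunicorn.conf.py: a sketch mirroring the command-line flags above.
import os

# Address to bind to; same role as `-b YOUR_MACHINE_IP:8001`.
# XCLOUD_BIND is a hypothetical environment variable.
bind = os.environ.get("XCLOUD_BIND", "0.0.0.0:8001")

# Threads per worker; same role as `--threads THREADS_NUM`.
# XCLOUD_THREADS is a hypothetical environment variable; 4 is an arbitrary default.
threads = int(os.environ.get("XCLOUD_THREADS", "4"))

# Seconds a worker may stay silent before it is restarted; same as `--timeout=200`.
timeout = 200
```

With such a file in place, the server would be started with `gunicorn -c gunicorn.conf.py XCloud.wsgi`.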

- install uWSGI: `pip3 install uwsgi` (try `conda install -c conda-forge uwsgi` if you prefer Anaconda)
- start your uWSGI server: `uwsgi --http :8001 --chdir /data/lucasxu/Projects/XCloud -w XCloud.wsgi`
- you can specify more configuration in uwsgi.ini, and start uWSGI by: `uwsgi --ini uwsgi.ini`
- open your browser and visit the welcome page: `http://YOUR_MACHINE_IP:8001/index`

Note: this tutorial gives more details about Nginx and Django

- install Nginx: `sudo apt-get install nginx`
- install uwsgi: `sudo pip3 install uwsgi`
- start Nginx: `sudo /etc/init.d/nginx start`. Type `ps -ef | grep -i nginx` to see whether Nginx has started successfully
- open your browser and visit `YOUR_IP_ADDRESS:80`; if you see the Nginx welcome page, you have installed Nginx successfully
- restart Nginx: `sudo /etc/init.d/nginx restart`
- configure Nginx with `sudo vim /etc/nginx/nginx.conf` as follows:

      user nginx;
      worker_processes 1;
      error_log /var/log/nginx/error.log warn;
      pid /var/run/nginx.pid;

      events {
          use epoll;
          worker_connections 1024;
      }

      http {
          include /etc/nginx/mime.types;
          default_type application/octet-stream;
          log_format main '$remote_addr - $remote_user [$time_local] "$request" '
                          '$status $body_bytes_sent "$http_referer" '
                          '"$http_user_agent" "$http_x_forwarded_for"';
          access_log /var/log/nginx/access.log main;
          sendfile on;
          #tcp_nopush on;
          keepalive_timeout 65;
          gzip on;
          include /etc/nginx/conf.d/*.conf;

          upstream backend {
              server YOUR_MACHINE_IP:8001;
              server YOUR_MACHINE_IP:8002;
              server YOUR_MACHINE_IP:8003;
              server YOUR_MACHINE_IP:8004;
          }

          server {
              listen 8008;
              server_name YOUR_MACHINE_IP;
              access_log /var/log/nginx/access.log main;
              charset utf-8;
              gzip on;
              gzip_types text/plain application/x-javascript text/css text/javascript application/x-httpd-php application/json text/json image/jpeg image/gif image/png application/octet-stream;

              # set project uwsgi path
              location /cv/ {
                  include uwsgi_params; # import an Nginx module to communicate with uWSGI
                  uwsgi_connect_timeout 30;
                  uwsgi_pass unix:/opt/project_teacher/script/uwsgi.sock; # set uwsgi's sock file, so all dynamical requests will be sent to uwsgi_pass
                  proxy_connect_timeout 300;
                  proxy_buffering off;
                  proxy_pass http://backend;
              }

              location /static/ {
                  alias /data/lucasxu/Projects/XCloud/cv/static/;
                  index index.html index.htm;
              }
          }
      }

Note: suppose you start 4 deep learning services on ports 8001 to 8004, with CUDA_VISIBLE_DEVICES set to 0 to 3, respectively. With the configuration above, clients send all requests to YOUR_MACHINE_IP:8008/cv/, and Nginx distributes them across the four backend services, so concurrent requests from clients are handled easily.

- restart Nginx: `sudo /etc/init.d/nginx restart`, then enjoy it!
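The note above assumes four services, one per GPU, listening on ports 8001 to 8004. As a sketch of how those processes might be started, the helper below builds one Gunicorn command per GPU, reusing the `gunicorn XCloud.wsgi -b ... --timeout=200` invocation shown earlier. The function names are hypothetical, and pinning one GPU per process via `CUDA_VISIBLE_DEVICES` is an assumption based on that note.

```python
import os
import subprocess
from typing import Dict, List, Tuple

LaunchPlan = List[Tuple[Dict[str, str], List[str]]]


def build_launch_plan(host: str, first_port: int = 8001, num_gpus: int = 4) -> LaunchPlan:
    """Return (extra_env, argv) pairs: one Gunicorn process per GPU."""
    plan: LaunchPlan = []
    for gpu in range(num_gpus):
        # Each process only sees its own GPU and gets its own port.
        extra_env = {"CUDA_VISIBLE_DEVICES": str(gpu)}
        argv = ["gunicorn", "XCloud.wsgi", "-b", f"{host}:{first_port + gpu}",
                "--timeout=200"]
        plan.append((extra_env, argv))
    return plan


def launch(plan: LaunchPlan) -> List[subprocess.Popen]:
    """Start every process in the plan (requires Gunicorn to be installed)."""
    procs = []
    for extra_env, argv in plan:
        env = {**os.environ, **extra_env}  # inherit the current environment
        procs.append(subprocess.Popen(argv, env=env))
    return procs


if __name__ == "__main__":
    # Print the plan only; call launch(plan) to actually start the services.
    for extra_env, argv in build_launch_plan("YOUR_MACHINE_IP"):
        print(extra_env, " ".join(argv))
```

The resulting addresses correspond to the four `server` entries in the `upstream backend` block.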

In the near future, I will explore more methods for machine learning in production and share related articles in ML_IN_PRODUCTION.md or on my blog.

For stress testing, please refer to API_STRESS_TESTING_WITH_JMETER.md for more details!

The API accepts three types of image input: a file uploaded through a web form, a base64-encoded image, and an image URL (such as Swift).
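As a sketch of these three input types from the client side, the helpers below build the keyword arguments one might pass to `requests.post`. The `image` field name is the one used in this README's examples; the helper names are hypothetical, and how the server tells the three types apart is not covered here.

```python
import base64
from typing import Any, Dict


def upload_payload(filename: str, raw: bytes) -> Dict[str, Any]:
    """Web form upload: multipart file under the `image` key."""
    return {"files": {"image": (filename, raw)}}


def base64_payload(raw: bytes) -> Dict[str, Any]:
    """Base64 image: encoded bytes in the `image` form field."""
    return {"data": {"image": base64.b64encode(raw)}}


def url_payload(image_url: str) -> Dict[str, Any]:
    """Image URL: the URL itself in the `image` form field."""
    return {"data": {"image": image_url}}


# Usage (requires the `requests` package and a running server):
#   import requests
#   with open("/path/to/test.jpg", "rb") as f:
#       raw = f.read()
#   resp = requests.post("http://YOUR_MACHINE_IP:8001/cv/xxxrec",
#                        **base64_payload(raw))
#   print(resp.json())
```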

- Bash

      curl -F "image=@111.jpg" YOUR_MACHINE_IP:8001/cv/xxxrec
      curl -d "image=https://xxx.com/file/test.jpg" YOUR_MACHINE_IP:8001/cv/xxxrec

- Python

      import base64

      import requests

      # requests needs an explicit scheme in the URL
      req_url = 'http://YOUR_MACHINE_IP:8001/cv/xxxrec'

      # read the image and encode it as base64
      with open("/path/to/test.jpg", mode='rb') as f:
          image = base64.b64encode(f.read())

      # send the base64 data in the `image` form field
      resp = requests.post(req_url, data={
          'image': image,
      })
      print(resp.json())

- @LucasXU: system/algorithm/deployment/report

- @Yummy: front-end developer

- @reallinfo: logo design

- XCloud is freely accessible to everyone; for technical support, you can email me at xulu0620@gmail.com.

- Please make sure your machine is equipped with a powerful GPU.

- For XCloud in Java, please refer to CVLH for more details!

- Technical details can be read from our Technical Report.

- Add docker support

- Add NVIDIA DALI support

- Add NVIDIA Apex support

- Add Quantization support to accelerate deep models

- Add RPC API support

If you use our codebase or models in your research, please cite this project. We have released a Technical Report about this project.

    @article{xu2019xcloud,
      title={XCloud: Design and Implementation of AI Cloud Platform with RESTful API Service},
      author={Xu, Lu and Wang, Yating},
      journal={arXiv preprint arXiv:1912.10344},
      year={2019}
    }