Kanva: Knowledge-Aware laNguage-and-Vision Assistant, by the KaLM team.

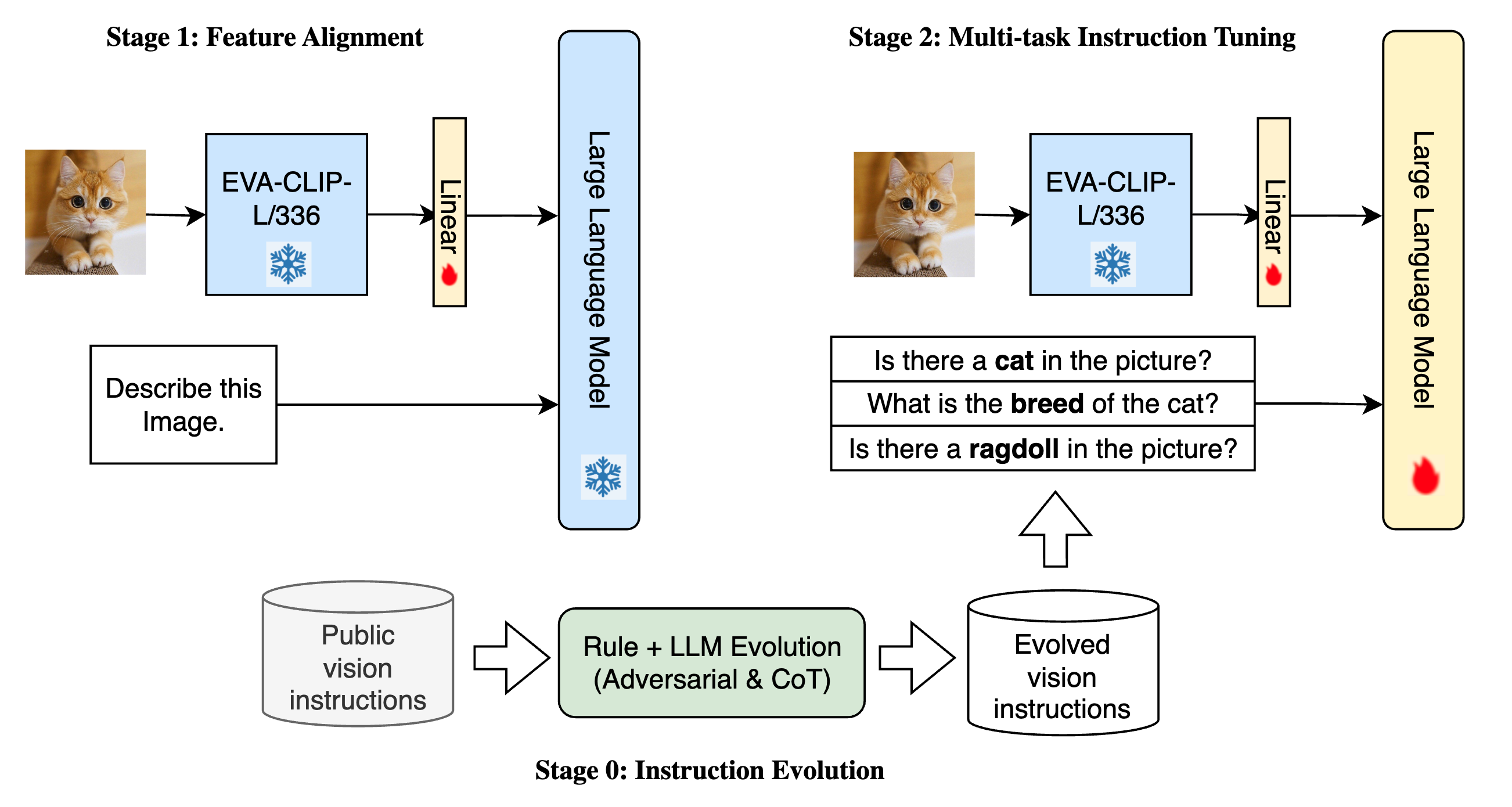

The quality of instructions is pivotal for instruction-tuned vision-language models. We propose a mechanism that draws on the world knowledge in LLMs to evolve visual instructions and thereby improve the quality of instruction datasets. Using this mechanism, we construct a dataset evolved from existing public resources.

We show that applying this dataset to existing model architectures and training recipes significantly improves their zero-shot capabilities. Trained on the evolved dataset with off-the-shelf language models, our new model series, Kanva, achieves markedly higher results on the MME and MMBench benchmarks than baseline models such as LLaVA.

As demonstrated in the figure, we simply adopt the LLaVA model's architecture and training recipe. The models are trained on public vision-language instruction data, evolved with our rule-based and LLM-based instruction evolution procedure.
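The two-stage evolution described above can be illustrated with a minimal sketch. This is not the Kanva pipeline itself: the `Instruction` fields, the cleaning rules, the prompt wording, and the `llm` callable are all hypothetical stand-ins, and the choice to enrich the answer rather than the question is an assumption.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Instruction:
    """One visual instruction sample (hypothetical schema)."""
    image_id: str
    question: str
    answer: str


def rule_based_clean(sample: Instruction) -> Optional[Instruction]:
    """Rule stage (assumed rules): normalize whitespace, drop empty samples."""
    question = " ".join(sample.question.split())
    answer = " ".join(sample.answer.split())
    if not question or not answer:
        return None  # degenerate sample, discard
    return Instruction(sample.image_id, question, answer)


def llm_based_evolve(sample: Instruction, llm: Callable[[str], str]) -> Instruction:
    """LLM stage (assumed prompt): ask an LLM to enrich the answer."""
    prompt = (
        "Rewrite the answer so that it is more informative, using relevant "
        "background knowledge, without contradicting the original.\n"
        f"Question: {sample.question}\n"
        f"Answer: {sample.answer}"
    )
    evolved = llm(prompt).strip()
    # Keep the original answer if the LLM returns nothing usable.
    return Instruction(sample.image_id, sample.question, evolved or sample.answer)


def evolve_dataset(
    samples: List[Instruction], llm: Callable[[str], str]
) -> List[Instruction]:
    """Apply the rule stage, then the LLM stage, to every surviving sample."""
    evolved = []
    for sample in samples:
        cleaned = rule_based_clean(sample)
        if cleaned is not None:
            evolved.append(llm_based_evolve(cleaned, llm))
    return evolved
```

Passing the LLM as a plain callable keeps the sketch independent of any particular model or API; a stub function that returns a fixed string is enough to exercise it.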

| Model | Vision | Language | Parameters |
|---|---|---|---|
| Kanva-7B | EVA-CLIP-L/336 | Baichuan2-7B | 7.2B |
| Kanva-14B | EVA-CLIP-L/336 | Qwen-14B | 14.2B |
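For readers unfamiliar with the LLaVA-style design that Kanva adopts, the sketch below shows the general data flow in pure Python: patch features from the vision encoder are projected into the language model's embedding space and joined with the text token embeddings. The toy dimensions, the random weights, the single linear projection, and the choice to place visual tokens before the text are illustrative assumptions, not Kanva's actual configuration.

```python
import random
from typing import List

Matrix = List[List[float]]


def random_matrix(rows: int, cols: int, rng: random.Random) -> Matrix:
    """Small random matrix standing in for real features or weights."""
    return [[rng.gauss(0.0, 0.02) for _ in range(cols)] for _ in range(rows)]


def project(features: Matrix, weight: Matrix) -> Matrix:
    """Linear projection: (n, d_in) x (d_in, d_out) -> (n, d_out)."""
    d_out = len(weight[0])
    return [
        [sum(f * weight[k][j] for k, f in enumerate(row)) for j in range(d_out)]
        for row in features
    ]


def build_llm_input(
    image_features: Matrix, text_embeddings: Matrix, weight: Matrix
) -> Matrix:
    """Place projected visual tokens ahead of the text token embeddings."""
    return project(image_features, weight) + text_embeddings


# Toy sizes only; real encoders and language models are far larger.
rng = random.Random(0)
num_patches, d_vision, d_llm, num_text_tokens = 4, 8, 16, 5

image_features = random_matrix(num_patches, d_vision, rng)    # vision encoder output
projector = random_matrix(d_vision, d_llm, rng)               # trainable connector
text_embeddings = random_matrix(num_text_tokens, d_llm, rng)  # embedded instruction

sequence = build_llm_input(image_features, text_embeddings, projector)
print(len(sequence), len(sequence[0]))  # 9 16
```

The resulting sequence has one row per visual or text token, each of width `d_llm`, which is the form a decoder-only language model consumes.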

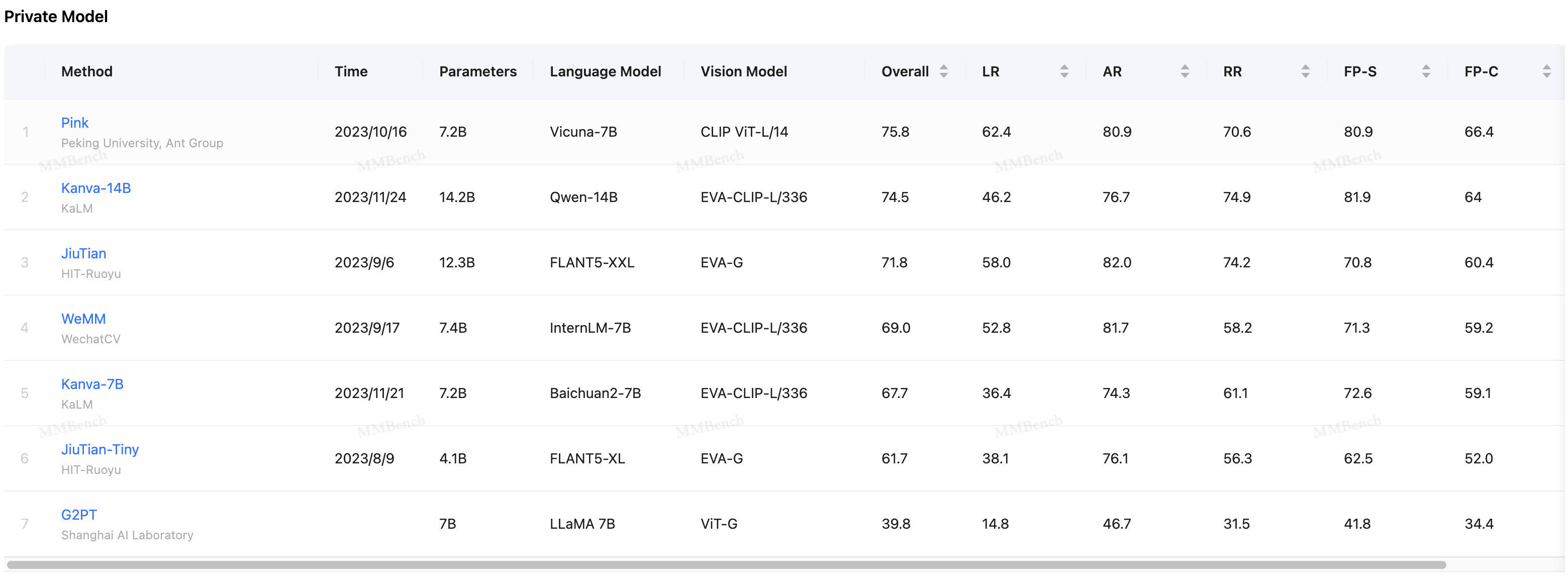

We benchmark two models in the Kanva series, Kanva-14B and Kanva-7B, trained with different language components. The results are reported below.

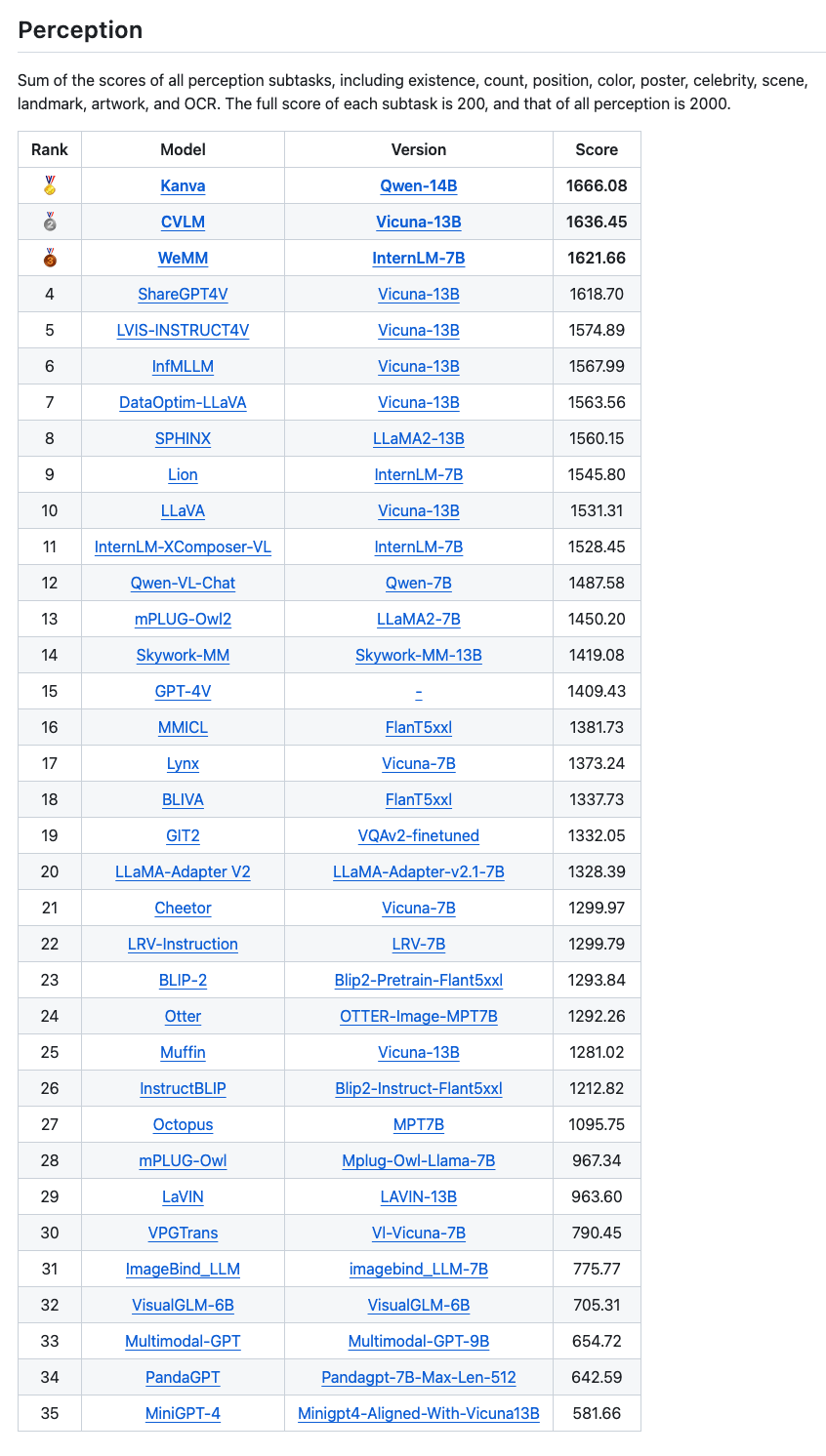

Kanva achieved a perception score of 1666.08, ranking first on the full MME benchmark as of 2023-11-24.

Kanva-14B achieved 74.5 on MMBench-test, ranking second among private models as of 2023-11-24.