The official implementation of the ICCV 2023 paper Robust Object Modeling for Visual Tracking

[Models and Raw Results] (Google Drive) [Models and Raw Results] (Baidu Netdisk: romt)

[September 21, 2023]

- We release Models and Raw Results of ROMTrack.

- We refine README for more details.

[August 6, 2023]

- We release Code of ROMTrack.

[July 14, 2023]

- ROMTrack is accepted to ICCV 2023.

- Code for ROMTrack

- Model Zoo and Raw Results

- Refine README

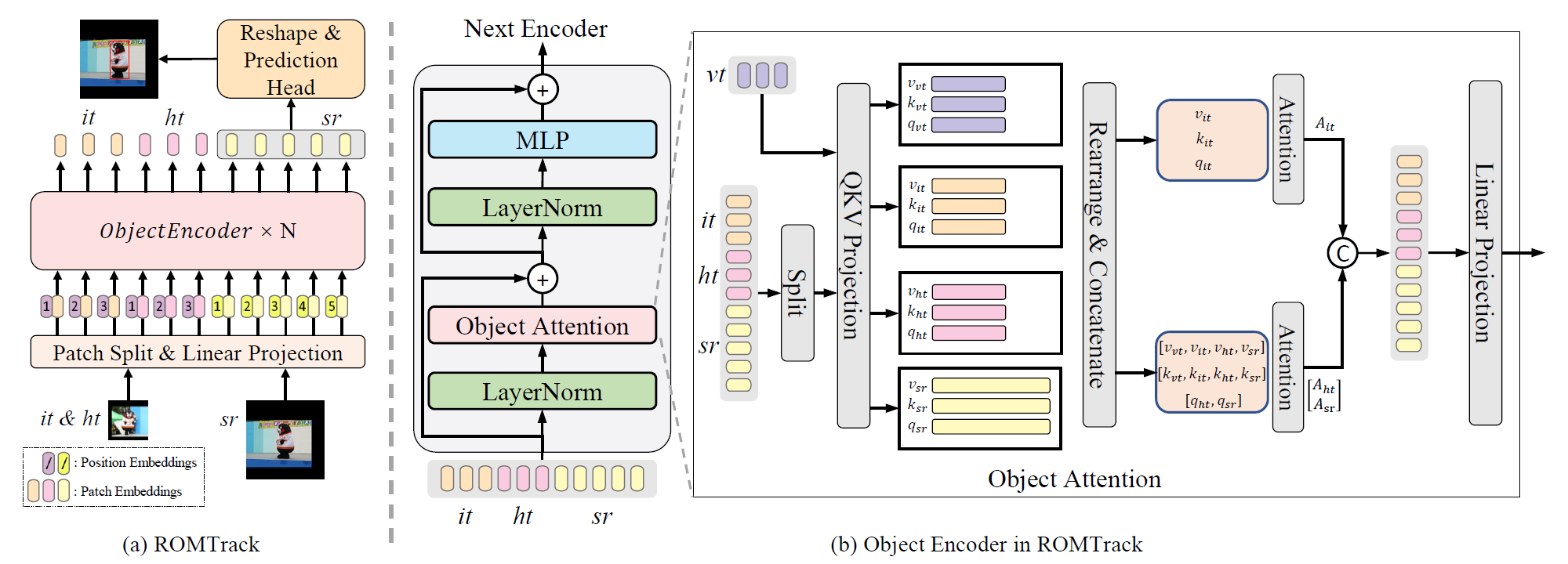

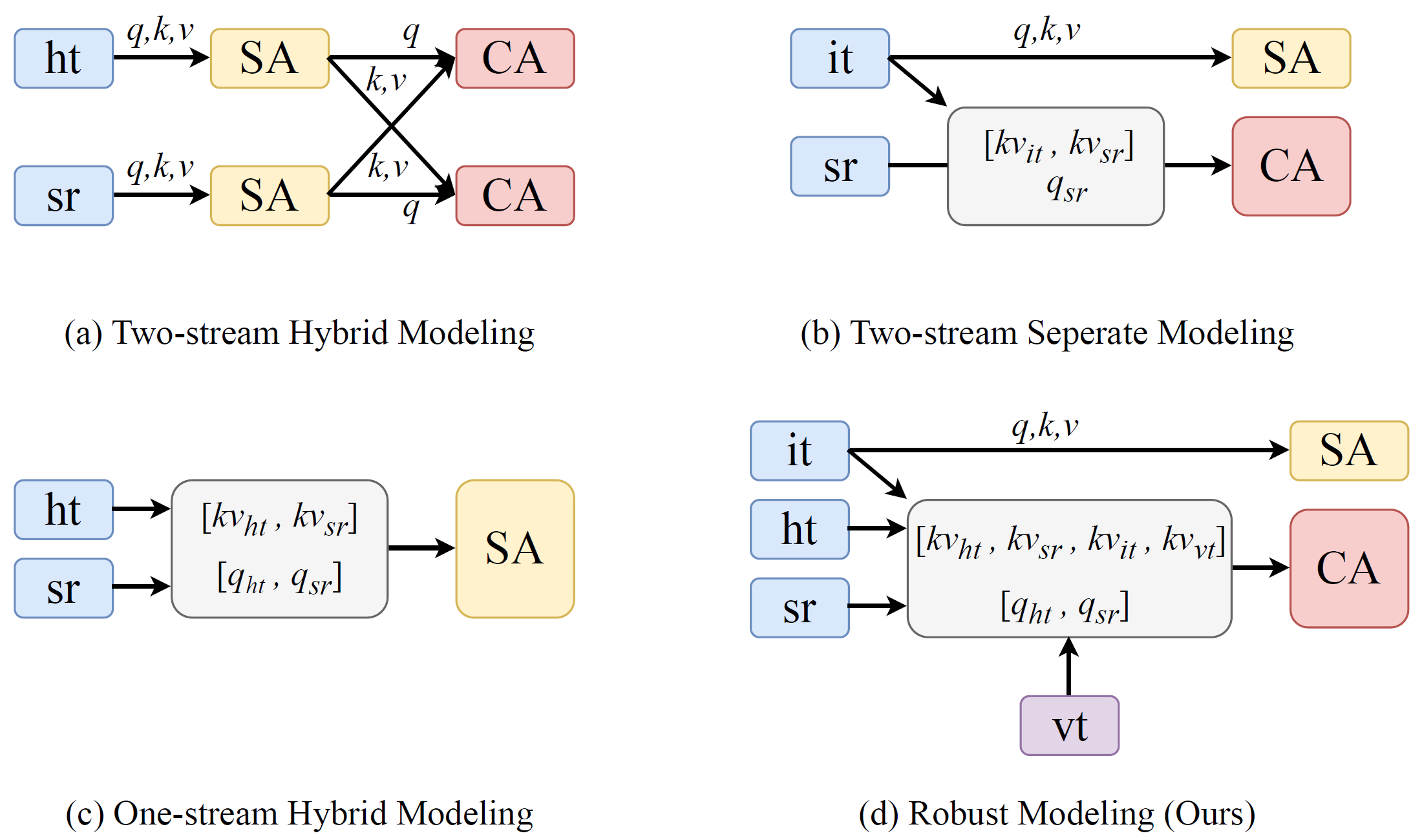

- ROMTrack employs a robust object modeling design that keeps the inherent information of the target template while simultaneously enabling mutual feature matching between the target and the search region.
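One way to picture this design is as an attention mask over three token groups. The sketch below is illustrative only and is not taken from the repository: the function name, the token ordering, and the exact visibility rules are assumptions made for the example. It lets "inherent" template tokens attend only to each other (so their features stay free of search-region content), while "hybrid" template and search tokens attend to everything (mutual matching).

```python
def build_attention_mask(n_inherent, n_hybrid, n_search):
    """Return mask[q][k] == True when query token q may attend to key token k.

    Assumed token order (hypothetical, for illustration):
    inherent template, then hybrid template, then search region.
    """
    total = n_inherent + n_hybrid + n_search
    mask = []
    for q in range(total):
        if q < n_inherent:
            # Inherent template tokens only see each other, so their
            # features are never mixed with search-region content.
            row = [k < n_inherent for k in range(total)]
        else:
            # Hybrid template and search tokens see every token, which
            # allows mutual matching between target and search region.
            row = [True] * total
        mask.append(row)
    return mask


if __name__ == "__main__":
    # Print a small mask: "x" = attention allowed, "." = blocked.
    for row in build_attention_mask(2, 2, 3):
        print("".join("x" if allowed else "." for allowed in row))
```

Please refer to the paper for the actual formulation; this mask only conveys the general idea of keeping one copy of the template isolated.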

Use Anaconda:

conda create -n romtrack python=3.6

conda activate romtrack

bash install_pytorch17.sh
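After the install script finishes, a quick sanity check of the environment can save time later. The snippet below is a minimal sketch, not part of the repository: `describe_environment` is a hypothetical helper, and the expectation of a PyTorch 1.7 build is only inferred from the script name `install_pytorch17.sh`.

```python
import importlib.util
import sys


def describe_environment():
    """Collect basic facts about the active Python environment."""
    info = {
        "python": "{}.{}".format(*sys.version_info[:2]),
        "torch_installed": importlib.util.find_spec("torch") is not None,
        "torch_version": None,
        "cuda_available": None,
    }
    if info["torch_installed"]:
        # Import lazily so the check still works when torch is missing.
        import torch

        info["torch_version"] = torch.__version__
        info["cuda_available"] = torch.cuda.is_available()
    return info


if __name__ == "__main__":
    for key, value in describe_environment().items():
        print("{}: {}".format(key, value))
```

Run it inside the activated `romtrack` environment; if `torch_installed` is `False`, the install script probably did not complete.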

Put the tracking datasets in ./data. It should look like:

    ${ROMTrack_ROOT}
     -- data
         -- lasot
             |-- airplane
             |-- basketball
             |-- bear
             ...
         -- lasot_ext
             |-- atv
             |-- badminton
             |-- cosplay
             ...
         -- got10k
             |-- test
             |-- train
             |-- val
         -- coco
             |-- annotations
             |-- train2017
         -- trackingnet
             |-- TRAIN_0
             |-- TRAIN_1
             ...
             |-- TRAIN_11
             |-- TEST
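The layout above can be verified before training with a short script. This is a minimal sketch, not part of the repository: `EXPECTED_LAYOUT` and `find_missing_dirs` are hypothetical names, and only the directories spelled out in the listing are checked (the entries hidden behind `...` are not guessed).

```python
from pathlib import Path

# Sub-directories copied from the layout listed above.
# Entries elided with "..." in the listing are intentionally not included.
EXPECTED_LAYOUT = {
    "lasot": ["airplane", "basketball", "bear"],
    "lasot_ext": ["atv", "badminton", "cosplay"],
    "got10k": ["test", "train", "val"],
    "coco": ["annotations", "train2017"],
    "trackingnet": ["TRAIN_0", "TRAIN_1", "TRAIN_11", "TEST"],
}


def find_missing_dirs(data_dir):
    """Return the expected 'dataset/subdir' entries that are not directories."""
    root = Path(data_dir)
    missing = []
    for dataset, subdirs in EXPECTED_LAYOUT.items():
        for sub in subdirs:
            if not (root / dataset / sub).is_dir():
                missing.append("{}/{}".format(dataset, sub))
    return missing


if __name__ == "__main__":
    missing = find_missing_dirs("./data")
    if missing:
        print("Missing directories under ./data:")
        for entry in missing:
            print("  " + entry)
    else:
        print("All listed dataset directories were found.")
```

If you only plan to use a subset of the datasets, trim `EXPECTED_LAYOUT` accordingly.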

Run the following command to set paths for this project

python tracking/create_default_local_file.py --workspace_dir . --data_dir ./data --save_dir .

After running this command, you can also modify paths by editing these two files

lib/train/admin/local.py # paths about training

lib/test/evaluation/local.py # paths about testing

Train with multiple GPUs using DDP. Other training settings can be found in tracking/train_romtrack.sh:

bash tracking/train_romtrack.sh

- LaSOT/LaSOT_ext/GOT10k-test/TrackingNet/OTB100/UAV123/NFS30. More details of the test settings can be found in tracking/test_romtrack.sh

bash tracking/test_romtrack.sh

python tracking/profile_model.py --config="baseline_stage1"

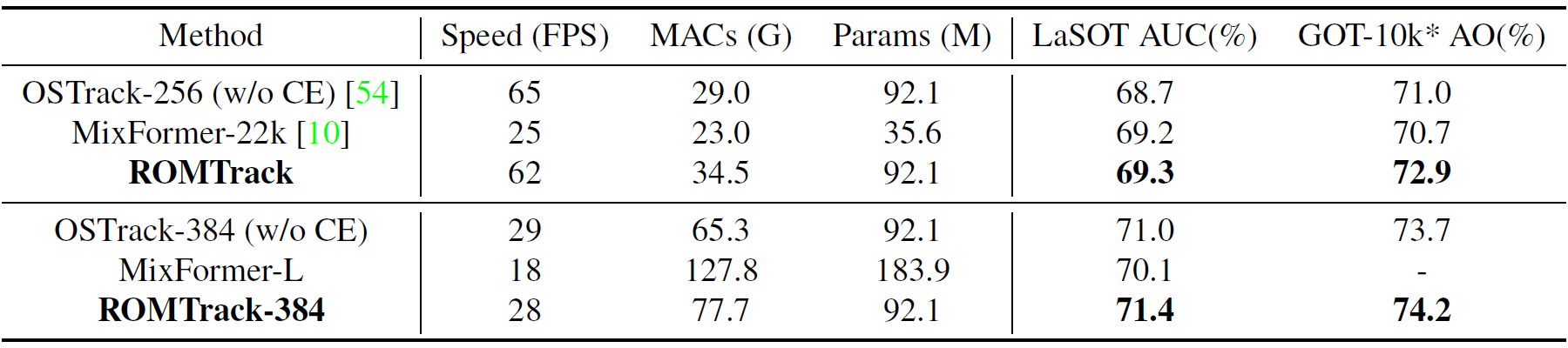

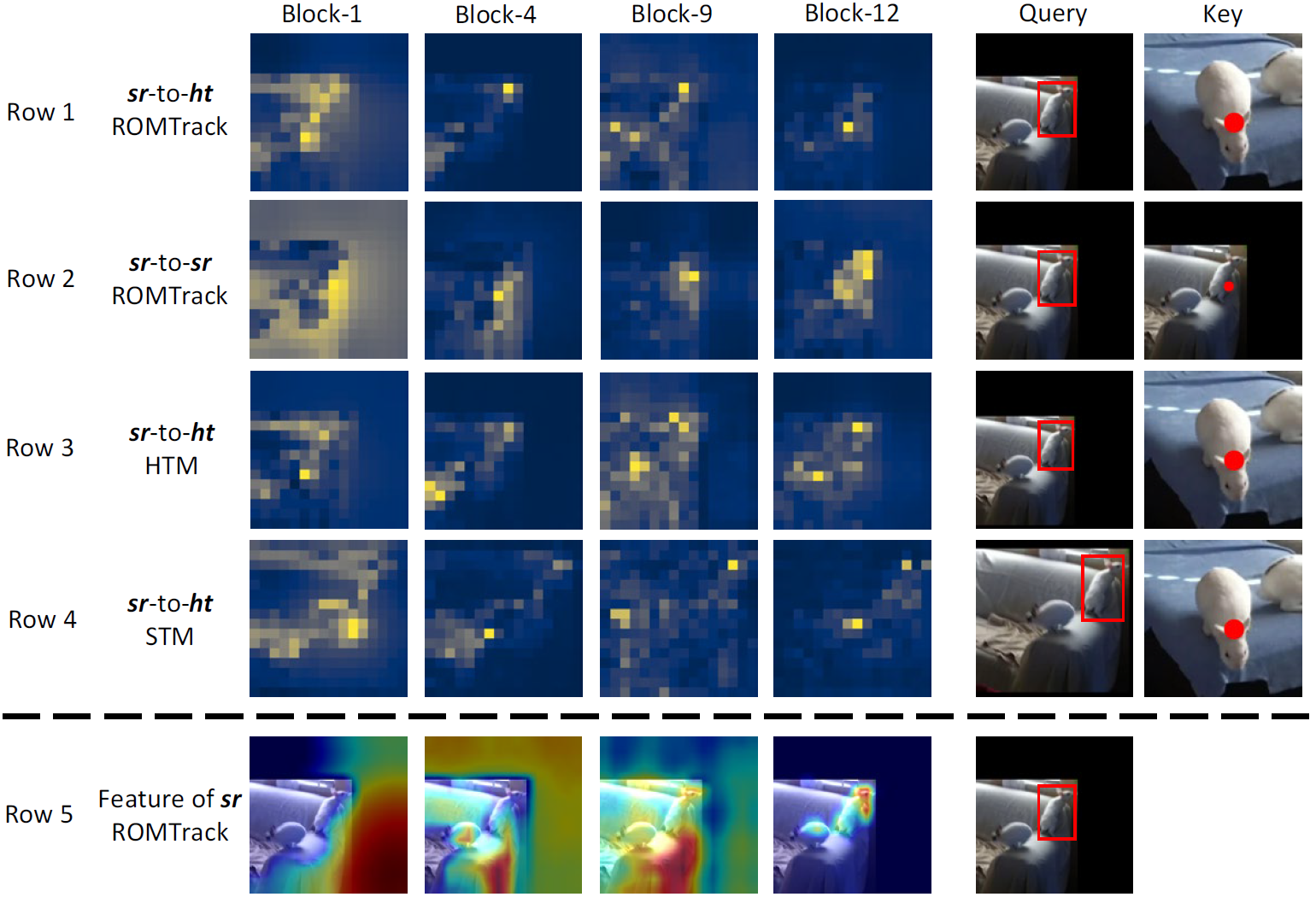

We provide attention maps and feature maps for several sequences on LaSOT. Detailed analysis can be found in our paper.

- Thanks to the STARK, PyTracking and MixFormer libraries, which helped us quickly implement our ideas and test our tracker's performance.

- Our implementation of the ViT is modified from the Timm repo.

If our work is useful for your research, please feel free to star :star: the repository and cite our paper:

    @article{DBLP:journals/corr/abs-2308-05140,
      author  = {Yidong Cai and
                 Jie Liu and
                 Jie Tang and
                 Gangshan Wu},
      title   = {Robust Object Modeling for Visual Tracking},
      journal = {CoRR},
      volume  = {abs/2308.05140},
      year    = {2023}
    }