reinforcement_learning

Experiments with reinforcement learning.

Project Organization

├── LICENSE
├── Makefile           <- Makefile with commands like `make data` or `make train`
├── README.md          <- The top-level README for developers using this project.
├── data
│   ├── external       <- Data from third party sources.
│   ├── interim        <- Intermediate data that has been transformed.
│   ├── processed      <- The final, canonical data sets for modeling.
│   └── raw            <- The original, immutable data dump.
│
├── docs               <- A default Sphinx project; see sphinx-doc.org for details
│
├── models             <- Trained and serialized models, model predictions, or model summaries
│
├── notebooks          <- Jupyter notebooks. Naming convention is a number (for ordering),
│                         the creator's initials, and a short `-` delimited description, e.g.
│                         `1.0-jqp-initial-data-exploration`.
│
├── references         <- Data dictionaries, manuals, and all other explanatory materials.
│
├── reports            <- Generated analysis as HTML, PDF, LaTeX, etc.
│   └── figures        <- Generated graphics and figures to be used in reporting
│
├── requirements.txt   <- The requirements file for reproducing the analysis environment, e.g.
│                         generated with `pip freeze > requirements.txt`
│
├── src                <- Source code for use in this project.
│   ├── __init__.py    <- Makes src a Python module
│   │
│   ├── data           <- Scripts to download or generate data
│   │   └── make_dataset.py
│   │
│   ├── features       <- Scripts to turn raw data into features for modeling
│   │   └── build_features.py
│   │
│   ├── models         <- Scripts to train models and then use trained models to make
│   │   │                 predictions
│   │   ├── predict_model.py
│   │   └── train_model.py
│   │
│   └── visualization  <- Scripts to create exploratory and results oriented visualizations
│       └── visualize.py
│
└── tox.ini            <- tox file with settings for running tox; see tox.testrun.org
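
The notebook naming convention described in the tree can be checked mechanically. The following is a minimal sketch, not part of this repo: the helper name `parse_notebook_name`, the exact regular expression, and the optional `.ipynb` suffix are all assumptions made for illustration.

```python
import re

# Assumed pattern: <number>-<initials>-<dash-delimited-description>,
# with an optional ".ipynb" suffix. The README only gives one example name,
# so the exact character classes here are a guess.
NOTEBOOK_NAME = re.compile(
    r"^(?P<order>\d+(?:\.\d+)?)"
    r"-(?P<initials>[a-z]+)"
    r"-(?P<description>[a-z0-9]+(?:-[a-z0-9]+)*)"
    r"(?:\.ipynb)?$"
)


def parse_notebook_name(name):
    """Return (order, initials, description), or None if the name does not match."""
    match = NOTEBOOK_NAME.match(name)
    if match is None:
        return None
    return (
        match.group("order"),
        match.group("initials"),
        match.group("description"),
    )


if __name__ == "__main__":
    # The example name from the tree above.
    print(parse_notebook_name("1.0-jqp-initial-data-exploration"))
```

A check like this could run over the `notebooks` folder before committing, so that ordering by file name stays meaningful.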

Requirements

- Docker

- docker-compose

Setup

- Install docker and docker-compose for your platfrom

- Clone this repo and run

./start.shin the project root folder. This starts a docker container with correct port configuration. - Run

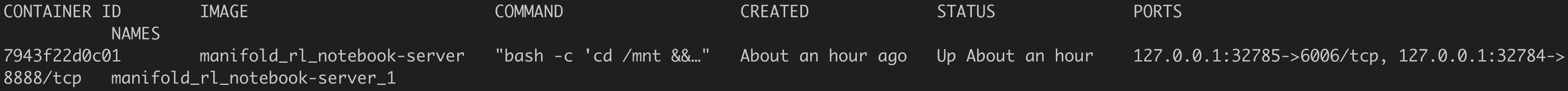

docker psat command line. This prints the port forwarding information. - The output looks something like

- Go to https://localhost/port_number where port_number is where 8888 is mapped to. In the above example, it is 32784.

- You can access the notebooks from that url.

- For the curious, the tensorboard port (6006) is also mapped to a port on your local machine (32785 in the above example). So if tensorboard is running inside your container, you can access it at https://localhost:32785

- If you want to exec into the container and run code there, run `docker exec -it container_id /bin/bash`, where container_id is taken from the output of the `docker ps` command.
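
Reading the mapped port off the `docker ps` output by eye is easy to get wrong, so the lookup described in the steps above can be sketched in Python. This is an illustration only: the helper name `host_port` is hypothetical, and the sample string assumes the usual `host_ip:host_port->container_port/proto` layout of the PORTS column, reusing the port numbers from the example above.

```python
import re

# One mapping as printed in the PORTS column, e.g. "0.0.0.0:32784->8888/tcp".
# The host port is the run of digits immediately before "->".
PORT_MAPPING = re.compile(r"(?P<host>\d+)->(?P<container>\d+)/(?:tcp|udp)")


def host_port(ports_column, container_port):
    """Return the host port mapped to container_port, or None if it is not published."""
    for match in PORT_MAPPING.finditer(ports_column):
        if int(match.group("container")) == container_port:
            return int(match.group("host"))
    return None


if __name__ == "__main__":
    # Illustrative PORTS value; copy the real one from your own `docker ps` output.
    ports = "0.0.0.0:32785->6006/tcp, 0.0.0.0:32784->8888/tcp"
    print("notebooks:   localhost:%s" % host_port(ports, 8888))
    print("tensorboard: localhost:%s" % host_port(ports, 6006))
```

The function returns `None` when a port is not published, which is a quick way to notice that the container was started without the expected port configuration.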

Project based on the cookiecutter data science project template. #cookiecutterdatascience