Semantically Contrastive Learning for Low-light Image Enhancement

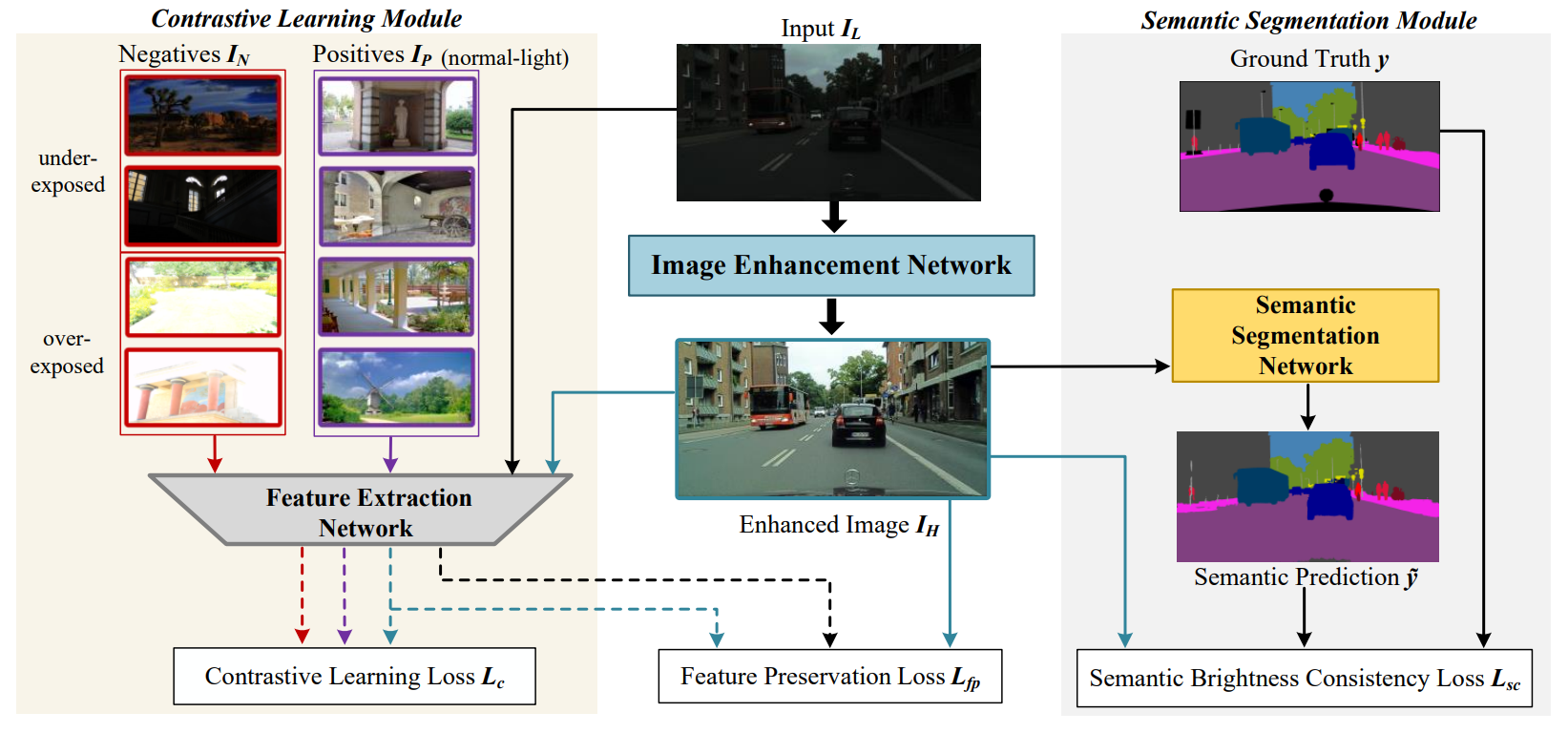

We propose an effective semantically contrastive learning paradigm for low-light image enhancement (LLE), named SCL-LLE. Going beyond existing LLE approaches, it casts image enhancement as multi-task joint learning, in which LLE is converted into three constraints (contrastive learning, semantic brightness consistency, and feature preservation) that simultaneously ensure exposure, texture, and color consistency. SCL-LLE allows the LLE model to learn from unpaired positives (normal-light images) and negatives (over/underexposed images), and lets it interact with scene semantics to regularize the image enhancement network. This interaction between high-level semantic knowledge and the low-level signal prior has seldom been investigated in previous methods.
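To make the positive/negative idea concrete, here is a minimal sketch of a generic triplet-style margin loss in plain Python. It is an assumption for illustration only, not the loss used in SCL-LLE: the function names, the Euclidean distance, the margin value, and the toy feature vectors are all hypothetical. The enhanced image acts as the anchor, which is pulled toward a normal-light sample and pushed away from an over/underexposed one.

```python
import math


def euclidean(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def triplet_contrastive_loss(anchor, positive, negative, margin=0.3):
    """Generic triplet-style margin loss (illustrative, not the SCL-LLE loss).

    anchor:   features of the enhanced image
    positive: features of a normal-light image
    negative: features of an over/underexposed image
    """
    d_pos = euclidean(anchor, positive)
    d_neg = euclidean(anchor, negative)
    # The loss is zero once the anchor is closer to the positive than to
    # the negative by at least `margin`.
    return max(d_pos - d_neg + margin, 0.0)


# Toy feature vectors, made up for illustration only.
enhanced = [0.5, 0.5]
normal_light = [0.5, 0.6]
underexposed = [0.1, 0.1]
loss = triplet_contrastive_loss(enhanced, normal_light, underexposed)
```

In this toy case the enhanced features already sit much closer to the normal-light sample than to the underexposed one, so the term contributes nothing. In a real training setup the features would come from a network and the loss would be computed on tensors.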

Network

- Overall architecture of our proposed SCL-LLE. It includes a low-light image enhancement network, a contrastive learning module and a semantic segmentation module.

Experiment

PyTorch implementation of SCL-LLE

Requirements

- Python 3.7

- PyTorch 1.4.0

- opencv

- torchvision

- numpy

- pillow

- scikit-learn

- tqdm

- matplotlib

- visdom

SCL-LLE does not need any special configuration. A basic environment with the packages above is sufficient.
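As a quick sanity check, the sketch below reports which of the listed packages cannot be found in the current environment. The helper name `missing_packages` and the `REQUIRED_MODULES` table are hypothetical and not part of the repository. The import names (for example `cv2` for opencv and `PIL` for pillow) are the standard ones for these packages. Versions are not checked.

```python
import importlib.util

# Display name -> import name for the packages listed above.
REQUIRED_MODULES = {
    "PyTorch": "torch",
    "opencv": "cv2",
    "torchvision": "torchvision",
    "numpy": "numpy",
    "pillow": "PIL",
    "scikit-learn": "sklearn",
    "tqdm": "tqdm",
    "matplotlib": "matplotlib",
    "visdom": "visdom",
}


def missing_packages(modules=REQUIRED_MODULES):
    """Return the display names of packages that cannot be located."""
    return [
        label
        for label, module in modules.items()
        if importlib.util.find_spec(module) is None
    ]


if __name__ == "__main__":
    missing = missing_packages()
    print("Missing packages:", ", ".join(missing) if missing else "none")
```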

Folder structure

The following shows the basic folder structure.

├── datasets
│   ├── data
│   │   ├── cityscapes
│   │   └── Contrast
│   ├── test_data
│   ├── cityscapes.py
│   └── util.py
├── network # semantic segmentation model
├── lowlight_test.py # low-light image enhancement testing code
├── train.py # training code
├── lowlight_model.py
├── Myloss.py
└── checkpoints
    ├── best_deeplabv3plus_mobilenet_cityscapes_os16.pth # A pre-trained semantic segmentation model
    └── LLE_model.pth # A pre-trained SCL-LLE model

Test

- cd SCL-LLE

python lowlight_test.py

The script processes the images in the sub-folders of the "test_data" folder and creates a new "result" folder inside "datasets". The enhanced images are saved in the "result" folder.
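The input-to-output mapping described above can be sketched as follows. This is not the code in lowlight_test.py: the helper `plan_outputs`, the set of image extensions, and the assumption that "result" mirrors the sub-folder names of "test_data" are all hypothetical. The sketch only lists which files would be read and where the enhanced copies would go.

```python
from pathlib import Path

# Assumed image extensions; the actual script may accept a different set.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".bmp"}


def plan_outputs(datasets_dir="datasets"):
    """Pair each image in test_data/<sub-folder>/ with a path under result/."""
    test_root = Path(datasets_dir) / "test_data"
    result_root = Path(datasets_dir) / "result"
    pairs = []
    for src in sorted(test_root.glob("*/*")):
        if src.is_file() and src.suffix.lower() in IMAGE_SUFFIXES:
            # Keep the sub-folder name so results stay grouped like the inputs.
            pairs.append((src, result_root / src.relative_to(test_root)))
    return pairs


if __name__ == "__main__":
    for src, dst in plan_outputs():
        print(src, "->", dst)
```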

Train

- cd SCL-LLE

- download the Cityscapes dataset

- download the Cityscapes training data (Google Drive) and the contrast training data (Google Drive)

- unzip the downloads, then put the "train" folder into "datasets/data/cityscapes/leftImg8bit" and the "Contrast" folder into "datasets/data"

- download the pre-trained semantic segmentation model and put it in the "checkpoints" folder

python train.py
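Before launching training, it can help to confirm that the data and checkpoint ended up where the steps above place them. The sketch below is a hypothetical helper, not part of the repository. It checks only the three locations named in this README and would need to be run from the SCL-LLE directory.

```python
from pathlib import Path

# Locations taken from the training steps and folder structure in this README.
EXPECTED_PATHS = [
    "datasets/data/cityscapes/leftImg8bit/train",
    "datasets/data/Contrast",
    "checkpoints/best_deeplabv3plus_mobilenet_cityscapes_os16.pth",
]


def missing_training_paths(repo_root="."):
    """Return the expected paths that do not exist under repo_root."""
    root = Path(repo_root)
    return [p for p in EXPECTED_PATHS if not (root / p).exists()]


if __name__ == "__main__":
    missing = missing_training_paths()
    if missing:
        print("Missing before training:")
        for p in missing:
            print("  " + p)
    else:
        print("Training layout looks complete.")
```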

Contact

If you have any questions, please contact liling@nuaa.edu.cn