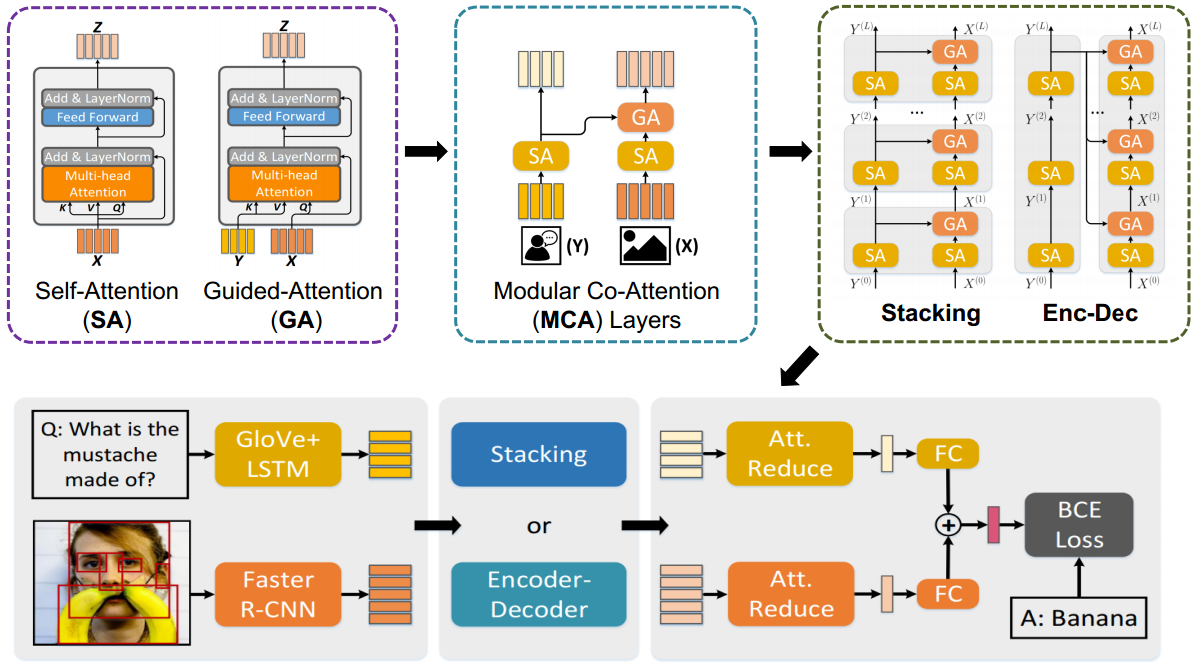

This repository contains the PyTorch implementation of MCAN for VQA, which won the VQA Challenge 2019. With an ensemble of 27 models, we achieved an overall accuracy of 75.23% and 75.26% on the test-std and test-challenge splits, respectively. See our slides for details.

Using the commonly adopted bottom-up-attention visual features, a single MCAN model delivers 70.70% (small model) and 70.93% (large model) overall accuracy on the test-dev split of the VQA-v2 dataset, significantly outperforming the existing state of the art. Please check our paper for details.

- Only download and use the validation set

- Edit core to allow use on CPU

- Add script for validation

The image features are extracted using the bottom-up-attention strategy, with each image represented as a dynamic number (from 10 to 100) of 2048-D features. We store the features for each image in a .npz file. Download the extracted features from OneDrive or BaiduYun. Download the val2014.tar.gz package, which contains the features for the val images of VQA-v2, and place it as follows:

    |-- datasets
        |-- coco_extract
        |  |-- val2014.tar.gz
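
Once the archive is unpacked, it can be useful to confirm that a feature file matches the description above. The following is a minimal sketch, not the repository's loader: the function names are hypothetical, and because the array key inside each .npz file is not stated here, the code searches for any 2-D array with a 2048-long axis instead of assuming a key.

```python
import numpy as np

FEAT_DIM = 2048                  # feature size stated above
MIN_BOXES, MAX_BOXES = 10, 100   # per-image region count stated above


def find_region_features(npz_path):
    """Return (key, array) for the first array shaped like region features.

    The key name is not assumed: any 2-D array with one axis of length
    FEAT_DIM qualifies. The result is oriented as (num_boxes, FEAT_DIM).
    """
    with np.load(npz_path) as data:
        for key in data.files:
            arr = data[key]
            if arr.ndim == 2 and FEAT_DIM in arr.shape:
                # Assumption: features may be stored as (FEAT_DIM, num_boxes).
                if arr.shape[1] != FEAT_DIM:
                    arr = arr.T
                return key, arr
    raise ValueError(f"no {FEAT_DIM}-D feature array found in {npz_path}")


def check_region_count(feats):
    """True if the number of regions lies in the documented 10..100 range."""
    return MIN_BOXES <= feats.shape[0] <= MAX_BOXES
```

A typical use would be to loop over `glob.glob("datasets/coco_extract/val2014/*.npz")` and report any file for which `check_region_count` returns `False`.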

In addition, we use the VQA samples from the Visual Genome dataset to expand the training samples. Similar to existing strategies, we preprocess the samples with two rules:

- Select the QA pairs whose corresponding images appear in the MSCOCO train and val splits.

- Select the QA pairs whose answers appear in the processed answer list (answers that occur more than 8 times across all VQA-v2 answers).
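
The two rules can be sketched as a small filter. This is an illustration only, not the script used to produce the provided files: the function names and the `image_id` / `answer` field names are hypothetical, and the real JSON schema may differ.

```python
from collections import Counter


def build_answer_list(vqa_answers, min_count=8):
    """Keep answers that occur more than `min_count` times in VQA-v2."""
    counts = Counter(vqa_answers)
    return {ans for ans, n in counts.items() if n > min_count}


def filter_vg_pairs(vg_pairs, coco_image_ids, answer_list):
    """Apply both rules: image is in MSCOCO train/val, answer is in the list.

    `vg_pairs` is assumed to be a list of dicts with hypothetical
    'image_id' and 'answer' fields.
    """
    return [
        qa for qa in vg_pairs
        if qa["image_id"] in coco_image_ids and qa["answer"] in answer_list
    ]
```

Passing `coco_image_ids` and `answer_list` as sets keeps each membership test constant-time, which matters when filtering a large number of QA pairs.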

For convenience, we provide our processed VG question and annotation files. Download them from OneDrive or BaiduYun, then unzip them and place them as follows:

    |-- datasets
        |-- vqa
        |  |-- VG_questions.json
        |  |-- VG_annotations.json

After that, run the following script to set up everything needed for the experiments:

    $ virtualenv --python=python3 venv
    $ source venv/bin/activate
    $ sh setup.sh

Running the script will:

- Download the QA files for VQA-v2.

- Install the requirements.

Finally, the datasets folder will have the following structure:

    |-- datasets
        |-- coco_extract
        |  |-- val2014
        |  |  |-- COCO_val2014_...jpg.npz
        |  |  |-- ...
        |-- vqa
        |  |-- v2_OpenEnded_mscoco_val2014_questions.json
        |  |-- v2_mscoco_val2014_annotations.json
        |  |-- VG_questions.json
        |  |-- VG_annotations.json
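
Before running anything, the layout above can be verified with a short check. This is a minimal sketch and not part of the repository: the function name `check_datasets` is hypothetical, and the paths are taken directly from the structure listed above.

```python
from pathlib import Path

# Files listed in the structure above, relative to the datasets folder.
EXPECTED_FILES = [
    "vqa/v2_OpenEnded_mscoco_val2014_questions.json",
    "vqa/v2_mscoco_val2014_annotations.json",
    "vqa/VG_questions.json",
    "vqa/VG_annotations.json",
]
FEATURE_DIR = "coco_extract/val2014"


def check_datasets(root="datasets"):
    """Return a list of problems; an empty list means the layout looks right."""
    root = Path(root)
    problems = [
        f"missing file: {root / rel}"
        for rel in EXPECTED_FILES
        if not (root / rel).is_file()
    ]
    feat_dir = root / FEATURE_DIR
    if not feat_dir.is_dir():
        problems.append(f"missing directory: {feat_dir}")
    elif not any(feat_dir.glob("*.npz")):
        problems.append(f"no .npz feature files in: {feat_dir}")
    return problems


if __name__ == "__main__":
    for line in check_datasets() or ["datasets layout looks complete"]:
        print(line)
```

The check only confirms that files exist; it does not validate their contents.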

Download the pretrained small model from OneDrive or BaiduYun, then unzip it and place it in the correct folder as follows:

    |-- ckpts
        |-- ckpt_small
        |  |-- epoch13.pkl

Then run the validation script:

    $ sh validate.sh

If this repository is helpful for your research, we'd really appreciate it if you could cite the following paper:

    @inProceedings{yu2019mcan,
      author = {Yu, Zhou and Yu, Jun and Cui, Yuhao and Tao, Dacheng and Tian, Qi},
      title = {Deep Modular Co-Attention Networks for Visual Question Answering},
      booktitle = {Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},
      pages = {6281--6290},
      year = {2019}
    }