USER STORY | DEPLOY | SUPPORTING DOCUMENTATION | CUSTOMER TRUTH

Welcome to the Chat with your data Solution accelerator repository! The Chat with your data Solution accelerator is a powerful tool that combines the capabilities of Azure AI Search and Large Language Models (LLMs) to create a conversational search experience. This solution accelerator uses an Azure OpenAI GPT model and an Azure AI Search index generated from your data, which is integrated into a web application to provide a natural language interface, including speech-to-text functionality, for search queries. Users can drag and drop files, point to storage, and take care of technical setup to transform documents. There is a web app that users can create in their own subscription with security and authentication.

This repository provides a template for setting up the solution accelerator, along with detailed instructions on how to use and customize it to fit your specific needs. It provides the following features:

- Chat with an Azure OpenAI model using your own data

- Upload and process your documents

- Index public web pages

- Easy prompt configuration

- Multiple chunking strategies

If you need to customize your scenario beyond what Azure OpenAI on your data offers out-of-the-box, use this repository.

The accelerator presented here provides several options, for example:

- The ability to ground a model using both data and public web pages

- Advanced prompt engineering capabilities

- An admin site for ingesting/inspecting/configuring your dataset on the fly

- Running a Retrieval Augmented Generation (RAG) solution locally

Have you seen the ChatGPT + Enterprise data with Azure OpenAI and AI Search demo? If you would like to experiment (play with prompts, understand different implementation approaches to the RAG pattern, see how different features interact with the RAG pattern, and choose the best options for your RAG deployments), take a look at that repo.

Here is a comparison table with a few features offered by Azure, an available GitHub demo sample and this repo, that can provide guidance when you need to decide which one to use:

| Name | Feature or Sample? | What is it? | When to use? |
|---|---|---|---|
| Azure OpenAI on your data | Azure feature | Azure OpenAI Service offers an out-of-the-box, end-to-end RAG implementation that uses a REST API or the web-based interface in the Azure AI Studio to create a solution that connects to your data to enable an enhanced chat experience with Azure OpenAI ChatGPT models and Azure AI Search. | This should be the first option considered for developers that need an end-to-end solution for Azure OpenAI Service with an Azure AI Search retriever. Simply select supported data sources, the ChatGPT model in Azure OpenAI Service, and any other Azure resources needed to configure your enterprise application needs. |
| Azure Machine Learning prompt flow | Azure feature | RAG in Azure Machine Learning is enabled by integration with Azure OpenAI Service for large language models and vectorization. It includes support for Faiss and Azure AI Search as vector stores, as well as support for open-source offerings, tools, and frameworks such as LangChain for data chunking. Azure Machine Learning prompt flow offers the ability to test data generation, automate prompt creation, visualize prompt evaluation metrics, and integrate RAG workflows into MLOps using pipelines. | When developers need more control over processes involved in the development cycle of LLM-based AI applications, they should use Azure Machine Learning prompt flow to create executable flows and evaluate performance through large-scale testing. |
| "Chat with your data" Solution Accelerator - (This repo) | Azure sample | End-to-end baseline RAG pattern sample that uses Azure AI Search as a retriever. | This sample should be used by developers when the RAG pattern implementations provided by Azure are not able to satisfy business requirements. This sample provides a means to customize the solution. Developers must add their own code to meet requirements, and adapt with best practices according to individual company policies. |
| ChatGPT + Enterprise data with Azure OpenAI and AI Search demo | Azure sample | RAG pattern demo that uses Azure AI Search as a retriever. | Developers who would like to use or present an end-to-end demonstration of the RAG pattern should use this sample. This includes the ability to deploy and test different retrieval modes, and prompts to support business use cases. |

- Private LLM access on your data: Get all the benefits of ChatGPT on your private, unstructured data.

- Single application access to your full data set: Minimize endpoints required to access internal company knowledgebases

- Natural language interaction with your unstructured data: Use natural language to quickly find the answers you need and ask follow-up queries to get the supplemental details.

- Easy access to source documentation when querying: Review referenced documents in the same chat window for additional context.

- Data upload: Batch upload documents

- Accessible orchestration: Prompt and document configuration (prompt engineering, document processing, and data retrieval)

Note: The current model allows users to ask questions about unstructured data, such as PDF, text, and docx files.

Out-of-the-box, you can upload the following file types:

- JPEG

- JPG

- PNG

- TXT

- HTML

- MD (Markdown)

- DOCX

Company personnel (employees, executives) looking to research against internal unstructured company data would leverage this accelerator using natural language to find what they need quickly.

This accelerator also works across industry and roles and would be suitable for any employee who would like to get quick answers with a ChatGPT experience against their internal unstructured company data.

Tech administrators can use this accelerator to give their colleagues easy access to internal unstructured company data. Admins can customize the system configurator to tailor responses for the intended audience.

The sample data illustrates how this accelerator could be used in the financial services industry (FSI).

In this scenario, a financial advisor is preparing for a meeting with a potential client who has expressed interest in Woodgrove Investments’ Emerging Markets Funds. The advisor prepares for the meeting by refreshing their understanding of the emerging markets fund's overall goals and the associated risks.

Now that the financial advisor is more informed about Woodgrove’s Emerging Markets Funds, they're better equipped to respond to questions about this fund from their client.

Note: Some of the sample data included with this accelerator was generated using AI and is for illustrative purposes only.

Many users are used to the convenience of speech-to-text functionality in their consumer products. With hybrid work increasing, speech-to-text supports a more flexible way for users to chat with their data, whether they’re at their computer or on the go with their mobile device. The speech-to-text capability is combined with NLP capabilities to extract intent and context from spoken language, allowing the chatbot to understand and respond to user requests more intelligently.

Chat with your unstructured data

Get responses using natural language

By bringing the Chat with your data experience into Teams, users can stay within their current workflow and get the answers they need without switching platforms. Rather than building the Chat with your data accelerator within Teams from scratch, the same underlying backend used for the web application is leveraged within Teams.

Learn more about deploying the Teams extension here.

- Azure subscription - Create one for free with owner access.

- Approval to use Azure OpenAI services with your Azure subscription. To apply for approval, see here.

- Enable custom Teams apps and turn on custom app uploading (optional: Teams extension only)

- Azure App Service

- Azure Application Insights

- Azure Bot

- Azure OpenAI

- Azure Document Intelligence

- Azure Function App

- Azure Search Service

- Azure Storage Account

- Azure Speech Service

- Teams (optional: Teams extension only)

- Microsoft 365 (optional: Teams extension only)

This solution accelerator deploys multiple resources. Evaluate the cost of each component prior to deployment.

The following are links to the pricing details for some of the resources:

- Azure OpenAI service pricing. GPT and embedding models are charged separately.

- Azure AI Search pricing. AI Search core service and semantic search are charged separately.

- Azure Blob Storage pricing

- Azure Functions pricing

- Azure AI Document Intelligence pricing

- Azure Web App Pricing

Note: The default configuration deploys an OpenAI Model "gpt-35-turbo" with version 0613. However, not all locations support this version. If you're deploying to a location that doesn't support version 0613, you'll need to switch to a lower version. To find out which versions are supported in different regions, visit the GPT-35 Turbo Model Availability page.

The easiest way to run this accelerator is in a VS Code Dev Container, which opens the project in your local VS Code using the Dev Containers extension:

- Start Docker Desktop (install it if not already installed)

- In the VS Code window that opens, once the project files show up (this may take several minutes), open a terminal window

- Run `azd auth login`

- Run `azd up`. This will provision Azure resources and deploy the accelerator to those resources.

  - Important: The resources created by this command incur immediate costs, primarily from the AI Search resource. These resources may accrue costs even if you interrupt the command before it is fully executed. You can run `azd down` or delete the resources manually to avoid unnecessary spending.

  - You will be prompted to select a subscription and a location. That location list is based on the OpenAI model availability table and may become outdated as availability changes.

  - If you accidentally choose the wrong location, run `azd down` or delete the Resource Group, because the deployment takes its location from this Resource Group.

- After the application has been successfully deployed, you will see a URL printed to the console. Click that URL to interact with the application in your browser.

NOTE: It may take 30 minutes for the application to be fully deployed. If you see a "Python Developer" welcome screen or an error page, then wait a bit and refresh the page.

Note: The default auth type uses keys. To switch to RBAC, run `azd env set AUTH_TYPE rbac`.

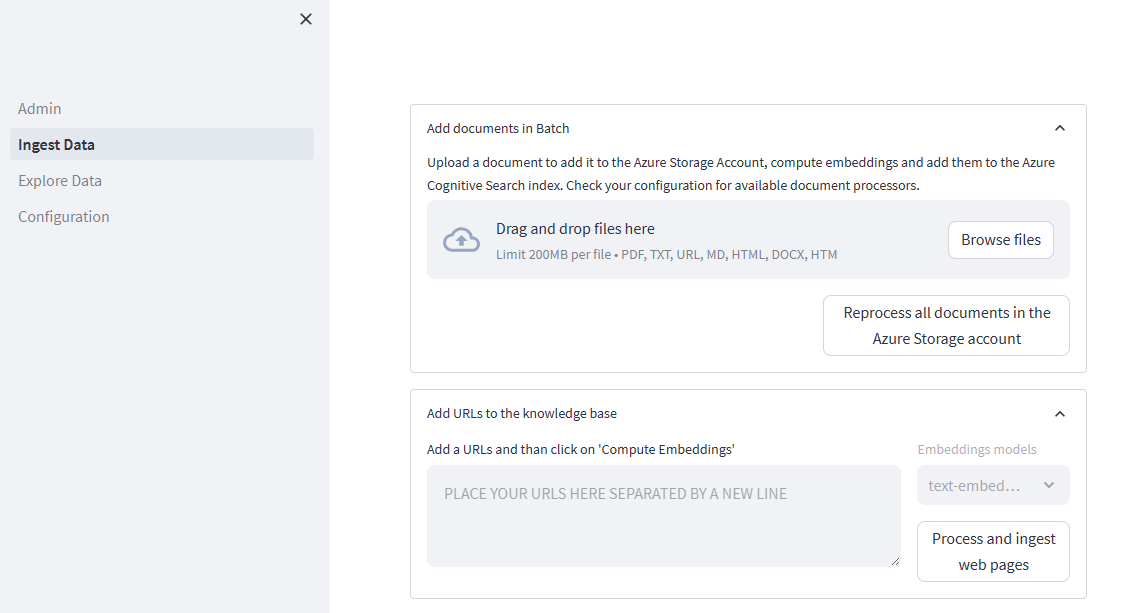

- Navigate to the admin site, where you can upload documents. It will be located at:

  `https://{MY_RESOURCE_PREFIX}-website-admin.azurewebsites.net/`

  Where `{MY_RESOURCE_PREFIX}` is replaced with the resource prefix you used during deployment. Then select Ingest Data and add your data. You can find sample data in the `/data` directory.

- Navigate to the web app to start chatting on top of your data. The web app can be found at:

  `https://{MY_RESOURCE_PREFIX}-website.azurewebsites.net/`

  Where `{MY_RESOURCE_PREFIX}` is replaced with the resource prefix you used during deployment.
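As a small illustration, both URLs can be derived from the resource prefix. The helper name `site_urls` and the example prefix are hypothetical; only the two URL patterns come from the text above.

```python
def site_urls(resource_prefix: str) -> dict[str, str]:
    """Build the admin-site and web-app URLs from a deployment's resource prefix."""
    prefix = resource_prefix.strip()
    if not prefix:
        raise ValueError("resource_prefix must not be empty")
    return {
        "admin": f"https://{prefix}-website-admin.azurewebsites.net/",
        "web": f"https://{prefix}-website.azurewebsites.net/",
    }


if __name__ == "__main__":
    # "contoso123" is a made-up prefix used only for illustration.
    for name, url in site_urls("contoso123").items():
        print(f"{name}: {url}")
```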

You can also run the sample locally (see below).

To customize the accelerator or run it locally, first copy the `.env.sample` file to your development environment's `.env` file, then edit it according to the environment variable values table. Learn more about deploying locally here.
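The copy-and-edit step could be scripted roughly as follows. This is a minimal sketch, not part of the accelerator: `init_env_file` and `parse_env` are hypothetical helpers, and the parser only handles plain `KEY=VALUE` lines, which is an assumption about the file's format.

```python
import shutil
from pathlib import Path


def init_env_file(sample: Path, target: Path) -> bool:
    """Copy the sample file to the target unless the target already exists.

    Returns True if a copy was made, False if the target was left untouched.
    """
    if target.exists():
        return False
    shutil.copyfile(sample, target)
    return True


def parse_env(text: str) -> dict[str, str]:
    """Parse simple KEY=VALUE lines, skipping blank lines and # comments."""
    values: dict[str, str] = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Drop surrounding whitespace and optional quotes around the value.
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


if __name__ == "__main__":
    sample, target = Path(".env.sample"), Path(".env")
    if sample.exists() and init_env_file(sample, target):
        print("Created .env from .env.sample; edit it before running locally.")
    if target.exists():
        # List values that are still empty and probably need editing.
        missing = [k for k, v in parse_env(target.read_text()).items() if not v]
        print("Variables without a value:", missing)
```

Never overwriting an existing `.env` is a deliberate choice here, so that rerunning the script cannot discard values you have already filled in.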

Access to documents

Only upload data that can be accessed by any user of the application. Anyone who uses the application should also have clearance for any data that is uploaded to the application.

Depth of responses

The more limited the data set, the broader the questions should be. If the data in the repo is limited, the depth of information in the LLM response you can get with follow up questions may be limited. For more depth in your response, increase the data available for the LLM to access.

Response consistency

Consider tuning the configuration of prompts to the level of precision required. The more precision desired, the harder it may be to generate a response.

Numerical queries

The accelerator is optimized to summarize unstructured data, such as PDFs or text files. The ChatGPT 3.5 Turbo model used by the accelerator is not currently optimized to handle queries about specific numerical data. The ChatGPT 4 model may be better able to handle numerical queries.

Use your own judgement

AI-generated content may be incorrect and should be reviewed before usage.

Azure AI Search used as retriever in RAG

Azure AI Search, when used as a retriever in the Retrieval-Augmented Generation (RAG) pattern, plays a key role in fetching relevant information from a large corpus of data. The RAG pattern involves two key steps: retrieval of documents and generation of responses. Azure AI Search, in the retrieval phase, filters and ranks the most relevant documents from the dataset based on a given query.

The importance of optimizing data in the index for relevance lies in the fact that the quality of retrieved documents directly impacts the generation phase. The more relevant the retrieved documents are, the more accurate and pertinent the generated responses will be.

Azure AI Search allows for fine-tuning the relevance of search results through features such as scoring profiles (which assign weights to different fields), Lucene's full-text search capabilities, vector search for similarity search, multi-modal search, recommendations, hybrid search, and semantic search, which uses AI from Microsoft to rescore search results and move the most semantically relevant results to the top of the list. By leveraging these features, one can ensure that the most relevant documents are retrieved first, thereby improving the overall effectiveness of the RAG pattern.

Moreover, optimizing the data in the index improves the efficiency and speed of the retrieval process and increases relevance, which is integral to the RAG pattern.
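The two RAG steps described above (retrieve, then generate) can be illustrated with a toy sketch. It does not call Azure AI Search or Azure OpenAI and does not reflect how Azure AI Search actually scores documents: the `Doc` type, the weighted term-overlap scoring, and the `FIELD_WEIGHTS` values are all assumptions chosen only to show how field weighting influences which documents reach the prompt.

```python
from dataclasses import dataclass


@dataclass
class Doc:
    title: str
    content: str


# Hypothetical field weights, loosely analogous to a scoring profile.
FIELD_WEIGHTS = {"title": 2.0, "content": 1.0}


def tokenize(text: str) -> set[str]:
    """Lowercase words with basic punctuation stripped."""
    return {w.strip(".,?!").lower() for w in text.split() if w.strip(".,?!")}


def score(query: str, doc: Doc) -> float:
    """Weighted count of query terms that appear in each field."""
    terms = tokenize(query)
    return (
        FIELD_WEIGHTS["title"] * len(terms & tokenize(doc.title))
        + FIELD_WEIGHTS["content"] * len(terms & tokenize(doc.content))
    )


def retrieve(query: str, docs: list[Doc], top_k: int = 2) -> list[Doc]:
    """Retrieval step: return up to top_k documents with a non-zero score."""
    ranked = sorted(docs, key=lambda d: score(query, d), reverse=True)
    return [d for d in ranked[:top_k] if score(query, d) > 0]


def build_prompt(query: str, sources: list[Doc]) -> str:
    """Generation step input: ground the model on the retrieved sources."""
    context = "\n".join(
        f"[{i}] {d.title}: {d.content}" for i, d in enumerate(sources, start=1)
    )
    return f"Answer using only the sources below.\n\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    corpus = [
        Doc("Emerging markets fund", "Goals and risks of the fund."),
        Doc("Holiday schedule", "Office closed dates."),
        Doc("Risk overview", "Emerging markets carry currency risk."),
    ]
    question = "emerging markets risks"
    print(build_prompt(question, retrieve(question, corpus)))
```

In a real deployment the prompt would be sent to the chat model; the point here is only that better-ranked sources produce a better-grounded prompt.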

Azure AI Search

- Consider switching security keys and using RBAC instead for authentication.

- Consider setting up a firewall, private endpoints for inbound connections and shared private links for built-in pull indexers.

- For the best results, prepare your index data and consider analyzers.

- Analyze your resource capacity needs.

Before deploying Azure RAG implementations to production

- Follow the best practices described in the Azure Well-Architected Framework.

- Understand the Retrieval Augmented Generation (RAG) in Azure AI Search.

- Understand the functionality and configuration that would adapt better to your solution and test with your own data for optimal retrieval.

- Use this demo to experiment with different options, define the prompts that are optimal for your needs, and find ways to implement functionality tailored to your business needs, so you can then carry those choices over to the accelerator.

- Follow the Responsible AI best practices.

- Understand the levels of access of your users and application.

Chunking: Importance for RAG and strategies implemented as part of this repo

Chunking is essential for managing large data sets, optimizing relevance, preserving context, integrating workflows, and enhancing the user experience. See How to chunk documents for more information.

These are the chunking strategy options you can choose from:

- Layout: An AI approach to determine a good chunking strategy.

- Page: This strategy involves breaking down long documents into pages.

- Fixed-Size Overlap: This strategy involves defining a fixed size that’s sufficient for semantically meaningful paragraphs (for example, 250 words) and allows for some overlap (for example, 10-25% of the content). This usually helps create good inputs for embedding vector models. Overlapping a small amount of text between chunks can help preserve the semantic context.

- Paragraph: This strategy breaks a difficult text into more manageable pieces and rewrites these “chunks” with a summarization of all of them.
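To make the Fixed-Size Overlap strategy concrete, here is a minimal sketch that splits on whitespace-separated words. It is an illustration under stated assumptions, not the accelerator's implementation: the function name `chunk_with_overlap` is hypothetical, and a real pipeline may well count tokens rather than words. The defaults (250 words, 25 words of overlap, i.e. 10%) follow the example figures in the list above.

```python
def chunk_with_overlap(text: str, chunk_size: int = 250, overlap: int = 25) -> list[str]:
    """Split text into chunks of at most chunk_size words.

    Consecutive chunks share `overlap` words so that context spanning a
    chunk boundary is present in both chunks.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")

    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks: list[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this window already reached the end of the text
    return chunks


if __name__ == "__main__":
    sample = " ".join(f"w{i}" for i in range(10))
    for chunk in chunk_with_overlap(sample, chunk_size=4, overlap=1):
        print(chunk)
```

A larger overlap preserves more context across boundaries but increases the number of chunks to embed and index, so the ratio is worth tuning against your own documents.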

This solution accelerator deploys the following resources. It's critical to comprehend the functionality of each. Below are the links to their respective documentation:

- Application Insights overview - Azure Monitor | Microsoft Learn

- Azure OpenAI Service - Documentation, quickstarts, API reference - Azure AI services | Microsoft Learn

- Using your data with Azure OpenAI Service - Azure OpenAI | Microsoft Learn

- Content Safety documentation - Quickstarts, Tutorials, API Reference - Azure AI services | Microsoft Learn

- Document Intelligence documentation - Quickstarts, Tutorials, API Reference - Azure AI services | Microsoft Learn

- Azure Functions documentation | Microsoft Learn

- Azure Cognitive Search documentation | Microsoft Learn

- Speech to text documentation - Tutorials, API Reference - Azure AI services | Microsoft Learn

- Bots in Microsoft Teams - Teams | Microsoft Learn (Optional: Teams extension only)

This repository is licensed under the MIT License.

The data set under the /data folder is licensed under the CDLA-Permissive-2 License.

Customer stories coming soon. For early access, contact: fabrizio.ruocco@microsoft.com

This Software requires the use of third-party components which are governed by separate proprietary or open-source licenses as identified below, and you must comply with the terms of each applicable license in order to use the Software. You acknowledge and agree that this license does not grant you a license or other right to use any such third-party proprietary or open-source components.

To the extent that the Software includes components or code used in or derived from Microsoft products or services, including without limitation Microsoft Azure Services (collectively, “Microsoft Products and Services”), you must also comply with the Product Terms applicable to such Microsoft Products and Services. You acknowledge and agree that the license governing the Software does not grant you a license or other right to use Microsoft Products and Services. Nothing in the license or this ReadMe file will serve to supersede, amend, terminate or modify any terms in the Product Terms for any Microsoft Products and Services.

You must also comply with all domestic and international export laws and regulations that apply to the Software, which include restrictions on destinations, end users, and end use. For further information on export restrictions, visit https://aka.ms/exporting.

You acknowledge that the Software and Microsoft Products and Services (1) are not designed, intended or made available as a medical device(s), and (2) are not designed or intended to be a substitute for professional medical advice, diagnosis, treatment, or judgment and should not be used to replace or as a substitute for professional medical advice, diagnosis, treatment, or judgment. Customer is solely responsible for displaying and/or obtaining appropriate consents, warnings, disclaimers, and acknowledgements to end users of Customer’s implementation of the Online Services.

You acknowledge the Software is not subject to SOC 1 and SOC 2 compliance audits. No Microsoft technology, nor any of its component technologies, including the Software, is intended or made available as a substitute for the professional advice, opinion, or judgement of a certified financial services professional. Do not use the Software to replace, substitute, or provide professional financial advice or judgment.

BY ACCESSING OR USING THE SOFTWARE, YOU ACKNOWLEDGE THAT THE SOFTWARE IS NOT DESIGNED OR INTENDED TO SUPPORT ANY USE IN WHICH A SERVICE INTERRUPTION, DEFECT, ERROR, OR OTHER FAILURE OF THE SOFTWARE COULD RESULT IN THE DEATH OR SERIOUS BODILY INJURY OF ANY PERSON OR IN PHYSICAL OR ENVIRONMENTAL DAMAGE (COLLECTIVELY, “HIGH-RISK USE”), AND THAT YOU WILL ENSURE THAT, IN THE EVENT OF ANY INTERRUPTION, DEFECT, ERROR, OR OTHER FAILURE OF THE SOFTWARE, THE SAFETY OF PEOPLE, PROPERTY, AND THE ENVIRONMENT ARE NOT REDUCED BELOW A LEVEL THAT IS REASONABLY, APPROPRIATE, AND LEGAL, WHETHER IN GENERAL OR IN A SPECIFIC INDUSTRY. BY ACCESSING THE SOFTWARE, YOU FURTHER ACKNOWLEDGE THAT YOUR HIGH-RISK USE OF THE SOFTWARE IS AT YOUR OWN RISK.