Data-parallel image stylization using Caffe. Implements A Neural Algorithm of Artistic Style [1].

Dependencies:

- Python 2.7 or 3.5

- Caffe, with pycaffe compiled for Python 2.7 or 3.5

- Python packages numpy, Pillow, posix-ipc, scipy, six

The current preferred Python distribution for style_transfer is Anaconda (Python 3.5 version). style_transfer will run faster with Anaconda than with other Python distributions because Anaconda includes the MKL BLAS (mathematics) library. In addition, if you are running Caffe without a GPU, style_transfer will run a great deal faster if Caffe is compiled with MKL (`BLAS := mkl` in `Makefile.config`).
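To see whether the NumPy in your current Python environment was built against MKL, something like the following sketch may help. It is only a heuristic: it searches the text printed by `numpy.show_config()` for the string "mkl", and the function name `numpy_mentions_mkl` is made up for this example. It requires Python 3 and says nothing about how Caffe itself was built.

```python
import contextlib
import io


def numpy_mentions_mkl():
    """Heuristic check of NumPy's build information.

    Returns True or False depending on whether the build info mentions MKL,
    or None if NumPy is not installed for this interpreter.
    """
    try:
        import numpy
    except ImportError:
        return None
    buffer = io.StringIO()
    # numpy.show_config() prints its report to stdout, so capture it.
    with contextlib.redirect_stdout(buffer):
        numpy.show_config()
    return "mkl" in buffer.getvalue().lower()


if __name__ == "__main__":
    print("NumPy build info mentions MKL:", numpy_mentions_mkl())
```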

Cloud computing images are available with style_transfer and its dependencies preinstalled.

- The image is divided into tiles, which are processed one per GPU at a time. Since the tiles can be sized to fit into GPU memory, images of arbitrary size can be processed, including print size. Tile seam suppression is applied after every iteration so that seams do not accumulate and become visible. (ex: `--size 2048 --tile-size 1024`)

- Images can be processed at multiple scales. For instance, `--size 512 768 1024 1536 2048 -i 100` will run 100 iterations at 512x512, then 100 at 768x768, then 100 more at 1024x1024, etc. Each scale's final iterate is used as the initial iterate for the following scale. Processing a large image at smaller scales first markedly improves output quality.

- Multi-GPU support (ex: `--devices 0 1 2 3`). Four GPUs, for instance, can process four tiles at a time.

- Averages successive iterates [2] to reduce image noise.

- Can perform simultaneous Deep Dream and image stylization.

- Adam (gradient descent) [4] and L-BFGS [6] optimizers. L-BFGS is used with a nonmonotone line search that has been adapted for nonsmooth, nonconvex problems.

- Use of more than one content layer will produce incorrect feature maps when there is more than one tile.
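To make the tiling, multi-scale, and multi-GPU options in the list above more concrete, here is a rough sketch of the bookkeeping involved. It is not style_transfer's actual code: the function names are hypothetical, the image is assumed to be square, tiles are laid out on a simple non-overlapping grid, and seam suppression is not modeled.

```python
def scale_schedule(sizes, iterations):
    """Return (size, iterations) pairs, one per scale, in the order given."""
    return [(size, iterations) for size in sizes]


def tile_boxes(width, height, tile_size):
    """Split a width x height image into a grid of boxes no larger than tile_size.

    Each box is (left, top, right, bottom) in pixel coordinates.
    """
    cols = (width + tile_size - 1) // tile_size   # integer ceiling division
    rows = (height + tile_size - 1) // tile_size
    boxes = []
    for row in range(rows):
        for col in range(cols):
            left, top = col * tile_size, row * tile_size
            right = min(left + tile_size, width)
            bottom = min(top + tile_size, height)
            boxes.append((left, top, right, bottom))
    return boxes


def batches(items, n):
    """Group items into batches of at most n (one tile per device per batch)."""
    return [items[i:i + n] for i in range(0, len(items), n)]


if __name__ == "__main__":
    # Mirrors: --size 512 768 1024 1536 2048 -i 100 --tile-size 1024 --devices 0 1 2 3
    for size, iters in scale_schedule([512, 768, 1024, 1536, 2048], 100):
        tiles = tile_boxes(size, size, 1024)
        rounds = batches(tiles, 4)
        print("size", size, "iterations", iters,
              "tiles", len(tiles), "rounds per iteration", len(rounds))
```

Under these assumptions, a 2048x2048 image with a tile size of 1024 yields four tiles, which four GPUs could process in a single round.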

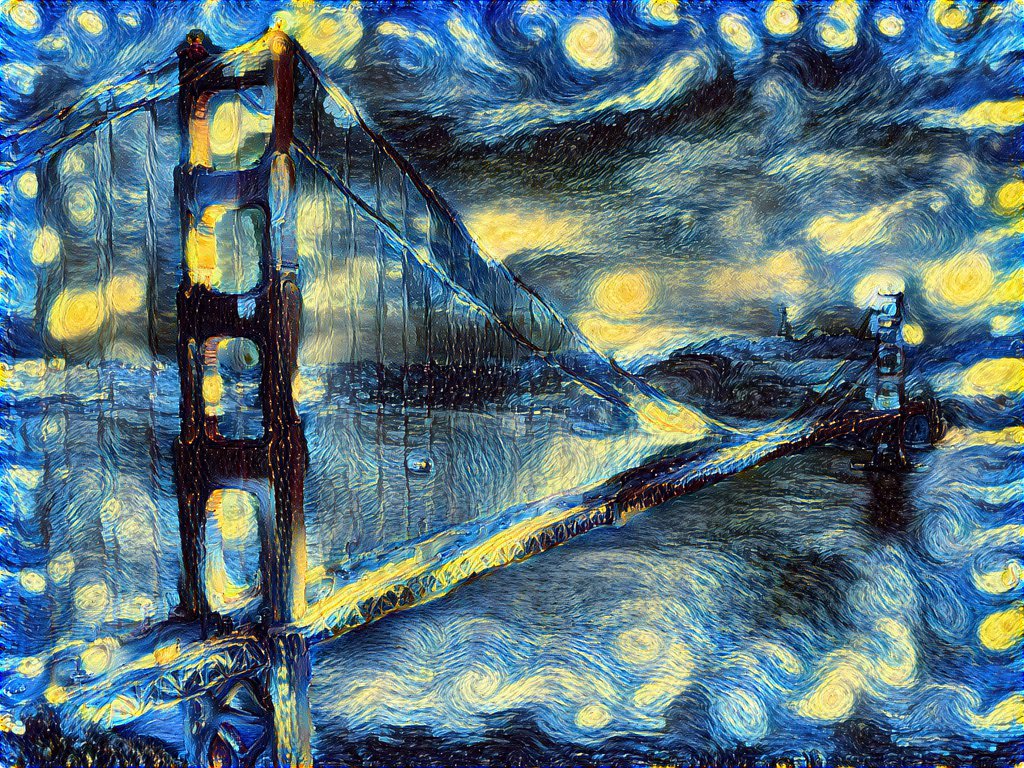

The obligatory Golden Gate Bridge + The Starry Night (van Gogh) style transfer (big version):

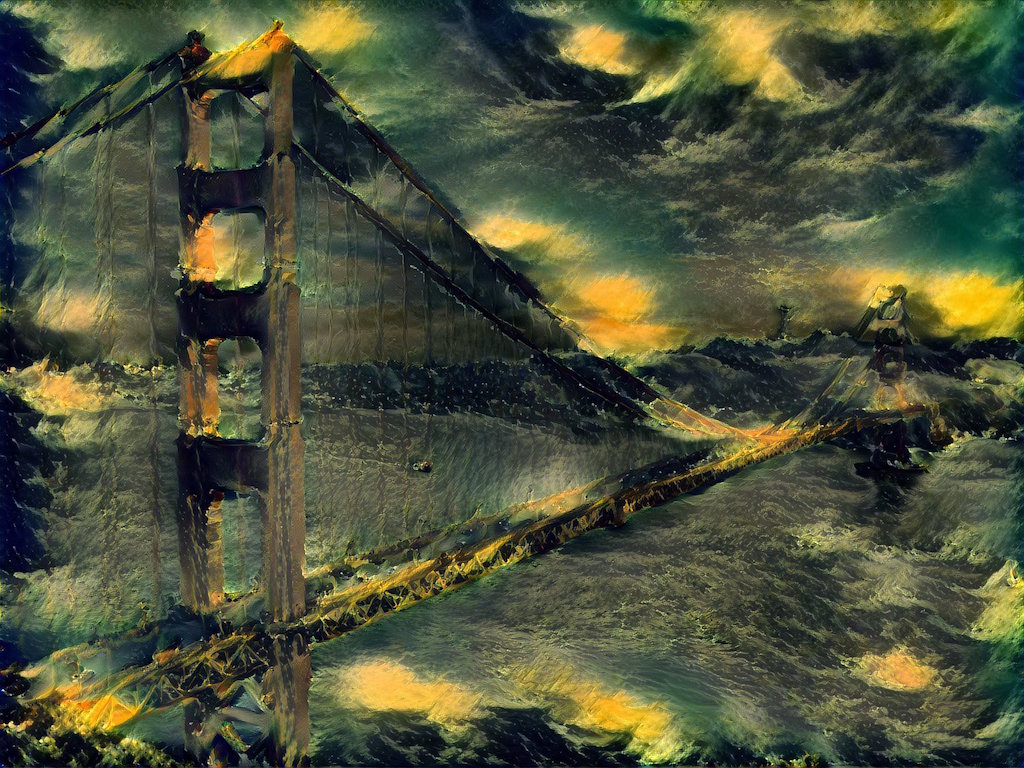

Golden Gate Bridge + The Shipwreck of the Minotaur (Turner) (big version):

barn and pond (Cindy Branham) + The Banks of the River (Renoir) (big version):

Python 2 support is relatively untested. Please feel free to report issues! Be sure to specify which Python version you are using if you report an issue.

If you use pycaffe for other things, you might want to build pycaffe for Python 3 in a second copy of Caffe so you don't break things using Python 2.

On OS X with Anaconda (and Homebrew-provided Boost.Python), use these lines in Caffe's Makefile.config:

    ANACONDA_HOME := $(HOME)/anaconda3
    PYTHON_INCLUDE := $(ANACONDA_HOME)/include \
            $(ANACONDA_HOME)/include/python3.5m \
            $(ANACONDA_HOME)/lib/python3.5/site-packages/numpy/core/include
    PYTHON_LIBRARIES := boost_python3 python3.5m
    PYTHON_LIB := $(ANACONDA_HOME)/lib

The exact name of the Boost.Python library will differ on Linux but the rest should be the same.
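If you are unsure which paths apply to your own interpreter, a small script along these lines can print candidate values for `PYTHON_INCLUDE` and `PYTHON_LIB`. This is a sketch, not part of style_transfer or Caffe: the function name `makefile_python_paths` and the dictionary keys are invented for illustration, and the Boost.Python library name still has to be determined separately.

```python
import sysconfig


def makefile_python_paths():
    """Collect paths that correspond to PYTHON_INCLUDE and PYTHON_LIB."""
    paths = {
        # Directory containing Python.h for the running interpreter.
        "python_include": sysconfig.get_paths()["include"],
        # Directory containing libpython (may be None on some platforms).
        "python_lib": sysconfig.get_config_var("LIBDIR"),
    }
    try:
        import numpy
        # Directory containing the NumPy C headers.
        paths["numpy_include"] = numpy.get_include()
    except ImportError:
        paths["numpy_include"] = None  # NumPy is not installed for this interpreter
    return paths


if __name__ == "__main__":
    for name, value in sorted(makefile_python_paths().items()):
        print("{}: {}".format(name, value))
```

Run it with the same Python you intend to build pycaffe against, since each interpreter reports its own paths.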

On OS X, you can install Python 3 and Boost.Python using Homebrew:

    brew install python3
    brew install boost-python --with-python3

Then insert these lines into Caffe's Makefile.config to build against the Homebrew-provided Python 3.5:

    PYTHON_DIR := /usr/local/opt/python3/Frameworks/Python.framework/Versions/3.5
    PYTHON_LIBRARIES := boost_python3 python3.5m
    PYTHON_INCLUDE := $(PYTHON_DIR)/include/python3.5m \
            /usr/local/lib/python3.5/site-packages/numpy/core/include
    PYTHON_LIB := $(PYTHON_DIR)/lib

`make pycaffe` should now compile the Python 3 bindings.

On Ubuntu 16.04, follow Caffe's Ubuntu 15.10/16.04 install guide. The required Makefile.config lines for Python 3.5 are:

    PYTHON_LIBRARIES := boost_python-py35 python3.5m
    PYTHON_INCLUDE := /usr/include/python3.5m \
            /usr/local/lib/python3.5/dist-packages/numpy/core/include
    PYTHON_LIB := /usr/lib

Install the required Python packages using pip:

    pip3 install -Ur requirements.txt
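After installing, a quick way to confirm that everything is importable is a check like the one below. It is a sketch rather than anything shipped with style_transfer: `missing_modules` is a hypothetical helper, and the module list simply pairs the packages named under Dependencies with their import names (Pillow imports as `PIL`, posix-ipc as `posix_ipc`), plus `caffe` for pycaffe.

```python
import importlib

# Import names for the packages listed under Dependencies, plus pycaffe itself.
MODULES = ["numpy", "PIL", "posix_ipc", "scipy", "six", "caffe"]


def missing_modules(names=MODULES):
    """Return the subset of names that cannot be imported."""
    missing = []
    for name in names:
        try:
            importlib.import_module(name)
        except ImportError:
            missing.append(name)
    return missing


if __name__ == "__main__":
    missing = missing_modules()
    if missing:
        print("Missing modules: " + ", ".join(missing))
    else:
        print("All dependencies are importable.")
```

If `caffe` is reported missing even though pycaffe was built, it may be that Caffe's `python` directory is not on `PYTHONPATH`.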

[1] L. Gatys, A. Ecker, M. Bethge, "A Neural Algorithm of Artistic Style"

[2] O. Shamir, T. Zhang, "Stochastic Gradient Descent for Non-smooth Optimization: Convergence Results and Optimal Averaging Schemes"

[3] A. Mahendran, A. Vedaldi, "Understanding Deep Image Representations by Inverting Them"

[4] D. Kingma, J. Ba, "Adam: A Method for Stochastic Optimization"

[5] K. Simonyan, A. Zisserman, "Very Deep Convolutional Networks for Large-Scale Image Recognition"

[6] D. Liu, J. Nocedal, "On the limited memory BFGS method for large scale optimization"

[7] A. Skajaa, "Limited Memory BFGS for Nonsmooth Optimization"