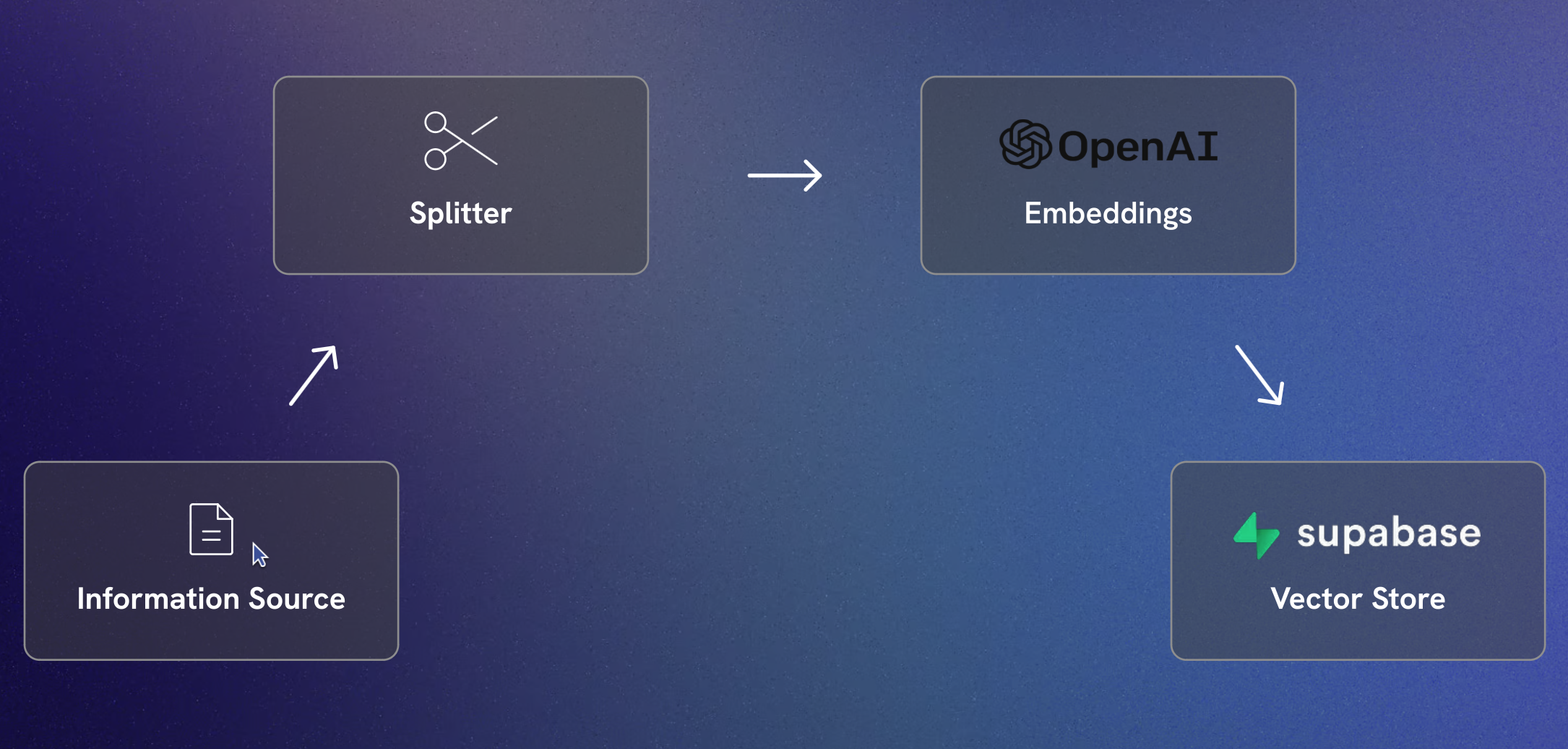

This project demonstrates how to use LangChain and Supabase to create a vector store for Documents using OpenAI embeddings. The text data is split into manageable chunks and stored in Supabase for efficient retrieval.
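To illustrate the chunking step described above, here is a minimal sketch in plain Python. It is not the project's code: the function name `split_text`, the character-based strategy, and the default `chunk_size` and `chunk_overlap` values are assumptions chosen for illustration, and LangChain's own text splitters are more sophisticated. The idea is that consecutive chunks overlap slightly, so a sentence cut at a boundary still appears whole in one of them.

```python
def split_text(text: str, chunk_size: int = 500, chunk_overlap: int = 50) -> list[str]:
    """Split text into fixed-size character chunks that overlap slightly.

    Hypothetical helper for illustration only; the real script may use a
    LangChain text splitter with different settings.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be in [0, chunk_size)")

    step = chunk_size - chunk_overlap  # how far each new chunk advances
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        # Stop once a chunk has reached the end of the text.
        if start + chunk_size >= len(text):
            break
    return chunks


if __name__ == "__main__":
    sample = "Supabase stores each chunk alongside its embedding vector. " * 5
    for index, chunk in enumerate(split_text(sample, chunk_size=80, chunk_overlap=10)):
        print(index, repr(chunk))
```

Smaller chunks give more precise matches at retrieval time but produce more rows and more embedding calls, so the right size depends on your data.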

Prerequisites:

- Python 3.12
- Pipenv

To set up the project:

- Clone the repository:

      git clone https://github.com/yourusername/supabase-vector-store.git
      cd supabase-vector-store

- Install dependencies using Pipenv:

      pipenv install

- Create a `.env` file in the root directory and add your Supabase and OpenAI credentials:

      SUPABASE_API_URL=your_supabase_api_url
      SUPABASE_API_KEY=your_supabase_api_key
      OPENAI_API_KEY=your_openai_api_key
      OPENAI_API_URL=your_openai_api_url

- Ensure you have a text file named `personal-info.txt` in the root directory with the content you want to process.
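Before running anything, it can help to confirm that the `.env` file contains all four settings listed above. The following is a minimal sketch using only the standard library. The helper names `parse_env_file` and `missing_keys` are hypothetical, and it assumes all four keys are required and that the file uses plain `KEY=value` lines; the project itself may load these values differently.

```python
from pathlib import Path

# Keys named in the setup steps; treating all four as required is an assumption.
REQUIRED_KEYS = (
    "SUPABASE_API_URL",
    "SUPABASE_API_KEY",
    "OPENAI_API_KEY",
    "OPENAI_API_URL",
)


def parse_env_file(path=".env") -> dict[str, str]:
    """Parse simple KEY=value lines, skipping blank lines and # comments."""
    values = {}
    for raw_line in Path(path).read_text(encoding="utf-8").splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Drop surrounding whitespace and optional quotes around the value.
        values[key.strip()] = value.strip().strip("'\"")
    return values


def missing_keys(values: dict[str, str]) -> list[str]:
    """Return the required keys that are absent or empty, in a fixed order."""
    return [key for key in REQUIRED_KEYS if not values.get(key)]


if __name__ == "__main__":
    env_path = Path(".env")
    if not env_path.exists():
        print("No .env file found in the current directory.")
    else:
        missing = missing_keys(parse_env_file(env_path))
        print("Missing settings:", ", ".join(missing) if missing else "none")
```

Run from the project root, this reports any key that is absent or left empty, which is usually quicker to diagnose than an authentication error from Supabase or OpenAI.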

To run the project:

- If you have not already done so, install the dependencies with `pipenv install`.

- Run the script:

      pipenv run python vector.py

- If the script runs successfully, you should see the message:

      Documents stored successfully.

This project is licensed under the MIT License.

Feel free to open issues or submit pull requests for improvements or bug fixes.