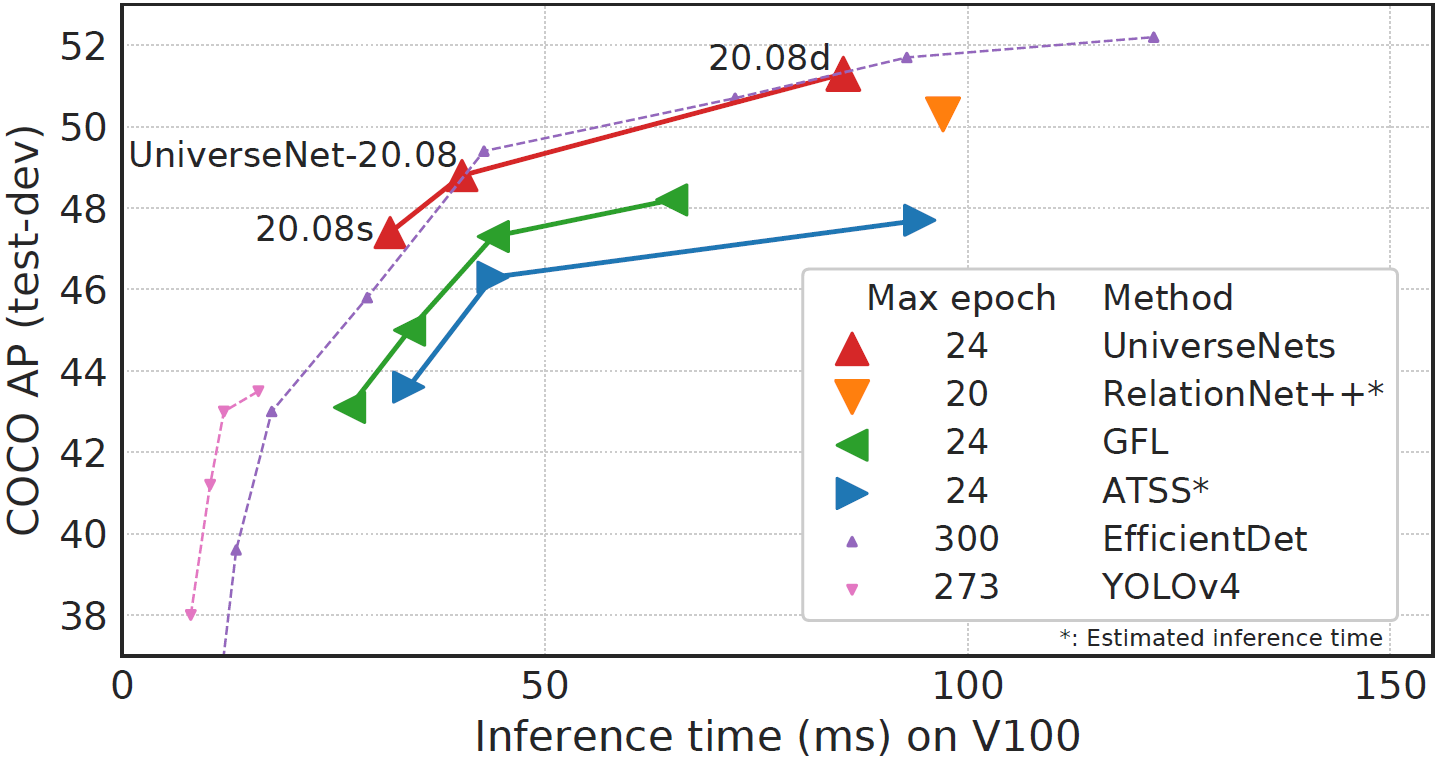

UniverseNets are state-of-the-art detectors for universal-scale object detection. Please refer to our paper for details. https://arxiv.org/abs/2103.14027

- 21.12 (Dec. 2021):
  - Support finer scale-wise AP metrics
  - Add codes for TOOD, ConvMLP, PoolFormer
  - Update codes for PyTorch 1.9.0, mmdet 2.17.0, mmcv-full 1.3.13

- 21.09 (Sept. 2021):
  - Support gradient accumulation to simulate large batch size with few GPUs (example)
  - Add codes for CBNetV2, PVT, PVTv2, DDOD
  - Update and fix codes for mmdet 2.14.0, mmcv-full 1.3.9

- 21.04 (Apr. 2021):
  - Propose Universal-Scale object detection Benchmark (USB)
  - Add codes for Swin Transformer, GFLv2, RelationNet++ (BVR)
  - Update and fix codes for PyTorch 1.7.1, mmdet 2.11.0, mmcv-full 1.3.2

- 20.12 (Dec. 2020):
  - Add configs for Manga109-s dataset
  - Add ATSS-style TTA for SOTA accuracy (COCO test-dev AP 54.1)
  - Add UniverseNet 20.08s for realtime speed (> 30 fps)

- 20.10 (Oct. 2020):
  - Add variants of UniverseNet 20.08
  - Update and fix codes for PyTorch 1.6.0, mmdet 2.4.0, mmcv-full 1.1.2

- 20.08 (Aug. 2020): UniverseNet 20.08
  - Improve usage of batchnorm
  - Use DCN modestly by default for faster training and inference

- 20.07 (July 2020): UniverseNet+GFL
  - Add GFL to improve accuracy and speed
  - Provide stronger pre-trained model (backbone: Res2Net-101)

- 20.06 (June 2020): UniverseNet
  - Achieve SOTA single-stage detector on Waymo Open Dataset 2D detection
  - Win 1st place in NightOwls Detection Challenge 2020 all objects track
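The 21.09 notes mention gradient accumulation as a way to simulate a large batch size with few GPUs. The sketch below illustrates only the general idea on a toy scalar model in plain Python; it is not this repository's implementation, and the helper names (`grad_mse`, `accumulated_grad`) are hypothetical. It assumes equal-sized micro-batches and a loss averaged over samples, in which case averaging the micro-batch gradients reproduces the large-batch gradient.

```python
# Toy illustration (assumed setup, not the repository's code): for a loss that
# is a mean over samples, averaging gradients from equal-sized micro-batches
# gives the same gradient as one large batch.

def grad_mse(w, xs, ys):
    """Gradient of mean((w * x - y) ** 2) with respect to the scalar weight w."""
    n = len(xs)
    return sum(2.0 * (w * x - y) * x for x, y in zip(xs, ys)) / n


def accumulated_grad(w, xs, ys, micro_batch_size):
    """Accumulate micro-batch gradients, each scaled by 1 / num_micro_batches."""
    assert len(xs) % micro_batch_size == 0, "micro-batches must be equal-sized"
    num_micro = len(xs) // micro_batch_size
    total = 0.0
    for i in range(num_micro):
        start = i * micro_batch_size
        stop = start + micro_batch_size
        # Scale each micro-batch gradient so the sum equals the full-batch mean.
        total += grad_mse(w, xs[start:stop], ys[start:stop]) / num_micro
    return total


xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [2.0, 4.1, 5.9, 8.2, 9.8, 12.1, 14.0, 15.9]
w = 0.5

full = grad_mse(w, xs, ys)                                # one batch of 8
accum = accumulated_grad(w, xs, ys, micro_batch_size=2)   # 4 micro-batches of 2
print(abs(full - accum) < 1e-9)
```

In a real detector the equivalence is only approximate where layers depend on batch statistics: batch normalization, for example, still sees each micro-batch separately.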

Methods and architectures:

- UniverseNets (arXiv 2021)

- PoolFormer (arXiv 2021)

- ConvMLP (arXiv 2021)

- CBNetV2 (arXiv 2021)

- TOOD (ICCV 2021)

- PVTv2 (arXiv 2021) stronger models

- PVT (ICCV 2021) supported

- Swin Transformer (ICCV 2021) stronger models

- DDOD (ACMMM 2021)

- GFLv2 (CVPR 2021)

- RelationNet++ (BVR) (NeurIPS 2020)

- SEPC (CVPR 2020)

- ATSS-style TTA (CVPR 2020)

- Test-time augmentation for ATSS and GFL merged

Benchmarks and datasets:

- USB (arXiv 2021)

- Waymo Open Dataset (CVPR 2020)

- Manga109-s dataset (MTAP 2017, IEEE MultiMedia 2020)

- NightOwls dataset (ACCV 2018)

See get_started.md.

See the MMDetection documentation. In particular, see this document to evaluate and train existing models on COCO.

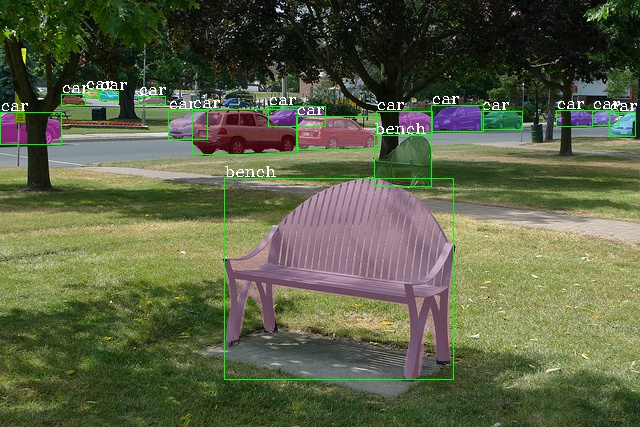

We show examples to evaluate and train UniverseNet-20.08 on COCO with 4 GPUs.

```shell
# evaluate pre-trained model
mkdir -p ${HOME}/data/checkpoints/
wget -P ${HOME}/data/checkpoints/ https://github.com/shinya7y/UniverseNet/releases/download/20.08/universenet50_2008_fp16_4x4_mstrain_480_960_2x_coco_20200815_epoch_24-81356447.pth
CONFIG_FILE=configs/universenet/universenet50_2008_fp16_4x4_mstrain_480_960_2x_coco.py
CHECKPOINT_FILE=${HOME}/data/checkpoints/universenet50_2008_fp16_4x4_mstrain_480_960_2x_coco_20200815_epoch_24-81356447.pth
GPU_NUM=4
bash tools/dist_test.sh ${CONFIG_FILE} ${CHECKPOINT_FILE} ${GPU_NUM} --eval bbox

# train model
CONFIG_FILE=configs/universenet/universenet50_2008_fp16_4x4_mstrain_480_960_2x_coco.py
CONFIG_NAME=$(basename ${CONFIG_FILE} .py)
WORK_DIR="${HOME}/logs/coco/${CONFIG_NAME}_`date +%Y%m%d_%H%M%S`"
GPU_NUM=4
bash tools/dist_train.sh ${CONFIG_FILE} ${GPU_NUM} --work-dir ${WORK_DIR} --seed 0
```

Even if you have one GPU, we recommend using tools/dist_train.sh and tools/dist_test.sh to avoid a SyncBN issue.
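To make the single-GPU recommendation concrete, here is a small sketch that assembles the same `tools/dist_train.sh` invocation with a GPU count of 1. The helper `build_train_command` and the work directory are hypothetical; the script path and the `--work-dir` and `--seed` flags are the ones used in the commands shown here. The sketch only builds and prints the command; it does not launch training.

```python
import shlex


def build_train_command(config_file, work_dir, gpu_num=1, seed=0):
    """Return the argument list for tools/dist_train.sh (hypothetical helper)."""
    return [
        "bash", "tools/dist_train.sh",
        config_file,
        str(gpu_num),          # the distributed launcher is used even for one GPU
        "--work-dir", work_dir,
        "--seed", str(seed),
    ]


cmd = build_train_command(
    "configs/universenet/universenet50_2008_fp16_4x4_mstrain_480_960_2x_coco.py",
    "logs/coco/single_gpu_run",  # illustrative output directory
)
# Print a copy-pasteable shell line; pass `cmd` to subprocess.run to execute it.
print(shlex.join(cmd))
```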

```bibtex
@article{USB_shinya_2021,
  title={{USB}: Universal-Scale Object Detection Benchmark},
  author={Shinya, Yosuke},
  journal={arXiv:2103.14027},
  year={2021}
}
```

Major parts of the code are released under the Apache 2.0 license. Please check NOTICE for exceptions.

Some code is modified from the repositories of PoolFormer, ConvMLP, TOOD, Swin Transformer, RelationNet++, SEPC, PVT, CBNetV2, GFLv2, DDOD, and NightOwls. When merging, please note that there are some minor differences from the above repositories and the original MMDetection repository.

Documentation: https://mmdetection.readthedocs.io/

MMDetection is an open source object detection toolbox based on PyTorch. It is a part of the OpenMMLab project.

The master branch works with PyTorch 1.3+. The old v1.x branch works with PyTorch 1.1 to 1.4, but v2.0 is strongly recommended for faster speed, higher performance, better design, and friendlier usage.

- Modular Design

  We decompose the detection framework into different components and one can easily construct a customized object detection framework by combining different modules.

- Support of multiple frameworks out of box

  The toolbox directly supports popular and contemporary detection frameworks, e.g. Faster RCNN, Mask RCNN, RetinaNet, etc.

- High efficiency

  All basic bbox and mask operations run on GPUs. The training speed is faster than or comparable to other codebases, including Detectron2, maskrcnn-benchmark and SimpleDet.

- State of the art

  The toolbox stems from the codebase developed by the MMDet team, who won COCO Detection Challenge in 2018, and we keep pushing it forward.
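The modular design described above, where a detector is assembled from interchangeable components, can be illustrated with a small registry pattern. This is a minimal, self-contained sketch under stated assumptions: the `Registry` class, `ToyBackbone`, `ToyHead`, and `Detector` below are hypothetical stand-ins written for illustration, not MMDetection's or mmcv's actual classes.

```python
# Hypothetical minimal registry; names are illustrative, not MMDetection's API.
class Registry:
    def __init__(self, name):
        self.name = name
        self._modules = {}

    def register(self, cls):
        """Class decorator that records a component under its class name."""
        self._modules[cls.__name__] = cls
        return cls

    def build(self, cfg):
        """Instantiate the component named by cfg['type'] with the other keys."""
        cfg = dict(cfg)  # copy so the caller's config is not mutated
        cls = self._modules[cfg.pop("type")]
        return cls(**cfg)


BACKBONES = Registry("backbone")
HEADS = Registry("head")


@BACKBONES.register
class ToyBackbone:
    def __init__(self, depth):
        self.depth = depth

    def __call__(self, x):
        return [x * self.depth]  # stand-in for a list of feature maps


@HEADS.register
class ToyHead:
    def __init__(self, num_classes):
        self.num_classes = num_classes

    def __call__(self, feats):
        return {"scores": [feats[0]] * self.num_classes}


class Detector:
    """Combines independently configured components into one model."""

    def __init__(self, backbone, head):
        self.backbone = BACKBONES.build(backbone)
        self.head = HEADS.build(head)

    def __call__(self, x):
        return self.head(self.backbone(x))


detector = Detector(
    backbone=dict(type="ToyBackbone", depth=2),
    head=dict(type="ToyHead", num_classes=3),
)
result = detector(1.5)
print(result)
```

Swapping a component then only requires changing the `type` entry in the config dictionary, which is the property the modular design is meant to provide.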

Apart from MMDetection, we also released mmcv, a library for computer vision research on which this toolbox heavily depends.

v2.17.0 was released on 28/09/2021. Please refer to changelog.md for details and release history. A comparison between v1.x and v2.0 codebases can be found in compatibility.md.

Results and models are available in the model zoo.

Supported backbones:

- ResNet (CVPR'2016)

- ResNeXt (CVPR'2017)

- VGG (ICLR'2015)

- MobileNetV2 (CVPR'2018)

- HRNet (CVPR'2019)

- RegNet (CVPR'2020)

- Res2Net (TPAMI'2020)

- ResNeSt (ArXiv'2020)

- Swin (ICCV'2021)

- PVT (ICCV'2021)

- PVTv2 (ArXiv'2021)

Supported methods:

- RPN (NeurIPS'2015)

- Fast R-CNN (ICCV'2015)

- Faster R-CNN (NeurIPS'2015)

- Mask R-CNN (ICCV'2017)

- Cascade R-CNN (CVPR'2018)

- Cascade Mask R-CNN (CVPR'2018)

- SSD (ECCV'2016)

- RetinaNet (ICCV'2017)

- GHM (AAAI'2019)

- Mask Scoring R-CNN (CVPR'2019)

- Double-Head R-CNN (CVPR'2020)

- Hybrid Task Cascade (CVPR'2019)

- Libra R-CNN (CVPR'2019)

- Guided Anchoring (CVPR'2019)

- FCOS (ICCV'2019)

- RepPoints (ICCV'2019)

- Foveabox (TIP'2020)

- FreeAnchor (NeurIPS'2019)

- NAS-FPN (CVPR'2019)

- ATSS (CVPR'2020)

- FSAF (CVPR'2019)

- PAFPN (CVPR'2018)

- Dynamic R-CNN (ECCV'2020)

- PointRend (CVPR'2020)

- CARAFE (ICCV'2019)

- DCNv2 (CVPR'2019)

- Group Normalization (ECCV'2018)

- Weight Standardization (ArXiv'2019)

- OHEM (CVPR'2016)

- Soft-NMS (ICCV'2017)

- Generalized Attention (ICCV'2019)

- GCNet (ICCVW'2019)

- Mixed Precision (FP16) Training (ArXiv'2017)

- InstaBoost (ICCV'2019)

- GRoIE (ICPR'2020)

- DetectoRS (ArXiv'2020)

- Generalized Focal Loss (NeurIPS'2020)

- CornerNet (ECCV'2018)

- Side-Aware Boundary Localization (ECCV'2020)

- YOLOv3 (ArXiv'2018)

- PAA (ECCV'2020)

- YOLACT (ICCV'2019)

- CentripetalNet (CVPR'2020)

- VFNet (ArXiv'2020)

- DETR (ECCV'2020)

- Deformable DETR (ICLR'2021)

- CascadeRPN (NeurIPS'2019)

- SCNet (AAAI'2021)

- AutoAssign (ArXiv'2020)

- YOLOF (CVPR'2021)

- Seesaw Loss (CVPR'2021)

- CenterNet (CVPR'2019)

- YOLOX (ArXiv'2021)

- SOLO (ECCV'2020)

Some other methods are also supported in projects using MMDetection.

Please refer to get_started.md for installation.

Please see get_started.md for the basic usage of MMDetection. We provide a Colab tutorial and full guidance for a quick run with an existing dataset and with a new dataset for beginners. There are also tutorials for finetuning models, adding a new dataset, designing data pipelines, customizing models, customizing runtime settings, and useful tools.

Please refer to FAQ for frequently asked questions.

We appreciate all contributions to improve MMDetection. Please refer to CONTRIBUTING.md for the contributing guideline.

MMDetection is an open source project with contributions from researchers and engineers at various colleges and companies. We appreciate all the contributors who implement their methods or add new features, as well as users who give valuable feedback. We hope that the toolbox and benchmark can serve the growing research community by providing a flexible toolkit to reimplement existing methods and develop new detectors.

If you use this toolbox or benchmark in your research, please cite this project.

```bibtex
@article{mmdetection,
  title   = {{MMDetection}: Open MMLab Detection Toolbox and Benchmark},
  author  = {Chen, Kai and Wang, Jiaqi and Pang, Jiangmiao and Cao, Yuhang and
             Xiong, Yu and Li, Xiaoxiao and Sun, Shuyang and Feng, Wansen and
             Liu, Ziwei and Xu, Jiarui and Zhang, Zheng and Cheng, Dazhi and
             Zhu, Chenchen and Cheng, Tianheng and Zhao, Qijie and Li, Buyu and
             Lu, Xin and Zhu, Rui and Wu, Yue and Dai, Jifeng and Wang, Jingdong
             and Shi, Jianping and Ouyang, Wanli and Loy, Chen Change and Lin, Dahua},
  journal = {arXiv preprint arXiv:1906.07155},
  year    = {2019}
}
```

- MMCV: OpenMMLab foundational library for computer vision.

- MIM: MIM Installs OpenMMLab Packages.

- MMClassification: OpenMMLab image classification toolbox and benchmark.

- MMDetection: OpenMMLab detection toolbox and benchmark.

- MMDetection3D: OpenMMLab's next-generation platform for general 3D object detection.

- MMSegmentation: OpenMMLab semantic segmentation toolbox and benchmark.

- MMAction2: OpenMMLab's next-generation action understanding toolbox and benchmark.

- MMTracking: OpenMMLab video perception toolbox and benchmark.

- MMPose: OpenMMLab pose estimation toolbox and benchmark.

- MMEditing: OpenMMLab image and video editing toolbox.

- MMOCR: A Comprehensive Toolbox for Text Detection, Recognition and Understanding.

- MMGeneration: OpenMMLab image and video generative models toolbox.