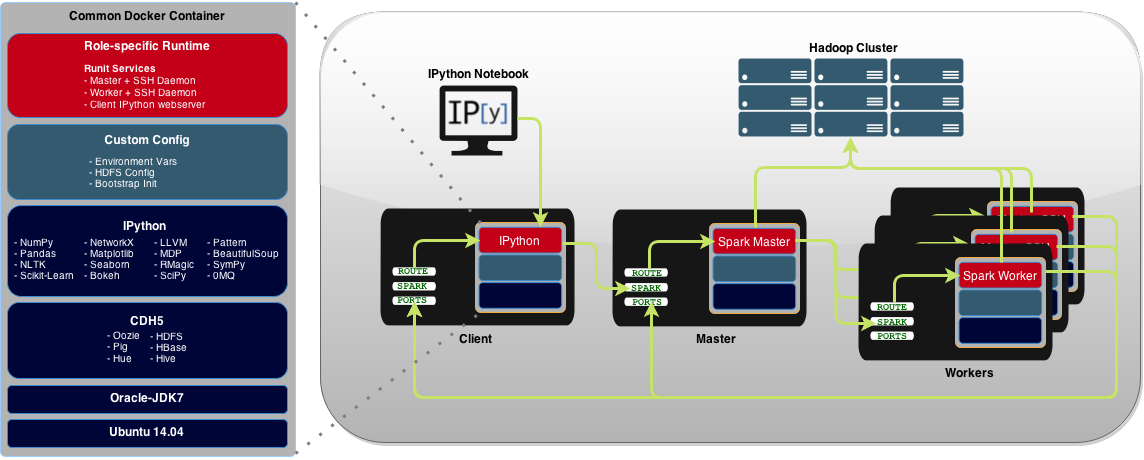

Please see the accompanying blog post, Using Docker to Build an IPython-driven Spark Deployment, for the technical details and motivation behind this project. This repo provides Docker containers to run:

- Spark master and worker(s) on dedicated hosts

- IPython user interface within a dedicated Spark client

Docker containers provide a portable and repeatable method for deploying the cluster. The images include:

- Hadoop ecosystem: HDFS, HBase, Hive, Oozie, Pig, Hue
- Analysis libraries and tools: Pattern, NLTK, Pandas, NumPy, SciPy, SymPy, Seaborn, Cython, Numba, Biopython, Rmagic, 0MQ, Matplotlib, Scikit-Learn, Statsmodels, Beautiful Soup, NetworkX, LLVM, Bokeh, Vincent, MDP

Installation and Deployment: build each Docker image, then run each one on its own dedicated host.

Prerequisites

- Deploy a Hadoop/HDFS cluster. Spark uses a cluster to distribute analysis of data pulled from multiple sources, including the Hadoop Distributed File System (HDFS). The ephemeral nature of Docker containers makes them ill-suited for persisting long-term data in a cluster. Instead of storing data within the Docker containers' HDFS nodes or mounting host volumes, we recommend pointing this cluster at an external Hadoop deployment. Cloudera provides complete resources for installing and configuring its Hadoop distribution (CDH). This repo has been tested with CDH5.

Build and configure hosts

Install Docker v1.5+, the jq JSON processor, and iptables. For example, on an Ubuntu host, run `./0-prepare-host.sh`.
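To confirm that those prerequisites are in place, a small check along these lines can help. It is a sketch, not a script from this repo: it assumes a Bash shell, a `sort` that supports `-V` (version sort, as in GNU coreutils), and that `docker --version` prints something like `Docker version 1.5.0, build ...`. The function names `version_ge` and `check_host` are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of this repo): confirm host prerequisites.

# Succeed if dotted version $1 is greater than or equal to $2.
# Assumes `sort -V` (version sort) is available.
version_ge() {
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# Report missing tools and a Docker client older than 1.5; return 1 on any problem.
check_host() {
  local tool docker_version status=0
  for tool in docker jq iptables; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      echo "missing: $tool" >&2
      status=1
    fi
  done
  if command -v docker >/dev/null 2>&1; then
    # Assumes output such as "Docker version 1.5.0, build a8a31ef".
    docker_version="$(docker --version | sed -E 's/^Docker version ([0-9][0-9.]*).*/\1/')"
    if ! version_ge "$docker_version" "1.5"; then
      echo "docker $docker_version is older than 1.5" >&2
      status=1
    fi
  fi
  return "$status"
}
```

After sourcing these functions, `check_host && echo "host is ready"` prints a confirmation only when every tool is found and the Docker client is recent enough.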

- Update the Hadoop configuration files in `runtime/cdh5/<hadoop|hive>/<multiple-files>` with the correct hostnames for your Hadoop cluster. Use `grep FIXME -R .` to find the hostnames to change.

- Generate a new SSH keypair (`config/ssh/id_rsa` and `config/ssh/id_rsa.pub`) and add the public key to `config/ssh/authorized_keys`.

- (optional) Update the `SPARK_WORKER_CONFIG` environment variable to set Spark-specific options such as executor cores, either with a shell `export` command or by editing `config/sv/spark-client-iython/ipython/run`.

- (optional) Comment out any unwanted packages in the base image's Dockerfile, `dockerfiles/lab41/spark-base.dockerfile`.
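The SSH keypair step can be scripted roughly as follows. This is a sketch, assuming it runs from the repository root with OpenSSH's `ssh-keygen` installed; the 4096-bit RSA key size and the empty passphrase are illustrative choices, not requirements stated by this repo.

```shell
#!/usr/bin/env bash
# Sketch: create config/ssh/id_rsa and id_rsa.pub, then authorize the public key.
set -euo pipefail

ssh_dir="config/ssh"
mkdir -p "$ssh_dir"

# Create a passphrase-less RSA keypair only if none exists yet;
# ssh-keygen would otherwise stop and ask before overwriting.
if [ ! -f "$ssh_dir/id_rsa" ]; then
  ssh-keygen -q -t rsa -b 4096 -N "" -f "$ssh_dir/id_rsa"
fi

# Append the public key to authorized_keys unless that exact line is already present.
touch "$ssh_dir/authorized_keys"
pub_key="$(cat "$ssh_dir/id_rsa.pub")"
if ! grep -qxF "$pub_key" "$ssh_dir/authorized_keys"; then
  printf '%s\n' "$pub_key" >> "$ssh_dir/authorized_keys"
fi
```

Running it a second time leaves an existing keypair and an already-authorized key untouched.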

Get Docker images, either by pulling them from Docker Hub:

- `docker pull lab41/spark-master`
- `docker pull lab41/spark-worker`
- `docker pull lab41/spark-client-ipython`

or by building them yourself with `./1-build.sh`.

Deploy cluster nodes

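- On the master host: `./2-run-spark-master.sh`
- On each worker host: `./3-run-spark-worker.sh spark://spark-master-fqdn:7077`
- On the client host: `./4-run-spark-client-ipython.sh spark://spark-master-fqdn:7077`

Replace `spark-master-fqdn` with the fully qualified domain name of the master host.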
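The worker and client containers connect to the master at `spark://spark-master-fqdn:7077`, so it can be useful to wait until that port accepts connections before starting them. A minimal sketch using Bash's built-in `/dev/tcp` redirection (available when Bash is built with network redirections, as on common Linux distributions) follows. `wait_for_port` is a hypothetical helper, not a script in this repo, and it assumes port 7077 on the master is reachable from the worker and client hosts.

```shell
#!/usr/bin/env bash
# Hypothetical helper: poll HOST:PORT once per second until a TCP connection succeeds.
# Usage: wait_for_port HOST PORT [ATTEMPTS]; returns 1 if every attempt fails.
# Note: a host that silently drops packets can make each attempt block
# until the operating system's TCP connect timeout.
wait_for_port() {
  local host="$1" port="$2" attempts="${3:-30}" i
  for ((i = 0; i < attempts; i++)); do
    # Redirecting to /dev/tcp/HOST/PORT makes Bash open a TCP connection;
    # the subshell closes it immediately, and connection errors are silenced.
    if (: > "/dev/tcp/${host}/${port}") 2>/dev/null; then
      return 0
    fi
    sleep 1
  done
  return 1
}
```

For example, on a worker host: `wait_for_port spark-master-fqdn 7077 && ./3-run-spark-worker.sh spark://spark-master-fqdn:7077`.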