Code for the paper: "Federated Adversarial Debiasing for Fair and Transferable Representations" Junyuan Hong, Zhuangdi Zhu, Shuyang Yu, Zhangyang Wang, Hiroko Dodge, and Jiayu Zhou. KDD'21 [paper] [slides]

TL;DR: FADE is the first work showing that clients can optimize a group-to-group adversarial debiasing objective [1] without having the adversarial data (the other group's data) on the local device. The technique is applicable to unsupervised domain adaptation (UDA) and group-fair learning. In UDA, our method outperforms SHOT, the state-of-the-art UDA method that works without source data, in the federated learning setting.

[1] Ganin, Y., & Lempitsky, V. (2015). Unsupervised domain adaptation by backpropagation. ICML.

Abstract

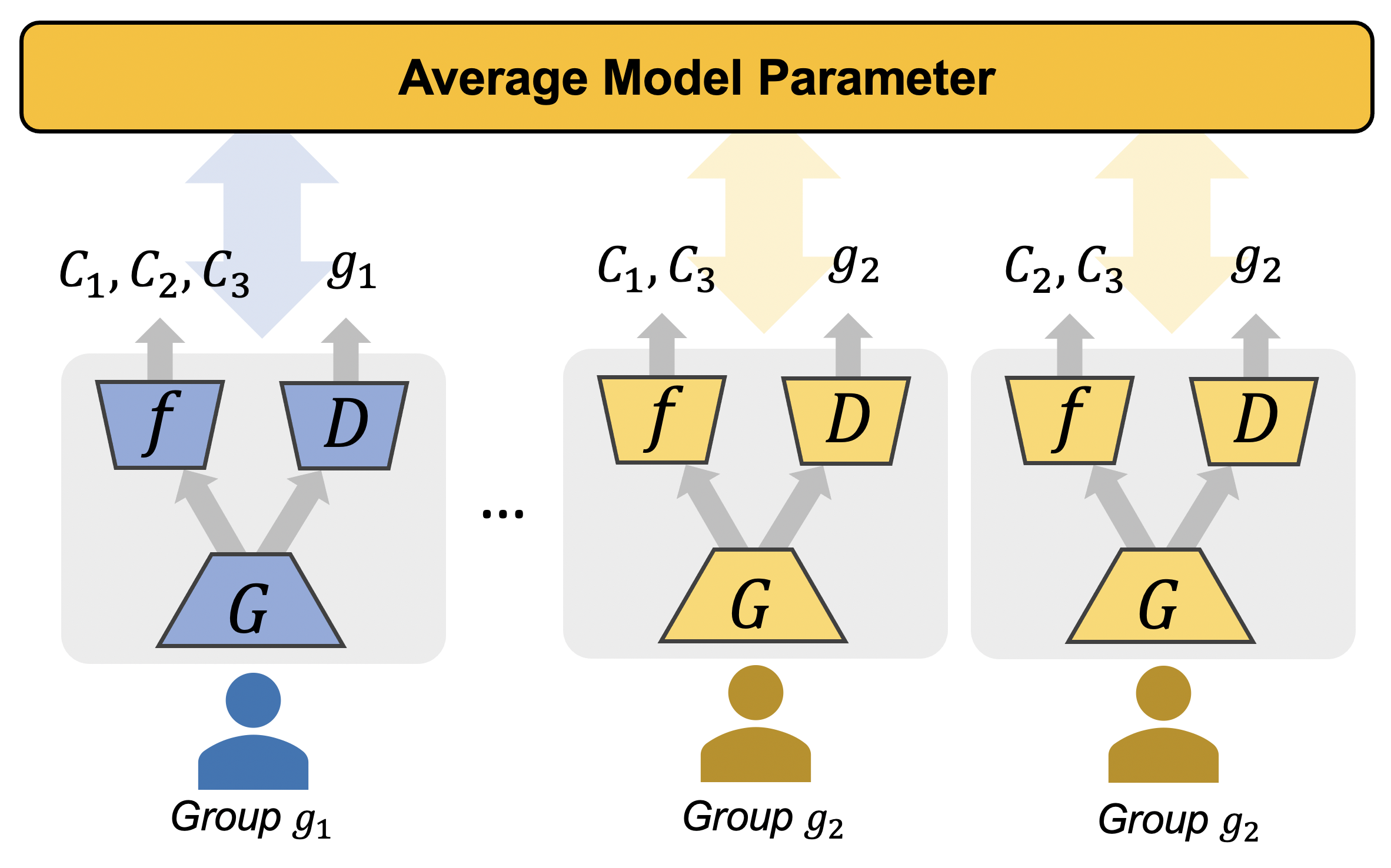

Federated learning is a distributed learning framework that is communication efficient and protects participating users' raw training data. One outstanding challenge of federated learning comes from the users' heterogeneity, and learning from such data may yield biased and unfair models for minority groups. While adversarial learning is commonly used in centralized learning to mitigate bias, there are significant barriers to extending it to the federated framework. In this work, we study these barriers and address them by proposing a novel approach, Federated Adversarial DEbiasing (FADE). FADE does not require users' sensitive group information for debiasing and offers users the freedom to opt out of the adversarial component when privacy or computational costs become a concern. We show that, ideally, FADE can attain the same global optimality as the centralized algorithm. We then analyze when its convergence may fail in practice and propose a simple yet effective method to address the problem. Finally, we demonstrate the effectiveness of the proposed framework through extensive empirical studies, including the problem settings of unsupervised domain adaptation and fair learning.
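The idea that a client can contribute to the adversarial objective without holding the other group's data rests on the adversarial loss decomposing over samples. The sketch below is a simplified illustration under stated assumptions, not FADE's implementation: a hypothetical logistic discriminator on scalar features, a binary cross-entropy loss, and each client holding samples from a single group only. Weighting each client's local loss by its share of the samples reproduces the pooled (centralized) loss exactly, so in this toy setting the two objectives coincide even though no client ever sees the other group's data.

```python
import math
from typing import List, Sequence, Tuple

# Hypothetical toy setup: each client holds scalar features from ONE group (0 or 1).
Client = Tuple[Sequence[float], int]


def disc_prob(w: float, b: float, z: float) -> float:
    """Toy logistic discriminator: probability that feature z comes from group 1."""
    return 1.0 / (1.0 + math.exp(-(w * z + b)))


def bce(p: float, label: int) -> float:
    """Binary cross-entropy of predicted probability p against a 0/1 group label."""
    return -(label * math.log(p) + (1 - label) * math.log(1.0 - p))


def local_adv_loss(w: float, b: float, client: Client) -> float:
    # Only the client's own samples and its own group label are needed.
    features, group = client
    return sum(bce(disc_prob(w, b, z), group) for z in features) / len(features)


def federated_adv_loss(w: float, b: float, clients: List[Client]) -> float:
    # Server-side view: average the local losses weighted by each client's sample share.
    total = sum(len(features) for features, _ in clients)
    return sum(len(c[0]) / total * local_adv_loss(w, b, c) for c in clients)


def centralized_adv_loss(w: float, b: float, clients: List[Client]) -> float:
    # Reference: the same loss computed on all data pooled in one place.
    pooled = [(z, group) for features, group in clients for z in features]
    return sum(bce(disc_prob(w, b, z), g) for z, g in pooled) / len(pooled)


clients = [([0.2, 0.5, 0.9], 0), ([1.5, 2.0], 1), ([-0.3], 0)]
fed = federated_adv_loss(0.8, -0.4, clients)
cen = centralized_adv_loss(0.8, -0.4, clients)
print(abs(fed - cen) < 1e-12)  # prints True
```

Because the two losses are equal as functions of the discriminator parameters, their gradients are equal too; the hard part FADE addresses (convergence in practice, users opting out) is outside what this toy shows.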

Clone the repository and set up the environment.

```bash
git clone git@github.com:illidanlab/FADE.git
cd FADE
# create conda env
conda env create -f conda.yml
conda activate fade
# run
python -m fade.mainx
```

To run repeated experiments, we use wandb for logging. Run

```bash
wandb sweep <sweep.yaml>
```

Note: you need a wandb account; you will be asked to log in on the first run.

- Office: Download the zip file from here (preprocessed by SHOT) and unpack it into ./data/office31. Verify the file structure to make sure all referenced image paths exist.
- OfficeHome: Download the zip file from here (preprocessed by SHOT) and unpack it into ./data/OfficeHome65. Verify the file structure to make sure all referenced image paths exist.
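One way to verify the unpacked layout is to check that every image listed in the dataset's list files exists on disk. The sketch below assumes, without confirmation from this repository, that each list file has one "<image path> <label>" entry per line, as in SHOT-style preprocessed lists; the function name find_missing_images is hypothetical.

```python
from pathlib import Path
from typing import List


def find_missing_images(list_file: str, root: str = ".") -> List[str]:
    """Return image paths listed in list_file that do not exist under root.

    Assumes (hypothetically) one "<image path> <label>" entry per line.
    Absolute paths in the list are checked as-is, since joining an absolute
    path onto root yields that absolute path.
    """
    root_path = Path(root)
    missing: List[str] = []
    with open(list_file, encoding="utf-8") as fh:
        for raw in fh:
            line = raw.strip()
            if not line:
                continue  # skip blank lines
            # Drop the trailing label; keep the path even if it contains spaces.
            img_path = line.rsplit(maxsplit=1)[0]
            if not (root_path / img_path).exists():
                missing.append(img_path)
    return missing
```

For example, calling find_missing_images on a list file inside ./data/office31 (the exact file name depends on the downloaded archive) returns an empty list when every referenced image is in place.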

For each UDA task, we first pre-train models on the source domain. You can pre-train these models yourself:

```bash
source sweeps/Office31_UDA/A_fedavg.sh
```

Alternatively, you may download the pre-trained source-domain models from here and place them under out/models/.

To add soon.

- Office dataset

Demo wandb project page: fade-demo-Office31_X2X_UDA. Check sweeps here.

```bash
# pretrain the model on domains A, D, W
source sweeps/Office31_UDA/A_fedavg.sh
# create wandb sweeps for A2X, D2X, W2X, where X is one of the other two domains
# the command will print the wandb agent commands
source sweeps/Office31_UDA/sweep_all.sh
# run the wandb agent commands from the prompt or the sweep page
wandb agent <agent id>
```

- OfficeHome dataset

```bash
# pretrain the model on domain R
source sweeps/OfficeHome65_1to3_uda_iid/R_fedavg.sh
# create wandb sweeps for R2X, where X is one of the remaining domains
# the command will print the wandb agent commands
source sweeps/OfficeHome65_1to3_uda_iid/sweep_all.sh
# run the wandb agent commands from the prompt or the sweep page
wandb agent <agent id>
```
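The A2X/D2X/W2X and R2X notation in the sweep comments expands to concrete source-to-target pairs. The helper below is a hypothetical illustration, not part of the repository, that enumerates them: Office-31 has three domains (Amazon, DSLR, Webcam), so using every domain as a source gives six tasks, while the OfficeHome sweeps use only R (Real-World) as the source against the remaining domains (Art, Clipart, Product).

```python
from typing import Iterable, List, Optional


def uda_tasks(domains: Iterable[str], sources: Optional[Iterable[str]] = None) -> List[str]:
    """Return task names '<source>2<target>' for ordered pairs of distinct domains.

    If sources is given, only those domains are used as sources.
    """
    domain_list = list(domains)
    source_list = domain_list if sources is None else list(sources)
    return [f"{s}2{t}" for s in source_list for t in domain_list if t != s]


# Office-31: Amazon, DSLR, Webcam; every domain serves as a source.
print(uda_tasks("ADW"))  # ['A2D', 'A2W', 'D2A', 'D2W', 'W2A', 'W2D']
# OfficeHome: Art, Clipart, Product, Real-World; only R is the source here.
print(uda_tasks("ACPR", sources="R"))  # ['R2A', 'R2C', 'R2P']
```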

To extend the FADE framework with other debiasing methods, you need to update the user and server code. To start, read the GroupAdvUser class in fade/user/group_adv.py and the FedAdv class in fade/server/FedAdv.py.

Typically, you will need to update the compute_loss method of the GroupAdvUser class to customize the loss computation.
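As an illustration of this kind of change, the sketch below overrides a compute_loss method in a subclass. Both classes are hypothetical toy stand-ins working on plain floats; they do not reproduce the actual signature of GroupAdvUser.compute_loss in fade/user/group_adv.py, which should be checked before porting the idea. The base class uses the min-max form of adversarial debiasing from [1], where the encoder minimizes the task loss minus the weighted discriminator loss. The subclass swaps in the adaptation-factor schedule from [1], lambda_p = 2 / (1 + exp(-gamma * p)) - 1 with gamma = 10, which ramps the adversarial weight from 0 toward 1 as training progress p goes from 0 to 1.

```python
import math


class ToyAdvUser:
    """Hypothetical stand-in for a client; NOT FADE's GroupAdvUser API."""

    def __init__(self, adv_weight: float = 1.0) -> None:
        self.adv_weight = adv_weight

    def compute_loss(self, task_loss: float, adv_loss: float, progress: float) -> float:
        # Encoder objective: minimize the task loss while maximizing the
        # discriminator's loss, hence the minus sign on the adversarial term.
        # (progress is unused here; subclasses may use it.)
        return task_loss - self.adv_weight * adv_loss


class ScheduledAdvUser(ToyAdvUser):
    """Customized loss: ramp the adversarial term in with the schedule from [1]."""

    def __init__(self, adv_weight: float = 1.0, gamma: float = 10.0) -> None:
        super().__init__(adv_weight)
        self.gamma = gamma

    def compute_loss(self, task_loss: float, adv_loss: float, progress: float) -> float:
        # lambda_p = 2 / (1 + exp(-gamma * p)) - 1 is 0 at p = 0 and close to 1 at p = 1.
        lam = 2.0 / (1.0 + math.exp(-self.gamma * progress)) - 1.0
        return task_loss - self.adv_weight * lam * adv_loss


for p in (0.0, 0.1, 1.0):
    print(p, round(ScheduledAdvUser().compute_loss(1.0, 0.5, p), 4))
```

Keeping the schedule inside the overridden method leaves the rest of the training loop unchanged, which is the point of customizing through compute_loss.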

If you find the repository useful, please cite our paper.

```bibtex
@inproceedings{hong2021federated,
  title={Federated Adversarial Debiasing for Fair and Transferable Representations},
  author={Hong, Junyuan and Zhu, Zhuangdi and Yu, Shuyang and Wang, Zhangyang and Dodge, Hiroko and Zhou, Jiayu},
  booktitle={Proceedings of the 27th ACM SIGKDD International Conference on Knowledge Discovery \& Data Mining},
  year={2021}
}
```

Acknowledgement

This material is based in part upon work supported by the National Science Foundation under Grants IIS-1749940 and EPCN-2053272, the Office of Naval Research under Grant N00014-20-1-2382, and the National Institute on Aging (NIA) under Grants R01AG051628, R01AG056102, P30AG066518, P30AG024978, and RF1AG072449.