(Disclaimer: this is a work in progress and does not feature all the functionalities of Detectron. Currently only inference and evaluation are supported -- no training) (News: Now supporting FPN and ResNet-101!)

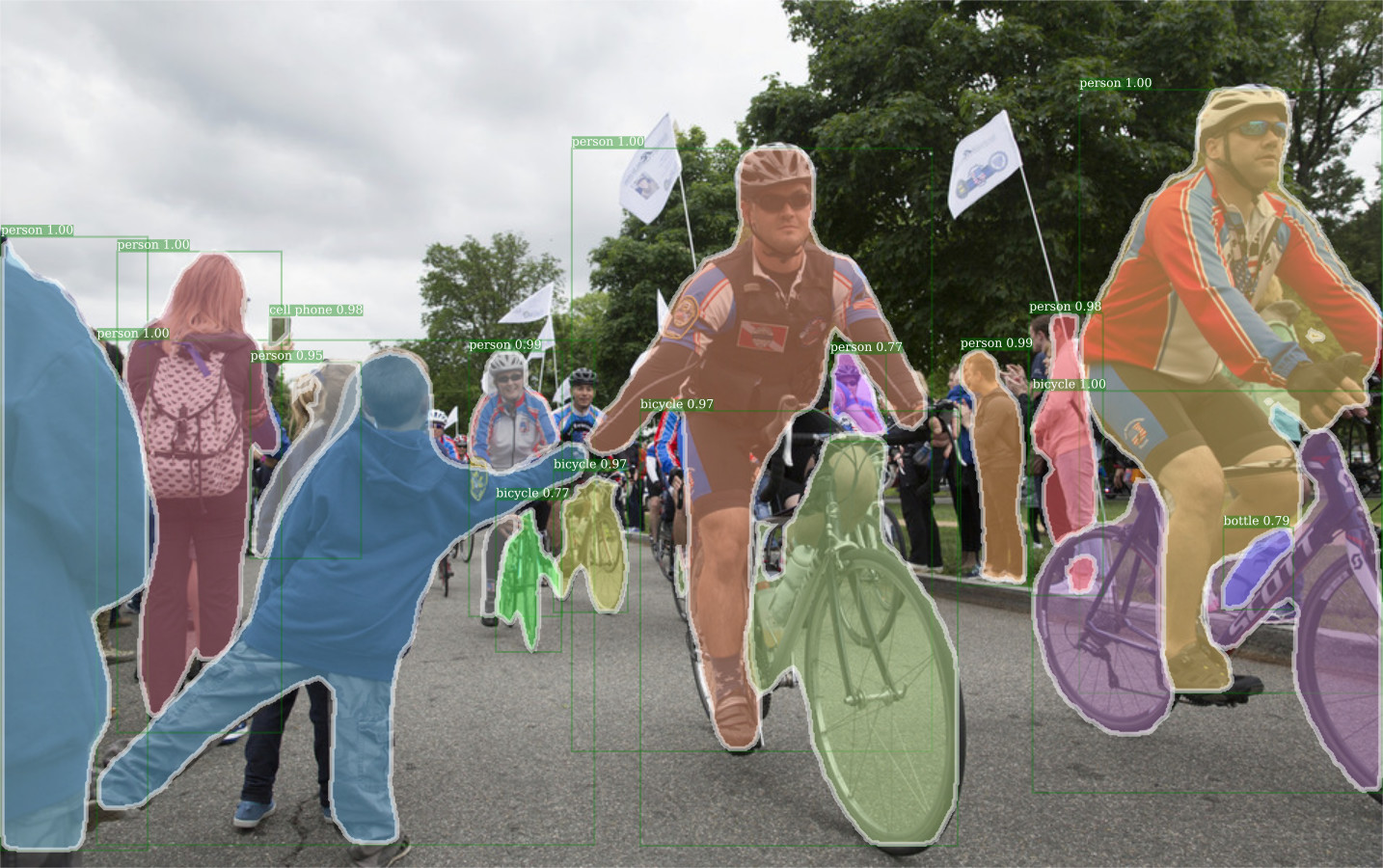

This code lets you use some of the Detectron object detection models from Facebook AI Research with PyTorch.

It currently supports:

- Fast R-CNN

- Faster R-CNN

- Mask R-CNN

It supports ResNet-50/101 models with or without FPN. The pre-trained models from Caffe2 can be imported and used in PyTorch.

Both bounding box evaluation and instance segmentation evaluation were tested, yielding the same results as the Detectron Caffe2 models. The results below were computed using the PyTorch code:

| Model | box AP | mask AP | model id |
|---|---|---|---|
| fast_rcnn_R-50-C4_2x | 35.6 | | 36224046 |
| fast_rcnn_R-50-FPN_2x | 36.8 | | 36225249 |
| e2e_faster_rcnn_R-50-C4_2x | 36.5 | | 35857281 |
| e2e_faster_rcnn_R-50-FPN_2x | 37.9 | | 35857389 |
| e2e_mask_rcnn_R-50-C4_2x | 37.8 | 32.8 | 35858828 |
| e2e_mask_rcnn_R-50-FPN_2x | 38.6 | 34.5 | 35859007 |
| e2e_mask_rcnn_R-101-FPN_2x | 40.9 | 36.4 | 35861858 |

Training code is experimental. See `train_fast.py` for training Fast R-CNN. It seems to work, but it is slow.

First, clone the repo with `git clone --recursive https://github.com/ignacio-rocco/detectorch` so that you also clone the COCO API.

The code can be used with PyTorch 0.3.1 or PyTorch 0.4 (master) under Python 3. Anaconda is recommended. Other required packages:

- torchvision (`conda install torchvision -c soumith`)

- opencv (`conda install -c conda-forge opencv`)

- cython (`conda install cython`)

- matplotlib (`conda install matplotlib`)

- scikit-image (`conda install scikit-image`)

- ninja (`conda install ninja`) (required for PyTorch 0.4 only)
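As a quick sanity check after installing, the sketch below reports which of the packages listed above cannot be located in the current environment. The helper name `find_missing` and the `REQUIRED_MODULES` mapping are illustrative and not part of this repo; the mapping assumes the usual import names (`cv2` for opencv, `Cython` for cython, `skimage` for scikit-image).

```python
import importlib.util

# Hypothetical mapping (not part of this repo): import name -> conda package name.
# Some import names differ from the package names used by conda.
REQUIRED_MODULES = {
    "torch": "pytorch",
    "torchvision": "torchvision",
    "cv2": "opencv",
    "Cython": "cython",
    "matplotlib": "matplotlib",
    "skimage": "scikit-image",
}


def find_missing(modules=REQUIRED_MODULES):
    """Return the package names whose import module cannot be located."""
    missing = []
    for module_name, package_name in modules.items():
        # find_spec returns None when a top-level module is not installed.
        if importlib.util.find_spec(module_name) is None:
            missing.append(package_name)
    return missing


if __name__ == "__main__":
    missing = find_missing()
    if missing:
        print("Missing packages:", ", ".join(missing))
    else:
        print("All required packages were found.")
```

ninja is a command-line tool rather than a module this check imports, so it is not covered here; `shutil.which("ninja")` is one way to look for it on the `PATH`.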

Additionally, you need to build the COCO API and the RoIAlign layer. See below.

If you cloned this repo with `git clone --recursive`, you should also have cloned the cocoapi into `lib/cocoapi`. Compile it with:

cd lib/cocoapi/PythonAPI

make install

The RoIAlign layer was converted from the Caffe2 version. There are two implementations, one for each PyTorch version:

- PyTorch 0.4: RoIAlign using the ATen library (`lib/cppcuda`). It is JIT-compiled when loaded.

- PyTorch 0.3.1: RoIAlign using TH/THC and cffi (`lib/cppcuda_cffi`). It needs to be compiled with:

cd lib/cppcuda_cffi

./make.sh
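To make the choice between the two implementations explicit, here is a minimal sketch that maps a PyTorch version string to the matching directory. The function `roialign_source_dir` is a hypothetical helper and not part of this repo; only the two directory names come from the list above.

```python
def roialign_source_dir(torch_version):
    """Map a PyTorch version string to the RoIAlign directory for that version.

    Hypothetical helper for illustration; only 0.3.x and 0.4.x are handled.
    """
    # Keep only the major and minor components, e.g. "0.4.0a0+abc" -> (0, 4).
    major, minor = (int(part) for part in torch_version.split(".")[:2])
    if (major, minor) == (0, 4):
        return "lib/cppcuda"       # ATen version, compiled JIT when loaded
    if (major, minor) == (0, 3):
        return "lib/cppcuda_cffi"  # TH/THC + cffi version, built with ./make.sh
    raise ValueError("Unsupported PyTorch version: " + torch_version)


# Typical use (assumes torch is installed):
#   import torch
#   print(roialign_source_dir(torch.__version__))
```

Only the cffi directory needs the manual `./make.sh` step; the ATen version is compiled the first time it is loaded.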

Check the demo notebook.