A curated list for Efficient Large Language Models:

- Knowledge Distillation

- Network Pruning

- Quantization

- Inference Acceleration

- Efficient Structure Design

- Text Compression

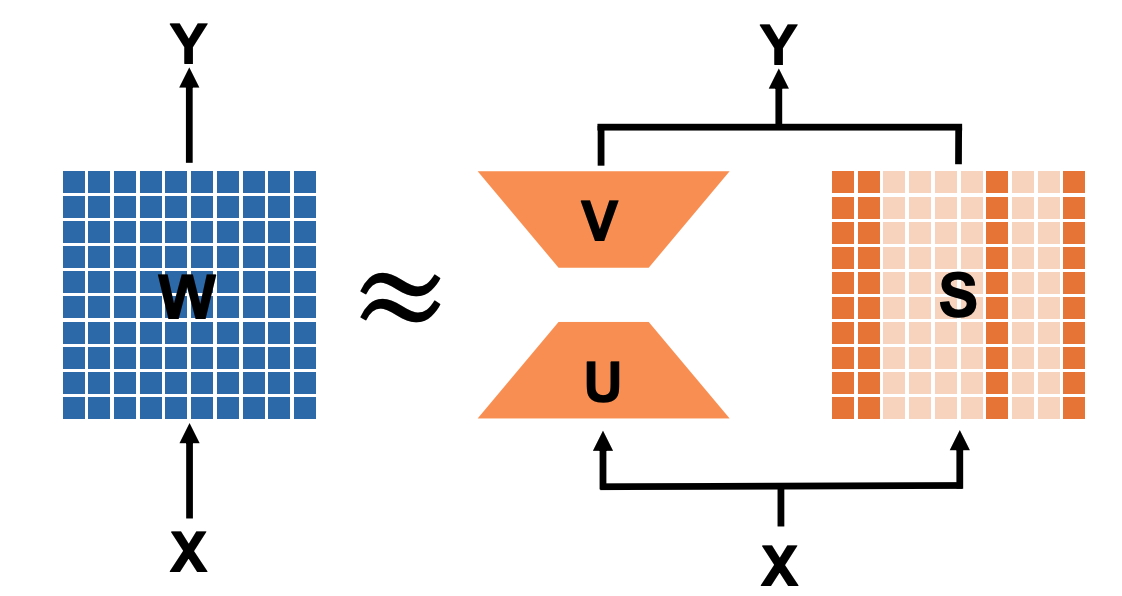

- Low-Rank Decomposition

- Survey

- Hardware

- Others

Because many current publications conduct their experiments on PLMs (such as BERT and BART), a new subdirectory, `efficient_plm/`, has been created to house papers that apply to PLMs but whose effectiveness on LLMs has yet to be verified (this does not imply that they are unsuitable for LLMs).

| Title & Authors | Introduction | Links |

|---|---|---|

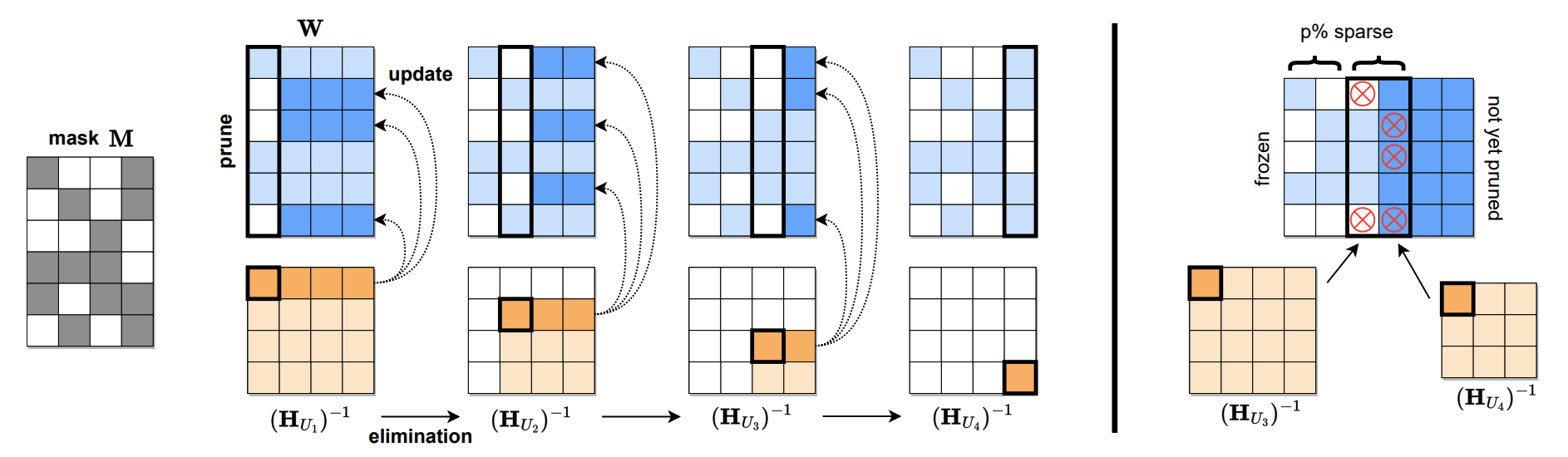

SparseGPT: Massive Language Models Can Be Accurately Pruned in One-Shot Elias Frantar, Dan Alistarh |

|

Github paper |

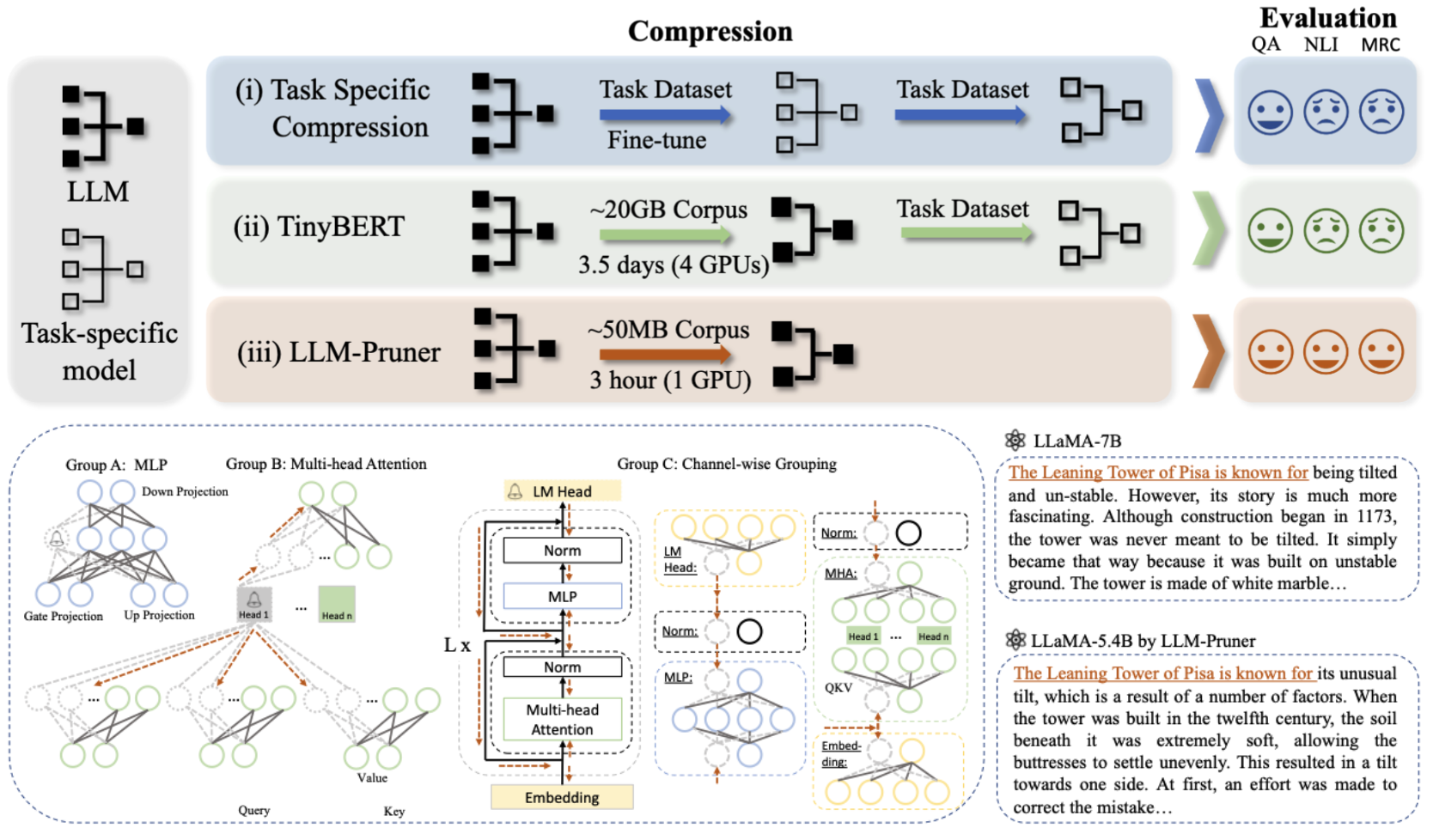

LLM-Pruner: On the Structural Pruning of Large Language Models Xinyin Ma, Gongfan Fang, Xinchao Wang |

|

Github paper |

A Simple and Effective Pruning Approach for Large Language Models Mingjie Sun, Zhuang Liu, Anna Bair, J. Zico Kolter |

|

Github Paper |

The Emergence of Essential Sparsity in Large Pre-trained Models: The Weights that Matter Ajay Jaiswal, Shiwei Liu, Tianlong Chen, Zhangyang Wang |

|

Github Paper |

| Title & Authors | Introduction | Links |

|---|---|---|

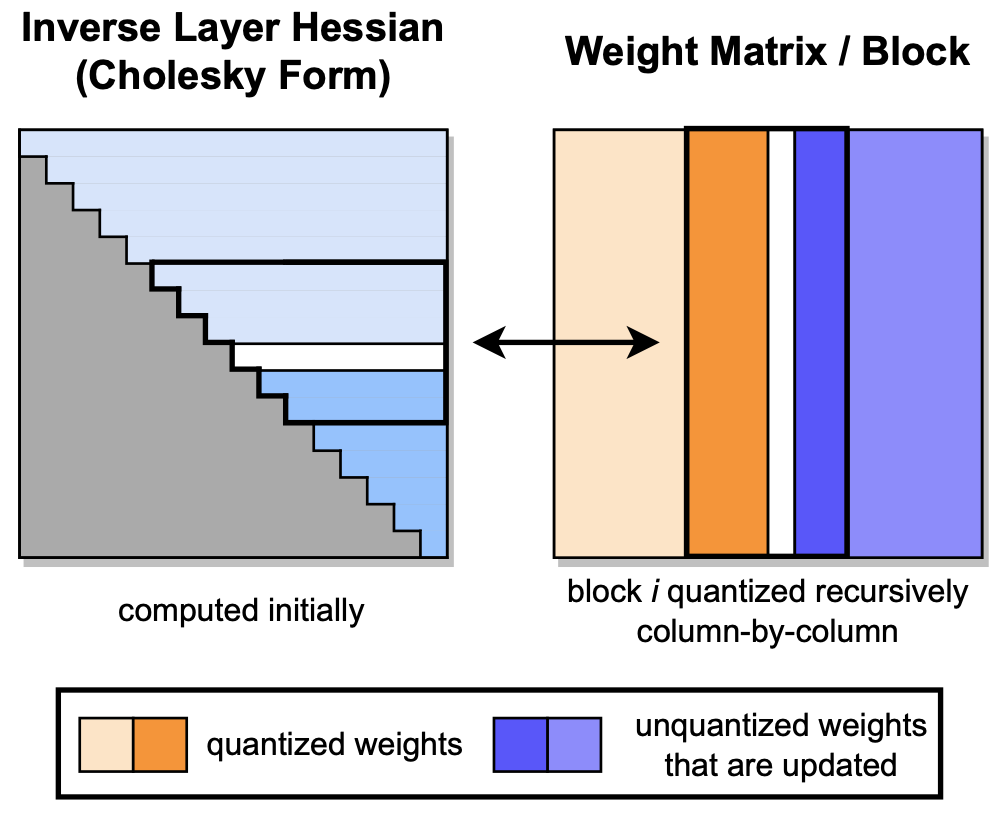

GPTQ: Accurate Post-Training Quantization for Generative Pre-trained Transformers Elias Frantar, Saleh Ashkboos, Torsten Hoefler, Dan Alistarh |

|

Github Paper |

GPTQ-for-LLaMA: 4 bits quantization of LLaMA using GPTQ. |

|

Github |

SmoothQuant: Accurate and Efficient Post-Training Quantization for Large Language Models Guangxuan Xiao, Ji Lin, Mickael Seznec, Hao Wu, Julien Demouth, Song Han |

|

Github Paper |

AWQ: Activation-aware Weight Quantization for LLM Compression and Acceleration Ji Lin, Jiaming Tang, Haotian Tang, Shang Yang, Xingyu Dang, Song Han |

|

Github Paper |

RPTQ: Reorder-based Post-training Quantization for Large Language Models Zhihang Yuan and Lin Niu and Jiawei Liu and Wenyu Liu and Xinggang Wang and Yuzhang Shang and Guangyu Sun and Qiang Wu and Jiaxiang Wu and Bingzhe Wu |

|

Github Paper |

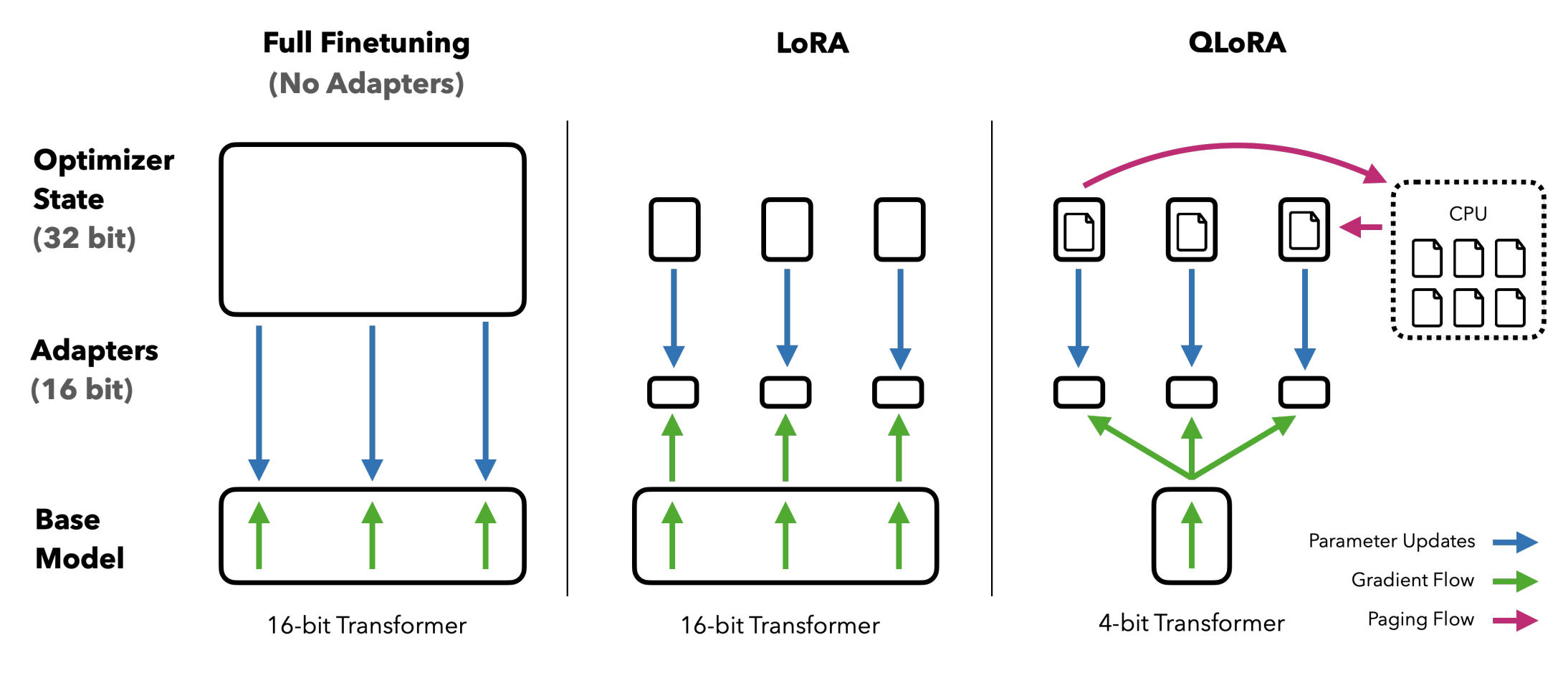

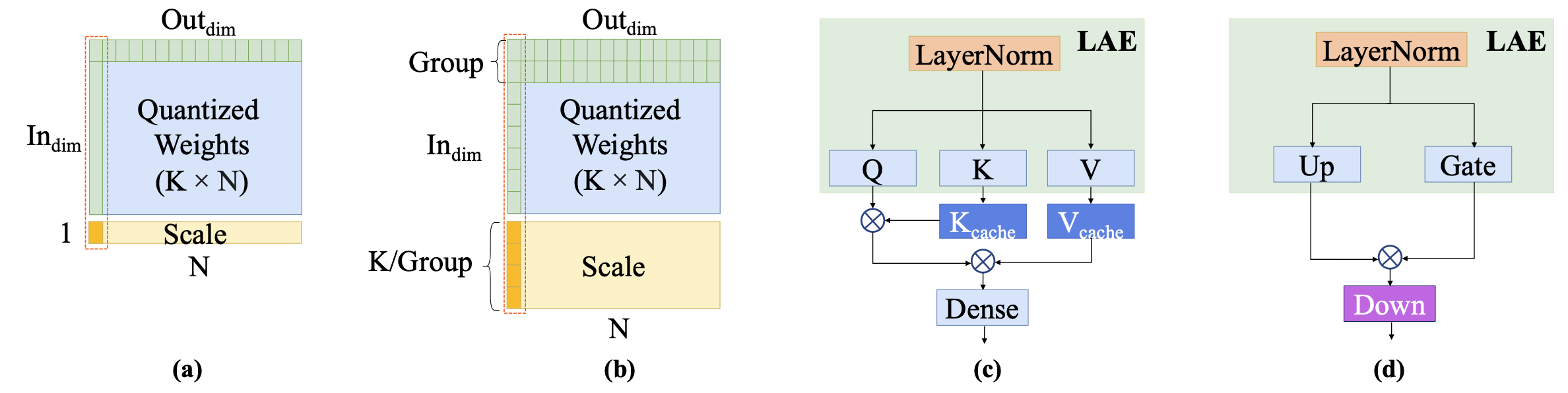

QLoRA: Efficient Finetuning of Quantized LLMs Tim Dettmers, Artidoro Pagnoni, Ari Holtzman, Luke Zettlemoyer |

|

Github Paper |

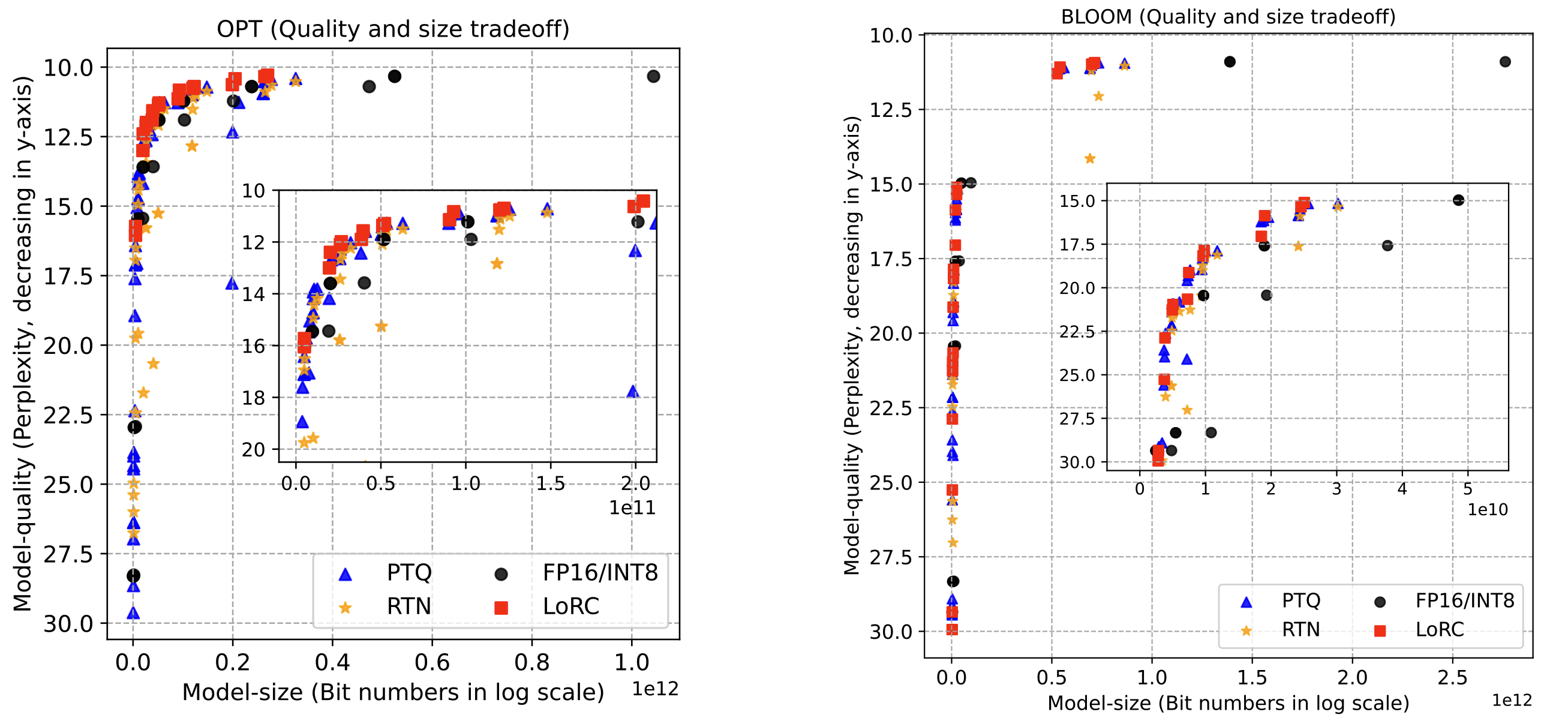

| ZeroQuant-V2: Exploring Post-training Quantization in LLMs from Comprehensive Study to Low Rank Compensation Zhewei Yao, Xiaoxia Wu, Cheng Li, Stephen Youn, Yuxiong He |

|

Paper |

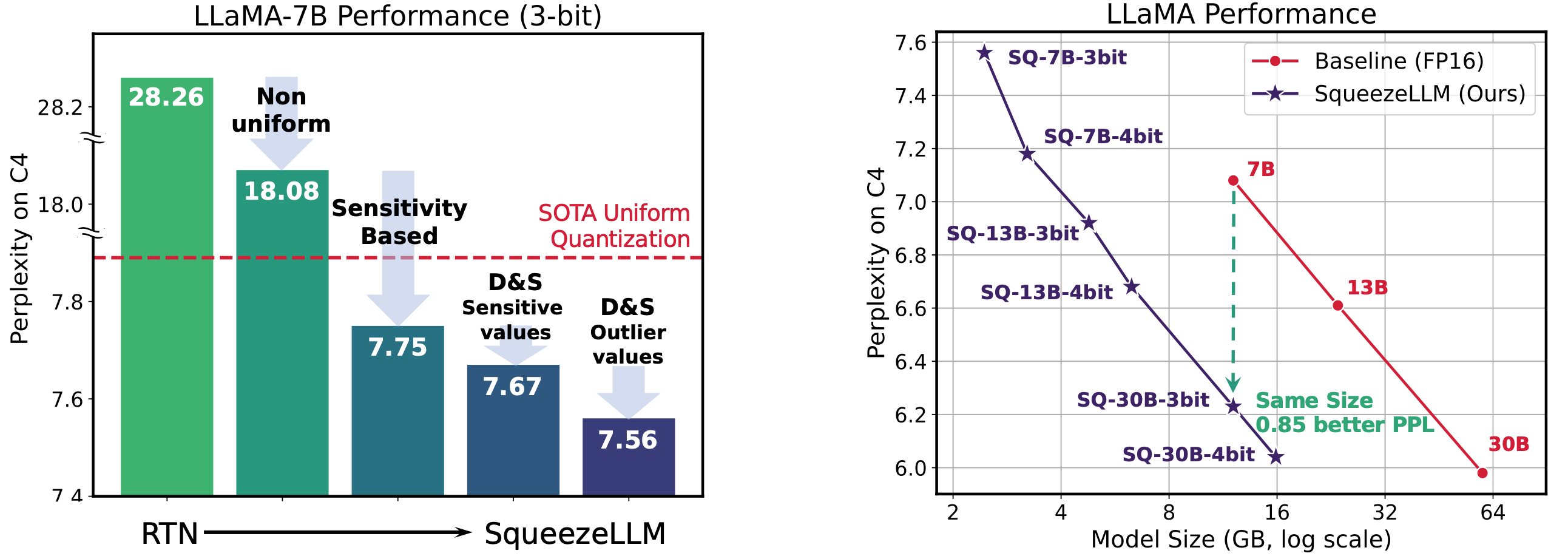

SqueezeLLM: Dense-and-Sparse Quantization Sehoon Kim, Coleman Hooper, Amir Gholami, Zhen Dong, Xiuyu Li, Sheng Shen, Michael W. Mahoney, Kurt Keutzer |

|

Github Paper |

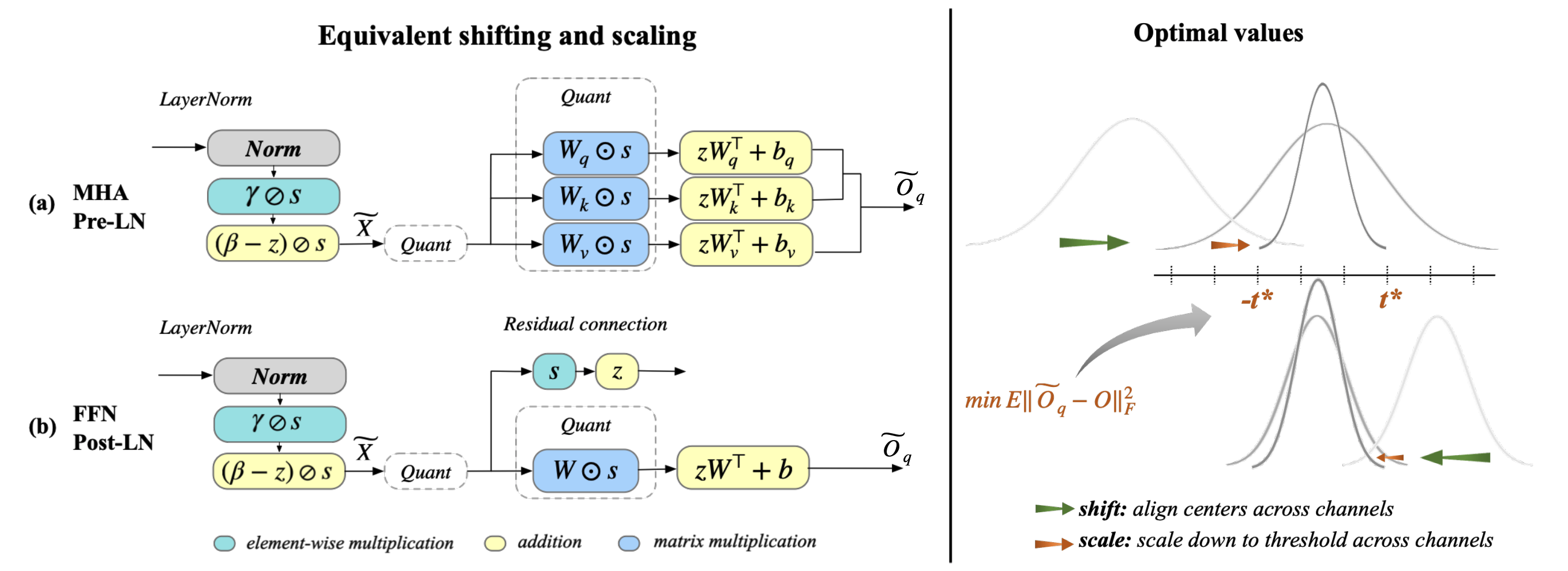

| Outlier Suppression+: Accurate quantization of large language models by equivalent and optimal shifting and scaling Xiuying Wei , Yunchen Zhang, Yuhang Li, Xiangguo Zhang, Ruihao Gong, Jinyang Guo, Xianglong Liu |

|

Paper |

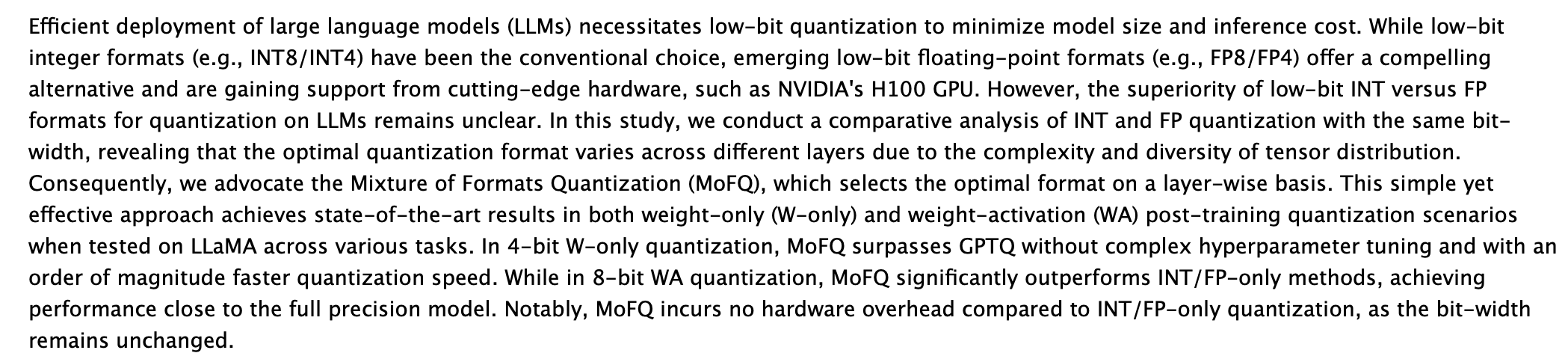

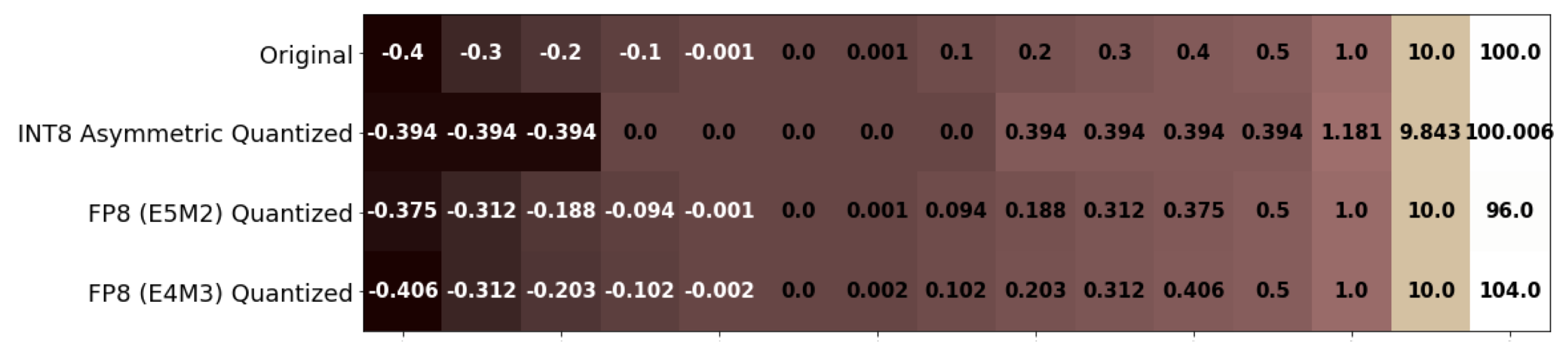

| Integer or Floating Point? New Outlooks for Low-Bit Quantization on Large Language Models Yijia Zhang, Lingran Zhao, Shijie Cao, Wenqiang Wang, Ting Cao, Fan Yang, Mao Yang, Shanghang Zhang, Ningyi Xu |

|

Paper |

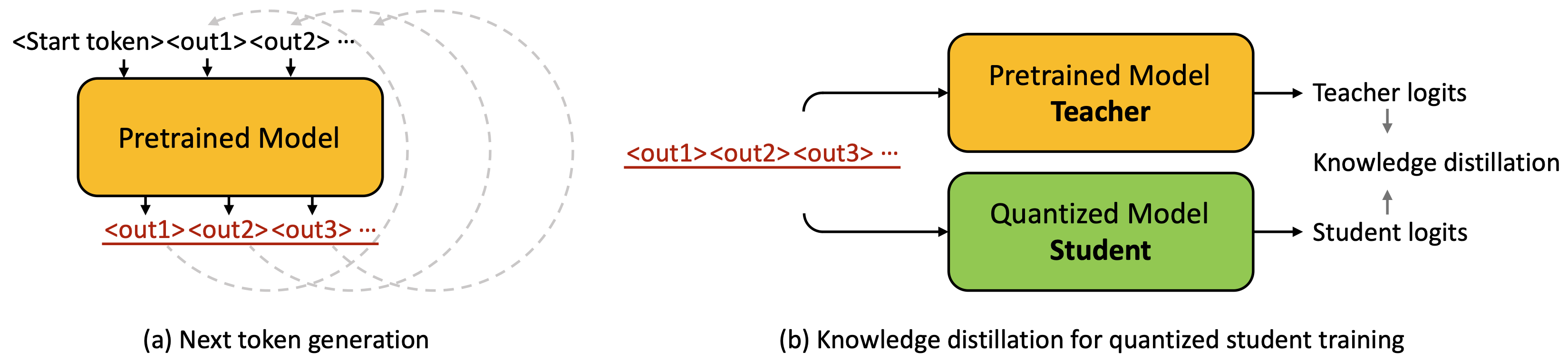

| LLM-QAT: Data-Free Quantization Aware Training for Large Language Models Zechun Liu, Barlas Oguz, Changsheng Zhao, Ernie Chang, Pierre Stock, Yashar Mehdad, Yangyang Shi, Raghuraman Krishnamoorthi, Vikas Chandra |

|

Paper |

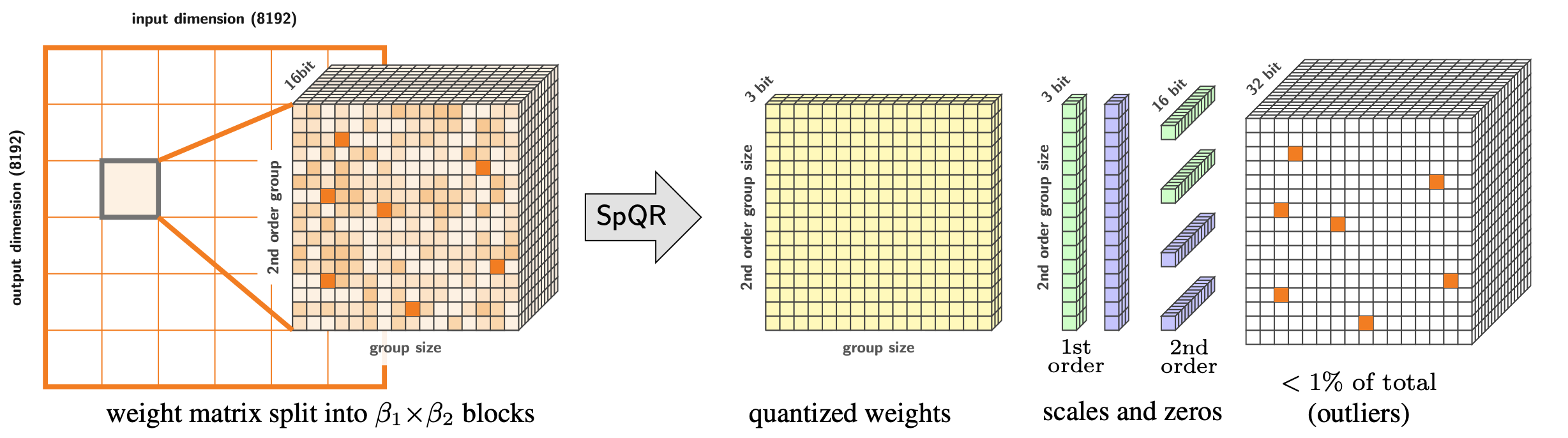

SpQR: A Sparse-Quantized Representation for Near-Lossless LLM Weight Compression Tim Dettmers, Ruslan Svirschevski, Vage Egiazarian, Denis Kuznedelev, Elias Frantar, Saleh Ashkboos, Alexander Borzunov, Torsten Hoefler, Dan Alistarh |

|

Github Paper |

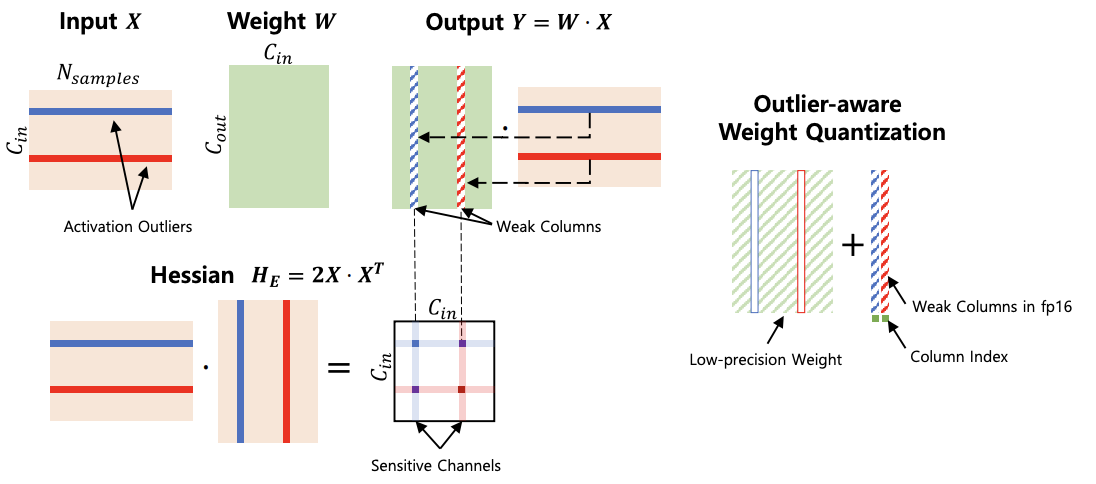

OWQ: Lessons learned from activation outliers for weight quantization in large language models Changhun Lee, Jungyu Jin, Taesu Kim, Hyungjun Kim, Eunhyeok Park |

|

Github Paper |

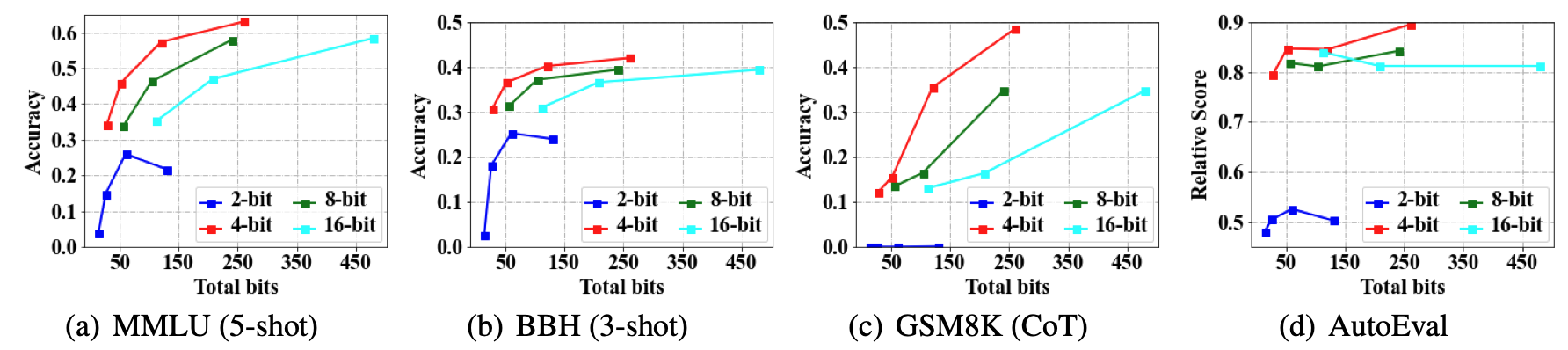

Do Emergent Abilities Exist in Quantized Large Language Models: An Empirical Study Peiyu Liu, Zikang Liu, Ze-Feng Gao, Dawei Gao, Wayne Xin Zhao, Yaliang Li, Bolin Ding, Ji-Rong Wen |

|

Github Paper |

| ZeroQuant-FP: A Leap Forward in LLMs Post-Training W4A8 Quantization Using Floating-Point Formats Xiaoxia Wu, Zhewei Yao, Yuxiong He |

|

Paper |

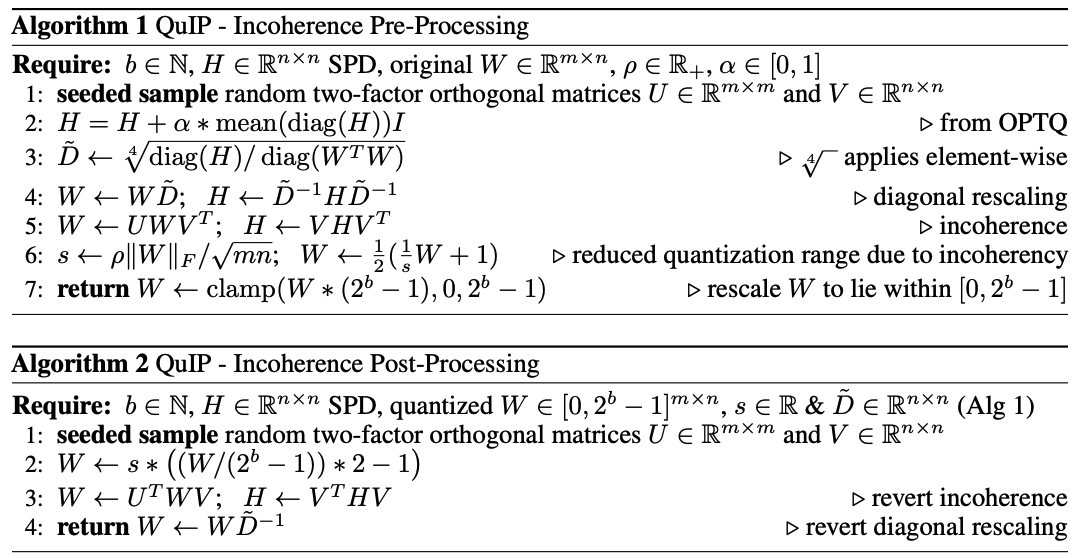

QuIP: 2-Bit Quantization of Large Language Models With Guarantees Jerry Chee, Yaohui Cai, Volodymyr Kuleshov, Christopher De SaXQ |

|

Github Paper |

| FPTQ: Fine-grained Post-Training Quantization for Large Language Models Qingyuan Li, Yifan Zhang, Liang Li, Peng Yao, Bo Zhang, Xiangxiang Chu, Yerui Sun, Li Du, Yuchen Xie |

|

Paper |

| Title & Authors | Introduction | Links |

|---|---|---|

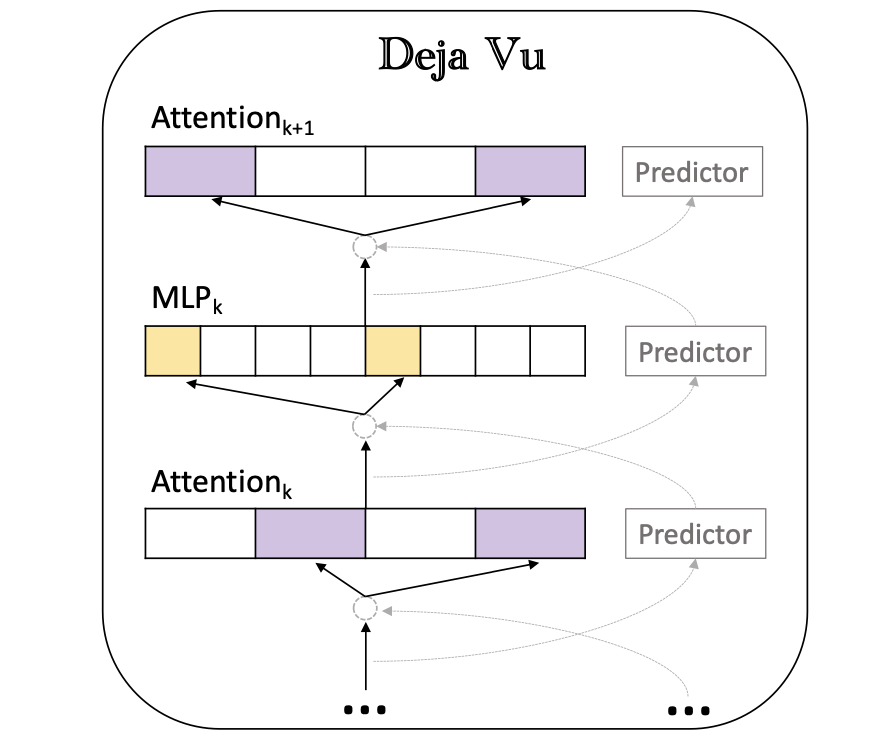

Deja Vu: Contextual Sparsity for Efficient LLMs at Inference Time Zichang Liu, Jue WANG, Tri Dao, Tianyi Zhou, Binhang Yuan, Zhao Song, Anshumali Shrivastava, Ce Zhang, Yuandong Tian, Christopher Re, Beidi Chen |

|

Github Paper |

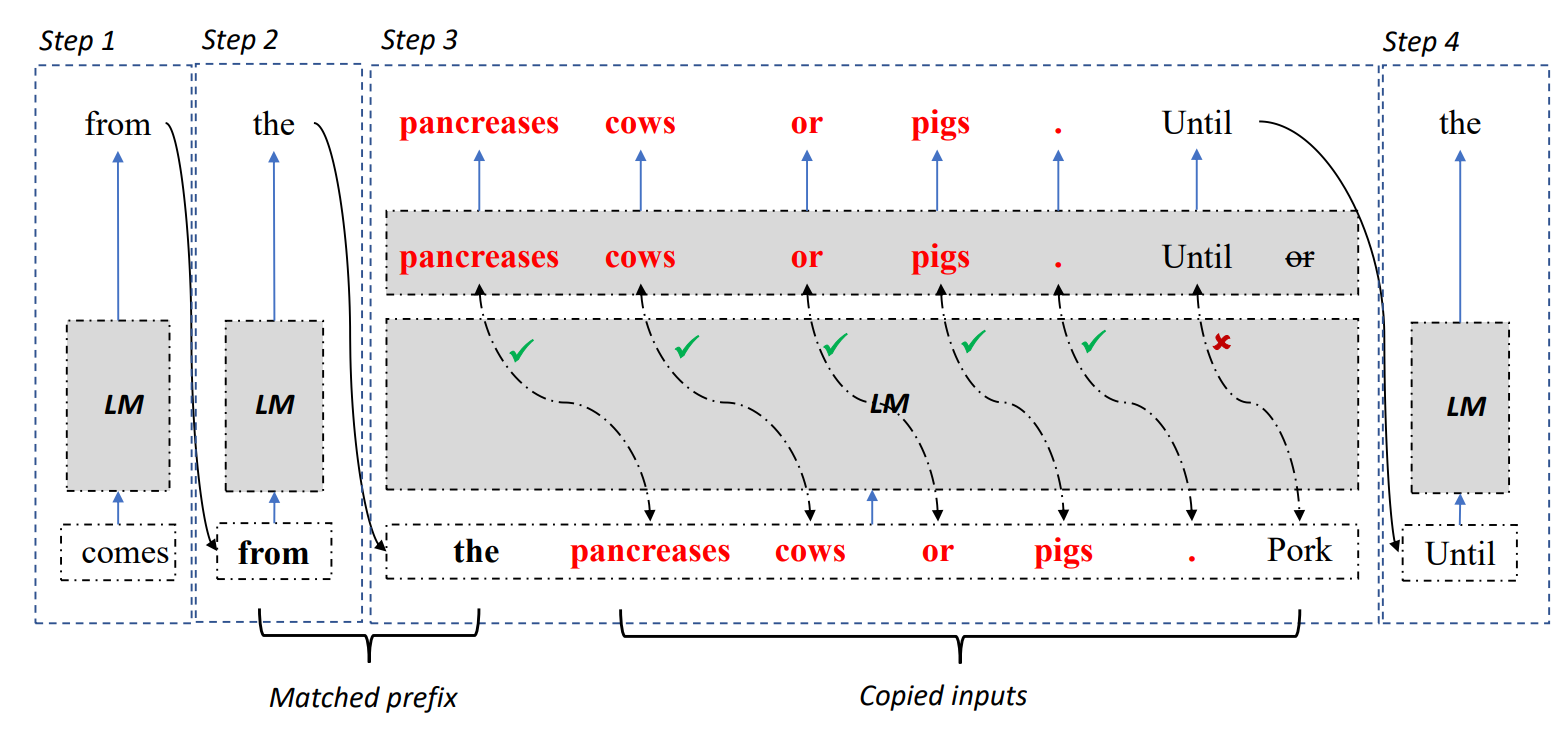

| Inference with Reference: Lossless Acceleration of Large Language Models Nan Yang, Tao Ge, Liang Wang, Binxing Jiao, Daxin Jiang, Linjun Yang, Rangan Majumder, Furu Wei |

|

Github paper |

SpecInfer: Accelerating Generative LLM Serving with Speculative Inference and Token Tree Verification Xupeng Miao, Gabriele Oliaro, Zhihao Zhang, Xinhao Cheng, Zeyu Wang, Rae Ying Yee Wong, Zhuoming Chen, Daiyaan Arfeen, Reyna Abhyankar, Zhihao Jia |

|

Github paper |

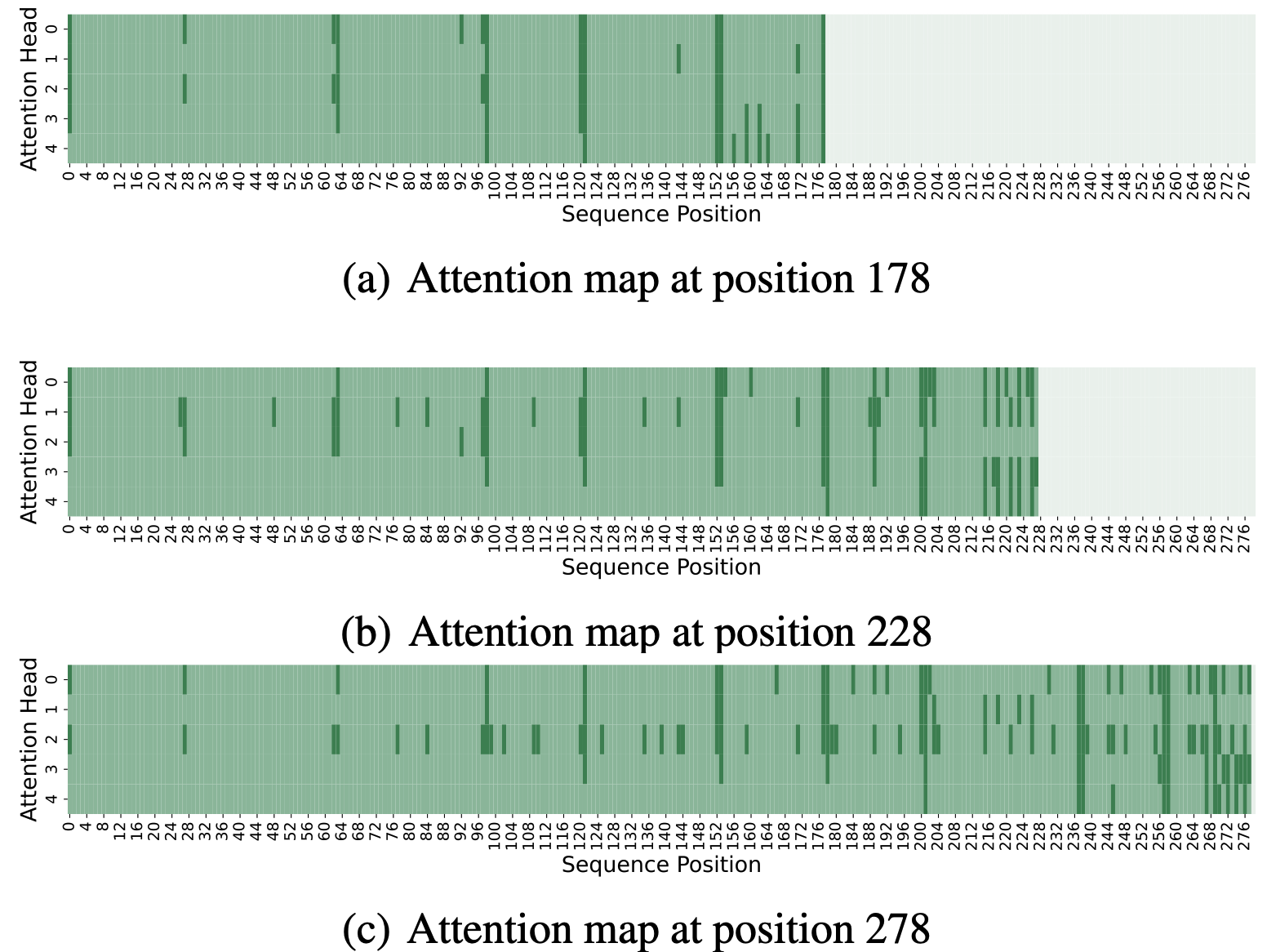

| Scissorhands: Exploiting the Persistence of Importance Hypothesis for LLM KV Cache Compression at Test Time Zichang Liu, Aditya Desai, Fangshuo Liao, Weitao Wang, Victor Xie, Zhaozhuo Xu, Anastasios Kyrillidis, Anshumali Shrivastava |

|

Paper |

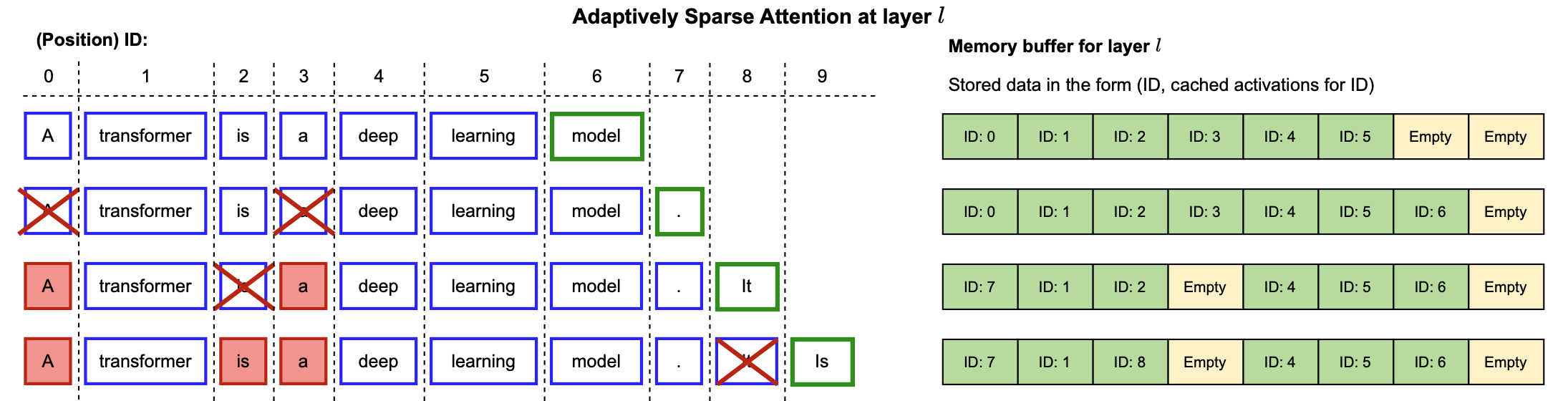

| Dynamic Context Pruning for Efficient and Interpretable Autoregressive Transformers Sotiris Anagnostidis, Dario Pavllo, Luca Biggio, Lorenzo Noci, Aurelien Lucchi, Thomas Hofmann |

|

Paper |

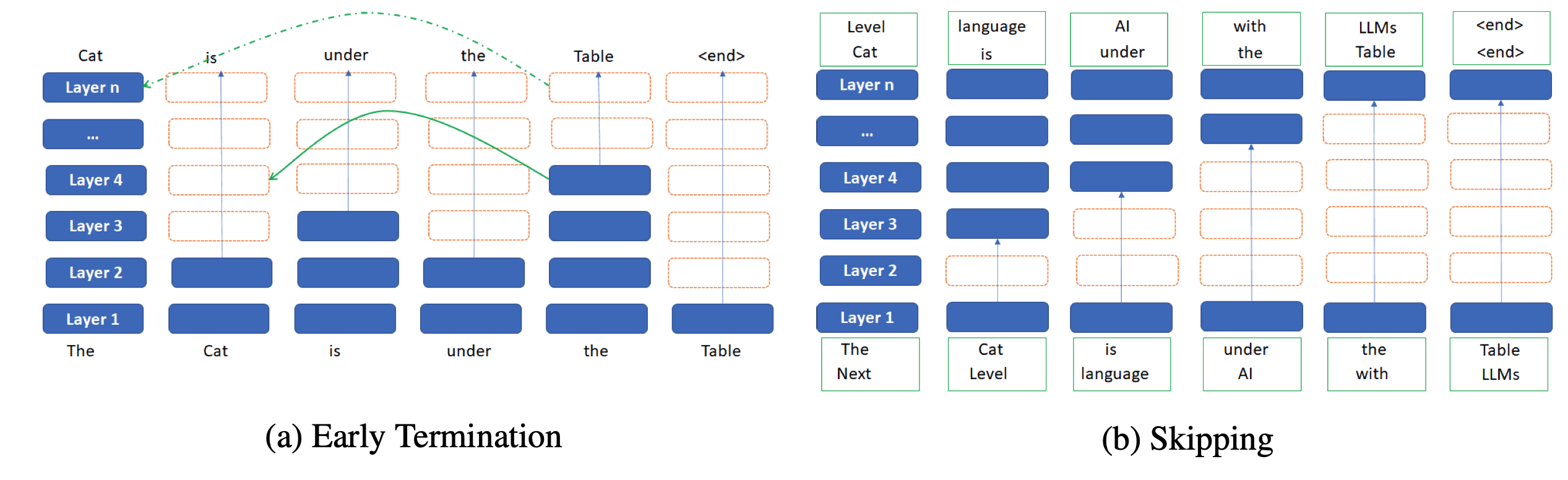

| SkipDecode: Autoregressive Skip Decoding with Batching and Caching for Efficient LLM Inference Luciano Del Corro, Allie Del Giorno, Sahaj Agarwal, Bin Yu, Ahmed Awadallah, Subhabrata Mukherjee |

|

Paper |

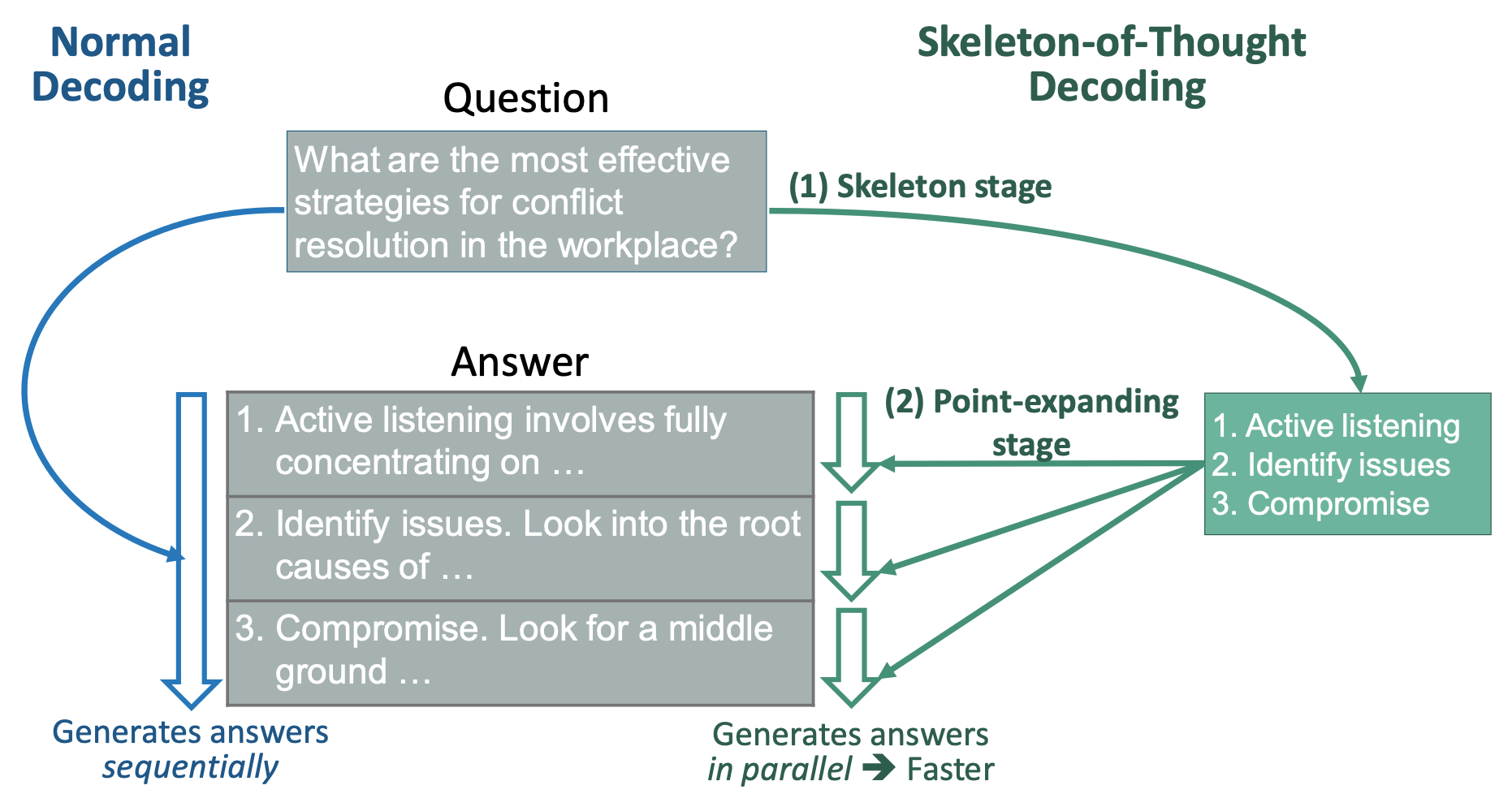

| Skeleton-of-Thought: Large Language Models Can Do Parallel Decoding Xuefei Ning, Zinan Lin, Zixuan Zhou, Huazhong Yang, Yu Wang |

|

Paper |

Accelerating LLM Inference with Staged Speculative Decoding Benjamin Spector, Chris Re |

|

Paper |

| Title & Authors | Introduction | Links |

|---|---|---|

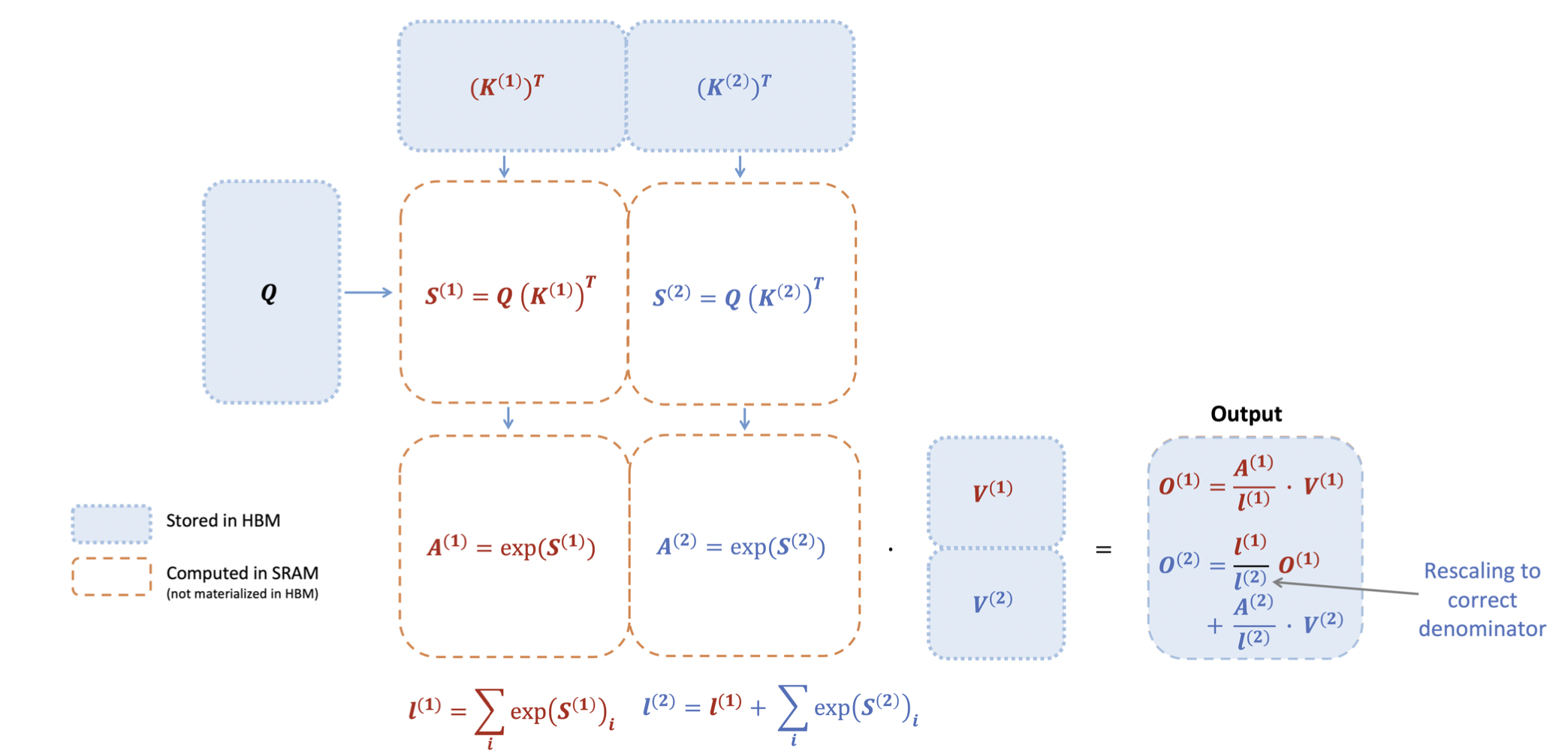

FlashAttention: Fast and Memory-Efficient Exact Attention with IO-Awareness Tri Dao, Daniel Y. Fu, Stefano Ermon, Atri Rudra, Christopher Ré |

|

Github Paper |

FlashAttention-2: Faster Attention with Better Parallelism and Work Partitioning Tri Dao |

|

Github Paper |

| Title & Authors | Introduction | Links |

|---|---|---|

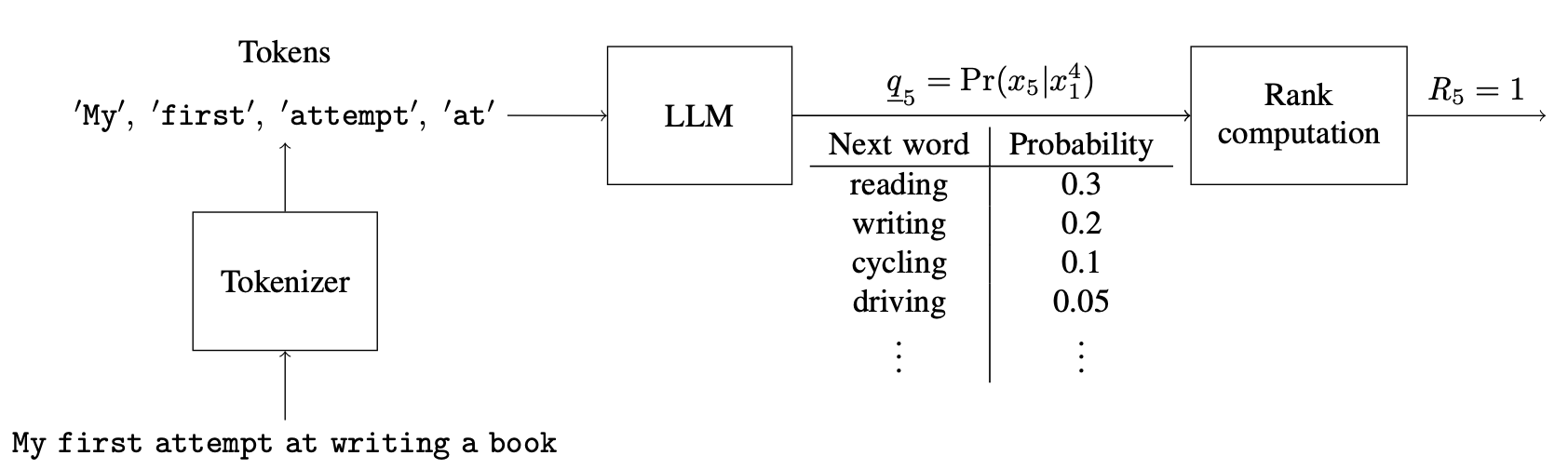

| LLMZip: Lossless Text Compression using Large Language Models Chandra Shekhara Kaushik Valmeekam, Krishna Narayanan, Dileep Kalathil, Jean-Francois Chamberland, Srinivas Shakkottai |

|

Paper | Unofficial Github |

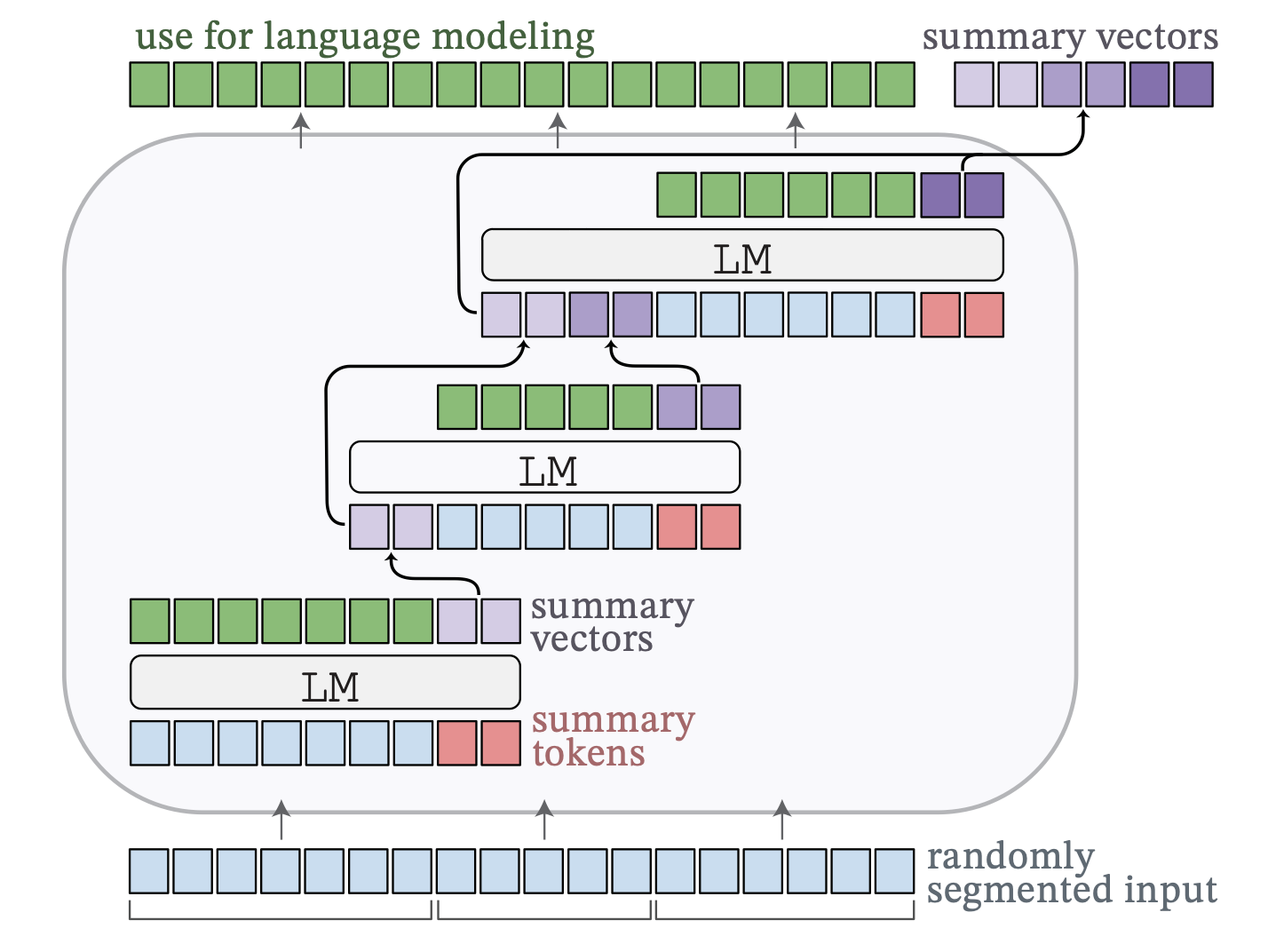

Adapting Language Models to Compress Contexts Alexis Chevalier, Alexander Wettig, Anirudh Ajith, Danqi Chen |

|

Github Paper |

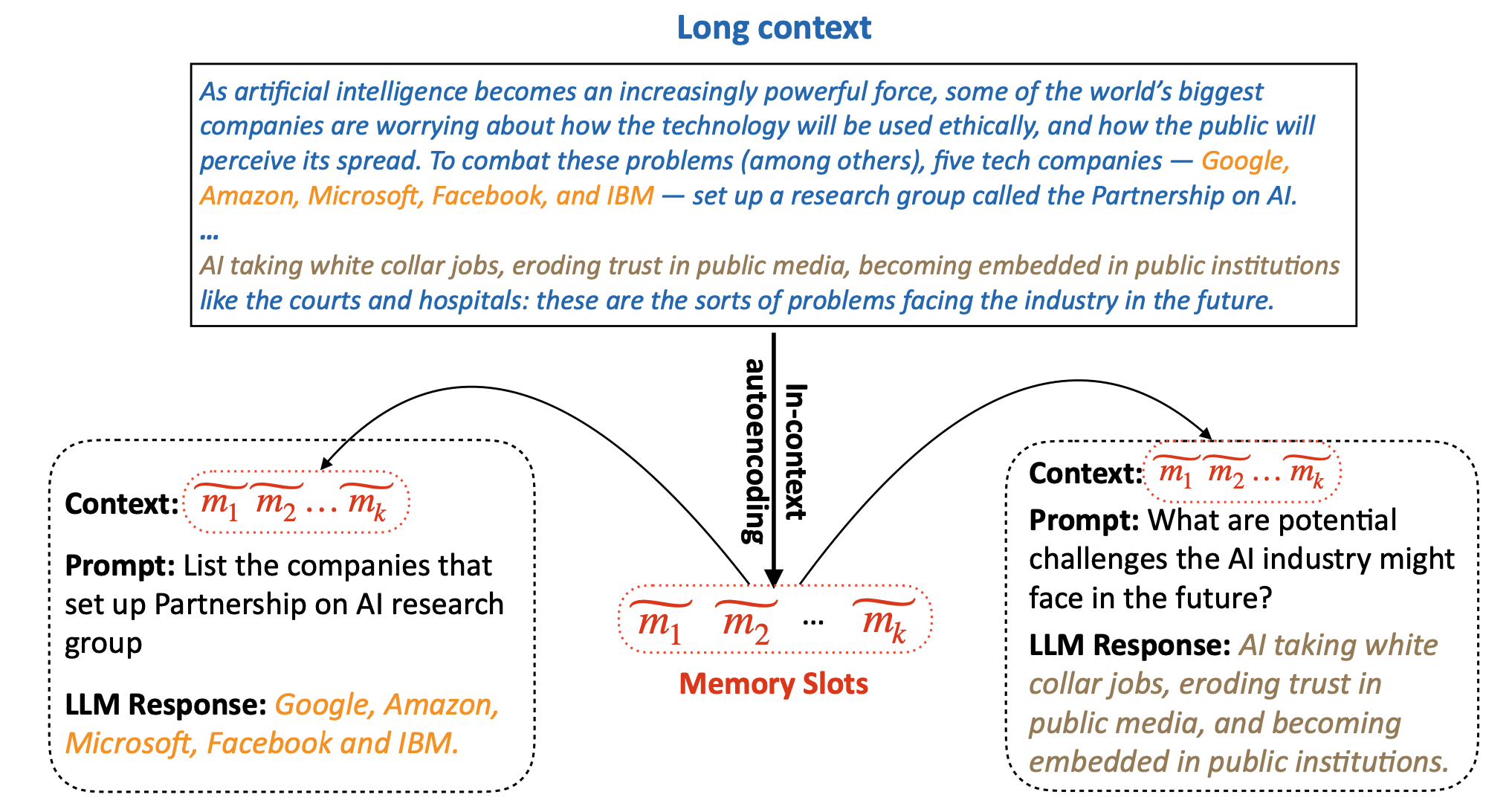

| In-context Autoencoder for Context Compression in a Large Language Model Tao Ge, Jing Hu, Xun Wang, Si-Qing Chen, Furu Wei |

|

Paper |

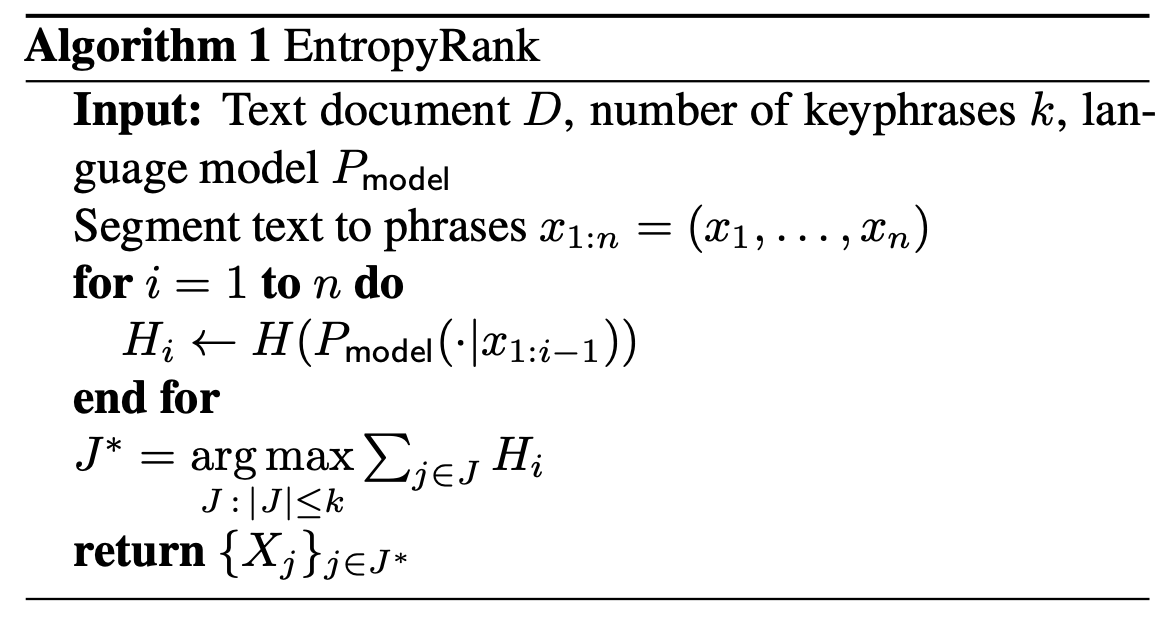

EntropyRank: Unsupervised Keyphrase Extraction via Side-Information Optimization for Language Model-based Text Compression Alexander Tsvetkov. Alon Kipnis |

|

Paper |

| Title & Authors | Introduction | Links |

|---|---|---|

LoSparse: Structured Compression of Large Language Models based on Low-Rank and Sparse Approximation Yixiao Li, Yifan Yu, Qingru Zhang, Chen Liang, Pengcheng He, Weizhu Chen, Tuo Zhao |

|

Github Paper |

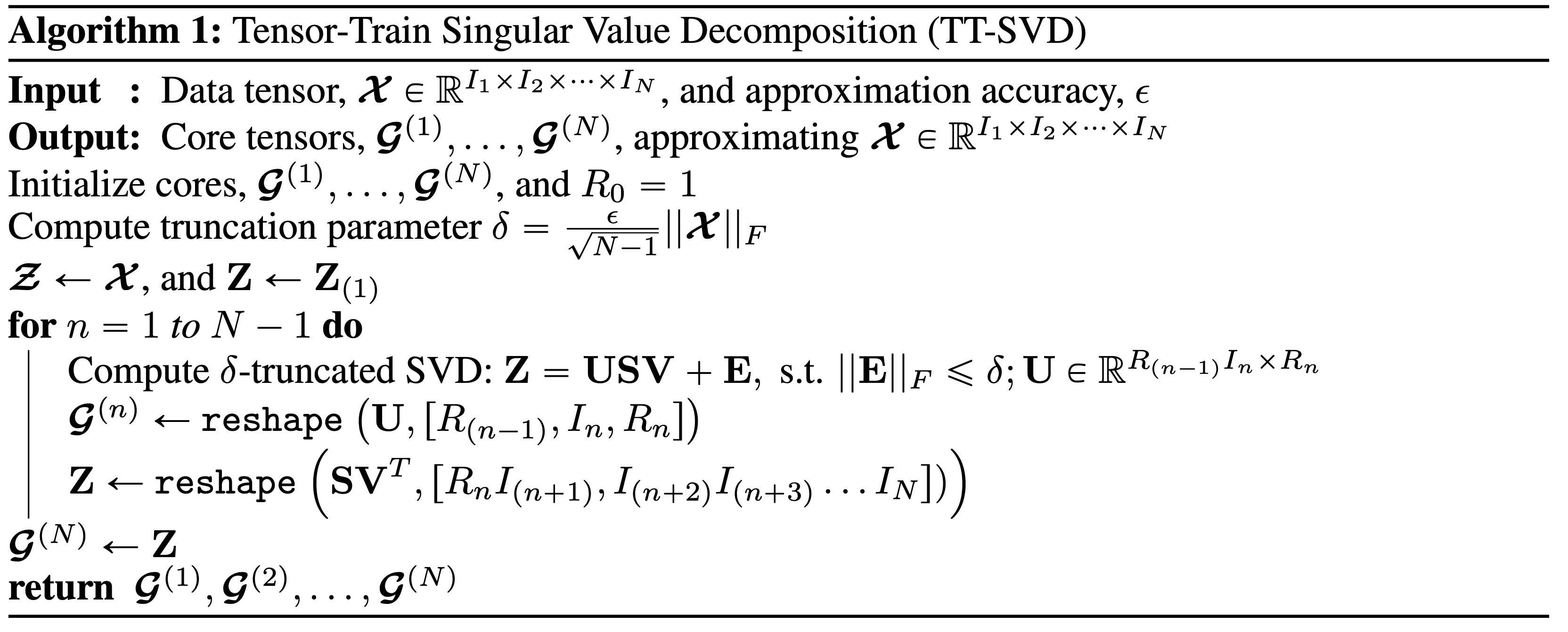

| TensorGPT: Efficient Compression of the Embedding Layer in LLMs based on the Tensor-Train Decomposition Mingxue Xu, Yao Lei Xu, Danilo P. Mandic |

|

Paper |

| Title & Authors | Introduction | Links |

|---|---|---|

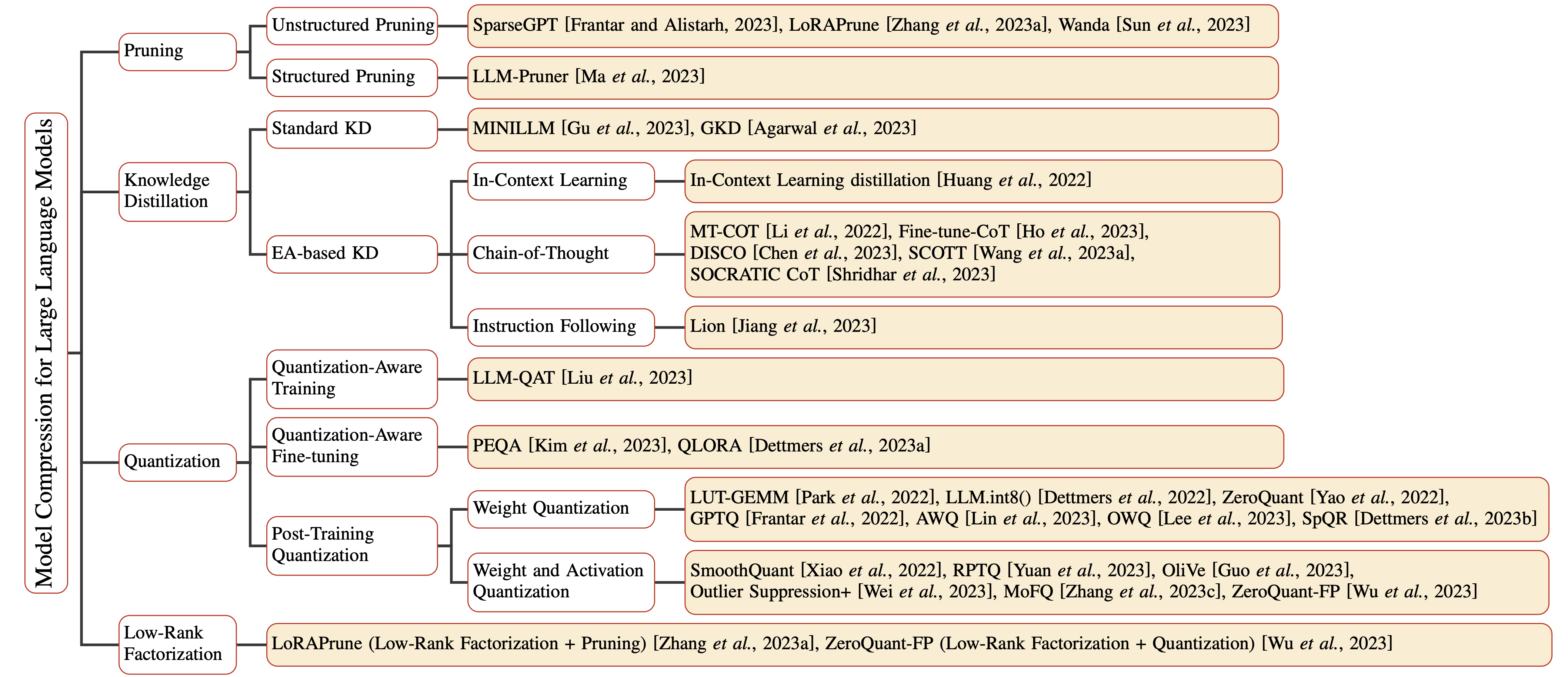

| A Survey on Model Compression for Large Language Models Xunyu Zhu, Jian Li, Yong Liu, Can Ma, Weiping Wang |

|

Paper |

- EdgeMoE: Fast On-Device Inference of MoE-based Large Language Models. Rongjie Yi, Liwei Guo, Shiyun Wei, Ao Zhou, Shangguang Wang, Mengwei Xu. [Paper]

- CPET: Effective Parameter-Efficient Tuning for Compressed Large Language Models. Weilin Zhao, Yuxiang Huang, Xu Han, Zhiyuan Liu, Zhengyan Zhang, Maosong Sun. [Paper]