To start this application run the following command:

python app.py

and navigate to the following URL: http://localhost:8080

NOTE: The first request might take a minute to respond.

NOTE: Before running the unit tests, make sure the application started with the previous command is still running.
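Since the tests need the application to be up, and the first response can be slow, a small readiness check can help before starting them. The following is only a sketch, not part of this repository: `wait_for_server` is a hypothetical helper, and the timeout values are assumptions. It uses only the Python standard library.

```python
import time
import urllib.error
import urllib.request


def wait_for_server(url="http://localhost:8080", timeout=90.0, interval=2.0):
    """Poll `url` until it answers or `timeout` seconds elapse.

    Hypothetical helper: returns True as soon as the server sends any
    HTTP response (even an error status), False if the time runs out.
    """
    # Ignore proxy settings from the environment; the target is local.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    deadline = time.monotonic() + timeout
    while True:
        # Let a slow first response use all of the time that is left.
        remaining = max(deadline - time.monotonic(), 0.1)
        try:
            with opener.open(url, timeout=remaining):
                return True
        except urllib.error.HTTPError:
            # The server answered with an error status, so it is running.
            return True
        except (urllib.error.URLError, OSError):
            # Connection refused or timed out: not ready yet.
            pass
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)
```

Calling `wait_for_server()` with its defaults checks the URL given above and returns `False` if nothing answers within 90 seconds.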

To run all the tests (summary style):

python run-tests.py

To run all the tests (verbose style):

python run-tests.py -v

To run only the API tests:

python unittests/ApiTests.py

To run only the model tests:

python unittests/ModelTests.py

A script is available to automate the ingestion of observations (and re-train all models):

python solution_guidance/model.py

It uses Random Forest Regression by default. Extra Trees Regression is also available by adding the following argument:

python solution_guidance/model.py extratrees
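The choice between the two regressors can be pictured as a lookup on the first command-line argument. The sketch below is an illustration under assumptions, not the code in `solution_guidance/model.py`: the helper names and the `randomforest` key are hypothetical, and only `extratrees` is taken from the command above. The scikit-learn classes `RandomForestRegressor` and `ExtraTreesRegressor` are real, and they are imported lazily so the argument handling works on its own.

```python
DEFAULT_MODEL = "randomforest"  # assumed name for the default choice

# Maps the command-line value to a class name in sklearn.ensemble.
SUPPORTED_MODELS = {
    "randomforest": "RandomForestRegressor",
    "extratrees": "ExtraTreesRegressor",
}


def resolve_model_name(argv):
    """Return the model key selected by the arguments, or the default."""
    name = argv[0].lower() if argv else DEFAULT_MODEL
    if name not in SUPPORTED_MODELS:
        raise ValueError(
            f"unknown model {name!r}; expected one of {sorted(SUPPORTED_MODELS)}"
        )
    return name


def build_regressor(name, **params):
    """Instantiate the scikit-learn regressor registered under `name`."""
    # Imported here so resolve_model_name works without scikit-learn.
    from sklearn import ensemble

    return getattr(ensemble, SUPPORTED_MODELS[name])(**params)
```

With this layout, `resolve_model_name([])` yields the default and `resolve_model_name(["extratrees"])` selects Extra Trees; adding another regressor would only require a new dictionary entry.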

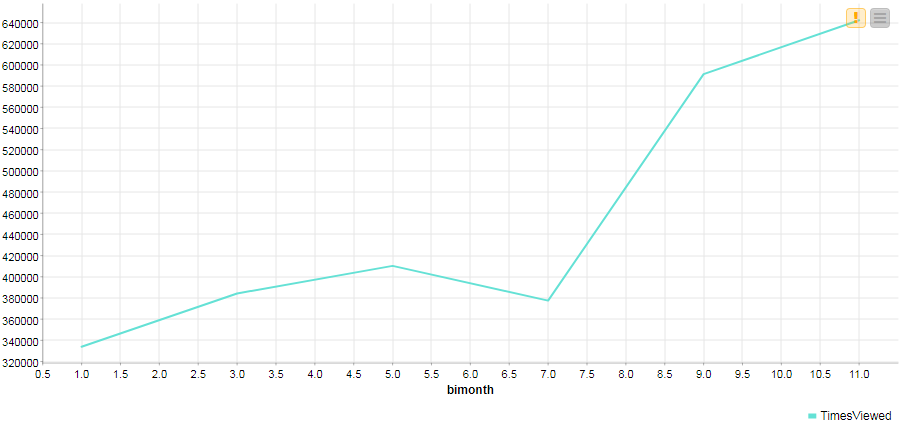

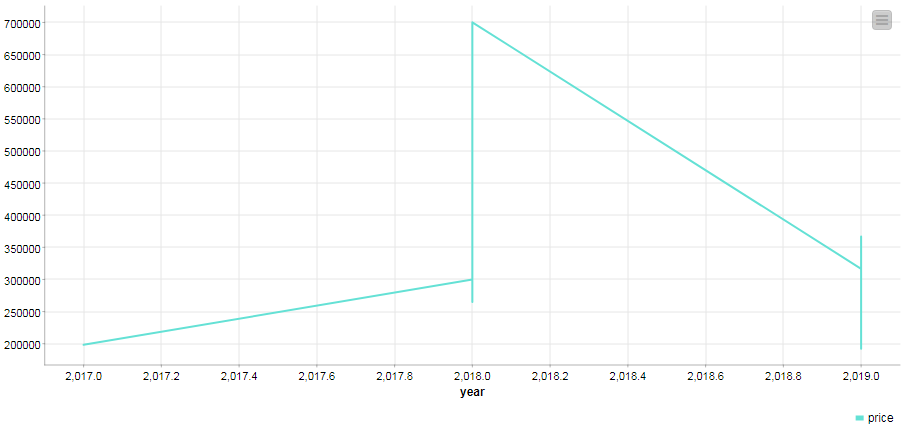

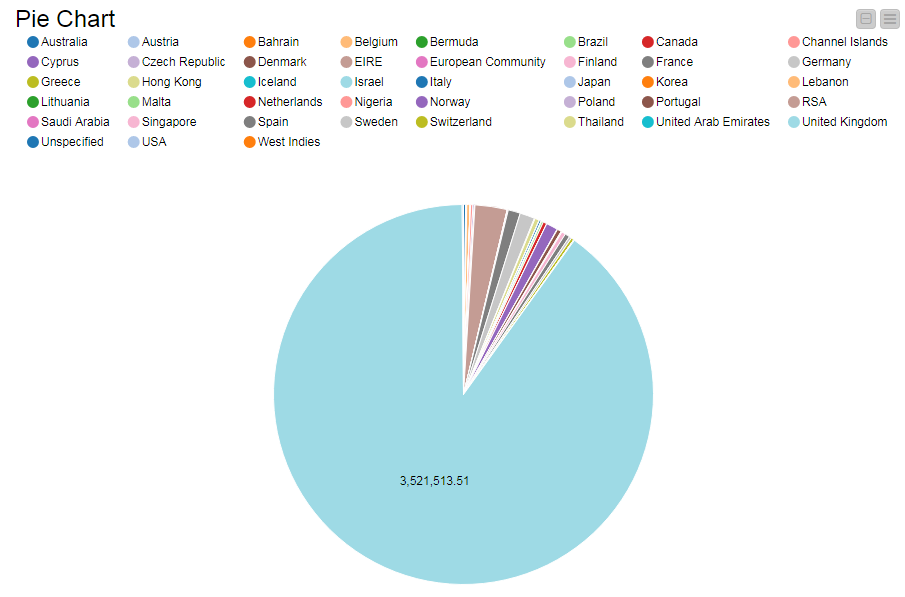

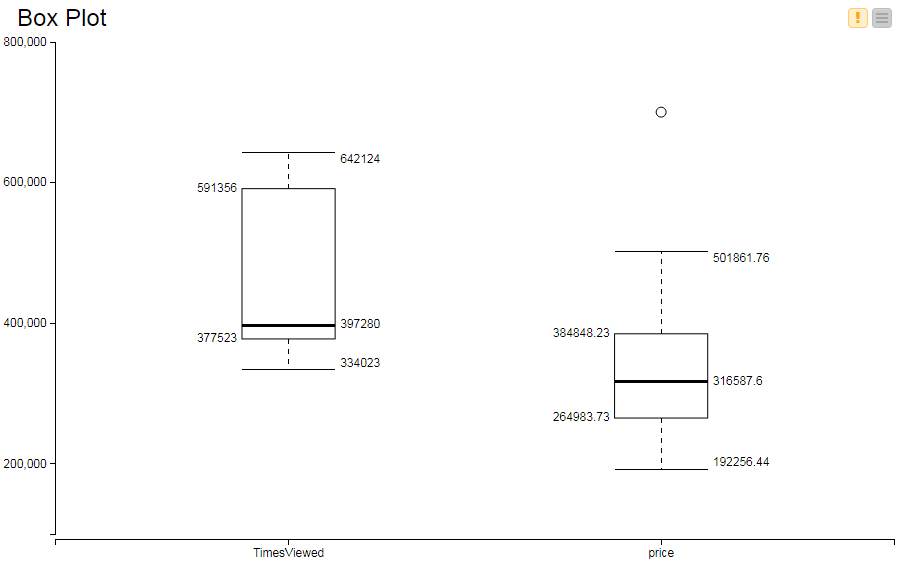

As part of the EDA investigation, these graphs were created:

Course link: learn/ibm-ai-workflow-ai-production

The following questions are being evaluated as part of the peer review submission:

- Are there unit tests for the API?

- Are there unit tests for the model?

- Are there unit tests for the logging?

- Can all of the unit tests be run with a single script and do all of the unit tests pass?

- Is there a mechanism to monitor performance?

- Was there an attempt to isolate the read/write unit tests from production models and logs?

- Does the API work as expected? For example, can you get predictions for a specific country as well as for all countries combined?

- Does the data ingestion exist as a function or script to facilitate automation?

- Were multiple models compared?

- Did the EDA investigation use visualizations?

- Is everything containerized within a working Docker image?

- Did they use a visualization to compare their model to the baseline model?