This repository is the official implementation of Gen-L-Video.

Gen-L-Video: Multi-Text Conditioned Long Video Generation via Temporal Co-Denoising

Fu-Yun Wang, Wenshuo Chen, Guanglu Song, Han-Jia Ye, Yu Liu, Hongsheng Li

Introduction • Comparisons • Setup • Results • Relevant Works • Acknowledgments • Citation • Contact

🔥🔥 TL;DR: A universal methodology that extends short video diffusion models for efficient multi-text conditioned long video generation and editing.

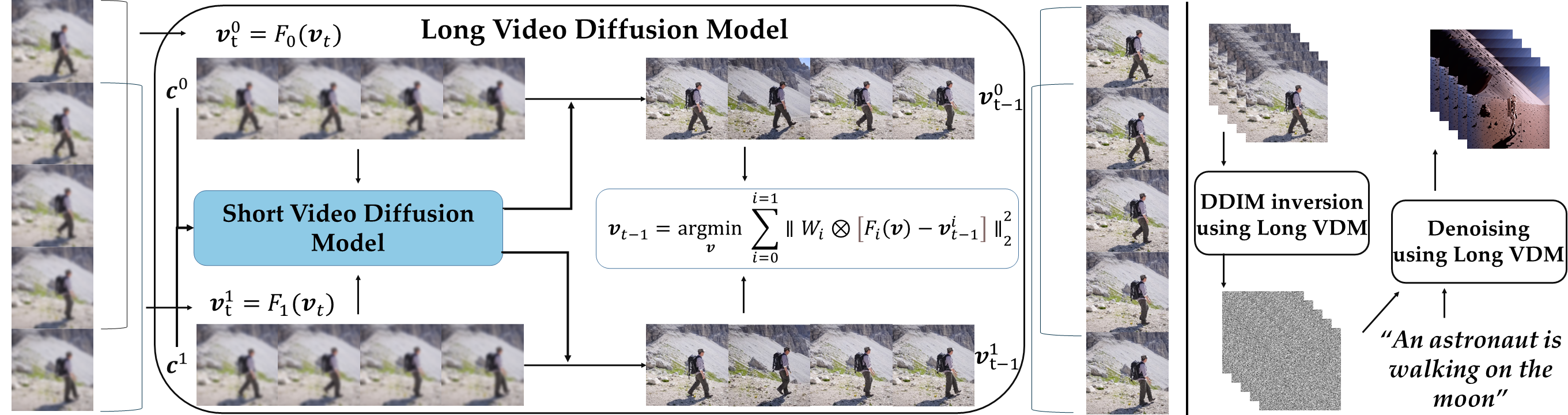

Current methodologies for video generation and editing, while innovative, are often confined to extremely short videos (typically fewer than 24 frames) and are limited to a single text condition. These constraints significantly limit their applications, given that real-world videos usually consist of multiple segments, each bearing different semantic information. To address this challenge, we introduce a novel paradigm, dubbed Gen-L-Video, capable of extending off-the-shelf short video diffusion models to generate and edit videos comprising hundreds of frames with diverse semantic segments without additional training, all while preserving content consistency.

Essentially, this procedure establishes an abstract long video generator and editor without necessitating any additional training, enabling the generation and editing of videos of any length using established short video generation and editing methodologies.
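The idea of building a long-video generator out of a short-video model can be illustrated with a small sketch. It assumes, as one reading of the "temporal co-denoising" in the title, that the long video is covered by overlapping short clips, each clip is denoised by the short-video model, and frames shared by several clips are averaged. The names `split_into_clips`, `co_denoise_step`, and `denoise_clip` are hypothetical and not taken from this repository, and plain floats stand in for per-frame latents.

```python
from typing import Callable, List, Sequence


def split_into_clips(num_frames: int, clip_len: int, stride: int) -> List[range]:
    """Return overlapping frame-index windows that cover [0, num_frames)."""
    if clip_len <= 0 or stride <= 0:
        raise ValueError("clip_len and stride must be positive")
    if num_frames <= clip_len:
        return [range(0, num_frames)]
    starts = list(range(0, num_frames - clip_len + 1, stride))
    # Add a final window if the regular stride leaves trailing frames uncovered.
    if starts[-1] + clip_len < num_frames:
        starts.append(num_frames - clip_len)
    return [range(s, s + clip_len) for s in starts]


def co_denoise_step(
    latents: Sequence[float],
    clips: Sequence[range],
    denoise_clip: Callable[[List[float]], List[float]],
) -> List[float]:
    """Denoise every clip once and average the results on overlapping frames."""
    total = [0.0] * len(latents)
    count = [0] * len(latents)
    for clip in clips:
        # `denoise_clip` stands in for one step of a short-video diffusion model.
        denoised = denoise_clip([latents[i] for i in clip])
        for i, value in zip(clip, denoised):
            total[i] += value
            count[i] += 1
    return [t / c for t, c in zip(total, count)]
```

In this sketch each clip could also carry its own text prompt, which is how a multi-text setting might be expressed; the actual weighting and scheduling used by the repository may differ.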

- [2023.05.30]: Our paper is now available on arXiv.

- [2023.05.30]: Our project page is now available on gen-long-video.

- [2023.06.01]: The basic code framework is now open-sourced: GLV.

- [2023.06.01]: Scripts one-shot-tuning, tuning-free-mix, and tuning-free-inpaint are now available.

- [2023.06.02]: Scripts for preparing control videos, including canny, hough, hed, scribble, fake_scribble, pose, seg, depth, and normal, are now available. Follow the instructions to get your own control videos.

🤗🤗🤗More training/inference scripts will be available in a few days.

Feel free to open issues for any possible setup problems.

conda env create -f environment.yml

conda activate glv

pip install git+https://github.com/facebookresearch/segment-anything.git

pip install git+https://github.com/IDEA-Research/GroundingDINO.git

or

git clone https://github.com/facebookresearch/segment-anything.git

cd segment-anything

pip install -e .

cd ..

git clone https://github.com/IDEA-Research/GroundingDINO.git

cd GroundingDINO

pip install -e .

Note that if you are using a GPU cluster whose management node has no access to GPU resources, you should submit pip install -e . to a compute node as a computing task when building GroundingDINO. Otherwise, the build will not support GPU-based detection.
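Before building GroundingDINO on a cluster, it can help to confirm that the current node actually sees a GPU. The sketch below is one possible check, assuming that GPU visibility through PyTorch is a reasonable proxy for what the build needs; `gpu_visible` is a hypothetical helper and is not part of this repository or of GroundingDINO.

```python
import importlib.util


def gpu_visible() -> bool:
    """Return True if PyTorch is installed and reports a usable CUDA device."""
    # Assumption: a node without PyTorch is treated as having no usable GPU.
    if importlib.util.find_spec("torch") is None:
        return False
    import torch

    return bool(torch.cuda.is_available())
```

If this returns False on the node where you plan to run pip install -e ., submitting the install as a job on a compute node is the safer choice.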

mkdir weights

cd weights

# Vit-H SAM model.

wget https://dl.fbaipublicfiles.com/segment_anything/sam_vit_h_4b8939.pth

# Part Grounding Swin-Base Model.

wget https://github.com/Cheems-Seminar/segment-anything-and-name-it/releases/download/v1.0/swinbase_part_0a0000.pth

# Grounding DINO Model.

wget https://github.com/IDEA-Research/GroundingDINO/releases/download/v0.1.0-alpha2/groundingdino_swinb_cogcoor.pth
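The same downloads can be scripted so that files already present are skipped. This is a minimal sketch using only the Python standard library; the URLs are the ones from the wget commands above, while `local_path` and `download_missing` are hypothetical helper names introduced for illustration.

```python
from pathlib import Path
from typing import Iterable, List
from urllib.parse import urlparse
from urllib.request import urlretrieve

WEIGHT_URLS = [
    "https://dl.fbaipublicfiles.com/segment_anything/sam_vit_h_4b8939.pth",
    "https://github.com/Cheems-Seminar/segment-anything-and-name-it/releases/download/v1.0/swinbase_part_0a0000.pth",
    "https://github.com/IDEA-Research/GroundingDINO/releases/download/v0.1.0-alpha2/groundingdino_swinb_cogcoor.pth",
]


def local_path(url: str, dest_dir: Path) -> Path:
    """Map a download URL to its file name inside dest_dir."""
    return dest_dir / Path(urlparse(url).path).name


def download_missing(urls: Iterable[str], dest_dir: Path = Path("weights")) -> List[Path]:
    """Download every URL whose target file does not exist yet."""
    dest_dir.mkdir(parents=True, exist_ok=True)
    fetched = []
    for url in urls:
        target = local_path(url, dest_dir)
        if not target.exists():
            urlretrieve(url, str(target))
            fetched.append(target)
    return fetched


# Example (performs network access): download_missing(WEIGHT_URLS)
```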

Download the Pretrained T2I-Adapters

git clone https://huggingface.co/TencentARC/T2I-Adapter

After downloading them, specify their absolute or relative paths in the config files.

If you downloaded all the above pretrained weights into the folder weights, set the config files as follows:

- In configs/tuning-free-inpaint/girl-glass.yaml, set

sam_checkpoint: "weights/sam_vit_h_4b8939.pth"

groundingdino_checkpoint: "weights/groundingdino_swinb_cogcoor.pth"

- In one-shot-tuning.py, set

adapter_paths={
    "pose": "weights/T2I-Adapter/models/t2iadapter_openpose_sd14v1.pth",
    "sketch": "weights/T2I-Adapter/models/t2iadapter_sketch_sd14v1.pth",
    "seg": "weights/T2I-Adapter/models/t2iadapter_seg_sd14v1.pth",
    "depth": "weights/T2I-Adapter/models/t2iadapter_depth_sd14v1.pth",
    "canny": "weights/T2I-Adapter/models/t2iadapter_canny_sd14v1.pth"
}

Then all the other weights can be downloaded automatically through the Hugging Face API.
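A quick way to catch path mistakes before launching a script is to check that each configured checkpoint exists. The following is a minimal sketch, assuming the weights were placed under weights as described above; `CHECKPOINTS` simply collects the paths listed above, and `find_missing` is a hypothetical helper, not part of this repository.

```python
from pathlib import Path
from typing import Dict, List

# Paths copied from the config snippets above, relative to the repository root.
CHECKPOINTS: Dict[str, str] = {
    "sam_checkpoint": "weights/sam_vit_h_4b8939.pth",
    "groundingdino_checkpoint": "weights/groundingdino_swinb_cogcoor.pth",
    "pose": "weights/T2I-Adapter/models/t2iadapter_openpose_sd14v1.pth",
    "sketch": "weights/T2I-Adapter/models/t2iadapter_sketch_sd14v1.pth",
    "seg": "weights/T2I-Adapter/models/t2iadapter_seg_sd14v1.pth",
    "depth": "weights/T2I-Adapter/models/t2iadapter_depth_sd14v1.pth",
    "canny": "weights/T2I-Adapter/models/t2iadapter_canny_sd14v1.pth",
}


def find_missing(paths: Dict[str, str], root: Path = Path(".")) -> List[str]:
    """Return the names whose checkpoint file is not present under root."""
    return [name for name, rel in paths.items() if not (root / rel).is_file()]
```

Calling `find_missing(CHECKPOINTS)` from the repository root returns an empty list when every file is in place.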

Here are additional instructions for installing and running Grounding DINO with pre-downloaded BERT weights.

cd GroundingDINO/groundingdino/config/

vim GroundingDINO_SwinB_cfg.py

Set

text_encoder_type = "[Your Path]/bert-base-uncased"

Then

vim GroundingDINO/groundingdino/util/get_tokenlizer.py

Set

def get_pretrained_language_model(text_encoder_type):
    # Accept either the hub name or a local path whose last component is "bert-base-uncased".
    if text_encoder_type == "bert-base-uncased" or text_encoder_type.split("/")[-1] == "bert-base-uncased":
        return BertModel.from_pretrained(text_encoder_type)
    if text_encoder_type == "roberta-base":
        return RobertaModel.from_pretrained(text_encoder_type)
    raise ValueError("Unknown text_encoder_type {}".format(text_encoder_type))

Now you should be able to run Grounding DINO with the pre-downloaded BERT weights.
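The path check in the function above can be tried in isolation. This is a small sketch of that one condition; `is_bert_base_uncased` is a hypothetical name used only for illustration, and the example paths are made up.

```python
def is_bert_base_uncased(text_encoder_type: str) -> bool:
    """Mirror the check above: the last path component must be 'bert-base-uncased'."""
    # The plain name "bert-base-uncased" has no "/" and so is its own last component.
    return text_encoder_type.split("/")[-1] == "bert-base-uncased"
```

Note that a path with a trailing slash, such as `/data/models/bert-base-uncased/`, does not match, because its last component is empty; write the path without the trailing slash.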

git clone https://github.com/lllyasviel/ControlNet.git

cd ControlNet

git checkout f4748e3

mv ../process_data.py .

python process_data.py --v_path=../data --t_path=../t_data --c_path=../c_data --fps=10

- One-Shot Tuning Method

accelerate launch one-shot-tuning.py --control=[your control]

[your control] can be set to pose, depth, seg, sketch, or canny.

pose and depth are recommended.
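When the launch command is generated from another script, validating the control type first avoids a failed run. This is a minimal sketch, assuming the five control values listed above are the only valid ones; `one_shot_tuning_command` is a hypothetical helper, not part of this repository.

```python
from typing import List

# Control types listed above for one-shot-tuning.py.
CONTROLS = ("pose", "depth", "seg", "sketch", "canny")


def one_shot_tuning_command(control: str) -> List[str]:
    """Build the accelerate command line for a given control type."""
    if control not in CONTROLS:
        raise ValueError(f"control must be one of {CONTROLS}, got {control!r}")
    return ["accelerate", "launch", "one-shot-tuning.py", f"--control={control}"]


# Example: subprocess.run(one_shot_tuning_command("pose"), check=True)
```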

- Tuning-Free Method for videos with smooth semantic changes.

accelerate launch tuning-free-mix.py

- Tuning-Free Edit Anything in Videos.

accelerate launch tuning-free-inpaint.py

| Method | Long Video | Multi-Text Conditioned | Pretraining-Free | Parallel Denoising | Versatile |
| --- | --- | --- | --- | --- | --- |
| Tune-A-Video | ❌ | ❌ | ✔ | ❌ | ❌ |
| LVDM | ✔ | ❌ | ❌ | ❌ | ❌ |
| NUWA-XL | ✔ | ✔ | ❌ | ✔ | ❌ |
| Gen-L-Video | ✔ | ✔ | ✔ | ✔ | ✔ |

Most of the results can be generated with a single RTX 3090.

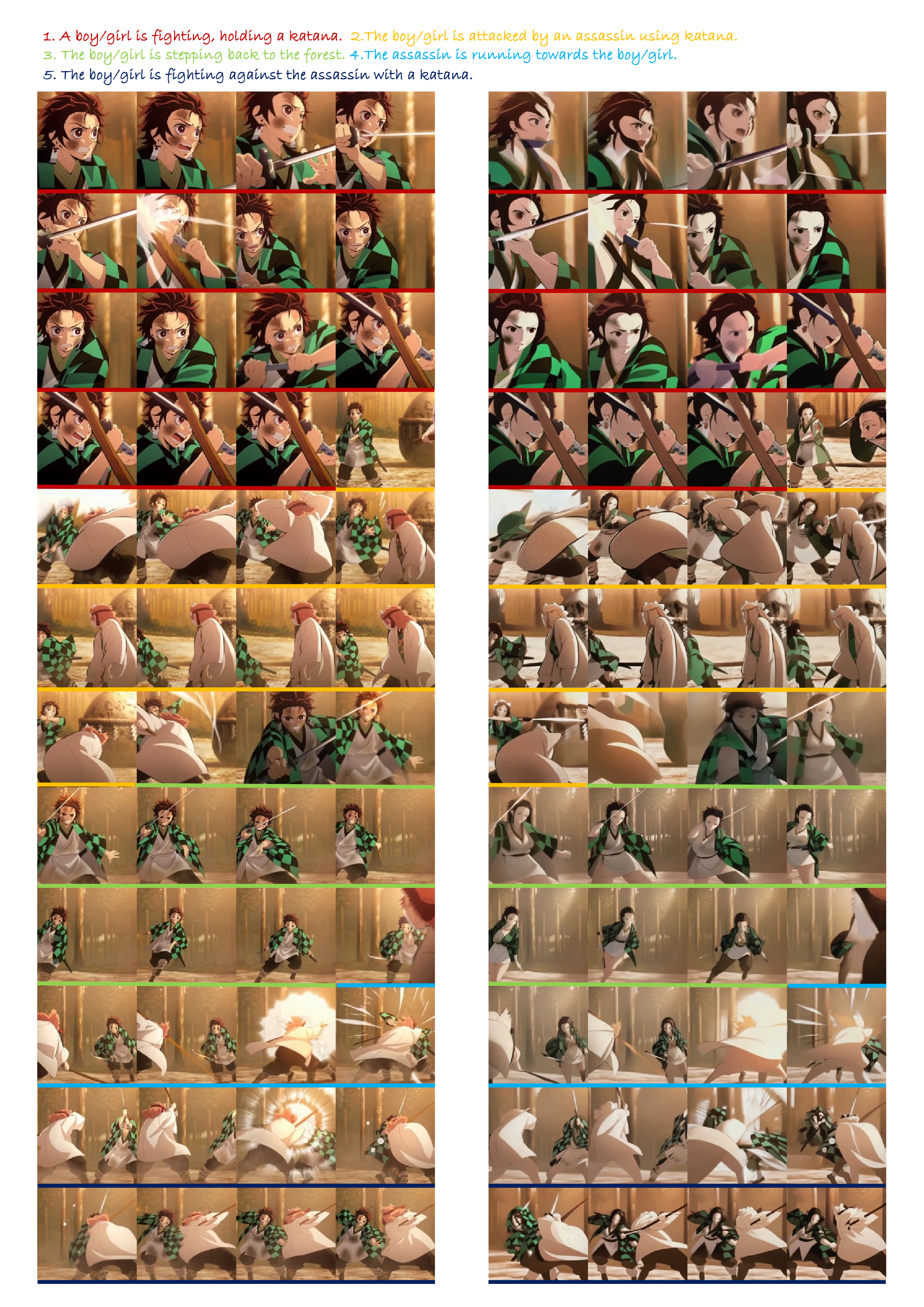

demon_slayer_resize.mp4

This video contains clips bearing various semantic information.

All the following videos are directly generated with the pretrained Stable Diffusion weight without additional training.

All the following videos are directly generated with the pre-trained VideoCrafter without additional training.

Tune-A-Video: One-Shot Tuning of Image Diffusion Models for Text-to-Video Generation. [paper] [code]

Fate-Zero: Fusing Attentions for Zero-shot Text-based Video Editing. [paper] [code]

Pix2Video: Video Editing using Image Diffusion. [paper] [code]

VideoCrafter: A Toolkit for Text-to-Video Generation and Editing. [paper] [code]

ControlVideo: Training-free Controllable Text-to-Video Generation. [paper] [code]

Text2Video-Zero: Text-to-Image Diffusion Models are Zero-Shot Video Generators. [paper] [code]

Other relevant works on video generation and editing can be found in this repo: Awesome-Video-Diffusion.

- This code is heavily built upon diffusers and Tune-A-Video. If you use this code in your research, please also acknowledge their work.

- This project leverages Stable-Diffusion, Stable-Diffusion-Inpaint, Stable-Diffusion-Depth, LoRA, CLIP, VideoCrafter, ControlNet, T2I-Adapter, GroundingDINO, Edit-Anything, and Segment Anything. We thank them for open-sourcing the code and pre-trained models.

If you use any content of this repo for your work, please cite the following bib entry:

@article{wang2023gen,
  title={Gen-L-Video: Multi-Text to Long Video Generation via Temporal Co-Denoising},
  author={Wang, Fu-Yun and Chen, Wenshuo and Song, Guanglu and Ye, Han-Jia and Liu, Yu and Li, Hongsheng},
  journal={arXiv preprint arXiv:2305.18264},
  year={2023}
}

I welcome collaborations from individuals/institutions who share a common interest in my work. Whether you have ideas to contribute, suggestions for improvements, or would like to explore partnership opportunities, I am open to discussing any form of collaboration. Please feel free to contact the author: Fu-Yun Wang (wangfuyun@smail.nju.edu.cn). Enjoy the code.