🔥 2024/02/27: Our paper GPT4Point is accepted by CVPR'24!

🔥 2024/01/19: We release the extraction method for Objaverse-XL (Point Cloud Format).

🔥 2024/01/10: We release the download method for Objaverse-XL (Point Cloud Format).

🔥 2023/12/05: The paper GPT4Point (arxiv) has been released; it unifies point-language understanding and generation.

🔥 2023/08/13: Two-stage Pre-training code of PointBLIP has been released.

🔥 2023/08/13: Part of the datasets used and the result files have been uploaded.

This project presents GPT4Point, a 3D multi-modality model that aligns 3D point clouds with language. More details are available on the project page.

-

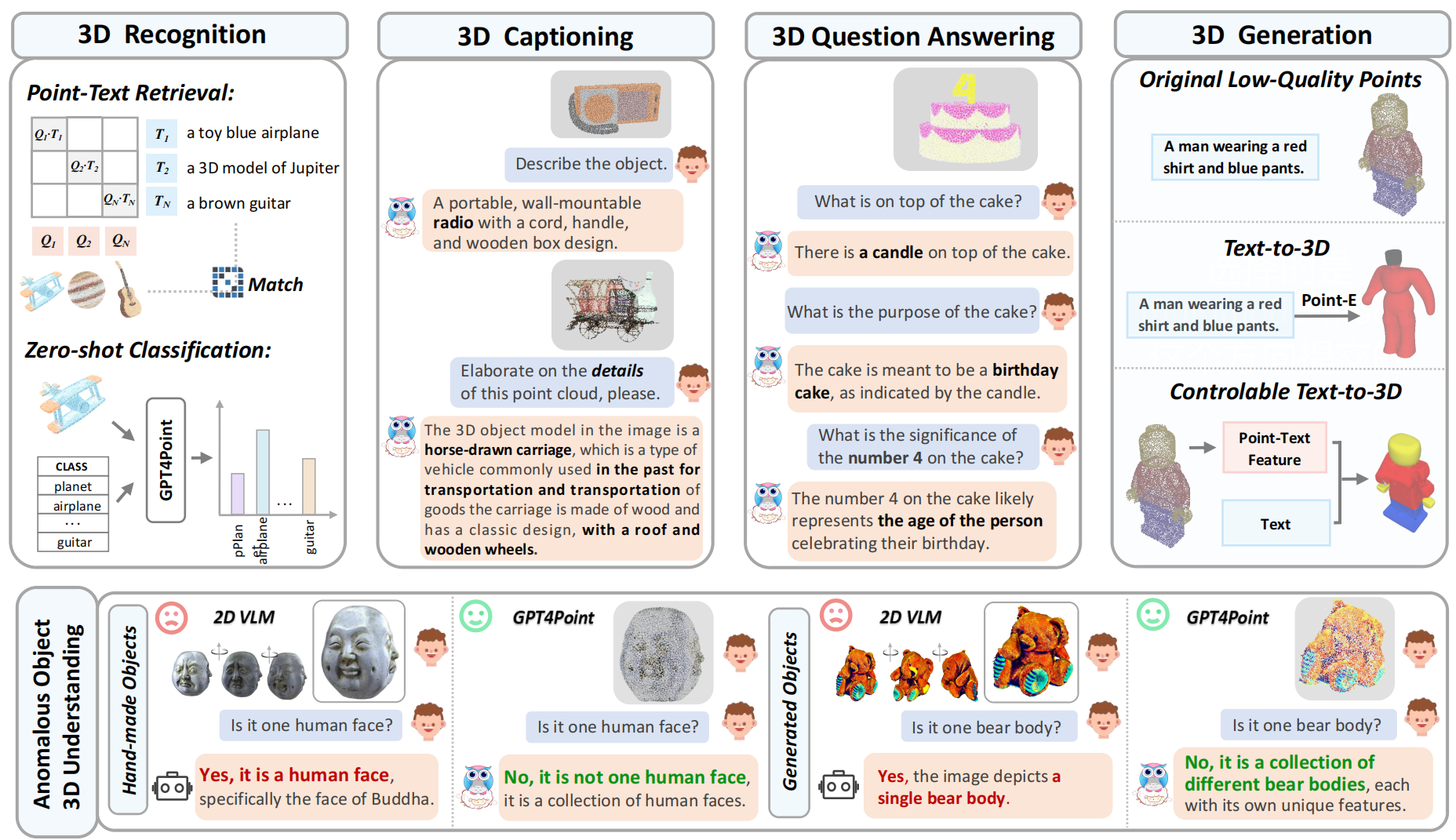

Unified Framework for Point-language Understanding and Generation. We present the unified framework for point-language understanding and generation GPT4Point, including the 3D MLLM for point-text tasks and controlled 3D generation.

-

Automated Point-language Dataset Annotation Engine Pyramid-XL. We introduce the automated point-language dataset annotation engine Pyramid-XL based on Objaverse-XL, currently encompassing 1M pairs of varying levels of coarseness and can be extended cost-effectively.

-

Object-level Point Cloud Benchmark. Establishing a novel object-level point cloud benchmark with comprehensive evaluation metrics for 3D point cloud language tasks. This benchmark thoroughly assesses models' understanding capabilities and facilitates the evaluation of generated 3D objects.

Note that you should first change into the Objaverse-xl_Download directory:

`cd ./Objaverse-xl_Download`

Then please see the folder Objaverse-xl_Download for details on downloading.

Please see the folder Extract_Pointcloud for details on extraction.
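For readers unfamiliar with what "point cloud format" means in practice, here is a minimal sketch of one common way to turn a triangle mesh into a point cloud: area-weighted uniform sampling on the mesh surface. This is an illustration only and is not taken from Extract_Pointcloud, which remains the authoritative reference; the function name `sample_point_cloud` and the toy mesh are hypothetical, and the repository's actual pipeline may work differently.

```python
import numpy as np


def sample_point_cloud(vertices, faces, num_points, seed=0):
    """Uniformly sample points on the surface of a triangle mesh.

    Hypothetical helper for illustration; not part of the GPT4Point code.
    vertices: (V, 3) float array, faces: (F, 3) int array of vertex indices.
    """
    rng = np.random.default_rng(seed)
    tri = vertices[faces]  # (F, 3, 3): the three corners of each triangle

    # Triangle area is half the norm of the cross product of two edges.
    cross = np.cross(tri[:, 1] - tri[:, 0], tri[:, 2] - tri[:, 0])
    areas = 0.5 * np.linalg.norm(cross, axis=1)

    # Choose triangles with probability proportional to their area.
    idx = rng.choice(len(faces), size=num_points, p=areas / areas.sum())

    # Draw (u, v) in the unit square and reflect samples that fall outside
    # the triangle so the result is uniform over it.
    u = rng.random(num_points)
    v = rng.random(num_points)
    flip = u + v > 1.0
    u[flip] = 1.0 - u[flip]
    v[flip] = 1.0 - v[flip]

    a, b, c = tri[idx, 0], tri[idx, 1], tri[idx, 2]
    return a + u[:, None] * (b - a) + v[:, None] * (c - a)


# Toy mesh (assumption for the example): a unit square in the z = 0 plane.
vertices = np.array(
    [[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [1.0, 1.0, 0.0], [0.0, 1.0, 0.0]]
)
faces = np.array([[0, 1, 2], [0, 2, 3]])
points = sample_point_cloud(vertices, faces, num_points=1024)
```

The result is a `(1024, 3)` array of XYZ coordinates. Weighting by area keeps the point density even across large and small triangles, which is why it is a common default for this kind of conversion.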

Dataset and Data Engine

- [✔] Release the arxiv and the project page.

- [✔] Release the dataset (Objaverse-XL) download method.

- [✔] Release the dataset (Objaverse-XL) rendering (points) method.

- Release dataset and data annotation engine (Pyramid-XL).

- Add inference code with checkpoints.

- Add Huggingface Demo🤗.

- Add training code.

- Add evaluation code.

- Add Gradio demo code.

If you find our work helpful, please cite:

@misc{qi2023gpt4point,
  title={GPT4Point: A Unified Framework for Point-Language Understanding and Generation},
  author={Zhangyang Qi and Ye Fang and Zeyi Sun and Xiaoyang Wu and Tong Wu and Jiaqi Wang and Dahua Lin and Hengshuang Zhao},
  year={2023},
  eprint={2312.02980},
  archivePrefix={arXiv},
  primaryClass={cs.CV}
}

This work is under the Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

Together, let's make LLMs for 3D great!

- Point-Bind & Point-LLM: aligns point clouds with ImageBind to reason over multi-modality input without training on 3D instruction data.

- 3D-LLM: employs 2D foundation models to encode multi-view images of 3D point clouds.

- PointLLM: employs 3D point clouds with LLaVA.