Efficient Transformer for Single Image Super-Resolution

22.03.17

The result images of our method are collected in the folder "/result".

Requirements:

- PyTorch >= 1.0

- Python 3.6

- NumPy

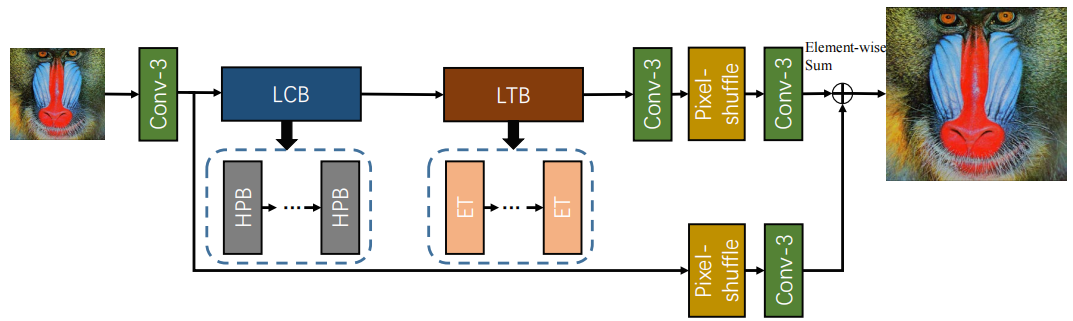

The overall architecture of the proposed Efficient SR Transformer (ESRT).

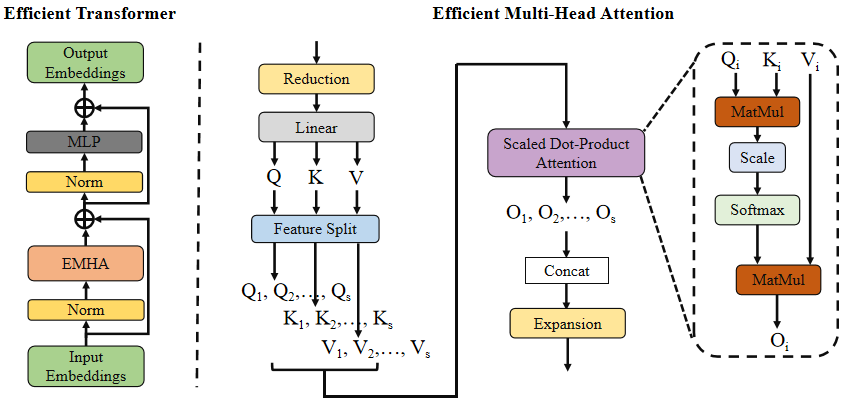

Efficient Transformer and Efficient Multi-Head Attention.
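To make the attention block in the figure above more concrete, here is a minimal NumPy sketch of efficient multi-head attention as we read it from the ESRT paper. A linear reduction layer shrinks the channel count, Q, K and V are split into heads, the token sequence is cut into `num_splits` segments with attention computed only inside each segment, and an expansion layer restores the channels. The names `emha_sketch`, `w_reduce`, `w_qkv`, `w_expand`, `num_heads` and `num_splits` are hypothetical, biases and normalization are omitted, and this is not the repository's PyTorch implementation.

```python
import numpy as np


def softmax(x, axis=-1):
    # Numerically stable softmax along `axis`.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def emha_sketch(x, w_reduce, w_qkv, w_expand, num_heads, num_splits):
    """Hypothetical EMHA sketch. x: (N, C) tokens -> (N, C) output.

    Assumed structure (our reading of the paper, not the repo code):
    reduce channels -> Q/K/V -> multi-head attention inside each of
    `num_splits` token segments -> expand channels back.
    """
    n, _ = x.shape
    h = x @ w_reduce                            # reduction layer: (N, C) -> (N, d), e.g. d = C / 2
    d = h.shape[1]
    assert d % num_heads == 0 and n % num_splits == 0
    q, k, v = np.split(h @ w_qkv, 3, axis=1)    # each (N, d)
    hd = d // num_heads

    def to_heads(t):
        # (N, d) -> (heads, N, head_dim)
        return t.reshape(n, num_heads, hd).transpose(1, 0, 2)

    q, k, v = to_heads(q), to_heads(k), to_heads(v)
    seg = n // num_splits
    out = np.empty_like(v)
    for s in range(num_splits):
        sl = slice(s * seg, (s + 1) * seg)
        # Scaled dot-product attention restricted to one segment: (heads, seg, seg)
        attn = softmax(q[:, sl] @ k[:, sl].transpose(0, 2, 1) / np.sqrt(hd))
        out[:, sl] = attn @ v[:, sl]
    out = out.transpose(1, 0, 2).reshape(n, d)  # merge heads back to (N, d)
    return out @ w_expand                       # expansion layer: (N, d) -> (N, C)
```

Splitting the sequence into s segments replaces one N × N attention map per head with s maps of size (N/s) × (N/s), i.e. N²/s entries in total; the trade-off is that tokens in different segments do not attend to each other.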

- Dataset: DIV2K

- Prepare

Like IMDN, convert the PNG files in DIV2K to .npy files:

python scripts/png2npy.py --pathFrom /path/to/DIV2K/ --pathTo /path/to/DIV2K_decoded/
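The likely purpose of this step is to decode each PNG once, so that training can load raw arrays with `np.load` instead of re-decoding images every epoch. The sketch below is a hypothetical stand-in for `scripts/png2npy.py`, not its actual code: it mirrors the `--pathFrom` tree under `--pathTo` and saves every `.png` as a `.npy` array. It assumes Pillow for decoding (Pillow is not in the requirements list above), and the `reader` argument lets you swap in another decoder.

```python
import os

import numpy as np


def pil_reader(path):
    # Assumed dependency: Pillow is used only for PNG decoding.
    from PIL import Image
    with Image.open(path) as im:
        return np.asarray(im)


def png_tree_to_npy(path_from, path_to, reader=pil_reader):
    """Hypothetical helper: mirror path_from under path_to, saving each .png as .npy."""
    converted = []
    for root, _dirs, files in os.walk(path_from):
        out_dir = os.path.join(path_to, os.path.relpath(root, path_from))
        for name in sorted(files):
            if not name.lower().endswith(".png"):
                continue  # skip non-image files
            os.makedirs(out_dir, exist_ok=True)
            arr = reader(os.path.join(root, name))  # decoded H x W (x 3) array
            out_path = os.path.join(out_dir, os.path.splitext(name)[0] + ".npy")
            np.save(out_path, arr)
            converted.append(out_path)
    return converted
```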

- Training

python train.py --scale 2 --patch_size 96

python train.py --scale 3 --patch_size 144

python train.py --scale 4 --patch_size 192

For better results, use a patch_size of 128, 192 and 256 for scales 2, 3 and 4, respectively.
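Both sets of patch sizes keep the LR patch fixed across scales: 96/144/192 are 48 × scale and 128/192/256 are 64 × scale. Assuming `--patch_size` is the side length of the HR training patch (which these numbers suggest, though we have not checked it against `train.py`), an aligned LR/HR crop can be sketched as follows. `random_paired_crop` is a hypothetical helper, not a function from this repository.

```python
import numpy as np


def random_paired_crop(lr, hr, patch_size, scale, rng):
    """Hypothetical helper: crop an aligned (LR, HR) training pair.

    Assumes `patch_size` is the HR patch side, so the LR patch side is
    patch_size // scale.
    """
    assert patch_size % scale == 0
    lp = patch_size // scale
    lh, lw = lr.shape[:2]
    assert hr.shape[0] >= lh * scale and hr.shape[1] >= lw * scale
    # Random top-left corner in LR coordinates; the HR corner is that point times `scale`.
    y = int(rng.integers(0, lh - lp + 1))
    x = int(rng.integers(0, lw - lp + 1))
    lr_patch = lr[y:y + lp, x:x + lp]
    hr_patch = hr[y * scale:(y + lp) * scale, x * scale:(x + lp) * scale]
    return lr_patch, hr_patch


# LR patch side implied by the suggested settings (scale -> HR patch size).
for sizes in ({2: 96, 3: 144, 4: 192}, {2: 128, 3: 192, 4: 256}):
    print({s: p // s for s, p in sizes.items()})
# prints {2: 48, 3: 48, 4: 48} then {2: 64, 3: 64, 4: 64}
```

Cropping in LR coordinates first and multiplying by `scale` guarantees the two patches cover the same image region, even when the HR image has a few extra pixels that the LR image does not.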

- Testing

Example: test on B100 at ×4:

python test.py --is_y --test_hr_folder dataset/benchmark/B100/HR/ --test_lr_folder dataset/benchmark/B100/LR_bicubic/X4/ --output_folder results/B100/x4 --checkpoint experiment/checkpoint/x4/epoch_990.pth --upscale_factor 4
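SR benchmarks are commonly reported as PSNR on the luminance (Y) channel with `scale` pixels shaved from each border. The sketch below shows that convention, assuming `--is_y` enables something similar; we have not verified this against `test.py`. The BT.601 coefficients follow MATLAB's `rgb2ycbcr`, and `rgb_to_y` / `psnr_y` are hypothetical names.

```python
import numpy as np


def rgb_to_y(img):
    """BT.601 luma (MATLAB rgb2ycbcr convention) for an H x W x 3 RGB image in [0, 255]."""
    img = img.astype(np.float64)
    return 16.0 + (65.481 * img[..., 0] + 128.553 * img[..., 1] + 24.966 * img[..., 2]) / 255.0


def psnr_y(sr, hr, scale):
    """Hypothetical metric: PSNR on the Y channel after shaving `scale` pixels per border."""
    y_sr, y_hr = rgb_to_y(sr), rgb_to_y(hr)
    if scale > 0:
        # Assumed convention: ignore a border of `scale` pixels on every side.
        y_sr = y_sr[scale:-scale, scale:-scale]
        y_hr = y_hr[scale:-scale, scale:-scale]
    mse = np.mean((y_sr - y_hr) ** 2)
    if mse == 0:
        return float("inf")
    return 10.0 * np.log10(255.0 ** 2 / mse)
```

With these coefficients white (255, 255, 255) maps to Y = 235 and black maps to Y = 16, so Y spans the studio range [16, 235] rather than [0, 255].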