This project serves to demonstrate proficiency in Data Engineering, problem-solving abilities, and knowledge in Software development. The challenge involves making the massive data set of fire incidents in San Francisco, United States, available in a data warehouse for analysis and visualization. The dataset, hosted by Socrata, comprises approximately 650k rows and 64 columns. Detailed information about the dataset can be found here.

The project's cloud infrastructure is entirely coded using Terraform, located in

the infra-as-code folder. Additionally, it integrates with a dbt project under

the data_engineering_challenge folder, allowing analytics to organize and run their

SQL scripts on Snowflake seamlessly. The project utilizes Pipenv as its Python

virtualenv management tool.

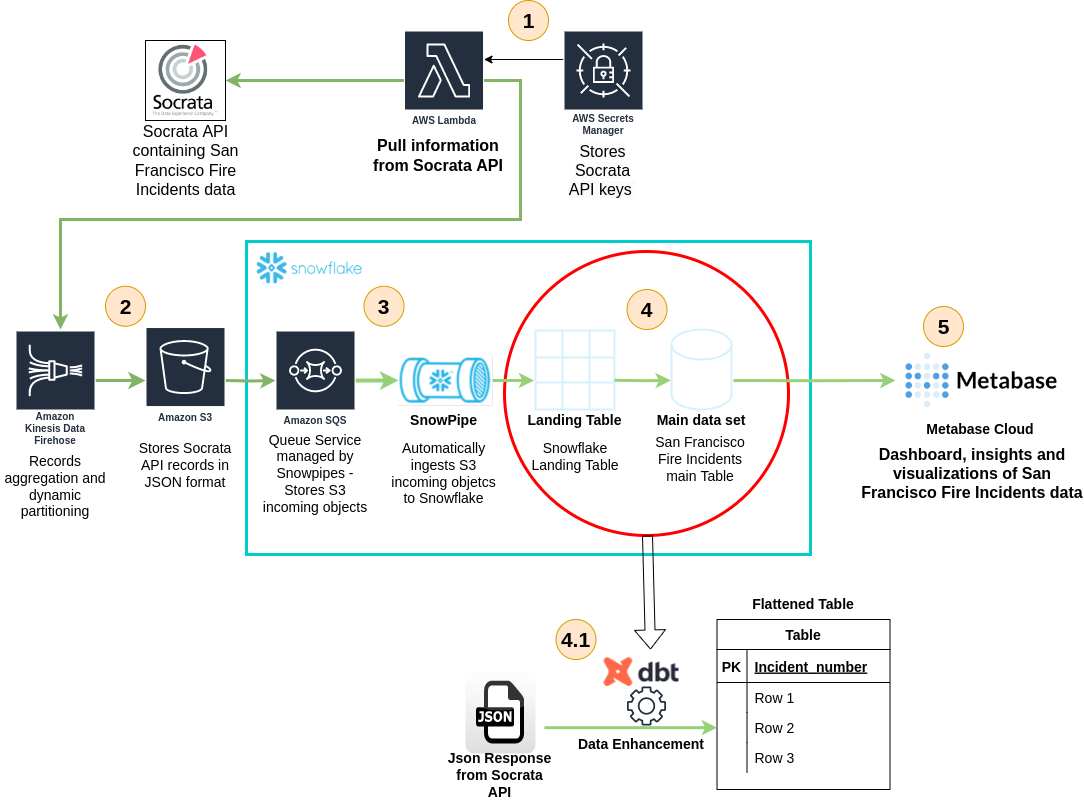

Given the large size of the fire incidents dataset, handling, transforming, and storing it becomes a real-world data engineering challenge. Luckily, Socrata provides a comprehensive API for fetching data programmatically using pagination and query statements. The chosen solution involves several stages outlined below.

Let us go through each stage of the data journey, one piece at a time in order to clarify what is happening on the image above.

A Python code snippet deployed within a Lambda function fetches data from Socrata using

AWS Secret Manager to retrieve API keys. This code retrieves 50,000 dataset records per request,

transforms geo-coordinate points within the JSON structure, and fetches missing points from

the Google Maps API using street addresses. The Lambda then aggregates batches of

500 records and sends them to Kinesis Data Firehose using the put_record_batch method from the

Firehose SDK API.

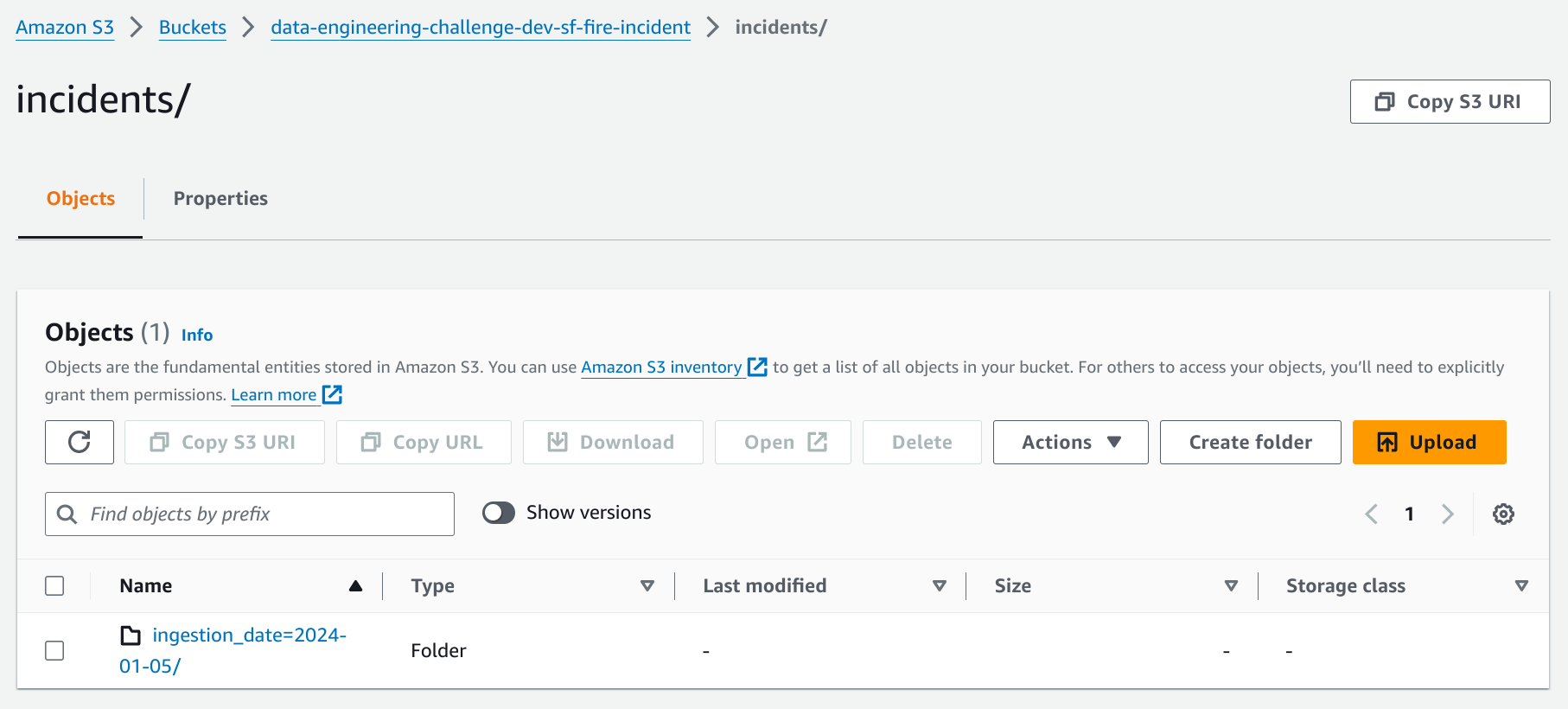

Firehose buffers records for 60 seconds or until 64MB chunks are reached. It dynamically partitions records by the files ingestion date and delivers them to S3. Check the image below to observe the partitioning of records in S3 facilitated by Firehose.

Snowflake is chosen as the data warehouse due to its high availability, seamless data sharing, impressive performance, and the absence of any manual maintenance requirements. To seamlessly ingest data from S3 into Snowflake, the Snowpipe mechanism is employed. Snowpipe enables loading data from files as soon as they’re available in a stage, ensuring data availability in micro-batches. Snowpipe makes the data available to users within minutes, rather than manually executing COPY statements on a schedule to load larger batches. This document explain step by step how snowpipe is configured. Within the context of this project, this file contains the commands utilized for setting up Snowpipe, facilitating the ingestion of San Francisco fire incident data into Snowflake

A dbt project is integrated to flatten incoming JSON files from Snowpipe.

This project, located in the data_engineering_challenge folder, includes a dbt

model that flattens the JSON files and builds an incremental table within Snowflake,

containing all 650k records and 64 columns from the SF fire incidents dataset.

The dbt project is automatically deployed to AWS using a CI/CD pipeline.

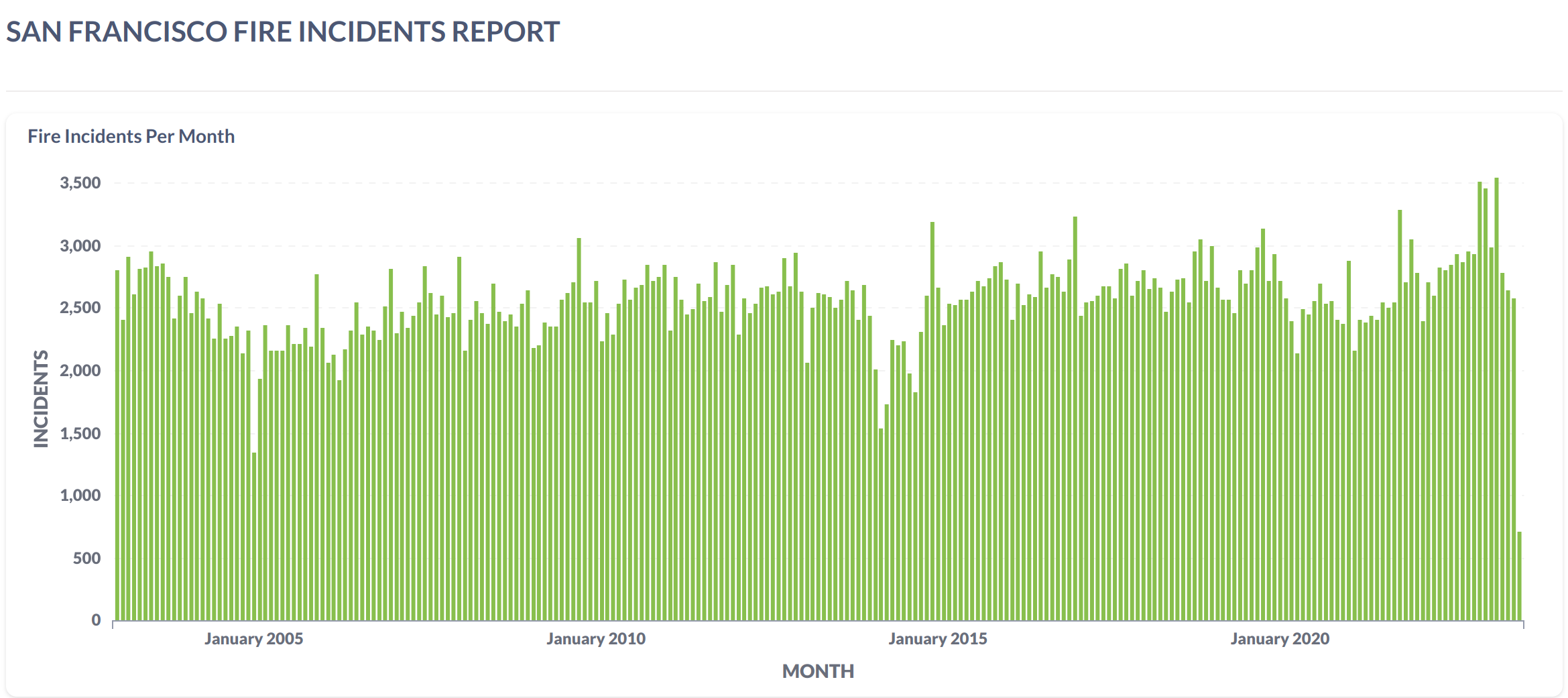

Metabase, an open-source visualization tool, is chosen for creating dashboards and exploring the SF fire incident data. For this challenge, Metabase Cloud is used to make the analysis public with minimal maintenance. Access the dashboards and visualizations here.

├── data_engineering_challenge

│ ├── analyses

│ ├── dbt_project.yml

│ ├── macros

│ ├── models

│ │ ├── analytics

│ │ │ ├── fire_incidents_per_year_battalion.sql

│ │ │ ├── fire_incidents_per_year_district.sql

│ │ │ └── fire_incidents_per_year.sql

│ │ ├── san_francisco_fire_incidents.sql

│ │ └── sources.yml

│ ├── profiles.yml

│ ├── README.md

│ ├── scripts

│ │ └── dbt_container_init_commands.sh

│ ├── seeds

│ ├── snapshots

│ └── tests

├── dbt.dockerfile

├── infra-as-code

│ ├── backend.tf

│ ├── dbt.compute.engine.settings.tf

│ ├── main.tf

│ ├── modules

│ │ ├── ecr

│ │ │ ├── main.tf

│ │ │ ├── outputs.tf

│ │ │ └── variables.tf

│ │ ├── ecs-cluster

│ │ │ ├── main.tf

│ │ │ ├── outputs.tf

│ │ │ ├── variables.tf

│ │ │ └── versions.tf

│ │ ├── ecs-task-definition

│ │ │ ├── logging

│ │ │ │ ├── main.tf

│ │ │ │ ├── outputs.tf

│ │ │ │ └── variables.tf

│ │ │ ├── main.tf

│ │ │ ├── outputs.tf

│ │ │ ├── permissions

│ │ │ │ ├── main.tf

│ │ │ │ ├── outputs.tf

│ │ │ │ └── variables.tf

│ │ │ └── variables.tf

│ │ ├── kinesis-data-firehose

│ │ │ ├── main.tf

│ │ │ ├── observability

│ │ │ │ ├── main.tf

│ │ │ │ ├── variables.tf

│ │ │ │ └── versions.tf

│ │ │ ├── outputs.tf

│ │ │ ├── permissions

│ │ │ │ ├── main.tf

│ │ │ │ ├── outputs.tf

│ │ │ │ ├── variables.tf

│ │ │ │ └── versions.tf

│ │ │ ├── variables.tf

│ │ │ └── versions.tf

│ │ ├── lambda

│ │ │ ├── cloudwatch-trigger

│ │ │ │ ├── main.tf

│ │ │ │ ├── outputs.tf

│ │ │ │ ├── variables.tf

│ │ │ │ └── versions.tf

│ │ │ ├── invoker

│ │ │ │ ├── main.tf

│ │ │ │ ├── variables.tf

│ │ │ │ └── versions.tf

│ │ │ ├── main.tf

│ │ │ ├── observability

│ │ │ │ ├── main.tf

│ │ │ │ ├── variables.tf

│ │ │ │ └── versions.tf

│ │ │ ├── outputs.tf

│ │ │ ├── package

│ │ │ │ ├── main.tf

│ │ │ │ ├── outputs.tf

│ │ │ │ ├── variables.tf

│ │ │ │ └── versions.tf

│ │ │ ├── variables.tf

│ │ │ └── versions.tf

│ │ ├── lambda-layer

│ │ │ ├── build

│ │ │ │ ├── main.tf

│ │ │ │ ├── outputs.tf

│ │ │ │ ├── variables.tf

│ │ │ │ └── versions.tf

│ │ │ ├── main.tf

│ │ │ ├── outputs.tf

│ │ │ ├── variables.tf

│ │ │ └── versions.tf

│ │ ├── permissions

│ │ │ ├── lambda-dbt-trigger-service-role

│ │ │ │ ├── main.tf

│ │ │ │ ├── outputs.tf

│ │ │ │ └── variables.tf

│ │ │ ├── lambda-fetcher-service-role

│ │ │ │ ├── main.tf

│ │ │ │ ├── outputs.tf

│ │ │ │ └── variables.tf

│ │ │ └── s3-access-role-for-snowflake

│ │ │ ├── main.tf

│ │ │ ├── outputs.tf

│ │ │ ├── variables.tf

│ │ │ └── versions.tf

│ │ ├── s3

│ │ │ ├── main.tf

│ │ │ ├── outputs.tf

│ │ │ ├── variables.tf

│ │ │ └── versions.tf

│ │ └── vpc-resources

│ │ ├── elastic-ip

│ │ │ ├── main.tf

│ │ │ ├── outputs.tf

│ │ │ └── variables.tf

│ │ ├── internet-gateway

│ │ │ ├── main.tf

│ │ │ ├── outputs.tf

│ │ │ └── variables.tf

│ │ ├── nat-gateway

│ │ │ ├── main.tf

│ │ │ ├── outputs.tf

│ │ │ └── variables.tf

│ │ ├── route-table

│ │ │ ├── main.tf

│ │ │ ├── outputs.tf

│ │ │ └── variables.tf

│ │ ├── security-group

│ │ │ ├── main.tf

│ │ │ ├── outputs.tf

│ │ │ └── variables.tf

│ │ ├── subnet

│ │ │ ├── main.tf

│ │ │ ├── outputs.tf

│ │ │ └── variables.tf

│ │ └── vpc

│ │ ├── main.tf

│ │ ├── outputs.tf

│ │ └── variables.tf

│ ├── s3.bucket.settings.tf

│ ├── variables.default.tf

│ └── vpc.settings.tf

├── lambda-code

│ ├── dbt_trigger.py

│ └── fetcher.py

├── Pipfile

├── Pipfile.lock

├── README.md

├── requirements_dbt.txt

├── requirements.txt

└── utils

├── images

│ ├── dashboard.png

│ ├── data-engineering-challenge.png

│ ├── dbt_deployment_on_ECS_Fargte_architecture.png

│ ├── deployment_pipeline_snapshot.png

│ ├── s3_bucket_snapshot.png

│ └── vpc_configuration.png

├── lambda-deployment-packages

│ └── lambda-layer.zip

├── lambda_layer_builder.sh

└── snowpipe_creation.sql2.1. Clone this repository:

git clone git@github.com:gpass0s/data-engineering-challenge.git2.2. Access the project's "infra-as-code" folder:

cd data-engineering-challenge/infra-as-code2.3. Access the project's "infra-as-code" folder:

export AWS_ACCESS_KEY_ID=<your-aws-access-key-id>

export AWS_SECRET_ACCESS_KEY=<your-aws-secret-access-key>

export AWS_DEFAULT_REGION=<your-aws-region>2.4. Set the Terraform:

terraform init --backend-config="<your-bucket>"2.5. Set the Terraform Environment:

terraform workspace select $ENV || terraform workspace new $ENV2.6. Create a Terraform plan:

terraform plan2.7. Apply the terraform project on your own AWS account:

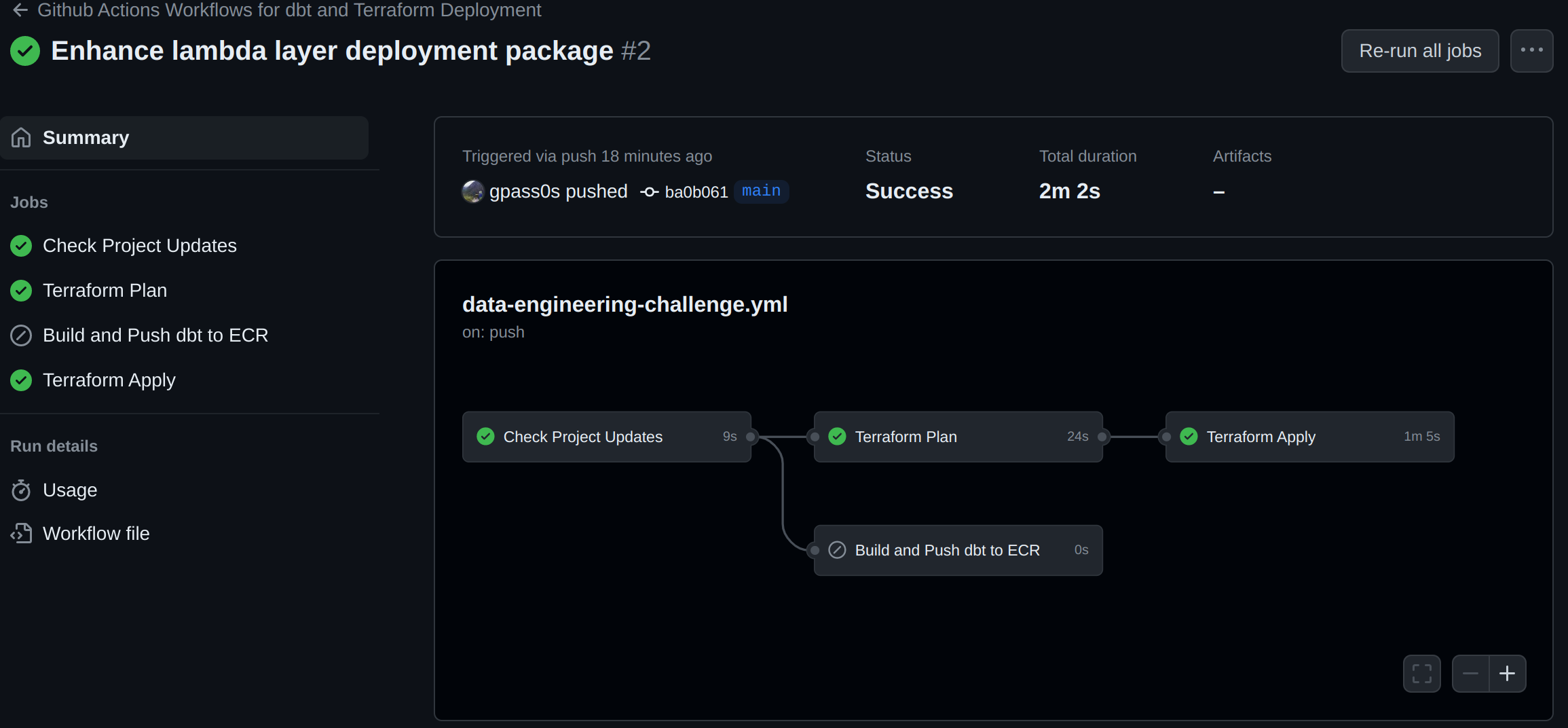

terraform apply -auto-approveThis repository employs GitHub Actions workflows to automate the deployment of both the Terraform infrastructure and dbt project on AWS. The workflow, outlined in this YAML file, orchestrates the seamless execution of deployment processes.

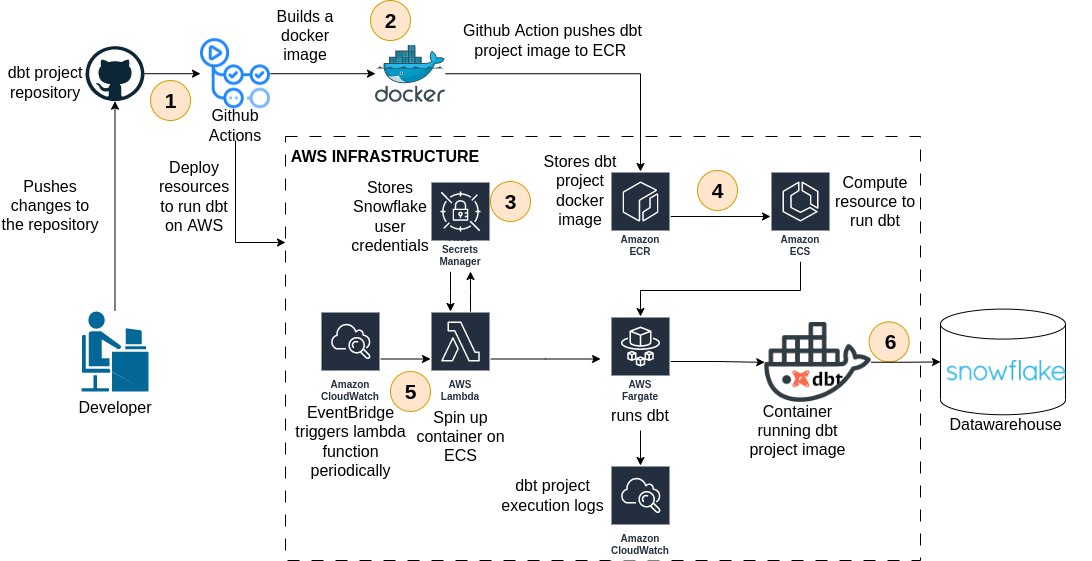

This repository implements a demo CI/CD pipeline designed to deploy the dbt project

on ECS Fargate through GitHub Actions automatically.

The pipeline is triggered whenever a developer pushes changes to the

data_engineering_challenge folder. Next, a GitHub Actions job starts by building

a docker image defined in the dbt.dockerfile located in the

main directory of this project. This job then pushes the image to an AWS ECR

repository and deploys an ECS cluster, ECS task definition in Fargate mode, and a

Lambda function. The Lambda function called dbt_trigger executes a Python code that retrieves Snowflake

credentials from AWS Secret Manager and spins up the dbt project container on ECS.

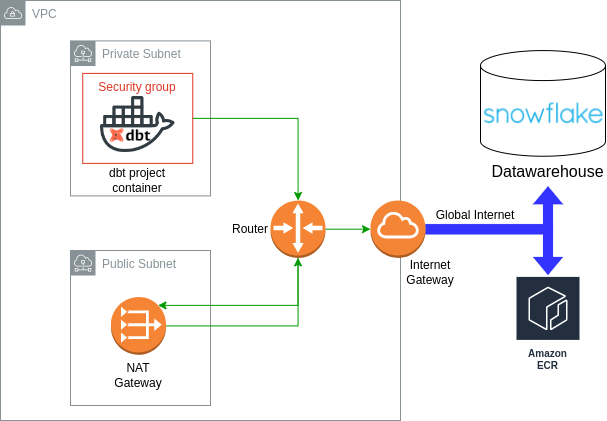

This section address the essential networking configurations required for running dbt containers on ECS. As depicted in Figure 8, the ECS task definition specifies its network mode parameter as “awsvpc”, indicating that the task container must operate within a subnet and be governed by a security group regulating its inbound and outbound traffic. Opting for a private subnet deployment involves setting up a NAT gateway, a public subnet, and an internet gateway to ensure connectivity from the private subnet to the global internet. Note that communication between the dbt containers and the Snowflake data warehouse account traverses the global internet.