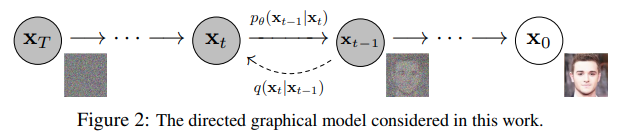

Implementation of Denoising Diffusion Probabilistic Models in PyTorch. It is a new approach to generative modeling that may have the potential to rival GANs. It uses denoising score matching to estimate the gradient of the log-density of the data distribution (the score), followed by Langevin sampling to draw samples from the learned approximation of that distribution.
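In the DDPM formulation, the "denoising" part is trained by corrupting an image with Gaussian noise at a random timestep and asking the network to predict that noise; Ho et al. note that this simplified objective resembles denoising score matching over multiple noise scales. A minimal sketch of that objective, assuming the paper's linear β schedule (1e-4 to 0.02 over 1000 steps). The helpers `q_sample` and `denoising_loss` and the stand-in network `ToyEpsModel` are hypothetical illustrations, not this library's internals.

```python
import torch
import torch.nn.functional as F

# Linear beta schedule from Ho et al. (2020): beta_1 = 1e-4 up to beta_T = 0.02.
T = 1000
betas = torch.linspace(1e-4, 0.02, T)
alphas_cumprod = torch.cumprod(1.0 - betas, dim=0)  # \bar{alpha}_t for each timestep

def q_sample(x0, t, noise):
    """Closed-form forward process: x_t = sqrt(abar_t) * x0 + sqrt(1 - abar_t) * noise."""
    abar = alphas_cumprod[t].view(-1, 1, 1, 1)  # one value per image, broadcast over (C, H, W)
    return abar.sqrt() * x0 + (1.0 - abar).sqrt() * noise

def denoising_loss(eps_model, x0, loss_type="l1"):
    """Hypothetical helper: pick a random timestep per image, noise it, regress the added noise."""
    t = torch.randint(0, T, (x0.shape[0],), device=x0.device)
    noise = torch.randn_like(x0)
    pred = eps_model(q_sample(x0, t, noise), t)
    if loss_type == "l1":
        return F.l1_loss(pred, noise)
    return F.mse_loss(pred, noise)  # "l2"

class ToyEpsModel(torch.nn.Module):
    """Hypothetical stand-in for the U-Net: a single conv that ignores the timestep."""
    def __init__(self, channels=3):
        super().__init__()
        self.conv = torch.nn.Conv2d(channels, channels, 3, padding=1)

    def forward(self, x, t):
        return self.conv(x)

x0 = torch.rand(8, 3, 32, 32) * 2 - 1  # toy images scaled to [-1, 1]
loss = denoising_loss(ToyEpsModel(), x0, loss_type="l1")
loss.backward()
```

The `timesteps` and `loss_type` arguments in the usage examples correspond to `T` and the L1/L2 choice in this sketch.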

This implementation was transcribed from the official TensorFlow implementation.
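Ho et al. observe that DDPM's ancestral sampling procedure resembles Langevin dynamics, with the noise-prediction network acting as a learned gradient of the data density. A minimal sketch of that reverse loop, assuming the same linear β schedule and the variance choice σ_t² = β_t. To keep it checkable without training, the network is replaced by a hypothetical `oracle_eps`: when the data distribution is itself N(0, I), the optimal noise prediction is exactly √(1 − ᾱ_t)·x_t, so the sampler should return standard-normal samples.

```python
import torch

T = 1000
betas = torch.linspace(1e-4, 0.02, T)
alphas = 1.0 - betas
alphas_cumprod = torch.cumprod(alphas, dim=0)

@torch.no_grad()
def p_sample_loop(eps_model, shape):
    """Ancestral sampling: start from pure noise and denoise one step at a time."""
    x = torch.randn(shape)
    for t in reversed(range(T)):
        eps = eps_model(x, t)
        # Mean of p(x_{t-1} | x_t): (x_t - beta_t / sqrt(1 - abar_t) * eps) / sqrt(alpha_t)
        mean = (x - betas[t] / (1.0 - alphas_cumprod[t]).sqrt() * eps) / alphas[t].sqrt()
        if t > 0:
            x = mean + betas[t].sqrt() * torch.randn_like(x)  # sigma_t^2 = beta_t
        else:
            x = mean  # no noise is added at the final step
    return x

def oracle_eps(x_t, t):
    """Hypothetical exact noise predictor for N(0, I) data: E[eps | x_t] = sqrt(1 - abar_t) * x_t."""
    return (1.0 - alphas_cumprod[t]).sqrt() * x_t

torch.manual_seed(0)
samples = p_sample_loop(oracle_eps, (10000,))
print(samples.mean().item(), samples.std().item())  # expected to be close to 0 and 1
```

Practical implementations often also clip the predicted clean image to [-1, 1] at each step; this sketch omits that.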

```bash
$ pip install denoising_diffusion_pytorch
```

```python
import torch
from denoising_diffusion_pytorch import Unet, GaussianDiffusion

model = Unet(
    dim = 64,
    dim_mults = (1, 2, 4, 8)
)

diffusion = GaussianDiffusion(
    model,
    image_size = 128,
    timesteps = 1000,   # number of steps
    loss_type = 'l1'    # L1 or L2
)

training_images = torch.randn(8, 3, 128, 128) # random placeholder batch; real images must be normalized to the range [-1, 1]
loss = diffusion(training_images)
loss.backward()
# after a lot of training

sampled_images = diffusion.sample(batch_size = 4)
sampled_images.shape # (4, 3, 128, 128)
```

Or, if you simply want to pass in a folder name and the desired image dimensions, you can use the `Trainer` class to easily train a model.

```python
from denoising_diffusion_pytorch import Unet, GaussianDiffusion, Trainer

model = Unet(
    dim = 64,
    dim_mults = (1, 2, 4, 8)
).cuda()

diffusion = GaussianDiffusion(
    model,
    image_size = 128,
    timesteps = 1000,   # number of steps
    loss_type = 'l1'    # L1 or L2
).cuda()

trainer = Trainer(
    diffusion,
    'path/to/your/images',
    train_batch_size = 32,
    train_lr = 1e-4,
    train_num_steps = 700000,         # total training steps
    gradient_accumulate_every = 2,    # gradient accumulation steps
    ema_decay = 0.995,                # exponential moving average decay
    amp = True                        # turn on mixed precision
)

trainer.train()
```

Samples and model checkpoints will be logged to `./results` periodically.
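The `gradient_accumulate_every` and `ema_decay` options refer to two standard training techniques. A minimal sketch of both, assuming a hypothetical toy linear model and random data in place of `GaussianDiffusion` and an image folder; it is not the library's `Trainer` code, which additionally handles data loading, mixed precision and checkpointing.

```python
import copy
import torch
import torch.nn.functional as F

torch.manual_seed(0)
model = torch.nn.Linear(4, 1)                               # hypothetical stand-in model
ema_model = copy.deepcopy(model).requires_grad_(False)      # EMA copy, never trained directly
opt = torch.optim.Adam(model.parameters(), lr=1e-4)

ema_decay = 0.995
gradient_accumulate_every = 2

def train_step(batches):
    """One optimizer step built from `gradient_accumulate_every` micro-batches."""
    for x, y in batches:
        loss = F.mse_loss(model(x), y)
        (loss / gradient_accumulate_every).backward()  # gradients add up, so scale to an average
    opt.step()
    opt.zero_grad()
    # EMA update: ema = decay * ema + (1 - decay) * current weights
    with torch.no_grad():
        for p_ema, p in zip(ema_model.parameters(), model.parameters()):
            p_ema.lerp_(p, 1.0 - ema_decay)

batches = [(torch.randn(16, 4), torch.randn(16, 1)) for _ in range(gradient_accumulate_every)]
before = [p.clone() for p in ema_model.parameters()]
train_step(batches)
```

Accumulating two micro-batches gives the gradient of a batch twice as large without the extra memory, and the slowly moving EMA weights are typically the ones used for sampling.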

```bibtex
@inproceedings{NEURIPS2020_4c5bcfec,
    author    = {Ho, Jonathan and Jain, Ajay and Abbeel, Pieter},
    booktitle = {Advances in Neural Information Processing Systems},
    editor    = {H. Larochelle and M. Ranzato and R. Hadsell and M.F. Balcan and H. Lin},
    pages     = {6840--6851},
    publisher = {Curran Associates, Inc.},
    title     = {Denoising Diffusion Probabilistic Models},
    url       = {https://proceedings.neurips.cc/paper/2020/file/4c5bcfec8584af0d967f1ab10179ca4b-Paper.pdf},
    volume    = {33},
    year      = {2020}
}
```

```bibtex
@InProceedings{pmlr-v139-nichol21a,
    title     = {Improved Denoising Diffusion Probabilistic Models},
    author    = {Nichol, Alexander Quinn and Dhariwal, Prafulla},
    booktitle = {Proceedings of the 38th International Conference on Machine Learning},
    pages     = {8162--8171},
    year      = {2021},
    editor    = {Meila, Marina and Zhang, Tong},
    volume    = {139},
    series    = {Proceedings of Machine Learning Research},
    month     = {18--24 Jul},
    publisher = {PMLR},
    pdf       = {http://proceedings.mlr.press/v139/nichol21a/nichol21a.pdf},
    url       = {https://proceedings.mlr.press/v139/nichol21a.html}
}
```