Official experiment implementation of the following paper:

Deep Learning Theory Review: An Optimal Control and Dynamical Systems Perspective.

The repo reproduces some of the results from the Deep Information Propagation paper. However, it is not the official release of DIP. Most APIs in meanfield.py are consistent with the mean-field-cnns repo.

Contributions are welcome. Please cite the following BibTeX entry if you find this repo helpful.

@article{Liu2019DeepLT,
  title={Deep Learning Theory Review: An Optimal Control and Dynamical Systems Perspective},
  author={Liu, Guan-Horng and Theodorou, Evangelos A.},
  journal={arXiv preprint arXiv:1908.10920},
  year={2019}
}

- Python3

- PyTorch

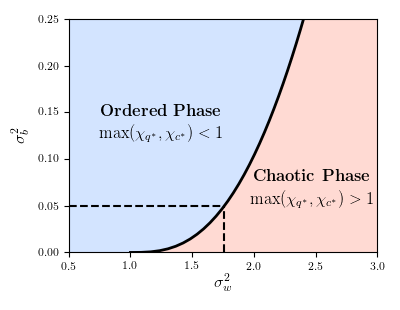

python3 plot-phase-diagram.py

Result:

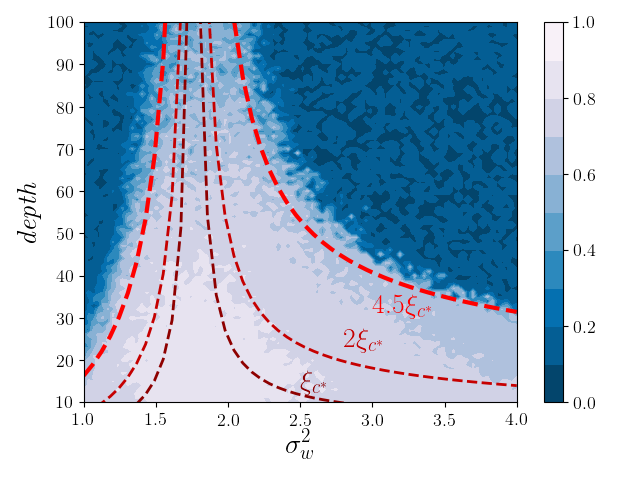
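For orientation, here is a minimal sketch of the quantity that an order-to-chaos phase diagram is usually built from in the Deep Information Propagation setting. It is not taken from `plot-phase-diagram.py`: it assumes a fully connected tanh network with weight variance `sw2` and bias variance `sb2`, and the helper names (`gauss_expect`, `fixed_point_variance`, `chi_1`) are hypothetical. The fixed-point variance q* is found by iterating the mean-field length map, and the sign of chi_1 - 1 separates the ordered (chi_1 < 1) from the chaotic (chi_1 > 1) phase.

```python
import math


def gauss_expect(f, n=801, lim=8.0):
    """Approximate E[f(z)] for z ~ N(0, 1) with the trapezoidal rule on [-lim, lim]."""
    h = 2 * lim / (n - 1)
    total = 0.0
    for i in range(n):
        z = -lim + i * h
        w = 0.5 if i in (0, n - 1) else 1.0  # trapezoid end-point weights
        total += w * f(z) * math.exp(-0.5 * z * z)
    return total * h / math.sqrt(2 * math.pi)


def fixed_point_variance(sw2, sb2, iters=100, q0=1.0):
    """Iterate q <- sw2 * E[tanh(sqrt(q) z)^2] + sb2 (assumed tanh length map)."""
    q = q0
    for _ in range(iters):
        s = math.sqrt(q)
        q = sw2 * gauss_expect(lambda z: math.tanh(s * z) ** 2) + sb2
    return q


def chi_1(sw2, sb2):
    """chi_1 = sw2 * E[phi'(sqrt(q*) z)^2], with phi = tanh and phi' = 1 - tanh^2."""
    s = math.sqrt(fixed_point_variance(sw2, sb2))
    return sw2 * gauss_expect(lambda z: (1.0 - math.tanh(s * z) ** 2) ** 2)


if __name__ == "__main__":
    for sw2, sb2 in [(0.5, 0.1), (4.0, 0.01)]:
        phase = "ordered" if chi_1(sw2, sb2) < 1.0 else "chaotic"
        print(f"sw2={sw2}, sb2={sb2}: {phase}")
```

Sweeping `sw2` and `sb2` over a grid and colouring each point by the sign of chi_1 - 1 would give a diagram of this kind. The actual script may use a different activation, quadrature, or parameter range.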

python3 plot-trainability.py \
    --depth-scale-file 'depth_scale.npz' \
    --train-acc-file 'train_acc.npz'

- You can pass `--depth-scale-file` and `--train-acc-file` to load pre-computed results; otherwise the script runs the full experiment from scratch.

- Other arguments for the training hyper-parameters (e.g. learning rate, batch size) can be found in `plot-trainability.py`.
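The load-or-recompute behaviour of the two file flags can be sketched roughly as follows. This is an illustration only, not the code in `plot-trainability.py`: the flag names come from the command above, while `parse_args` and `plan` are hypothetical helpers, and it is an assumption that each result falls back to recomputation independently.

```python
import argparse


def parse_args(argv=None):
    """Parse the two optional result-file flags (names taken from the README command)."""
    parser = argparse.ArgumentParser(description="Sketch of the pre-computed-result flags")
    parser.add_argument("--depth-scale-file", default=None,
                        help="path to a saved depth-scale result (e.g. an .npz file)")
    parser.add_argument("--train-acc-file", default=None,
                        help="path to a saved training-accuracy result (e.g. an .npz file)")
    return parser.parse_args(argv)


def plan(args):
    """Map each result to 'load <path>' if a file was given, else 'recompute'."""
    files = {
        "depth_scale": args.depth_scale_file,
        "train_acc": args.train_acc_file,
    }
    return {name: ("load " + path if path else "recompute")
            for name, path in files.items()}


if __name__ == "__main__":
    print(plan(parse_args()))
```

In a real script, the "load" branch would typically read the file with `numpy.load` and the "recompute" branch would run the experiment and could save its output with `numpy.savez` for later runs.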

Result: