Experimental usage of stable-fast and TensorRT.

Note

Official TensorRT node: https://github.com/comfyanonymous/ComfyUI_TensorRT

This project is no longer active: stable-fast itself is no longer active, and it is difficult for a third-party node to implement flexible TensorRT support for ComfyUI.

git clone https://github.com/gameltb/ComfyUI_stable_fast custom_nodes/ComfyUI_stable_fast

You'll need to follow the guide below to enable the stable-fast node.

Note

Requires stable-fast >= 1.0.0.
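One way to confirm the version requirement before loading the node is a small script like the sketch below. It assumes the package is registered under the distribution name `stable-fast`, and it compares only the leading numeric part of the version, so a local suffix such as `+torch211cu121` is ignored. The helper names `parse_release` and `meets_minimum` are illustrative and not part of stable-fast.

```python
import re
from importlib import metadata


def parse_release(version: str) -> tuple:
    """Return the leading numeric release, e.g. '1.0.0+torch211' -> (1, 0, 0)."""
    match = re.match(r"\d+(?:\.\d+)*", version)
    if match is None:
        raise ValueError(f"unrecognized version string: {version!r}")
    return tuple(int(part) for part in match.group().split("."))


def meets_minimum(installed: str, minimum: str = "1.0.0") -> bool:
    """Compare zero-padded release tuples; suffixes after the numbers are ignored."""
    a, b = parse_release(installed), parse_release(minimum)
    width = max(len(a), len(b))
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a >= b


if __name__ == "__main__":
    try:
        # Assumption: the distribution name is "stable-fast".
        installed = metadata.version("stable-fast")
    except metadata.PackageNotFoundError:
        print("stable-fast is not installed")
    else:
        status = "OK" if meets_minimum(installed) else "older than 1.0.0"
        print(f"stable-fast {installed}: {status}")
```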

Note

Currently tested only on Linux; not tested on Windows.

The following needs to be installed when you use TensorRT.

pip install onnx zstandard

pip install --pre --upgrade --extra-index-url https://pypi.nvidia.com tensorrt==10.0.1

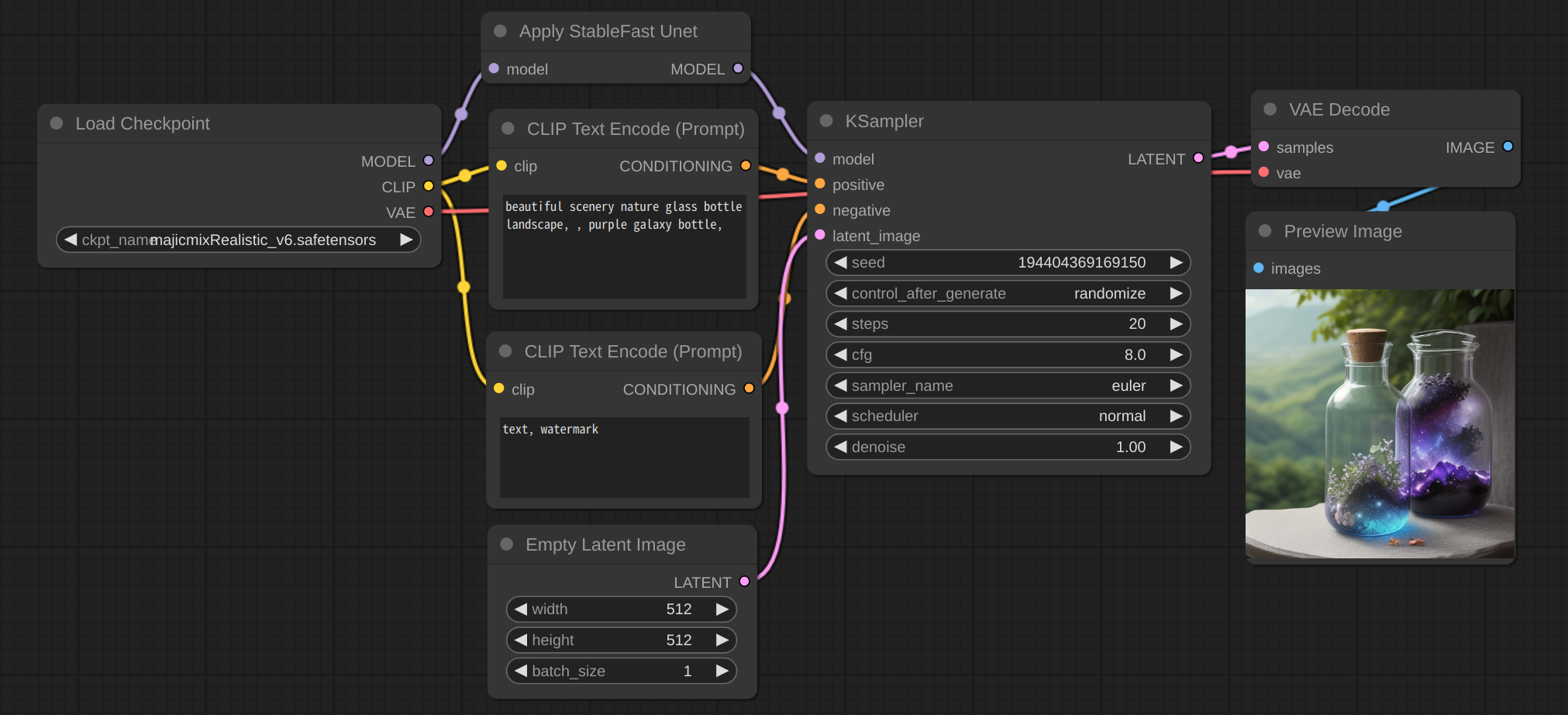

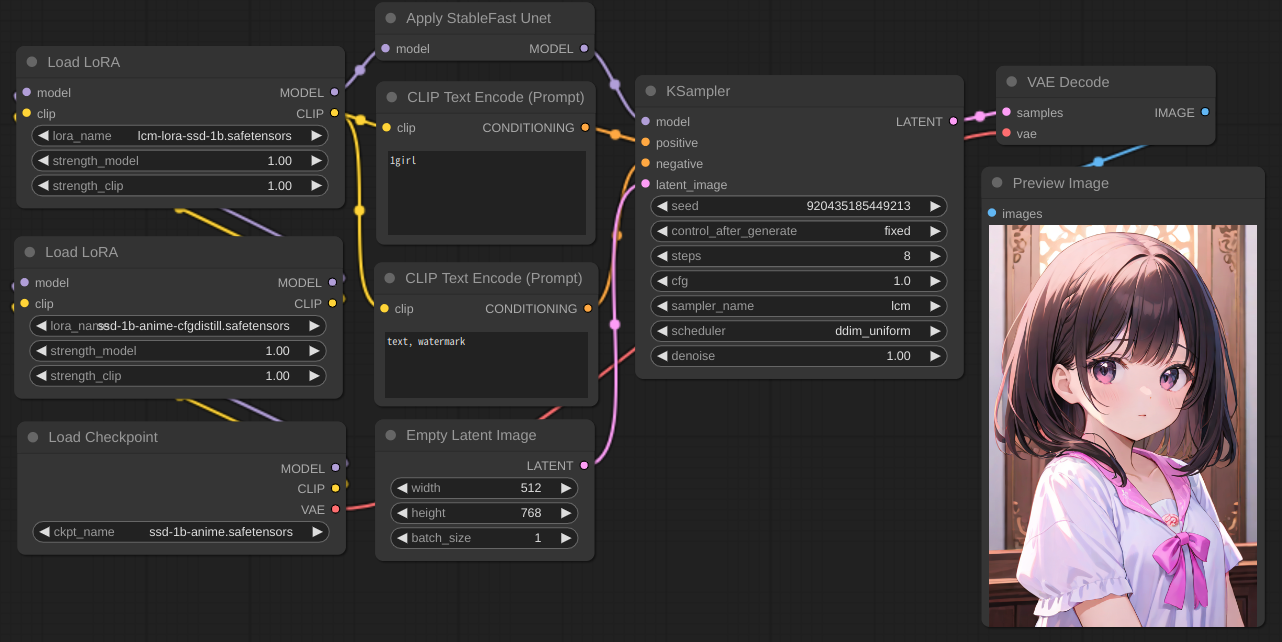

pip install onnx-graphsurgeon polygraphy --extra-index-url https://pypi.ngc.nvidia.comPlease refer to the screenshot
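Before using the TensorRT node, it may help to confirm that the packages from the pip commands above can actually be found by Python. The sketch below assumes the usual import names for those packages (`onnx_graphsurgeon` for `onnx-graphsurgeon`, and so on); `missing_modules` is a hypothetical helper, not part of this project.

```python
import importlib.util

# Assumed import names for the pip packages listed in the install commands.
TENSORRT_MODULES = ["onnx", "zstandard", "tensorrt", "onnx_graphsurgeon", "polygraphy"]


def missing_modules(names):
    """Return the module names that cannot be located in the current environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]


missing = missing_modules(TENSORRT_MODULES)
print("missing:", ", ".join(missing) if missing else "none")
```

Run it with the same Python interpreter that launches ComfyUI, since a different virtual environment can give a different answer.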

It works with LoRA, ControlNet, and LCM. SD1.5 and SSD-1B are supported. SDXL should work.

Running ComfyUI with `--disable-cuda-malloc` may improve speed further.

Note

- FreeU and PatchModelAddDownscale are now supported experimentally; just use the ComfyUI nodes as usual.

- stable-fast does not work well with accelerate, so this node has no effect when VRAM is low, for example when running SDXL on a card with 6 GB of VRAM.

- stable-fast speeds up generation from the second run onward with the same model. If you switch models or LoRAs frequently, consider disabling `enable_cuda_graph`.

- It is best to connect the `Apply StableFast Unet` node directly to the `KSampler` node, with no nodes between them that change the weights, such as the `Load LoRA` node. Some nodes, such as the `FreeU` node, can be placed between them to prevent useless recompilation when their parameters are modified. You can try other nodes, but I can't guarantee that they will work properly.

Run ComfyUI with `--disable-xformers --force-fp16 --fp16-vae` and use `Apply TensorRT Unet` the same way as `Apply StableFast Unet`.
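The flags mentioned so far can be collected in one place, as in the sketch below. The flags themselves come from this README; grouping them per backend, the `BACKEND_FLAGS` name, and the `comfyui_command` helper are illustrative only. The sketch also assumes ComfyUI is started through `main.py` from the ComfyUI directory.

```python
import sys

# Flags from this README, grouped per backend for illustration.
BACKEND_FLAGS = {
    "stable_fast": ["--disable-cuda-malloc"],
    "tensorrt": ["--disable-xformers", "--force-fp16", "--fp16-vae"],
}


def comfyui_command(backend, entry_point="main.py"):
    """Build the argv list for launching ComfyUI with the flags for one backend."""
    if backend not in BACKEND_FLAGS:
        raise ValueError(f"unknown backend: {backend!r}")
    return [sys.executable, entry_point, *BACKEND_FLAGS[backend]]


print(" ".join(comfyui_command("tensorrt")))
```

The returned list can be passed to `subprocess.run` as is, which avoids shell quoting issues.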

The Engine will be cached in tensorrt_engine_cache.

Note

- If you encounter an error after updating, you can try deleting `tensorrt_engine_cache`.
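Clearing the cache can also be scripted. The sketch below assumes `tensorrt_engine_cache` is a directory and leaves its location to the caller, since this README does not say where it is created. `clear_engine_cache` is a hypothetical helper name.

```python
import shutil
from pathlib import Path


def clear_engine_cache(cache_dir) -> bool:
    """Delete the cache directory and its contents; return True if something was removed."""
    path = Path(cache_dir)
    if not path.is_dir():
        # Nothing to do: the cache has not been created yet or was already removed.
        return False
    shutil.rmtree(path)
    return True


if __name__ == "__main__":
    # Assumption: the script runs from the directory that contains the cache.
    removed = clear_engine_cache("tensorrt_engine_cache")
    print("cache removed" if removed else "no cache found")
```

Expect the next TensorRT launch to rebuild the engine, which takes longer than a cached start.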

| | Stable Fast | TensorRT(UNET) | TensorRT(UNET_BLOCK) |
|---|---|---|---|
| SD1.5 | ✓ | ✓ | ✓ |
| SDXL | untested (should work) | WIP | untested |
| SSD-1B | ✓ | WIP | ✓ |
| Lora | ✓ | ✓ | ✓ |
| ControlNet Unet | ✓ | ✓ | ✓ |
| VAE decode | WIP | ✓ | ✓ |
| ControlNet Model | WIP | WIP | WIP |

| | Stable Fast | TensorRT(UNET) | TensorRT(UNET_BLOCK) |
|---|---|---|---|
| Load LoRA | ✓ | ✓ | ✓ |
| FreeU(FreeU_V2) | ✓ | ✗ | ✓ |
| PatchModelAddDownscale | ✓ | WIP | ✓ |

GeForce RTX 3060 Mobile (80W) 6GB, Linux, torch 2.1.1, stable fast 0.0.14, tensorrt 9.2.0.post12.dev5, xformers 0.0.23.

workflow: SD1.5, 512x512, batch_size 1, euler_ancestral karras, 20 steps, fp16.

The Stable Fast and xformers tests ran ComfyUI with `--disable-cuda-malloc`.

The TensorRT and pytorch tests ran ComfyUI with `--disable-xformers`.

On the first TensorRT launch, building the engine takes up to 10 minutes. With the timing cache, this drops to about 2–3 minutes; with the engine cache, it currently drops to about 20–30 seconds.

| workflow | Stable Fast (enable_cuda_graph) | TensorRT (UNET) | TensorRT (UNET_BLOCK) | pytorch cross attention | xformers |
|---|---|---|---|---|---|
| | 10.10 it/s | 10.95 it/s | 10.66 it/s | 7.02 it/s | 7.90 it/s |
| enable FreeU | 9.42 it/s | ✗ | 10.04 it/s | 6.75 it/s | 7.54 it/s |
| enable Patch Model Add Downscale | 10.81 it/s | ✗ | 11.30 it/s | 7.46 it/s | 8.41 it/s |
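To read the throughput numbers as relative speedups, the base-workflow row can be divided by a chosen baseline. The sketch below copies the it/s values from the table above; the `ITS` dictionary and `speedup` helper are illustrative names only.

```python
# Average it/s for the base workflow, copied from the table above.
ITS = {
    "Stable Fast (enable_cuda_graph)": 10.10,
    "TensorRT (UNET)": 10.95,
    "TensorRT (UNET_BLOCK)": 10.66,
    "pytorch cross attention": 7.02,
    "xformers": 7.90,
}


def speedup(backend, baseline="xformers"):
    """Return the throughput ratio of one backend over the baseline backend."""
    return ITS[backend] / ITS[baseline]


for name in ITS:
    print(f"{name}: {speedup(name):.2f}x vs xformers")
```

On this hardware and workflow, Stable Fast comes out at roughly 1.28x xformers and TensorRT (UNET) at roughly 1.39x. These ratios apply only to the single configuration listed above.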

| workflow | Stable Fast (enable_cuda_graph) | TensorRT (UNET) | TensorRT (UNET_BLOCK) | pytorch cross attention | xformers |
|---|---|---|---|---|---|
| | 2.21s (first 17s) | 2.05s | 2.10s | 3.06s | 2.76s |
| enable FreeU | 2.35s (first 18.5s) | ✗ | 2.24s | 3.18s | 2.88s |
| enable Patch Model Add Downscale | 2.08s (first 31.37s) | ✗ | 2.03s | 2.89s | 2.61s |
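The "first" timings show why switching models or LoRAs frequently can cancel out the gain from stable fast: the slow first run has to be paid back over later images. The sketch below estimates the break-even point under two assumptions that this README does not state: that "first" is the total time of the first image, and that the baseline has no warm-up cost of its own. `break_even_images` is a hypothetical helper.

```python
def break_even_images(first_s, steady_s, baseline_s, limit=10_000):
    """Smallest image count at which the warmed-up backend is no slower in total.

    first_s:    time of the first image, including compilation
    steady_s:   time of every later image
    baseline_s: time per image for the backend being compared against
    Returns None if the break-even point is not reached within `limit` images.
    """
    for n in range(1, limit + 1):
        warm_total = first_s + steady_s * (n - 1)
        if warm_total <= baseline_s * n:
            return n
    return None


# Base workflow: Stable Fast (first 17s, then 2.21s) against xformers (2.76s).
print(break_even_images(17.0, 2.21, 2.76))  # 27
```

With these numbers, Stable Fast only pulls ahead of xformers after about 27 images generated with the same model, so a session that changes models every few images may see no benefit.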