Experiments with coordinate-based MLPs, built on PyTorch Lightning

graph LR

1[x]-->3[P.E.]-->4[Linear layers + activation<br>256]-->5[Linear layers + activation<br>256]-->6[Linear layers + activation<br>256]-->7[Linear layers + Sigmoid<br>256]

2[y]-->3[P.E.]

7-->8[R]

7-->9[G]

7-->10[B]
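The diagram can be read as a function from a pixel coordinate to a colour. The following is a minimal, framework-free sketch of that forward pass, not the code in `train.py`: it assumes ReLU as the hidden activation, skips the positional encoding (so the input is just `(x, y)`), assumes a three-unit output layer for R, G, B, and uses a simple uniform initialisation. The names `init_layer` and `forward` are made up for illustration.

```python
import math
import random


def linear(x, weights, bias):
    """Dense layer: one output value per weight row."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, bias)]


def init_layer(n_in, n_out, rng):
    """Uniform init in [-1/sqrt(n_in), 1/sqrt(n_in)] (an assumption for this sketch)."""
    bound = 1.0 / math.sqrt(n_in)
    weights = [[rng.uniform(-bound, bound) for _ in range(n_in)] for _ in range(n_out)]
    bias = [rng.uniform(-bound, bound) for _ in range(n_out)]
    return weights, bias


def forward(features, layers):
    """Hidden layers use ReLU; the last layer uses a sigmoid so outputs lie in (0, 1)."""
    h = features
    for weights, bias in layers[:-1]:
        h = [max(0.0, v) for v in linear(h, weights, bias)]
    weights, bias = layers[-1]
    return [1.0 / (1.0 + math.exp(-v)) for v in linear(h, weights, bias)]


rng = random.Random(0)
hidden = 256
# (x, y) -> three hidden layers of width 256 -> (R, G, B)
sizes = [2, hidden, hidden, hidden, 3]
layers = [init_layer(n_in, n_out, rng) for n_in, n_out in zip(sizes[:-1], sizes[1:])]
rgb = forward([0.25, 0.75], layers)
print(rgb)
```

With a positional encoding in front, only the first entry of `sizes` changes: it becomes the length of the encoded feature vector.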


| Positional Encoding | Equation |
| --- | --- |
| Fourier feature mapping | $\gamma(v) = [..., \cos(2\pi \sigma^{\frac{j}{m}}v), \sin(2\pi \sigma^{\frac{j}{m}}v), ...]^T \ \text{for } j = 0, \dots, m-1$ |
| Fourier feature mapping (Gaussian distribution) | $\gamma(v) = [..., \cos(2\pi Bv), \sin(2\pi Bv), ...]^T \ \text{where each entry in } B \in \mathbb{R}^{m\times d} \text{ is sampled from } \mathcal{N}(0, \sigma^2)$ |
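Both mappings can be sketched in a few lines of plain Python. This is an illustration of the two equations, not the implementation used in this repo: the function names, the default values `m=4` and `sigma=10.0`, and the cos/sin interleaving order are all assumptions.

```python
import math
import random


def fourier_features(v, m=4, sigma=10.0):
    """Deterministic mapping: frequencies sigma^(j/m) for j = 0..m-1, applied per coordinate."""
    out = []
    for j in range(m):
        freq = 2.0 * math.pi * sigma ** (j / m)
        for coord in v:
            out.append(math.cos(freq * coord))
            out.append(math.sin(freq * coord))
    return out


def gaussian_fourier_features(v, B):
    """Random mapping: B is an m x d matrix whose entries are drawn from N(0, sigma^2)."""
    out = []
    for row in B:
        phase = 2.0 * math.pi * sum(b * coord for b, coord in zip(row, v))
        out.append(math.cos(phase))
        out.append(math.sin(phase))
    return out


rng = random.Random(0)
m, d, sigma = 4, 2, 10.0  # illustrative values only
B = [[rng.gauss(0.0, sigma) for _ in range(d)] for _ in range(m)]

xy = [0.25, 0.75]
enc_fixed = fourier_features(xy, m=m, sigma=sigma)  # length 2 * m * d
enc_random = gaussian_fourier_features(xy, B)       # length 2 * m
print(len(enc_fixed), len(enc_random))
```

`B` is sampled once and then kept fixed, so the same coordinate always maps to the same feature vector.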

| Activation function | Equation |
| --- | --- |
| ReLU | $\max(0,x)$ |
| Siren | $\sin(w_0\times Wx + b)$ |
| Gaussian | $e^{\frac{-0.5x^2}{a^2}}$ |
| Quadratic | $\frac{1}{1+(ax)^2}$ |
| Multi-Quadratic | $\frac{1}{\sqrt{1+(ax)^2}}$ |
| Laplacian | $e^{\frac{-\lvert x \rvert}{a}}$ |
| Super-Gaussian | $\left [ e^{\frac{-0.5x^2}{a^2}}\right ]^b$ |
| ExpSin | $e^{-\sin(ax)}$ |
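For reference, the table translates directly into scalar Python functions. This is only a sketch of the formulas: the default values for `a`, `b` and `w0` are placeholders chosen for illustration, not the settings used in the experiments.

```python
import math


def relu(x):
    return max(0.0, x)


def siren(wx, b=0.0, w0=30.0):
    """wx is the linear pre-activation W x; w0 = 30 is an assumed default."""
    return math.sin(w0 * wx + b)


def gaussian(x, a=1.0):
    return math.exp(-0.5 * x * x / (a * a))


def quadratic(x, a=1.0):
    return 1.0 / (1.0 + (a * x) ** 2)


def multi_quadratic(x, a=1.0):
    return 1.0 / math.sqrt(1.0 + (a * x) ** 2)


def laplacian(x, a=1.0):
    return math.exp(-abs(x) / a)


def super_gaussian(x, a=1.0, b=2.0):
    return gaussian(x, a) ** b


def exp_sin(x, a=1.0):
    return math.exp(-math.sin(a * x))


for fn in (relu, gaussian, quadratic, multi_quadratic, laplacian, super_gaussian, exp_sin):
    print(fn.__name__, fn(0.5))
```

Apart from ReLU, Siren and ExpSin, every function in the table peaks at 1 for `x = 0` and decays towards 0 as `|x|` grows, with `a` controlling the width of the bump.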

- Create a directory named "data/".

- Put your own images in "data/".

- Data used in my experiments: Pluto image (NASA)

# raw MLPs with ReLU activation function without positional encoding

python train.py --arch=relu --use_pe=False --exp_name=raw_mlps_800*800_1024

Run all experiments at once

# run exp with default setting: Image_wh=800*800 batch_size=1024

bash exp.sh

# run exp with setting: Image_wh=800*800 batch_size=800*800

bash exp_640000.sh
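The two settings differ a lot in how many optimizer updates happen per pass over the image. A small worked example, assuming each pixel is one training sample and one epoch visits every pixel exactly once (the training loop itself is not shown here, so this is an assumption):

```python
import math


def steps_per_epoch(num_pixels, batch_size):
    """Number of batches needed to visit every pixel once."""
    return math.ceil(num_pixels / batch_size)


num_pixels = 800 * 800  # 640000 pixels

print(steps_per_epoch(num_pixels, 1024))        # 625
print(steps_per_epoch(num_pixels, num_pixels))  # 1
```

Under that assumption, the same number of epochs gives 625 times more parameter updates with a batch size of 1024 than with the full-image batch, which is worth keeping in mind when comparing the two columns of the result tables.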

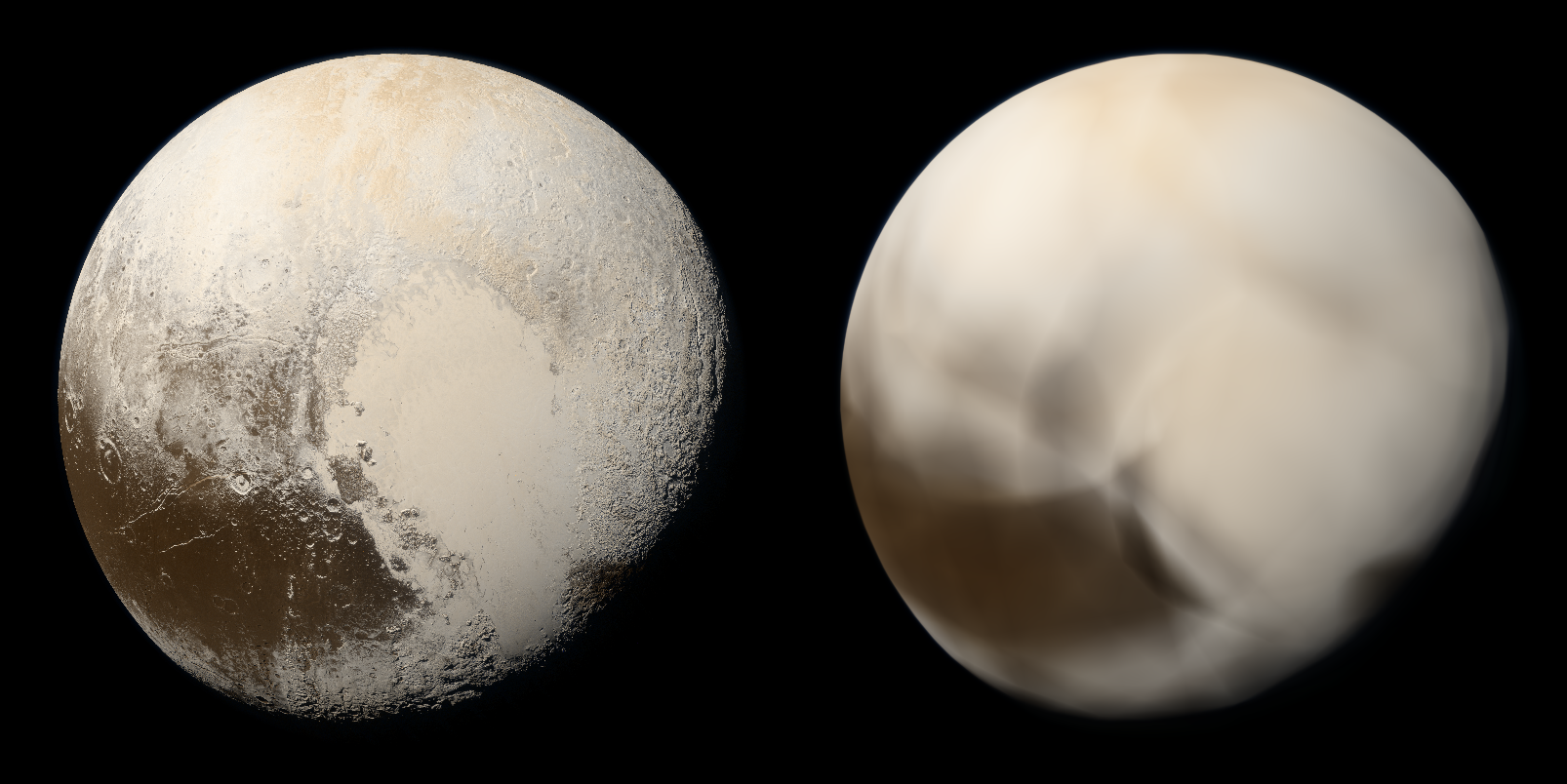

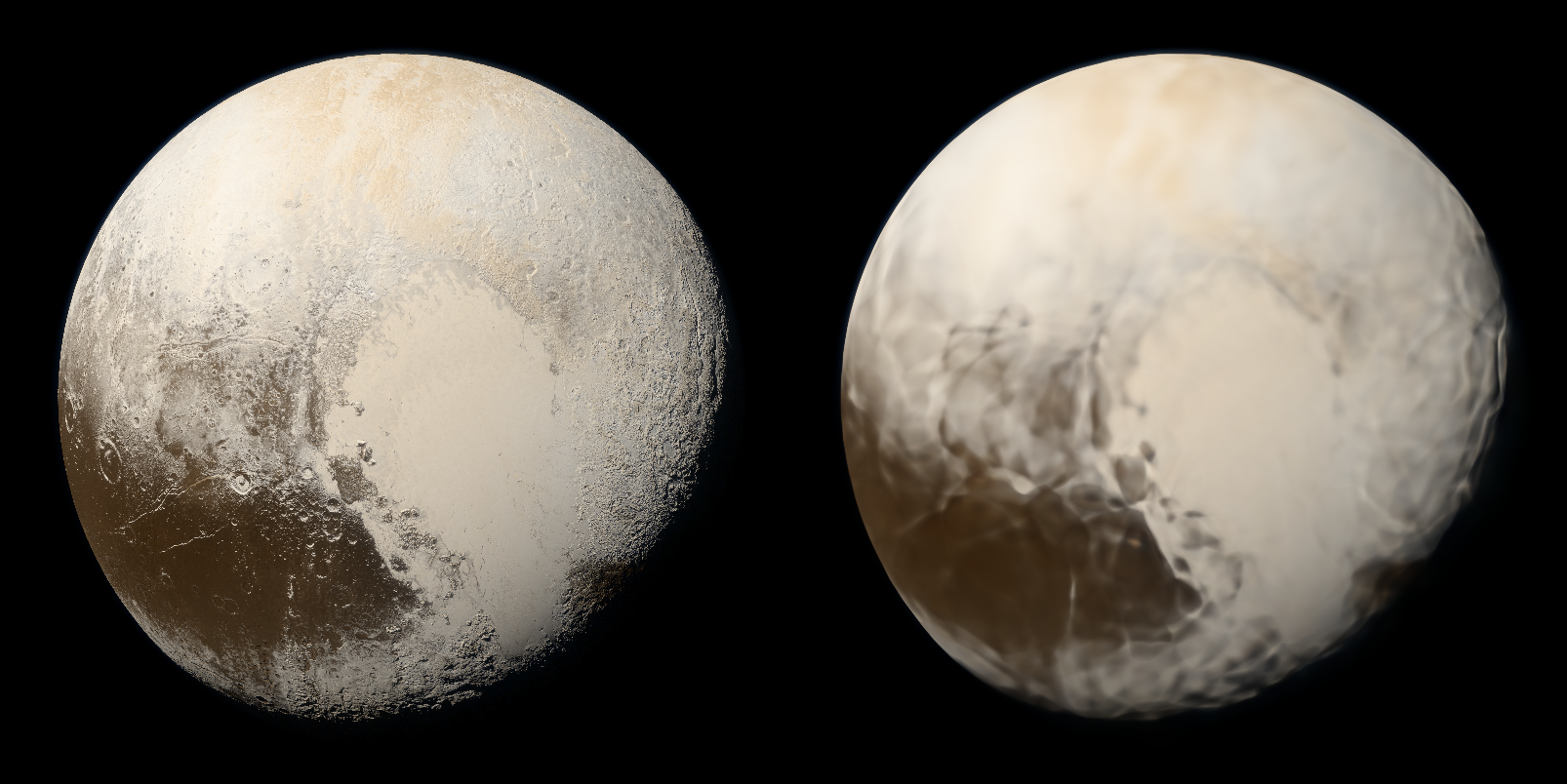

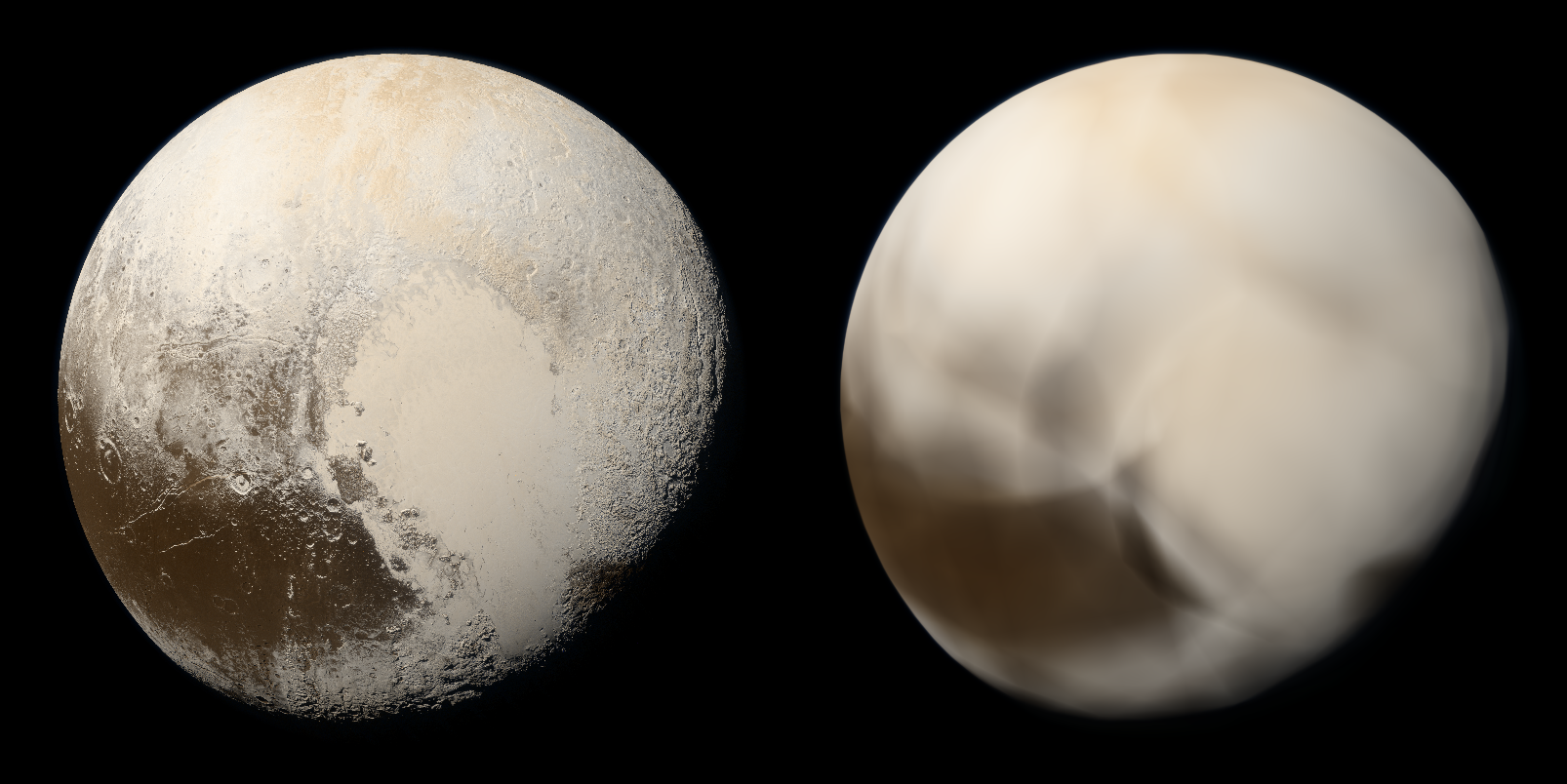

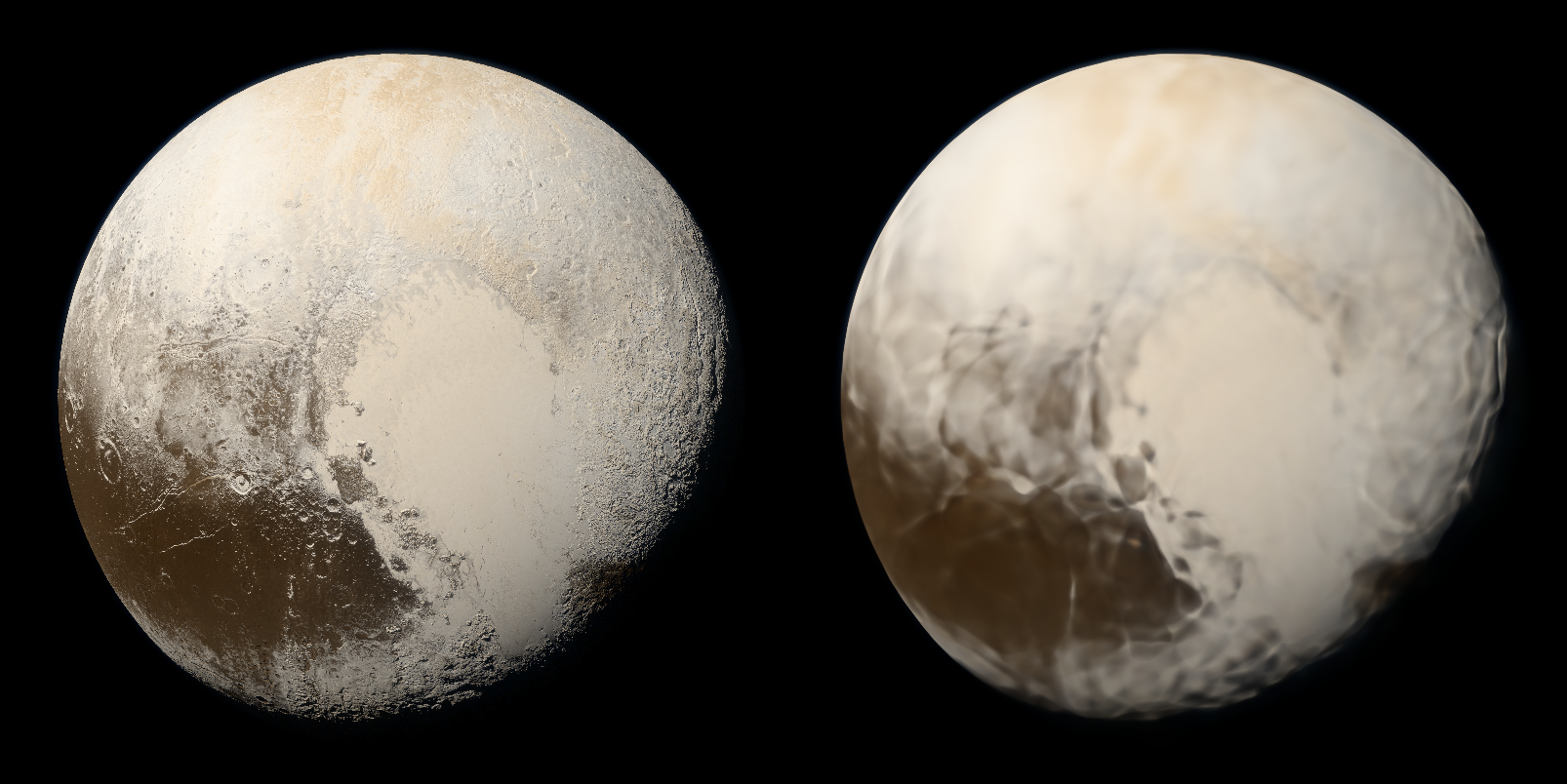

ReLU P.E. (Fourier Mapping)

Without Positional Encoding

|  | Image size 800×800, batch size 1024 | Image size 800×800, batch size 800×800 |
| --- | --- | --- |
| MAX_PSNR | 33.262 | 21.601 |

|  | Image size 800×800, batch size 1024 | Image size 800×800, batch size 800×800 |
| --- | --- | --- |
| MAX_PSNR | 22.405 | 16.957 |
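The MAX_PSNR rows report peak signal-to-noise ratio in dB (higher is better). A minimal sketch of how PSNR is commonly computed from the mean squared error, assuming pixel values scaled to [0, 1]; the exact metric code used for these tables is not shown here, and the helper names are illustrative:

```python
import math


def mse(pred, target):
    """Mean squared error over two equally long sequences of pixel values."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)


def psnr(pred, target, max_value=1.0):
    """PSNR in dB; max_value is the largest possible pixel value (assumed 1.0)."""
    err = mse(pred, target)
    if err == 0.0:
        return math.inf
    return 10.0 * math.log10(max_value ** 2 / err)


# An average squared error of 0.01 corresponds to about 20 dB.
print(psnr([0.5, 0.5], [0.6, 0.4]))
```

Because the scale is logarithmic, every 10 dB corresponds to a tenfold change in mean squared error.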

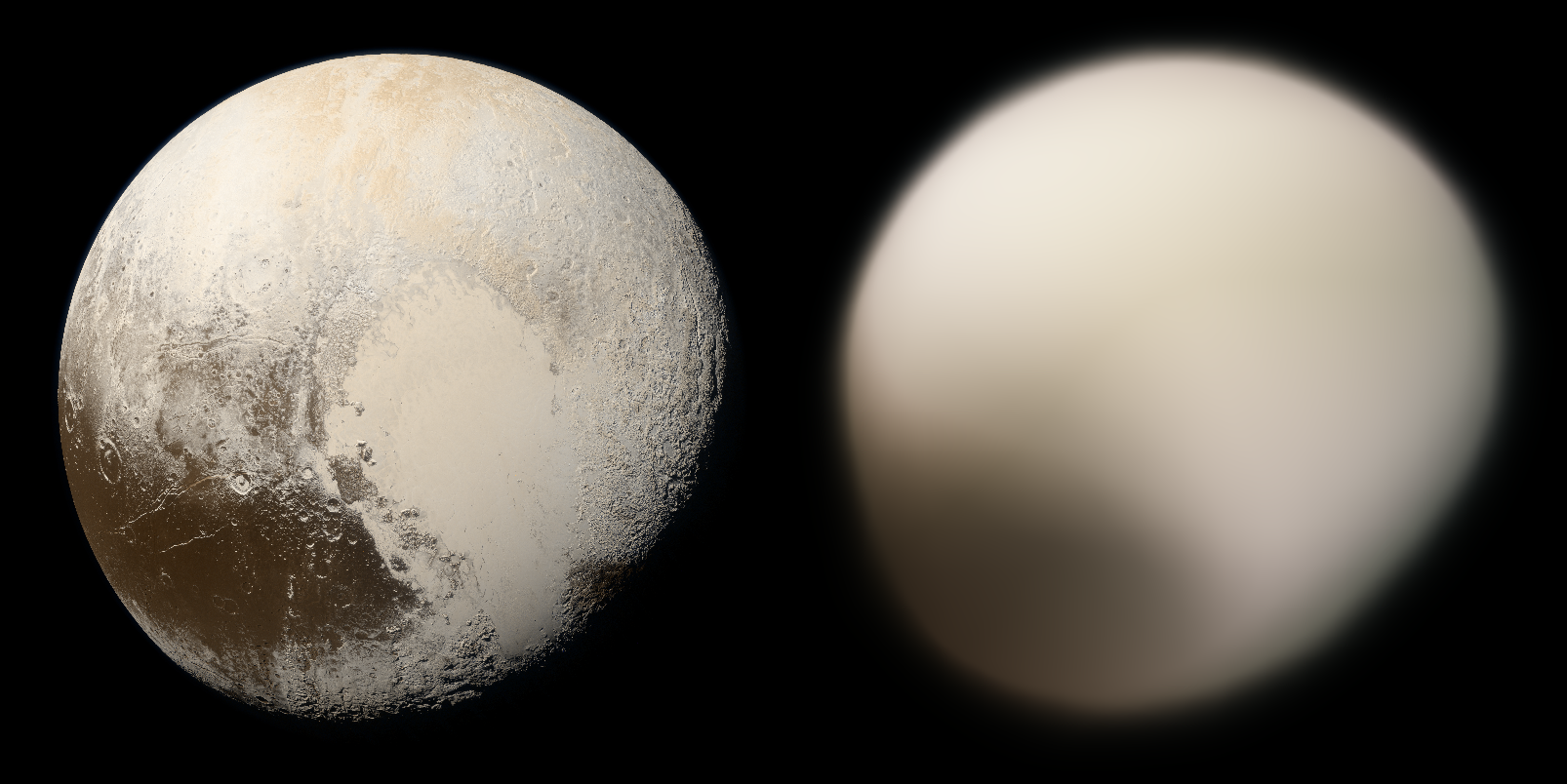

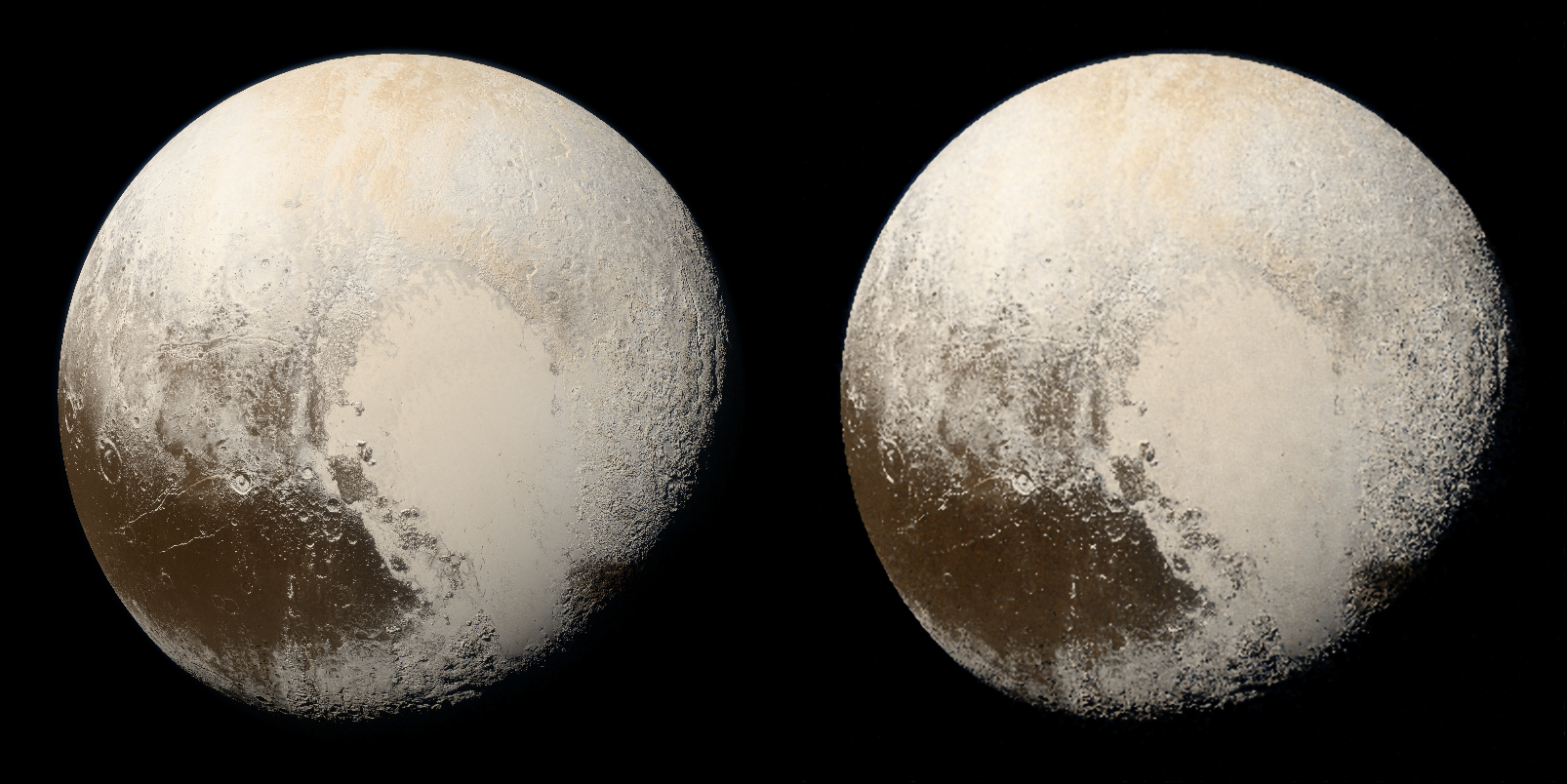

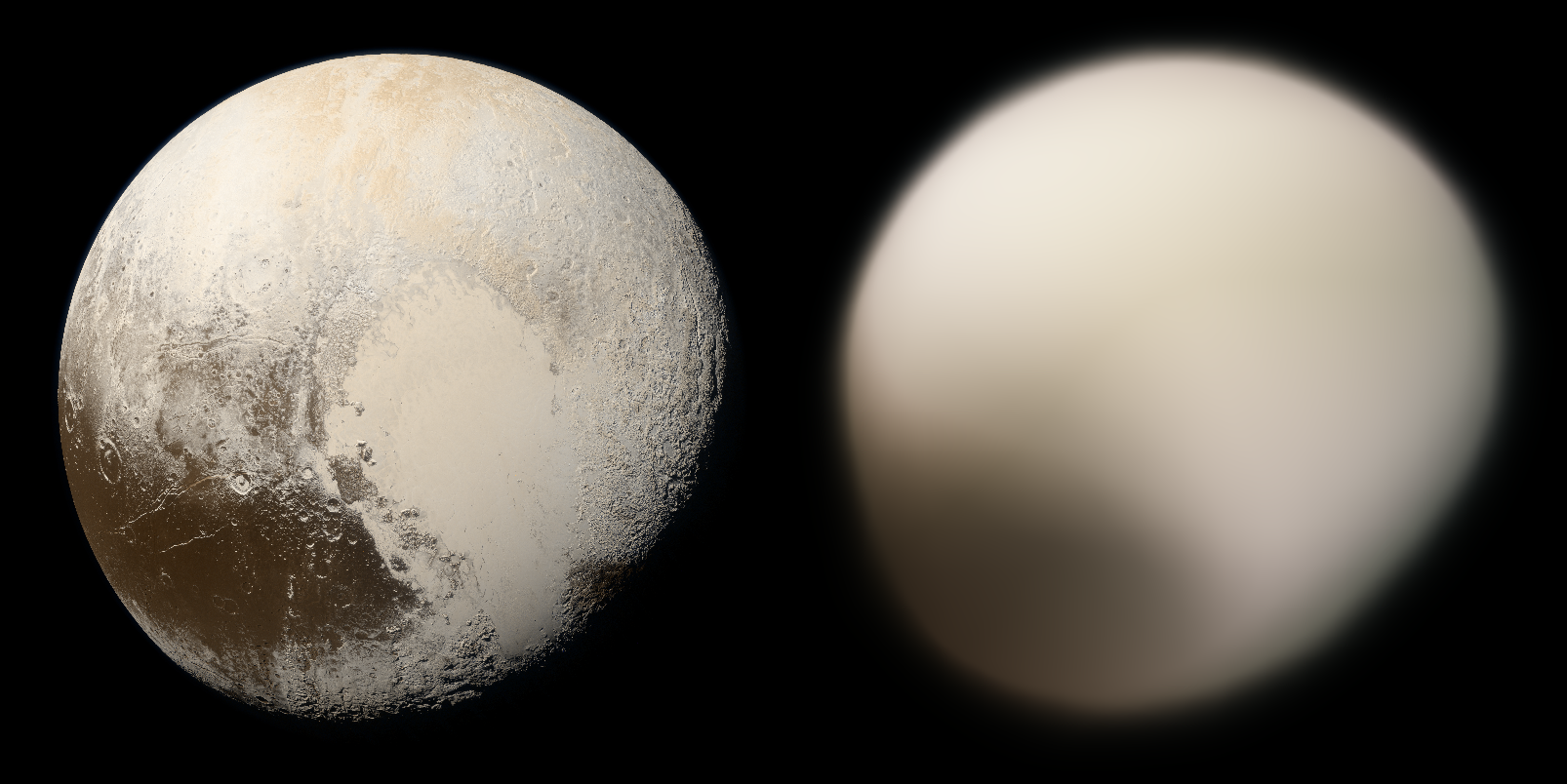

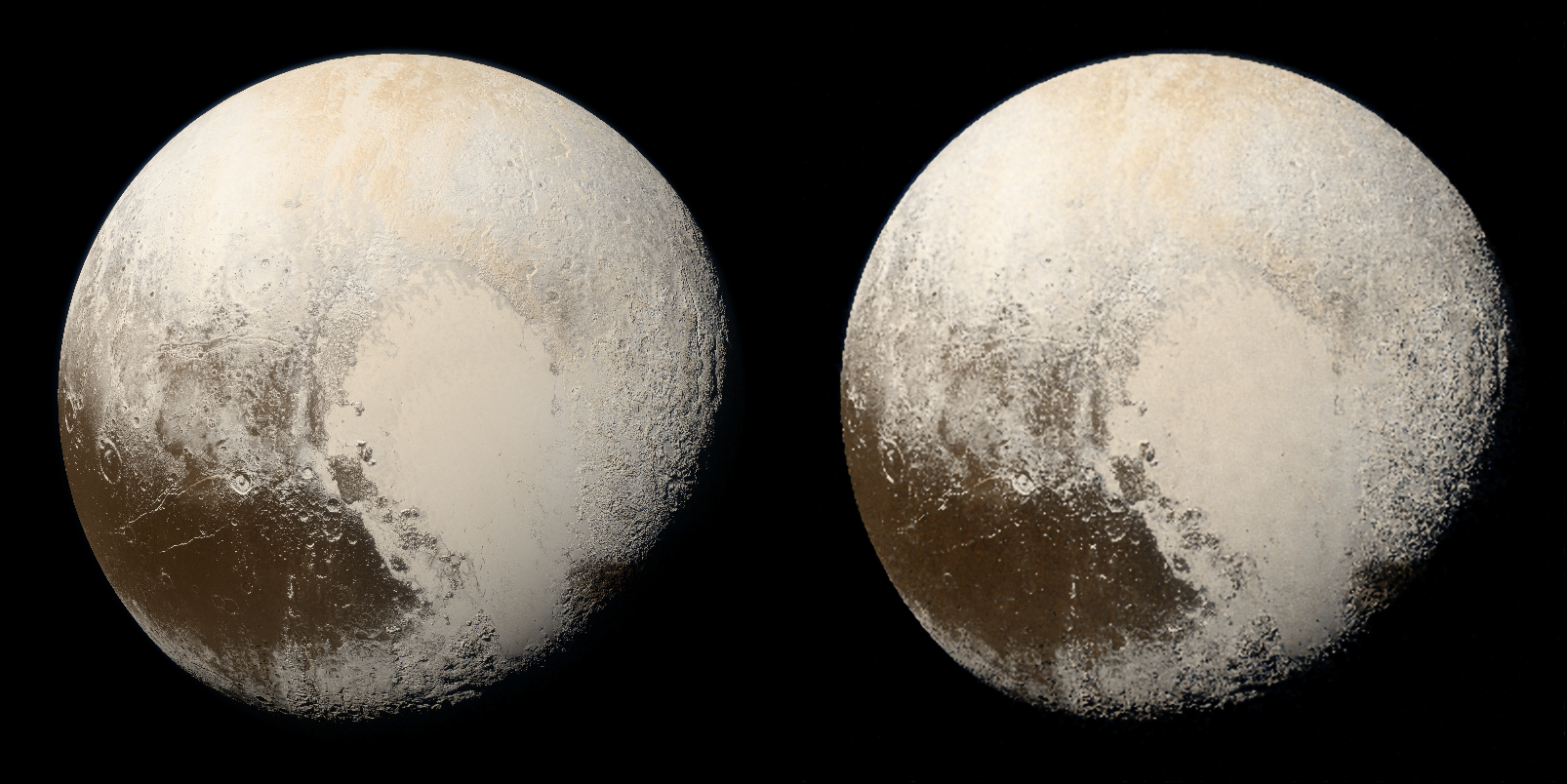

ReLU Fourier Mapping (Gaussian distribution)

|  | Image size 800×800, batch size 1024 | Image size 800×800, batch size 800×800 |
| --- | --- | --- |
| MAX_PSNR | 31.523 | 24.383 |

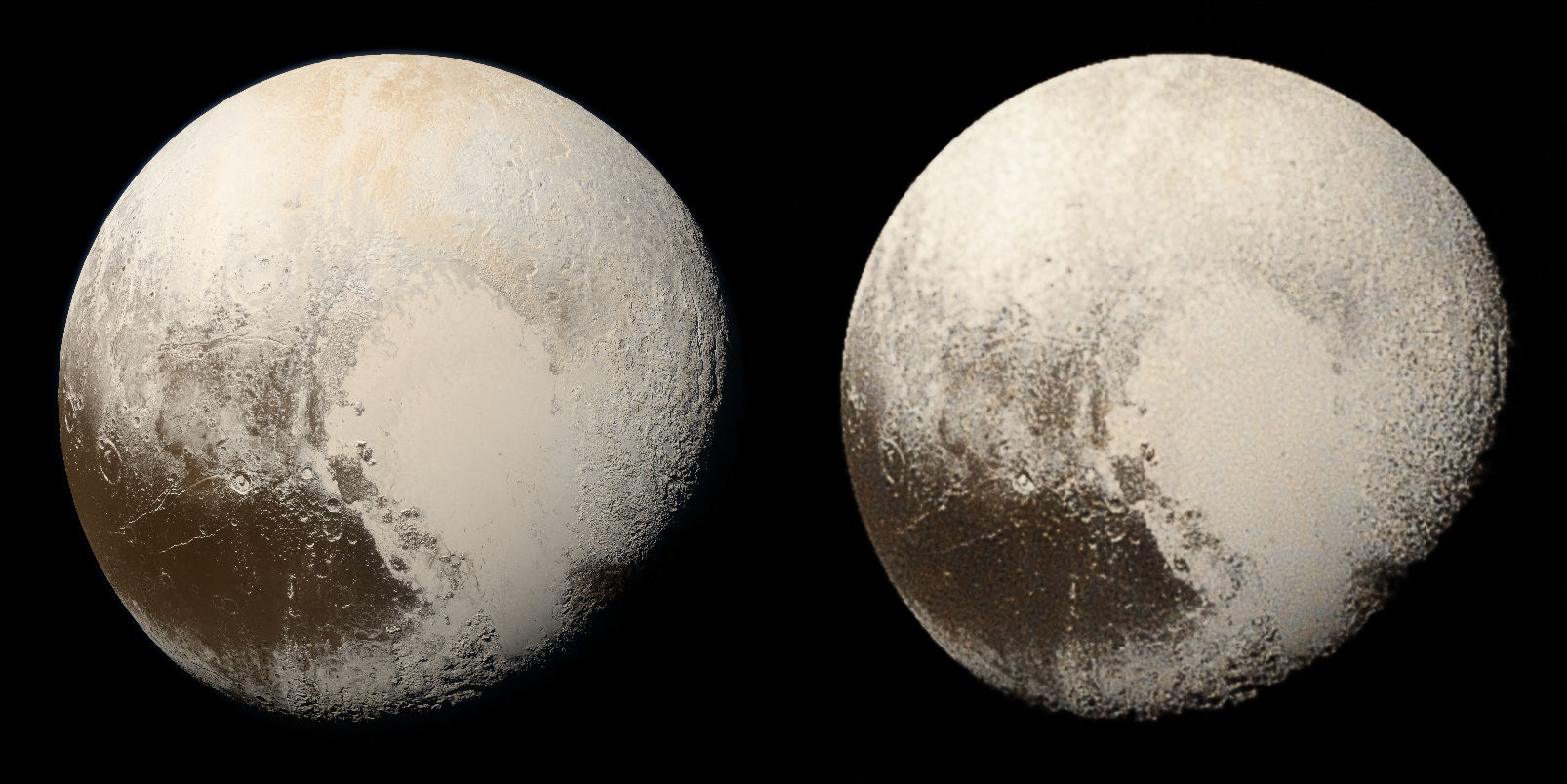

|  | Image size 800×800, batch size 1024 | Image size 800×800, batch size 800×800 |
| --- | --- | --- |
| MAX_PSNR | 30.004 | 25.222 |

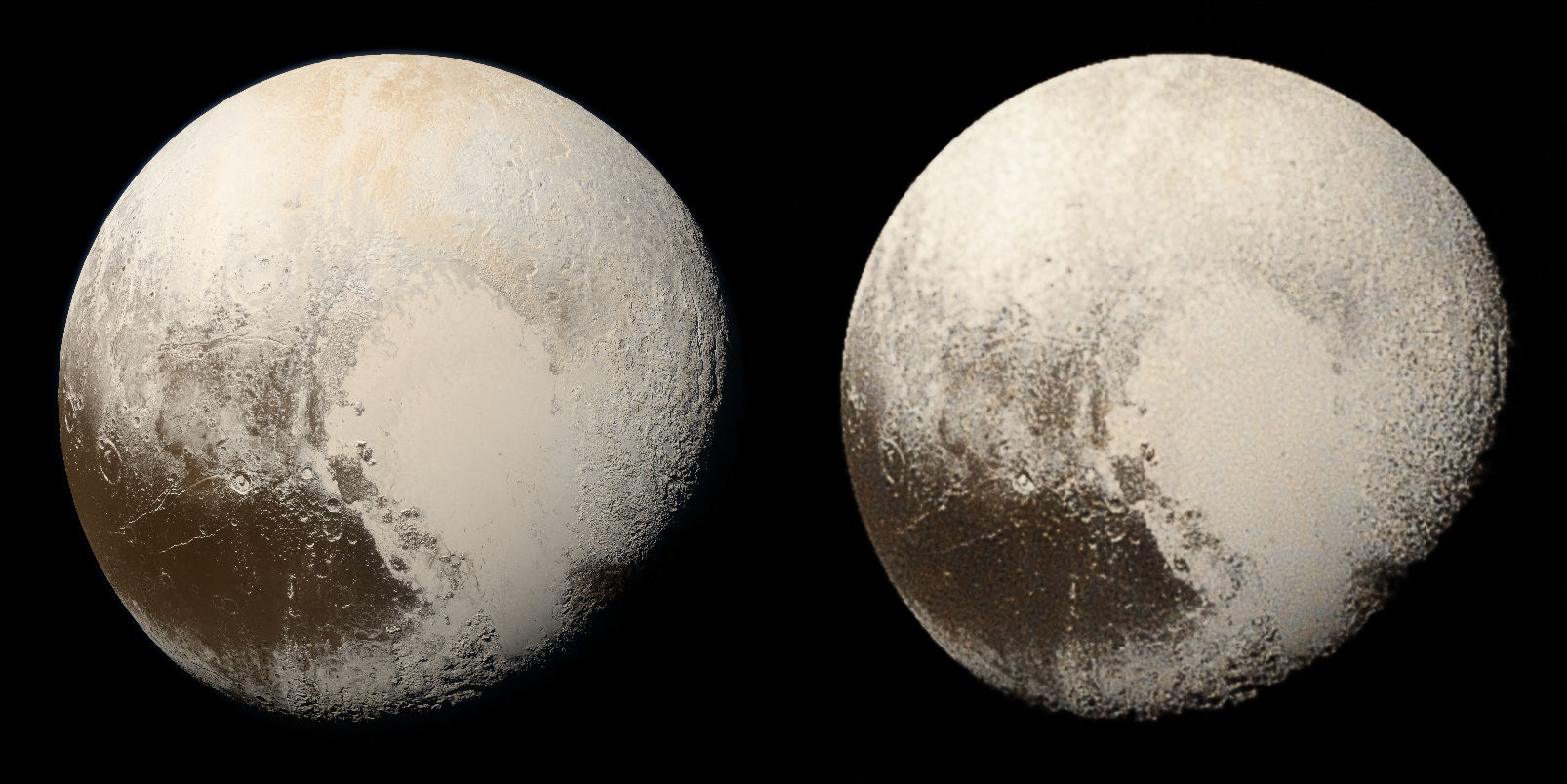

|

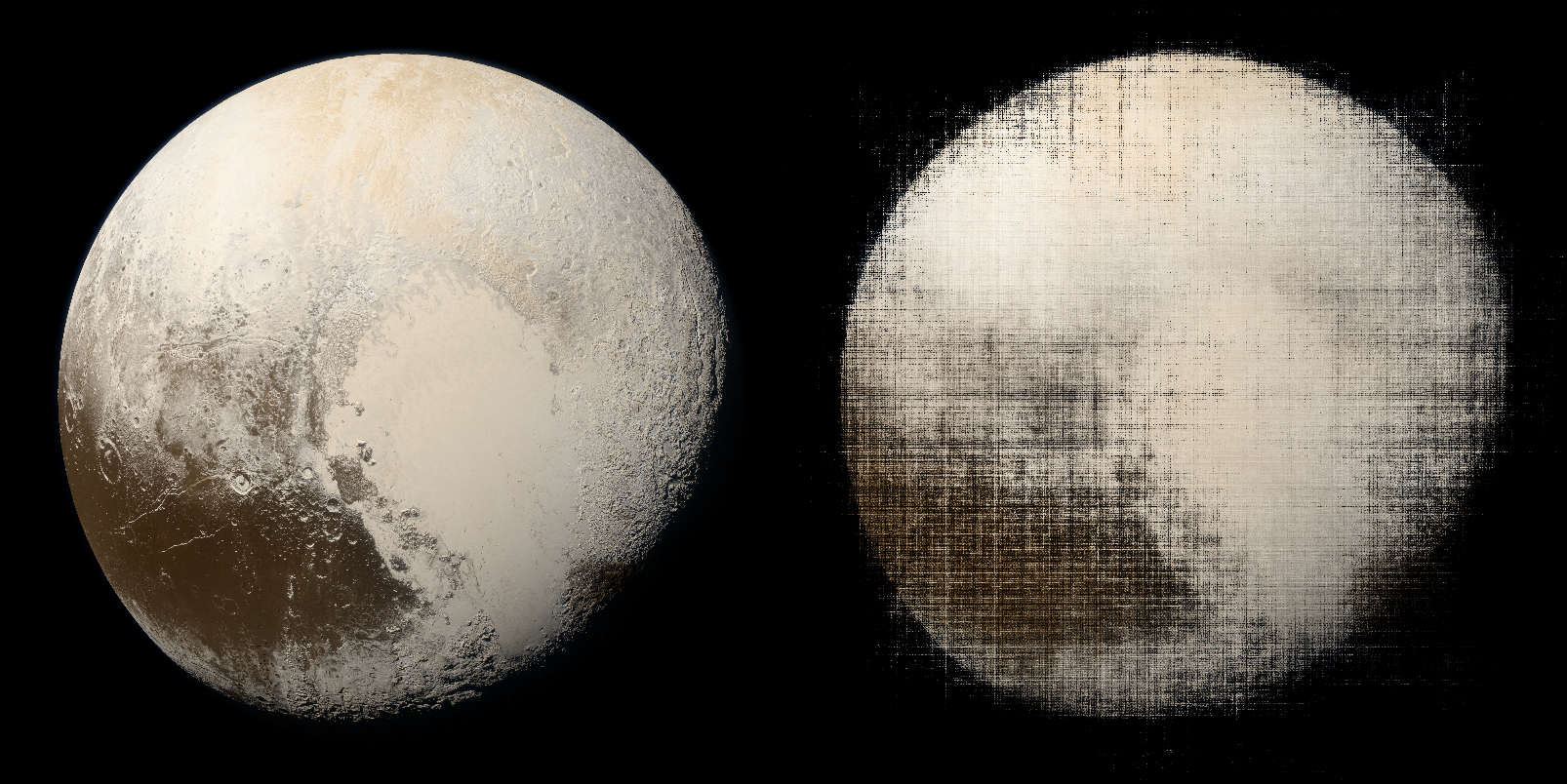

Image size 800*800, batch size 1024 |

Image size 800*800, batch size 800*800 |

|

|

|

| MAX_PSNR |

8.585 |

7.146 |

Siren depends heavily on the quality of its initialization. In this experiment I did not apply any special initialization, so the outputs are poor.
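For context, the initialization proposed in the SIREN paper draws weights from a uniform distribution whose bound depends on the layer's fan-in and on `w0`. The sketch below shows that scheme in plain Python; it was not used in the experiments above, and the function names and the default `w0 = 30` are assumptions made for illustration.

```python
import math
import random


def siren_weight_bound(fan_in, w0=30.0, is_first=False):
    """Bound of the uniform distribution used for a Siren layer's weights."""
    if is_first:
        # First layer: U(-1/fan_in, 1/fan_in)
        return 1.0 / fan_in
    # Later layers: U(-sqrt(6/fan_in)/w0, sqrt(6/fan_in)/w0)
    return math.sqrt(6.0 / fan_in) / w0


def init_siren_layer(fan_in, fan_out, rng, w0=30.0, is_first=False):
    """Return a fan_out x fan_in weight matrix sampled within the Siren bound."""
    bound = siren_weight_bound(fan_in, w0, is_first)
    return [[rng.uniform(-bound, bound) for _ in range(fan_in)] for _ in range(fan_out)]


rng = random.Random(0)
first = init_siren_layer(2, 256, rng, is_first=True)
hidden = init_siren_layer(256, 256, rng)
print(siren_weight_bound(2, is_first=True), siren_weight_bound(256))
```

The hidden-layer bound shrinks with `w0`, which keeps the pre-activations of the sine in a range where deeper layers stay well behaved.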

|  | Image size 800×800, batch size 1024 | Image size 800×800, batch size 800×800 |
| --- | --- | --- |
| MAX_PSNR | 8.767 | 22.326 |

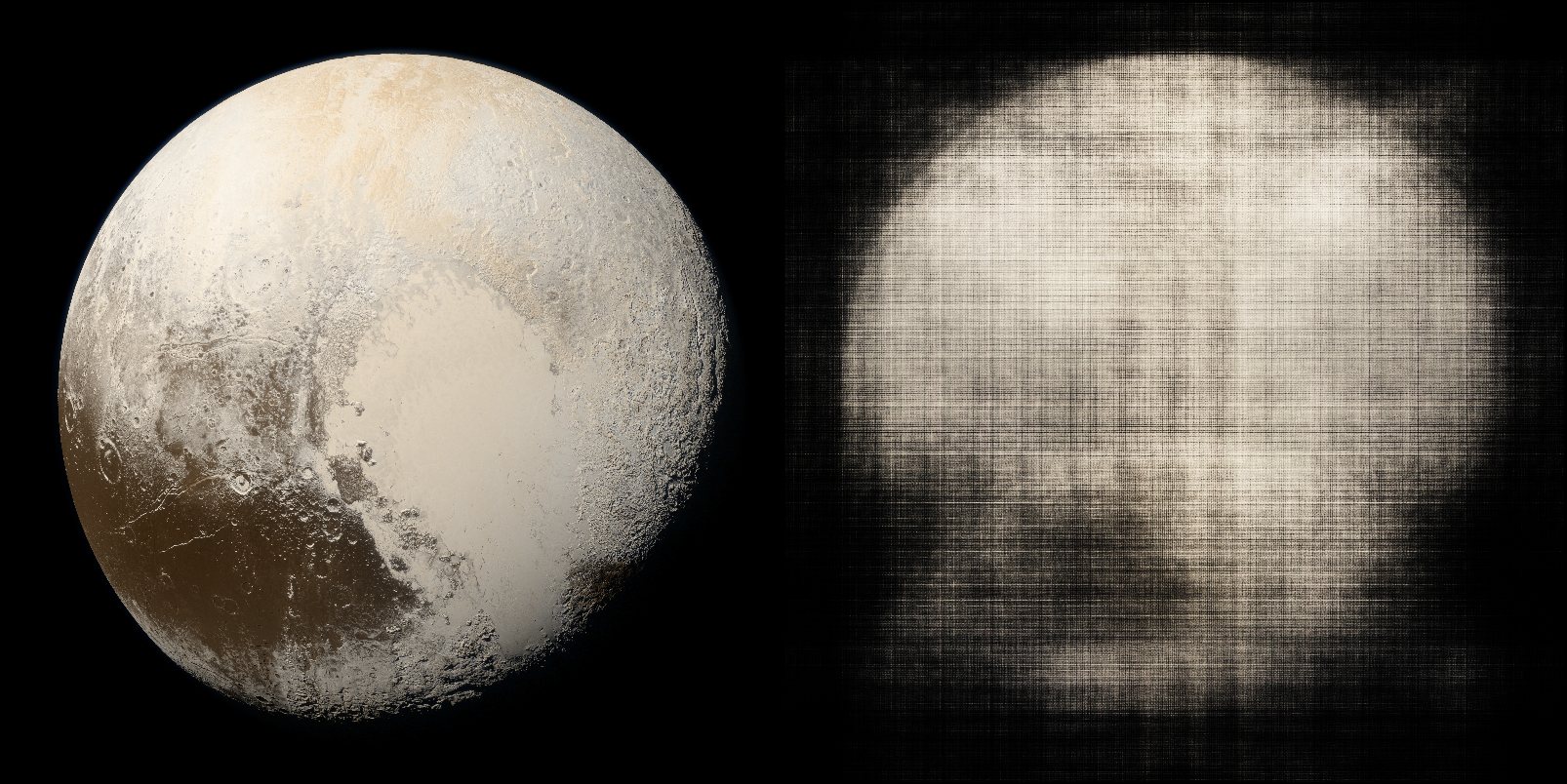

|  | Image size 800×800, batch size 1024 | Image size 800×800, batch size 800×800 |
| --- | --- | --- |
| MAX_PSNR | 8.36 | 8.61 |

|  | Image size 800×800, batch size 1024 | Image size 800×800, batch size 800×800 |
| --- | --- | --- |
| MAX_PSNR | 30.677 | 23.899 |

Super-Gaussian activation

|  | Image size 800×800, batch size 1024 | Image size 800×800, batch size 800×800 |
| --- | --- | --- |
| MAX_PSNR | 8.347 | 9.494 |

|  | Image size 800×800, batch size 1024 | Image size 800×800, batch size 800×800 |
| --- | --- | --- |
| MAX_PSNR | 30.644 | 24.183 |

|  | Image size 800×800, batch size 1024 | Image size 800×800, batch size 800×800 |
| --- | --- | --- |
| MAX_PSNR | 9.589 | 9.259 |

|  | Image size 800×800, batch size 1024 | Image size 800×800, batch size 800×800 |
| --- | --- | --- |
| MAX_PSNR | 24.268 | 9.204 |

|  | Image size 800×800, batch size 1024 | Image size 800×800, batch size 800×800 |
| --- | --- | --- |
| MAX_PSNR | 8.273 | 9.268 |

|  | Image size 800×800, batch size 1024 | Image size 800×800, batch size 800×800 |
| --- | --- | --- |
| MAX_PSNR | 31.283 | 24.006 |

|  | Image size 800×800, batch size 1024 | Image size 800×800, batch size 800×800 |
| --- | --- | --- |
| MAX_PSNR | 8.417 | 8.572 |

|  | Image size 800×800, batch size 1024 | Image size 800×800, batch size 800×800 |
| --- | --- | --- |
| MAX_PSNR | 31.222 | 23.861 |

Multi-Quadratic activation

|  | Image size 800×800, batch size 1024 | Image size 800×800, batch size 800×800 |
| --- | --- | --- |
| MAX_PSNR | 8.448 | 8.846 |

|  | Image size 800×800, batch size 1024 | Image size 800×800, batch size 800×800 |
| --- | --- | --- |
| MAX_PSNR | 30.355 | 23.944 |

Thanks to kwea123 for his wonderful live stream and his repo.