Goal: analyze the distribution of outcomes in a badminton tournament.

Approach: apply Bayesian data analysis on historical data of badminton tournaments. The estimand of interest is the probability of a certain outcome. The modelling of a match outcome will be explained more in section 2.

Implementation:

- Start with some naive assumption of the estimand, in order to choose the model later

- Collect and preprocess data

- Decide on prior choices and models

- Do stan analysis on each models

- Model comparision (using PSIS-LOO)

- Do posterior predictive comparision between models

- Conclusion, possible improvements

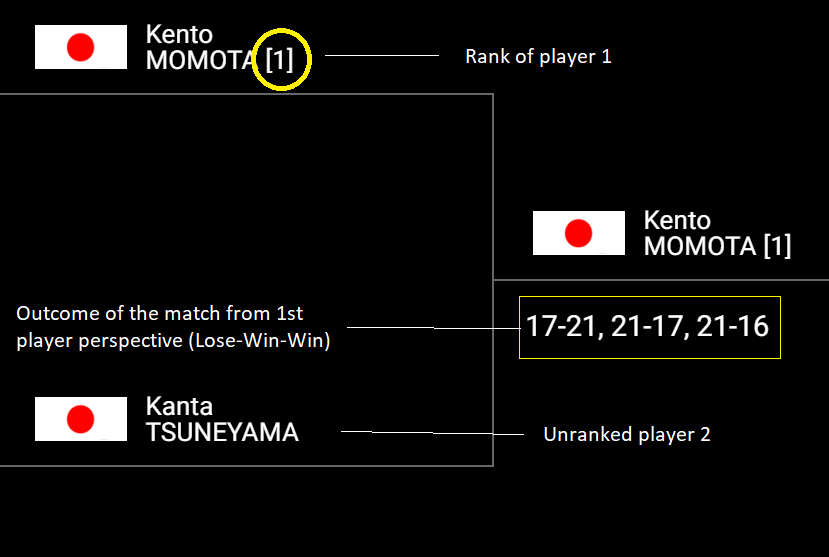

In one tournament, there are 8 seed players and some unranked players. To discretize the ranking spread, we chose 12th as the rank for all unranked players. The spread is calculated as follows:

Spread (from 1st player perspective) = Rank(2nd player) - Rank(1st player)

E.g.

- Spread(from 1st rank player to 8th rank player) = 8 - 1 = 7

- Spread(from 2nd rank player to unrank player) = 12 - 2 = 10

- Spread(from unrank player to 3rd rank player) = 3 - 12 = -9

The discrete space for ranking spread is then [-11,-10,-9,...,9,10,11]

A match (which has at most 3 games) has 6 possible outcomes:

- Lose Lose -> Lose

- Lose Win Lose -> Lose

- Win Lose Lose -> Lose

- Lose Win Win -> Win

- Win Lose Win -> Win

- Win Win -> Win

To discretize this parameter, we map the outcome of a match to [1,2,3,4,5,6] in terms of win degree (i.e. win degree 1 is the worst, and win degree 6 is the best).

For the match below, ranking spread is 11, win degree is 4

Unless stated otherwise, all information in the data are from 1st player perspective

An observation of a match includes 2 pieces of information:

- ranking spread

- win degree

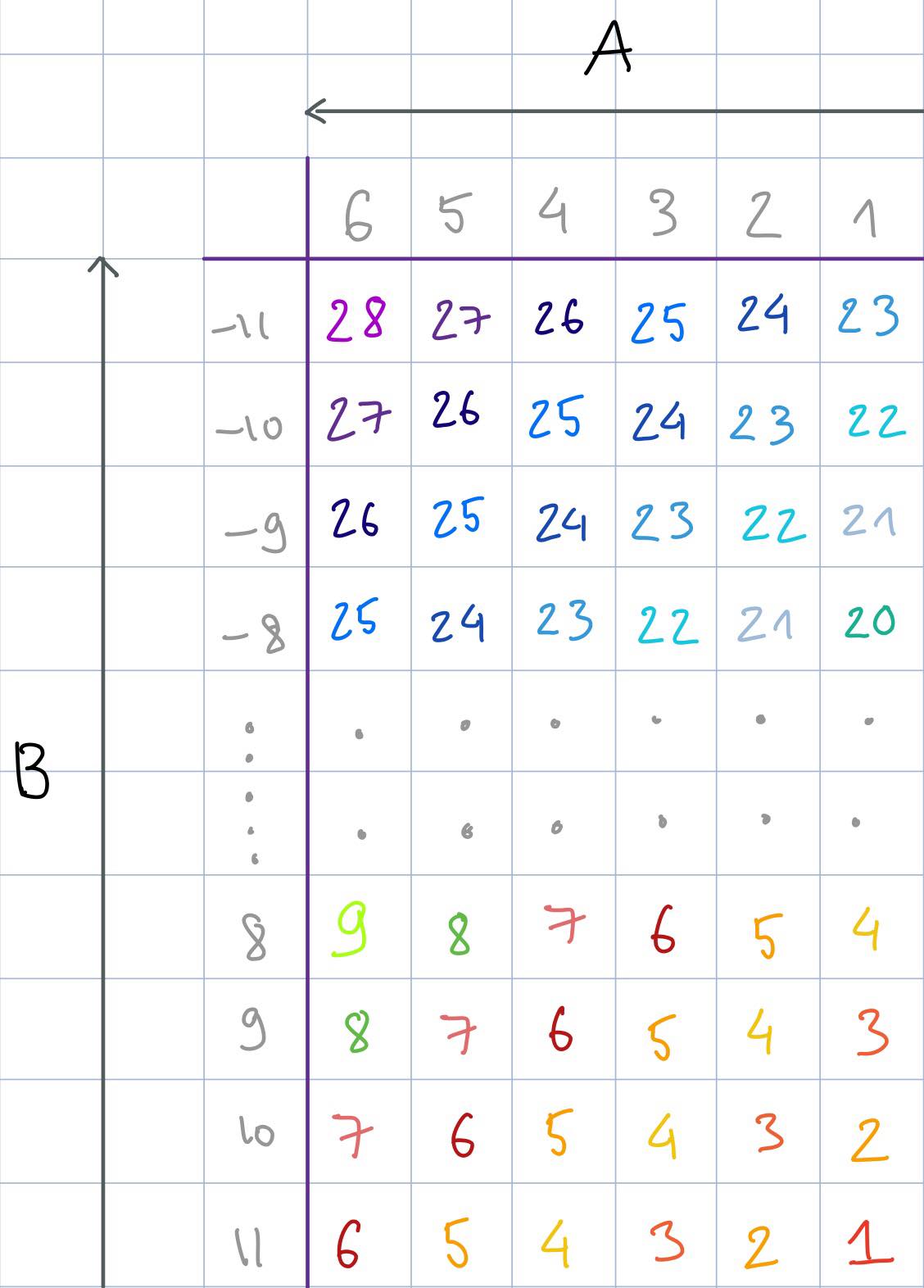

To formulate the observations as a one dimensional space collection, we need to reduce the matrix 23x6 (23 different spreads, 6 different win degrees) of all possible raw observations. The intuition of the reduction is as follows:

- With the same ranking spread, higher win degree correlates to higher value (see arrow A in image below)

- With the same win degree, lower ranking spread correlates to higher value (see arrow B in image below)

- Step between value is 1

With the given constraint, we will have 28 possible values of observation from [1,28]. The mapping is as follow (columns are win degrees and rows are ranking spreads)

The problem analysis explores the distribution of the observations, especially concentrating on the predictive distribution of the new tournament. Furthermore, we will try to analyze the affect of the different models and prior choices.

The dataset is collected from Badminton World Federation (BWF) tournament database using Scrapy crawler. After collecting, the data is preprocessed as stated in the previous section. In the end, the format of data is similar to the factory data assignments. A peek of the data:

show_first_rows_of_data()| Tournament 1 | Tournament 2 | Tournament 3 | Tournament 4 | Tournament 5 | |

|---|---|---|---|---|---|

| Match 1 | 6.0 | 17.0 | 16.0 | 6.0 | 1.0 |

| Match 2 | 15.0 | 15.0 | 17.0 | 13.0 | 17.0 |

| Match 3 | 16.0 | 7.0 | 4.0 | 17.0 | 5.0 |

| Match 4 | 15.0 | 13.0 | 17.0 | 17.0 | 13.0 |

| Match 5 | 19.0 | 20.0 | 20.0 | 15.0 | 17.0 |

show_summary_of_data()| Tournament 1 | Tournament 2 | Tournament 3 | Tournament 4 | Tournament 5 | |

|---|---|---|---|---|---|

| count | 67.000000 | 67.000000 | 67.000000 | 67.000000 | 67.000000 |

| mean | 13.776119 | 14.388060 | 13.746269 | 14.880597 | 14.164179 |

| std | 5.746727 | 5.635266 | 5.329572 | 5.878885 | 5.703792 |

| min | 4.000000 | 3.000000 | 4.000000 | 3.000000 | 1.000000 |

| 25% | 9.000000 | 10.000000 | 8.000000 | 10.000000 | 10.000000 |

| 50% | 15.000000 | 15.000000 | 15.000000 | 16.000000 | 16.000000 |

| 75% | 17.000000 | 18.000000 | 17.000000 | 17.000000 | 17.000000 |

| max | 25.000000 | 27.000000 | 26.000000 | 27.000000 | 27.000000 |

We decided to use two different priors:

- Inverse gamma is chosen on variance because it is the conjugate prior to normal likelihood and it has a closed form solution for the outcome of the posterior

- Uniform is chosen as weak prior to observe how sensitive is outcome in regards the prior and the data input

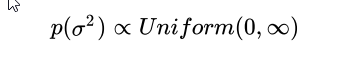

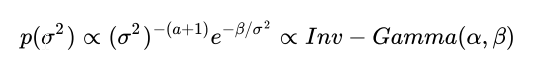

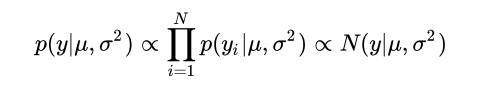

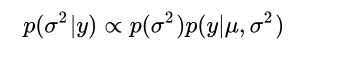

In normal distribution where mu is known and sigma is unknown, the marginal posterior distribution p(sigma^2 | y) , can be computed as described below. The posterior distribution is computed using two different priors, whereas the first is an uniformative (uniform) and the second an informative (inverse gamma) prior.

Priors:

Uniform prior

Inverse gamma prior

where α and β are the shape and scale parameters. Our prior assumption is that variance will be relatively small but we are not sure how small it is, and our guess is around [1,2]. The pdf of inverse gamma with α=1 and β=1 is a good fit for our assumption.

Likelihood:

where mu is known.

Posterior:

Since one of the objective is to predict the distribution of a new tournament, we will use pooled and hierarchical model. The separate model is excluded because it handles the tournaments uniquely without having any common parameters which could be used to predict the new tournament.

In the pooled model the mean and the variance is computed from the combined data of all the tournaments and there is no distinction between different tournaments. This means that also the new tournament will have similar distibution as the predictive distribution of the tournaments.

In the hierarchical model each tournament is handled separately having own mean and common standard deviation. Furthermore, all the means are controlled by common hyperparameters (

Then based on the prior choices, we have 4 different models:

- pooled with uniform prior

- pooled with inverse gamma prior for variance

- hierarchical with uniform prior

- hierarchical with inverse gamma prior for variance

For each model, we will show the Stan model and convergence diagnostic.

The Stan model is fitted using Stan's default parameters (4 chains, 1000 warmup iterations, 1000 sampling iteration, ending up to 4000 samples and 10 as maximum tree depth). In addition, to avoid false positive conclusion about divergences, the adapt_delta value is set to 0.9. This means that the fitting uses larger target acceptance probability and therefore all the divergences can be seen. If the resulting value is still 0 after this, we can verify that there are no divergences. If not, the divergences could be further analyzed by increasing adapt_delta.

Besides divergences, the convergence diagnostic includes a short discussion about

The stan code of the model:

data {

int<lower=0> N; // Number of observations

vector[N] y; // N observations for J tournaments

}

parameters {

real mu; // Common mean

real<lower=0> sigma; // Common std

}

model {

y ~ normal(mu, sigma);// Model for fitting data using tournament specific mu and common std

}

generated quantities {

vector[N] log_lik;

real ypred;

ypred = normal_rng(mu, sigma); //Prediction of tournament

for (n in 1:N)

log_lik[n] = normal_lpdf(y[n] | mu, sigma); //Log-likelihood

}

After compiling the model and fitting the combined data of the tournaments, the diagnostic of the fit was examined:

| Diagnostic | Result |

|---|---|

| All |

OK |

| Low |

OK |

| Divergences is 0 | OK |

All the results for pooled uniform prior model fitting are good. The full fit can be found in the Attachment 1 and a shorter summary is shown below.

# Fit pooled uniform model

pool_uni_df, pool_uni_fit = compute_model(r'stan_code/pool_uniform_prior.stan', pooled_data_model)

# Print summary of the fit

print_compact_fit(pool_uni_df)Using cached StanModel

| mean | se_mean | sd | 2.5% | 25% | 50% | 75% | 97.5% | n_eff | Rhat | |

|---|---|---|---|---|---|---|---|---|---|---|

| mu | 14.1935 | 0.00603298 | 0.314179 | 13.5613 | 13.9842 | 14.2001 | 14.409 | 14.8204 | 2712 | 1.00109 |

| sigma | 5.66282 | 0.00441324 | 0.224035 | 5.24378 | 5.50642 | 5.65253 | 5.80839 | 6.12739 | 2577 | 1.00018 |

| log_lik[0] | -3.70522 | 0.00177279 | 0.091105 | -3.89356 | -3.76527 | -3.70164 | -3.64204 | -3.53086 | 2641 | 1.00078 |

| log_lik[1] | -2.66381 | 0.000781838 | 0.0394576 | -2.7439 | -2.68967 | -2.66273 | -2.63632 | -2.58826 | 2547 | 1.00023 |

| log_lik[2] | -2.70475 | 0.000794411 | 0.0395853 | -2.78604 | -2.73049 | -2.70434 | -2.67761 | -2.62968 | 2483 | 1.00032 |

| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |

| log_lik[332] | -2.70475 | 0.000794411 | 0.0395853 | -2.78604 | -2.73049 | -2.70434 | -2.67761 | -2.62968 | 2483 | 1.00032 |

| log_lik[333] | -2.81336 | 0.000768998 | 0.0414903 | -2.89455 | -2.84142 | -2.81289 | -2.78428 | -2.73293 | 2911 | 1.00091 |

| log_lik[334] | -3.46419 | 0.001425 | 0.0745643 | -3.61728 | -3.51306 | -3.46244 | -3.41316 | -3.32091 | 2738 | 1.00092 |

| ypred | 14.2868 | 0.0901683 | 5.70274 | 3.42566 | 10.3648 | 14.2842 | 18.1957 | 25.5974 | 4000 | 0.999995 |

| lp__ | -746.025 | 0.0248809 | 1.00668 | -748.748 | -746.425 | -745.714 | -745.295 | -745.018 | 1637 | 0.999565 |

# Print additional checking of the fit

print_compact_fit_checking(pool_uni_fit, pool_uni_df)Maximum value of the Rhat:

1.0012218111524749

Divergences:

0.0 of 4000 iterations ended with a divergence (0.0%)

The stan code of the model:

data {

int<lower=0> N; // Number of observations

vector[N] y; // N observations for J tournaments

real<lower=0.1> alpha; //Shape

real<lower=0.1> beta; //Scale

}

parameters {

real mu; // Common mean

real<lower=0> sigmaSq; // Common var

}

transformed parameters {

real<lower=0> sigma;

sigma <- sqrt(sigmaSq);

}

model {

sigmaSq ~ inv_gamma(alpha,beta); // Prior

y ~ normal(mu, sigma); // Fitting of the model

}

generated quantities {

vector[N] log_lik;

real ypred;

ypred = normal_rng(mu, sigma); // Prediction of tournament

for (n in 1:N)

log_lik[n] = normal_lpdf(y[n] | mu, sigma); //Log-likelihood

}

The same procedure is followed here (as in the previous section) and similar results were obtained:

| Diagnostic | Result |

|---|---|

| All |

OK |

| Low |

OK |

| Divergences is 0 | OK |

All the results for pooled inverse gamma prior model fitting are good. The full fit can be found in the Attachment 2 and a shorter summary is shown below.

# Fit pooled inverse gamma model

pool_inv_df, pool_inv_fit = compute_model(r'stan_code/pool_inverse_gamma_prior.stan', pooled_data_model)

# Print summary of the fit

print_compact_fit(pool_inv_df)Using cached StanModel

| mean | se_mean | sd | 2.5% | 25% | 50% | 75% | 97.5% | n_eff | Rhat | |

|---|---|---|---|---|---|---|---|---|---|---|

| mu | 14.1912 | 0.00659562 | 0.311324 | 13.5848 | 13.9808 | 14.1944 | 14.3957 | 14.8102 | 2228 | 1.00237 |

| sigmaSq | 31.819 | 0.0465451 | 2.42705 | 27.4953 | 30.0994 | 31.688 | 33.3725 | 36.8217 | 2719 | 1.00037 |

| sigma | 5.63676 | 0.0040921 | 0.21424 | 5.2436 | 5.48629 | 5.62921 | 5.77689 | 6.06809 | 2741 | 1.00037 |

| log_lik[0] | -3.70983 | 0.00189007 | 0.0933245 | -3.89964 | -3.77044 | -3.7058 | -3.64535 | -3.53664 | 2438 | 1.00087 |

| log_lik[1] | -2.65936 | 0.000735954 | 0.0384743 | -2.73647 | -2.68499 | -2.65882 | -2.63316 | -2.58596 | 2733 | 0.999909 |

| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |

| log_lik[332] | -2.70068 | 0.000759725 | 0.039352 | -2.78049 | -2.72698 | -2.70021 | -2.67379 | -2.62523 | 2683 | 0.999668 |

| log_lik[333] | -2.81015 | 0.000869736 | 0.0394174 | -2.88917 | -2.83559 | -2.80985 | -2.78294 | -2.7354 | 2054 | 1.00321 |

| log_lik[334] | -3.46668 | 0.0015697 | 0.0761101 | -3.62117 | -3.51465 | -3.46372 | -3.41446 | -3.3255 | 2351 | 1.00139 |

| ypred | 14.2633 | 0.08992 | 5.61407 | 3.23815 | 10.4847 | 14.328 | 18.0029 | 25.224 | 3898 | 0.999202 |

| lp__ | -751.202 | 0.0262385 | 1.01486 | -753.917 | -751.573 | -750.898 | -750.491 | -750.235 | 1496 | 1.00201 |

# Print additional checking of the fit

print_compact_fit_checking(pool_inv_fit, pool_inv_df)Maximum value of the Rhat:

1.00330613987631

Divergences:

0.0 of 4000 iterations ended with a divergence (0.0%)

The stan code of the model:

data {

int<lower=0> N; // Number of observations

int<lower=0> J; // Number of tournaments

matrix[N,J] y; // N observations for J tournaments

}

parameters {

real mu0; // Common mu for each J tournament's mu

real<lower=0> sigma0; // Common std for each J tournament's mu

real<lower=0> sigma; // Common std between tournaments

real mu_tilde[J]; // Tournament specific mu

}

model {

for (j in 1:J)

mu[j] ~ normal(mu0, sigma0); // Model for computing tournament specific mu from common mu0 and sigma0

for (j in 1:J)

y[:,j] ~ normal(mu[j], sigma); // Model for fitting data using tournament specific mu and common std

}

generated quantities {

matrix[N,J] log_lik;

real ypred[J];

real mu_new;

real ypred_new;

for (j in 1:J)

ypred[j] = normal_rng(mu[j], sigma); // Predictive distibutions of all the tournaments

mu_new = normal_rng(mu0, sigma0); // Next posterior distribution from commonly learned mu0 and sigma0

ypred_new = normal_rng(mu_new, sigma); // Next predictive distibutions of new tournament

for (j in 1:J)

for (n in 1:N)

log_lik[n,j] = normal_lpdf(y[n,j] | mu[j], sigma); //Log-likelihood

}

The same procedure is followed here (as in the previous section) with minor change. The data used for fitting is a matrix where columns are the tournaments and rows are the matches in the tournaments. The diagnostic results are:

| Diagnostic | Result |

|---|---|

| All |

NO lp__ is 1.4 |

| Low |

NO |

| Divergences is 0 |

NO 8% of the target posterior was not explored |

Because none of the conditions were fulfilled, in the next step we will try to improve the results by reducing the accuracy of the simulations by increasing the value of the adapt_delta parameter.

The results of the attempt 1 can be seen below.

# Fit hierarchical uniform model

hier_uni_df, hier_uni_fit = compute_model(r'stan_code/hier_uniform_prior.stan', hierarchical_data_model)

# Print the summary of the fit

print_compact_fit(hier_uni_df)Using cached StanModel

| mean | se_mean | sd | 2.5% | 25% | 50% | 75% | 97.5% | n_eff | Rhat | |

|---|---|---|---|---|---|---|---|---|---|---|

| mu0 | 14.222 | 0.0306316 | 0.518028 | 13.2403 | 13.9695 | 14.264 | 14.4845 | 15.0738 | 286 | 1.0193 |

| sigma0 | 0.560246 | 0.0731073 | 0.759754 | 0.034287 | 0.172624 | 0.381537 | 0.709618 | 2.13192 | 108 | 1.03272 |

| mu[0] | 14.1029 | 0.0484593 | 0.477268 | 13.0482 | 13.8036 | 14.1453 | 14.4149 | 14.938 | 97 | 1.03988 |

| mu[1] | 14.2979 | 0.0144251 | 0.458663 | 13.3891 | 14.0025 | 14.3344 | 14.5531 | 15.27 | 1011 | 1.0106 |

| mu[2] | 14.0964 | 0.0545487 | 0.496962 | 13.0192 | 13.7931 | 14.1295 | 14.4328 | 14.9691 | 83 | 1.044 |

| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |

| ypred[3] | 14.4326 | 0.0903994 | 5.6887 | 3.38663 | 10.585 | 14.451 | 18.2019 | 25.6342 | 3960 | 0.999456 |

| ypred[4] | 14.3471 | 0.0899178 | 5.6869 | 3.39243 | 10.5848 | 14.3392 | 18.2295 | 25.5183 | 4000 | 1.00017 |

| mu_new | 14.208 | 0.0255003 | 1.1708 | 12.3481 | 13.8682 | 14.2873 | 14.5676 | 15.8755 | 2108 | 1.00535 |

| ypred_new | 14.2926 | 0.0919394 | 5.81476 | 2.84883 | 10.4361 | 14.4064 | 18.2223 | 25.4268 | 4000 | 1.00122 |

| lp__ | -743.541 | 1.47587 | 4.89491 | -752.243 | -746.688 | -744.196 | -741.133 | -732.58 | 11 | 1.42308 |

# Print additional checking of the fit

print_compact_fit_checking(hier_uni_fit, hier_uni_df)Maximum value of the Rhat:

1.423080255915649

Divergences:

308.0 of 4000 iterations ended with a divergence (7.7%)

Try running with larger adapt_delta to remove the divergences

After changing the accuracy of the simulations from 0.9 to 0.93, the data is re-fit and following results are gained:

| Diagnostic | Result |

|---|---|

| All |

OK |

| Low |

OK |

| Divergences is 0 |

NO still 2% of the target posterior was not explored |

With these results we can verify that the hierarchical uniform prior model fitting is almost successful. Although, this is not the desired result and therefore, in the next step the further improvement is discussed.

The results of the attempt 2 is shown below.

# Fit hierarchical uniform model

hier_uni_df, hier_uni_fit = compute_model(r'stan_code/hier_uniform_prior.stan',

hierarchical_data_model, adapt_delta=0.93)

# Print the summary of the fit

print_compact_fit(hier_uni_df)Using cached StanModel

| mean | se_mean | sd | 2.5% | 25% | 50% | 75% | 97.5% | n_eff | Rhat | |

|---|---|---|---|---|---|---|---|---|---|---|

| mu0 | 14.1722 | 0.0133645 | 0.44123 | 13.3144 | 13.9179 | 14.159 | 14.4377 | 15.0467 | 1090 | 1.00438 |

| sigma0 | 0.563965 | 0.0243783 | 0.571721 | 0.0510735 | 0.200409 | 0.40928 | 0.731901 | 2.12706 | 550 | 1.00114 |

| mu[0] | 14.0559 | 0.0143581 | 0.480942 | 13.0217 | 13.7558 | 14.0839 | 14.3739 | 14.9692 | 1122 | 1.0034 |

| mu[1] | 14.2551 | 0.013469 | 0.481318 | 13.3542 | 13.9509 | 14.2275 | 14.5544 | 15.2806 | 1277 | 1.00411 |

| mu[2] | 14.0422 | 0.0138805 | 0.481636 | 12.9916 | 13.76 | 14.056 | 14.3534 | 14.9512 | 1204 | 1.00298 |

| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |

| ypred[3] | 14.3333 | 0.0915444 | 5.68976 | 3.33591 | 10.4447 | 14.3595 | 18.0902 | 25.5877 | 3863 | 0.99995 |

| ypred[4] | 14.2131 | 0.0884799 | 5.58756 | 3.2083 | 10.4591 | 14.2649 | 18.0418 | 24.8965 | 3988 | 1.00015 |

| mu_new | 14.1864 | 0.0184552 | 0.961623 | 12.4116 | 13.7922 | 14.1716 | 14.569 | 16.0339 | 2715 | 1.00083 |

| ypred_new | 14.2098 | 0.0896986 | 5.67304 | 2.94677 | 10.4409 | 14.1165 | 17.8849 | 25.5395 | 4000 | 0.999853 |

| lp__ | -744.229 | 0.245898 | 4.09994 | -752.034 | -747.03 | -744.541 | -741.593 | -735.542 | 278 | 1.00407 |

# Print additional checking of the fit

print_compact_fit_checking(hier_uni_fit, hier_uni_df)Maximum value of the Rhat:

1.0043815114485763

Divergences:

79.0 of 4000 iterations ended with a divergence (1.975%)

Try running with larger adapt_delta to remove the divergences

In this section, we will modify the Stan code and use exactly the same approach as described in pystan's workflow (http://mc-stan.org/users/documentation/case-studies/pystan_workflow.html, A Successful Fit). Because the fit which uses the centered parametrization is not successful, we should change the Stan code using non-centered parametrization.

Centered parametrization of parameter mu

parameters {

...

real mu[J]; // Tournament specific mu

...

}

model {

for (j in 1:J) // Model for computing tournament specific mu from common mu0 and sigma0

mu[j] ~ normal(mu0, sigma0);

...

}

is converted to non-centered parametrization

parameters {

...

real mu_tilde[J];

}

transformed parameters {

real mu[J];// Tournament specific mu

for (j in 1:J)

mu[j] = mu0 + sigma0 * mu_tilde[j];

}

model {

for (j in 1:J) // Model for computing tournament specific mu from common mu0 and sigma0

mu_tilde[j] ~ normal(0, 1); // Implies mu[j] ~ normal(mu0,sigma0)

...

}

The full updated Stan code is shown in the next section.

The stan code of the model:

data {

int<lower=0> N; // Number of observations

int<lower=0> J; // Number of tournaments

matrix[N,J] y; // N observations for J tournaments

}

parameters {

real mu0; // Common mu for each J tournament's mu

real<lower=0> sigma0; // Common std for each J tournament's mu

real<lower=0> sigma; // Common std between tournaments

real mu_tilde[J];

}

transformed parameters {

real mu[J]; // Tournament specific mu

for (j in 1:J)

mu[j] = mu0 + sigma0 * mu_tilde[j];

}

model {

for (j in 1:J) // Model for computing tournament specific mu from common mu0 and sigma0

mu_tilde[j] ~ normal(0, 1); // Implies mu[j] ~ normal(mu0,sigma0)

for (j in 1:J)

y[:,j] ~ normal(mu[j], sigma); // Model for fitting data using machine specific mu and common std

}

generated quantities {

matrix[N,J] log_lik;

real ypred[J];

real mu_new;

real ypred_new;

for (j in 1:J)

ypred[j] = normal_rng(mu[j], sigma); // Predictive distibutions of all the tournaments

mu_new = normal_rng(mu0, sigma0); // Next posterior distribution from commonly learned mu0 and sigma0

ypred_new = normal_rng(mu_new, sigma); // Next predictive distibutions of new tournament

for (j in 1:J)

for (n in 1:N)

log_lik[n,j] = normal_lpdf(y[n,j] | mu[j], sigma); //Log-likelihood

}

The same procedure is followed (as in the previous section) and improved results were obtained:

| Diagnostic | Result |

|---|---|

| All |

OK |

| Low |

OK |

| Divergences is 0 | GOOD ENOUGH still 0.025% of the target posterior was not expolred and increasing adapt_delta did not improve this result |

All of these verify that hierarchical uniform prior model fitting is successful enough. The full fit can be found in the Attachment 3 and a shorter summary is shown below.

# Fit hierarchical uniform model

hier_uni_df, hier_uni_fit = compute_model(r'stan_code/hier_uniform_prior_v2.stan', hierarchical_data_model)

# Print the summary of the fit

print_compact_fit(hier_uni_df)Using cached StanModel

| mean | se_mean | sd | 2.5% | 25% | 50% | 75% | 97.5% | n_eff | Rhat | |

|---|---|---|---|---|---|---|---|---|---|---|

| mu0 | 14.1961 | 0.0141698 | 0.458058 | 13.2531 | 13.9249 | 14.1917 | 14.464 | 15.1017 | 1045 | 1.00285 |

| sigma0 | 0.550535 | 0.0179981 | 0.576781 | 0.0140076 | 0.18154 | 0.388406 | 0.717958 | 2.06309 | 1027 | 1.00309 |

| sigma | 5.66836 | 0.00337786 | 0.213634 | 5.26576 | 5.52314 | 5.66113 | 5.80597 | 6.10786 | 4000 | 0.999766 |

| mu_tilde[0] | -0.207582 | 0.0159645 | 0.883688 | -1.97272 | -0.795004 | -0.21698 | 0.373531 | 1.49234 | 3064 | 0.999875 |

| mu_tilde[1] | 0.10843 | 0.0154156 | 0.883542 | -1.68338 | -0.477808 | 0.101281 | 0.691408 | 1.87599 | 3285 | 1.00029 |

| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |

| ypred[3] | 14.4115 | 0.0933838 | 5.77547 | 2.91519 | 10.6273 | 14.3439 | 18.2793 | 25.8232 | 3825 | 0.999828 |

| ypred[4] | 14.1985 | 0.0891236 | 5.63667 | 3.05899 | 10.3145 | 14.244 | 18.0424 | 24.8852 | 4000 | 0.999548 |

| mu_new | 14.2178 | 0.0179958 | 0.940442 | 12.446 | 13.8592 | 14.2051 | 14.5741 | 16.0938 | 2731 | 0.999788 |

| ypred_new | 14.198 | 0.0917411 | 5.80221 | 2.83785 | 10.2555 | 14.1872 | 18.1655 | 25.4919 | 4000 | 0.999715 |

| lp__ | -749.31 | 0.0670804 | 2.27085 | -754.317 | -750.67 | -749.121 | -747.698 | -745.474 | 1146 | 1.00138 |

print_compact_fit_checking(hier_uni_fit, hier_uni_df)Maximum value of the Rhat:

1.0030853274280642

Divergences:

1.0 of 4000 iterations ended with a divergence (0.025%)

Try running with larger adapt_delta to remove the divergences

The stan code of the model (using non-centered parametrization):

data {

int<lower=0> N; // Number of observations

int<lower=0> J; // Number of tournaments

matrix[N,J] y; // N measurements for J tournaments

}

parameters {

real mu0; // Common mu for each J tournaments's mu

real<lower=0> sigma0; // Common std for each J tournament's mu

real<lower=0> sigma; // Common std

real mu_tilde[J];

}

transformed parameters {

real mu[J]; // Tournament specific mu

for (j in 1:J)

mu[j] = mu0 + sigma0 * mu_tilde[j];

}

model {

for (j in 1:J) // Model for computing tournament specific mu from common mu0 and sigma0

mu_tilde[j] ~ normal(0, 1); // Implies mu[j] ~ normal(mu0,sigma0)

for (j in 1:J)

y[:,j] ~ normal(mu[j], sigma); // Model for fitting data using tournament specific mu and common std

}

generated quantities {

matrix[N,J] log_lik;

real ypred[J];

real mu_new;

real ypred_new;

for (j in 1:J)

ypred[j] = normal_rng(mu[j], sigma); // Predictive distibutions of all the tournaments

mu_new = normal_rng(mu0, sigma0); // Next posterior distribution from commonly learned mu0 and sigma0

ypred_new = normal_rng(mu_new, sigma); // Next predictive distibutions of new tournament

for (j in 1:J)

for (n in 1:N)

log_lik[n,j] = normal_lpdf(y[n,j] | mu[j], sigma); //Log-likelihood

}

The same procedure is followed (as in the previous section) and the diagnostic results are:

| Diagnostic | Result |

|---|---|

| All |

OK |

| Low |

OK |

| Divergences is 0 | GOOD ENOUGH still 0.025% of the target posterior was not expolred and increasing adapt_delta did not improve this result |

All of these verify that hierarchical inverse gamma prior model fitting is successful enough. The full fit can be found in the Attachment 4 and a shorter summary is shown below.

# Fit hierarchical uniform model

hier_inv_df, hier_inv_fit = compute_model(r'stan_code/hier_inverse_gamma_prior_v2.stan', hierarchical_data_model)

print_compact_fit(hier_inv_df)Using cached StanModel

| mean | se_mean | sd | 2.5% | 25% | 50% | 75% | 97.5% | n_eff | Rhat | |

|---|---|---|---|---|---|---|---|---|---|---|

| mu0 | 14.1742 | 0.0106244 | 0.429863 | 13.3323 | 13.9126 | 14.1783 | 14.4469 | 15.0025 | 1637 | 1.00147 |

| sigma0 | 0.55408 | 0.0187816 | 0.567502 | 0.0227001 | 0.193471 | 0.403468 | 0.728336 | 2.04482 | 913 | 1.0023 |

| mu_tilde[0] | -0.202636 | 0.0156438 | 0.883703 | -1.96254 | -0.760705 | -0.211218 | 0.37144 | 1.55705 | 3191 | 1.0007 |

| mu_tilde[1] | 0.115359 | 0.0161837 | 0.880342 | -1.68287 | -0.427827 | 0.10454 | 0.695337 | 1.82562 | 2959 | 1.00076 |

| mu_tilde[2] | -0.200715 | 0.0146266 | 0.857001 | -1.93516 | -0.749326 | -0.218871 | 0.354451 | 1.48706 | 3433 | 1.00081 |

| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |

| ypred[3] | 14.5288 | 0.0921506 | 5.67531 | 3.5257 | 10.7779 | 14.5297 | 18.2851 | 26.0277 | 3793 | 0.999918 |

| ypred[4] | 14.1416 | 0.0893992 | 5.6541 | 3.05447 | 10.3961 | 14.0928 | 17.9503 | 25.1413 | 4000 | 0.999683 |

| mu_new | 14.1745 | 0.017315 | 0.895872 | 12.3202 | 13.8097 | 14.1975 | 14.5734 | 15.8751 | 2677 | 1.00043 |

| ypred_new | 14.2066 | 0.0899841 | 5.69109 | 3.30853 | 10.3106 | 14.0458 | 18.1636 | 25.1357 | 4000 | 0.999641 |

| lp__ | -754.52 | 0.0668638 | 2.37343 | -759.858 | -756.017 | -754.246 | -752.799 | -750.641 | 1260 | 1.00191 |

print_compact_fit_checking(hier_inv_fit, hier_inv_df)Maximum value of the Rhat:

1.0022963849717355

Divergences:

1.0 of 4000 iterations ended with a divergence (0.025%)

Try running with larger adapt_delta to remove the divergences

Based on the diagnostic results, all the four models can be used for further analysis (next section).

- Model selection according to the hightest LOO-CV sum

- Reliability based on the k values: < 0.7 ok, < 0.5 good

The PSIS-LOO values of the models can be computed using provided psisloo function. The function returns observation specific k-values and PSIS-LOO-CV values. In addition, it returns the sum of the PSIS-LOO-CV values, hence the sum of the LOO log desnities:

\begin{equation*} lppd_{loo-cv} = \sum_{i=1}^{N} log \left( \frac{1}{S} \sum_{s=1}^{S} p(y_i|\theta^{is}) \right) \end{equation*}

The estimated effective number of parameters (

\begin{equation*} p_{loo-cv} = lppd-lppd_{loo-cv} \end{equation*}

where

All the PSIS-LOO values, estimated effective number of parameters and k-values are shown below.

compare_psis_loo(fits=[pool_uni_fit, pool_inv_fit, hier_uni_fit, hier_inv_fit], model_labels=[

'Pooled model with uniform prior',

'Pooled model with inverse gamma prior',

'Hierarchical model with uniform prior',

'Hierarchical model with inverse gamma prior'])| Models | Psisloo | P_eff | Max k value | Min k value | Mean k value | |

|---|---|---|---|---|---|---|

| 0 | Pooled model with uniform prior | -1056.45 | 1.67 | -0.07 | -0.25 | -0.18 |

| 1 | Pooled model with inverse gamma prior | -1056.38 | 1.64 | -0.01 | -0.16 | -0.11 |

| 2 | Hierarchical model with uniform prior | -1057.25 | 2.96 | 0.10 | -0.14 | -0.05 |

| 3 | Hierarchical model with inverse gamma prior | -1057.39 | 3.10 | 0.11 | -0.18 | -0.04 |

All the models are considered very reliable with very low k-values. If we consider towards model with best predictive accuracy, then Pooled model with inverse gamma prior should be selected, because its PSIS-LOO value is the highest (in other words the sum of log predictive density is the highest)

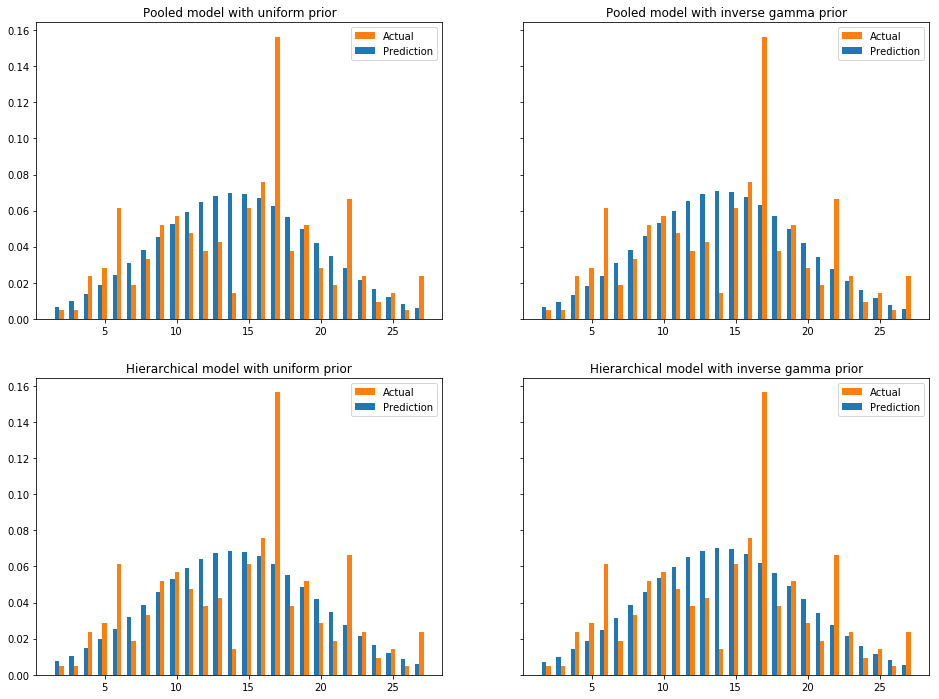

In this section, for all 4 models evaluated in the previous sessions, we will compare predictive distribution versus actual distribution for a new tournament

compare_predictive_vs_actual(fits=[pool_uni_fit, pool_inv_fit, hier_uni_fit, hier_inv_fit], labels=[

'Pooled model with uniform prior',

'Pooled model with inverse gamma prior',

'Hierarchical model with uniform prior',

'Hierarchical model with inverse gamma prior'],

ypred_accessors= ['ypred', 'ypred', 'ypred_new', 'ypred_new'], new_data=last)Posterior predictive distribution are almost identical among all models. This can be guessed already in session 3, where the data can be seen to by highly consistent throughout different tournaments.

Errors are considerable between prediction and actual distribution. Albeit the posterior of the estimand seems to follow a normal distribution, the amount of data in only one new tournament is too small to construct a normal distribution, hence the difference between prediction and actual values.

Problems

- Data model cannot be used for direct inference of a single match

- The values of the divergences for the hierarchical models were 0.025% instead of exact 0% (0.025% of the target posterior was not explored). Because the value is quite small, we decided to use the hierarchical models in the further analysis.

Potential improvements

- Given the outcome of this report, binomial can be a good fit as well

- Data model can be improved so that the estimand is a joint distribution of some parameters (e.g. absolute ranking + win degree)

- Data model can be modified to fit with multinomial model

- Prior and model analysis could be improved by adding the sensitivity analysis

Discussion

From the badminton domain perspective, the result is satisfiable:

- There is a visible correlation between ranking spread and win degree

- Probability of extreme outcome (towards 1 or 28) are low, and not expected in the tournament

From the statistical inference perspective, the result is also satisfiable

- Given the domain knowledge, one would expect the distribution of the estimand to be a normal distribution

- Given the found posteriors, we can see the result is highly data-driven

- Given two models, pooled and hierarchical, we can see that hierarchical model ends up as pooled model

# Import libraries

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import os

from scipy import stats

import pystan

import stan_utility

import psis

import warnings

# For hiding warnings that do not effect the functionality of the code

warnings.filterwarnings('ignore')# Data is provided as files, 1 file contains all matches result of a tournament

# We will use data from all tournaments to fit model

# Except for the last one which will be used to evaluate prediction accuracy

data = []

filenames = os.listdir(r'./data/')

for idx, filename in enumerate(filenames):

col = np.loadtxt(f'data//{filename}').tolist()

if (idx == (len(filenames) - 1)):

last = col

else:

data.append(col[0:67])

np_data = np.array(data)

# Show the data in dataframe and change the names of the rows and columns to more descriable

def show_first_rows_of_data():

df = pd.DataFrame(np_data.T)

df.columns=['Tournament '+str(i+1) for i in range(np_data.shape[0])]

df = df.rename({i: 'Match '+str(i+1) for i in range(np_data.shape[1])}, axis='index')

return df.head()

# Show the data summary: mean, min, max, ....

def show_summary_of_data():

df = pd.DataFrame(np_data.T)

df.columns=['Tournament '+str(i+1) for i in range(np_data.shape[0])]

return df.describe()

# Pooled data and its model for Stan compiler

pooled_data = np_data.flatten()

pooled_data_model = dict(N=len(pooled_data), y=pooled_data, alpha=1, beta=1)

#pooled_inv_g_data_model = dict(N=len(pooled_data), y=pooled_data)

# Hierarchical data (np_data.T) and its model for Stan compiler

hierarchical_data_model = dict(N = np_data.shape[1], J= np_data.shape[0],y = np_data.T, alpha=1, beta=1)

# Compile the given model and fit the given data.

# Parameters:

# file_path: ''

# The path of the stan model code

# data: numpy array

# The data to be fitted

# adapt_delta: 0...1

# Effects to divergences, hence to the accuracy of the posterior.

# The smaller the value is the more strict the Stan model is in accepting sampels.

# The bigger the value is the easier the Stan model accepts samples.

# Returns the summary of the fit and the fit itself.

def compute_model(file_path, data, adapt_delta=0.9):

# Compile model for both separated and pooled

model = stan_utility.compile_model(file_path)

# Fit model: adapt_delta is used for divergences

fit = model.sampling(data=data, seed=194838,control=dict(adapt_delta=adapt_delta))

# get summary of the fit, use pandas data frame for layout

summary = fit.summary()

df = pd.DataFrame(summary['summary'], index=summary['summary_rownames'], columns=summary['summary_colnames'])

return df, fit

# Show compact details of the fit instead of showing the whole fit

def print_compact_fit(fit_df, number_of_rows_head=5, number_of_rows_tail=5):

df = fit_df.head(number_of_rows_head)

df = df.append([{'mean':'...','se_mean':'...','sd':'...','2.5%':'...','25%':'...',

'50%':'...','75%':'...','97.5%':'...','n_eff':'...','Rhat':'...'}])

df = df.rename({0: '...'}, axis='index')

df = df.append(fit_df.tail(number_of_rows_tail))

return df

# Show key details of the checking of the fit: rhat and divergences

def print_compact_fit_checking(fit, df):

#Check the maximum value of the Rhat

print("Maximum value of the Rhat: ")

print(df.describe()['Rhat'][7])

print("")

# Check divergences

print("Divergences:")

stan_utility.check_div(fit)

print("")

# Compare PSIS-LOO values

def compare_psis_loo(fits, model_labels):

psis_loos, p_effs, k_max, k_min, k_mean = [], [], [], [], []

for fit in fits:

psis_loo, p_eff, ks = extract_psis_loo(fit)

psis_loos.append(psis_loo)

p_effs.append(p_eff)

k_max.append(np.max(ks))

k_min.append(np.min(ks))

k_mean.append(np.mean(ks))

df = pd.DataFrame({

'Models': model_labels,

'Psisloo': psis_loos,

'P_eff': p_effs,

'Max k value': k_max,

'Min k value': k_min,

'Mean k value': k_mean

})

return df.round(2)

# Get the effective sample size of the parameters

def get_p_eff(log_lik, lppd_loocv):

likelihoods = np.asarray([np.exp(log_likelihood.flatten()) for log_likelihood in log_lik])

num_sim, num_obs = likelihoods.shape

lppd = 0

for obs in range(num_obs):

lppd += np.log(np.sum(likelihoods[:, obs]) / num_sim)

p_eff = lppd - lppd_loocv

return p_eff

def extract_psis_loo(samples, plot_title=''):

log_lik_matrix = np.asarray([single_sample.flatten() for single_sample in samples['log_lik']])

loo, loos, ks = psis.psisloo(log_lik_matrix)

# Calculate p_eff

p_eff = get_p_eff(log_lik_matrix, loo)

return loo, p_eff, ks

def compare_predictive_vs_actual(fits, labels, ypred_accessors, new_data):

bar_width = 0.3

fig, axes = plt.subplots(nrows=2, ncols=2, sharey=True,figsize=(16,12), subplot_kw=dict(aspect='auto'))

plots = [axes[0,0], axes[0,1], axes[1,0], axes[1,1]]

# Group new_data

# e.g. [1,2,3,2,2] => [1: 0.2, 2: 0.6, 3: 0.2]

aggregated = dict()

for i in new_data:

if i in aggregated:

aggregated[i] += 1

else:

aggregated[i] = 1

# Dist of new_data

x = np.array(list(aggregated.keys()))

y = np.array(list(aggregated.values())) / len(new_data)

for i in range(0, len(fits)):

fit = fits[i]

label = labels[i]

ypred_accessor = ypred_accessors[i]

ypred = fit.extract(permuted=True)[ypred_accessor]

# Dist of ypred for new_data values

y2_dist = stats.norm(np.mean(ypred), np.std(ypred))

y2 = y2_dist.pdf(x)

partial_plt = plots[i]

partial_plt.bar(x,y,width=bar_width,label="Actual", color="C1")

partial_plt.bar(x-bar_width,y2,width=bar_width,label="Prediction", color="C0")

partial_plt.set_title(label)

partial_plt.legend()

plt.show()

def show_attachments():

print("##########################################################################")

print("########## Attachment 1: Fit of pooled model with uniform prior ##########")

print("##########################################################################")

print(""); print(pool_uni_fit); print("");

print("##########################################################################")

print("####### Attachment 2: Fit of pooled model with inverse gamma prior #######")

print("##########################################################################")

print(""); print(pool_inv_fit); print("");

print("##########################################################################")

print("####### Attachment 3: Fit of hierarchical model with uniform prior #######")

print("##########################################################################")

print(""); print(hier_uni_fit); print("")

print("##########################################################################")

print("#### Attachment 4: Fit of hierarchical model with inverse gamma prior ####")

print("##########################################################################")

print(""); print(hier_inv_fit); print("");

#show_attachments()##########################################################################

########## Attachment 1: Fit of pooled model with uniform prior ##########

##########################################################################

Inference for Stan model: anon_model_9b8e95f23a292c6baefb5978c5223890.

4 chains, each with iter=2000; warmup=1000; thin=1;

post-warmup draws per chain=1000, total post-warmup draws=4000.

mean se_mean sd 2.5% 25% 50% 75% 97.5% n_eff Rhat

mu 14.19 6.0e-3 0.31 13.56 13.98 14.2 14.41 14.82 2712 1.0

sigma 5.66 4.4e-3 0.22 5.24 5.51 5.65 5.81 6.13 2577 1.0

log_lik[0] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[1] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[2] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[3] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[4] -3.02 9.3e-4 0.05 -3.12 -3.05 -3.01 -2.98 -2.92 2698 1.0

log_lik[5] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[6] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[7] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[8] -3.98 2.2e-3 0.11 -4.21 -4.05 -3.97 -3.9 -3.76 2595 1.0

log_lik[9] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[10] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[11] -3.08 9.7e-4 0.05 -3.18 -3.11 -3.08 -3.04 -2.98 2793 1.0

log_lik[12] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[13] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[14] -3.98 2.2e-3 0.11 -4.21 -4.05 -3.97 -3.9 -3.76 2595 1.0

log_lik[15] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[16] -2.81 7.7e-4 0.04 -2.89 -2.84 -2.81 -2.78 -2.73 2911 1.0

log_lik[17] -3.08 9.7e-4 0.05 -3.18 -3.11 -3.08 -3.04 -2.98 2793 1.0

log_lik[18] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[19] -3.38 1.3e-3 0.07 -3.52 -3.43 -3.38 -3.33 -3.25 2803 1.0

log_lik[20] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[21] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[22] -4.16 2.3e-3 0.13 -4.42 -4.24 -4.15 -4.07 -3.94 2920 1.0

log_lik[23] -2.93 8.4e-4 0.05 -3.02 -2.96 -2.93 -2.9 -2.84 2853 1.0

log_lik[24] -3.08 9.7e-4 0.05 -3.18 -3.11 -3.08 -3.04 -2.98 2793 1.0

log_lik[25] -3.25 1.2e-3 0.06 -3.38 -3.3 -3.25 -3.21 -3.14 2754 1.0

log_lik[26] -3.61 1.6e-3 0.08 -3.78 -3.66 -3.6 -3.55 -3.46 2853 1.0

log_lik[27] -2.73 7.4e-4 0.04 -2.81 -2.76 -2.73 -2.7 -2.65 2942 1.0

log_lik[28] -3.02 9.3e-4 0.05 -3.12 -3.05 -3.01 -2.98 -2.92 2698 1.0

log_lik[29] -3.08 9.7e-4 0.05 -3.18 -3.11 -3.08 -3.04 -2.98 2793 1.0

log_lik[30] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[31] -2.73 7.4e-4 0.04 -2.81 -2.76 -2.73 -2.7 -2.65 2942 1.0

log_lik[32] -3.25 1.2e-3 0.06 -3.38 -3.3 -3.25 -3.21 -3.14 2754 1.0

log_lik[33] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[34] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[35] -4.48 2.8e-3 0.15 -4.79 -4.58 -4.48 -4.38 -4.21 2940 1.0

log_lik[36] -3.02 9.3e-4 0.05 -3.12 -3.05 -3.01 -2.98 -2.92 2698 1.0

log_lik[37] -4.16 2.3e-3 0.13 -4.42 -4.24 -4.15 -4.07 -3.94 2920 1.0

log_lik[38] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[39] -3.98 2.2e-3 0.11 -4.21 -4.05 -3.97 -3.9 -3.76 2595 1.0

log_lik[40] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[41] -3.18 1.1e-3 0.06 -3.3 -3.22 -3.18 -3.14 -3.07 2746 1.0

log_lik[42] -3.25 1.2e-3 0.06 -3.38 -3.3 -3.25 -3.21 -3.14 2754 1.0

log_lik[43] -3.61 1.6e-3 0.08 -3.78 -3.66 -3.6 -3.55 -3.46 2853 1.0

log_lik[44] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[45] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[46] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[47] -3.08 9.7e-4 0.05 -3.18 -3.11 -3.08 -3.04 -2.98 2793 1.0

log_lik[48] -3.25 1.2e-3 0.06 -3.38 -3.3 -3.25 -3.21 -3.14 2754 1.0

log_lik[49] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[50] -3.38 1.3e-3 0.07 -3.52 -3.43 -3.38 -3.33 -3.25 2803 1.0

log_lik[51] -3.02 9.3e-4 0.05 -3.12 -3.05 -3.01 -2.98 -2.92 2698 1.0

log_lik[52] -3.61 1.6e-3 0.08 -3.78 -3.66 -3.6 -3.55 -3.46 2853 1.0

log_lik[53] -4.28 2.6e-3 0.13 -4.56 -4.37 -4.28 -4.19 -4.02 2565 1.0

log_lik[54] -3.08 9.7e-4 0.05 -3.18 -3.11 -3.08 -3.04 -2.98 2793 1.0

log_lik[55] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[56] -2.88 8.5e-4 0.04 -2.97 -2.91 -2.88 -2.85 -2.8 2565 1.0

log_lik[57] -2.73 7.4e-4 0.04 -2.81 -2.76 -2.73 -2.7 -2.65 2942 1.0

log_lik[58] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[59] -2.93 8.4e-4 0.05 -3.02 -2.96 -2.93 -2.9 -2.84 2853 1.0

log_lik[60] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[61] -2.93 8.4e-4 0.05 -3.02 -2.96 -2.93 -2.9 -2.84 2853 1.0

log_lik[62] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[63] -3.46 1.4e-3 0.07 -3.62 -3.51 -3.46 -3.41 -3.32 2738 1.0

log_lik[64] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[65] -3.18 1.1e-3 0.06 -3.3 -3.22 -3.18 -3.14 -3.07 2746 1.0

log_lik[66] -3.61 1.6e-3 0.08 -3.78 -3.66 -3.6 -3.55 -3.46 2853 1.0

log_lik[67] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[68] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[69] -3.46 1.4e-3 0.07 -3.62 -3.51 -3.46 -3.41 -3.32 2738 1.0

log_lik[70] -2.68 7.7e-4 0.04 -2.76 -2.7 -2.67 -2.65 -2.6 2661 1.0

log_lik[71] -3.18 1.1e-3 0.06 -3.3 -3.22 -3.18 -3.14 -3.07 2746 1.0

log_lik[72] -3.18 1.1e-3 0.06 -3.3 -3.22 -3.18 -3.14 -3.07 2746 1.0

log_lik[73] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[74] -5.22 3.8e-3 0.21 -5.65 -5.36 -5.22 -5.08 -4.85 2963 1.0

log_lik[75] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[76] -2.73 7.4e-4 0.04 -2.81 -2.76 -2.73 -2.7 -2.65 2942 1.0

log_lik[77] -2.65 7.8e-4 0.04 -2.73 -2.68 -2.65 -2.63 -2.58 2561 1.0

log_lik[78] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[79] -3.38 1.3e-3 0.07 -3.52 -3.43 -3.38 -3.33 -3.25 2803 1.0

log_lik[80] -3.46 1.4e-3 0.07 -3.62 -3.51 -3.46 -3.41 -3.32 2738 1.0

log_lik[81] -2.81 7.7e-4 0.04 -2.89 -2.84 -2.81 -2.78 -2.73 2911 1.0

log_lik[82] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[83] -2.68 7.7e-4 0.04 -2.76 -2.7 -2.67 -2.65 -2.6 2661 1.0

log_lik[84] -3.25 1.2e-3 0.06 -3.38 -3.3 -3.25 -3.21 -3.14 2754 1.0

log_lik[85] -2.73 7.4e-4 0.04 -2.81 -2.76 -2.73 -2.7 -2.65 2942 1.0

log_lik[86] -3.02 9.3e-4 0.05 -3.12 -3.05 -3.01 -2.98 -2.92 2698 1.0

log_lik[87] -3.18 1.1e-3 0.06 -3.3 -3.22 -3.18 -3.14 -3.07 2746 1.0

log_lik[88] -2.88 8.5e-4 0.04 -2.97 -2.91 -2.88 -2.85 -2.8 2565 1.0

log_lik[89] -4.16 2.3e-3 0.13 -4.42 -4.24 -4.15 -4.07 -3.94 2920 1.0

log_lik[90] -3.08 9.7e-4 0.05 -3.18 -3.11 -3.08 -3.04 -2.98 2793 1.0

log_lik[91] -2.68 7.7e-4 0.04 -2.76 -2.7 -2.67 -2.65 -2.6 2661 1.0

log_lik[92] -2.88 8.5e-4 0.04 -2.97 -2.91 -2.88 -2.85 -2.8 2565 1.0

log_lik[93] -3.38 1.3e-3 0.07 -3.52 -3.43 -3.38 -3.33 -3.25 2803 1.0

log_lik[94] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[95] -2.65 7.8e-4 0.04 -2.73 -2.68 -2.65 -2.63 -2.58 2561 1.0

log_lik[96] -3.08 9.7e-4 0.05 -3.18 -3.11 -3.08 -3.04 -2.98 2793 1.0

log_lik[97] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[98] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[99] -4.62 3.1e-3 0.16 -4.95 -4.72 -4.61 -4.51 -4.3 2548 1.0

log_lik[100] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[101] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[102] -3.18 1.1e-3 0.06 -3.3 -3.22 -3.18 -3.14 -3.07 2746 1.0

log_lik[103] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[104] -4.48 2.8e-3 0.15 -4.79 -4.58 -4.48 -4.38 -4.21 2940 1.0

log_lik[105] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[106] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[107] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[108] -3.46 1.4e-3 0.07 -3.62 -3.51 -3.46 -3.41 -3.32 2738 1.0

log_lik[109] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[110] -3.08 9.7e-4 0.05 -3.18 -3.11 -3.08 -3.04 -2.98 2793 1.0

log_lik[111] -2.65 7.8e-4 0.04 -2.73 -2.68 -2.65 -2.63 -2.58 2561 1.0

log_lik[112] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[113] -2.68 7.7e-4 0.04 -2.76 -2.7 -2.67 -2.65 -2.6 2661 1.0

log_lik[114] -3.25 1.2e-3 0.06 -3.38 -3.3 -3.25 -3.21 -3.14 2754 1.0

log_lik[115] -2.93 8.4e-4 0.05 -3.02 -2.96 -2.93 -2.9 -2.84 2853 1.0

log_lik[116] -2.88 8.5e-4 0.04 -2.97 -2.91 -2.88 -2.85 -2.8 2565 1.0

log_lik[117] -4.48 2.8e-3 0.15 -4.79 -4.58 -4.48 -4.38 -4.21 2940 1.0

log_lik[118] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[119] -3.61 1.6e-3 0.08 -3.78 -3.66 -3.6 -3.55 -3.46 2853 1.0

log_lik[120] -2.93 8.4e-4 0.05 -3.02 -2.96 -2.93 -2.9 -2.84 2853 1.0

log_lik[121] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[122] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[123] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[124] -2.81 7.7e-4 0.04 -2.89 -2.84 -2.81 -2.78 -2.73 2911 1.0

log_lik[125] -3.61 1.6e-3 0.08 -3.78 -3.66 -3.6 -3.55 -3.46 2853 1.0

log_lik[126] -2.88 8.5e-4 0.04 -2.97 -2.91 -2.88 -2.85 -2.8 2565 1.0

log_lik[127] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[128] -2.68 7.7e-4 0.04 -2.76 -2.7 -2.67 -2.65 -2.6 2661 1.0

log_lik[129] -4.28 2.6e-3 0.13 -4.56 -4.37 -4.28 -4.19 -4.02 2565 1.0

log_lik[130] -2.93 8.4e-4 0.05 -3.02 -2.96 -2.93 -2.9 -2.84 2853 1.0

log_lik[131] -2.88 8.5e-4 0.04 -2.97 -2.91 -2.88 -2.85 -2.8 2565 1.0

log_lik[132] -3.18 1.1e-3 0.06 -3.3 -3.22 -3.18 -3.14 -3.07 2746 1.0

log_lik[133] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[134] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[135] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[136] -4.28 2.6e-3 0.13 -4.56 -4.37 -4.28 -4.19 -4.02 2565 1.0

log_lik[137] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[138] -3.18 1.1e-3 0.06 -3.3 -3.22 -3.18 -3.14 -3.07 2746 1.0

log_lik[139] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[140] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[141] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[142] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[143] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[144] -3.98 2.2e-3 0.11 -4.21 -4.05 -3.97 -3.9 -3.76 2595 1.0

log_lik[145] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[146] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[147] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[148] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[149] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[150] -2.81 7.7e-4 0.04 -2.89 -2.84 -2.81 -2.78 -2.73 2911 1.0

log_lik[151] -3.08 9.7e-4 0.05 -3.18 -3.11 -3.08 -3.04 -2.98 2793 1.0

log_lik[152] -2.93 8.4e-4 0.05 -3.02 -2.96 -2.93 -2.9 -2.84 2853 1.0

log_lik[153] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[154] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[155] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[156] -3.61 1.6e-3 0.08 -3.78 -3.66 -3.6 -3.55 -3.46 2853 1.0

log_lik[157] -3.25 1.2e-3 0.06 -3.38 -3.3 -3.25 -3.21 -3.14 2754 1.0

log_lik[158] -3.25 1.2e-3 0.06 -3.38 -3.3 -3.25 -3.21 -3.14 2754 1.0

log_lik[159] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[160] -3.61 1.6e-3 0.08 -3.78 -3.66 -3.6 -3.55 -3.46 2853 1.0

log_lik[161] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[162] -3.87 1.9e-3 0.1 -4.08 -3.94 -3.86 -3.8 -3.68 2892 1.0

log_lik[163] -3.02 9.3e-4 0.05 -3.12 -3.05 -3.01 -2.98 -2.92 2698 1.0

log_lik[164] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[165] -3.25 1.2e-3 0.06 -3.38 -3.3 -3.25 -3.21 -3.14 2754 1.0

log_lik[166] -3.25 1.2e-3 0.06 -3.38 -3.3 -3.25 -3.21 -3.14 2754 1.0

log_lik[167] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[168] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[169] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[170] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[171] -4.84 3.3e-3 0.18 -5.2 -4.96 -4.83 -4.71 -4.52 2953 1.0

log_lik[172] -2.68 7.7e-4 0.04 -2.76 -2.7 -2.67 -2.65 -2.6 2661 1.0

log_lik[173] -3.25 1.2e-3 0.06 -3.38 -3.3 -3.25 -3.21 -3.14 2754 1.0

log_lik[174] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[175] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[176] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[177] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[178] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[179] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[180] -2.93 8.4e-4 0.05 -3.02 -2.96 -2.93 -2.9 -2.84 2853 1.0

log_lik[181] -3.25 1.2e-3 0.06 -3.38 -3.3 -3.25 -3.21 -3.14 2754 1.0

log_lik[182] -2.81 7.7e-4 0.04 -2.89 -2.84 -2.81 -2.78 -2.73 2911 1.0

log_lik[183] -3.87 1.9e-3 0.1 -4.08 -3.94 -3.86 -3.8 -3.68 2892 1.0

log_lik[184] -3.18 1.1e-3 0.06 -3.3 -3.22 -3.18 -3.14 -3.07 2746 1.0

log_lik[185] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[186] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[187] -2.93 8.4e-4 0.05 -3.02 -2.96 -2.93 -2.9 -2.84 2853 1.0

log_lik[188] -3.25 1.2e-3 0.06 -3.38 -3.3 -3.25 -3.21 -3.14 2754 1.0

log_lik[189] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[190] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[191] -4.16 2.3e-3 0.13 -4.42 -4.24 -4.15 -4.07 -3.94 2920 1.0

log_lik[192] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[193] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[194] -2.73 7.4e-4 0.04 -2.81 -2.76 -2.73 -2.7 -2.65 2942 1.0

log_lik[195] -3.25 1.2e-3 0.06 -3.38 -3.3 -3.25 -3.21 -3.14 2754 1.0

log_lik[196] -2.68 7.7e-4 0.04 -2.76 -2.7 -2.67 -2.65 -2.6 2661 1.0

log_lik[197] -3.25 1.2e-3 0.06 -3.38 -3.3 -3.25 -3.21 -3.14 2754 1.0

log_lik[198] -2.65 7.8e-4 0.04 -2.73 -2.68 -2.65 -2.63 -2.58 2561 1.0

log_lik[199] -2.68 7.7e-4 0.04 -2.76 -2.7 -2.67 -2.65 -2.6 2661 1.0

log_lik[200] -3.98 2.2e-3 0.11 -4.21 -4.05 -3.97 -3.9 -3.76 2595 1.0

log_lik[201] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[202] -2.68 7.7e-4 0.04 -2.76 -2.7 -2.67 -2.65 -2.6 2661 1.0

log_lik[203] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[204] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[205] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[206] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[207] -4.16 2.3e-3 0.13 -4.42 -4.24 -4.15 -4.07 -3.94 2920 1.0

log_lik[208] -4.84 3.3e-3 0.18 -5.2 -4.96 -4.83 -4.71 -4.52 2953 1.0

log_lik[209] -2.93 8.4e-4 0.05 -3.02 -2.96 -2.93 -2.9 -2.84 2853 1.0

log_lik[210] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[211] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[212] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[213] -3.98 2.2e-3 0.11 -4.21 -4.05 -3.97 -3.9 -3.76 2595 1.0

log_lik[214] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[215] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[216] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[217] -3.08 9.7e-4 0.05 -3.18 -3.11 -3.08 -3.04 -2.98 2793 1.0

log_lik[218] -4.28 2.6e-3 0.13 -4.56 -4.37 -4.28 -4.19 -4.02 2565 1.0

log_lik[219] -2.93 8.4e-4 0.05 -3.02 -2.96 -2.93 -2.9 -2.84 2853 1.0

log_lik[220] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[221] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[222] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[223] -3.61 1.6e-3 0.08 -3.78 -3.66 -3.6 -3.55 -3.46 2853 1.0

log_lik[224] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[225] -3.38 1.3e-3 0.07 -3.52 -3.43 -3.38 -3.33 -3.25 2803 1.0

log_lik[226] -2.93 8.4e-4 0.05 -3.02 -2.96 -2.93 -2.9 -2.84 2853 1.0

log_lik[227] -3.61 1.6e-3 0.08 -3.78 -3.66 -3.6 -3.55 -3.46 2853 1.0

log_lik[228] -3.02 9.3e-4 0.05 -3.12 -3.05 -3.01 -2.98 -2.92 2698 1.0

log_lik[229] -3.38 1.3e-3 0.07 -3.52 -3.43 -3.38 -3.33 -3.25 2803 1.0

log_lik[230] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[231] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[232] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[233] -3.25 1.2e-3 0.06 -3.38 -3.3 -3.25 -3.21 -3.14 2754 1.0

log_lik[234] -2.93 8.4e-4 0.05 -3.02 -2.96 -2.93 -2.9 -2.84 2853 1.0

log_lik[235] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[236] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[237] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[238] -5.22 3.8e-3 0.21 -5.65 -5.36 -5.22 -5.08 -4.85 2963 1.0

log_lik[239] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[240] -2.81 7.7e-4 0.04 -2.89 -2.84 -2.81 -2.78 -2.73 2911 1.0

log_lik[241] -3.08 9.7e-4 0.05 -3.18 -3.11 -3.08 -3.04 -2.98 2793 1.0

log_lik[242] -2.93 8.4e-4 0.05 -3.02 -2.96 -2.93 -2.9 -2.84 2853 1.0

log_lik[243] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[244] -4.84 3.3e-3 0.18 -5.2 -4.96 -4.83 -4.71 -4.52 2953 1.0

log_lik[245] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[246] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[247] -2.73 7.4e-4 0.04 -2.81 -2.76 -2.73 -2.7 -2.65 2942 1.0

log_lik[248] -2.81 7.7e-4 0.04 -2.89 -2.84 -2.81 -2.78 -2.73 2911 1.0

log_lik[249] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[250] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[251] -3.25 1.2e-3 0.06 -3.38 -3.3 -3.25 -3.21 -3.14 2754 1.0

log_lik[252] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[253] -3.25 1.2e-3 0.06 -3.38 -3.3 -3.25 -3.21 -3.14 2754 1.0

log_lik[254] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[255] -2.81 7.7e-4 0.04 -2.89 -2.84 -2.81 -2.78 -2.73 2911 1.0

log_lik[256] -4.62 3.1e-3 0.16 -4.95 -4.72 -4.61 -4.51 -4.3 2548 1.0

log_lik[257] -3.25 1.2e-3 0.06 -3.38 -3.3 -3.25 -3.21 -3.14 2754 1.0

log_lik[258] -3.38 1.3e-3 0.07 -3.52 -3.43 -3.38 -3.33 -3.25 2803 1.0

log_lik[259] -4.16 2.3e-3 0.13 -4.42 -4.24 -4.15 -4.07 -3.94 2920 1.0

log_lik[260] -3.61 1.6e-3 0.08 -3.78 -3.66 -3.6 -3.55 -3.46 2853 1.0

log_lik[261] -3.61 1.6e-3 0.08 -3.78 -3.66 -3.6 -3.55 -3.46 2853 1.0

log_lik[262] -2.81 7.7e-4 0.04 -2.89 -2.84 -2.81 -2.78 -2.73 2911 1.0

log_lik[263] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[264] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[265] -3.61 1.6e-3 0.08 -3.78 -3.66 -3.6 -3.55 -3.46 2853 1.0

log_lik[266] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[267] -3.61 1.6e-3 0.08 -3.78 -3.66 -3.6 -3.55 -3.46 2853 1.0

log_lik[268] -5.38 4.3e-3 0.22 -5.83 -5.52 -5.37 -5.23 -4.96 2533 1.0

log_lik[269] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[270] -3.98 2.2e-3 0.11 -4.21 -4.05 -3.97 -3.9 -3.76 2595 1.0

log_lik[271] -2.68 7.7e-4 0.04 -2.76 -2.7 -2.67 -2.65 -2.6 2661 1.0

log_lik[272] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[273] -3.18 1.1e-3 0.06 -3.3 -3.22 -3.18 -3.14 -3.07 2746 1.0

log_lik[274] -3.18 1.1e-3 0.06 -3.3 -3.22 -3.18 -3.14 -3.07 2746 1.0

log_lik[275] -3.87 1.9e-3 0.1 -4.08 -3.94 -3.86 -3.8 -3.68 2892 1.0

log_lik[276] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[277] -2.88 8.5e-4 0.04 -2.97 -2.91 -2.88 -2.85 -2.8 2565 1.0

log_lik[278] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[279] -4.28 2.6e-3 0.13 -4.56 -4.37 -4.28 -4.19 -4.02 2565 1.0

log_lik[280] -2.81 7.7e-4 0.04 -2.89 -2.84 -2.81 -2.78 -2.73 2911 1.0

log_lik[281] -3.25 1.2e-3 0.06 -3.38 -3.3 -3.25 -3.21 -3.14 2754 1.0

log_lik[282] -2.93 8.4e-4 0.05 -3.02 -2.96 -2.93 -2.9 -2.84 2853 1.0

log_lik[283] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[284] -3.18 1.1e-3 0.06 -3.3 -3.22 -3.18 -3.14 -3.07 2746 1.0

log_lik[285] -3.18 1.1e-3 0.06 -3.3 -3.22 -3.18 -3.14 -3.07 2746 1.0

log_lik[286] -5.22 3.8e-3 0.21 -5.65 -5.36 -5.22 -5.08 -4.85 2963 1.0

log_lik[287] -2.93 8.4e-4 0.05 -3.02 -2.96 -2.93 -2.9 -2.84 2853 1.0

log_lik[288] -2.68 7.7e-4 0.04 -2.76 -2.7 -2.67 -2.65 -2.6 2661 1.0

log_lik[289] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[290] -2.81 7.7e-4 0.04 -2.89 -2.84 -2.81 -2.78 -2.73 2911 1.0

log_lik[291] -2.73 7.4e-4 0.04 -2.81 -2.76 -2.73 -2.7 -2.65 2942 1.0

log_lik[292] -2.93 8.4e-4 0.05 -3.02 -2.96 -2.93 -2.9 -2.84 2853 1.0

log_lik[293] -2.68 7.7e-4 0.04 -2.76 -2.7 -2.67 -2.65 -2.6 2661 1.0

log_lik[294] -4.62 3.1e-3 0.16 -4.95 -4.72 -4.61 -4.51 -4.3 2548 1.0

log_lik[295] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[296] -3.08 9.7e-4 0.05 -3.18 -3.11 -3.08 -3.04 -2.98 2793 1.0

log_lik[297] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[298] -3.61 1.6e-3 0.08 -3.78 -3.66 -3.6 -3.55 -3.46 2853 1.0

log_lik[299] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[300] -2.88 8.5e-4 0.04 -2.97 -2.91 -2.88 -2.85 -2.8 2565 1.0

log_lik[301] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[302] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[303] -4.28 2.6e-3 0.13 -4.56 -4.37 -4.28 -4.19 -4.02 2565 1.0

log_lik[304] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[305] -2.68 7.7e-4 0.04 -2.76 -2.7 -2.67 -2.65 -2.6 2661 1.0

log_lik[306] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[307] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[308] -2.88 8.5e-4 0.04 -2.97 -2.91 -2.88 -2.85 -2.8 2565 1.0

log_lik[309] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[310] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[311] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[312] -3.25 1.2e-3 0.06 -3.38 -3.3 -3.25 -3.21 -3.14 2754 1.0

log_lik[313] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[314] -3.61 1.6e-3 0.08 -3.78 -3.66 -3.6 -3.55 -3.46 2853 1.0

log_lik[315] -2.88 8.5e-4 0.04 -2.97 -2.91 -2.88 -2.85 -2.8 2565 1.0

log_lik[316] -3.61 1.6e-3 0.08 -3.78 -3.66 -3.6 -3.55 -3.46 2853 1.0

log_lik[317] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[318] -2.66 7.8e-4 0.04 -2.74 -2.69 -2.66 -2.64 -2.59 2547 1.0

log_lik[319] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[320] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[321] -3.71 1.8e-3 0.09 -3.89 -3.77 -3.7 -3.64 -3.53 2641 1.0

log_lik[322] -2.65 7.8e-4 0.04 -2.73 -2.68 -2.65 -2.63 -2.58 2561 1.0

log_lik[323] -2.81 7.7e-4 0.04 -2.89 -2.84 -2.81 -2.78 -2.73 2911 1.0

log_lik[324] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[325] -2.73 7.4e-4 0.04 -2.81 -2.76 -2.73 -2.7 -2.65 2942 1.0

log_lik[326] -3.25 1.2e-3 0.06 -3.38 -3.3 -3.25 -3.21 -3.14 2754 1.0

log_lik[327] -2.93 8.4e-4 0.05 -3.02 -2.96 -2.93 -2.9 -2.84 2853 1.0

log_lik[328] -2.78 8.1e-4 0.04 -2.86 -2.8 -2.78 -2.75 -2.7 2484 1.0

log_lik[329] -4.84 3.3e-3 0.18 -5.2 -4.96 -4.83 -4.71 -4.52 2953 1.0

log_lik[330] -3.38 1.3e-3 0.07 -3.52 -3.43 -3.38 -3.33 -3.25 2803 1.0

log_lik[331] -3.61 1.6e-3 0.08 -3.78 -3.66 -3.6 -3.55 -3.46 2853 1.0

log_lik[332] -2.7 7.9e-4 0.04 -2.79 -2.73 -2.7 -2.68 -2.63 2483 1.0

log_lik[333] -2.81 7.7e-4 0.04 -2.89 -2.84 -2.81 -2.78 -2.73 2911 1.0

log_lik[334] -3.46 1.4e-3 0.07 -3.62 -3.51 -3.46 -3.41 -3.32 2738 1.0

ypred 14.29 0.09 5.7 3.43 10.36 14.28 18.2 25.6 4000 1.0

lp__ -746.0 0.02 1.01 -748.7 -746.4 -745.7 -745.3 -745.0 1637 1.0

Samples were drawn using NUTS at Sun Dec 9 13:47:05 2018.

For each parameter, n_eff is a crude measure of effective sample size,

and Rhat is the potential scale reduction factor on split chains (at

convergence, Rhat=1).

##########################################################################

####### Attachment 2: Fit of pooled model with inverse gamma prior #######

##########################################################################

Inference for Stan model: anon_model_fa6169eb72725ff16af37d26935097d5.

4 chains, each with iter=2000; warmup=1000; thin=1;

post-warmup draws per chain=1000, total post-warmup draws=4000.

mean se_mean sd 2.5% 25% 50% 75% 97.5% n_eff Rhat

mu 14.19 6.6e-3 0.31 13.58 13.98 14.19 14.4 14.81 2228 1.0

sigmaSq 31.82 0.05 2.43 27.5 30.1 31.69 33.37 36.82 2719 1.0

sigma 5.64 4.1e-3 0.21 5.24 5.49 5.63 5.78 6.07 2741 1.0

log_lik[0] -3.71 1.9e-3 0.09 -3.9 -3.77 -3.71 -3.65 -3.54 2438 1.0

log_lik[1] -2.66 7.4e-4 0.04 -2.74 -2.68 -2.66 -2.63 -2.59 2733 1.0

log_lik[2] -2.7 7.6e-4 0.04 -2.78 -2.73 -2.7 -2.67 -2.63 2683 1.0

log_lik[3] -2.66 7.4e-4 0.04 -2.74 -2.68 -2.66 -2.63 -2.59 2733 1.0

log_lik[4] -3.01 1.0e-3 0.05 -3.11 -3.05 -3.01 -2.98 -2.92 2272 1.0

log_lik[5] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[6] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[7] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[8] -3.98 2.3e-3 0.11 -4.22 -4.06 -3.98 -3.91 -3.78 2513 1.0

log_lik[9] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[10] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[11] -3.08 1.1e-3 0.05 -3.18 -3.11 -3.07 -3.04 -2.98 2186 1.0

log_lik[12] -3.71 1.9e-3 0.09 -3.9 -3.77 -3.71 -3.65 -3.54 2438 1.0

log_lik[13] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[14] -3.98 2.3e-3 0.11 -4.22 -4.06 -3.98 -3.91 -3.78 2513 1.0

log_lik[15] -3.71 1.9e-3 0.09 -3.9 -3.77 -3.71 -3.65 -3.54 2438 1.0

log_lik[16] -2.81 8.7e-4 0.04 -2.89 -2.84 -2.81 -2.78 -2.74 2054 1.0

log_lik[17] -3.08 1.1e-3 0.05 -3.18 -3.11 -3.07 -3.04 -2.98 2186 1.0

log_lik[18] -2.7 7.6e-4 0.04 -2.78 -2.73 -2.7 -2.67 -2.63 2683 1.0

log_lik[19] -3.38 1.5e-3 0.07 -3.52 -3.42 -3.38 -3.34 -3.25 2112 1.0

log_lik[20] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[21] -2.66 7.4e-4 0.04 -2.74 -2.68 -2.66 -2.63 -2.59 2733 1.0

log_lik[22] -4.17 2.6e-3 0.12 -4.41 -4.25 -4.16 -4.09 -3.95 2091 1.0

log_lik[23] -2.93 9.6e-4 0.04 -3.02 -2.95 -2.93 -2.9 -2.85 2055 1.0

log_lik[24] -3.08 1.1e-3 0.05 -3.18 -3.11 -3.07 -3.04 -2.98 2186 1.0

log_lik[25] -3.26 1.3e-3 0.06 -3.38 -3.29 -3.25 -3.21 -3.14 2263 1.0

log_lik[26] -3.61 1.8e-3 0.08 -3.77 -3.67 -3.61 -3.56 -3.46 2080 1.0

log_lik[27] -2.73 8.1e-4 0.04 -2.8 -2.75 -2.72 -2.7 -2.65 2145 1.0

log_lik[28] -3.01 1.0e-3 0.05 -3.11 -3.05 -3.01 -2.98 -2.92 2272 1.0

log_lik[29] -3.08 1.1e-3 0.05 -3.18 -3.11 -3.07 -3.04 -2.98 2186 1.0

log_lik[30] -2.66 7.4e-4 0.04 -2.74 -2.68 -2.66 -2.63 -2.59 2733 1.0

log_lik[31] -2.73 8.1e-4 0.04 -2.8 -2.75 -2.72 -2.7 -2.65 2145 1.0

log_lik[32] -3.26 1.3e-3 0.06 -3.38 -3.29 -3.25 -3.21 -3.14 2263 1.0

log_lik[33] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[34] -2.66 7.4e-4 0.04 -2.74 -2.68 -2.66 -2.63 -2.59 2733 1.0

log_lik[35] -4.5 3.1e-3 0.14 -4.79 -4.59 -4.49 -4.4 -4.23 2112 1.0

log_lik[36] -3.01 1.0e-3 0.05 -3.11 -3.05 -3.01 -2.98 -2.92 2272 1.0

log_lik[37] -4.17 2.6e-3 0.12 -4.41 -4.25 -4.16 -4.09 -3.95 2091 1.0

log_lik[38] -2.7 7.6e-4 0.04 -2.78 -2.73 -2.7 -2.67 -2.63 2683 1.0

log_lik[39] -3.98 2.3e-3 0.11 -4.22 -4.06 -3.98 -3.91 -3.78 2513 1.0

log_lik[40] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[41] -3.18 1.2e-3 0.06 -3.3 -3.22 -3.18 -3.14 -3.07 2205 1.0

log_lik[42] -3.26 1.3e-3 0.06 -3.38 -3.29 -3.25 -3.21 -3.14 2263 1.0

log_lik[43] -3.61 1.8e-3 0.08 -3.77 -3.67 -3.61 -3.56 -3.46 2080 1.0

log_lik[44] -2.66 7.4e-4 0.04 -2.74 -2.68 -2.66 -2.63 -2.59 2733 1.0

log_lik[45] -3.71 1.9e-3 0.09 -3.9 -3.77 -3.71 -3.65 -3.54 2438 1.0

log_lik[46] -3.71 1.9e-3 0.09 -3.9 -3.77 -3.71 -3.65 -3.54 2438 1.0

log_lik[47] -3.08 1.1e-3 0.05 -3.18 -3.11 -3.07 -3.04 -2.98 2186 1.0

log_lik[48] -3.26 1.3e-3 0.06 -3.38 -3.29 -3.25 -3.21 -3.14 2263 1.0

log_lik[49] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[50] -3.38 1.5e-3 0.07 -3.52 -3.42 -3.38 -3.34 -3.25 2112 1.0

log_lik[51] -3.01 1.0e-3 0.05 -3.11 -3.05 -3.01 -2.98 -2.92 2272 1.0

log_lik[52] -3.61 1.8e-3 0.08 -3.77 -3.67 -3.61 -3.56 -3.46 2080 1.0

log_lik[53] -4.29 2.7e-3 0.14 -4.57 -4.38 -4.28 -4.2 -4.04 2574 1.0

log_lik[54] -3.08 1.1e-3 0.05 -3.18 -3.11 -3.07 -3.04 -2.98 2186 1.0

log_lik[55] -2.7 7.6e-4 0.04 -2.78 -2.73 -2.7 -2.67 -2.63 2683 1.0

log_lik[56] -2.88 8.9e-4 0.04 -2.97 -2.91 -2.88 -2.85 -2.8 2399 1.0

log_lik[57] -2.73 8.1e-4 0.04 -2.8 -2.75 -2.72 -2.7 -2.65 2145 1.0

log_lik[58] -2.66 7.4e-4 0.04 -2.74 -2.68 -2.66 -2.63 -2.59 2733 1.0

log_lik[59] -2.93 9.6e-4 0.04 -3.02 -2.95 -2.93 -2.9 -2.85 2055 1.0

log_lik[60] -3.71 1.9e-3 0.09 -3.9 -3.77 -3.71 -3.65 -3.54 2438 1.0

log_lik[61] -2.93 9.6e-4 0.04 -3.02 -2.95 -2.93 -2.9 -2.85 2055 1.0

log_lik[62] -3.71 1.9e-3 0.09 -3.9 -3.77 -3.71 -3.65 -3.54 2438 1.0

log_lik[63] -3.47 1.6e-3 0.08 -3.62 -3.51 -3.46 -3.41 -3.33 2351 1.0

log_lik[64] -2.7 7.6e-4 0.04 -2.78 -2.73 -2.7 -2.67 -2.63 2683 1.0

log_lik[65] -3.18 1.2e-3 0.06 -3.3 -3.22 -3.18 -3.14 -3.07 2205 1.0

log_lik[66] -3.61 1.8e-3 0.08 -3.77 -3.67 -3.61 -3.56 -3.46 2080 1.0

log_lik[67] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[68] -2.66 7.4e-4 0.04 -2.74 -2.68 -2.66 -2.63 -2.59 2733 1.0

log_lik[69] -3.47 1.6e-3 0.08 -3.62 -3.51 -3.46 -3.41 -3.33 2351 1.0

log_lik[70] -2.67 7.8e-4 0.04 -2.75 -2.7 -2.67 -2.65 -2.6 2296 1.0

log_lik[71] -3.18 1.2e-3 0.06 -3.3 -3.22 -3.18 -3.14 -3.07 2205 1.0

log_lik[72] -3.18 1.2e-3 0.06 -3.3 -3.22 -3.18 -3.14 -3.07 2205 1.0

log_lik[73] -2.7 7.6e-4 0.04 -2.78 -2.73 -2.7 -2.67 -2.63 2683 1.0

log_lik[74] -5.24 4.2e-3 0.2 -5.63 -5.37 -5.24 -5.11 -4.88 2161 1.0

log_lik[75] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[76] -2.73 8.1e-4 0.04 -2.8 -2.75 -2.72 -2.7 -2.65 2145 1.0

log_lik[77] -2.65 7.3e-4 0.04 -2.73 -2.67 -2.65 -2.62 -2.58 2690 1.0

log_lik[78] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[79] -3.38 1.5e-3 0.07 -3.52 -3.42 -3.38 -3.34 -3.25 2112 1.0

log_lik[80] -3.47 1.6e-3 0.08 -3.62 -3.51 -3.46 -3.41 -3.33 2351 1.0

log_lik[81] -2.81 8.7e-4 0.04 -2.89 -2.84 -2.81 -2.78 -2.74 2054 1.0

log_lik[82] -3.71 1.9e-3 0.09 -3.9 -3.77 -3.71 -3.65 -3.54 2438 1.0

log_lik[83] -2.67 7.8e-4 0.04 -2.75 -2.7 -2.67 -2.65 -2.6 2296 1.0

log_lik[84] -3.26 1.3e-3 0.06 -3.38 -3.29 -3.25 -3.21 -3.14 2263 1.0

log_lik[85] -2.73 8.1e-4 0.04 -2.8 -2.75 -2.72 -2.7 -2.65 2145 1.0

log_lik[86] -3.01 1.0e-3 0.05 -3.11 -3.05 -3.01 -2.98 -2.92 2272 1.0

log_lik[87] -3.18 1.2e-3 0.06 -3.3 -3.22 -3.18 -3.14 -3.07 2205 1.0

log_lik[88] -2.88 8.9e-4 0.04 -2.97 -2.91 -2.88 -2.85 -2.8 2399 1.0

log_lik[89] -4.17 2.6e-3 0.12 -4.41 -4.25 -4.16 -4.09 -3.95 2091 1.0

log_lik[90] -3.08 1.1e-3 0.05 -3.18 -3.11 -3.07 -3.04 -2.98 2186 1.0

log_lik[91] -2.67 7.8e-4 0.04 -2.75 -2.7 -2.67 -2.65 -2.6 2296 1.0

log_lik[92] -2.88 8.9e-4 0.04 -2.97 -2.91 -2.88 -2.85 -2.8 2399 1.0

log_lik[93] -3.38 1.5e-3 0.07 -3.52 -3.42 -3.38 -3.34 -3.25 2112 1.0

log_lik[94] -2.66 7.4e-4 0.04 -2.74 -2.68 -2.66 -2.63 -2.59 2733 1.0

log_lik[95] -2.65 7.3e-4 0.04 -2.73 -2.67 -2.65 -2.62 -2.58 2690 1.0

log_lik[96] -3.08 1.1e-3 0.05 -3.18 -3.11 -3.07 -3.04 -2.98 2186 1.0

log_lik[97] -3.71 1.9e-3 0.09 -3.9 -3.77 -3.71 -3.65 -3.54 2438 1.0

log_lik[98] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[99] -4.63 3.2e-3 0.16 -4.96 -4.73 -4.62 -4.52 -4.33 2624 1.0

log_lik[100] -2.66 7.4e-4 0.04 -2.74 -2.68 -2.66 -2.63 -2.59 2733 1.0

log_lik[101] -2.7 7.6e-4 0.04 -2.78 -2.73 -2.7 -2.67 -2.63 2683 1.0

log_lik[102] -3.18 1.2e-3 0.06 -3.3 -3.22 -3.18 -3.14 -3.07 2205 1.0

log_lik[103] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[104] -4.5 3.1e-3 0.14 -4.79 -4.59 -4.49 -4.4 -4.23 2112 1.0

log_lik[105] -3.71 1.9e-3 0.09 -3.9 -3.77 -3.71 -3.65 -3.54 2438 1.0

log_lik[106] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[107] -2.7 7.6e-4 0.04 -2.78 -2.73 -2.7 -2.67 -2.63 2683 1.0

log_lik[108] -3.47 1.6e-3 0.08 -3.62 -3.51 -3.46 -3.41 -3.33 2351 1.0

log_lik[109] -3.71 1.9e-3 0.09 -3.9 -3.77 -3.71 -3.65 -3.54 2438 1.0

log_lik[110] -3.08 1.1e-3 0.05 -3.18 -3.11 -3.07 -3.04 -2.98 2186 1.0

log_lik[111] -2.65 7.3e-4 0.04 -2.73 -2.67 -2.65 -2.62 -2.58 2690 1.0

log_lik[112] -3.71 1.9e-3 0.09 -3.9 -3.77 -3.71 -3.65 -3.54 2438 1.0

log_lik[113] -2.67 7.8e-4 0.04 -2.75 -2.7 -2.67 -2.65 -2.6 2296 1.0

log_lik[114] -3.26 1.3e-3 0.06 -3.38 -3.29 -3.25 -3.21 -3.14 2263 1.0

log_lik[115] -2.93 9.6e-4 0.04 -3.02 -2.95 -2.93 -2.9 -2.85 2055 1.0

log_lik[116] -2.88 8.9e-4 0.04 -2.97 -2.91 -2.88 -2.85 -2.8 2399 1.0

log_lik[117] -4.5 3.1e-3 0.14 -4.79 -4.59 -4.49 -4.4 -4.23 2112 1.0

log_lik[118] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[119] -3.61 1.8e-3 0.08 -3.77 -3.67 -3.61 -3.56 -3.46 2080 1.0

log_lik[120] -2.93 9.6e-4 0.04 -3.02 -2.95 -2.93 -2.9 -2.85 2055 1.0

log_lik[121] -2.66 7.4e-4 0.04 -2.74 -2.68 -2.66 -2.63 -2.59 2733 1.0

log_lik[122] -3.71 1.9e-3 0.09 -3.9 -3.77 -3.71 -3.65 -3.54 2438 1.0

log_lik[123] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[124] -2.81 8.7e-4 0.04 -2.89 -2.84 -2.81 -2.78 -2.74 2054 1.0

log_lik[125] -3.61 1.8e-3 0.08 -3.77 -3.67 -3.61 -3.56 -3.46 2080 1.0

log_lik[126] -2.88 8.9e-4 0.04 -2.97 -2.91 -2.88 -2.85 -2.8 2399 1.0

log_lik[127] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[128] -2.67 7.8e-4 0.04 -2.75 -2.7 -2.67 -2.65 -2.6 2296 1.0

log_lik[129] -4.29 2.7e-3 0.14 -4.57 -4.38 -4.28 -4.2 -4.04 2574 1.0

log_lik[130] -2.93 9.6e-4 0.04 -3.02 -2.95 -2.93 -2.9 -2.85 2055 1.0

log_lik[131] -2.88 8.9e-4 0.04 -2.97 -2.91 -2.88 -2.85 -2.8 2399 1.0

log_lik[132] -3.18 1.2e-3 0.06 -3.3 -3.22 -3.18 -3.14 -3.07 2205 1.0

log_lik[133] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[134] -2.7 7.6e-4 0.04 -2.78 -2.73 -2.7 -2.67 -2.63 2683 1.0

log_lik[135] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[136] -4.29 2.7e-3 0.14 -4.57 -4.38 -4.28 -4.2 -4.04 2574 1.0

log_lik[137] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[138] -3.18 1.2e-3 0.06 -3.3 -3.22 -3.18 -3.14 -3.07 2205 1.0

log_lik[139] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[140] -2.66 7.4e-4 0.04 -2.74 -2.68 -2.66 -2.63 -2.59 2733 1.0

log_lik[141] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[142] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[143] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[144] -3.98 2.3e-3 0.11 -4.22 -4.06 -3.98 -3.91 -3.78 2513 1.0

log_lik[145] -2.7 7.6e-4 0.04 -2.78 -2.73 -2.7 -2.67 -2.63 2683 1.0

log_lik[146] -2.7 7.6e-4 0.04 -2.78 -2.73 -2.7 -2.67 -2.63 2683 1.0

log_lik[147] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[148] -2.7 7.6e-4 0.04 -2.78 -2.73 -2.7 -2.67 -2.63 2683 1.0

log_lik[149] -3.71 1.9e-3 0.09 -3.9 -3.77 -3.71 -3.65 -3.54 2438 1.0

log_lik[150] -2.81 8.7e-4 0.04 -2.89 -2.84 -2.81 -2.78 -2.74 2054 1.0

log_lik[151] -3.08 1.1e-3 0.05 -3.18 -3.11 -3.07 -3.04 -2.98 2186 1.0

log_lik[152] -2.93 9.6e-4 0.04 -3.02 -2.95 -2.93 -2.9 -2.85 2055 1.0

log_lik[153] -2.66 7.4e-4 0.04 -2.74 -2.68 -2.66 -2.63 -2.59 2733 1.0

log_lik[154] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[155] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[156] -3.61 1.8e-3 0.08 -3.77 -3.67 -3.61 -3.56 -3.46 2080 1.0

log_lik[157] -3.26 1.3e-3 0.06 -3.38 -3.29 -3.25 -3.21 -3.14 2263 1.0

log_lik[158] -3.26 1.3e-3 0.06 -3.38 -3.29 -3.25 -3.21 -3.14 2263 1.0

log_lik[159] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[160] -3.61 1.8e-3 0.08 -3.77 -3.67 -3.61 -3.56 -3.46 2080 1.0

log_lik[161] -2.66 7.4e-4 0.04 -2.74 -2.68 -2.66 -2.63 -2.59 2733 1.0

log_lik[162] -3.88 2.2e-3 0.1 -4.08 -3.94 -3.87 -3.81 -3.69 2077 1.0

log_lik[163] -3.01 1.0e-3 0.05 -3.11 -3.05 -3.01 -2.98 -2.92 2272 1.0

log_lik[164] -3.71 1.9e-3 0.09 -3.9 -3.77 -3.71 -3.65 -3.54 2438 1.0

log_lik[165] -3.26 1.3e-3 0.06 -3.38 -3.29 -3.25 -3.21 -3.14 2263 1.0

log_lik[166] -3.26 1.3e-3 0.06 -3.38 -3.29 -3.25 -3.21 -3.14 2263 1.0

log_lik[167] -3.71 1.9e-3 0.09 -3.9 -3.77 -3.71 -3.65 -3.54 2438 1.0

log_lik[168] -2.77 8.1e-4 0.04 -2.86 -2.8 -2.77 -2.75 -2.7 2555 1.0

log_lik[169] -2.66 7.4e-4 0.04 -2.74 -2.68 -2.66 -2.63 -2.59 2733 1.0

log_lik[170] -2.66 7.4e-4 0.04 -2.74 -2.68 -2.66 -2.63 -2.59 2733 1.0