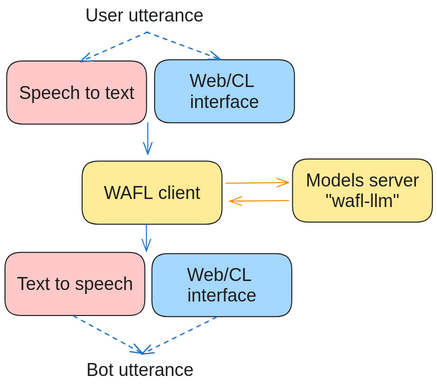

WAFL is built to run as a two-part system, and both parts can be installed on the same machine. This is the LLM side of the WAFL project.

This is a model server for the speech-to-text model, the LLM, the embedding system, and the text-to-speech model.

To quickly run the LLM side, use the following installation commands:

pip install wafl-llm

wafl-llm start

which will use the default models and start the server on port 8080.
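Once the server has been started, a plain TCP connection check is a quick way to confirm that something is listening on port 8080. The sketch below uses only the Python standard library. The helper name `is_port_open` and the `localhost` host are assumptions for illustration and are not part of wafl-llm. The check only shows that the port accepts connections, not that the models have finished loading.

```python
import socket


def is_port_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to (host, port) succeeds."""
    try:
        # create_connection raises OSError when nothing accepts the connection
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    # Assumption: the server runs on this machine with the default port 8080.
    print("listening" if is_port_open("localhost", 8080) else "not reachable")
```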

The installation requires MPI and Java to be installed on the system. Both can be installed with the following commands:

sudo apt install libopenmpi-dev

sudo apt install default-jdk

A use-case specific configuration can be set by creating a config.json file in the path where wafl-llm start is executed.

The file should look like this (the default configuration):

{

"llm_model": "mistralai/Mistral-7B-Instruct-v0.1",

"speaker_model": "facebook/fastspeech2-en-ljspeech",

"whisper_model": "fractalego/personal-whisper-distilled-model",

"sentence_embedder_models": "TaylorAI/gte-tiny"

}

The models are downloaded from the Hugging Face repository. Any other compatible model should work.
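The configuration file can also be generated from a script, which may help when switching models between runs. This is a minimal sketch: the keys and default values are copied from the configuration shown above, while the helper name `write_config` and its `overrides` parameter are hypothetical and not part of wafl-llm.

```python
import json
from pathlib import Path

# Default values copied from the configuration shown above.
DEFAULT_CONFIG = {
    "llm_model": "mistralai/Mistral-7B-Instruct-v0.1",
    "speaker_model": "facebook/fastspeech2-en-ljspeech",
    "whisper_model": "fractalego/personal-whisper-distilled-model",
    "sentence_embedder_models": "TaylorAI/gte-tiny",
}


def write_config(directory=".", **overrides) -> Path:
    """Write config.json into `directory`, applying any overrides to the defaults."""
    # Reject keys that do not appear in the default configuration.
    unknown = set(overrides) - set(DEFAULT_CONFIG)
    if unknown:
        raise KeyError(f"Unknown configuration keys: {sorted(unknown)}")
    config = {**DEFAULT_CONFIG, **overrides}
    path = Path(directory) / "config.json"
    path.write_text(json.dumps(config, indent=2) + "\n", encoding="utf-8")
    return path
```

Calling `write_config()` from the directory where wafl-llm start is executed reproduces the default file. Passing a keyword such as `llm_model` with the name of another compatible model replaces only that entry.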

To run the server with Docker, run the following:

docker/build.sh

docker run -p8080:8080 --env NVIDIA_DISABLE_REQUIRE=1 --gpus all wafl-llm