relevant-evidence-detection

Official repository for the "RED-DOT: Multimodal Fact-checking via Relevant Evidence Detection" paper. You can read the pre-print here: https://doi.org/10.48550/arXiv.2311.09939

Abstract

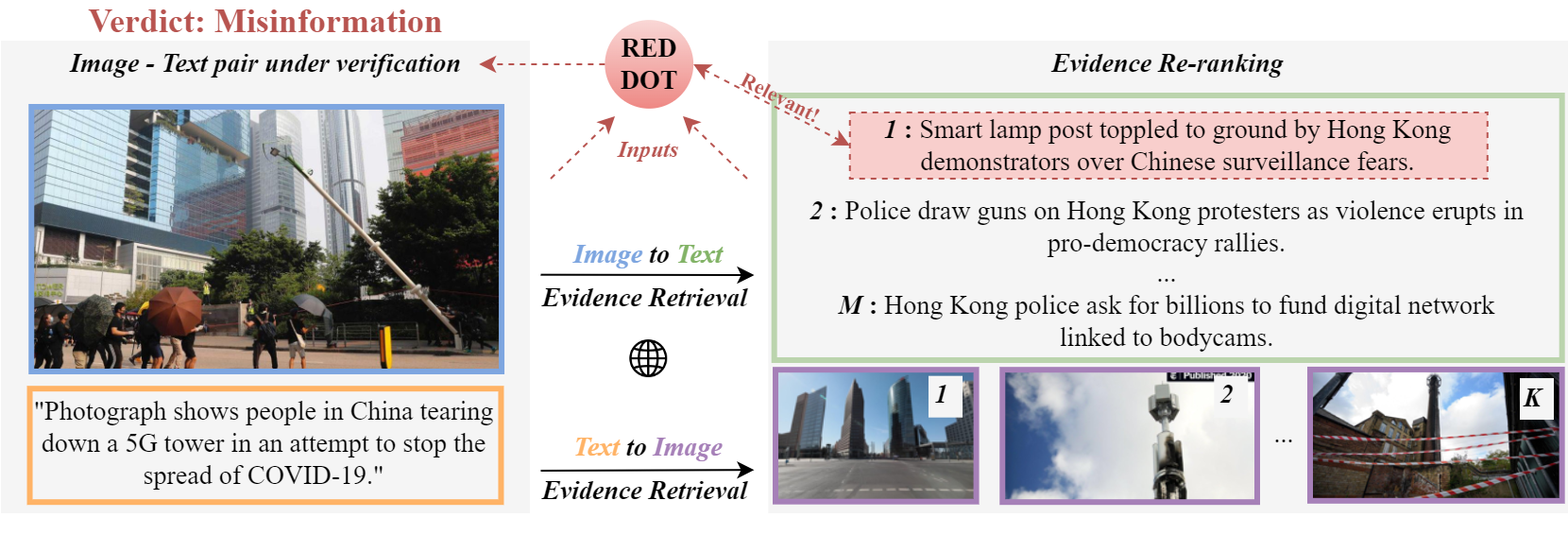

Online misinformation is often multimodal in nature, i.e., it is caused by misleading associations between texts and accompanying images. To support the fact-checking process, researchers have been recently developing automatic multimodal methods that gather and analyze external information, evidence, related to the image-text pairs under examination. However, prior works assumed all collected evidence to be relevant. In this study, we introduce a “Relevant Evidence Detection” (RED) module to discern whether each piece of evidence is relevant, to support or refute the claim. Specifically, we develop the “Relevant Evidence Detection Directed Transformer” (RED-DOT) and explore multiple architectural variants (e.g., single or dual-stage) and mechanisms (e.g., “guided attention”). Extensive ablation and comparative experiments demonstrate that RED-DOT achieves significant improvements over the state-of-the-art on the VERITE benchmark by up to 28.5%. Furthermore, our evidence re-ranking and element-wise modality fusion led to RED-DOT achieving competitive and even improved performance on NewsCLIPings+, without the need for numerous evidence or multiple backbone encoders. Finally, our qualitative analysis demonstrates that the proposed “guided attention” module has the potential to enhance the architecture’s interpretability.

Preparation

- Clone this repo:

git clone https://github.com/stevejpapad/relevant-evidence-detection

cd relevant-evidence-detection

- Create a Python (>= 3.9) environment (Anaconda is recommended).

- Install all dependencies with:

pip install -r requirements.txt

Datasets

To reproduce the experiments in the paper, you first need to download the following datasets and save them in their respective folders:

- VisualNews -> https://github.com/FuxiaoLiu/VisualNews-Repository -> data/VisualNews/

- NewsCLIPings -> https://github.com/g-luo/news_clippings -> data/news_clippings/

- NewsCLIPings evidence -> https://github.com/S-Abdelnabi/OoC-multi-modal-fc -> data/news_clippings/queries_dataset/

- VERITE -> https://github.com/stevejpapad/image-text-verification -> data/VERITE/ (although we provide all necessary files as well as the external evidence)
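Before running the experiments, it can help to confirm that the folders listed above are in place. The following is a minimal sketch, not part of the repository: the helper name `missing_dataset_dirs` and the constant `EXPECTED_DIRS` are hypothetical, and the script only checks that the directories exist, not that their contents are complete.

```python
from pathlib import Path

# Folder names are taken from the dataset list above. The helper itself is
# hypothetical and is not shipped with the repository.
EXPECTED_DIRS = [
    "data/VisualNews",
    "data/news_clippings",
    "data/news_clippings/queries_dataset",
    "data/VERITE",
]


def missing_dataset_dirs(repo_root="."):
    """Return the expected dataset folders that do not exist under repo_root."""
    root = Path(repo_root)
    return [d for d in EXPECTED_DIRS if not (root / d).is_dir()]


if __name__ == "__main__":
    missing = missing_dataset_dirs()
    if missing:
        print("Missing dataset folders:")
        for d in missing:
            print(f"  {d}")
    else:
        print("All expected dataset folders are present.")
```

Run it from the repository root; any folder it reports still needs to be downloaded or created.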

Reproducibility

To prepare the datasets, extract CLIP features, and reproduce all experiments, run:

python src/main.py

Citation

If you find our work useful, please cite:

@article{papadopoulos2023red,

title={RED-DOT: Multimodal Fact-checking via Relevant Evidence Detection},

author={Papadopoulos, Stefanos-Iordanis and Koutlis, Christos and Papadopoulos, Symeon and Petrantonakis, Panagiotis C},

journal={arXiv preprint arXiv:2311.09939},

year={2023}

}

Acknowledgements

This work is partially funded by the project "vera.ai: VERification Assisted by Artificial Intelligence" under grant agreement no. 101070093.

License

This project is licensed under the Apache License 2.0 - see the LICENSE file for more details.

Contact

Stefanos-Iordanis Papadopoulos (stefpapad@iti.gr)